Shalin Jyotishi

Founder and Director, Future of Work and Innovation Economy Initiative

The third in a three-part series from New America’s New Models for Career Preparation Project.


Despite the growing demand for and availability of non-degree workforce training, outcomes for these programs are mixed. For some, non-degree programs are a faster, more affordable pathway to a good job, and, more importantly, a career that offers economic security—they represent the future of education. But for others, non-degree programs are a hyped-up distraction from degree attainment that leads to unemployment, underemployment, or employment in poverty-wage jobs with limited advancement opportunities—particularly for Black and brown learners.

This project aims to help unlock the full potential of non-degree workforce training, especially at public community colleges where these programs are commonly found. In 2019, the Center on Education & Labor at New America (CELNA) launched the New Models for Career Preparation Project, a research and storytelling initiative to build a better understanding of non-degree workforce education and identify common characteristics of high-performing programs, including the design, financing, and strategy principles that go into creating high-quality non-degree workforce programs at community colleges. Many of these principles will also be relevant to universities, nonprofits, and even companies offering such programs.

Over the past three years, we studied literature in the field to synthesize, develop, and validate a concise quality framework for non-degree programs at colleges. Then, we partnered with 12 colleges in two rounds of research to study what goes into quality program design.

We conducted site visits at each college to understand what works—and what doesn’t—when creating non-degree programs. We collected data on colleges’ best-performing programs and conducted extensive research on the work of dozens of peer organizations, academics, and researchers to ensure that we were adding to the field’s understanding and, more importantly, the adoption of quality non-degree program development principles.

With both program and institutional levels in mind, we wrote this three-part series to help community college leaders—mainly presidents, provosts, and workforce leaders—better plan, deliver, and improve high-quality non-degree workforce programs.

We hope these briefs serve as valuable resources for the field—and we know that our work is not done. We look forward to continuing to help community colleges maximize their workforce impact in the years ahead.

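This brief is the third in a three-part series on creating non-degree workforce programs: the first brief focuses on planning programs, the second on delivering them, and this brief on using data to improve them.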

Community college leaders bring decades of expertise to their work, drawing from their own experiences and national best practices. We hope that this collection of briefs will help these leaders with practical examples that will inspire both institutional and program-level changes necessary to maximize the impact of non-degree workforce training.

We recognize that community colleges are often striving for excellence in non-degree workforce innovation without receiving appropriate support from policymakers. We also produced a special policy brief to provide state and federal lawmakers with action items to help colleges maximize the benefits of non-degree workforce programs while mitigating the risks to students, institutions, employers, and the economy as a whole.

Adequate financing is critical to these programs, so together with our partners, we also recommended innovative strategies for financing non-degree programs to help colleges plan for and fund these programs.

Five Criteria for Building Quality Non-Degree Workforce Programs

We reviewed the literature on program quality and met with hundreds of colleges, employers, policy leaders, workforce and economic development professionals, philanthropy officers, and academics to help us synthesize five criteria for identifying quality non-degree workforce programs:

A college must collect and use program-level outcomes data, including job placement rates, starting salaries, and details about the kinds of jobs graduates receive—all broken out by demographics—to be sure that a non-degree workforce program is successful and equitable. Unfortunately, community colleges, especially their workforce development units, face significant barriers to using data to evaluate success.

The federal government and most states do not systematically collect outcomes data for non-degree workforce programs at community colleges, especially not for programs offered in the non-credit format.[1] Colleges operate in an increasingly resource-constrained environment with growing expectations and limited staff bandwidth, expertise, and technology to collect and use outcomes data appropriately.

In recent years, as the student success narrative in higher education policy has evolved from an emphasis on program completion to employment outcomes, many organizations and academics have dedicated substantial resources to study, develop, improve, or advocate for federal, state, and college-level solutions to collecting and using program-level outcomes data.

Community college associations such as Achieving the Dream have built a robust set of activities to help college administrators strengthen data, technology, and analytics capabilities. Policy and research organizations like New America, the National Association of Colleges and Employers, the Institute for Higher Education Policy, and many others have studied and argued for systemic fixes to help all higher education institutions better use program-level outcomes data.

Meanwhile, federal interest in capturing outcomes from non-degree programs is growing. For example, the work of the Interagency Working Group on Expanded Measures of Enrollment and Attainment (GEMEnA) led to the Adult Training and Education Survey (ATES) and the upcoming National Adult Training and Education Survey (NATES). Debates among lawmakers over performance-based funding and the federal gainful employment rule have also drawn attention to program-level outcomes data.

Formal federal initiatives, such as the U.S. Census Bureau’s Post-Secondary Employment Outcomes (PSEO) program, have matured, providing stakeholders with earnings and employment outcomes for college graduates by degree level, major, and postsecondary institution. As of July 2022, 23 states had signed data-sharing partnerships with the U.S. Census Bureau to track employment outcomes for degree programs, and seven of those states included outcomes data for certificate programs. At the state level, higher education systems in Texas, Pennsylvania, Minnesota, and beyond have sought to link student record data to Unemployment Insurance (UI) wage records, which contain employer information alongside an individual's identifying information and wages.
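To make the idea of linking student records to wage records more concrete, here is a minimal Python sketch that matches completers to UI wage records and summarizes outcomes by program. Everything in it is hypothetical: the record layouts, the shared `person_id` matching key, the sequential quarter numbering, and the choice to look at wages in the second quarter after exit. Real state linkages run under data-sharing agreements with privacy protections and use matching rules that vary by state.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class StudentRecord:
    person_id: str     # hypothetical matching key shared with the wage file
    program: str
    exit_quarter: int  # quarters numbered sequentially (hypothetical encoding)


@dataclass
class WageRecord:
    person_id: str
    employer_id: str
    quarter: int
    wages: float


def link_outcomes(students, wage_records, quarters_after_exit=2):
    """Match completers to wage records and summarize outcomes by program."""
    # Total each person's wages per quarter across all of their employers.
    earnings = {}
    for w in wage_records:
        key = (w.person_id, w.quarter)
        earnings[key] = earnings.get(key, 0.0) + w.wages

    # Look up each completer's wages in the chosen quarter after exit.
    by_program = {}
    for s in students:
        key = (s.person_id, s.exit_quarter + quarters_after_exit)
        by_program.setdefault(s.program, []).append(earnings.get(key))

    # A completer with no matching wage record counts as not employed.
    summary = {}
    for program, values in by_program.items():
        employed = [v for v in values if v is not None]
        summary[program] = {
            "completers": len(values),
            "employment_rate": len(employed) / len(values),
            "median_quarterly_wages": median(employed) if employed else None,
        }
    return summary


# Illustrative data only.
students = [
    StudentRecord("A1", "Welding", 8),
    StudentRecord("B2", "Welding", 8),
    StudentRecord("C3", "Nursing assistant", 9),
]
wage_records = [
    WageRecord("A1", "E100", 10, 9000.0),
    WageRecord("A1", "E200", 10, 1000.0),
    WageRecord("C3", "E300", 11, 7000.0),
]
results = link_outcomes(students, wage_records)
print(results)
```

One caution about this design: treating a missing wage record as "not employed" can understate employment, because UI wage records generally do not cover self-employment, federal jobs, or work in another state.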

While many of these efforts have focused either on four-year institutions or on generating outcomes data for degree programs and credit-bearing academic certificates, there has been steady progress in helping community colleges use data to drive decision-making for workforce programs as well.

Colleges still struggle with institutionalizing data-driven program improvement, however, especially for non-degree workforce programs. Colleges and the learners they serve can’t afford to wait. Colleges need practical advice for collecting and using program-level data to help improve outcomes for their students, and that is the topic of this final publication in New America’s New Models for Career Preparation brief series.

In the planning and delivery of quality non-degree programs, as discussed in New Models brief 1 and brief 2, colleges should have defined success and identified specific goals for their programs that align with the five quality criteria summarized above. Figure 1, below, juxtaposes outcomes data with the quality criteria.

Data are especially critical to ensuring equitable program outcomes. Outcomes data should be broken out by age, race, gender, and socioeconomic status, as well as by characteristics such as veteran status and whether the student is returning to higher education or earning a credential for the first time.
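As a rough illustration of breaking outcomes out by demographics, the Python sketch below computes a placement rate and median starting salary for each value of one demographic field. The field names (`placed`, `starting_salary`, `race`) and the small-group suppression threshold are assumptions for illustration; colleges should follow their own privacy rules for reporting small groups.

```python
from collections import defaultdict
from statistics import median


def disaggregate(graduates, group_field, min_size=10):
    """Placement rate and median starting salary for each value of group_field.

    Groups smaller than min_size are suppressed (reported as None) so that
    individual students cannot be identified; the default threshold is an
    assumption, not a standard.
    """
    groups = defaultdict(list)
    for grad in graduates:
        groups[grad[group_field]].append(grad)

    report = {}
    for group, members in groups.items():
        if len(members) < min_size:
            report[group] = None
            continue
        placed = [m for m in members if m["placed"]]
        salaries = [m["starting_salary"] for m in placed
                    if m["starting_salary"] is not None]
        report[group] = {
            "n": len(members),
            "placement_rate": len(placed) / len(members),
            "median_starting_salary": median(salaries) if salaries else None,
        }
    return report


# Illustrative records only; a tiny threshold is used so the demo shows output.
graduates = [
    {"race": "Black", "placed": True, "starting_salary": 42000},
    {"race": "Black", "placed": False, "starting_salary": None},
    {"race": "White", "placed": True, "starting_salary": 45000},
    {"race": "White", "placed": True, "starting_salary": 47000},
    {"race": "Hispanic", "placed": True, "starting_salary": 44000},
]
report = disaggregate(graduates, "race", min_size=2)
print(report)
```

The same function can be rerun with other fields (gender, veteran status, first-time credential earner) by changing `group_field`.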

For a systemic approach to program improvement, colleges need a process for collecting, organizing, and using data that involves faculty and staff from institutional research, workforce development, academic programs, communications, student affairs, and beyond.

In his book Getting Things Done, productivity author David Allen outlines a framework for moving from strategy to execution. Figure 2, below, is based on that framework and outlines three steps for using data to drive program improvement.

The first step is to understand what outcomes data need to be collected, how, and by whom. The second step is to clarify who will sort, analyze, and organize the data. The final step focuses on using the data for program improvement.

Colleges should know how decisions about program improvement are currently made and identify how outcomes data can be used to support each part of the decision-making process.

Our research suggests that colleges should avoid siloing all of these steps within institutional research, because most research offices are already fully occupied with compliance work. A cross-functional staffing approach helps ensure that senior leaders and other relevant decision-makers complement their personal experience with data.

To accomplish this goal, most colleges will need their staff to upskill. For example, Lorain County Community College offers “Local Econ 101” to advising and career services staff to help them become conversant in regional economics, including the labor market. At Montgomery College in Maryland, various employees are required to take data ethics training on how student data should be handled.

Staff adjacent to the program improvement process can also make use of outcomes data when writing grant proposals, advocating with state lawmakers, and building marketing campaigns and social media content.

Ideally, states and community college systems will systematize the collection and use of program-level outcomes data. States and state systems should also support colleges in organizing and using data, as we will describe below through two examples from California and Wisconsin.

The California Community Colleges system built a database called LaunchBoard to track employment and earnings outcomes for the state’s 115 community colleges, and it followed that investment with capacity-building grants and technical assistance to help college personnel learn to use outcomes data effectively. LaunchBoard links UI wage records to student-level data, allowing every college in the system to pair qualitative data and anecdotes with quantitative outcomes data. In 2016, when California created the Strong Workforce Program, a multimillion-dollar investment in workforce education, the San Diego Imperial Counties Community College Regional Consortium used LaunchBoard to track and report outcomes metrics and drive improvement for programs supported by Strong Workforce dollars. Colleges like MiraCosta College also used the data to better direct federal Perkins grant money toward their best-performing programs.[2]

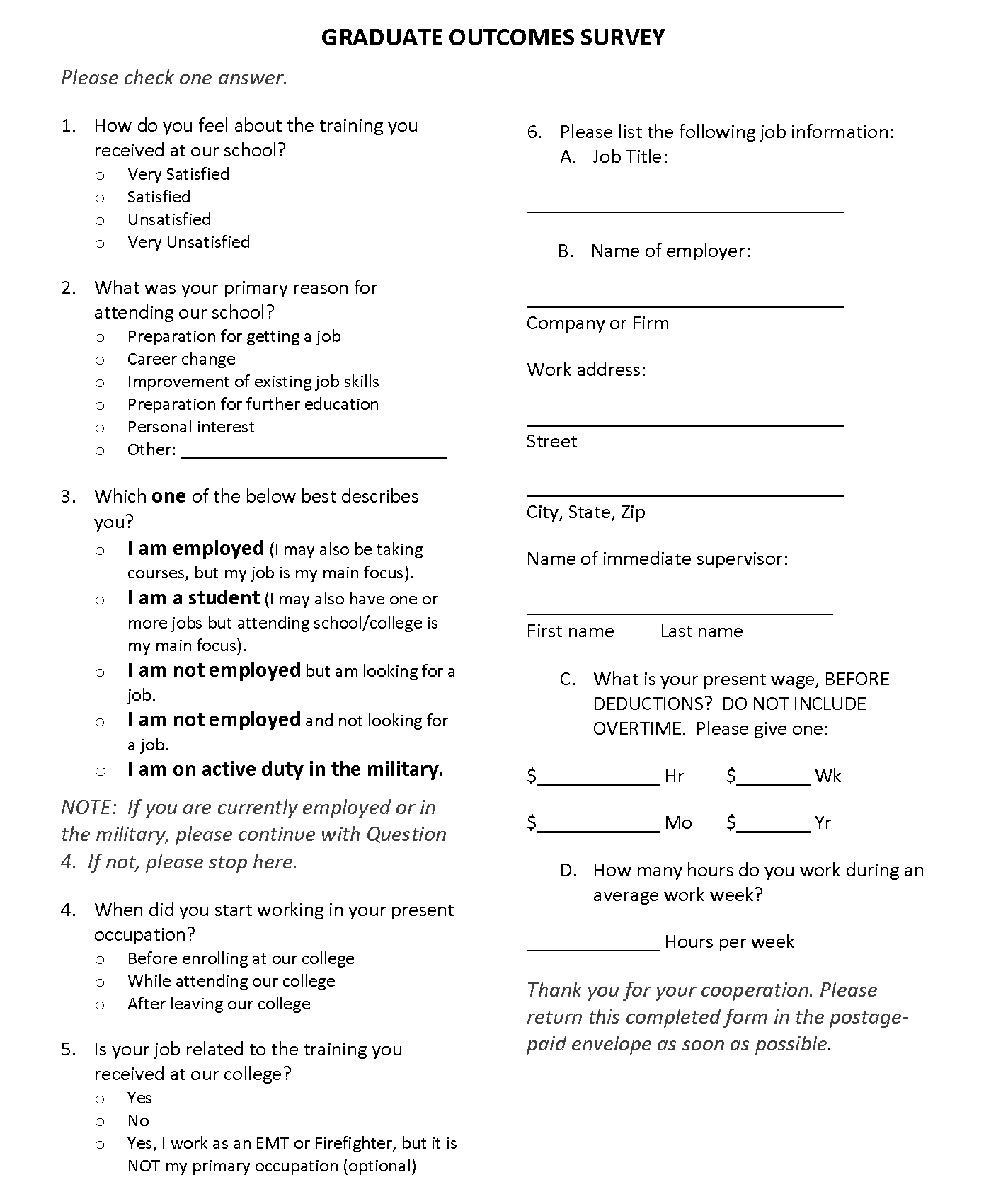

The Wisconsin Technical College System (WTCS) Graduate Outcomes Survey tracks labor market and employment outcomes, broken out by demographics, for all 16 colleges in the system. WTCS boasts a survey response rate of 64 percent, and the survey instrument and methodology are publicly available and pictured below in Figure 3. Because the state ties funding to outcomes, colleges have an incentive to call, text, and email students to obtain survey responses, a strong example of state policy aligning with action at the system level.

The survey is administered by the colleges or contracted out to collection centers, and data are collected six months after graduation. Institutional research staff often visit classrooms to tell students about the importance of completing the survey, and colleges use the outcomes data to inform program improvement, marketing, advocacy, and any other purpose for which outcomes data are useful.

The survey also asks students to voluntarily offer supervisors’ mailing addresses and contact information, so that the college can also survey employers. In addition to the six-month surveys, the system also conducts five-year follow-up surveys and even an outcomes survey specific to apprenticeships.

Community colleges can also rely on state workforce agencies for information about non-degree program outcomes. Many state and local workforce boards collect data on program-level outcomes of providers on the eligible training provider list (ETPL). In our research, many colleges report challenges in obtaining this information from workforce boards, but it can be done. A good example is Washington State’s CareerBridge website, which New America has used to analyze short-term programs in the state.

In the absence of, or in concert with, state-level systems to collect outcomes data, colleges can gather this information themselves. Much like in the Wisconsin Technical College System example described above, that means surveying students or collecting outcomes data from employers.

There are obvious limitations to surveying students about their workforce outcomes, such as low response rates and reliance on self-reported information, but some data are better than none, and the data can improve with time. Many specialized accreditors and the federal Perkins program already require this kind of outcomes data collection, so colleges likely have a baseline from which to build out data collection for non-degree programs.

Colleges could require exit surveys, for example, as the final task in the final course of a program. This approach would also allow colleges to ask students for feedback on the program and on how the college could support them after graduation, including their interest in stackability.

Mott Community College uses a software system to track employment-related data for all non-credit workforce programs. Mott’s Efforts to Outcomes system allows the college to send monthly follow-ups to graduates for up to one year after employment. The system collects information about graduates’ employers, job titles, start dates, pay rates, and benefits.

A less targeted but worthwhile approach would be to ask marketing and communications offices to share outcomes surveys via social media and outreach channels to solicit feedback from graduates. Most colleges have social media platforms, newsletters, alumni associations, mailers, and community-based organization partners, all of which can be used to distribute outcomes surveys.

Colleges could also obtain student outcomes data directly from employer partners. As described in New Models brief 1 and brief 2, programs should be co-created with local hiring managers. While many employers will be reluctant to share data about starting salaries, placement rates, and benefits packages, it is worth asking for the information in the spirit of continuous improvement.

Colleges that partner with single employers for a customized training program are especially well positioned to include a data-sharing element in their contracts with these employers. Mesa Community College and Boeing, for example, partnered for a credit-bearing, week-long manufacturing boot camp to address a concrete labor market need. Even for such a short program, Mesa was able to collect critical outcomes data that informed program improvement.

Additionally, tools from private companies, such as Lightcast's Alumni Pathways Program, and partnerships with the National Student Clearinghouse have helped community colleges derive insights to inform program improvement, fundraising, marketing, and other efforts.

Colleges should ensure that student outcomes are aligned with the stated program goals, but they must also ensure that the program is leaving employers satisfied with graduates.

States, systems, or colleges should institutionalize employer outcomes surveys the way the Wisconsin Technical College System has, mirroring its approach to gathering the student outcomes data described above. The WTCS employer survey is conducted every four years and collects high-level information such as employers' views of the "importance of local technical college" and whether they would "hire graduate again" or "recommend graduate to another employer." The survey obtains an impressive 40 percent response rate. Regularly scheduled employer surveys complement ongoing discussions between college personnel and employers. A copy of the survey instrument can be found on the Wisconsin Technical College System website.

In addition to outcomes data and employer feedback, labor market information (LMI) provides an additional layer of quality assurance in case the job market has shifted since the program was conceived. LMI is an umbrella term for all the data a college can learn about its local job market. LMI can be granular (e.g., real-time data about the demand for manufacturing technicians in three zip codes) or general (e.g., projected demand for nurses in a state or region). Not all non-degree programs are short-term, and even short-term programs can be overtaken by rapid changes in the job market. Checking both outcomes data and LMI when improving programs is prudent.

LMI should augment, not replace, insights gleaned from industry advisory committees and other structures to collect feedback directly from employer partners. LMI data points include:

Data USA by Deloitte is a collection of publicly available LMI data at the federal level. State workforce agencies are another source of LMI reports. Popular private LMI vendors include Lightcast (formerly Emsi Burning Glass), Chmura, and LinkedIn Insights. These vendors use methods like scraping real-time job postings or salary data to derive insights about what employers seek, including in-demand skills, industry certifications, and years of experience, among other data.

Some colleges have reported that LMI is less relevant for jobs connected to non-degree workforce training because those jobs may not be posted on public job boards. However, we believe LMI is worth investing in, as long as colleges keep its limitations in mind.

Economic development organizations, chambers of commerce, industry trade associations, regional economic research centers based at universities, think tanks, and states can all be sources of free or low-cost LMI. Each provider uses a variety of methods to develop LMI, ranging from economic analysis and modeling to surveys of local employers.

The final data point for a strong improvement process is learning from the experiences of alumni. Beyond outcomes surveys, colleges can make use of virtual or in-person town halls, focus groups, alumni advisory committees, or even conversations with graduates led by faculty and staff.

These relationships can help colleges understand alumni aspirations and future education needs, which in turn shows them how to make stackability, another component of our quality framework, a reality. Colleges could include alumni on industry advisory committees or in other standardized processes for gathering feedback on workforce programs.

Some colleges may have informal or formal alumni associations, but alumni associations are rarely used strategically for program improvement, especially for non-degree programs. Embedding feedback loops within any college-wide alumni association is another way workforce leaders can gain insights on how to improve their non-degree programs.

In addition to improving programs, outcomes data can be used for two other important purposes aligned with the quality framework described at the start of the brief: meeting equity goals and supporting job quality.

Colleges should use student outcomes data to gauge their progress toward the equity goals set during the planning and delivery stages described in briefs 1 and 2. From their LMI, colleges should have a sense of the current demographics of the sector they are training for. For example, perhaps manufacturing technicians in a college’s region are mostly white men, and the college wants to recruit more women of color into those roles. Using enrollment and outcomes data, the college can track whether it is making progress toward that goal.
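A minimal Python sketch of that kind of tracking follows. It assumes hypothetical record fields (`cohort`, `gender`, `race`, `placed`), a hypothetical definition of the target group, and an illustrative goal share; none of these come from the brief. For each cohort it reports the target group's share of enrollees and of job placements, and whether the placement share meets the goal.

```python
from collections import defaultdict


def equity_progress(records, in_target_group, goal_share):
    """Per cohort, the target group's share of enrollees and of job placements."""
    counts = defaultdict(lambda: {"enrolled": 0, "target_enrolled": 0,
                                  "placed": 0, "target_placed": 0})
    for r in records:
        c = counts[r["cohort"]]
        target = int(in_target_group(r))
        c["enrolled"] += 1
        c["target_enrolled"] += target
        if r["placed"]:
            c["placed"] += 1
            c["target_placed"] += target

    progress = {}
    for cohort in sorted(counts):
        c = counts[cohort]
        placed_share = c["target_placed"] / c["placed"] if c["placed"] else None
        progress[cohort] = {
            "target_share_of_enrollees": round(c["target_enrolled"] / c["enrolled"], 3),
            "target_share_of_placements": (
                round(placed_share, 3) if placed_share is not None else None),
            "meets_goal": placed_share is not None and placed_share >= goal_share,
        }
    return progress


def is_woman_of_color(record):
    # Hypothetical group definition, for illustration only.
    return record["gender"] == "F" and record["race"] != "White"


# Illustrative records only.
records = [
    {"cohort": 2021, "gender": "F", "race": "Black", "placed": True},
    {"cohort": 2021, "gender": "M", "race": "White", "placed": True},
    {"cohort": 2021, "gender": "M", "race": "White", "placed": True},
    {"cohort": 2021, "gender": "M", "race": "Hispanic", "placed": False},
    {"cohort": 2022, "gender": "F", "race": "Hispanic", "placed": True},
    {"cohort": 2022, "gender": "F", "race": "Black", "placed": True},
    {"cohort": 2022, "gender": "M", "race": "White", "placed": True},
    {"cohort": 2022, "gender": "F", "race": "White", "placed": False},
]
progress = equity_progress(records, is_woman_of_color, goal_share=0.4)
for cohort, row in progress.items():
    print(cohort, row)
```

Comparing enrollment share with placement share matters here: a program can recruit a more diverse cohort without that diversity carrying through to jobs.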

Research from the Joint Center for Economic and Political Studies on the state of Black students at community colleges found that Black students earn certificates at a higher rate than other groups. Because so many Black students pursue these credentials, colleges serious about advancing equity must invest in learning what happens to all of their non-degree program graduates. Outcomes data will tell colleges whether they are on track.

Research from New America has highlighted that many short-term, non-degree credentials lead to unemployment, underemployment, or employment in jobs that don’t offer financial stability or a standard of living much better than that of jobs available to high school graduates. Black and Hispanic men earn less from short-term certificates than White men, with Black workers experiencing the lowest earnings of any racial group. The relationship between non-degree programs and job quality was addressed in detail in brief 1, but it also comes into play during program improvement.

Outcomes and LMI data can be used to advocate for better job quality. If the data suggest graduates are landing jobs with employers that don’t offer a livable wage, basic benefits, or a career ladder, colleges can raise the issue with employers, who complain about unfilled vacancies, or with policymakers, who focus too heavily on the number of jobs in a region rather than the quality of those jobs. Hawkeye Community College, for example, leveraged data to push back on employers who demanded more training programs for prospective workers when, in reality, those employers needed to focus on job quality and retention. Data can help colleges advance job quality improvements with employer partners.

We would like to thank Lumina Foundation and especially Chauncy Lennon, Kermit Kaleba, and Georgia Reagan for graciously supporting the New Models for Career Preparation project, under the auspices of which this brief was commissioned. Thanks also to Holly Zanville for her support in conceptualizing this project and earlier research on non-degree credentials.

The views expressed in this report are those of its author and do not necessarily represent the views of Lumina Foundation, its officers, or its employees.

Our gratitude also goes to Mary Alice McCarthy for guidance and feedback on this brief and project. We thank Riker Pasterkiewicz, Fabio Murgia, Sabrina Detlef, Amanda Dean, and Kim Akker for editing, design, and communications support.

We thank our 2021 New Models for Career Preparation cohort and campus contacts: Anne Bartlett, Brazosport College; Lori Keller, Bates Technical College; Leah Palmer, Mesa Community College; Marcy Lynch, Monroe Community College; Antonio Delgado, Miami Dade College; and Beth Stall, Dallas College.

We thank our 2022 New Models for Career Preparation cohort and campus contacts: Rick Hodge and Gayle Pitman, Sacramento City College; Robert Matthews, Marcus Matthews, and Autumn Scherzer, Mott Community College; Patrick Enright and Katrina Bell, County College of Morris; Tamara Williams and Laura Hanson, Tidewater Community College; Michael Hoffman, Jenny Foster, and Curt Buhr, Des Moines Area Community College; and Linda Head, Lone Star College.

Finally, this work would not have been possible without invaluable input from and collaboration with our advisory committee: Abby Snay, deputy secretary, Future of Work at California Labor & Workforce; Karen Stout, president and CEO, Achieving the Dream; Rey Garcia, professor, University of Maryland College Global; Amanda Cage, president & CEO, National Fund for Workforce Solutions; Amy Kardel, VP Strategic Workforce Relationships, CompTIA; Rebecca Hanson, executive director, SEIU UHW-West & Joint Employer Education Fund; Deborah Bragg, fellow, New America; Anthony Caison, VP for Workforce Continuing Education, Wake Technical Community College; Ian Roark, vice chancellor of Workforce Development and Strategic Partnerships, Pima Community College; Pam Eddinger, president, Bunker Hill Community College; Gregory Haile, president, Broward College; Annette Parker, president, South Central College; Bill Pink, past president, Grand Rapids Community College; and Paul Pullido, interim executive director, Slate-Z.

[1] As discussed in our first brief, it is a common misconception that all non-degree workforce programs are also non-credit.

[2] Priority-based funding is a good tactic to ensure resources are dedicated to the best performing non-degree workforce programs: https://www.newamerica.org/education-policy/edcentral/three-innovative-ways-community-colleges-can-fund-non-degree-workforce-programs/.