The Long View of Digital Authoritarianism

Rulers looking to consolidate power are using digital technologies more than ever before to surveil, censor, and suppress their people. Just last month, it was reported that Moscow is weaving artificial intelligence (AI) into its city surveillance system. Much has been made of the spread of this “digital authoritarianism” and what to do about it, especially as AI plays a greater role in enhancing and enabling authoritarian governance.

Yet digital authoritarianism is not new in principle. It has existed for decades, not in the physical spaces that AI is now entering, but online, on the internet. What analysts today call “digital authoritarianism” has also gone by other names, such as “networked authoritarianism.” For those wringing their hands over what to do about dictators spying on and repressing their citizens via AI, the spread of digital authoritarianism on the far-less-flashy internet is a good place to start.

Digital Authoritarianism Online

For the better part of two decades, liberal democracies idealized the internet as a free, open, interoperable, secure, and resilient global network. A 2003 U.S. Federal Communications Commission document on network reliability and interoperability underscored several of these exact principles, and government policy documents in a number of other liberal democracies have echoed them, verbatim or nearly so, in the years since. These principles have shaped domestic and foreign policies aimed at protecting free speech online, defending net neutrality, and keeping government relatively hands-off in managing internet infrastructure and content.

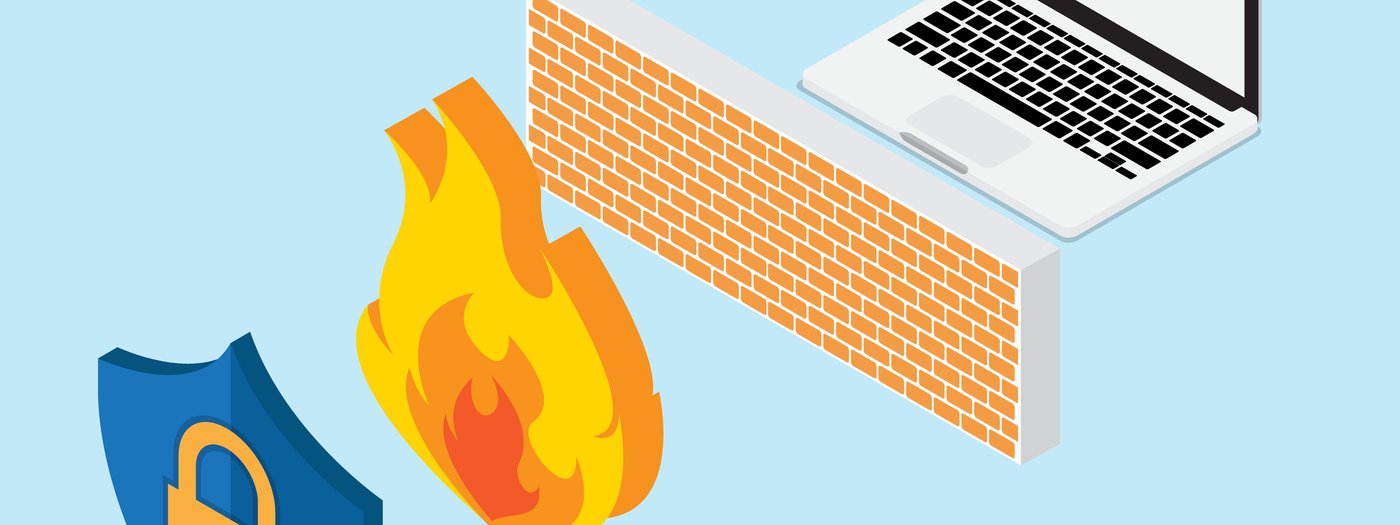

Authoritarian regimes have historically taken a different approach toward the internet within their borders. Rather than maintaining a relatively laissez-faire attitude toward internet governance and online content, autocrats have upheld the notion of “cyber sovereignty”: state control of the internet as an element of a state’s right to self-governance within its sovereign borders. In 2000, the Chinese state initiated the Golden Shield Project toward this end, aiming to bolster police control, responsiveness, and crime-fighting. Today, the massive censorship system better known as the “Great Firewall” uses techniques like deep packet inspection to filter and control the flow of online content and uphold what Chinese President Xi Jinping has called a “clean and righteous” internet within China’s borders. The Russian state, likewise believing that online information is a potential threat to its political stability, also builds its internet governance regime around the notion of cyber sovereignty. Laws that ban virtual private networks (VPNs), bolster state control over data flows, and even seek to build out a domestic Russian internet all serve this end as well.

While once relatively concentrated in a few countries like China and Russia, digital authoritarianism online is spreading: more and more countries are exerting control over the internet architecture within their borders and enacting governance structures (laws, regulations, etc.) that allow them to do so. Empirical questions remain about the underlying drivers of this spread, but widespread perceptions of cyber insecurity and the availability of Chinese and Russian models of digital authoritarianism appear increasingly influential and are likely pushing many policymakers to exert greater control over the internet. The ways this spread has occurred, namely through technology exports, domestic example-setting, and international engagement, hold lessons for policymakers looking at digital authoritarianism and AI.

Technology Exports

First, digital authoritarianism online has spread through exports of technologies (e.g., surveillance software) and related capabilities (e.g., hardware needed to run certain software). Private firms around the world have exported dual-use surveillance technologies that enable censorship, surveillance, and similar practices; “dual-use” means the technologies have applications in both civilian and military spaces. Tools for inspecting packets of internet data, for instance, could be used to filter and censor online content as well as to filter and block malware.

Chinese companies have exported dual-use surveillance technologies for inspecting and filtering internet communications to a number of governments around the world, including those of Ethiopia, Ecuador, South Africa, Bolivia, Egypt, Rwanda, and Saudi Arabia. Russian firms have similarly exported wiretapping and internet surveillance technologies to countries such as Kazakhstan, Tajikistan, Turkmenistan, and Uzbekistan. These exports allow less technologically sophisticated countries to acquire the surveillance and control tools used by their more experienced counterparts. As Anne-Marie Slaughter notes, “dictators are creating and sharing tools for greater population control than ever before.”

Democracies have taken steps to limit such exports, for instance through the 41-member Wassenaar Arrangement, which coordinates export controls on dual-use technologies. Yet there are documented cases in which companies incorporated in democracies have exported dual-use surveillance technology to authoritarian or authoritarian-leaning countries as well; it’s not just companies in autocratic countries that enable digital authoritarianism to spread online. And even with a variety of stakeholders contributing to the Wassenaar Arrangement language, participating states have still run into difficulties, such as inadvertently impeding cybersecurity research and vulnerability information sharing through overly broad controls.

This relates directly to AI, given that Chinese companies have exported AI applications that are also dual-use to governments in Singapore, the United Arab Emirates, Zimbabwe, and other less-than-democratic countries. As with internet monitoring tools, distinguishing harmful AI applications from commercial or benign ones is extremely difficult, because AI tools are often dual-use. The same technologies that could help a gun turret target faces could also identify passengers boarding an airplane, for instance.

Policymakers advocating for sweeping export controls on dual-use AI technologies would be wise to treat the difficulties the Wassenaar Arrangement has faced in regulating dual-use technologies as evidence of the need for nuanced, multi-stakeholder input in combating AI-enabled digital authoritarianism. If export controls are viable for AI (and that’s a big if), they have to be designed very carefully and updated regularly. Overly broad controls could harm industry and the open nature of global AI research. Relatedly, policymakers should also watch for governments educating and training other regimes on repressive uses of AI alongside exports of dual-use technologies, as has happened with internet technologies; private-sector entities may not be the only ones spreading tools for digital authoritarianism.

Domestic Example-Setting

Second, world powers like China, Russia, and the United States have influenced the spread of digital authoritarianism online (or the lack thereof) via domestic example-setting. The viability and attractiveness of internet governance models in such large and influential countries, whether the ways a global and open internet boosts the economy or the ways digital authoritarianism lets leaders consolidate control over information flows, influence policy decisions beyond those countries’ borders.

Beijing and Moscow in particular have provided viable technical and policy models for censoring foreign news content and cracking down on online dissent. China’s sophisticated internet monitoring and filtering tools have long given the government robust capabilities to control the flow of online content. Russia, meanwhile, is advancing plans for a national internet that would push internet fragmentation further than ever before: not just filtering content on top of the internet, but altering the internet’s architecture itself. The economic benefits of a global and open internet, in contrast, have been at the core of policy documents in democratic countries, like the United States, that stand against digital authoritarianism online.

In the current political and information climate, the capabilities that digital authoritarians demonstrate are attractive to many countries. And many are acting on that attraction, as governments around the world crack down on dissent and embrace digital authoritarianism online, often closely following the examples set by world powers. These policies enable authoritarian governments to further consolidate power and manage domestic instability.

When considering the emerging use of artificial intelligence in the service of digital authoritarianism, then, policymakers ought to keep in mind the importance of domestic example-setting. The ways in which influential countries like the United States, India, China, and Russia engage with AI can influence smaller states’ decisions; they can also shape markets, for instance in ways that push back against malicious uses of AI. For countries drawn to digital authoritarianism, it’s not just about having the capability (e.g., through tech exports) but about seeing how others use it.

International Engagement

And third, world powers have promoted digital authoritarianism online through international engagement. This has happened both bilaterally, mainly through infrastructure build-outs that buy political influence, and in bodies like the United Nations General Assembly, where norms around internet governance writ large are set.

China’s infrastructure investments in Tanzania, Nigeria, and elsewhere have lent it influence over recipient countries’ internet governance policies, and that influence has already seen some returns: Tanzania and Nigeria have notably cracked down on internet content in the last year. For countries already attracted to digital authoritarianism online through the examples Beijing sets domestically, these investments may push them further along that path.

Beyond bilateral investments, China, Russia, and other advocates of digital authoritarianism online have also been quite active in the U.N. General Assembly, submitting internet code of conduct proposals that compete with those submitted by the United States and other advocates of a global and open internet. These proposals attempt to set international norms around internet governance. And disagreements about definitions, like the meaning of the term “information security,” which could refer to protecting computer networks or to controlling information and media depending on whom you ask, have been exploited in a variety of proposals over the years as top cover for digital authoritarianism online.

China, Russia, and others politicize these terms in ways that are not lost on advocates of a global and open internet: they mean control of online content, not the cybersecurity of networks. But if these terms were codified in binding agreements about how best to regulate and protect cyberspace, they would leave such documents open to wide interpretation, which could provide top cover for digital authoritarianism online. A 2011 proposal, for instance, aimed to “increase governmental power over the internet” absent multi-stakeholder engagement.

Discussions of AI governance are increasingly entering international forums, and as digital authoritarianism intersects more and more with AI, the same kinds of international engagement will likely come into play. U.S. policymakers have engaged heavily over the past two decades on issues of internet freedom around the world, both in the U.N. and through capacity-building abroad. U.S. governmental and nongovernmental entities have an opportunity to continue that leadership in combating AI-enabled digital authoritarianism by explicitly advocating for democratic models of AI regulation around the world and increasing engagement in technology capacity-building. Likewise, democratic policymakers can better track the spread of AI-enabled digital authoritarianism by paying attention to how adversaries engage internationally on the issue.

In light of the above, AI’s slowly growing role in digital authoritarianism only underscores what has long been true in the online space: The modern digital ecosystem “is by no means guaranteed to play the same ‘liberating’ role or sustain the same user rights in all times and settings.” The use of AI for digital authoritarianism certainly has unique properties, like the potential for more intelligent, more automated censorship and surveillance. Yet digital authoritarianism is not a new concept, and paying attention to the lessons of the past, spanning technology exports, domestic example-setting, and international engagement, can help policymakers better understand how digital authoritarianism will spread in the future.