Seonghyun Nam

Intern, Cybersecurity Initiative

What is it, how does it work, and what does it mean?

In this Internet Realities Analysis, we break down and analyze South Korea’s recent decision to block a number of overseas-based websites—primarily those hosting pornography or pirated content, or those for gambling—by having internet service providers (ISPs) monitor the Server Name Indication (SNI).

The policy shift in South Korea, which was initially announced by the Korean Communications Commission (KCC) in February of this year, is essentially aimed at enabling the Korean Communications Standards Commission (KCSC)—the Korean governmental entity that regulates and effectively monitors and censors the internet—to enforce laws (about offline information) online by blocking access to gambling websites and certain pornography sites. There will be an inclination by policymakers in countries like Russia and China to equate the Korean policy to the Great Firewall in China or Russia’s new internet law.

Arguably, what distinguishes the Korean policy from those systems is the presence of adequate oversight and the democratic process through which the laws that underpin the filtering program were created. Indeed, this program is the result of a desire to take laws that were democratically established offline (e.g., banning gambling or pornography featuring unconsenting parties) and enforce them online. In Korea, it could be argued that democratic processes, checks and balances, and oversight back this practice up. And that is what distinguishes this case from similar ones in countries where executive government entities, like Roskomnadzor, Russia’s internet regulator, have relatively uninhibited, arbitrary power to censor content at will, leading to censorship that goes well beyond blocking child pornography or gambling websites.

Nonetheless, this may be a cause for concern to some in the internet freedom community. There are, for example, similarities to the United States’ proposed Stop Online Piracy Act (SOPA) and the PROTECT IP Act (PIPA), which made headlines in January 2012 when major internet companies “blacked out” their websites in protest of the proposed legislation. Essentially, the laws would have allowed the Department of Justice to go after websites hosting pirated content and force U.S.-based internet service providers to block access to those sites. The bills were not passed, even though they would have taken laws previously established offline (through democratic processes) and enforced them online. The point is, South Korea is not the only democracy with an interest in building out internet censorship regimes based on existing laws.

In essence, therefore, what South Korea is attempting to do is configure a national content firewall, but based on pre-existing laws. However, it can be seen as a wider realization, even by democratic governments, that there is a need to balance the responsibility to protect their citizens from harms delivered through the internet and to preserve the openness of the internet that has been part of its success. In the Korean case, media and non-governmental organizations, like the Korean Sexual Violence Center, have called for the government to improve its capacity to block access to illegal websites.

As Justin recently argued in Foreign Policy, democratic governments are increasingly realizing the need to do more to protect their constituents from cyber harms. The vision of a global, free, and open internet is under threat—in part because of concerns over harm and security. It is therefore incumbent on leaders of the democratic world to negotiate the difficult grey spaces between outright censorship and protecting their citizens from harm. All the while, these same policymakers can’t lose sight of the precedents their actions set for others around the world, and the need to develop an internet model that preserves the benefits of a global and open internet while taking some action to address cybersecurity threats.

In practical terms, this is not really a new policy. Rather, it is a stepped-up enforcement of existing laws using existing regulatory provisions, but a new technical method of enforcement. The policy derives from several laws and regulations, such as the Criminal Law, the Sexual Violence Punishment Act, the Information and Communication Network Act, and the Information and Communication Reviewing Regulation. Due to these legal statutes and KCSC’s regulatory authority, the KCSC has long sought to either delete illegal material hosted on servers inside of South Korea or block requests for illegal material hosted outside of South Korea.

In an explanatory blog post (in Korean) from February, the Korean Communications Commission—the regulatory body in charge of media—specifically cites child pornography sites and illegal gambling websites as the types of websites that would be blocked. Although the KCC does not suggest political content will be blocked, there are instances of the KCSC blocking what could be deemed political content—such as content praising North Korea—in select cases.

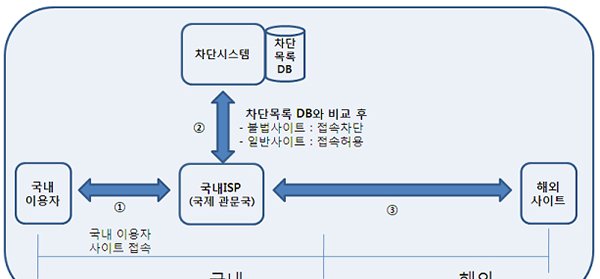

The big change appears to be the KCSC’s method for blocking requests for material hosted outside of the country. In order to enforce laws around illegal content, the KCSC creates a set of blacklisted web addresses that host the illegal content in question. Then, the new policy compels internet infrastructure operators to block those blacklisted websites by monitoring the Server Name Indication (SNI) field, which we explain in greater detail below. In essence, the SNI field allows a network operator to see which website a connection is trying to reach, but not the actual content of the traffic.

By monitoring this way, the KCSC can leverage ISPs to block certain blacklisted domains, while not snooping on traffic containing legal internet content. In the past, the KCSC appears to have used conventional blocking techniques like Domain Name System (DNS) filtering to achieve similar ends—making this a notable technical switch from previous means and one of the first observed uses of SNI-based filtering for national-level content filtering.

The new policy compels internet service providers to monitor the Server Name Indication field, which is an extension of the Transport Layer Security (TLS) protocol. The TLS protocol is the security protocol that encrypts the traffic between an internet browser (application element) and the server hosting the requested content (physical element). In the early days of the internet, because of the way requests were sent, each web address had to be hosted on its own server with its own Internet Protocol (IP) address. Today, through a method called “virtual hosting,” single servers can host multiple web addresses using a single IP address. SNI is the means that enables these servers with single IPs to host multiple web addresses, by helping the server identify and serve the requested domain. The SNI field, then, indicates which host is being requested by the browser.
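To make the virtual hosting idea concrete, here is a minimal sketch in Python of how a server sharing one IP address might use the hostname from the SNI field to decide which site to serve. The hostnames, the IP address, and the `select_site` helper are all hypothetical and exist only for illustration; real web servers do this inside their TLS stack.

```python
# Hypothetical setup: one server, one IP address, several hosted websites.
SERVER_IP = "203.0.113.10"  # documentation-range address, illustrative only

VIRTUAL_HOSTS = {
    "news.example": "content for news.example",
    "shop.example": "content for shop.example",
}


def select_site(sni_hostname):
    """Return the site matching the hostname sent in the SNI field, or None."""
    return VIRTUAL_HOSTS.get(sni_hostname.lower())


# Both requests arrive at the same IP; only the SNI value tells them apart.
print(select_site("news.example"))
print(select_site("shop.example"))
```

Without the hostname in the handshake, the server in this sketch would have no way to tell which of its sites the browser wants.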

Although TLS encrypts the content of the traffic, neither it nor SNI encrypts the requested server name. Because the server name is in the open throughout the data transmission, monitoring the SNI allows ISPs to identify and block server names that have been blacklisted by the KCSC. This avoids the risk of potentially over-filtering by blocking entire IPs, which can again correspond to multiple website domains—some of which may host legal content and others of which may host illegal content.
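The mechanism described above can be sketched roughly as follows. This Python example builds a minimal, simplified TLS ClientHello message (the first message a browser sends), reads the server name out of it, and checks that name against a blocklist. It is an illustration under stated assumptions, not a description of the equipment Korean ISPs actually use: the blocklist entry, the helper names, and the stripped-down ClientHello are all hypothetical.

```python
import struct

# Hypothetical blocklist; the actual KCSC list is not reproduced here.
BLOCKLIST = {"blocked.example"}


def build_client_hello(hostname):
    """Build a minimal ClientHello carrying an SNI extension (demo only)."""
    name = hostname.encode("ascii")
    entry = b"\x00" + struct.pack("!H", len(name)) + name  # type 0 = host_name
    sni_data = struct.pack("!H", len(entry)) + entry
    extensions = struct.pack("!HH", 0, len(sni_data)) + sni_data  # ext 0 = SNI
    body = (
        b"\x03\x03"            # client version
        + bytes(32)            # random (all zeros for the demo)
        + b"\x00"              # empty session id
        + b"\x00\x02\x13\x01"  # one cipher suite
        + b"\x01\x00"          # one compression method (null)
        + struct.pack("!H", len(extensions)) + extensions
    )
    handshake = b"\x01" + len(body).to_bytes(3, "big") + body
    return b"\x16\x03\x01" + struct.pack("!H", len(handshake)) + handshake


def extract_sni(record):
    """Return the plaintext server name from a ClientHello, or None."""
    try:
        if record[0] != 0x16 or record[5] != 0x01:
            return None  # not a TLS handshake record holding a ClientHello
        pos = 5 + 4 + 2 + 32       # record hdr, handshake hdr, version, random
        pos += 1 + record[pos]     # session id
        (cs_len,) = struct.unpack_from("!H", record, pos)
        pos += 2 + cs_len          # cipher suites
        pos += 1 + record[pos]     # compression methods
        (ext_total,) = struct.unpack_from("!H", record, pos)
        pos += 2
        end = pos + ext_total
        while pos + 4 <= end:
            ext_type, ext_len = struct.unpack_from("!HH", record, pos)
            pos += 4
            if ext_type == 0:
                # Skip list length (2 bytes) and name type (1 byte).
                (name_len,) = struct.unpack_from("!H", record, pos + 3)
                return record[pos + 5:pos + 5 + name_len].decode("ascii")
            pos += ext_len
    except (IndexError, struct.error, UnicodeDecodeError):
        return None
    return None


def should_block(record):
    """Decide based only on the server name, never on the page content."""
    name = extract_sni(record)
    return name is not None and name.lower() in BLOCKLIST


print(should_block(build_client_hello("blocked.example")))
print(should_block(build_client_hello("news.example")))
```

Note that the filter in this sketch never needs to decrypt anything: the hostname sits in the unencrypted part of the handshake, which is exactly why the rest of the traffic can stay private while the destination cannot.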

Image reproduced in English from: 불법 음란 사이트 접속차단, 방송통신심의위 심의로 결정 (translation: Blocking Illegal Overseas Websites Is Not Censorship or Infringing Freedom of Expression), http://www.korea.kr/news/actuallyView.do?newsId=148858720.

Traffic filtering using this method is unlikely to be completely effective, as the KCC and the Blue House (Korea’s equivalent to the White House) acknowledge (post in Korean). Depending on their configurations, certain browsers—like Tor—and some virtual private networks (VPNs) will be effective at circumventing the blocking regime, which some online discussion posts have already brought up. In other words, given the legality and availability of VPNs in the Korean market, this method for blocking content is unlikely to be effective in preventing motivated Koreans from accessing illegal information. In addition, an ongoing process at the Internet Engineering Task Force (IETF) is moving to encrypt the SNI field. If this were to happen, ISPs would no longer be able to read server names without decrypting the field first. This would complicate the website filtering process.

One argument for SNI-based filtering over other forms of content filtering, like DNS filtering, is that of precision. Traditional DNS filtering typically blacklists either an IP address or a block of IP addresses. In a world where virtual hosting exists—meaning that a single server with a single IP can host multiple web addresses—blocking entire IPs or blocks of IPs is likely to block more than just the intended web address. By contrast, SNI-based filtering identifies specific web addresses and blocks them.
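The precision argument can be illustrated with a small worked example in Python. The hosting table below is entirely made up (hypothetical hostnames on documentation-range IP addresses); it simply compares which sites become unreachable when a filter blocks the target’s IP address versus when it blocks only the target’s server name.

```python
# Hypothetical hosting table: hostname -> IP address of the server hosting it.
HOSTING = {
    "blocked.example": "203.0.113.10",
    "news.example": "203.0.113.10",   # shares a server with the target
    "shop.example": "203.0.113.10",   # shares a server with the target
    "blog.example": "203.0.113.20",
}

TARGETS = {"blocked.example"}  # the only site the regulator intends to block


def blocked_by_ip(hosting, targets):
    """Blocking the targets' IPs takes down every site on those IPs."""
    bad_ips = {hosting[host] for host in targets}
    return {host for host, ip in hosting.items() if ip in bad_ips}


def blocked_by_sni(hosting, targets):
    """Blocking by server name affects only the named sites."""
    return {host for host in hosting if host in targets}


collateral = blocked_by_ip(HOSTING, TARGETS) - TARGETS
print(sorted(blocked_by_ip(HOSTING, TARGETS)))
print(sorted(blocked_by_sni(HOSTING, TARGETS)))
print(sorted(collateral))
```

In this toy setup, IP blocking makes two unrelated sites unreachable as collateral damage, while name-based blocking touches only the intended target.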

Policy is not made in a vacuum, and the current political moment in Korea sheds a great deal of light on why this change was made now. In early 2018, the #MeToo movement took root in South Korea. The issue driving many people to the street was that of adult videos and photos that were taken without the knowledge or consent of the subject of those photos. For websites hosting such content whose primary operations and servers reside within South Korea, Korean law enforcement is able to seize servers and order websites shuttered, as was the case for Soranet, a revenge porn site.

However, in South Korea—as with much of the world outside of the U.S.—a good deal of internet content that citizens might access is hosted outside of the country. In the absence of a technical filtering regime, Korea would have two avenues to prevent Koreans from accessing these websites. The first is via requests sent directly to content hosts, like Google and Amazon Web Services, which provide servers that power websites, or Twitter and Facebook, whose platforms host content. In these cases, Korea’s capacity to remove illegal content is only as good as the host company’s willingness to comply with Korean governmental requests. Korea’s second option would be to seek mutual legal assistance with a law enforcement entity in the country in which the server hosting the content is located. However, the process of enforcing internet laws through mutual legal assistance treaties (MLATs) is fraught with complications and is usually very slow. The KCSC has, therefore, sought an alternative method of preventing harmful activity.

According to an IETF document, SNI-based filtering has been a known method of filtering internet traffic for some time. However, as of May 2018, it was mostly used by enterprise networks, and there was no clear evidence that the method had been used at scale to institute a national-level content filtering regime. It appears, therefore, that the Korean government may be pioneering a new method for content filtering at the national level.

As Rob has written elsewhere, the spread of repressive internet policies and practices—sometimes called “digital authoritarianism”—is largely a game of copycat. The more that a country provides a viable model for digital governance, the more influential it may become. In other words, as our colleague Jaclyn Kerr notes, the adoption of digital authoritarian policies and practices is driven by the availability of models and the ease with which those models fit with existing capacities and legal frameworks. Proving concepts work—like SNI-based filtering at the national level—could therefore provide a model for governmental abuse in areas where less robust rule of law exists.

That said, it’s likely that motivated Koreans will be able to circumvent the filter; in a Korean context, the blocking isn’t very effective. This raises the question of why the Korean government would bother with the new blocking regime. The answer comes down to domestic politics, as we alluded to above, and this is another example of how, as we have observed in the past, the future of the internet’s architecture and governance is less likely to be decided in international forums than through a series of decisions at the national level around the world.

Also worth noting is that discussions about whether and how to encrypt the SNI field have been underway at the IETF since 2017. Standards organizations like the IETF are able to essentially set the physics of the space (the realities of the environment within which governments and others work), and encrypting the SNI field would make SNI-based filtering via ISPs (who presumably could not efficiently break the encryption) essentially unviable.

It is worth considering how governments deploying SNI-based filtering would react to this new reality. In our view, it is unlikely that they would give up on building technical filtering regimes designed to enforce their laws; they would likely revert to other forms of filtering, like blocking IPs. This raises an interesting question: Given that SNI-based filtering is more precise (less likely to unintentionally block content other than the target content), do we want governments reverting to other filtering techniques, like blocking IPs, that may cause collateral damage? Questions like this underscore the notion, which we’ve highlighted previously, that decisions made at standards bodies like the IETF increasingly carry political implications.

Filtering through the SNI fields of internet traffic is quite different from deep packet inspection or outright blocking thousands and thousands of IP addresses. Nonetheless, South Korea’s national-level filtering program is a novel application of SNI-based filtering, and the ramifications of SNI filtering in Korea do not stop at Korean borders. The risk that this filtering method will spread and be abused around the world is real. However, it is worth acknowledging that other methods for filtering out internet content pre-date this method, and these pre-existing methods are likely more easily scalable.

While Korea may have the requisite checks in place to limit its use of filtering to demonstrably harmful content, like revenge porn, other countries may lack these checks, and “harmful” is an extremely subjective indicator, especially when talking about internet content. Yet at a time in which it’s reasonable for any country to strike some balance between internet openness and internet control (for purposes of blocking cybersecurity threats), the United States and many of its allies hold firm in their resolve that governments shouldn’t touch the global internet. And this is one of the reasons why an authoritarian model of traffic filtering and tight control of the web is compelling. In the face of cyber insecurity, when it comes to doing nothing or doing something, doing something is preferable for most governments.