No One’s Watching the Watch Lists

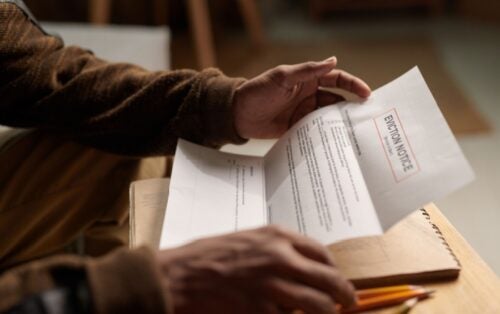

Last week, the U.S. Department of Education unveiled the latest set of tuition watch lists, the annual exercise to “shame” colleges and universities by publicly identifying those with the biggest price increases. The lists were arguably the House and Senate’s most public effort to tackle rising college costs in the 2008 reauthorization of the Higher Education Act, and were trumpeted at the time by various members of Congress as an important step to hold colleges accountable for their costs. Yet after three iterations it is clear that the lists are not a meaningful accountability tool, but the end result of a Congressional punt that avoided tough conversations about college costs and instead created little more than a digital version of Congressmen’s favorite histrionics–finger wagging and stern talking-tos.

The failure is less the lists themselves and more misplaced expectations about what they could accomplish. The cost of college is a complex problem driven by state budgeting practices, instructional delivery, available competition, and a host of other factors that have their own set of solutions and challenges. In that light, transparency is not a bad thing, but it’s an incomplete and insufficient solution that makes for good press releases and tough talk without needing to actually engage in important battles and conversations.

But tempering expectations can’t make up for the morass of lists of limited consumer utility created by Congress. The law requires the production of 54(!) different lists, with six different types of rankings for each of the nine postsecondary sectors. The result is that the 89 schools in the private nonprofit less-than-2-year sector get lists with between one and four institutions on them, while there is no single national ranking.

More importantly, the lists do very little as a consumer tool. Take a look at the highest-tuition public 4-year institutions, a sector that enrolls one-third of students and where costs are high enough to generate some concerns:

If you live in Pennsylvania, the lists tell you that you’re basically screwed. If you don’t live in Pennsylvania, the only thing the lists tell you is that you probably shouldn’t move to Pennsylvania. But for a typical consumer, there’s little of value here. That’s because the lists presume a national college search when most students don’t look much beyond what’s nearby. According to the Beginning Postsecondary Students Survey, the first institution attended by 75 percent of students is within 56 miles of their home. And for students at public or private nonprofit 4-year institutions–the two sectors where students are most likely to travel–half still attend schools within 50 and 100 miles, respectively, of their homes.

Other lists are full of nothing but small, obscure rabbinical schools or tribal colleges that enroll at most dozens of people. For example, look at the top 15 private nonprofit 4-year institutions with the greatest percentage increase in net price:

Now, shaming an institution with fewer students than enroll in the typical introductory psychology course may be important if it truly is ripping off its students. But it’s certainly not effective in a world with limited resources for accountability and enforcement.

Not only do the lists fail to reflect how students actually search, they also lack any other information. The University of Illinois at Urbana-Champaign is more expensive than Chicago State University, but it has a graduation rate of 82 percent compared to 21 percent at Chicago State. Surely the extra $6,000 a year is worth considering for a nearly four times greater chance of completing. That’s why the College Scorecard is much more promising: it combines information not just on cost, but also on graduation, borrowing, and other important things that should be at least part of a student’s college selection process.

So what about as an accountability metric? Institutions on the list are required to submit reports to the Department detailing the drivers of cost and what’s being done to address them. Maybe the result will be thoughtful analyses and careful planning. Or maybe it will be a set of apologias related to state appropriations, personnel costs, or other factors that check the box and allow schools to move on. But realistically, being one of several hundred colleges on dozens of lists isn’t scary–it’s a big crowd that it’s easy to get lost in. That’s why Sarah Lawrence defends its tuition when it ends up as number one on the list, but Wake Forest University, which sits in last place, can keep largely quiet.

In general, the notion of naming and shaming isn’t the worst idea in the world (and it’s certainly better than some of the silly reporting disclosure requirements, such as posting file sharing policies, that Congress also included in the Higher Education Act reauthorization). But the effort needs to decide what it is. If you want a useful consumer tool, then combine it with other targeted information and try to reflect how students actually make choices (and maybe consider enforcing other disclosure requirements around graduation rates that colleges willfully flout). If you want it for accountability purposes, then put out one list of 10 and attach some real penalties. But right now it’s the worst of both worlds. Shaming the 57-student Talmudical Academy of New Jersey doesn’t make consumers any smarter. And taking credit for it as a meaningful attempt to address important and fundamental issues of college cost in this country is just an exercise in misleading buck-passing.