Key Transparency Recommendations for Content Shaping and Moderation

The recommendations below are drawn from the RDR Corporate Accountability Index, and reflect over a decade of civil society and academic research into platform accountability. Many of the companies that RDR ranks (including Facebook, Google, and Twitter) already meet some of these standards, but their disclosures are by no means comprehensive. As we have argued throughout this report, we don’t know nearly enough about how companies use algorithmic systems to determine what we see online—and what we don’t see. Congress should consider requiring internet platforms to disclose key information about their content shaping and content moderation practices as a first step toward potentially regulating the practices themselves.

Access to Key Policy Documents

- Companies should publish the rules (otherwise known as terms of service or community guidelines) for what user-generated content and behavior are or aren’t permitted.

- Companies should publish the content rules for advertising (e.g., what kinds of products and services can or cannot be advertised, how ads should be formatted, and what kind of language may be prohibited in ads, such as curse words or vulgarity).

- Companies should publish the targeting rules for advertising (e.g., whether information about who users are, where they live, and what their interests are can be used to target ads).

Notification of Changes

- Companies should notify users when the rules for user-generated content, for advertising content, or for ad targeting change so that users can make an informed decision about whether to continue using the platform.

Rules and Processes for Enforcement

- Companies should disclose the processes and technologies (including content moderation algorithms) used to identify content or accounts that violate the rules for user-generated content, advertising content, and ad targeting.

- Companies should notify users when they make significant changes to these processes and technologies.

Transparency Reporting

- Companies should regularly publish transparency reports with data about the volume and nature of actions taken to restrict content that violates the rules for user-generated content, for advertising content, and for ad targeting.

- Transparency reports should be published at least once a year, preferably once a quarter.

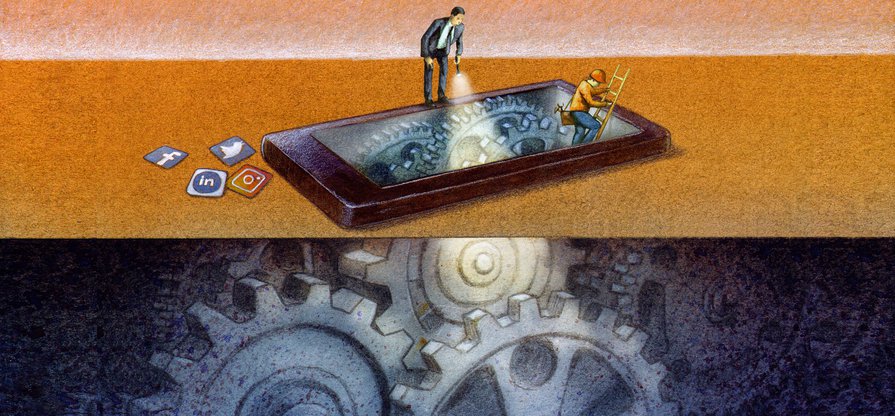

Content-shaping Algorithms

- Companies should disclose whether they use algorithmic systems to curate, recommend, and/or rank the content that users can access through their platforms.

- Companies should explain how such algorithmic systems work, including what they optimize for and the variables they take into account.

- Companies should enable users to decide whether to allow these algorithms to shape their online experience, and to change the variables that influence them.
