Five Recommendations for Community Colleges to Equitably Implement Early Alert Systems

Abstract

Since the existing literature on the impact of early alert systems (EAS) focuses on four-year institutions, we lack widespread understanding of EAS effectiveness for community college students. In addition, very few studies evaluate racial equity implications for EAS, which makes it challenging for community college leaders to translate results to a college context that offers diverse types of sub-baccalaureate credentials and serves more students of color, low-income, non-residential, and working students than the four-year sector. For the research that does exist in a community college context, the findings are inconsistent and inconclusive.

To fill this knowledge gap, we talked with community college leaders, third-party EAS vendors, and experts in the field to understand the challenges surrounding system design/configuration, implementation, use, and perceived effectiveness of EAS at community colleges. We emphasize the importance of mitigating racial discrimination and labeling when using these systems. From our interviews, we identify five of the most common reasons many EAS do not live up to their potential in rendering improved student success and offer five recommendations on how to equitably address those issues. Our report focuses on the early alert feature as a component of a case management system that many community colleges adopt.

Acknowledgments

New America would like to thank Lumina Foundation and the Bill & Melinda Gates Foundation for their generous support of our work. The views expressed in this report are those of its authors and do not necessarily represent the views of the foundations, their officers, or employees.

The authors also thank Shara Davis at Achieving the Dream for serving as an external reviewer, Brittney Davidson at the Ada Center for sharing her expertise in the early stages of the project, and the participating community colleges and vendors who generously committed their time and expertise.

Introduction

We are in the thick of the midterm season, typically a high-stress time of the year when college students across the country are preparing for exams and assignments to show how much of the course material they have retained so far. However, unless you have been a college student in the past decade, you may be unaware that this is also the time of year for midterm faculty surveys. Whether mandatory or optional for faculty to complete, these surveys are an opportunity for professors to identify students who are academically at-risk in a given course. They are also an opportunity for faculty to praise those who are performing well. This is typically done digitally through “flags” (for academically at-risk students) and “kudos” (for high-performing students).

But the jury is still out on whether flags and kudos delivered through early alert systems (EAS) are an effective way to promote academic success, and while there is literature on the impact of EAS at four-year institutions, we lack widespread understanding of their effectiveness for community college students. For the research that does exist in a community college context, the findings are inconsistent and inconclusive.1 As New America wrote last year, critics fear that student support technologies that use predictive analytics, such as EAS, run the risk of reifying racial discrimination and labeling for many college students.2

In response to the drastic community college enrollment declines over the past two years in the United States, community college leaders are under increasing pressure to retain students.3 As a result, many campus leaders are turning to technology to guide the distribution of limited resources to support student success. Yet despite limited empirical evidence demonstrating a positive impact on student outcomes, community college leaders across the country are readily investing in EAS.

Understanding that technology is not a simple solution to college retention and completion problems, we talked with community college leaders, practitioners, and experts in the field to understand the benefits and challenges of implementing an EAS, while paying attention to the importance of mitigating racial discrimination and labeling that are potential by-products of such technology. This report begins to fill in the knowledge gap with qualitative data so we might understand the components of the design/configuration, implementation, use, and perceived effectiveness of EAS at community colleges.

Purpose of Our Study

As of 2019, about four in five community colleges are adopting some variation of a congratulatory and alert system to promote student success.4 However, most of the research on EAS is oversaturated with insights applicable to four-year institutions, with very few studies evaluating the racial equity implications of the use of this technology. It is challenging for community college leaders to translate results from these studies to a context that offers diverse types of sub-baccalaureate (and some baccalaureate) credentials and serves more students of color, low-income, non-residential, and working students.

Since a large percentage of traditionally underserved students in the U.S. attend two-year institutions,5 it is critical to evaluate how EAS are used to improve and remove potential barriers to their academic success. Addressing this knowledge gap will help faculty, staff, and administrators ensure college students reach their academic goals.

In 2020, Joe Biden’s presidential campaign promised billions of dollars to invest in community colleges with evidence-based student success technologies, such as EAS. Although the Build Back Better Act did not materialize after his election,6 this summer the U.S. Department of Education announced a $5 million investment in higher education institutions that primarily serve students of color, to fund evidence-based initiatives that encourage postsecondary retention, transfer, and completion. This grant is known as the College Completion Fund for Postsecondary Student Success. Although it is aimed at four-year historically Black colleges and universities (HBCUs), tribal colleges and universities (TCUs), and minority-serving institutions (MSIs) such as Hispanic-serving institutions (HSIs), the Department is extending “invitational priority” to community colleges that experienced the brunt of enrollment declines during the pandemic.7

A combination of federal investments and institutional motivation to recover from the impact of the pandemic has increased interest in empirical studies to inform student support practices. This study provides insight for community colleges that wish to use this technology equitably and effectively to promote student success.

It is important to understand what is and is not working to guide limited resources to support community college students. Our study provides insights that we gathered from interviewing various community college leaders, third-party EAS vendors, and experts in the field. Through our interviews, we identify the five most common challenges to implementing EAS at community colleges. We hope that our recommendations will help two-year campus leaders and third-party EAS vendors address common challenges with notification and intervention systems so that these systems adequately serve all students and promote student success.

Methods

For this study, we are exclusively interested in understanding the challenges and experiences of implementing early alert systems (EAS), a component of a case management system, at community colleges.

From November 2021 to April 2022, we gathered qualitative data through semi-formal virtual video interviews, an invaluable source of information to explore community college leaders’ experiences, challenges, and nuances of implementing technology with EAS. We also interviewed third-party EAS vendors and experts in the field.

Community colleges, third-party EAS vendors, and experts were selected based on New America’s research, as well as suggestions from researchers and prominent experts in the field, including Achieving the Dream. All interviewees remain anonymous in the report.

Selection Criteria

Community colleges met three or more of the following criteria:

- Nonprofit community college

- Implemented EAS no later than 2016

- Used a third-party vendor’s EAS platform

- Used EAS to improve student retention and completion in associate degree programs

- Provided permission for New America to speak with campus leaders about EAS

The majority of community college leaders we spoke with hired an external technology vendor to facilitate their implementation of an EAS. A small percentage of our interviewees used an EAS tool that they either developed internally or personalized extensively to fit their unique student population.

Limitations

Due to the limited scope of the project, our qualitative data do not account for students’ experiences with EAS at community colleges.

Limitations of interview data include, but are not limited to, the fact that interviewees may not feel encouraged to provide accurate, honest answers or may not be fully aware of their reasons for any given answer, all of which is beyond our ability to control.

The selection of interviewees is not nationally representative and thus cannot be generalized across all community colleges in the country. In addition, the qualitative findings cannot address causality of the effectiveness of EAS on student success outcomes. However, the findings shed light on some perceptions, beliefs, and experiences of implementing EAS at community colleges.

Citations

- Lori Jean Dwyer, “An Analysis of the Impact of Early Alert on Community College Student Persistence in Virginia” (PhD dissertation, Old Dominion University, 2017), source.

- Monique O. Ositelu and Alejandra Acosta, “The Iron Triangle of College Admissions: Institutional Goals to Admit the Perfect First-Year Class May Create Racial Inequities to College Access,” EdCentral (blog), New America, October 6, 2021, source.

- Rachel Fishman, Sophie Nguyen, Shelbe Klebs, and Tamara Hiler, “One Year Later: COVID-19’s Impact on Current and Future College Students,” EdCentral (blog), New America, June 30, 2021, source.

- See page 14 of 2019 Effective Practices for Student Success, Retention, and Completion Report (Cedar Rapids, IA: Ruffalo Noel Levitz, 2019), source.

- Ashley A. Smith, “Study Finds More Low-Income Students Attending College,” Inside Higher Ed, May 23, 2019, source.

- The Build Back Better Framework intended to set the U.S. on course to meet its climate goals, create millions of good-paying jobs, enable more Americans to join and remain in the labor force, and grow our economy from the bottom up and the middle out. “The Build Back Better Framework,” The White House, source.

- “New $5M Grant Program for Student Retention, Success,” Community College Daily, August 11, 2022, source.

Background

How a Typical Early Alert System Works

While the types of interventions offered to students may vary across colleges, the purpose of an EAS is to identify academically at-risk students. Interventions can range from a simple nudge or notification to more “intrusive” individualized approaches. Nudges or notifications can be phone calls, text messages, and/or emails from an early alert staff member to students informing them about their academic behavior.1

An EAS typically entails a systematic process that includes at least two key steps: alerts and interventions.2 Alerts are formal, proactive feedback levers that send “flags” about student behavior to signal to the college that additional support is needed. Flags are triggered by students’ academic (e.g., poor performance on an assignment, low letter grade, absence) and non-academic (e.g., financial issue, inappropriate conduct, lack of transportation) behavior and are sent to institutional support staff who can intervene.

Interventions are the next step, which typically includes some strategic method of outreach to connect students to the appropriate resources to address the issue(s) identified through the alert system. For example, academic interventions may include tutoring, meeting with an advisor, or assigning a mentor to the student.3 Reflective of the literature, many vendors’ EAS features and the campus leaders we spoke with go beyond academic performance to trigger early alerts for social/emotional indicators.4 Responses to such indicators5 include, but are not limited to, financial aid support such as emergency grants funded by the CARES Act6 or referrals to other supports like mental and medical health care, child care, transportation, housing, and food.7
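
The two-step process of alerts and interventions can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of any vendor's product: the reason codes, the `Flag` class, the `suggest_interventions` function, and the mapping from reasons to interventions are all hypothetical and are drawn only from the examples named above.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical reason codes, based on the examples given in the text.
ACADEMIC_REASONS = {"poor_assignment_performance", "low_grade", "absence"}
NON_ACADEMIC_REASONS = {"financial_issue", "inappropriate_conduct", "lack_of_transportation"}

# Illustrative mapping only; a real college would define its own rules.
EXAMPLE_INTERVENTIONS = {
    "poor_assignment_performance": ["tutoring", "mentor assignment"],
    "low_grade": ["tutoring", "advisor meeting"],
    "absence": ["advisor meeting"],
    "financial_issue": ["emergency grant"],
    "lack_of_transportation": ["transportation referral"],
}


@dataclass
class Flag:
    """Step 1: an alert raised about one student in one course."""
    student_id: str
    course: str
    reason: str

    @property
    def category(self) -> str:
        if self.reason in ACADEMIC_REASONS:
            return "academic"
        if self.reason in NON_ACADEMIC_REASONS:
            return "non-academic"
        return "unknown"


def suggest_interventions(flag: Flag) -> List[str]:
    """Step 2: look up candidate interventions for a flag.

    Reasons without a predefined response fall back to human review.
    """
    return EXAMPLE_INTERVENTIONS.get(flag.reason, ["staff review"])
```

In this sketch, a flag with the reason `financial_issue` is categorized as non-academic and maps to an emergency grant, while a reason with no predefined response is handed to a staff member for review rather than being dropped.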

It is important to note that not all EAS are implemented the same way.8 Community colleges can use different types of flags and combinations of student behavior data to inform the alert system. In addition, colleges vary in the interventions offered and their alert frequencies. Some update students in real time and others identify students once per semester during midterms.9

Most colleges use predictive analytics software developed by a third-party vendor, but some community colleges opt to develop an EAS tool internally to cater to the unique needs of their student body.10 Yet despite the wide variation in development and use, EAS typically use student behavior data, demographics, and self-survey data on students to identify who is likely to struggle academically.11

Studies Evaluating EAS Show Mixed Results

Four-Year Institutions

In a 2020 survey by the Gardner Institute for Excellence in Undergraduate Education, about 40 percent of responding four-year institutional practitioners reported “improved retention and graduation rates” as a result of using an EAS.12 However, survey data are limited because they capture perceived rather than measured effectiveness, and national studies analyzing the impact of early-alert models find “very little empirical evidence to validate the use of these programs.”13

Yet some evidence suggests that early alert interventions are more effective within designated programs (such as STEM programs) or small sub-populations (such as first-year students).14 Nevertheless, the existing research has produced very little empirical evidence to validate or invalidate the use of EAS. Future research that goes beyond perceptions reported in surveys is needed.

Two-Year Institutions

The few studies focusing on community college students have almost exclusively evaluated EAS effectiveness for sub-populations like developmental education and online students.15 Although community college leaders rate EAS in the top five very effective practices16 to retain online learners, only 13 percent17 believe their EAS is a very effective strategy for student retention and completion for the entire student body. Echoing the collective sentiment from our interviews with community college leaders, the literature suggests EAS are a “well-meaning investment [that] often fails to produce results”18 on student success outcomes.

From our interviews with third-party vendors, community college leaders, and experts in the field, we identify five of the most common reasons many EAS do not live up to their potential in rendering improved student success outcomes. To support community colleges’ efforts to use EAS to promote student success, we provide the following five recommendations as solutions to pressing challenges, and close with a discussion of a framework to guide equity-minded implementation of EAS.

Citations

- Hanover Research, Early Alert Systems in Higher Education (Washington, DC: Hanover Research, 2014), source.

- Hanover Research, Early Alert Systems in Higher Education.

- Manuela Ekowo and Iris Palmer, Predictive Analytics in Higher Education: Five Guiding Practices for Ethical Use (Washington, DC: New America, 2017), source.

- Hanover Research, Early Alert Systems in Higher Education.

- Ekowo and Palmer, Predictive Analytics in Higher Education.

- The Coronavirus Aid, Relief, and Economic Security Act or, CARES Act, was passed by Congress on March 27, 2020. This bill allotted $2.2 trillion to provide fast and direct economic aid to the American people negatively impacted by the COVID-19 pandemic. Of that money, approximately $14 billion was given to the Office of Postsecondary Education as the Higher Education Emergency Relief Fund, or HEERF.

- Hanover Research, Early Alert Systems in Higher Education.

- Manuela Ekowo and Iris Palmer, The Promise and Peril of Predictive Analytics in Higher Education: A Landscape Analysis (Washington, DC: New America, 2016), source.

- Ekowo and Palmer, The Promise and Peril of Predictive Analytics.

- Serena Klempin, Markeisha Grant, and Marisol Ramos, Practitioner Perspectives on the Use of Predictive Analytics in Targeted Advising for College Students (New York: Community College Research Center, 2018), source.

- Klempin, Grant, and Ramos, Practitioner Perspectives.

- Zachary Michael Jack, “Early-Alerting Early-Alert Systems on College Campuses,” Front Porch Republic (website) January 13, 2020, source.

- Jack, “Early-Alerting Early-Alert Systems on College Campuses.”

- Hanover Research, Early Alert Systems in Higher Education.

- Dwyer, “An Analysis of the Impact of Early Alert on Community College Student Persistence in Virginia.”

- See page 5 of 2015 Student Retention and College Completion Practices Benchmark Report (Coralville, IA: Ruffalo Noel Levitz, 2015), source.

- See page 14 of 2019 Effective Practices for Student Success, Retention, and Completion Report.

- “3 Reasons Why Your Early-Alert Program Is Falling Short,” Education Advisory Board (EAB) (website), February 19, 2019, source.

Five Common Challenges and Recommendations

Challenge 1: Uncertainty about How to Navigate Procurement

Choosing an EAS can be time-consuming and difficult. It is a big decision that can cost thousands of dollars. It can lock a school into a particular system well into the future, even if the system turns out not to work as advertised. At the same time, administrators do not always know the best questions to ask vendors or the answers they should expect. Even more complicated, salespeople do not always know the answers to technical questions around data integration and data use.

Many college leaders believe salespeople pitch a product during the initial conversation that is good in principle but often falls short in practice. The community college practitioners we spoke to said that third-party EAS vendors can be misleading about the automated functionalities of a system, when in fact many processes require manual integrations. Or third-party vendors sometimes misinform community colleges to believe their EAS can link to other college platforms, which is not always possible in practice.

The community college needs to be sure that any tool it chooses will work well for its particular student population, have the flexibility to provide what the college needs in a timely manner, include data transparency, ensure data privacy and security, work interoperably with existing data systems, and support evaluation and professional development.

Recommendation: Make an informed decision between using a third-party vendor or developing an internal system, and be intentional about which decision-makers are at the table.

To decide what tool to procure, the college should bring together a diverse group of stakeholders to evaluate how the school plans to use it and how it will fit into existing workflows. This group should include someone in institutional leadership who is charged with leading the process, information technology staff members, institutional research staff, key faculty members, student advisors and navigators, other student support staff, student representatives, and communications staff. For many college leaders we interviewed, this diverse group of professionals participates at various stages of the procurement process. This group helps to decide:

- What functionality the system should have. The leadership team should decide what the most important functions are. Many times it is tempting for colleges to think about how EAS can solve all of their student support problems. However, many of the most effective EAS are focused on solving one or two problems well. That is what colleges indicated in a 2017 Tyton Partners’ survey, which found that the majority of schools believe more focused solutions perform better than systems with a lot of different functionality.1

- How the system might need to be customized to the college. When the group decides on the most important functionality, it should also think through how much of that functionality will require customization. The more unusual the functionality, the more likely a college will need to customize its tool. That customization can be very expensive to create and maintain.

- Whether the college should purchase a system or build its own. The questions around use and customization may help a college decide whether to build its own EAS rather than going with a vendor-created system. But a community college must start with one question first: does it have the staff capacity to create and maintain an EAS? If the answer to that question is yes, the leadership team may want to explore some other reasons for creating a system in-house rather than buying a product. If a college wants to evaluate interventions that take discrete, nimble analysis, it can be easier to keep that analysis in-house rather than trying to work through a third party. If the team concludes that any system will need a lot of customization, that too could mean the college should build its own system. Cost of purchasing a system is another consideration. However, in-house systems are not always as inexpensive as they seem. Thousands of staff hours can go into creating and maintaining these systems, and many colleges end up setting them aside for a purchased product because they are too difficult to maintain through staff turnover. People we spoke to at one college described the challenge of maintaining their in-house system when institutional knowledge was lost because of the retirement of staff.

- What kind of privacy and security protections are needed. This team should think through who at the college will have access to which student data. Figuring out what kinds of permissions the system can have so it protects student privacy and supports the school’s information security is a key consideration for any system.

- What kind of process the school needs around messaging alerts. We know that messages about seeking help can be discouraging to students who are struggling. As one administrator we talked to said, "a strong procurement process involves stakeholders of diverse backgrounds and experiences, where student voices are included as well. Students can share feedback on what different kinds of alerts mean to them to inform the type of language to use and whether systems are user-friendly for students." To avoid harm, messages to students should be accurate, timely, and framed as strength-based rather than deficit-based.2

Challenge 2: Low Faculty Buy-In

Faculty members play a fundamental role in the use of EAS within community colleges. Professors see the different challenges that students face firsthand. One college interviewee said that their campus initially considered adopting an EAS because faculty collectively asked the college to do so, based on the needs they witnessed from their students. However, it is common for many community colleges to struggle with faculty buy-in when implementing an EAS.

Many college administrators shared reasons why faculty were reluctant to use an EAS. Some faculty did not believe in the promises of the system because of a lack of proof of the short-term and long-term benefits of EAS for academic performance. Others had reservations about handling student data. Others were not interested in being held accountable to a system that they were not familiar with, while some were discouraged by previous experiences in which they felt left in the dark about what happens to students once they initiate a flag. Without adequate faculty buy-in, EAS will not work because these systems depend on faculty input for flagging alerts and engaging with student interventions.

Recommendation: Include faculty feedback during the procurement and pilot phase, require faculty training on how to use EAS that includes DEI and implicit bias training, and be considerate of faculty workload.

To develop faculty buy-in, colleges should involve faculty in the procurement and pilot phase prior to adopting an EAS or developing an in-house system. Because faculty so often raise the alerts, their input is imperative, and their feedback should be carefully considered. This can be done by creating faculty focus groups or including faculty (adjunct faculty as well) on an EAS advisory committee during the pilot phase. This allows them to collaborate with administrators on ways to understand the context of signs that would cause them to initiate an alert. This inclusion would also help cultivate the faculty-administrator relationship that is critical to EAS implementation. Additionally, faculty can advise colleges on ways to personalize inputs of the system (e.g., pre-populated flag types based on common student behavior or options to personalize alerts) so that faculty members are not discouraged or overwhelmed by the types of flags to raise.

Faculty should be informed about the goals and outcomes administrators hope to achieve by implementing EAS so that institutional efforts are aligned across all offices and departments. Colleges can do this by sharing problem statements and the vision with faculty or by sharing evidence of the advantages of EAS and best practices adopted by other community colleges. This will help signal that this is not just another system adoption. Colleges should also implement mandatory professional development training so faculty can learn how to use the alert system and establish a form of accountability for faculty to continue to use the system and improve their practices. It is key that faculty members understand how and why flags are raised and how their participation feeds into institutional goals.

To cultivate equitable use of EAS, faculty training must include diversity, equity, and inclusion (DEI) and implicit bias training. Because community colleges enroll a large percentage of racially minoritized, low-income, and working students, alert notifications can sometimes be mistakenly triggered by faculty who are not knowledgeable about teaching students from diverse backgrounds.3 These alerts can have negative effects on students of color.4 Without these trainings, colleges run the risk of embedding biases into the system, resulting in flagging certain students at higher rates and inaccurately labeling students as academically at-risk. These trainings can open doors to inclusive opportunities for colleges to personalize features of their systems and equip faculty to meet the needs of minority students.

Another way to encourage faculty participation is to keep faculty informed, through a feedback loop, about what intervention(s) a student did or did not receive once a flag is raised. For example, if a student is flagged for missing an assignment, the professor should be kept in the loop on what the advisor or assigned departmental staff is doing to intervene after the flag is raised. Tailoring EAS to update faculty members with notifications about the stages of an intervention institutes an additional layer of accountability and can help ensure students’ cases do not fall through the cracks. This will also allow professors to know how to continue to support their students and navigate next steps, depending on where students are in the EAS cycle.

Lastly, considering that many faculty members at community colleges are adjunct professors, administrators must be mindful of faculty members’ other responsibilities so that they do not discourage faculty from using the system. Flagging multiple students and keeping track of alerts can quickly become taxing, especially for faculty with bigger class sizes. It will be helpful to create built-in tools that allow faculty to easily fulfill their role within EAS. Colleges should create an atmosphere where faculty members are not just using EAS to fulfill their responsibilities, but genuinely understand the results their participation can yield.5

Challenge 3: Failure to Supply Appropriate Support Services

Although EAS are designed to help colleges identify students who are academically at-risk or who need non-academic support services, administrators shared with us how connecting students with the right support services can be a challenge with their alert system. The top two reasons are:

- Student support staff are overwhelmed. Some colleges do not have a clear or adequate process for handling all open cases across departments, which risks overburdening EAS staff with alerts. For some colleges, once a faculty member raises a flag, it takes a considerable amount of time for an EAS representative to review the flag, reach out to the student, and connect them to the appropriate support service. Unfortunately, as we heard from some EAS staff at community colleges, many students and faculty members assume there is a call center managing the influx of alerts quickly and efficiently. However, the reality is that it is usually one or two staff members managing the alerts, and they often have additional responsibilities outside of EAS. As a result of this limited staffing, flags sometimes fall through the cracks, with many students not receiving the support they need.

- Messaging is ineffective. When faculty members send alerts and/or when staff members reach out to students to connect them to an intervention, both messaging approaches must be treated with care so that students' needs are adequately met.6 One administrator we interviewed shared how their college did not think much about the word “probation” when used in the context of financial aid or a student’s academic standing. However, this college primarily serves students of color, and so the students interpreted the term “probation” in a negative context that adversely affected their reception of the notifications.7 This resulted in decreased student engagement with the interventions the college was attempting to offer.

Recommendation: Build up staff capacity in monitoring early alerts, develop a process to streamline flags between offices, and establish inclusive communication practices with students.

One campus administrator told us that “one coordinator cannot manage all the interventions that need to be done with alerts.” This person candidly expressed that community colleges must address staffing needs to manage and monitor EAS flags, especially for institutions that enable manual alerts to be submitted throughout an academic term. Increasing staffing is a challenge for many community colleges that have limited financial resources to hire additional staff. Yet colleges must think of innovative funding mechanisms, through applying for grants and leveraging technical assistance organizations, such as Achieving the Dream, to support staffing needs to manage alerts.8 If colleges do not build staff capacity, rethink procedures, and create institutional policies to support EAS infrastructure, they risk students falling through the cracks and worse: a decline in student success outcomes.

It is imperative to develop an efficient process that streamlines flags initiated by faculty to connect students to appropriate interventions. One community college interviewee said that their process uses an intermediary staff member who receives the alert and then connects students to the appropriate interventions. Another community college leader told us that their school programmed their system to send flags directly from faculty in the classroom to the appropriate department that houses the intervention. For example, if a student is experiencing financial issues, the professor’s flag is immediately sent to a financial aid representative who then connects with the student to provide an emergency grant. Both options have pros and cons, yet both rely heavily on staffing. Without bolstered staff capacity, EAS will continue to be dogged by the perception that flags are lost in a black hole and students are not receiving support.
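
The two routing models described above can be compared with a short Python sketch. It assumes a hypothetical lookup table from flag reasons to departments and a hypothetical `eas_coordinator` queue; none of these names come from the colleges we interviewed, and a real system would need its own mapping.

```python
from collections import defaultdict
from typing import Dict, List

# Hypothetical mapping from a flag reason to the office that houses the intervention.
DEPARTMENT_FOR_REASON = {
    "financial_issue": "financial_aid",
    "missing_assignment": "advising",
    "lack_of_transportation": "student_services",
}


def route_flag(reason: str, mode: str = "direct",
               intermediary: str = "eas_coordinator") -> str:
    """Return the name of the queue that should receive a flag.

    mode="intermediary": every flag goes to one staff member who triages it.
    mode="direct": the flag goes straight to the responsible department;
    reasons with no known department fall back to the intermediary.
    """
    if mode == "intermediary":
        return intermediary
    if mode == "direct":
        return DEPARTMENT_FOR_REASON.get(reason, intermediary)
    raise ValueError(f"unknown routing mode: {mode}")


def queue_sizes(reasons: List[str], mode: str) -> Dict[str, int]:
    """Count how many flags land in each queue under a routing mode."""
    sizes: Dict[str, int] = defaultdict(int)
    for reason in reasons:
        sizes[route_flag(reason, mode)] += 1
    return dict(sizes)
```

Calling `queue_sizes` on the same list of flags under each mode shows where the workload lands: the intermediary model concentrates every flag on one person, while the direct model spreads flags across offices. Either way, the counts are a reminder that each queue needs staff behind it.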

Lastly, colleges should carefully consider the type of language used in message notifications to students. A recurring theme from our conversations with community college administrators is the importance of effective messaging when sending early alerts to students. The choice of words used to alert or intervene is critical in effectively connecting with students and encouraging them to use appropriate student support services.9 Mindfulness about inclusive language when creating messages helps ensure that students are receptive to the notifications and interventions.10 One community college leader shared that their school hired an external consulting company to revamp the messaging for their EAS. Although it was an expensive collaboration, it paid dividends in effectively communicating with their student population, who are primarily students of color, the person we spoke to said. Not only did the consultants help the college implement inclusive language, which increased student engagement with the EAS process, but the collaboration was also an eye-opening experience for administrators, prompting them to reevaluate their positions of privilege to ensure they meet all of their students’ needs. Effective messaging ensures that targeted approaches or support services are reaching the students they were designed to support. Activating the student voice in system design, configuration, testing, and implementation is critical for an effective and efficient EAS.

Challenge 4: Inadequate Evaluation of EAS Data

The effectiveness of an EAS is determined by how well it is used by faculty members. However, many community colleges focus so narrowly on faculty inputs that they evaluate EAS data based only on the frequency of flags triggered by faculty and the rate of faculty participation. Limiting EAS evaluation to these superficial analyses poses a major challenge to fully understanding what is and is not working with a college’s alert system. The frequency of flags and the rate of faculty participation do not dig deep enough to reveal how flags move from faculty alerts to the connection of students with services. Looking only at these factors also does not help college leaders understand the impact interventions have on students’ academic and non-academic outcomes. Yet the majority of the colleges and vendors we spoke with focus their EAS evaluations on those two measures. This restricts insight to a small aspect of the system and keeps colleges from truly understanding whether the system is working to promote student success.

Almost none of the community colleges and vendors we spoke with had previously evaluated flag frequency across key demographic characteristics such as race, gender, and full-time status, and many had not considered this approach before our interview. To not disaggregate data is to accept ignorance about how sub-populations of students are faring. Wearing these blindfolds prevents colleges from implementing student-centered approaches with EAS and is a disservice to students. Many colleges do not uncover the full potential of EAS because of data evaluation limitations they impose on themselves.

Recommendation: Go beyond surface analyses of flag frequency and faculty participation, and disaggregate data by demographics, intervention type, and student outcomes.

The leadership team should think about what student success looks like for an EAS at their college and how to measure it. For many colleges represented in our sample, success was defined in terms of fall-to-fall retention, course completion rates, and graduation rates. Once colleges understand how they want to measure student success, they should come up with a measurement strategy to support regular evaluation. To guide interim evaluations, measurement strategies might include research questions like:

- Are certain types of students more likely to receive alerts than others? Why?

- How do students who receive alerts tend to perform compared to students who do not?

- What kinds of interventions in response to alerts seem to work best for students?

- How many alerts remain open without students’ needs being properly addressed?

- How long do alerts stay open before they are closed?

- Do closed alerts mean that students received adequate interventions? If not, which types of students are receiving interventions and which are not?

Disaggregating data by key demographic characteristics and answering these questions at regular intervals will help ensure the system is helping the college reach its student success goals. This approach can improve student-centered practices and streamline the process from faculty alerts to the timely provision of interventions.
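As a rough illustration of how an institutional research office might begin answering the research questions above, the sketch below computes a few of the suggested measures. The records, field names (`group`, `retained`, `opened`, `closed`), and function names are hypothetical and not drawn from any vendor's export format. The results are descriptive only: they show where gaps exist, not why.

```python
from collections import defaultdict
from datetime import date

# Hypothetical student and alert records; "group" stands in for any
# demographic characteristic (race, gender, full-time status).
students = [
    {"id": 1, "group": "A", "retained": True},
    {"id": 2, "group": "A", "retained": False},
    {"id": 3, "group": "B", "retained": True},
    {"id": 4, "group": "B", "retained": True},
]
alerts = [
    {"student_id": 1, "opened": date(2022, 2, 1), "closed": date(2022, 2, 4)},
    {"student_id": 2, "opened": date(2022, 2, 1), "closed": None},
    {"student_id": 2, "opened": date(2022, 3, 1), "closed": date(2022, 3, 11)},
    {"student_id": 3, "opened": date(2022, 2, 10), "closed": date(2022, 2, 15)},
]


def flag_rate_by_group(students, alerts):
    """Share of students in each group who received at least one alert."""
    flagged = {a["student_id"] for a in alerts}
    totals, hits = defaultdict(int), defaultdict(int)
    for s in students:
        totals[s["group"]] += 1
        if s["id"] in flagged:
            hits[s["group"]] += 1
    return {g: hits[g] / totals[g] for g in totals}


def open_alert_count(alerts):
    """Number of alerts that have never been closed."""
    return sum(1 for a in alerts if a["closed"] is None)


def mean_days_to_close(alerts):
    """Average days between opening and closing, over closed alerts only."""
    days = [(a["closed"] - a["opened"]).days for a in alerts if a["closed"]]
    return sum(days) / len(days) if days else None


def retention_by_alert_status(students, alerts):
    """Retention rate for students with and without any alert."""
    flagged = {a["student_id"] for a in alerts}
    rates = {}
    for label, has_alert in (("alerted", True), ("not_alerted", False)):
        cohort = [s for s in students if (s["id"] in flagged) == has_alert]
        rates[label] = (
            sum(s["retained"] for s in cohort) / len(cohort) if cohort else None
        )
    return rates
```

Running the same functions each term, and repeating them for each demographic characteristic and intervention type, would give the regular, disaggregated view the recommendation calls for.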

Challenge 5: Difficulty Shifting the Use of EAS during the Pandemic

By the end of the 2020-21 academic year, community colleges had felt the brunt11 of the COVID-19 global health pandemic: enrollment had declined by 10 percent12 and retention rates by 2.1 percentage points.13 These unprecedented declines, coupled with the transition to emergency distance learning, made it difficult for colleges to seamlessly adopt and effectively use EAS. It was challenging for many community colleges to monitor students’ attendance and identify students’ needs in a virtual setting. For example, inconsistent attendance policies for remote learning made it difficult for faculty to input correct information regarding absences into the system. There was a general concern during the height of the pandemic that students were not flagged accurately because faculty members were unable to rely on traditional ways of identifying at-risk academic behaviors.

During the peak of the pandemic, there were countless reasons for absences and falling behind academically: testing positive for COVID-19, being exposed to the virus, caregiving for sick family members, homeschooling children, lacking access to broadband internet or not owning a laptop, and more. Many college administrators grappled with how to swiftly identify and connect students to appropriate interventions in a remote context. Furthermore, many colleges struggled to define what a successful intervention looked like virtually. These challenges called for colleges to be flexible and discover new ways to meet students’ needs. A third-party EAS vendor shared one example: one of the community colleges it works with hosted virtual advisor meetings on Saturdays to offer flexible slots for students with inconsistent weekly work schedules. This innovative thinking was one way to pivot EAS interventions during the height of the pandemic.

Recommendation: Redefine student engagement and attendance policies, holistically support students by strengthening student support services, and maintain the personal touch within EAS.

Although most colleges are back to in-person classes, lessons from the pandemic are important as higher education increasingly moves to hybrid learning. Colleges must redefine what constitutes an alert in different learning modalities and ensure that institutional policies are aligned and clear for faculty to understand and implement an EAS in a hybrid environment.

The pandemic was an unexpected opportunity for community colleges to reevaluate and approach student success more holistically and to strengthen retention and completion efforts. We heard from some community college leaders that emergency distance learning caused a ripple effect, increasing student access to mental health counseling and one-on-one meetings with professors through virtual engagement. Once colleges addressed the disparities in access to broadband internet and laptops, online meetings reduced the equity gap in accessing campus resources and staff because they gave more students (e.g., working students) and faculty the flexibility to interact. Colleges must continue to provide hybrid support services to maximize the accessibility benefits that virtual interactions offer.

Colleges should also emphasize the use of EAS flags for more than signaling a need for academic support services. Demand for mental health services among community college students skyrocketed during the pandemic, and more student support services were needed.14 An EAS vendor shared with us how “the pandemic forced colleges to not just see students as academic performers, but take a more holistic approach” in meeting their needs. Student support staff must improve their practices to adapt to the growing demand for non-academic support services.15

However, maintaining the personal touch when using EAS is key.16 Although EAS can be effective in promoting student success, students may be discouraged from engaging with a notification or an intervention if they believe the notification came from a bot rather than a person. With reliance on technology increasing because of the pandemic, it is imperative that each flagged student receives some form of personalized engagement from faculty and staff.17

Citations

1. See page 8 of Gates Bryant, Jeff Seaman, Nicholas Java, and Kathryn Martin, Driving Toward a Degree: The Evolution of Academic Advising in Higher Education, Part 1: State of the Academic Advising Field (New York: Tyton Partners, 2017), source.

2. A strength-based approach identifies and promotes students’ strengths to address an academic or non-academic issue. In contrast, a deficit-based approach solely identifies the existing academic or non-academic issue.

3. Edward Pittman, “Beyond Implicit Bias,” Inside Higher Ed, May 20, 2021, source.

4. Ewaoluwa Ogundana and Monique O. Ositelu, PhD, “Early Alert Systems: Why the Personal Touch is Key,” EdCentral (blog), New America, March 15, 2022, source.

5. Claudine Bentham, “Faculty Perspectives and Participation in Implementing an Early Alert System and Intervention in a Community College” (doctoral dissertation, University of Central Florida, 2017), source.

6. Ewaoluwa Ogundana, “Messaging Matters When Using Early-Alert Systems,” EdCentral (blog), New America, May 24, 2022, source.

7. Shannon T. Brady, “A Scarlet Letter? Institutional Messages About Academic Probation Can, but Need Not, Elicit Shame and Stigma” (doctoral dissertation, Stanford University, 2017), source.

8. Achieving the Dream (ATD) is a network of more than 300 community colleges across the U.S. that provides institutions with holistic, tailored support for every aspect of their work. See source.

9. Ross O’Hara, “4 Best Practices for Excellent Digital Communication,” EDUCAUSE Review (website), June 22, 2020, source.

10. Alejandra Acosta, How You Say it Matters: Communicating Predictive Analytics Findings to Students (Washington, DC: New America, 2020), source.

11. Nina Chamlou, “The Effects of COVID on Community Colleges and Students,” Affordable Colleges (website), October 13, 2021, source.

12. “Current Term Enrollment Estimates,” National Student Clearinghouse Research Center (website), May 26, 2022, source.

13. Persistence and Retention: Fall 2019 Beginning Cohort (Herndon, VA: National Student Clearinghouse Research Center, July 2021), source.

14. Chris Geary, “The Growing Mental Health Crisis in Community Colleges,” EdCentral (blog), New America, May 3, 2022, source.

15. Chris Geary, “Colleges Can Redesign Advising to Better Support Students,” EdCentral (blog), New America, August 9, 2022, source.

16. Ogundana and Ositelu, “Early Alert Systems: Why the Personal Touch is Key.”

17. Alejandra Acosta, “Coronavirus Throws Predictive Algorithms Out for a Loop,” EdCentral (blog), New America, May 4, 2020, source.

Discussion: How to Avoid Racial Discrimination and Labeling Pitfalls

“[The EAS] is going to reflect the biases that are out there. Just because it's technology does not mean it's going to be immune from biases.”

— Community college leader interviewee, spring 2022

Although many of the community college leaders and third-party EAS vendors we spoke with had not previously evaluated EAS data by race/ethnicity, they all believe it is critical to do so in order to avoid the inherent potential of EAS to racially discriminate and label. Yet many campus leaders were unsure how to use their EAS tool to mitigate such biases.

One of the community college administrators we spoke with admitted that their school recently witnessed an increase in flags for Black male students. To find out whether this disproportionate increase in flags reflects lower academic performance among Black male students who need additional student support services or the perpetuation of implicit biases and racial stigma, this college plans to evaluate the nature of the flags, the faculty triggering the flags, and student outcomes. Interestingly, this particular college is not new to alert and intervention systems, having gone through years of various EAS iterations. Yet only after a decade of familiarity with EAS is this college taking a step back to ask whether its systems are potentially perpetuating racial discrimination and labeling.

Many of the community colleges participating in this study have been implementing EAS for at least six years, with some having used them for a little over a decade, but it was not until our conversations that they had the opportunity and space to think about the racial implications of their EAS tool. This reveals the complexity of mission-driven community colleges. They are often so strapped for resources and staff time that many lack the capacity to evaluate their EAS data to ensure that the predictive analytic tool, fed by faculty input, is not perpetuating systemic discrimination.

Many of our interviews turned out to be the start of future conversations among college leaders and vendors about how to ensure their systems serve all students and do not do a disservice to students of color. Even at this early stage, there is a collective appetite among both community colleges and vendors to implement EAS through an equity-conscious approach.

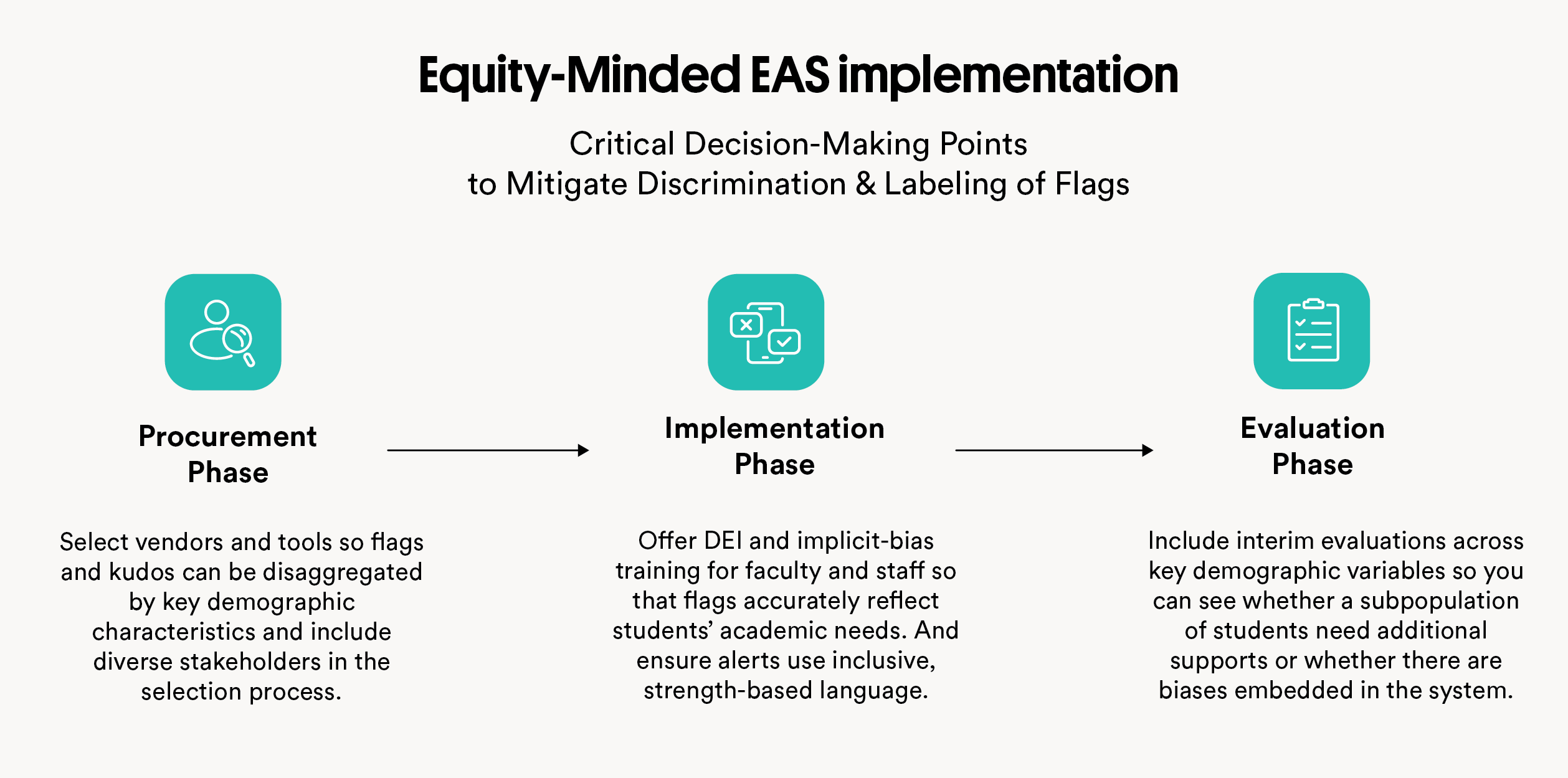

To guide campus leaders and vendors through an equity-minded implementation of EAS, we created a framework (see Figure 1) for community colleges and vendors. The tool highlights three major decision-making phases in the adoption and implementation processes, where taking additional care around issues of equity can mitigate the inherent biases of technology.

This framework brings together the five recommendations in this report in a cohesive visual format. Community colleges and vendors must activate student perspectives in design, configuration, testing, and implementation to ensure an equitable, student-centered approach. By applying a critical lens to the equity implications of the procurement, implementation, and evaluation phases, colleges have the opportunity to mitigate biases.