The Problem of Misinformation in a Democracy

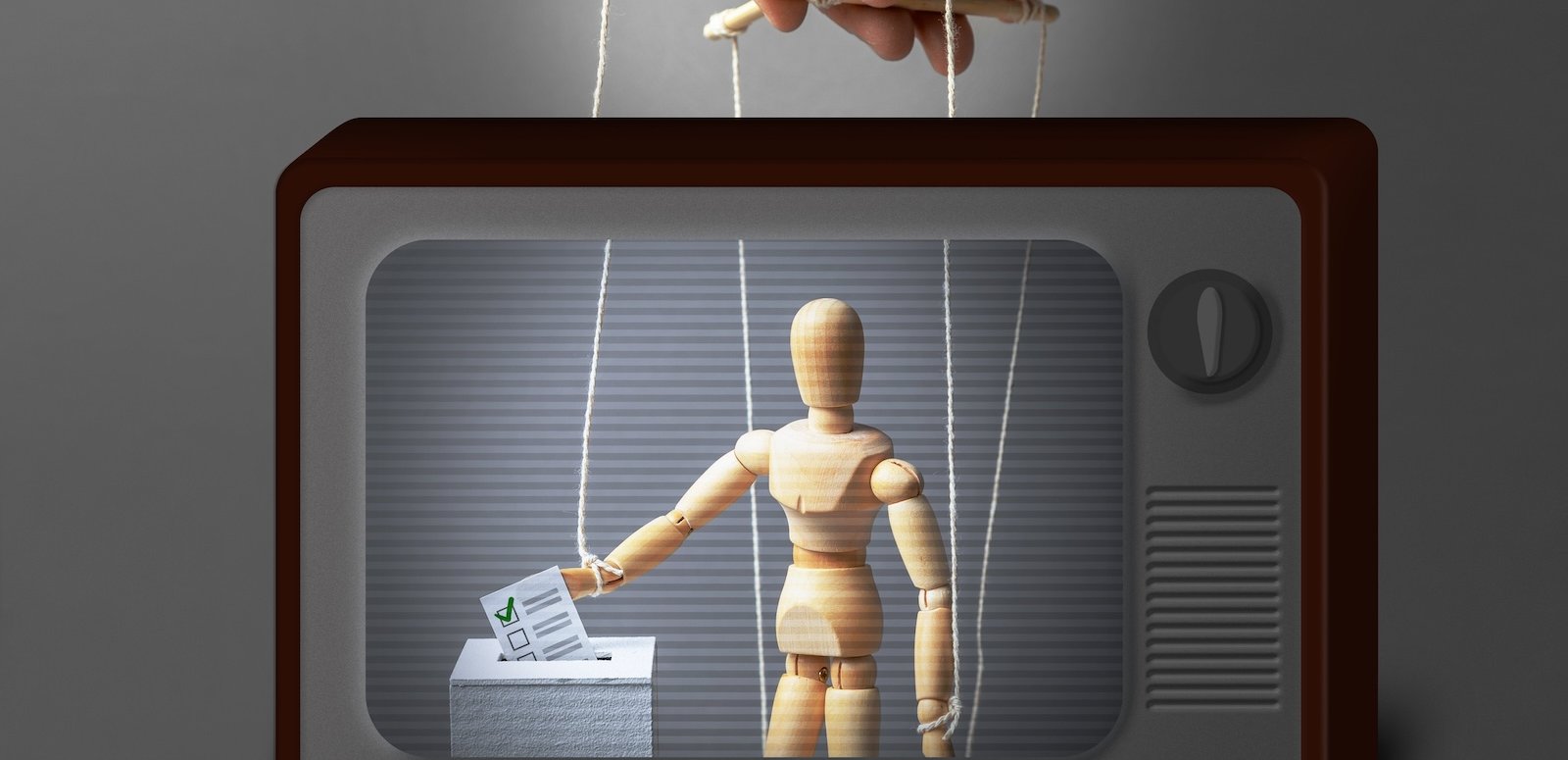

Misinformation, defined here as deliberately shared wrong information (e.g., fake news) and distinct from simple misperceptions (e.g., genuine mistakes), poses a number of dangers to a democratic society. Democracy thrives on the active and honest participation of citizens, and misinformation threatens its success by obscuring the best course of action for voters, discouraging them from taking it, and distorting perceptions of political opponents.

Most obviously, misinformation can decrease the chances that people vote in their real interests, or what the literature refers to as “correct voting.” One recent example of possible incorrect voting was the propensity of uninsured conservative Americans to oppose candidates who supported the Affordable Care Act due to misinformation about its policies and its supposed use of “death panels.”1 Supplied with perfectly reliable information, unfiltered by partisanship, many of these same voters might have supported the legislation, which would have given them affordable healthcare and thus a real benefit in their lives.

Incorrect voting like this is an especially pernicious possible effect of misinformation. Because a democracy relies on voting, the entire apparatus of government may lose legitimacy if too many voters are fooled into supporting candidates, parties, or policies that do not actually benefit them. This should be especially worrying in the American context, where the relatively large number of candidates, ballot measures, and offices, the flexibility of the major parties’ platforms, and the frequency of elections mean that voters must invest significant time and energy to obtain accurate information, making correct voting difficult even without the presence of misinformation.2

Misinformation can also reduce political participation by clouding the truth and sowing distrust in both the infrastructure of government and political actors. One channel through which misinformation could do this is by distorting perceptions of procedural fairness in how the government and elections work. Voters’ ideas of the procedural fairness of a governmental action (their perception that a system or process is objectively fair for all parties involved, regardless of whether their party won) can have a significant impact on whether they think proceedings are legitimate.3 Procedural fairness can also be a significant measure of voters’ views on how their democracy is performing, and some research has even found that perceptions of fairness, including the role of money in politics and the representation of women, far outweigh traditional “pocketbook” economic concerns, which have long been thought to dominate politics.4 If misinformation is able to cast doubt on the fairness of a result, there is ample reason to be concerned that this could affect voters’ perceptions of democratic legitimacy and, as a result, their willingness to participate in elections or accept their results.

Finally, if misinformation is used to attack political opponents, it may increase political hostility and extremism. While some level of animosity and zealotry is unavoidable in a democracy, the danger for a well-functioning democracy when these forces become extreme lies in their power to sow distrust and dissatisfaction with the system as a whole. A democracy relies on the consent of its losers. If political opponents are so extreme or despised as to constitute an existential threat to “our side,” their victory, regardless of its root in a fair election, will be perceived as an intolerable cataclysm. Existing research suggests that partisans on the losing side have become increasingly dissatisfied with democracy.5

Common Solutions to Misinformation and Their Shortcomings

While there are many commonly discussed solutions to misinformation, most focus on controlling the media environment as opposed to reducing the power and appeal of misinformation itself. All of these suggested solutions also presuppose some level of novelty to our current information diet, particularly focusing on social media as the greatest market for misinformation. Implicitly, such narratives suggest that there was an age of politics in which voters had bountiful correct information and were not susceptible to misinformation. However, these ideas do not reflect the reality of the past or present state of U.S. politics: Misinformation, particularly in the form of paranoia and conspiracy theories, has been a durable feature of American politics for most of our history, one that social media has amplified but certainly did not create.

In his 1964 article, “The Paranoid Style in American Politics,” Richard Hofstadter traces the American public’s consistent willingness to believe a wide variety of conspiracy theories. Hofstadter characterized the time as being dominated by “angry minds at work mainly among extreme right-wingers.”6 He cites as examples the widespread, baseless conspiracy theories about the Freemasons in the late eighteenth and early nineteenth centuries, Catholics and Jesuits in the latter half of the nineteenth century, and Communists during the McCarthyism of Hofstadter’s own era. While the lessons Hofstadter draws from this genealogy of conspiracy were meant to address the extremity of the mid-twentieth century, many anticipate our current era of inter-party hostility and conspiracy mongering, illustrating the durability of this issue in American politics.

Misinformation has been salient in our politics for many years, as shown by an analysis of National Opinion Research surveys regarding Americans’ beliefs about the assassination of John F. Kennedy.7 By the late 1960s, conspiracy theories regarding the assassination were already widely believed, reflecting America’s longtime willingness to believe and spread misinformation regardless of the media environment. Historical work like this illustrates how persistent our infatuation with misinformation has been across media platforms. Contemporary research on our current media environment only confirms this narrative, and illustrates that while social media is an important factor in the spread of misinformation, it is not a root cause in itself.

Fixing Social Media?

One of the more popular responses to misinformation focuses on regulating social media. If social media is inherently given to misinformation or something about its use ensures people will believe or spread it, then this may be effective. However, if social media simply reflects trends that are being produced elsewhere, the effect of regulations will be severely limited. As new collaborative research between Meta and academics found, tweaking various aspects of Facebook and Instagram during the 2020 U.S. elections—like removing reshared content8 or providing a chronological news feed instead of a feed curated by the platforms’ algorithms9—did not significantly alter polarization or political attitudes.

As a variety of research indicates, social media seems to be amplifying existing behaviors. The conventional wisdom is that online interactions make people more hostile because on social media platforms we lack facial cues, have much more power to keep ourselves anonymous, and hold more public discussions that are accessible to a great number of outside people. Essentially, our self-regulation or empathy may be weaker online because our opportunity to create conflict is greater while social cues that might normally discourage it are absent. However, these expectations are not supported by evidence. Cross-national research drawing on multiple studies found that online and offline hostility are closely connected.10 Other work finds that, while the norms that moderate our behavior may very well be weaker online, the root cause is likely a dangerous lack of moderating norms for political discussions everywhere in our society, suggesting that our political elites, campaigns, and culture encourage hostility towards opposition, which social media may amplify but does not create.11

Online political hostility may also be a way to signal status, reflecting deliberate decisions by status-driven individuals, whose actions are much more visible online than they are offline.12 On this account, political hostility online is born from a social need to demonstrate one’s own allegiances and gain status. While the nature of online discussion makes such demonstrations more visible, their cause is not fundamentally different or separate from the cause of similar actions offline. Although these status-driven individuals using hostility online may be a minority compared to the backdrop of largely benign interactions, their activity can make online environments significantly more hostile and help spread misinformation more quickly.13

This evidence should not be understood to suggest that nothing has changed in the universe of political information. New media types and communication methods have made the distribution of information cheaper than ever, giving those with incentives to share misinformation the ability to reach a greater audience in a less filtered manner.14 However, this broadly fits with a historical pattern: New media environments that result from the advent of new technology or social shifts (radio, mass newspapers, 24-hour news, and social media) create more opportunity for information sharing and thus more opportunity for misinformation to spread. The effect of different types of media is also heterogeneous, with different methods of delivering information having different or opposite effects on consumers’ engagement, feelings about democracy, and likelihood to vote or take other political action.15 While these conclusions do point to the importance of the method of information delivery, they also show that the underlying culture necessary for the widespread belief and spread of misinformation has been present in our politics for much longer than social media. Further, this illustrates that as long as the social incentives to share misinformation or become politically hostile exist in wider society, they will exist wherever social interactions happen regardless of regulation.

Diversifying Media Environments?

Going by conventional wisdom again, misinformation is amplified because Americans are largely siloed in their media consumption, especially when it comes to media found online or on social sites.16 Thus, to prevent false claims from bouncing around in echo chambers, where they are strengthened by repetition and the lack of any challenge, we must diversify our media environment and break the average American out of their bubble. However, it turns out that the vast majority of Americans are far less isolated from dissenting political information online than we often think.

Matthew Gentzkow and Jesse Shapiro use aggregate website audience data, individual-level browsing data, and Cross-National Election Study survey data to construct an index that captures how segregated media platforms are by ideology. Their index ranges from zero to 100, where zero means people from across the ideological spectrum obtain their news from the same sources, and 100 means that there is no overlap in the media sources across ideological groups.17

On this scale, the American internet had a score of 7.5, slightly above most traditional media sources, which ranged from 1.8 (broadcast television) to 10.5 (national newspapers), but significantly below all offline social interactions, which ranged from 14.5 (voluntary associations) to 39.4 (political discussions). These results lead Gentzkow and Shapiro to conclude that online information is less segregated than often thought, especially compared to our interpersonal interactions offline.
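To make the zero-to-100 scale concrete, the sketch below computes a standard isolation index of the kind common in the segregation literature: the average conservative share of the outlets conservatives visit, minus the average conservative share of the outlets liberals visit. It is an illustration under stated assumptions, not a reproduction of Gentzkow and Shapiro's estimation. The function name `isolation_index`, the two-group simplification, and all visit counts are hypothetical.

```python
from typing import Iterable, Tuple


def isolation_index(outlets: Iterable[Tuple[float, float]]) -> float:
    """Return a 0-100 ideological segregation score (illustrative only).

    Each outlet is a (conservative_visits, liberal_visits) pair.
    The counts used in the examples below are invented.
    """
    # Ignore outlets that nobody visited to avoid dividing by zero.
    visited = [(c, l) for c, l in outlets if c + l > 0]
    total_cons = sum(c for c, _ in visited)
    total_lib = sum(l for _, l in visited)
    if total_cons == 0 or total_lib == 0:
        raise ValueError("need visits from both ideological groups")

    # Average conservative share of the outlets each group visits,
    # weighted by how much of that group's traffic the outlet receives.
    cons_exposure = sum((c / total_cons) * (c / (c + l)) for c, l in visited)
    lib_exposure = sum((l / total_lib) * (c / (c + l)) for c, l in visited)
    return 100.0 * (cons_exposure - lib_exposure)


# Both groups use every outlet in the same proportions: no segregation.
shared = [(50, 50), (30, 30)]
# Each group has its own outlet and never visits the other: full segregation.
split = [(100, 0), (0, 100)]
# Partial overlap falls in between.
mixed = [(80, 20), (20, 80)]

print(round(isolation_index(shared), 1))  # 0.0
print(round(isolation_index(split), 1))   # 100.0
print(round(isolation_index(mixed), 1))   # 36.0
```

Under this simplified measure, a score of 7.5 would mean that the typical conservative's online news sources have an audience only 7.5 percentage points more conservative than the typical liberal's.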

Other research further shows that face-to-face social interactions are far more segregated than online interactions.18 Social media, particularly non-anonymous social media, can lead to greater cross-cutting exposure than offline behavior because there is a higher level of multidimensionality as well as weaker norms discouraging disagreement in online social connections.19 Thus, while social media may not expose us to people who differ from us dramatically, it does expose us to a greater diversity of people than our other interactions and we may be more willing to discuss uncomfortable topics—like politics—in those online settings.

A more targeted investigation of Facebook user data reached similar conclusions, finding that about 20 percent of the average American’s friend network identifies with the opposite party.20 This work also finds that, while there is a small chance they will interact with or share this content, users see a relatively large proportion of cross-cutting news on their newsfeeds (due to both the content curated by Facebook and what is shared by their network). The same methodology has been used to show that the average American’s media intake online is overwhelmingly moderate.21 On Twitter, while the majority of information shared was with those who were ideologically similar, users were exposed to a wide variety of sources of information depending on the subject matter.22 However, new research produced collaboratively between academics and Meta recently found that Facebook is more ideologically segregated than previously thought, especially when it comes to news shared in Facebook Pages and Groups compared to content shared by friends.23 The problem with echo chambers, if they exist, thus has far less to do with the information we are exposed to than the information we are willing to share and make use of—the motivations for which are driven by social-identity factors—as established in the previous section.

There are two important caveats to this conclusion. First, the majority of Americans simply do not spend that much of their time consuming political news online, instead having only a casual interest in the subject.24 Furthermore, those who are especially active in politics drive a disproportionate amount of the traffic towards partisan outlets.25 So while the majority of Americans do not exist in media bubbles, a vocal and highly active minority might. Researchers looking at the efforts of the Russian Internet Research Agency (IRA) observed that those who are more likely to engage with its propaganda are already the most polarized, active on social media, and interested in politics.26 This may in fact limit the damage caused by actors like the IRA, as their efforts primarily reach the few Americans who are siloed online, and it reinforces the idea that those who want to share misinformation for political gain will find a way to do so.

Second, while overall information consumption is moderate in nature, Republicans, particularly Trump supporters, face greater exposure to misinformation while Democrats are more likely to share information that comes from across the aisle.27 This is at least partly due to the supply of information on each side of the spectrum.28 While the incentives to spread misinformation or information that is beneficial to one’s own party may be the same for liberals and conservatives, the well of fake news may be deeper on the conservative side and take more work to avoid while still finding information useful to one’s partisan preferences. This is the case for Facebook, where conservatives consume more homogeneous news content than liberals and most misinformation circulates in conservative networks.29

Fact-Checking?

Failing system-wide regulation and a paradigm shift in how Americans get their information, fact-checking is often pointed to as one of the easier and already practiced means to address misinformation. Inherently, this suggestion assumes that misinformation is salient because it is believable: if people were only shown that a narrative is a lie, they would stop spreading, using, or believing it.

However, the power of social identity goals to create incentives to share misinformation points to an important takeaway for countering it: Fact-checking or simply correcting false information will not work, as it does not counteract the social identity determinants of misinformation sharing. While it might clearly prove that a story is fake, fact-checking will not stop the spread of misinformation if the need to signal one’s politics, derogate the opposition, or generate chaos is a more powerful motivator than truth.30 An experiment in Côte d’Ivoire tested this hypothesis, concluding that interventions aimed at reducing belief in and the spread of misinformation were successful when they targeted social-identity factors, like empathy, social norms, and elite endorsements, but not when they promoted digital literacy.31

Indeed, fact-checking may often be more harmful than beneficial, as multiple studies have found that even minimal corrections to flagrantly untrue stories can have a strong exposure effect: just having seen the correction makes people more likely to remember the false information.32 To have any hope of actually correcting misinformation, fact-checking must come from an unexpected messenger: someone from the in-group the misinformation benefits who is going against their own interests to set the record straight.33 In contrast, correction from someone of the opposing party can cause acceptance of misinformation to grow stronger. Thus PolitiFact, Reuters, or any of the myriad other fact-checking sites will almost always fail to counteract a lie told by Donald Trump because they are not trusted members of the in-group sharing that information. To be effective, a correction would have to come from Trump himself or someone in his camp with enough credibility to go against the grain and set the record straight.

This is not to say that there is no intervention that might reduce misinformation’s power and appeal. As the Côte d’Ivoire study shows, interventions that target the motivations to use misinformation can be much more successful at curbing its use.34

Citations

1. Adam J. Berinsky, “Rumors and Health Care Reform: Experiments in Political Misinformation,” British Journal of Political Science 47, no. 2 (April 2017): 241–62.

2. Richard R. Lau, Parina Patel, Dalia F. Fahmy, and Robert R. Kaufman, “Correct Voting Across Thirty-Three Democracies: A Preliminary Analysis,” British Journal of Political Science 44, no. 2 (April 2014): 239–59; Richard R. Lau, David J. Andersen, and David P. Redlawsk, “An Exploration of Correct Voting in Recent U.S. Presidential Elections,” American Journal of Political Science 52, no. 2 (April 2008): 395–411.

3. Aaron Martin, Gosia Mikołajczak, and Raymond Orr, “Does Process Matter? Experimental Evidence on the Effect of Procedural Fairness on Citizens’ Evaluations of Policy Outcomes,” International Political Science Review 43, no. 1 (January 1, 2022): 103–17.

4. Jonas Linde and Joakim Ekman, “Satisfaction with Democracy,” European Journal of Political Research 42, no. 3 (May 2003): 391–408; Pippa Norris, “Do Perceptions of Electoral Malpractice Undermine Democratic Satisfaction? The US in Comparative Perspective,” International Political Science Review 40, no. 1 (2019): 5–22.

5. Shanto Iyengar, Gaurav Sood, and Yphtach Lelkes, “Affect, Not Ideology: A Social Identity Perspective on Polarization,” The Public Opinion Quarterly 76, no. 3 (Fall 2012): 405–31.

6. Richard Hofstadter, “The Paranoid Style in American Politics,” Harper’s Magazine, October 31, 1964.

7. Karlyn Bowman and Andrew Rugg, Public Opinion on Conspiracy Theories (Washington, DC: American Enterprise Institute for Public Policy Research, November 2013).

8. Andrew Guess et al., “Reshares on Social Media Amplify Political News but Do Not Detectably Affect Beliefs or Opinions,” Science 381, no. 6656 (July 27, 2023): 404–408.

9. Andrew Guess et al., “How Do Social Media Feed Algorithms Affect Attitudes and Behavior in an Election Campaign?” Science 381, no. 6656 (July 27, 2023): 398–404.

10. Alexander Bor and Michael Bang Petersen, “The Psychology of Online Political Hostility: A Comprehensive, Cross-National Test of the Mismatch Hypothesis,” American Political Science Review 116, no. 1 (2022): 1–18.

11. Shanto Iyengar and Sean J. Westwood, “Fear and Loathing across Party Lines: New Evidence on Group Polarization,” American Journal of Political Science 59, no. 3 (July 2015): 690–707.

12. Alexander Bor and Michael Bang Petersen, “The Psychology of Online Political Hostility: A Comprehensive, Cross-National Test of the Mismatch Hypothesis,” American Political Science Review 116, no. 1 (2022): 1–18.

13. Christopher A. Bail, Brian Guay, Emily Maloney, Aidan Combs, D. Sunshine Hillygus, Friedolin Merhout, Deen Freelon, and Alexander Volfovsky, “Assessing the Russian Internet Research Agency’s Impact on the Political Attitudes and Behaviors of American Twitter Users in Late 2017,” Proceedings of the National Academy of Sciences 117, no. 1 (November 25, 2019): 243–50; Alexander Bor and Michael Bang Petersen, “The Psychology of Online Political Hostility: A Comprehensive, Cross-National Test of the Mismatch Hypothesis,” American Political Science Review 116, no. 1 (2022): 1–18.

14. Brendan Nyhan, “Facts and Myths about Misperceptions,” Journal of Economic Perspectives 34, no. 3 (Summer 2020): 220–36; Alan I. Abramowitz and Steven W. Webster, “Negative Partisanship: Why Americans Dislike Parties But Behave Like Rabid Partisans,” Advances in Political Psychology 39, no. 1 (2018): 119–135.

15. Susan A. Banducci and Jeffrey A. Karp, “How Elections Change the Way Citizens View the Political System: Campaigns, Media Effects and Electoral Outcomes in Comparative Perspective,” British Journal of Political Science 33, no. 3 (2003): 443–67.

16. Matthew Gentzkow and Jesse M. Shapiro, “Ideological Segregation Online and Offline,” Quarterly Journal of Economics 126, no. 4 (November 2011): 1799–1839; Andrew M. Guess, “(Almost) Everything in Moderation: New Evidence on Americans’ Online Media Diets,” American Journal of Political Science 65, no. 4 (February 19, 2021): 1007–22; Andrew M. Guess, Brendan Nyhan, and Jason Reifler, “Exposure to Untrustworthy Websites in the 2016 US Election,” Nature Human Behaviour 4 (May 2020): 472–80.

17. Matthew Gentzkow and Jesse M. Shapiro, “Ideological Segregation Online and Offline,” Quarterly Journal of Economics 126, no. 4 (November 2011): 1799–1839.

18. Matthew Barnidge, “Exposure to Political Disagreement in Social Media Versus Face-to-Face and Anonymous Online Settings,” Political Communication 34, no. 2 (2017): 302–21.

19. Matthew Barnidge, “Exposure to Political Disagreement in Social Media Versus Face-to-Face and Anonymous Online Settings,” Political Communication 34, no. 2 (2017): 302–21.

20. Eytan Bakshy, Solomon Messing, and Lada A. Adamic, “Exposure to Ideologically Diverse News and Opinion on Facebook,” Science 348, no. 6239 (June 2015): 1130–32.

21. Andrew M. Guess, “(Almost) Everything in Moderation: New Evidence on Americans’ Online Media Diets,” American Journal of Political Science 65, no. 4 (February 19, 2021): 1007–22.

22. Pablo Barberá, John T. Jost, Jonathan Nagler, Joshua A. Tucker, and Richard Bonneau, “Tweeting From Left to Right: Is Online Political Communication More Than an Echo Chamber?” Psychological Science 26, no. 10 (2015): 1531–42.

23. Sandra Gonzalez-Bailon et al., “Asymmetric Ideological Segregation in Exposure to Political News on Facebook,” Science 381, no. 6656 (July 27, 2023): 392–98.

24. Andrew M. Guess, “(Almost) Everything in Moderation: New Evidence on Americans’ Online Media Diets,” American Journal of Political Science 65, no. 4 (February 19, 2021): 1007–22.

25. Andrew M. Guess, “(Almost) Everything in Moderation: New Evidence on Americans’ Online Media Diets,” American Journal of Political Science 65, no. 4 (February 19, 2021): 1007–22.

26. Christopher A. Bail, Brian Guay, Emily Maloney, Aidan Combs, D. Sunshine Hillygus, Friedolin Merhout, Deen Freelon, and Alexander Volfovsky, “Assessing the Russian Internet Research Agency’s Impact on the Political Attitudes and Behaviors of American Twitter Users in Late 2017,” Proceedings of the National Academy of Sciences 117, no. 1 (November 25, 2019): 243–50.

27. Elizabeth Suhay, Emily Bello-Pardo, and Brianna Maurer, “The Polarizing Effects of Online Partisan Criticism: Evidence from Two Experiments,” International Journal of Press/Politics 23, no. 1 (November 2017): 95–115; Pablo Barberá, John T. Jost, Jonathan Nagler, Joshua A. Tucker, and Richard Bonneau, “Tweeting From Left to Right: Is Online Political Communication More Than an Echo Chamber?” Psychological Science 26, no. 10 (2015): 1531–42; Sandra Gonzalez-Bailon et al., “Asymmetric Ideological Segregation in Exposure to Political News on Facebook,” Science 381, no. 6656 (2023): 392–398.

28. Mathias Osmundsen, Alexander Bor, Peter Bjerregaard Vahlstrup, Anja Bechmann, and Michael Bang Petersen, “Partisan Polarization Is the Primary Psychological Motivation Behind Political Fake News Sharing on Twitter,” American Political Science Review 115, no. 3 (March 2020): 999–1015.

29. Sandra Gonzalez-Bailon et al., “Asymmetric Ideological Segregation in Exposure to Political News on Facebook,” Science 381, no. 6656 (July 27, 2023): 392–98.

30. Jay J. Van Bavel, Elizabeth A. Harris, Philip Pärnamets, Steve Rathje, Kimberly C. Doell, and Joshua A. Tucker, “Political Psychology in the Digital (Mis)Information Age,” Social Issues and Policy Review 15, no. 1 (January 2021): 84–113; Mathias Osmundsen, Alexander Bor, Peter Bjerregaard Vahlstrup, Anja Bechmann, and Michael Bang Petersen, “Partisan Polarization Is the Primary Psychological Motivation Behind Political Fake News Sharing on Twitter,” American Political Science Review 115, no. 3 (March 2020): 999–1015; Brendan Nyhan, “Facts and Myths about Misperceptions,” Journal of Economic Perspectives 34, no. 3 (Summer 2020): 220–36; Adrien Friggeri, Lada A. Adamic, Dean Eckles, and Justin Cheng, “Rumor Cascades,” Proceedings of the International AAAI Conference on Web and Social Media 8, no. 1 (2014): 101–110; Drew B. Margolin, Aniko Hannak, and Ingmar Weber, “Political Fact-Checking on Twitter: When Do Corrections Have an Effect?” Political Communication 35, no. 2 (2018): 196–219; Adam J. Berinsky, “Rumors and Health Care Reform: Experiments in Political Misinformation,” British Journal of Political Science 47, no. 2 (April 2017): 241–62.

31. Jessica Gottlieb, Claire L. Adida, and Richard Moussa, “Reducing Misinformation in a Polarized Context: Experimental Evidence from Côte d’Ivoire,” OSF Preprints (November 2022): 1–64.

32. Brendan Nyhan, “Facts and Myths about Misperceptions,” Journal of Economic Perspectives 34, no. 3 (Summer 2020): 220–36; Adam J. Berinsky, “Rumors and Health Care Reform: Experiments in Political Misinformation,” British Journal of Political Science 47, no. 2 (April 2017): 241–62.

33. Drew B. Margolin, Aniko Hannak, and Ingmar Weber, “Political Fact-Checking on Twitter: When Do Corrections Have an Effect?” Political Communication 35, no. 2 (2018): 196–219; Adam J. Berinsky, “Rumors and Health Care Reform: Experiments in Political Misinformation,” British Journal of Political Science 47, no. 2 (April 2017): 241–62.

34. Jessica Gottlieb, Claire L. Adida, and Richard Moussa, “Reducing Misinformation in a Polarized Context: Experimental Evidence from Côte d’Ivoire,” OSF Preprints (November 2022): 1–64.