Why Am I Seeing This?

Abstract

Internet platforms are increasingly adopting artificial intelligence and machine learning tools in order to shape the content we see and engage with online. Today, numerous internet platforms utilize algorithmic decision-making to provide users with recommendations on content, connections, purchases, and more.

This report is the last in a series of four reports that explore different issues regarding how internet platforms use automated tools to shape the content we see and influence how this content is delivered to us. The first report in this series focused on how automated tools can be leveraged to moderate content online. The second report focused on how internet platforms deploy algorithms to rank and curate content in search engine results and in news feeds. The third report focused on how platforms use artificial intelligence to optimize the targeting and delivery of advertisements. This final report focuses on how platforms use automated tools to make recommendations to users. All four of these reports also seek to explore how internet platforms, policymakers, and researchers can better promote fairness, accountability, and transparency around these automated tools and decision-making practices.

Acknowledgments

In addition to the many stakeholders across civil society and industry who have taken the time to talk to us about ad targeting and delivery over the past few months, we would particularly like to thank Dr. Nathalie Maréchal from Ranking Digital Rights for her help in closely reviewing and providing feedback on this report. We would also like to thank Craig Newmark Philanthropies for its generous support of our work in this area.

Introduction

Today, personalized recommendation systems, particularly those that are based on machine learning (and the choice architecture decisions associated with them),1 have come to govern many internet platforms, including social media, e-commerce, and media streaming platforms. Recommendation systems are algorithmic tools that internet platforms use to identify and recommend content, products, and services that may be of interest to their users. These systems are responsible for recommending a range of content including friends, posts, ads, news articles, trending topics, items to purchase, jobs, and more. In doing so, these systems are able to influence user interests, opinions, and behaviors as well as their social group formation.2

Many internet platforms assert that these systems enhance users’ experiences through personalized and relevant recommendations. However, it is important to note that in deploying these systems, internet platforms also seek to retain user attention on their services. This translates to significant financial benefits for the companies, as they can then target these users with advertisements and recommend further content to consume or items to purchase.3 In addition, definitions of relevance vary across platforms and are largely based on what a platform believes a user is interested in through its data collection and inference practices.

Widely used by internet platforms today, recommendation systems have a significant amount of influence over how users engage with—and are influenced by—the online sphere.4 For example, recommender systems have the power to influence product purchases. They can also determine what content—such as which news articles—a user sees. This power has raised concerns around the use of algorithmic recommendation systems to intentionally or unintentionally create echo chambers in which users have a homogenized experience and engage with only certain viewpoints, or only with popular or trending topics.5

In addition, researchers have found that these recommender systems create a number of concerning outcomes. Notably, these include reinforcing societal biases and augmenting harmful perspectives, such as those of extremists and conspiracy theorists. Internet platforms that deploy these recommendation systems do not currently provide meaningful transparency and accountability around how these systems are created, how they operate, and how they make decisions.6 This makes it very difficult to analyze and combat the problematic recommendations that come from these systems.7 Because of this, critics have called recommendation systems “the biggest threat to societal cohesion on the internet” and a major contributor to offline threats.8

Further, recommender systems now also influence the operations of internet platforms themselves. For example, platforms such as Amazon and Netflix produce films and television shows based on behavioral data collected on their users through these systems. As a result, these recommender systems are not only influencing what existing content users see and engage with online, but they are also shaping the database of options that users have to choose from.9 To the extent that these productions are based on popularity signals, this could create a feedback loop that narrows the choices available to users.

This report is the final report in a series of four reports that explore how major technology companies rely on automated tools to shape the content we see and engage with online, and how internet platforms, policymakers, and researchers can promote greater fairness, accountability, and transparency around these algorithmic decision-making practices. This report focuses on the use of automated tools to provide recommendations to users. It relies on case studies on three internet platforms—YouTube, Amazon, and Netflix—to highlight the different ways algorithmic tools can be deployed by technology companies to enable recommendations. These case studies will also highlight the challenges associated with these practices.

Editorial disclosure: This report discusses policies by Google (YouTube), which is a funder of work at New America but did not contribute funds directly to the research or writing of this report. New America is guided by the principles of full transparency, independence, and accessibility in all its activities and partnerships. New America does not engage in research or educational activities directed or influenced in any way by financial supporters. View our full list of donors at www.newamerica.org/our-funding.

Citations

- Renee DiResta, "Up Next: A Better Recommendation System," WIRED, April 11, 2018, source

- Renee DiResta, "How Amazon's Algorithms Curated a Dystopian Bookstore," WIRED, March 5, 2019, source

- Spandana Singh, Special Delivery: How Internet Platforms Use Artificial Intelligence to Target and Deliver Ads, February 18, 2020, source

- Zeynep Tufekci, "How Recommendation Algorithms Run the World," WIRED, April 22, 2019, source

- Azadeh Nematzadeh et al., How Algorithmic Popularity Bias Hinders Or Promotes Quality, July 14, 2017, source

- Sinha and Swearingen, The Role.

- Ryan Bigge, "Better Personalized Recommendations Through Transparency and Content Design," Medium (blog), entry posted February 6, 2019, source

- DiResta, "Up Next".

- Nematzadeh et al., How Algorithmic.

An Overview of Algorithmic Recommendation Systems

There are three main types of algorithms that can be used in recommendation systems:

1. Content-based Recommender Systems: Content-based recommender systems operate by suggesting items to a user that are similar in attributes to items that a user has previously demonstrated interest in.1 Content-based recommender systems evaluate the attributes of an item, such as its metadata (e.g. tags or text).2 Although different recommendation systems can vary in their exact composition, most content-based recommender systems operate by creating a profile of a user that outlines their interests and preferences, such as alternative rock music or drug store makeup. The system will then compare this user profile to existing items in its database in order to identify a match.3 A user’s profile is based on explicit and implicit feedback that a user provides on recommendations as a way to indicate their preferences and interests further.4 For example, a user may explicitly indicate preferences by rating a particular item, or implicitly by clicking on certain suggested items and not others. The algorithm uses this information to categorize these preferences and refine recommendations going forward. Unlike other systems discussed below, content-based recommender systems do not typically account for the preferences or actions of other users when making recommendations. Rather, their recommendations are primarily based on a given user’s past interactions.5

2. Collaborative-filtering Recommender Systems: Collaborative-filtering recommender systems operate by suggesting items to a user based on the interests and behaviors of other users who are identified as having similar preferences or tastes.6 The process is considered collaborative because these recommender systems make automated predictions (known as filtering) about a user’s interests and preferences based on data about other users who have similar preferences and interests.7 Collaborative-filtering recommender systems make these determinations by mining user behavior, such as purchase records and user ratings,8 and through techniques like pattern recognition.9

Collaborative-filtering recommender systems are considered particularly useful because they allow comparison and ranking of completely different types of items. This is because these algorithms do not need to know about the attributes of an item. Rather, they only need to know which items are bought together.10 In addition, because collaborative-filtering recommender systems make recommendations based on a vast dataset of other user behaviors and preferences, researchers have found that this approach yields more accurate results than other techniques. Keyword searching, for example, offers a narrower assessment of datasets.11 Keyword searching is typically deployed during behavioral targeting, which is when advertisers use information on a user’s browsing history and behavior to customize the ad targeting and delivery process.12

In addition, some researchers consider collaborative-filtering recommender systems to be able to deliver more accurate results for users with both mainstream and niche interests when compared to the other types of recommender systems outlined in this report. This is because a programmer can control how many users in the database should be considered as part of the data set for calculating recommendations; as a result, the programmer can optimize the algorithm to balance recommending popular and niche results.13 According to researchers, collaborative-filtering recommender systems are modeled after the ways individuals solicit feedback and recommendations from their social circle offline.14 Such recommender systems seek to automate this process based on the notion that if two similar individuals like an item or piece of content, there are also likely several other items that they would both find interesting.15

Collaborative-filtering recommender systems rely on two primary types of algorithms: user-based and item-based collaborative-filtering algorithms. Both types rely on users’ ratings on items. In the user-based category of algorithms, the algorithm scores an item a user has not rated by combining the ratings of similar users.16 In the item-based approach, the algorithm matches a user’s purchased and rated items with similar items, and then combines these similar items into a list of recommended products for the initial user.17

3. Knowledge-based Recommender Systems: Knowledge-based recommender systems make suggestions based on the attributes of a user and an item. These systems typically rely on data-mining methods and advanced natural language processing (NLP) to identify and evaluate an item’s attributes (e.g. price or technical specifications such as HD or BluRay functionality). The system then identifies similarities between Item A’s attributes and User A’s preferences (e.g. preference for high-end equipment), and makes recommendations based on its findings. For example, such a system could identify attribute similarities between a job seeker’s resume and a job description. This recommender system does not typically consider a user’s past behaviors, and it is therefore considered most effective when engaging with a new user or a new item.18
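As a rough illustration of the content-based approach (the first category in the list above), the following minimal sketch builds a user profile from the tags of items the user has liked and compares that profile against the rest of a catalog. The catalog, the tag sets, and the function names are all hypothetical, and cosine similarity is only one of several similarity measures such a system might use.

```python
from math import sqrt

# Hypothetical catalog: each item is described by a set of metadata tags.
CATALOG = {
    "song_a": {"alternative", "rock", "guitar"},
    "song_b": {"alternative", "rock", "indie"},
    "song_c": {"classical", "piano"},
    "song_d": {"rock", "guitar", "live"},
}


def build_profile(liked_items, catalog):
    """Count how often each tag appears among the items a user liked."""
    profile = {}
    for item in liked_items:
        for tag in catalog[item]:
            profile[tag] = profile.get(tag, 0) + 1
    return profile


def cosine(profile, tags):
    """Cosine similarity between a weighted tag profile and a binary tag set."""
    dot = sum(weight for tag, weight in profile.items() if tag in tags)
    norm_profile = sqrt(sum(w * w for w in profile.values()))
    norm_item = sqrt(len(tags))
    if norm_profile == 0 or norm_item == 0:
        return 0.0
    return dot / (norm_profile * norm_item)


def recommend_content_based(liked_items, catalog, k=2):
    """Return the k unseen items whose tags best match the user's profile."""
    profile = build_profile(liked_items, catalog)
    candidates = [item for item in catalog if item not in liked_items]
    candidates.sort(key=lambda item: cosine(profile, catalog[item]), reverse=True)
    return candidates[:k]
```

Calling `recommend_content_based(["song_a", "song_b"], CATALOG, k=1)` surfaces the item that shares the most tags with the liked songs. No other user's behavior enters the calculation, which is the defining trait of this category.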

Most recommendation systems deploy a hybrid of these three filter categories.19 Traditionally, both content-based and collaborative-filtering recommender systems rely on explicit input from a user. For example, algorithms can collect user ratings, likes, or reactions to an item or piece of content. These systems can also use implicit user input, which is drawn from data on user activities and behaviors.20 These can include a user’s clicks, search queries, and purchase history, as well as a user adding an item to their cart, completing a purchase, and reading an entire article.21 Using both of these data types, these systems can be refined so that they deliver more personalized recommendations.
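The user-based collaborative-filtering approach described above, in which an unrated item is scored by combining the ratings of similar users, can be sketched as follows. This is a minimal illustration under stated assumptions: the ratings table and names are hypothetical, and similarity is computed as cosine similarity over co-rated items, which is one common choice rather than the method of any particular platform.

```python
from math import sqrt

# Hypothetical explicit ratings (1 to 5), keyed by user and then by item.
RATINGS = {
    "ana": {"film_a": 5, "film_b": 4, "film_c": 1},
    "ben": {"film_a": 5, "film_b": 5, "film_d": 4},
    "chloe": {"film_a": 1, "film_c": 5, "film_d": 2},
}


def user_similarity(a, b, ratings):
    """Cosine similarity computed over the items both users have rated."""
    shared = set(ratings[a]) & set(ratings[b])
    if not shared:
        return 0.0
    dot = sum(ratings[a][i] * ratings[b][i] for i in shared)
    norm_a = sqrt(sum(ratings[a][i] ** 2 for i in shared))
    norm_b = sqrt(sum(ratings[b][i] ** 2 for i in shared))
    return dot / (norm_a * norm_b)


def predict_rating(user, item, ratings):
    """Similarity-weighted average of other users' ratings for an item."""
    weighted_sum = 0.0
    total_similarity = 0.0
    for other, their_ratings in ratings.items():
        if other == user or item not in their_ratings:
            continue
        similarity = user_similarity(user, other, ratings)
        weighted_sum += similarity * their_ratings[item]
        total_similarity += similarity
    if total_similarity == 0:
        return None  # no comparable user has rated this item
    return weighted_sum / total_similarity
```

In this toy data, `predict_rating("ana", "film_d", RATINGS)` lands closer to ben's rating than to chloe's, because ana's past ratings resemble ben's far more closely. The function never inspects what the films are about, only who rated them and how.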

Citations

- Ido Guy et al., "Social Media Recommendation based on People and Tags," SIGIR '10: Proceedings Of The 33rd International ACM SIGIR Conference on Research and Development In Information Retrieval, July 2010, source

- Michael D. Ekstrand et al., "All The Cool Kids, How Do They Fit In? Popularity and Demographic Biases in Recommender Evaluation and Effectiveness," Proceedings of Machine Learning Research: Conference on Fairness, Accountability, and Transparency 81 (2018), source

- Michael J. Pazzani and Daniel Billsus, "Content-Based Recommendation Systems," in The Adaptive Web: Methods and Strategies of Web Personalization (2007), source

- Pazzani and Billsus, "Content-Based Recommendation".

- Pavel Kordík, "Recommender Systems Explained," Recombee (blog), entry posted July 12, 2016, source

- Guy et al., "Social Media".

- Remus Titiriga, "Social Transparency through Recommendation Engines and its Challenges: Looking Beyond Privacy," Informatica Economică 15, no. 4 (2011): source

- Ekstrand et al., "All The Cool".

- Titiriga, "Social Transparency".

- Charu K. Aggarwal, Recommender Systems (Springer International Publishing, 2016).

- John Riedl and Joseph Konstan, Word of Mouse: The Marketing Power of Collaborative Filtering (Warner Books, 2002), source

- Titiriga, "Social Transparency".

- Kordík, "Recommender Systems," Recombee (blog).

- Rashmi Sinha and Kirsten Swearingen, The Role of Transparency in Recommender Systems, 2002, source

- Titiriga, "Social Transparency".

- Kordík, "Recommender Systems," Recombee (blog).

- Michael Martinez, "Amazon: Everything You Wanted To Know About Its Algorithm and Innovation," IEEE Computer Society, source

- Aggarwal, Recommender Systems.

- Ekstrand et al., "All The Cool".

- Aggarwal, Recommender Systems.

- Guy et al., "Social Media".

Case Study: YouTube

YouTube is an American video-sharing company founded in 2005 by Chad Hurley, Steve Chen, and Jawed Karim.1 In 2006, YouTube was acquired by Google for $1.65 billion, and has since operated as a subsidiary of the company.2 YouTube is the world’s largest online video source,3 with approximately 2 billion users worldwide.4 The company currently ranks second for global internet engagement on Alexa rankings.5 According to a Pew Research Center study, 94 percent of Americans between the ages of 18 and 24 use YouTube, a higher percentage than for any other online platform.6

Today, individuals turn to YouTube to access a range of content, including music videos, instructional videos, and the news. The company operates a vast database of videos, and has been referred to as a library of content.7 YouTube utilizes an algorithmic recommendation system to generate personalized video recommendations to its users.8 According to YouTube, although many users visit the platform to search for something specific, the company has expanded its recommendation system in order to also engage those who did not come to the platform with a specific idea of what they wanted to watch.9 YouTube’s videos also often appear in Google search results.10 YouTube seeks to maximize the time that users spend on the platform as it enables the company to deliver more ads to users. Given that the recommendation system is designed to infer user interests and behaviors, and subsequently suggest content that may be of interest to a user, the system is part and parcel of the company's revenue generation model.

YouTube’s recommendation system determines what content should appear on a user’s home page and in the user’s “Up Next” sidebar, which appears next to videos that a user is currently watching. The Up Next feature autoplays recommended content unless a user turns the autoplay off.11 Today, YouTube’s recommendation system is responsible for generating over 70 percent of viewing time on the platform.12 This has a significant impact on its users. According to a Pew Research Center study, 81 percent of YouTube users say that they at least occasionally watch recommended videos, including 15 percent who say they watch recommended videos regularly.13

YouTube is one of the largest video repositories on the internet, and many users incorrectly equate the site’s popularity with the credibility of its recommendation system. However, despite the fact that YouTube’s recommendation system is responsible for shaping how billions of individuals engage with content on the service, and influencing how they see the world, the company has provided relatively little transparency around how this system works.14 According to YouTube, user recommendations and search results are influenced by factors such as the videos a user has liked, the playlists a user has created,15 and a user’s watch history and activity on YouTube, Google, and Chrome. Some researchers have suggested that the system also considers data points such as a user’s account preferences16 and the keywords they search for.17 The company has not, however, offered comprehensive disclosures outlining the key factors its recommendation system considers.18 This lack of transparency is concerning, as the company’s recommendation system has been found to suggest controversial and harmful videos, including those that promote extremist propaganda, conspiracy theories, and misinformation. Further, YouTube provides users with only a limited set of controls over how they would like their platform experience to be shaped by such algorithmic decision-making. Without insight into how YouTube’s recommendation systems work, it is difficult to understand why these suggestions are made, and how to develop targeted interventions to prevent them.

A Technical Overview of YouTube’s Recommendation System

According to a 2016 paper authored by three researchers at Google, in order for YouTube’s recommendation system to deliver personalized recommendations, it has to be able to process YouTube’s expansive user base and collection of videos.19 In addition, given that over 500 hours of new content are uploaded to YouTube every minute,20 the recommendation system also needs to be responsive enough that it can rapidly integrate these new videos as well as any new user behaviors and patterns into its suggestions.21

According to YouTube, it makes minor changes to the recommendation system every year.22 The company has, however, provided little transparency around how its recommendation system is structured, how it makes decisions, and how it has changed over time. Numerous researchers and journalists have nonetheless attempted to document the system’s various iterations and evolutions.

Prior to March 2012, the recommendation algorithm was designed to maximize user views by recommending videos that the system calculated users were likely to click on. However, many creators figured out how to influence this recommendation system and gain more views on their videos. In addition, the prioritization of user views by the algorithm meant that creators had a greater incentive to produce clickbait content23 that garnered a large number of clicks, such as content with sensational titles, compared to content that a user would actually want to fully watch.24

In response to these concerns, YouTube altered the recommendation algorithm so that it placed more weight on a user’s watch time rather than a video’s views.25 The platform defines watch time as how much time a user spends viewing content on the platform. YouTube asserts that this change encouraged creators to produce “higher quality content” that users would watch fully, rather than content users would click on and then abandon.26 This would in turn increase the likelihood that users would be satisfied with the service, view more videos and advertisements, and generate more revenue.27

The introduction of the watch time metric also influenced how the company displays videos in search results, runs ads, and pays video creators on the platform.28 After introducing this new model, the company also changed its rules so that all creators, rather than a vetted group, could run ads on their content and accrue revenue from them.29 A few weeks after the company introduced these changes to its recommendation system, YouTube reported that the number of views on the platform was decreasing.30 Overall watch time, however, was increasing, and it grew 50 percent a year for three consecutive years.31 Critics both inside and outside the company nonetheless argued that this metric also rewarded offensive, harmful, and often fringe content that garnered high watch times.32

In 2015, Google’s artificial intelligence division, Google Brain, began reconstructing YouTube’s recommendation system around neural networks. A neural network is a series of algorithms that aims to identify relationships and patterns in a dataset in a way that mimics how animal brains operate.33 Prior to Google Brain, YouTube had implemented machine learning tools in its recommendation system through a Google-produced system known as Sibyl.34 However, the new algorithm introduced by Google Brain brought in a range of new functionalities. For example, Google Brain used supervised learning systems, which operate using inputs (training data sets that are pre-labeled by humans) and predetermined output data.35 These supervised learning techniques enabled the system to identify adjacent relationships between videos, and generalize these findings in ways that humans could not. Before the use of such supervised learning techniques, if a user watched an episode from a particular beauty vlogger, the recommendation system suggested videos with high degrees of similarity. However, by identifying adjacent relationships, the Google Brain model was able to suggest other vloggers who were comparable, but not exactly identical.

In addition, the Google Brain algorithm was able to identify important patterns in consumption. For example, the algorithm noted and optimized a relationship between a user’s device and their watch times, recommending shorter videos for mobile users and longer videos for YouTube TV users.36 The Brain algorithm also enabled YouTube to incorporate insights on a user’s behavior into its recommendations at a faster rate, thus making it easier for the company to identify trending topics and offer updated recommendations.37 Following the introduction of the Google Brain model, 70 percent of the time that users spend on the site consuming content has been driven by the recommendation system.38

This recommendation system was created by combining two deep-learning neural networks: one for candidate generation and another for ranking. Candidate generation is the first stage of the recommendation process. During this stage, the recommendation system is given a query and produces a group of relevant videos as candidates. The ranking network comes second, and is responsible for ranking these candidates in order. The candidate generation network uses information on a user’s behaviors and history on the platform to identify a small group of a couple hundred videos from YouTube’s larger corpus that are considered broadly of interest to the user. The candidate generation network relies on collaborative filtering to produce these personalized results. The ranking network is then tasked with delivering a select number of best recommendations to each user. It does this by assigning each video a score based on information about the video (e.g. length) and information about the user (e.g. whether they watch long videos or short videos). The videos that are assigned the highest scores are then ranked and displayed to the user. During the ranking stage, the model has greater access to information on a specific video and a user’s relationship to the video than it does during the candidate generation stage, as the model only needs to consider a small group of a couple hundred videos. In simplified terms, the ranking function can be thought of as expected watch time per impression. Researchers from Google have asserted that this formula promotes more “relevant” videos to users compared to ones that emphasize click-through rate, as click-through rate functions often result in the promotion of clickbait.39
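The two-stage structure described above can be illustrated with a deliberately simplified sketch. It is not YouTube's implementation: the real system uses deep neural networks at both stages, whereas this toy version uses co-watch overlap as a stand-in for collaborative-filtering candidate generation and a hand-supplied table of predictions as a stand-in for the ranking network. All names and numbers are hypothetical. The ranking score follows the "expected watch time per impression" idea by multiplying a predicted click probability by the expected minutes watched after a click.

```python
# Hypothetical watch histories used for a toy collaborative-filtering step.
HISTORIES = {
    "u1": {"v1", "v2", "v3"},
    "u2": {"v2", "v3", "v4"},
    "u3": {"v3", "v5"},
    "u4": {"v6"},
}

# Hypothetical outputs of a ranking model for each candidate video:
# (probability of a click, expected minutes watched if clicked).
PREDICTIONS = {
    "v4": (0.10, 12.0),
    "v5": (0.40, 2.0),
    "v6": (0.30, 8.0),
}


def generate_candidates(user, histories, limit=100):
    """Stage 1: collect unseen videos watched by users with overlapping history."""
    seen = histories[user]
    overlap_counts = {}
    for other, watched in histories.items():
        if other == user:
            continue
        overlap = len(seen & watched)
        if overlap == 0:
            continue  # no shared viewing, so this user contributes nothing
        for video in watched - seen:
            overlap_counts[video] = overlap_counts.get(video, 0) + overlap
    ranked = sorted(overlap_counts, key=overlap_counts.get, reverse=True)
    return ranked[:limit]


def rank_candidates(candidates, predictions, top_n=2):
    """Stage 2: order candidates by expected watch time per impression."""
    def expected_watch_time(video):
        p_click, minutes_if_clicked = predictions[video]
        return p_click * minutes_if_clicked

    return sorted(candidates, key=expected_watch_time, reverse=True)[:top_n]
```

For user `u1`, the first stage narrows the corpus to `v4` and `v5`, and the second stage places `v4` first (0.10 × 12.0 = 1.2 expected minutes) ahead of `v5` (0.40 × 2.0 = 0.8). A ranking based on click-through rate alone would reverse that order, which is the clickbait problem the expected-watch-time formulation is meant to reduce.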

This two-stage model enables YouTube to make personalized video recommendations from a large database of content.40 In addition, these deep neural networks create an average of a user’s search history and watch history in order to make recommendations. The system also considers additional data points, including a user’s geographic region, device, gender, logged-in status, and age.41 YouTube’s recommendation system has undergone a number of changes over the past few years. It is unclear how many of Google Brain’s changes are still in use.

YouTube evaluates and refines this recommendation system using a range of offline metrics, such as precision, ranking loss, and recall.42 The company also runs A/B tests43 during live experiments. During these experiments, researchers can measure minor changes in click-through rate, watch time, and other user engagement metrics.44 This method of testing is considered the gold standard for evaluating the effectiveness of a recommendation system, compared to offline testing, which has a number of problems.45 However, the company has not provided any insight into the results of such tests and how they contribute to the company’s assessment of how effective its recommendation system is. The examples that the recommendation system model has been trained on consist of more than just videos that the system recommended. They include videos from all YouTube watches, including embedded videos from other sites, so as to ensure the recommendation system can surface new content (especially content that may not be widely viewed yet, but is still considered of interest to a user).46 The company noted that it has been able to identify other mechanisms by which users are discovering new content, and integrate it into the model, although it did not specify what these mechanisms are.47
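Two of the offline metrics named above, precision and recall, have standard definitions when applied to the top k items of a recommendation list. The sketch below shows those textbook definitions; the function names and example data are hypothetical, and the source does not specify which exact variants the company uses.

```python
def precision_at_k(recommended, relevant, k):
    """Share of the top-k recommendations that the user actually found relevant."""
    top_k = recommended[:k]
    if not top_k:
        return 0.0
    hits = sum(1 for item in top_k if item in relevant)
    return hits / len(top_k)


def recall_at_k(recommended, relevant, k):
    """Share of all relevant items that appear in the top-k recommendations."""
    if not relevant:
        return 0.0
    hits = sum(1 for item in recommended[:k] if item in relevant)
    return hits / len(relevant)
```

For instance, if the system recommends `["v1", "v2", "v3", "v4"]` and a held-out log shows the user went on to watch `{"v2", "v4", "v9"}`, then at k = 2 one of the two recommendations was relevant (precision 1/2) and one of the three relevant videos was retrieved (recall 1/3). Metrics like these are computed on historical data, which is why they cannot capture how users would react to recommendations they were never shown.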

This new two-stage algorithm also created new problems. It often forced users into specific content niches by consistently recommending content that was similar in nature to videos the user had previously watched. As a result, users got bored. This prompted researchers at Google Brain to explore whether they could maintain user engagement by guiding them to content in other sectors of the platform, rather than just in existing interest buckets. These questions led the company to test a new algorithm, which incorporated a type of artificial intelligence known as reinforcement learning.

Reinforcement learning is used to train machine-learning models to make a certain sequence of decisions using a trial and error process that features rewards and penalties.48 The team called this new algorithm Reinforce. Its primary goal was to predict which video recommendations would broaden the range of subjects that a user would watch content on, pushing users to consume more content and maximizing user engagement over time. A YouTube spokesperson also said that Reinforce was intended to improve the accuracy of recommendations on the platform by mitigating the recommendation system’s bias toward popular content.49 Google considered the introduction of Reinforce a massive success. Sitewide views across YouTube increased by almost 1 percent, a staggering amount given the platform’s size. This gain translated into millions more hours of watch time and a significant bump in the company's ad revenue.50 As YouTube’s algorithm has evolved, the company has shared that the fundamental components of this model remain intact today.51
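The trial-and-error idea behind reinforcement learning can be shown with a generic, textbook-style policy-gradient update on a toy problem. This sketch is not YouTube's Reinforce system, whose details the company has not fully disclosed. It assumes a hypothetical setting with three topics, each yielding a fixed reward when recommended, and a softmax policy that learns by sampling a topic, observing the reward, and nudging its preferences toward choices that beat a running-average baseline.

```python
import math
import random


def softmax(preferences):
    """Convert raw preference scores into a probability distribution."""
    highest = max(preferences)
    exps = [math.exp(p - highest) for p in preferences]
    total = sum(exps)
    return [e / total for e in exps]


def train_policy(rewards, steps=3000, learning_rate=0.1, seed=0):
    """Learn a softmax policy over len(rewards) choices by trial and error."""
    rng = random.Random(seed)
    preferences = [0.0] * len(rewards)
    baseline = 0.0  # running average of observed rewards
    for _ in range(steps):
        probs = softmax(preferences)
        action = rng.choices(range(len(rewards)), weights=probs)[0]
        reward = rewards[action]
        advantage = reward - baseline  # positive acts as reward, negative as penalty
        for i in range(len(preferences)):
            indicator = 1.0 if i == action else 0.0
            preferences[i] += learning_rate * advantage * (indicator - probs[i])
        baseline += 0.05 * (reward - baseline)
    return softmax(preferences)
```

With hypothetical rewards of `[0.2, 0.5, 1.0]`, the learned policy ends up assigning its highest probability to the third topic. The reward signal is the crucial design choice: a system rewarded for long-term engagement will learn whatever behavior increases engagement, whether or not that behavior is desirable on other grounds.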

According to a YouTube spokeswoman, in late 2016, the company adopted social responsibility as a core value.52 During this time, the recommendation system was altered so it considered inputs such as how many times a video was shared, liked, and disliked.53 These changes were introduced amidst growing pressure on internet platforms to be more proactive in their efforts to combat harmful content, such as extremist propaganda, disinformation and misinformation, and content unsafe for children.54 Recently, the company has provided more detail around this concept of responsibility, outlining that it consists of the four Rs of responsibility:55

- Removing content that violates the platform’s Community Guidelines as quickly as possible

- Raising up authoritative information sources, especially during breaking news moments

- Reducing the spread of content that comes close to violating, but does not violate, the platform’s Community Guidelines (known as borderline content)

- Rewarding trusted creators

Also in 2016, a YouTube spokesperson stated that the recommendation system had changed significantly, and was no longer geared to optimize for watch time. Rather, the system began to emphasize satisfaction to ensure users were happy with the content they were viewing,56 and so that the recommendation system would suggest clickbait videos less often.57 This new metric aimed to balance watch time with factors such as likes, dislikes, shares, and satisfaction surveys that the company prompts users to fill out after they finish certain videos on the platform.58 According to the company, it receives millions of survey responses every week.59

Further, between 2017 and 2019, the company introduced two new internal metrics for evaluating how videos are performing on the site. The first metric monitors the total amount of time users are spending on YouTube, including by posting and reading the comments. The second metric, known as “quality watch time,” aims to identify content that goes beyond just retaining a user’s attention; the company has not explained what this involves. In calculating these two new metrics, YouTube aimed to reward content considered generally acceptable to YouTube users and advertisers and push back on criticisms that it uses its algorithmic recommendation system to capture user attention and make the platform more addictive.60 A recent Pew Research Center study indicates, however, that the algorithmic system still seeks to reel users in and get them to consume more content. In addition, the study found that the longer a user spends watching videos on the platform, the more the system recommends longer and more popular content. In the initial stages of the Pew study, the recommendation engine suggested videos that were on average nine minutes and 31 seconds in length. During the final stages of the study, the recommended videos were on average 15 minutes in length.61

Since 2017, YouTube has introduced changes to its recommendation algorithm designed to promote videos from sources that the company considers to be authoritative,62 such as top and local news channels.63 YouTube determines which sources it considers to be authoritative based on Google News’s assessments of publishers and whether they abide by Google News’s content policies and are producing reliable content. These sources can also include organizations such as public health institutions.64 A publisher’s status as an authoritative source is not dependent on how many subscribers its YouTube channel has.65 If a publisher is considered authoritative, and it has a YouTube channel, then YouTube will promote this publisher’s related content when a user searches for information or news related queries.66 This is intended to deter the spread of misinformation and conspiracy theories on the platform.67

In June 2019, the company also began promoting authoritative sources in cases in which a user has consumed multiple videos that are close to violating the platform’s Community Guidelines,68 such as conspiracy theory videos,69 as well as in response to queries related to election news.70 YouTube also promotes authoritative sources in its top news and breaking news shelves for more recent events.71 The company shared that it could expand this to other categories of content, such as entertainment. However, this comes with trade-offs: it is challenging to define authoritative sources across more subjective verticals, as these determinations are based on personal preference and taste.72

In January 2019, YouTube also altered its recommendation algorithm to reduce suggestions of “borderline” videos, such as harmful content and misinformation.73 According to YouTube, this change resulted in a 50 percent drop in watch time for this type of content.74 However, this figure has not been verified by independent researchers.75 The company implemented these changes using machine learning, as well as human evaluators and experts across the United States. These evaluators are responsible for providing input on the quality of the videos they review.76 This input is then used to train the machine-learning systems that generate recommendations.77 According to YouTube, the human evaluators themselves are trained using precise guidelines.78

The company stated that it would roll out these efforts to other countries to minimize recommendations of harmful content as its systems become more accurate in implementing this rule in the United States.79 According to a company blog post, this change was anticipated to impact less than 1 percent of the videos on YouTube and would only affect recommendations of these borderline videos, not the availability of this content on the platform as a whole. This means that users who search for or subscribe to channels that post such content would still be able to view these videos.80 According to YouTube, the company took this approach in order to adequately safeguard free expression on the platform.81

YouTube collects both explicit and implicit feedback from its users. Explicit feedback is collected through the thumbs up and thumbs down features in the product, as well as product surveys82 that the company runs to find out if a user enjoyed a video that was recommended to them.83 Some of the implicit data points that the company collects and uses include user activity on YouTube, Google, and Chrome,84 and user watch history.85 YouTube relies on this implicit feedback to inform recommendations, as well as to train the recommendation models.86 In such training processes, the fact that a user has finished a video is a positive signal.87
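As a rough illustration of how implicit feedback of this kind can become training data, the sketch below labels watch events as positive examples when the user finished the video. The `WatchEvent` type, the `label_events` function, the 95 percent completion threshold, and the choice to treat unfinished videos as negative examples are all assumptions made for illustration; they are not drawn from anything YouTube has disclosed.

```python
from dataclasses import dataclass


@dataclass
class WatchEvent:
    """One hypothetical implicit-feedback record (field names are assumptions)."""
    user_id: str
    video_id: str
    seconds_watched: float
    video_length: float


def label_events(events, completion_threshold=0.95):
    """Return (user_id, video_id, label) triples; label 1 means the video was finished.

    The threshold is an illustrative assumption, not a documented value.
    """
    examples = []
    for event in events:
        finished = (
            event.video_length > 0
            and event.seconds_watched / event.video_length >= completion_threshold
        )
        examples.append((event.user_id, event.video_id, 1 if finished else 0))
    return examples


events = [
    WatchEvent("u1", "v1", seconds_watched=570, video_length=571),  # watched to the end
    WatchEvent("u1", "v2", seconds_watched=30, video_length=900),   # abandoned early
]
print(label_events(events))
```

A real system would likely weigh many more signals than completion alone; the point here is only that a finished video can be recorded as a positive example without the user giving any explicit rating.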

As described above, YouTube provides very little transparency and accountability around how its recommendation system is structured, how it operates, and how it makes decisions.88 Research has suggested that promoting awareness of the use of algorithmic tools and enabling users to control their own experiences on a platform are fundamental steps in building trust with users. This lack of transparency from YouTube therefore limits the agency users have over their own experiences.89

Controversies Related to YouTube’s Recommendation System

The lack of transparency and accountability from YouTube is particularly concerning considering the level of controversy and backlash around this system’s recommendations. It has also made evaluating these criticisms difficult, as most studies are operating with insufficient data.90 This makes it hard to draw reliable conclusions on how YouTube’s recommendation system shapes user perceptions and behaviors. In addition, a lack of transparency around the company’s training datasets makes it challenging to run assessments to ensure the system is providing the same level of utility to its diverse users (e.g. users of different genders and ethnicities),91 a common problem associated with defining fairness in recommender systems.92 This is important as such assessments can identify potential instances of bias within recommender systems.

Over the past decade, the company has come under particular criticism for enabling its recommendation engine to suggest content to users containing misleading or false information and conspiracy theories. For example, after a fire broke out in Paris’s Notre-Dame cathedral in April 2019, a number of conspiracy theories began circulating on the platform, claiming that the fire was an act of terrorism, and promoting Islamophobic rhetoric. One vlogger on YouTube also claimed that the French government had started the fire as a covert operation, and that French President Emmanuel Macron could not be trusted. The video was viewed almost 50,000 times overnight,93 and this expanded to over 100,000 views soon after.94 Shortly after the video was posted, it was also monetized through the use of advertisements.95 YouTube’s recommendation system continued to recommend the video despite changes implemented by the company in January 2019, which aimed to limit algorithmic promotion of conspiracy theory content and instead promote content from authoritative sources.96 Although YouTube has stated that conspiracy theory videos make up less than 1 percent of all content on the platform, this is still a staggering amount of content, and the problem is compounded whenever the recommendation algorithm promotes this content.

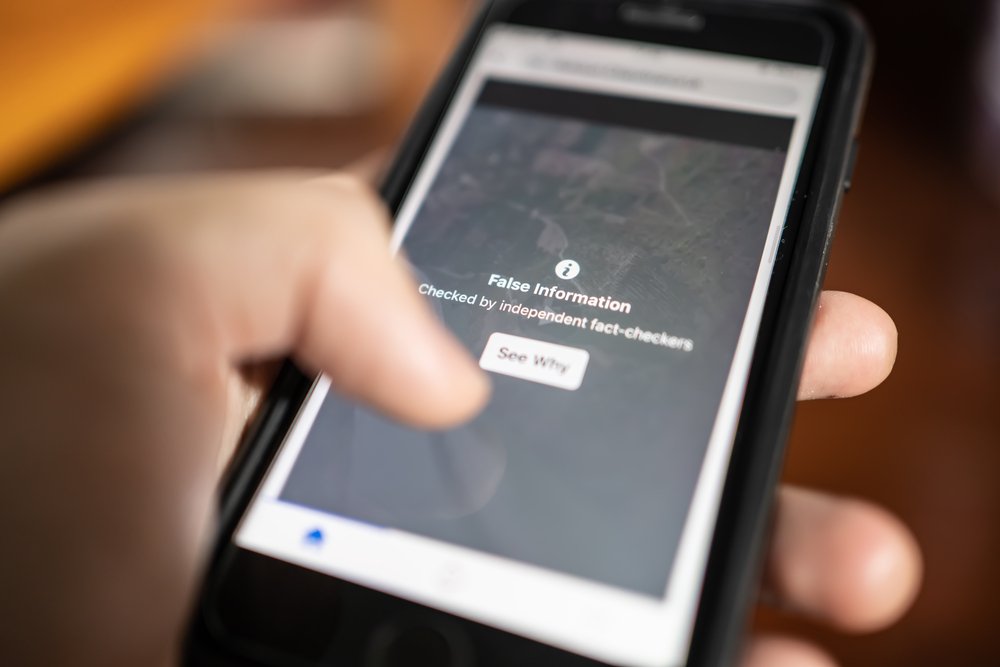

YouTube has also introduced algorithmically-recommended information panels with links to third-party sources that offer to fact-check video content. These panels appear next to content featuring common conspiracies, as well as content posted by some foreign state-run media outlets.97 However, journalists found that the panels the company appended to livestreams of the Notre-Dame fire rerouted users to information about the 9/11 terrorist attacks in the United States instead.98

Similarly, in 2017, academics and other commenters spotlighted the role of the company’s search and recommendation algorithms in promoting disinformation during the 2016 U.S. presidential election. Based on these findings, prominent sociologist and technology critic Zeynep Tufekci termed these algorithms “misinformation engines.”99

In addition, YouTube has faced particular criticism for creating a “rabbit hole” effect, in which the algorithm delivers personalized recommendations that prompt users to consume harmful or radical content100 that they did not originally seek out.101 In 2019, Mozilla began publishing anecdotes of how anonymous users encountered such rabbit holes on the platform under a project known as YouTube Regrets. The project aimed to push YouTube to let independent researchers study its algorithmic decision-making systems.102 In September 2019, YouTube representatives met with the Mozilla Foundation to discuss the issues raised in the campaign.103 In addition, a YouTube spokesman told CNET that while the company welcomed research on these issues, it had not seen the videos, screenshots, or data that Mozilla was using and was therefore unable to review Mozilla’s claims.104

The Media Manipulation Initiative at Data & Society Research Institute spearheaded a research project exploring the far-right in the United States and Germany, through which researchers examined YouTube’s recommendation system. Their research found, concerningly, that the system grouped communities associated with Fox News and GOP accounts with communities associated with conspiracy theory channels, such as those belonging to far-right commentator Alex Jones. Similarly, the researchers found that the recommendation system categorized communities associated with the religious right together with communities associated with the international right-wing. The researchers outlined that these categorizations could create a rabbit hole effect, because if a user is consuming content produced by conservative groups on the platform, they are only a few clicks away from receiving recommendations for content produced by far-right extremist groups.105 In addition, the researchers raised concerns that this grouping also generates a filter bubble on the platform, in which users are prevented from accessing information that may challenge their perspectives or broaden their horizons because of a system’s predictions on what they will like.106

Several researchers, including former YouTube employee-turned-critic Guillaume Chaslot, have argued that it is in the company's business interest to promote such polarizing and fringe videos and channels, as they drive engagement and greater watch times.107 Further, critics such as Chaslot have suggested that the recommendation system is biased toward promoting divisive, sensational, and conspiratorial content,108 perhaps because the system has learned that such content is engaging.109 Given the vast number of users who consume recommended content, this raises significant concerns about the platform serving as a radicalization pipeline.110

YouTube executives have contested these notions, claiming that the company considers more than just watch time when making content recommendations, and that because advertisers do not want their content to appear alongside such harmful content, there is no financial interest in promoting these videos.111 The company also stated that after a user consumes a video, the recommendation system does not account for whether the content of the video was less or more extreme, and it therefore would not necessarily seek to recommend similar videos. Rather, recommendations in such instances rely on the context and user behavior associated with the consumption of the initial video.112 YouTube also stated that after reviewing internal testing data, it found that, on average, users who watch one extreme video are subsequently recommended more moderate content,113 suggesting that the rabbit hole effect toward more radical content is not inevitable.114 However, because the company provides little transparency around the factors that its recommendation system actually does consider, and because the company has not shared any of the data it collected or evaluated during these tests, it is difficult to corroborate these statements.115

It is important to note that some researchers, such as Penn State political scientists Kevin Munger and Joseph Phillips, have also pushed back against the notion that the company's recommendation system is a central component of online radicalization.116 They contend that prior studies have not been able to determine that the algorithm has had a noticeable effect on radicalization, and that instead this narrative has been highlighted by policymakers and the media because it offers simple policy prescriptions.117 These researchers instead suggest that radicalization online is similar to radicalization offline, in that it relies on providing an individual with new, radicalizing information at scale, and that the supply of such content (and the ease with which producers can create content on YouTube) caters to existing demand for it.118 Other researchers, such as Data & Society’s Becca Lewis, also point to the role of other algorithms, like the company’s search algorithm, as well as less technical factors, such as online social-networking interactions between creators and audiences, as more significant factors in promoting radicalization. As a result, these researchers suggest that focusing solely on the algorithmic recommendation component of the radicalization process provides a limited view of the overall problem and hinders potential solutions.119

YouTube has also faced significant backlash over how its recommendation system, which makes content recommendations on both YouTube’s main platform and YouTube Kids, interfaces with children. YouTube Kids is a separate video app, curated by humans and algorithms, that features age-tailored content.120 However, children’s videos are among the most watched categories of content on YouTube’s main platform as well. As a result, producing and reproducing popular children’s content has emerged as a lucrative business on the service, as it enables creators to reap the benefits of advertising dollars.121 According to a Pew Research Center study,122 children’s videos constituted the majority of the 10 most-recommended posts on YouTube,123 and 80 percent of parents said they occasionally let their children watch content on YouTube.124

In 2017, the company was hit with the “ElsaGate scandal,” in which its recommendation system recommended seemingly child-friendly content that featured characters such as Elsa from Disney’s Frozen but actually contained inappropriate themes related to topics like violence, sex, drugs, and alcohol.125 In addition, researchers, journalists, and YouTube creators have found that the recommendation engine was suggesting ordinary videos of children that were rampant with sexualized comments, as well as comments pointing to timestamps at which children appeared in sexualized positions.126 These videos were often recommended by YouTube’s system after a user searched for videos of adult women, such as by using the term “bikini haul,” raising concerns about how the system was drawing links between searches for adult women and videos of children.127 In response, the company said it would implement changes such as closing down the comments sections of such posts and more rigorously removing posts that were found to violate its Community Guidelines.128 However, YouTube has said little publicly about how the company’s recommendation system would be altered to prevent the promotion of such content going forward.129

Although YouTube has introduced some technical and policy changes to combat the spread of misinformation, conspiracy theories, and egregious content, numerous reports have circulated, often with employee input,130 claiming that YouTube executives repeatedly ignored warnings and suggestions to alter the company’s recommendation system in a more significant manner.131 This raises concerns that the company is placing profits over ensuring the company’s use of automated tools is responsible, transparent, and accountable.

User Controls Related to YouTube’s Recommendation System

As highlighted, YouTube does not provide significant transparency around how its recommendation system operates, thus limiting the agency users have over their personal YouTube experience. The company does, however, offer its users a limited set of controls over how this system shapes their platform experience.

As previously mentioned, YouTube users have the ability to turn off the AutoPlay feature.132 In June 2019, the company announced that it was expanding the controls users have over homepage and Up Next recommendations.133 These changes made it easier for signed-in users to view recommendations in both of these areas of the platform.134 The changes also let users mark certain channels so that they do not appear in their recommendations. However, if a user subscribes to the channel, searches for the channel, or visits the channel’s page, they will still see its content. In addition, if the channel appears in the Trending tab, the user will still see its content.135

YouTube’s expansion of controls also enables users to learn why a video may have been suggested to them, particularly on the homepage.136 Users can also remove specific videos from their watch history and specific queries from their search history to prevent these data points from being considered in recommendations. They can also pause their watch and search history, or clear them altogether.137 Further, users can remove videos, channels, sections, and playlists from their homepage, and indicate that they are not interested in this content or do not want to be recommended content based on these factors.138 They can also remove liked videos from their playlists or edit and delete playlists to further control their recommendations.139

If a user wants to revert to having all the information YouTube has collected on their behaviors and interests used for personalizing their recommendations, they can clear their “not interested” and “don’t recommend channel” feedback through a tool in their My Activity tab.140

Citations

- Christopher McFadden, "YouTube: Its History and Impact on the Internet," Interesting Engineering, October 4, 2019, source

- Michael Arrington, "Google Has Acquired YouTube," TechCrunch, October 9, 2006, source

- Joan E. Solsman, "Mozilla Is Sharing YouTube Horror Stories To Prod Google For More Transparency," CNET, October 15, 2019, source

- Maryam Mohsin, "10 Youtube Stats Every Marketer Should Know in 2020 [Infographic]," Oberlo, last modified November 11, 2019, source

- "Youtube.com Competitive Analysis, Marketing Mix and Traffic," Alexa Internet, source

- Kevin Roose, "The Making of a YouTube Radical," New York Times, June 8, 2019, source

- Ben Popken, "As Algorithms Take Over, YouTube's Recommendations Highlight A Human Problem," NBC News, April 19, 2018, source

- Adrienne LaFrance, "The Algorithm That Makes Preschoolers Obsessed With YouTube," The Atlantic, July 25, 2017, source

- Casey Newton, "How YouTube Perfected The Feed," The Verge, August 30, 2017, source

- Popken, "As Algorithms".

- Matt Elliott, "How To Turn Off YouTube's New Autoplay Feature," CNET, March 20, 2015, source

- Jack Nicas, "How YouTube Drives People to the Internet's Darkest Corners," Wall Street Journal, February 7, 2018, source

- Aaron Smith, Skye Toor, and Patrick Van Kessel, "Many Turn to YouTube for Children's Content, News, How-To Lessons," Pew Research Center, last modified November 7, 2018, source

- “The RDR Corporate Accountability Index: Transparency and Accountability Standards for Targeted Advertising and Algorithmic Systems — Pilot Study and Lessons Learned,” Ranking Digital Rights, March 2020, rankingdigitalrights/pilot-report-2020

- "Manage Your Recommendations and Search Results," YouTube Help, source

- Jonas Kaiser and Adrian Rauchfleisch, "Unite the Right? How YouTube's Recommendation Algorithm Connects The U.S. Far-Right," D&S Media Manipulation: Dispatches from the Field (blog), entry posted April 11, 2018, source

- Caroline O'Donovan et al., "We Followed YouTube's Recommendation Algorithm Down The Rabbit Hole," BuzzFeed News, January 24, 2019, source

- "Manage Your," YouTube Help; “The RDR Corporate Accountability Index: Transparency and Accountability Standards for Targeted Advertising and Algorithmic Systems — Pilot Study and Lessons Learned,” Ranking Digital Rights, March 2020, rankingdigitalrights/pilot-report-2020

- Paul Covington, Jay Adams, and Emre Sargin, "Deep Neural Networks for YouTube Recommendations," Proceedings of the 10th ACM Conference on Recommender Systems, ACM, New York, NY, USA, 2016, source

- Roose, "The Making".

- Covington, Adams, and Sargin, "Deep Neural".

- Roose, "The Making".

- Mark Bergen and Lucas Shaw, "To Answer Critics, YouTube Tries A New Metric: Responsibility," The Star, April 15, 2019, source

- Roose, "The Making".

- Roose, "The Making".

- Bergen and Shaw, "To Answer."

- Roose, "The Making".

- Bergen and Shaw, "To Answer."

- Roose, "The Making".

- Michael Learmonth, "YouTube's Video Views Are Falling — By Design," AdAge, May 14, 2012, source

- Newton, "How YouTube".

- Bergen and Shaw, "To Answer."

- "Neural Network," DeepAI, source

- Alex Woodie, "Inside Sibyl, Google's Massively Parallel Machine Learning Platform," Datanami, last modified July 17, 2014, source

- Margaret Rouse and Matthew Haughn, "Supervised Learning," Search Enterprise AI, source

- Newton, "How YouTube".

- Newton, "How YouTube".

- Newton, "How YouTube".

- Covington, Adams, and Sargin, "Deep Neural".

- Covington, Adams, and Sargin, "Deep Neural".

- Covington, Adams, and Sargin, "Deep Neural".

- In this context, precision can be understood as how useful search results are. Recall can be understood as how complete search results are. Ranking loss functions winnow down the list of potential recommendations.

- A/B testing compares two versions of a variable by testing a user’s response to variable A against variable B, and establishing which of the two variables is more effective.

- Covington, Adams, and Sargin, "Deep Neural".

- Ekstrand et al., "All The Cool".

- Alexis C. Madrigal, "How YouTube's Algorithm Really Works," The Atlantic, November 8, 2018, source

- Covington, Adams, and Sargin, "Deep Neural".

- Błażej Osiński and Konrad Budek, "What Is Reinforcement Learning? The Complete Guide," Deep Sense AI, last modified July 5, 2018, source

- Roose, "The Making".

- Roose, "The Making".

- "[YouTube Recommendations] Ask us anything! YouTube Team will be here Friday February 8th.," YouTube Help, last modified February 8, 2019, source

- Mark Bergen, "YouTube Executives Ignored Warnings, Letting Toxic Videos Run Rampant," Bloomberg, April 2, 2019, source

- Bergen, "YouTube Executives".

- YouTube, "The Four Rs of Responsibility, Part 1: Removing Harmful Content," Official YouTube Blog, entry posted September 3, 2019, source

- YouTube, "Susan Wojcicki: Preserving Openness Through Responsibility," Official YouTube Blog, entry posted August 27, 2019, source

- Paul Lewis, "'Fiction is Outperforming Reality': How YouTube's Algorithm Distorts Truth," The Guardian, February 2, 2018, source

- YouTube, "Continuing Our Work To Improve Recommendations On YouTube," Official YouTube Blog, entry posted January 25, 2019, source

- Popken, "As Algorithms".

- Bergen, "YouTube Executives".

- Bergen and Shaw, "To Answer."

- Smith, Toor, and Van Kessel, "Many Turn," Pew Research Center.

- YouTube, "The Four Rs of Responsibility, Part 2: Raising Authoritative Content and Reducing Borderline Content and Harmful Misinformation," Official YouTube Blog, entry posted December 3, 2019, source

- YouTube, "Building a Better News Experience On YouTube, Together," Official YouTube Blog, entry posted July 9, 2018, source

- Conversation with representatives from YouTube on March 2, 2020

- Conversation with representatives from YouTube on March 2, 2020

- Conversation with representatives from YouTube on March 2, 2020

- Roose, "The Making".

- Roose, "The Making".

- YouTube, "Our Ongoing Work To Tackle Hate," Official YouTube Blog, entry posted June 5, 2019, source

- YouTube, "How YouTube Supports Elections," Official YouTube Blog, entry posted February 3, 2020, source

- Conversation with representatives from YouTube on March 2, 2020

- Kevin Roose, "YouTube's Product Chief On Online Radicalization, Algorithmic Rabbit Holes," SF Gate, April 6, 2019, source

- YouTube, "Continuing Our Work," Official YouTube Blog.

- YouTube, "Our Ongoing," Official YouTube Blog.

- Solsman, "Mozilla Is Sharing".

- YouTube, "Continuing Our Work," Official YouTube Blog.

- YouTube, "Continuing Our Work," Official YouTube Blog.

- "External Evaluators and Recommendations," YouTube Help, source

- YouTube, "Continuing Our Work," Official YouTube Blog.

- YouTube, "Continuing Our Work," Official YouTube Blog.

- YouTube, "Continuing Our Work," Official YouTube Blog.

- Covington, Adams, and Sargin, "Deep Neural".

- Newton, "How YouTube".

- "Manage Your," YouTube Help.

- Kaiser and Rauchfleisch, "Unite the Right?," D&S Media Manipulation: Dispatches from the Field (blog).

- Covington, Adams, and Sargin, "Deep Neural".

- Covington, Adams, and Sargin, "Deep Neural".

- “The RDR Corporate Accountability Index: Transparency and Accountability Standards for Targeted Advertising and Algorithmic Systems — Pilot Study and Lessons Learned,” Ranking Digital Rights, March 2020, rankingdigitalrights/pilot-report-2020

- Jaron Harambam, Natali Helberger, and Joris van Hoboken, "Democratizing Algorithmic News Recommenders: How To Materialize Voice In A Technologically Saturated Media Ecosystem," Philosophical Transactions of The Royal Society A Mathematical Physical and Engineering Sciences, October 2018, source

- Chris Stokel-Walker, "YouTube's Deradicalization Argument Is Really a Fight About Transparency," FFWD (blog), entry posted December 29, 2019, source

- Ekstrand et al., "All The Cool".

- Ekstrand et al., "All The Cool".

- Jesselyn Cook, "YouTube And Google Algorithms Promoted Notre Dame Conspiracy Theories," The Huffington Post, April 17, 2019, source

- Cook, "YouTube And Google".

- Cook, "YouTube And Google".

- Cook, "YouTube And Google".

- O'Donovan et al., "We Followed".

- Cook, "YouTube And Google".

- Lewis, "'Fiction is Outperforming".

- Roose, "The Making".

- Roose, "YouTube's Product".

- "YouTube Regrets," Mozilla Foundation, source

- Email conversation with representative from the Mozilla Foundation

- Solsman, "Mozilla Is Sharing".

- Kaiser and Rauchfleisch, "Unite the Right?," D&S Media Manipulation: Dispatches from the Field (blog).

- Pariser, The Filter Bubble: What the Internet is Hiding From You.

- Cook, "YouTube And Google".

- Lewis, "'Fiction is Outperforming".

- Lewis, "'Fiction is Outperforming".

- O'Donovan et al., "We Followed".

- Roose, "YouTube's Product".

- Roose, "YouTube's Product"; Kevin Roose, "YouTube's Product Chief on Online Radicalization and Algorithmic Rabbit Holes," New York Times, March 29, 2019, source

- Roose, "The Making".

- Roose, "YouTube's Product".

- Roose, "The Making".

- Charlie Warzel, "Big Tech Was Designed to Be Toxic," New York Times, April 3, 2019, source

- Paris Martineau, "Maybe It's Not YouTube's Algorithm That Radicalizes People," WIRED, October 23, 2019, source

- Martineau, "Maybe It's".

- Becca Lewis, "All of YouTube, Not Just the Algorithm, is a Far-Right Propaganda Machine," FFWD (blog), entry posted January 8, 2020, source

- LaFrance, "The Algorithm".

- LaFrance, "The Algorithm".

- Smith, Toor, and Van Kessel, "Many Turn," Pew Research Center.

- Madrigal, "How YouTube's".

- Madrigal, "How YouTube's".

- Russell Brandom, "Inside Elsagate, The Conspiracy-Fueled War on Creepy YouTube Kids Videos," The Verge, December 8, 2017, source

- Natasha Lomas, "YouTube Under Fire For Recommending Videos Of Kids With Inappropriate Comments," TechCrunch, February 18, 2019, source

- Julia Alexander, "YouTube Still Can't Stop Child Predators In Its Comments," The Verge, February 19, 2019, source

- YouTube, "5 Ways We're Toughening Our Approach To Protect Families On YouTube and YouTube Kids," Official YouTube Blog, entry posted November 22, 2017, source

- Alexander, "YouTube Still".

- Bergen, "YouTube Executives".

- Bergen, "YouTube Executives".

- Elliott, "How To Turn".

- YouTube, "Giving You More Control Over Your Homepage And Up Next Videos," Official YouTube Blog, entry posted June 26, 2019, source

- YouTube, "Giving You More," Official YouTube Blog.

- YouTube, "Giving You More," Official YouTube Blog.

- YouTube, "Giving You More," Official YouTube Blog.

- "Manage Your," YouTube Help.

- "Manage Your," YouTube Help.

- "Manage Your," YouTube Help.

- "Manage Your," YouTube Help.

Case Study: Amazon

Amazon is an American technology company that offers services such as e-commerce, cloud computing, and streaming. The company was founded in 1994 by Jeff Bezos1 and is considered the largest online marketplace in the world in terms of revenue and market capitalization, and the largest internet company in the world in terms of revenue.2 The company currently ranks fourteenth for global internet engagement on Alexa rankings.3 In 2019, Amazon accounted for 37.7 percent of all U.S. e-commerce sales.4

Amazon deploys recommendation systems across a number of its services, including its e-commerce platform and its streaming platform. Given the large-scale influence that the introduction of Amazon’s recommendation system has had on online commerce, this analysis will focus on Amazon.com, the company’s e-commerce platform. Amazon generates revenue from its e-commerce platform through product sales, targeted advertising, subscriptions (e.g. Amazon Prime), and the fees sellers pay in order to be able to sell on the platform. The longer a user spends on the platform, the more products they will see and potentially buy and the more ads they will see. Given that the company’s recommendation system is designed to understand and predict user interests and behaviors, and make recommendations based on these insights, it is an integral tool for driving user purchases and increasing and maintaining user attention and engagement on the platform. Therefore, the recommendation system is an important contributor to revenue generation on the platform. In addition, some researchers and commentators have argued that Amazon uses its recommendation system to promote its own brands over others, thereby further increasing its profits, maintaining its market dominance, and raising antitrust concerns.5

Amazon’s e-commerce recommendation engine is powerful, and it engages with users at every stage of their journey on the website. It can therefore influence everything from what products a user sees to which items they eventually buy. Despite the extensive influence this system has over user behaviors and purchasing decisions, Amazon has provided little transparency around how these algorithmic systems are designed and how they operate. This is especially concerning given that numerous researchers and journalists have highlighted how the platform’s recommendation engine has suggested products that are misleading, false, or conspiracy-theory based. This lack of transparency therefore makes it challenging to understand why these recommendations are made, and how to prevent them going forward. In addition, the platform offers users a limited set of controls around whether and how they would like their platform experience to be shaped by such algorithmic decision-making processes.

A Technical Overview of Amazon’s Recommendation System

Amazon first deployed a recommendation system across its e-commerce platform almost two decades ago.6 Before deploying this system, Amazon made product recommendations to users based on human curation and best-seller lists. However, according to Amazon, this approach was found to be inherently biased and did not sufficiently provide recommendations to users with niche interests.7 The company later developed and deployed an algorithmic system that matches a user’s purchased and rated items with similar items, and then combines these similar items into a list of recommended products for a user.8 This unique approach to making recommendations became known as “item-based collaborative filtering” or “item-to-item collaborative filtering.”9

According to a 2001 paper authored by three Amazon employees, the company opted to use the item-to-item collaborative-filtering model rather than a traditional collaborative-filtering model or alternatives such as content-based models. This is because the algorithm’s online computation scales independently of the number of customers and the number of items in the product catalog. As a result, this model is able to generate real-time recommendations, scale to large data sets, and produce recommendations that are more likely to be of interest to users. This model is also less computationally expensive than the other models previously outlined.10 A computationally expensive model requires considerable resources, including run time, processing power, and memory, to complete a function. In addition, researchers and journalists have found that the item-to-item collaborative-filtering model helped the company recommend niche items to shoppers in a compelling manner, thus increasing its potential to gain revenue on slow-moving inventory.11 Further, given the vast size of Amazon’s product catalog, the use of this algorithmic approach also helped the company address the issues of which recommendations to present and in which order (known in data science as the “learning to rank” problem), and how to ensure that there is a diversity of products in each recommendation set.12
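The general idea behind item-to-item collaborative filtering can be illustrated with a minimal sketch. This is not Amazon's implementation: the function names, the toy purchase data, and the choice of cosine similarity over binary purchase vectors are assumptions made for illustration. The sketch separates an offline step, which precomputes a table of similar items, from an online step, which only looks up the neighbors of the items a given user already has.

```python
from collections import defaultdict
from itertools import combinations
from math import sqrt


def build_similar_items(purchases):
    """Offline step: build an item -> {other_item: similarity} table.

    `purchases` maps a customer ID to the set of item IDs that customer bought.
    """
    buyers_per_item = defaultdict(int)  # item -> number of customers who bought it
    co_purchases = defaultdict(int)     # (item_a, item_b) -> customers who bought both

    for items in purchases.values():
        for item in items:
            buyers_per_item[item] += 1
        for a, b in combinations(sorted(items), 2):
            co_purchases[(a, b)] += 1

    similar = defaultdict(dict)
    for (a, b), both in co_purchases.items():
        # Cosine similarity between the two items' binary purchase vectors.
        score = both / sqrt(buyers_per_item[a] * buyers_per_item[b])
        similar[a][b] = score
        similar[b][a] = score
    return similar


def recommend(user_items, similar, top_n=3):
    """Online step: merge the precomputed neighbors of the user's own items."""
    scores = defaultdict(float)
    for item in user_items:
        for other, score in similar.get(item, {}).items():
            if other not in user_items:
                scores[other] += score
    # Highest score first; ties broken alphabetically for a stable order.
    ranked = sorted(scores.items(), key=lambda pair: (-pair[1], pair[0]))
    return [item for item, _ in ranked[:top_n]]


# Hypothetical toy data.
purchases = {
    "alice": {"tent", "stove", "lantern"},
    "bob": {"tent", "stove"},
    "carol": {"tent", "lantern", "novel"},
    "dave": {"novel", "bookmark"},
}
similar_items = build_similar_items(purchases)
print(recommend({"tent"}, similar_items))  # ['lantern', 'stove', 'novel']
```

In this sketch, `recommend` touches only the items the user has bought and their precomputed neighbors, so the online work depends on the size of the user's history rather than on the total number of customers or catalog items. The expensive pairwise comparison is confined to the offline table build.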

The introduction of the item-to-item collaborative-filtering model has served as a significant targeted marketing tool and has transformed the e-commerce space tremendously.13 It has also enabled the company to generate a personalized shopping experience for each user in a novel manner. Amazon asserts that providing a personalized experience will enhance users’ overall experience with the platform. However, in doing so, the company also strives to increase the time a user spends on the platform, as well as the company’s average order value (the average amount spent every time a user places an order on the platform), and overall revenue the company generates from each user.14 The company also sells advertisements, and therefore users who spend a longer time on the platform will see and potentially click on more ads, thus driving revenue through ad impressions (views) and clicks.

Amazon’s recommendation system relies on numerous explicit and implicit data points. Users are able to provide explicit feedback data to the platform by rating items from one to five stars, through both public and private ratings. The private ratings are not shared with other Amazon customers, nor do they impact the average customer review for an item. These private ratings are used to refine the recommendations that the user receives.15 Although Amazon publicly notes how it uses the private ratings, the company has not indicated whether and how its recommendation systems rely upon the public ratings and feedback that users provide on items they have purchased and sellers they have purchased from.16 Each action that a user takes on the platform provides implicit feedback data to the company, which it uses to refine its recommendations in real time.17 These data points include a user’s browsing and purchase histories, which items a user added to their virtual shopping cart, which items a user rated and liked, and what items similar users have browsed and purchased.18 For new users, there is less implicit and explicit data available to the company. As the user continues using the Amazon platform, however, the company is able to collect a vast amount of additional data, which can then be used to refine recommendations.19

Today, Amazon uses recommendation algorithms to offer different categories of recommendations to users at a range of junctures on its e-commerce platform. The company provides little transparency around these use cases, which limits the understanding and agency that users have over these tools. Several engineers, journalists, and researchers, however, have identified some of the categories of product recommendations that the company’s recommendation system generates. These categories are broken down below:

- Recommended For You: When a user visits the Amazon.com webpage and logs in,20 they will see a tab on the main toolbar that is tied to their account (e.g. Gabby’s Amazon.com). Once they click on this tab, they are presented with a range of product recommendations across multiple categories.21 For example, this can include recommendations specific to Amazon Books, apparel, or electronics.

- Frequently Bought Together: In order to increase average order value, the company makes recommendations on items that have been purchased together in the past. These product recommendations aim to convince customers to purchase an additional item (known as up-selling) and/or purchase a different product or service (known as cross-selling) by providing suggestions based on items a user has added to their shopping cart or below items that they are currently exploring on the website.22

- Similar Items: The company also makes recommendations for products similar to ones that a user has viewed recently. These recommendations are based on a user’s browsing history and the recommended items typically vary in terms of shape, size, and brand.23

- General Browsing History: The company will make product recommendations based on a user’s browsing history in case they want to access and purchase something they have previously demonstrated an interest in.24

- Items Recently Viewed: The company also makes recommendations based on the items that a user recently viewed. Amazon has the same goal in making these recommendations as with the Similar Items recommendations, in that it wants to suggest products based on a user’s recent browsing history.25

- Related To/Based On Items You Viewed: These categories of recommendations suggest products that are similar to items that a user recently viewed. For example, if a user searched for hangers on the platform, these recommendations would suggest hangers of different shapes, sizes, brands, etc. These recommendations are also based on a user’s recent browsing history.

- Customers Who Bought This Item Also Bought: This category of recommendations suggests items that have been purchased collectively by users in the past. This category of recommendations is similar to the Frequently Bought Together section and it similarly aims to increase average order value through up-selling and cross-selling.26

- Recommended Items Other Customers Often Buy Again: This category of recommendations suggests items that similar users often purchase multiple times.

- New Version of This Item: This category of recommendations is based on the assumption that users like to upgrade items, such as electronics, that they purchased. As a result, this category of recommendations informs users when a new edition of an item they purchased is available.27

- Recommended For You Based on a Previous Purchase/Inspired By Your Purchases/Inspired By Your Shopping Trends: These categories of recommendations make product suggestions to a user based on a recent purchase they made. After a user makes a purchase on the platform, they are directed to an order details page. On this page, the user will receive further recommendations for items that can be paired with the initial order. For example, if a user purchases an iPad on Amazon, they might receive recommendations for iPad covers on the subsequent order details page. These recommendations also appear on the homepage. This category of recommendations aims to encourage users to make a second purchase by offering a relevant cross-sell offer.28

- Best-Selling in Different Categories: This category of recommendations features top-selling items across the different categories of products on the platform. It is based on the notion that an item that has been widely purchased by other users is validated as worthwhile. In addition, these recommendations aim to help users identify popular products and make purchases from new categories of products that they have not made purchases from before. This produces a range of up-sell and cross-sell opportunities for the platform.29

- Popular in Brands You May Like: This category recommends items that are popular and are sold by brands or sellers that a user may be interested in.

- Off-site Email Recommendations: Amazon also sends users product recommendations via email. Users can opt out of receiving these marketing emails, and can also select certain categories of items (e.g. beauty, books, Amazon Echo) for which they would like to receive marketing emails. These emails vary in their content and focus, but contain similar categories of recommendations as those available on the platform.30

In the early 2000s, Amazon’s product recommendation system relied on both automated and human decision-making. Initially, the recommendations put forth on the website relied more on automated decision-making, whereas the email recommendations users received were generated by humans. These recommendations were produced by Amazon employees who were tasked with using software tools to target users based on their purchasing and browsing history. These employees were also assigned a certain product and were responsible for identifying similar items to recommend along with the original item.31

In the early 2010s, journalists noted that the company relied on a range of metrics when constructing and deploying its email recommendations. These included email open rates, click rates, and opt-out rates, as well as revenue-prioritizing metrics. The company’s use of revenue-prioritizing metrics and related marketing and targeting techniques meant that if the recommendation system determined a user was eligible to receive both a recommendation email for best-selling shoes and one for best-selling books, the company would send only the email with the higher average revenue-per-mail. In this way, the company aimed to avoid spamming users while maximizing purchases. According to Sucharita Mulpuru, a Forrester analyst, the conversion rate and efficiency of Amazon’s recommendation emails were high, and the emails were considerably more effective than the on-site recommendations a user receives.32 Mulpuru shared that, based on the performance of other e-commerce sites, one could estimate that Amazon’s on-site recommendation conversion is approximately 60 percent, suggesting an even higher rate of success for email recommendations.33 The company also offers users the option to receive marketing newsletters by traditional mail.
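The revenue-per-mail rule described above can be sketched as a simple selection step. This is an illustrative sketch only: the `Campaign` class, the `pick_email` function, and all of the figures are hypothetical, and nothing here reflects how Amazon actually implements its email targeting.

```python
from dataclasses import dataclass


@dataclass
class Campaign:
    """A candidate recommendation email with invented historical totals."""
    name: str
    emails_sent: int
    revenue: float

    @property
    def revenue_per_mail(self) -> float:
        # Average revenue generated per email sent; 0.0 if nothing was sent.
        return self.revenue / self.emails_sent if self.emails_sent else 0.0


def pick_email(eligible):
    """Return the single eligible campaign with the highest revenue-per-mail.

    Returns None when the user is not eligible for any campaign.
    """
    return max(eligible, key=lambda c: c.revenue_per_mail, default=None)


# Hypothetical figures: shoes earn $0.42 per email, books earn $0.70.
shoes = Campaign("best-selling shoes", emails_sent=10_000, revenue=4_200.0)
books = Campaign("best-selling books", emails_sent=8_000, revenue=5_600.0)

chosen = pick_email([shoes, books])
print(chosen.name)  # best-selling books
```

Sending only the top-scoring email, rather than every email a user qualifies for, is what limits the volume of messages each user receives.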

The introduction of a robust recommendation system has radically transformed Amazon as a business. Between 2011 and 2012, the company integrated its recommendation system across all stages of the purchasing process, beginning with the product discovery stage, when a user first identifies an item of interest, and ending with the checkout stage. In the second fiscal quarter of 2012, the company reported a 29 percent increase in sales, to approximately $12.83 billion, up from the $9.9 billion in sales that the company reported during the same quarter the previous year.34 According to 2013 data released by McKinsey & Company, recommendation systems drive 35 percent of purchases at Amazon.35

Controversies Related to Amazon’s Recommendation System

As outlined, recommendation algorithms are used widely on the Amazon platform. They have the power to significantly influence what products a user sees, and can subsequently drive purchases. The company, however, has provided little transparency around the data it uses to train these recommendation systems and how they contribute to the company’s determinations on how effective its recommendation system is. Therefore, although the recommendation system has impacted the company’s success as a business, it is difficult to know how this system impacts user behaviors and whether its recommendations are equally refined for all of its users, across demographics. This lack of transparency also prevents researchers from identifying and understanding any sources of bias. In addition, the results of these automated decision-making processes are not always positive. For example, over the past few years, journalists and researchers have identified numerous instances of the platform’s recommendation engine making suggestions for products that are misleading, false, or conspiracy-theory based.

For example, in March 2019 WIRED reported finding that Amazon recommended a number of anti-vaccine books in the best-selling categories of various health sections on Amazon Books, including the Epidemiology, Emergency Pediatrics, History of Medicine, and Chemistry categories. Many of these books were marked as #1 Best Sellers in their respective categories.36 In addition, the outlet also found that in the Oncology category, one of the books marked as a Best Seller made recommendations on cancer treatment that are contrary to the consensus of medical experts, such as consuming juice instead of undergoing chemotherapy. Further, when WIRED journalists searched for “cancer,” a misinformation-filled book titled The Truth About Cancer was one of the first product recommendations that appeared. The outlet found that the book had 1,684 reviews and had a 96 percent five-star rating.

Also in March 2019, NBC News reported that the company’s recommendation engine was recommending QAnon: An Invitation to the Great Awakening in its Hot New Releases section of Amazon Books. The book outlined a number of the common beliefs held by the QAnon conspiracy theory movement,37 including that Democrats murder and eat children and that the U.S. government manufactured both AIDS and the movie Monsters Inc.38 The book rapidly climbed to the top 75 books sold on Amazon that month. The company did not respond to questions about how its algorithmic recommendation system had been used to make these product suggestions, and it did not clarify whether the recommendation of the book in the Amazon Books section meant that the book had also been recommended through its other recommendation mechanisms on the platform.39 This raised significant concerns regarding the lack of oversight over how product recommendations are made, and the subsequent consequences that could result from recommending a harmful and misleading product such as this book.40 Further, cases like these have raised concerns around popularity-based recommendations on the platform, as researchers and academics have suggested that popularity is a weak metric for quality and that it homogenizes results.41

In 2015, Amazon introduced the “Amazon’s Choice” label to indicate highly-recommended items across the platform. According to the Amazon website, these recommendations are based on highly-rated, well-priced products that can be shipped immediately. However, there is little transparency around how these signals are weighted and how the final recommendations are made. The company also has not shared any information about the performance of its recommendation system, or about whether and how it is able to deliver personalized recommendations to users.42