What Makes Artificial Intelligence “Artificial?”

Long before the current AI boom, computer scientists John McCarthy, Marvin Minsky, Nathaniel Rochester, and Claude Shannon convened the 1956 Dartmouth Workshop, the event for which the term “artificial intelligence” was coined.1 McCarthy defined the term as the science and engineering of building intelligent machines, including tools that seek to mimic human intelligence, rationality, and thought.2 Despite McCarthy’s initial definition, there is still no consensus today on a definition of AI.3

Just as there is no consensus on a definition of AI, there is no consensus on a definition of human intelligence. Human intelligence can roughly be broken into three concepts: (1) the use of complex heuristics to arrive at a decision faster, (2) the process of learning, and (3) the retention of information. The brain is the center of human intelligence and comprises a decentralized network of nerve cells called neurons that help govern thought, reason, and emotion with fluidity. Neurons hold information that humans have learned through experience and education. Humans simplify complex decisions by using heuristics. For example, bias is colloquially viewed negatively, but bias is a type of heuristic humans use to arrive at a result faster. The biological process of human intelligence and learning gave computer scientists and engineers a basis for creating intelligence in machines.

Generally, AI uses a combination of statistical probability and algorithms (sets of rules) to arrive at a desired result. Importantly, many modern AI systems are probabilistic rather than deterministic: they produce the statistically most likely result rather than a guaranteed one. To arrive at a statistically probable result, machines undergo a process loosely analogous to human learning called “machine learning,” in which large volumes of data are used to train a machine or system toward a desired result.4 Like the human brain, machines need a network of units to retain information. Neural networks fill this role: they consist of computational units whose stored values are combined to build predictions. Information is learned and refined in a neural network by adjusting the numerical weights attached to each unit, using linear algebra and related mathematical methods. Machines also use bias, although in a neural network the term refers to a learned numerical offset rather than a human-style mental shortcut. (Although this is an oversimplification of the human and machine learning processes, it speaks to how closely machine intelligence is modeled on human intelligence and shows both the rapid development of the AI sector and the confluence of the human and artificial worlds.)
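The ideas of weights, bias, and probabilistic output can be illustrated with a minimal sketch. It assumes a single computational unit with one weight and one bias, trained by gradient descent on invented toy data (hours of study mapped to pass or fail). The function names, the data, and the training settings are all hypothetical choices made for illustration. Real neural networks combine many such units.

```python
import math


def sigmoid(z):
    """Squash any number into the range (0, 1) so it reads as a probability."""
    return 1.0 / (1.0 + math.exp(-z))


def train_unit(examples, epochs=5000, learning_rate=0.1):
    """Adjust one weight and one bias to fit labeled examples.

    Each example is (input_value, label) with label 0 or 1.
    The update rule is plain gradient descent on log loss.
    """
    weight, bias = 0.0, 0.0
    for _ in range(epochs):
        for x, label in examples:
            prediction = sigmoid(weight * x + bias)
            error = prediction - label  # how far off the prediction was
            weight -= learning_rate * error * x
            bias -= learning_rate * error
    return weight, bias


# Hypothetical toy data: hours of study -> failed (0) or passed (1).
EXAMPLES = [(1.0, 0), (2.0, 0), (3.0, 0), (4.0, 1), (5.0, 1), (6.0, 1)]

weight, bias = train_unit(EXAMPLES)

# The trained unit outputs a probability, not a certain answer.
p_after_one_hour = sigmoid(weight * 1.0 + bias)
p_after_six_hours = sigmoid(weight * 6.0 + bias)
print(f"P(pass | 1 hour)  = {p_after_one_hour:.3f}")
print(f"P(pass | 6 hours) = {p_after_six_hours:.3f}")
```

The unit never stores the examples themselves. What it retains is the pair of numbers it has adjusted, which is the sense in which a network "holds" what it has learned.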

Advances in deep learning beginning in 2006 helped accelerate the development of AI.5 Deep learning refers to neural networks with a large number of parameters and layers. The progress of deep learning led to the development of a class of AI systems capable of generating human language, commonly referred to as large language models (LLMs). Language tools built on these techniques include translation tools such as Google Translate and generative pre-trained transformers such as OpenAI’s ChatGPT.
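To make "a large number of parameters and layers" concrete, the sketch below counts the adjustable values in a fully connected network, where every unit in one layer connects to every unit in the next. The layer sizes are hypothetical and chosen only for illustration. Production language models are far larger and use different layer types.

```python
def count_parameters(layer_sizes):
    """Count weights and biases in a fully connected network.

    layer_sizes lists the number of units in each layer, input layer first.
    """
    total = 0
    for units_in, units_out in zip(layer_sizes, layer_sizes[1:]):
        weights = units_in * units_out  # one weight per connection
        biases = units_out              # one bias per receiving unit
        total += weights + biases
    return total


# Hypothetical layer sizes for illustration only.
SHALLOW_NETWORK = [784, 10]
DEEPER_NETWORK = [784, 128, 64, 10]

shallow_total = count_parameters(SHALLOW_NETWORK)
deeper_total = count_parameters(DEEPER_NETWORK)
print(shallow_total)  # 7850
print(deeper_total)   # 109386
```

Adding two intermediate layers raises the count from 7,850 to 109,386 adjustable values, which suggests how quickly parameters accumulate as networks deepen.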

Generative Artificial Intelligence (GenAI)

The AI market is projected to reach $826 billion by 2030.6 Generative AI, sometimes referred to as “gen AI,” refers to artificial intelligence capable of creating seemingly new and meaningful content such as text, images, or audio.7 One of the most popular generative AI tools is the chatbot, often built on a generative pre-trained transformer (GPT), a type of model characterized by its ability to generate human language. Although chatbots are not new, OpenAI’s ChatGPT, released in November 2022, reached an estimated 100 million monthly active users within about two months, making it one of the fastest-growing consumer applications in history.8

Chatbots such as ChatGPT are one of a variety of generative AI tools that purport to enhance artistic expression, increase worker productivity, and simplify mundane tasks.9 However, these advancements also present challenges in the fields of ethics, climate, and labor economics.10 The widespread use of labor from the Global South to help power generative AI content has reinforced aspects of digital colonialism.11 In addition, a Goldman Sachs report noted that generative AI could raise global gross domestic product by 7 percent and potentially expose the equivalent of 300 million full-time jobs to automation.12 Although data on the impact of AI on climate change is murky, AI tools are computationally intensive and require more energy than other software applications.13 Some proponents of AI point to its potential in a variety of sectors, but to realize those benefits, sustainable and responsible practices must be built into its development, implementation, and deployment.

Generative Pre-Trained Transformers (GPTs)

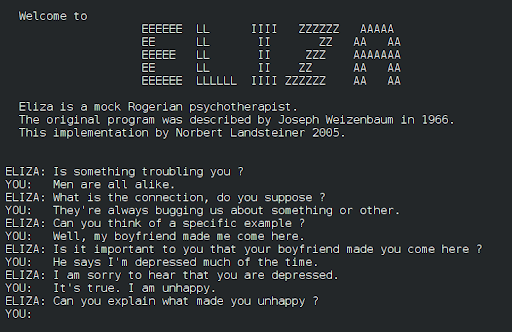

An early precursor to today’s GPT-based chatbots was ELIZA, a natural language processing (NLP) program developed by Joseph Weizenbaum in the 1960s. NLP is the field concerned with engineering machines that can manipulate, understand, and generate human language. Advancements in NLP resulted in tools such as Siri in 2010, Google Now in 2012, and Alexa in 2014. Today, thanks to progress in natural language understanding (NLU) and natural language generation (NLG), GPTs are used for a variety of tasks such as language generation, virtual personal assistance, and code generation. GPTs learn through unsupervised learning followed by fine-tuning, through which the models gain the ability to predict the next word in a sequence.14 While GPTs can be an incredible source of information, researchers have pointed to vulnerabilities in their use in health care, computer programming, and media literacy.15
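Next-word prediction, as described above, can be illustrated with a deliberately simple stand-in. The sketch below is not a transformer. It is a word-pair counting model that estimates the probability of each possible next word from a tiny invented corpus. The function names and the corpus are hypothetical. A GPT pursues the same objective with a neural network trained on vastly more text.

```python
from collections import Counter, defaultdict


def train_word_pair_model(text):
    """Count which words follow which in the training text."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        following[current_word][next_word] += 1
    return following


def next_word_probabilities(model, word):
    """Turn follow-up counts for `word` into probabilities."""
    counts = model.get(word.lower(), Counter())
    total = sum(counts.values())
    if total == 0:
        return {}  # the word never appeared with a follower
    return {candidate: count / total for candidate, count in counts.items()}


# Hypothetical toy corpus for illustration only.
CORPUS = "the cat sat on the mat the cat ate the fish"

model = train_word_pair_model(CORPUS)
probabilities = next_word_probabilities(model, "the")
most_likely = max(probabilities, key=probabilities.get)
print(probabilities)  # {'cat': 0.5, 'mat': 0.25, 'fish': 0.25}
print(most_likely)    # cat
```

The model picks "cat" because that word followed "the" most often in its training text. It has no notion of whether the result is true, which is one reason fluent output can still be unreliable.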

Wikimedia Commons

Generative Adversarial Networks (GANs)

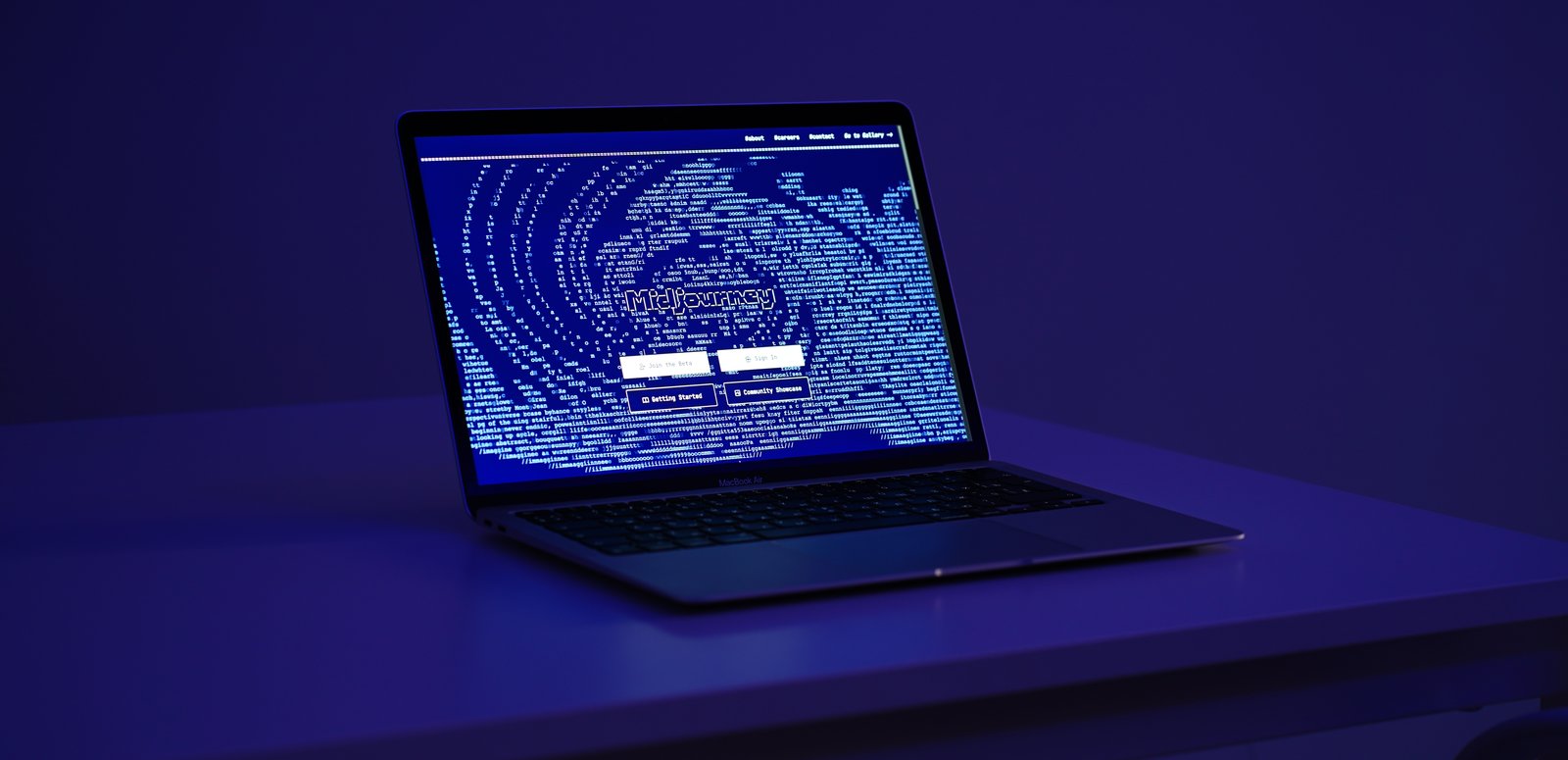

Generative adversarial networks (GANs) are a class of generative AI models best known for image generation, the task popularized by text-to-image tools such as Midjourney and DALL-E 3 (although those particular tools are generally reported to rely on diffusion models rather than GANs). GANs model images by training two networks in competition with one another: a generator that produces synthetic images and a discriminator that learns to assess whether an image is real or synthetic. As the discriminator improves, the generator is pushed to produce more realistic images.16 This adversarial training allows GANs to be used in a variety of settings, from the medical field (drug discovery) to data science (data augmentation). However, GANs have become most popular in image generation, audio synthesis, and audio recognition.17 GANs, like other generative AI tools, present unique opportunities and challenges. For example, GANs can help individuals who have experienced vocal trauma, but they can also be used to spread misinformation and non-consensual sexually explicit material.18
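The competition at the heart of GAN training can be sketched through the two objectives involved. The example below assumes the commonly used cross-entropy formulation, in which a discriminator assigns each image a score between 0 (synthetic) and 1 (real). The scores are invented for illustration, and no actual networks are trained here. The point is only that the same scores are good news for one network and bad news for the other.

```python
import math


def mean(values):
    values = list(values)
    return sum(values) / len(values)


def discriminator_loss(real_scores, fake_scores):
    """Low when real images score near 1 and synthetic images score near 0."""
    real_term = mean(math.log(score) for score in real_scores)
    fake_term = mean(math.log(1.0 - score) for score in fake_scores)
    return -(real_term + fake_term)


def generator_loss(fake_scores):
    """Low when the discriminator scores synthetic images near 1 (fooled)."""
    return -mean(math.log(score) for score in fake_scores)


# Hypothetical discriminator scores for illustration only.
REAL_SCORES = [0.9, 0.8]
FAKES_CAUGHT = [0.1, 0.2]   # discriminator spots the synthetic images
FAKES_PASSED = [0.7, 0.6]   # discriminator is fooled

d_loss_caught = discriminator_loss(REAL_SCORES, FAKES_CAUGHT)
d_loss_passed = discriminator_loss(REAL_SCORES, FAKES_PASSED)
g_loss_caught = generator_loss(FAKES_CAUGHT)
g_loss_passed = generator_loss(FAKES_PASSED)

print(f"discriminator loss: caught={d_loss_caught:.3f} passed={d_loss_passed:.3f}")
print(f"generator loss:     caught={g_loss_caught:.3f} passed={g_loss_passed:.3f}")
```

In training, each network is updated in turn to lower its own loss. The discriminator therefore gets better at catching synthetic images while the generator gets better at passing them off as real.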

Citations

- Roberto Cordeschi, “AI’s Half Century: On the Thresholds of The Dartmouth Conference,” Università degli Studi di Roma, 2006, 109–114, source.

- John McCarthy, “What is Artificial Intelligence?” University of Nebraska–Lincoln School of Computing, 2004, 1–14, source.

- P.M. Krafft et al., “Defining AI in Policy Versus Practice,” Proceedings of the AAAI/ACM Conference on AI, Ethics, and Society (2019): 72–78, source.

- Maddalena Favaretto et al., “What is Your Definition of Big Data? Researchers’ Understanding of the Phenomenon of the Decade,” PLoS ONE 15, no. 2 (2020), source.

- Josh Patterson and Adam Gibson, Deep Learning: A Practitioner’s Approach, ed. Mike Loukides and Tim McGovern (Sebastopol, CA: O’Reilly Media Inc., 2017), 81.

- “Global AI Market Size Worldwide in 2021 With a Forecast until 2030,” Statista, 2024, source.

- Stefan Feuerriegel et al., “Generative AI,” Business & Information Systems Engineering 66 (2024): 111–126, source.

- Krystal Hu, “ChatGPT sets record for fastest-growing user base - analyst note,” Reuters, February 2, 2023, source.

- Kate Glazko et al., “An Autoethnographic Case Study of Generative Artificial Intelligence’s Utility for Accessibility,” Proceedings of the 25th International ACM SIGACCESS Conference on Computers and Accessibility (Fall 2023): 1–8, source.

- Divy Thakkar et al., “Towards an AI-Powered Future that Works for Vocational Workers,” CHI ’20: Proceedings of the 2020 CHI Conference on Human Factors in Computing Systems (April 2020): 1–13, source.

- Michael Kwet, “Digital Colonialism: US Empire and The New Imperialism in the Global South,” Race & Class 60, no. 4 (2019): 3–26, source; Anna Milanez, “The Impact of AI on the Workplace: Evidence from OECD Case Studies of AI Implementation,” Organization for Economic Cooperation and Development (OECD) Social, Employment and Migration Working Papers No. 289, March 27, 2023, source.

- “Generative AI Could Raise Global GDP,” Goldman Sachs, 2023, source.

- Lauren Leffer, “The AI Boom Could Use a Shocking Amount of Electricity,” Scientific American, October 13, 2023, source.

- Gokul Yenduri et al., “GPT (Generative Pre-Trained Transformer) – A Comprehensive Review on Enabling Technologies, Potential Applications, Emerging Challenges, and Future Directions,” arXiv, May 21, 2023, source.

- Luigi De Angelis et al., “ChatGPT and the rise of large language models: The New AI-Driven Infodemic Threat in Public Health,” Frontiers in Public Health 11 (2023), source.

- Antonia Creswell et al., “Generative Adversarial Networks: An Overview,” arXiv, 2017, source.

- Jie Gui et al., “A Review on Generative Adversarial Networks: Algorithms, Theory, and Applications,” Journal of Latex Class Files 14, no. 8 (2015), source.

- Nicholas Diakopoulos and Deborah Johnson, “Anticipating and Addressing the Ethical Implications of Deepfakes in the Context of Elections,” New Media & Society 23, no. 7 (2020): 2072–2098, source; Derek B. Johnson, “New Hampshire Robocall Kicks Off Era of AI-Enabled Election Disinformation,” CyberScoop, January 24, 2024, source; Natasha Singer, “Teen Girls Confront an Epidemic of Deepfake Nudes in Schools,” New York Times, April 8, 2024, source.