Appendix

Methodology for Conducting Interviews

Interviewees were recruited through a scheduling form that described the interview and the research project. The form collected each potential respondent's email address and preferred name; their consent to be recorded, to be contacted in the future, and to receive a copy of the final research report; and their choice of interview time slot. The form was distributed to prospective interviewees through social media platforms (including LinkedIn and Slack), by email, and by word of mouth.

Methodology for Evaluating Generative Artificial Intelligence Applications

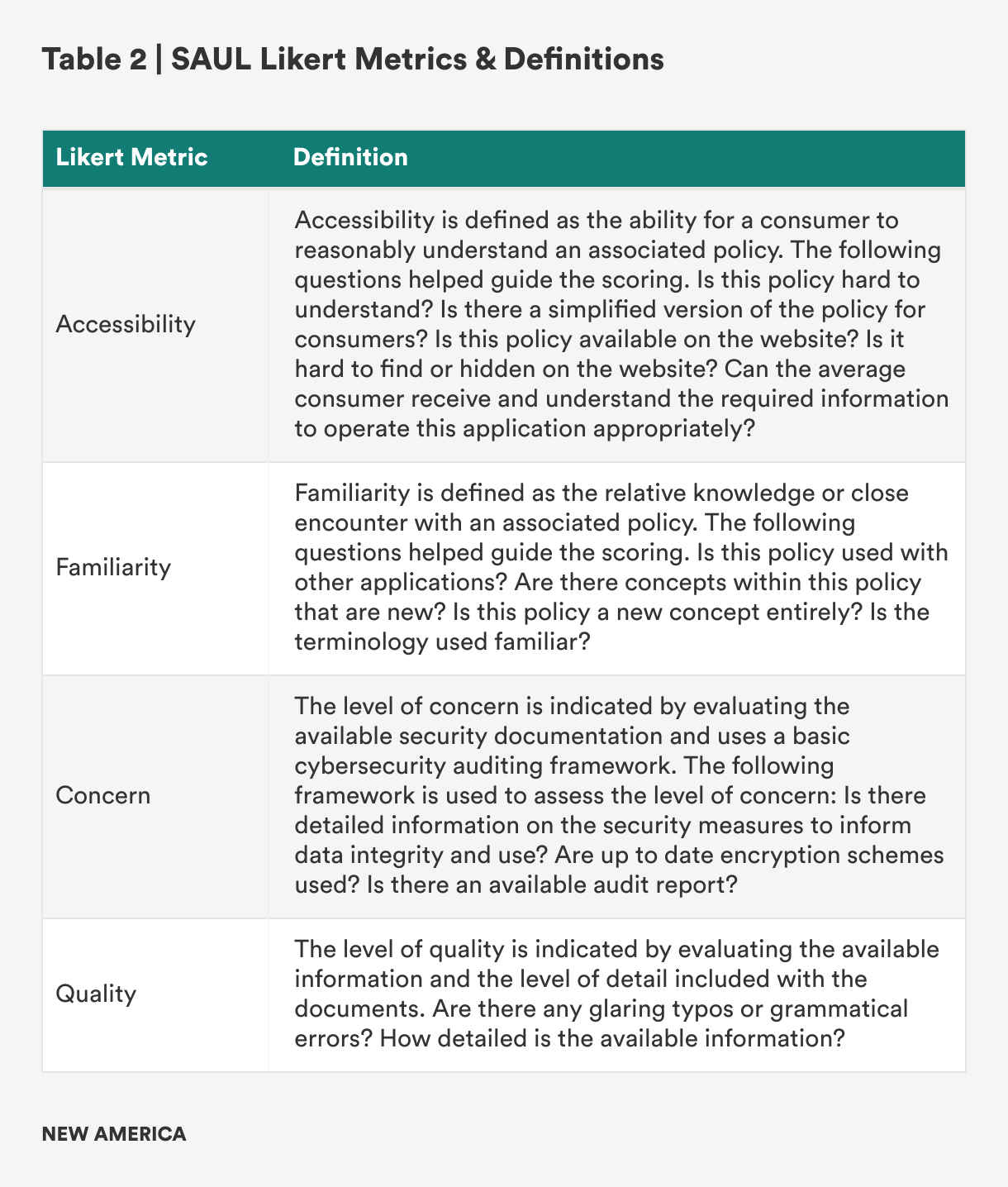

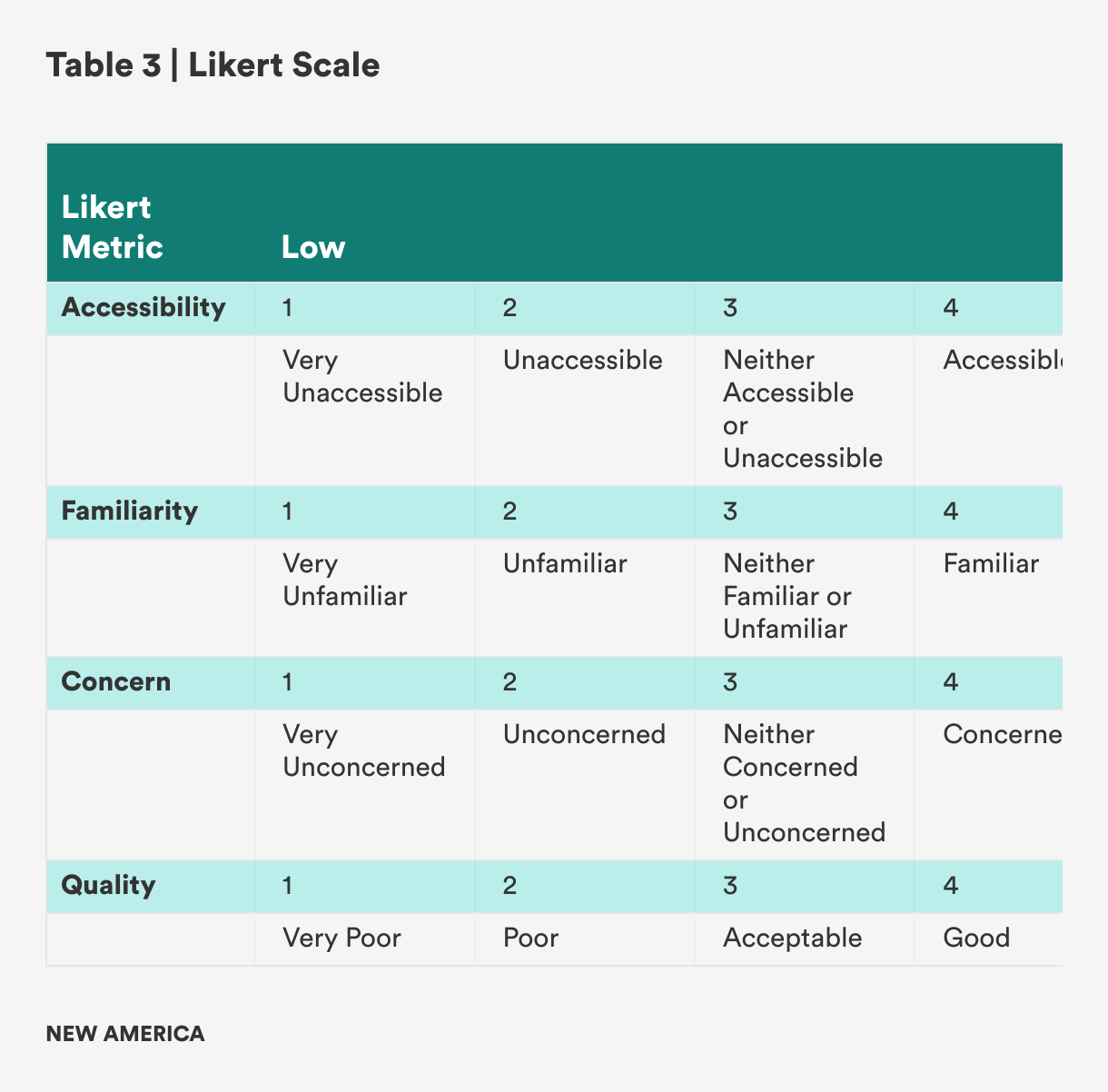

Three generative AI applications were chosen for the SAUL prototype pilot: Claude, a text generator built on the generative pre-trained transformer (GPT) architecture, and two image and video generators, MidJourney and Sora. These tools were chosen for the pilot design of the label because of their popularity. In developing the label's wireframe, the available documentation for each tool was reviewed, including privacy policies, terms of use/service, developer documentation, community guidelines, and any addenda. Each document collected was scored on four general criteria: “accessibility,” “familiarity,” “level of concern,” and “quality.” These criteria informed the widgets of the wireframe. If a policy scored high (e.g., a mean score of 3.5 or above), the associated indicator would be highlighted on the label; if it scored lower (a mean score below 3.5), the indicator would not be highlighted. Definitions of each criterion and the Likert scale used are given in Tables 2 and 3.
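The highlighting rule above can be sketched as a simple threshold check. This is a minimal illustration under stated assumptions, not the pilot's actual scoring tool: the function name `should_highlight`, the assumed 1 to 5 Likert range, and the example scores are all hypothetical. The text also does not say which ratings are averaged (for example, a document's scores across the four criteria, or several reviewers' scores), so the sketch simply takes a list of scores for one policy.

```python
from statistics import mean

# Cutoff taken from the text: a mean of 3.5 or above counts as "high".
HIGHLIGHT_THRESHOLD = 3.5

# Assumption: a 1-5 Likert range; the actual scale is defined in Tables 2 and 3.
LIKERT_MIN, LIKERT_MAX = 1, 5


def should_highlight(scores: list[int]) -> bool:
    """Return True if a policy's mean Likert score meets the highlight threshold.

    `scores` holds the ratings for one policy document. Which ratings are
    averaged is not specified in the source; this is a hypothetical helper.
    """
    if not scores:
        raise ValueError("at least one score is required")
    for score in scores:
        # Reject values outside the assumed Likert range.
        if not LIKERT_MIN <= score <= LIKERT_MAX:
            raise ValueError(f"score {score} is outside the assumed Likert range")
    return mean(scores) >= HIGHLIGHT_THRESHOLD


# Hypothetical scores for one privacy policy (not data from the pilot).
privacy_policy_scores = [4, 3, 4, 3]
print(should_highlight(privacy_policy_scores))  # prints True (mean is exactly 3.5)
```

Using a single cutoff (`>= 3.5`) means every possible mean, including values such as 3.45, falls on exactly one side of the rule.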

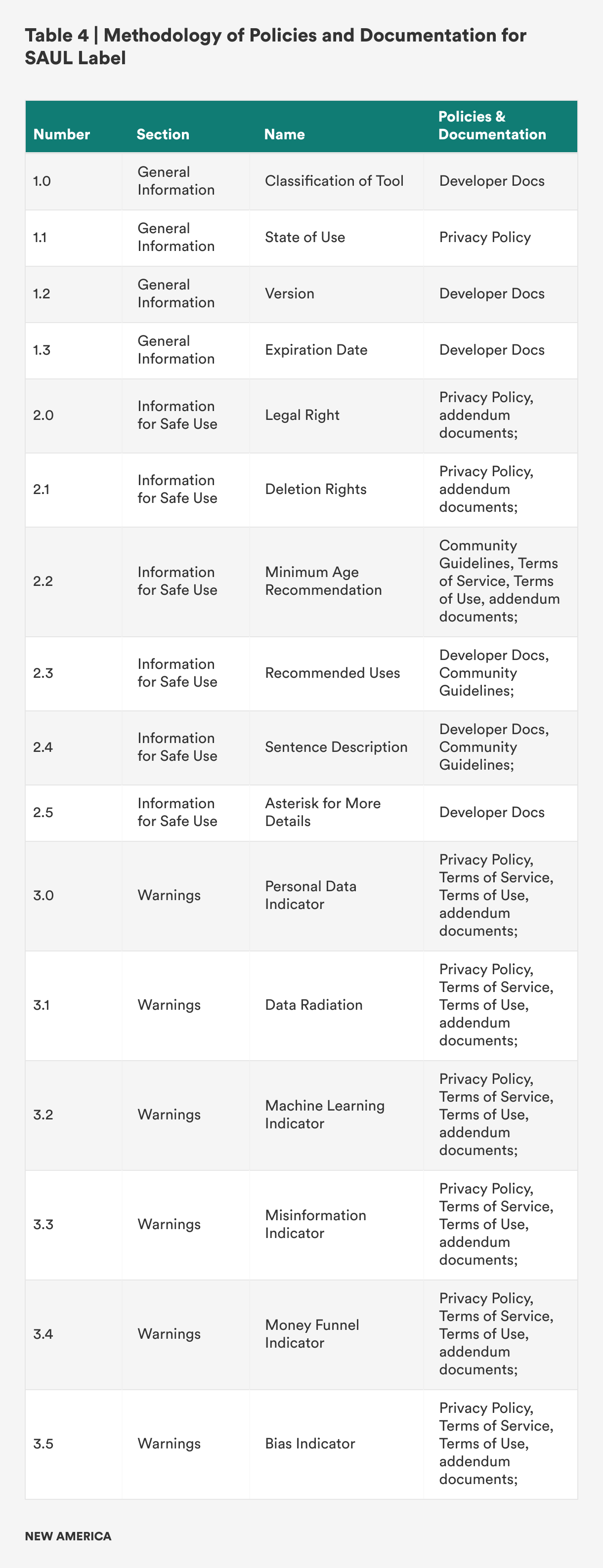

Methodology for Policies and Documentation for the SAUL Label

The methodology for developing the three sections of the SAUL label is summarized in Table 4, which maps each section to its associated icon tool and documentation.

Glossary of Key Terms

- Algorithmic Decision Systems (ADS): Automated systems that use algorithms to make or support decisions in applications such as credit score generation, rideshare platforms, and supply and demand prediction.

- Artificial Intelligence (AI): The science of engineering machines with human-like intelligence.

- Cloaked Consent: The act of hiding or obscuring choice on digital platforms through manipulative design.

- Deep Learning: A subset of machine learning methods that use neural networks with multiple layers.

- Explainable Artificial Intelligence (XAI): A subset of the AI field that seeks to use tools and frameworks to help users understand and interpret predictions made by machine learning models. It is also referred to as explainability.

- Generative Artificial Intelligence (Generative AI or Gen AI): A form of AI that produces seemingly new and synthetic content such as text, images, audio, and videos.

- Generative Adversarial Network (GAN): A form of AI that learns to generate images by training two networks in competition with one another: a generator that produces synthetic images and a discriminator that learns to distinguish synthetic images from real ones. As training proceeds, the generator learns to produce increasingly realistic images.

- Generative Pre-trained Transformer (GPT): A form of AI, pre-trained on large amounts of human-written text, that generates new text in response to a user’s prompts, often through a chat interface.

- Natural Language Generation (NLG): A subfield of natural language processing focused on how machines generate human language.

- Natural Language Processing (NLP): A subfield of artificial intelligence and linguistics focused on how machines process human language.

- Natural Language Understanding (NLU): A subfield of natural language processing focused on how machines understand human language.

- AI Transparency: A subfield of ethical AI that studies how to make AI-based systems open and clear.