Translating the Artificial: Universal Design for Communicating Uses, Harms, and Policies for Generative AI Tools

Abstract

The release of ChatGPT in 2022 ignited a global tinderbox of innovation in generative artificial intelligence (AI) tools and spurred discussions on responsibility, ethics, and regulation. However, efforts to communicate the opportunities and vulnerabilities of generative AI tools to the public remain scant, posing major risks for the security of society. To build a secure AI-enabled future, the federal government, associated agencies, and research institutions need to prioritize and standardize the design of a dynamic, voluntary labeling system for generative AI tools that informs users of each tool's appropriate uses, potential harms, and available data protections.

This report proposes a preliminary universal design labeling system called “Simplified Algorithms for User Learning,” or “SAUL.” Starting with two types of generative AI tools, generative pre-trained transformers (GPTs) and generative adversarial networks (GANs), SAUL displays three sections of information: tool functionality, potential harms of use, and data protection policies. This report also advocates for further research on the label, enforcement of the voluntary design, and oversight by a participatory public council.

Acknowledgments

This endeavor would not have been possible without the financial and technical support of New America, including Camille Stewart Gloster, Lauren Zabierek, Bridget Chan, Christina Morillo, and Peter Singer. I would also like to thank my supportive husband, my reviewers, and the many participants who contributed to my research.

Editorial disclosure: The views expressed in this report are solely those of the author(s) and do not reflect the views of any government entities or of New America, its staff, fellows, funders, or board of directors.

Executive Summary

Advancements in deep learning and natural language processing (NLP) catapulted financial markets into a cultural and economic hype cycle for AI-based tools. Although AI-based tools are not new, consumers have seen a dramatic increase in access to generative AI tools since 2022.1 The AI market is expected to exceed $298.2 billion by the end of 2024 and is projected to rise to roughly $1.8 trillion by 2030.2 With the rise of generative AI tools, anxieties have grown about the future of work, the privacy of individuals and their likenesses, and election security.3 However, users are often not given the information they need to understand how and why the algorithms behind their favorite applications work, that is, the transparency and explainability of those algorithms. “Explainability” refers to the ability of human users to understand and trust the results created by machine learning algorithms. Public-facing efforts to explain AI have largely been confined to algorithmic decision systems in social media feeds and to privacy labels, leaving substantial gaps in explaining appropriate uses, potential harms, and available data privacy protections.4 While explainability efforts focused on social media feeds have raised awareness of the field of algorithmic explainability, few such tools are available for consumer audiences.

In 1969, the White House Conference on Food, Nutrition, and Health led to a refocusing of food labeling efforts at the U.S. Food and Drug Administration (FDA), resulting in nutrition labels. Since then, iterations of the label's iconic design have inspired a new generation of labels for broadband service plans and for the datasets used by data scientists.5 Nutrition labels democratize access to information, increase transparency, and expand freedom of choice.

However, one significant drawback of nutrition labeling for software products is that the labels are static. Software products change with updates, deprecated features, and bug fixes; a labeling system for software must therefore be dynamic and offer information relevant to the consumer. Many labeling efforts for software tools take a top-down governance approach, including dense details relevant only to subject matter experts such as lawyers, security professionals, and engineers.

This report develops a preliminary universal design labeling system for two types of generative AI tools. The Simplified Algorithms for User Learning (SAUL) label displays three sections of information in plain English: tool functionality, potential harms of use, and data protection policies.6 This report also advocates for further research on the label, enforcement of the voluntary design by the Federal Communications Commission (FCC), and oversight by a participatory public council led by the Department of Homeland Security’s Artificial Intelligence Safety and Security Board.

Citations

- John Schulman et al., “Introducing ChatGPT,” OpenAI Blog (blog), OpenAI, November 30, 2022, source; Linyuan Lu et al., “Recommender Systems,” Physics Reports, February 7, 2012, source.

- “Global AI Market Size Worldwide in 2021 With a Forecast until 2030,” Statista, 2024, source.

- Anna Milanez, “The Impact of AI on the Workplace: Evidence from OECD Case Studies of AI Implementation,” Organization for Economic Cooperation and Development (OECD) Social, Employment and Migration Working Papers No. 289, March 27, 2023, source; Natasha Singer, “Teen Girls Confront an Epidemic of Deepfake Nudes in Schools,” New York Times, April 8, 2024, source; Ali Swenson and Will Weissert, “New Hampshire Investigating Fake Biden Robocall Meant to Discourage Voters Ahead of Primary,” AP News, January 22, 2024, source.

- “Introducing 22 System Cards That Explain How AI Powers Experiences on Facebook and Instagram,” Meta AI (blog), Meta AI, June 29, 2023, source; “Privacy Labels,” Apple, 2024, source.

- Cora Lewis, “Internet Providers Must Now Be More Transparent About Fees, Pricing, FCC Says,” AP News, April 10, 2024, source; “The Data Nutrition Project,” Data Nutrition Project, 2024, source.

- Plain English is defined here as text written at a 7th–8th grade reading level on the Flesch-Kincaid scale.

Introduction

“Cloaked consent,” the practice of gaining consent through deception and manipulative design patterns, is common across the technology landscape.1 In 2013, Touch ID arrived on the iPhone as a breakthrough toward an effortless, password-free future.2 Within six years, biometrics had become fully integrated into consumer products, services, and even family vacations.3 Over the course of the 2010s, AI-powered facial recognition reached doorbell cameras and airport terminals, ushering in an array of opaque data practices built on AI-based tooling.4 Yet standards for transparency remain absent from these innovations, leading to distrust, lack of understanding, and loss of autonomy.5

Transparency is a powerful lever for consumer education at scale, as the ubiquity of Food and Drug Administration (FDA) nutrition labels shows. Of the respondents interviewed for this report, 95 percent read FDA nutrition labels ‘every time’ or ‘most of the time’ and look to them for information on components (e.g., ingredients or materials), potential harms of use (e.g., allergens or toxicity), and provenance. Yet 91 percent of consumers (97 percent of those ages 18–34) skip data and privacy policies because the policies are inaccessible, leaving consumers without an understanding of the tools they use. Emergent technologies like generative AI further exacerbate this gap.6 Without a transparent design system for communicating the policies and appropriate uses of generative AI tools, consumers worldwide will face a wider digital divide, an eroded national security landscape, and the continued spread of misinformation. This research proposes a label for generative AI tools grounded in universal design to give consumers accessible information on generative AI tool functionality, potential harms of use, and available data protection policies.

Citations

- Jamie Luguri and Lior Strahilevitz, “Shining a Light on Dark Patterns,” Coase-Sandor Institute for Law and Economics 13 (2021), source.

- Jess Weatherbed, “10 Years Ago, Apple Finally Convinced Us To Lock Our Phones,” The Verge, September 12, 2024, source.

- “Do You Have To Use Your Finger Print To Enter The Parks or Does The Wrist Band Work Instead of the Finger Print,” Disney, October 11, 2023, source.

- Joy Buolamwini, “The Face is the Final Frontier of Privacy,” Time Magazine, November 21, 2023, source.

- Patrick Gage Kelley et al., “A ‘Nutrition Label’ for Privacy,” Symposium On Usable Privacy and Security, 2009, source.

- Patrick Gage Kelley et al., “A ‘Nutrition Label’ for Privacy,” Symposium On Usable Privacy and Security, 2009, source.

What Makes Artificial Intelligence “Artificial”?

Before the boom of AI, computer scientists John McCarthy, Marvin Minsky, Nathaniel Rochester, and Claude Shannon convened the 1956 Dartmouth Workshop, where the term “AI” was coined.1 McCarthy used the term to mean the science and engineering of making intelligent machines, including tools that seek to mimic human intelligence, rationality, and thought.2 Despite McCarthy’s early definition, there is still no consensus today on how to define AI.3

Just as there is no consensus definition of AI, there is no consensus definition of human intelligence. Human intelligence can roughly be broken into three concepts: (1) the use of complex heuristics to arrive at a decision faster, (2) the process of learning, and (3) the retention of information. The brain is the center of human intelligence and comprises a decentralized network of nerve cells called neurons that help command thought, reason, and emotion with fluidity. Neurons hold information that humans have learned through experience and education. Humans simplify complex decisions by using heuristics. Bias, for example, is colloquially thought of as negative, but it is a type of heuristic humans use to arrive at a result faster. The biological process of human intelligence and learning provided computer scientists and engineers with a basis for creating intelligence in machines.

Generally, AI uses a combination of statistical probability and algorithms (sets of rules) to arrive at a desired result. Importantly, AI systems are probabilistic, not deterministic. To arrive at a statistically probable result, machines undergo a process loosely modeled on human learning, called “machine learning,” in which large volumes of data are used to train a machine or system toward a desired result.4 Like the human brain, machines need a network of units to retain information. Neural networks serve this purpose: they consist of interconnected computational units whose outputs combine to build predictions. A neural network learns by repeatedly adjusting the numerical weights on the connections between its units, using linear algebra and optimization methods such as gradient descent. Machines, too, rely on forms of bias to arrive at a result faster. (Although this is an oversimplification of the human and machine learning processes, it shows how closely machine intelligence imitates human cognition, and it underscores both the rapid development of the AI sector and the convergence of the human and artificial worlds.)
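To make the idea of adjusting weights concrete, the sketch below trains a single artificial unit (a logistic neuron) with gradient descent. It is a toy under stated assumptions, not how production models are trained: the training data is the logical OR function, and the learning rate and number of passes are arbitrary values chosen for illustration. The output is a probability between 0 and 1, which reflects the probabilistic nature of AI systems. The `bias` variable here is the machine learning parameter of that name, a learned offset, which is related to but distinct from the human heuristic sense of bias.

```python
import math

def sigmoid(z: float) -> float:
    """Squash any real number into (0, 1) so the output reads as a probability."""
    return 1.0 / (1.0 + math.exp(-z))

def predict(weights: list[float], bias: float, x: tuple[float, float]) -> float:
    # Weighted sum of the inputs plus the bias term, passed through the sigmoid.
    return sigmoid(weights[0] * x[0] + weights[1] * x[1] + bias)

def train_neuron(data, epochs: int = 5000, lr: float = 0.5):
    weights = [0.0, 0.0]  # start with no preference for either input
    bias = 0.0
    for _ in range(epochs):
        for x, target in data:
            p = predict(weights, bias, x)
            # For a sigmoid unit with cross-entropy loss, the gradient with
            # respect to the weighted sum is simply (prediction - target).
            error = p - target
            # Gradient descent: nudge each weight against its share of the error.
            weights[0] -= lr * error * x[0]
            weights[1] -= lr * error * x[1]
            bias -= lr * error
    return weights, bias

# Toy training data (an assumption for illustration): the logical OR function.
OR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]

weights, bias = train_neuron(OR_DATA)
for x, target in OR_DATA:
    print(x, target, round(predict(weights, bias, x), 3))
```

A large language model applies the same basic principle, nudging weights to reduce error, but across billions of parameters and many layers.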

Progress in deep learning beginning in 2006 rapidly accelerated the development of AI.5 Deep learning refers to neural networks with a large number of parameters and layers. This progress led to a class of AI systems capable of generating human language, commonly referred to as large language models (LLMs). LLMs include translation tools such as Google Translate and generative pre-trained transformers such as OpenAI’s ChatGPT.

Generative Artificial Intelligence (GenAI)

The AI market is poised to reach $826 billion by 2030.6 Generative AI, sometimes referred to as “gen AI,” is artificial intelligence capable of creating seemingly new and meaningful content such as text, images, or audio.7 One of the most popular generative AI tools is the chatbot, typically built on a generative pre-trained transformer (GPT), a type of model distinguished by its ability to generate human language. Although chatbots are not new, OpenAI’s ChatGPT, released in November 2022, reached over 100 million monthly active users within about two months, making it one of the fastest-growing consumer applications in history.8

Chatbots such as ChatGPT are one of a variety of generative AI tools that purport to enhance artistic expression, increase worker productivity, and simplify mundane tasks.9 However, these advancements also present challenges in the fields of ethics, climate, and labor economics.10 The widespread use of labor from the Global South to help build and refine generative AI systems has deepened patterns of digital colonialism.11 In addition, a Goldman Sachs report estimated that generative AI could raise global gross domestic product by 7 percent over a ten-year period and expose the equivalent of 300 million full-time jobs to automation.12 Although data on AI’s impact on climate change is murky, AI tools are computationally intensive and require more energy than other software applications.13 Proponents of AI point to its opportunities across a variety of sectors, but realizing those benefits requires building sustainable and responsible practices into AI’s development, implementation, and deployment.

Generative Pre-Trained Transformers (GPTs)

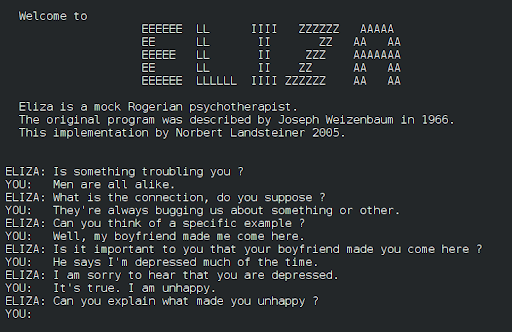

Today’s chatbots trace their lineage to ELIZA, an early natural language processing (NLP) program developed by Joseph Weizenbaum in the 1960s; the first GPT model itself was released by OpenAI in 2018. NLP is the field concerned with enabling machines to process, understand, and generate human language. Advancements in NLP produced tools such as Siri in 2010, Google Now in 2012, and Alexa in 2014. Today, thanks to progress in natural language understanding (NLU) and natural language generation (NLG), GPTs are used for a variety of tasks such as language generation, virtual personal assistants, and code generation. GPTs are pre-trained on large amounts of text using unsupervised (more precisely, self-supervised) learning, in which the model learns to predict the next word in a sequence, and are then fine-tuned for specific tasks.14 While GPTs can be an incredible source of information, researchers have pointed to vulnerabilities in their use in health care, computer programming, and media literacy.15

Wikimedia Commons
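The core training task described above, predicting the next word, can be illustrated without a neural network. The sketch below is a deliberately simplified stand-in, not how GPTs work internally: a bigram model that counts which word follows which in a tiny, made-up corpus and then samples from those frequencies. Real GPTs use transformer networks that condition on long stretches of preceding text; only the “predict the next word, then sample” loop carries over.

```python
import random
from collections import Counter, defaultdict

def train_bigram(corpus: str) -> dict[str, Counter]:
    """Count how often each word is followed by each other word."""
    words = corpus.lower().split()
    counts: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        counts[current][nxt] += 1
    return counts

def next_word_probs(counts: dict[str, Counter], word: str) -> dict[str, float]:
    """Turn raw follow-up counts into a probability for each candidate next word."""
    following = counts.get(word.lower())
    if not following:
        return {}
    total = sum(following.values())
    return {w: c / total for w, c in following.items()}

def generate(counts: dict[str, Counter], start: str, length: int, seed: int = 0) -> str:
    """Repeatedly sample a next word in proportion to its probability."""
    rng = random.Random(seed)
    out = [start]
    for _ in range(length):
        probs = next_word_probs(counts, out[-1])
        if not probs:  # no known continuation, so stop early
            break
        words, weights = zip(*probs.items())
        out.append(rng.choices(words, weights=weights, k=1)[0])
    return " ".join(out)

# A made-up toy corpus, used only for illustration.
corpus = "the cat sat on the mat the cat ate the fish"
model = train_bigram(corpus)
print(next_word_probs(model, "the"))  # {'cat': 0.5, 'mat': 0.25, 'fish': 0.25}
print(generate(model, "the", 5))
```

Because the next word is sampled rather than fixed, the same prompt can yield different continuations, which is one reason chatbot output varies from run to run.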

Generative Adversarial Networks (GANs)

Generative adversarial networks (GANs) are a class of generative AI models long associated with image generation, although popular text-to-image tools such as Midjourney and DALL-E 3 rely primarily on a different technique, diffusion models. A GAN learns to model images by training two networks in competition with one another: a generator that produces synthetic images and a discriminator that tries to distinguish them from real ones. As training proceeds, the discriminator gets better at judging authenticity and the generator gets better at fooling it, with the goal of producing increasingly realistic images.16 This adversarial training allows GANs to be used in a variety of scenarios, from the medical field (drug discovery) to data science (data augmentation). GANs have become most popular for image generation, audio synthesis, and audio recognition.17 Like other generative AI tools, GANs present unique opportunities and challenges. For example, GANs can help individuals who have experienced vocal trauma, but they can also be used to spread misinformation and non-consensual sexually explicit material.18
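For readers who want the formal version, the original 2014 GAN formulation frames the competition between the two networks as a two-player minimax game. The block below restates that standard objective; the symbols (generator G, discriminator D, random noise z, real-data distribution p_data) are the conventional ones from the GAN literature, not notation used elsewhere in this report.

```latex
\min_{G}\,\max_{D}\; V(D,G) =
  \mathbb{E}_{x \sim p_{\text{data}}(x)}\big[\log D(x)\big]
  + \mathbb{E}_{z \sim p_{z}(z)}\big[\log\big(1 - D(G(z))\big)\big]
```

Here D(x) is the discriminator's estimated probability that x is a real image. The discriminator raises V by scoring real images near 1 and generated images G(z) near 0; the generator lowers V by producing images that push D(G(z)) toward 1, which is the "fooling" described above.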

Citations

- Roberto Cordeschi, “AI’s Half Century: On the Thresholds of The Dartmouth Conference,” Università degli Studi di Roma, 2006, 109–114, source.

- John McCarthy, “What is Artificial Intelligence?” University of Nebraska–Lincoln School of Computing, 2004, 1–14, source.

- P.M. Krafft et al., “Defining AI in Policy Versus Practice,” Proceedings of the AAAI/ACM Conference on AI, Ethics, and Society (2019): 72–78, source.

- Maddalena Favaretto et al., “What is Your Definition of Big Data? Researchers’ Understanding of the Phenomenon of the Decade,” PLoS ONE 15, no. 2 (2020), source.

- Josh Patterson and Adam Gibson, Deep Learning: A Practitioner’s Approach, ed. Mike Loukides and Tim McGovern (Sebastopol, CA: O’Reilly Media Inc., 2017), 81.

- “Global AI Market Size Worldwide in 2021 With a Forecast until 2030,” Statista, 2024, source.

- Stefan Feuerriegel et al., “Generative AI,” Business & Information Systems Engineering 66 (2024): 111–126, source.

- Krystal Hu, “ChatGPT sets record for fastest-growing user base-analyst note,” Reuters, February 2, 2023, source.

- Kate Glazko et al., “An Autoethnographic Case Study of Generative Artificial Intelligence’s Utility for Accessibility,” Proceedings of the 25th International ACM SIGACCESS Conference on Computers and Accessibility (Fall 2023): 1–8, source.

- Divy Thakkar et al., “Towards an AI-Powered Future that Works for Vocational Workers,” CHI ’20: Proceedings of the 2020 CHI Conference on Human Factors in Computing Systems (April 2020): 1–13, source.

- Michael Kwet, “Digital Colonialism: US Empire and the New Imperialism in the Global South,” Race & Class 60, no. 4 (2019): 3–26, source; Anna Milanez, “The Impact of AI on the Workplace: Evidence from OECD Case Studies of AI Implementation,” Organization for Economic Cooperation and Development (OECD) Social, Employment and Migration Working Papers No. 289, March 27, 2023, source.

- “Generative AI Could Raise Global GDP,” Goldman Sachs, 2023, source.

- Lauren Leffer, “The AI Boom Could Use a Shocking Amount of Electricity,” Scientific American, October 13, 2023, source.

- Gokul Yenduri et al., “GPT (Generative Pre-Trained Transformer) – A Comprehensive Review on Enabling Technologies, Potential Applications, Emerging Challenges, and Future Directions,” arXiv, May 21, 2023, source.

- Luigi De Angelis et al., “ChatGPT and the rise of large language models: The New AI-Driven Infodemic Threat in Public Health,” Frontiers in Public Health 11 (2023), source.

- Antonia Creswell et al., “Generative Adversarial Networks: An Overview,” arXiv, 2017, source.

- Jie Gui et al., “A Review on Generative Adversarial Networks: Algorithms, Theory, and Applications,” Journal of Latex Class Files 14, no. 8 (2015), source.

- Nicholas Diakopoulos and Deborah Johnson, “Anticipating and Addressing the Ethical Implications of Deepfakes in the Context of Elections,” New Media & Society 23, no. 7 (2020): 2072–2098, source; Derek B. Johnson, “New Hampshire Robocall Kicks Off Era of AI-Enabled Election Disinformation,” CyberScoop, January 24, 2024, source; Natasha Singer, “Teen Girls Confront an Epidemic of Deepfake Nudes in Schools,” New York Times, April 8, 2024, source.

Consumer Choice and Data Policies: Sentiment Analysis of User Interviews

“I wish that there was a way for companies to make data policies easier to understand so people aren’t signing blindly. Where’s my data going? I have a master’s degree, and I still don’t understand.”—Gen Z interviewee

Consumers’ preferences, opinions, and thoughts on the design and features of emerging technology are often neglected. Attempts at greater explainability and accessibility tend to focus on the perspective of engineers and data scientists, as with Mozilla’s AI Guide or the Data Nutrition Project’s Data Labels. Although these industry strides are needed, they often come at the expense of accessible tools for consumers. Meanwhile, consumer explainability tools focus on a specific product feature, as with Meta’s System Cards, which aim to help consumers better understand their feeds.1 Apple has likewise introduced privacy labels that summarize the data practices of apps in its App Store. Efforts to give consumers more choice have largely centered on data broker deletion apps such as Permission Slip or DeleteMe. Although these tools are highly valuable, none of them explains generative AI tools. An accessible, easy-to-read tool that explains the generative AI tool in use and its data policies is needed to help bridge the ever-widening explainability gap between tool and consumer.

To address this gap and better understand consumers’ needs, qualitative interviews were conducted over three months in 2024 to collect information on consumers’ opinions toward generative AI, their digital literacy, their understanding of data policies, and their attitudes toward nutrition labeling. The process consisted of a questionnaire, a consent form, and an interview. A scheduling form was disseminated to prospective interviewees through social media platforms, email, and word of mouth. Interviews ranged in length from 30 to 60 minutes, totaling almost 1,500 minutes of audio data. Participants predominantly lived in the United States (80 percent of respondents), while 14 percent were based in Europe, 3 percent in Asia, and the remaining 3 percent in Africa. Generations represented included baby boomers, Generation X, millennials, and Generation Z, with millennials making up a majority of respondents. Almost all respondents (26) had interacted with a generative AI tool; only one had no familiarity with or past use of generative AI tools. Insight into how individuals across various demographic backgrounds engage with technology, and generative AI in particular, helped steer the design of the label and the information presented on it.

Many respondents reported negative feelings about signing up for and using software tools, including generative AI tools. A sentiment analysis of the interview data found that 33 percent of respondents expressed negative feelings about their personal autonomy when signing up for software tools. Users also reported a significant lack of understanding of the data policies behind the software tools they used. A millennial user described feeling that, “I have to suspend my discomfort in order to function in the digital space.” Respondents shared similar feelings about the trade-off between access and paywalls, with one noting, “I’d rather pay for resources to limit the types of personal information I give to companies.” Some respondents in the arts, teaching, and consulting fields noted that access to certain services and tools directly affected their ability to find work, network, and produce relevant content. These feelings intensified when the discussion turned to generative AI tools.

Interviewees also often felt unable to control where their private information went and who had access to it. When discussing their comprehension of data policies, respondents cited incomprehensible legalese. This lack of knowledge often left them feeling they had no real choice about which companies would best protect their private information. Across sectors, including among those with post-secondary and terminal degrees, 93 percent said that they do not understand privacy policies and do not read them. While some blindly accept privacy policies, 33 percent of respondents reported trying to read portions of them.

For artists and teachers, questions of consent and privacy loomed larger. All respondents in the arts and teaching sectors questioned whether work they created using generative AI tools would be used to further train the model or be passed off as the model’s own. An actor discussed how the SAG-AFTRA and WGA strikes affected their ability to work as a stage actor; they temporarily switched to voice acting to earn a living and described wrestling with how their voice could be used without their consent. Another interviewee wondered whether users would feel comfortable knowing their work could be used to train AI models, pointing to a lack of awareness of how these policies affect user data. Almost all respondents (96 percent) echoed the sentiment of one interviewee who said they “don’t feel protected as a consumer.” Interviewees were also asked to define personal data. Many included the photos they share, their preferences, their searches, and other traditionally understood components of personal information.

When asked about habits and attitudes toward labels such as FDA nutrition labels, cosmetics labels, and others, 89 percent of respondents said they read labels at a frequency of “several times” or more. For those respondents, labels helped communicate a product’s components, provenance, and potential harms. Some respondents who read labels (25 percent) noted that labeling provides verifiable credentials and a level of trustworthiness that raises their comfort level. One respondent mentioned that objective data required by law not only serves a powerful advertising function but also provides much-needed information for consumers navigating their choices. Most respondents wanted to know about the “right to delete” and any potential security concerns. The ability to scan the most pertinent information was also frequently cited as a must-have for a label.

Data collected in these interviews informed a label prototype that uses plain language to describe the generative AI tool and presents skimmable information on its data policies. Consumers often noted that traditional nutrition labels are too dense and use complex language that is hard to understand. The first iteration of the label focused on plain language and understandability. Although further testing and consumer feedback are needed, this research provides a starting point for exploring a more robust consumer-facing label for generative AI tools.

Citations

- “Introducing 22 System Cards That Explain How AI Powers Experiences on Facebook and Instagram,” Meta AI (blog), Meta AI, June 29, 2023, source.

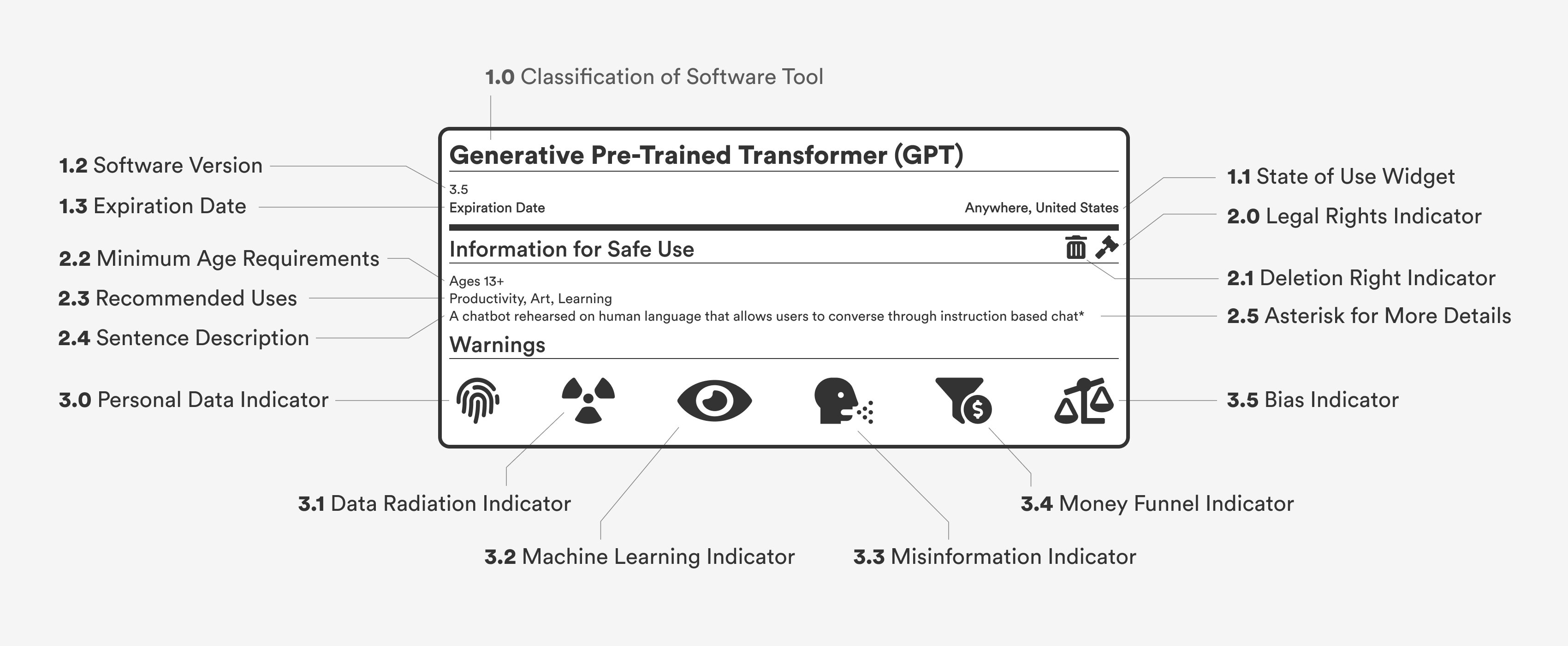

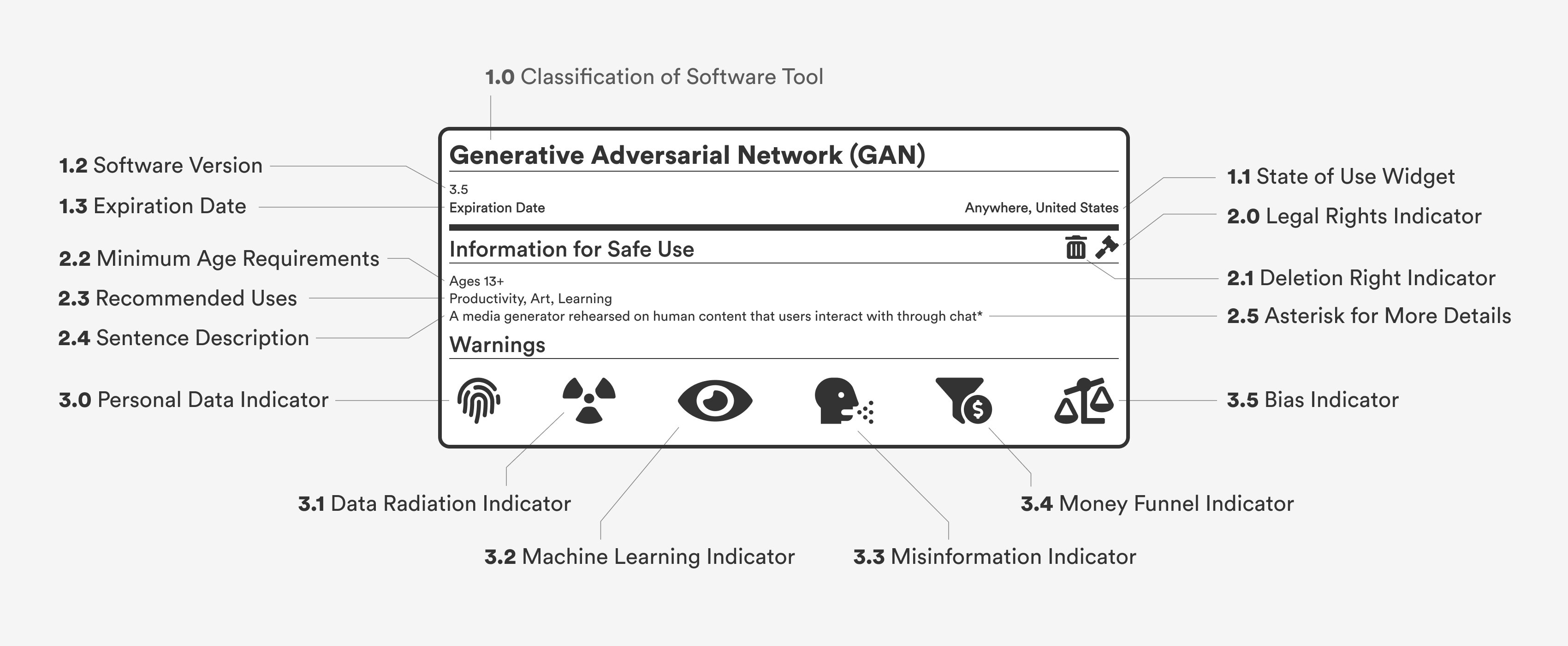

The Simplified Algorithms for User Learning (SAUL) Label

The Simplified Algorithms for User Learning (SAUL) label is an explainability label that features universal design elements, plain language at the 8th to 9th grade reading level, and familiar iconography from civil and technical engineering. SAUL also includes a one-sentence explainer describing how the generative AI tool works. Users who want more information can click the asterisk above the sentence description to reach the SAUL website, which offers a more detailed explanation of generative pre-trained transformers (GPTs) and generative adversarial networks (GANs).
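To check whether label text meets a grade-level target, a drafter could compute the Flesch-Kincaid Grade Level, the scale this report uses to define plain English. The formula in the sketch below (0.39 × words per sentence + 11.8 × syllables per word − 15.59) is the standard one; the syllable counter is a rough vowel-group heuristic assumed for illustration, so scores will differ somewhat from dedicated readability tools.

```python
import re

def count_syllables(word: str) -> int:
    """Rough heuristic: count groups of consecutive vowels in the word."""
    word = re.sub(r"[^a-z]", "", word.lower())
    if not word:
        return 0
    count = len(re.findall(r"[aeiouy]+", word))
    # Treat a final "e" as silent ("make"), but keep "-le" endings ("table").
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)

def flesch_kincaid_grade(text: str) -> float:
    """Standard Flesch-Kincaid Grade Level formula applied to a block of text."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return (0.39 * (len(words) / len(sentences))
            + 11.8 * (syllables / len(words))
            - 15.59)

simple = flesch_kincaid_grade("The cat sat on the mat.")
dense = flesch_kincaid_grade(
    "Comprehensive documentation necessitates considerable organizational infrastructure."
)
print(round(simple, 2), round(dense, 2))
```

Short sentences built from short words score low, which is why plain-language label text tends to favor brief, concrete phrasing.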

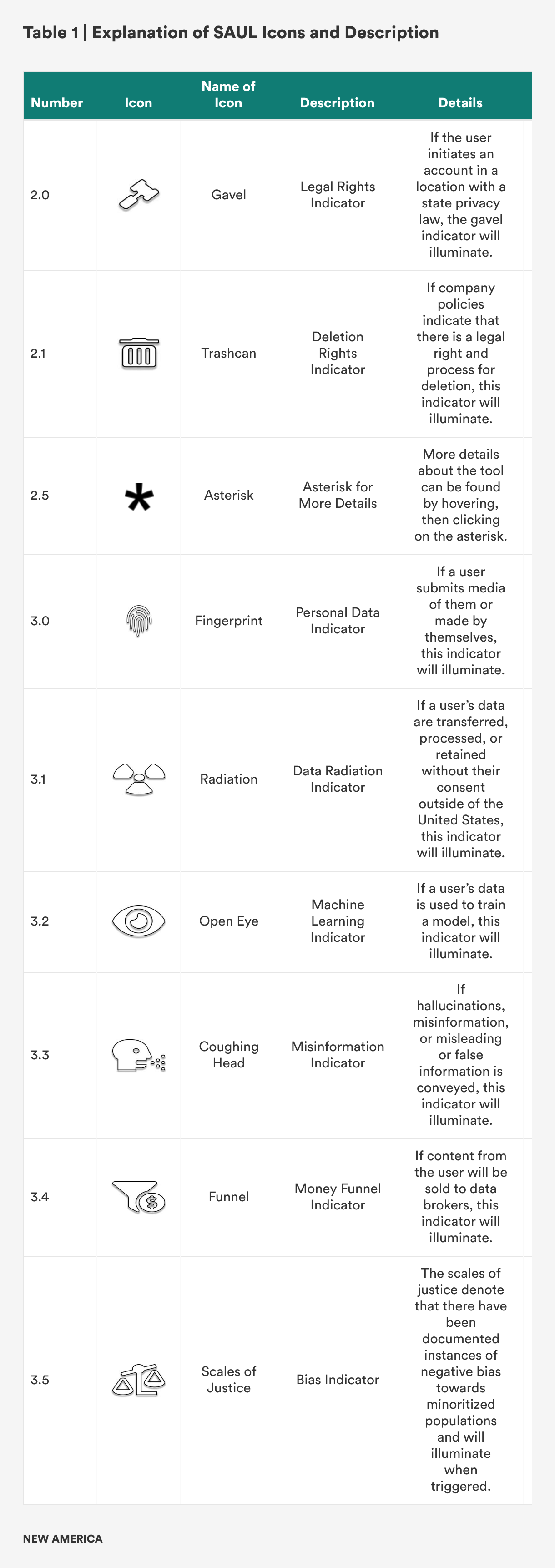

The label is organized into three sections with corresponding widgets and icons: general information, information for safe use, and warnings. A widget becomes illuminated to show that it is in use; when an icon is not illuminated, there is not enough information to provide a definitive or reasonable answer. General information shows the classification of the tool (e.g., ‘generative pre-trained transformer’ or ‘generative adversarial network’), the state of use, the version currently in use, and an expiration date. The second section communicates the information necessary for safe use, including the right to delete personal data, the recommended age of use, and a one-sentence description of how to interact with the tool. The last section features six different warnings, including the use of personal data to train machine learning models, misinformation, bias, data leakage, and data broker involvement. Table 1 shows each of the three sections with detailed descriptions of their icons.
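One way to see how the label can be dynamic rather than static is to treat it as structured data. The sketch below models the three sections as Python data classes; every class, field, and example value is hypothetical and chosen for illustration, not a specification of SAUL. An unlit widget is represented as `illuminated=False`, matching the rule that an unlit icon means there is not enough information for a definitive answer, and the version and expiration fields let a new label be issued whenever the tool changes.

```python
from dataclasses import dataclass, replace
from datetime import date

@dataclass(frozen=True)
class Widget:
    """One icon on the label (hypothetical structure for illustration)."""
    name: str
    # True = icon lit (item applies); False = icon unlit, meaning there is
    # not enough information to give a definitive answer.
    illuminated: bool
    detail: str = ""

@dataclass(frozen=True)
class SaulLabel:
    # Section 1: general information
    classification: str   # e.g. "generative pre-trained transformer"
    state_of_use: str     # hypothetical free-text field
    version: str
    expires: date
    # Section 2: information for safe use
    how_to_use: str = ""  # the one-sentence interaction description
    safe_use: tuple[Widget, ...] = ()
    # Section 3: warnings
    warnings: tuple[Widget, ...] = ()

    def is_expired(self, today: date) -> bool:
        """A label past its expiration date should be reissued, not relied on."""
        return today > self.expires

    def active_warnings(self) -> list[str]:
        return [w.name for w in self.warnings if w.illuminated]

def reissue(label: SaulLabel, version: str, expires: date, **changes) -> SaulLabel:
    """Produce a new label for a new tool version instead of editing the old one."""
    return replace(label, version=version, expires=expires, **changes)

# Hypothetical example values, not data about any real product. Only the five
# warnings the report names are shown; the sixth is not named in the text.
label = SaulLabel(
    classification="generative pre-trained transformer",
    state_of_use="general availability",
    version="1.0",
    expires=date(2025, 6, 30),
    how_to_use="Type a question and review the answer before relying on it.",
    safe_use=(
        Widget("right to delete personal data", True),
        Widget("recommended age of use", True, "13+"),
    ),
    warnings=(
        Widget("personal data used for training", True),
        Widget("misinformation", True),
        Widget("bias", True),
        Widget("data leakage", False),
        Widget("data broker involvement", False),
    ),
)

print(label.active_warnings())  # ['personal data used for training', 'misinformation', 'bias']
updated = reissue(label, "1.1", date(2025, 12, 31))
print(label.is_expired(date(2025, 7, 1)), updated.is_expired(date(2025, 7, 1)))  # True False
```

Making the label immutable means each update yields a new label rather than silently changing the old one, which preserves a record of what users were shown for each version of the tool.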

Wireframes of SAUL

Using the interview data, I created two prototype SAUL labels based on what consumers want to know before signing up to use a generative AI tool. The labels are meant to appear before a user signs up for a service, informing potential users of the data policies at play. The information is written in accessible language for users of most educational backgrounds and uses a clean layout so it can be easily scanned. Users who want more information on the label widgets can click on the widgets to reach detailed explanations on the SAUL website.

Label courtesy of the author, used with permission.

Label courtesy of the author, used with permission.

Policy Recommendations

Although the Simplified Algorithms for User Learning (SAUL) label seeks to add to the field of explainability, policy changes concerning consumer education and data transparency are needed to ensure a successful and iterative rollout of these kinds of nutrition labels as generative AI evolves. Below are the associated policy recommendations for the federal government and civil society to advance the wider field of data nutrition labels and the SAUL label itself.

Supervise the Research Process

The White House Office of Science and Technology Policy (OSTP) should supervise research into the components of a data nutrition label, given its work on the AI Executive Order. OSTP should also publish a retrospective on pilot initiatives for data labels such as SAUL to further increase transparency. To ensure the viability of data nutrition labels like SAUL, research from government agencies and leading academic organizations in the artificial intelligence space should inform these efforts. Research should be led by agencies such as the Defense Advanced Research Projects Agency and the National Institute of Standards and Technology’s U.S. AI Safety Institute.

Convene a Participatory Public Council on Generative AI

A participatory public council consisting of members of the general public, civil society organizations (CSOs), and non-governmental organizations (NGOs) would help ensure that consumer interests, needs, wants, and concerns are addressed. The council’s research process would focus on creating design standards and continually integrating feedback from the accountability process in three ways: (1) keeping up to date on the latest developments in the space, (2) adhering to updates on the design and research process, and (3) committing to incorporate feedback from consumers. To ensure a policy process that evolves alongside generative AI tools, a four-part cyclical policy process should be considered, flowing sequentially from development to deployment to engagement to feedback.

For example, this four-part cycle could include submission to the SAUL development program, research into data policies and algorithms, and a closed-door quality assurance (QA) process in which industry and non-industry stakeholders evaluate SAUL. Stakeholders involved in the development process would include five to seven private companies within the generative AI space. During the research and QA processes, partners from the private sector, academia, CSOs, and nonprofits would be selected to help guide research and QA. After successful rounds of QA, members of the Safety Council would determine the deployment of subsequent releases of the SAUL label.

Commit to Consumer Education and Consumer Rights

The U.S. Department of Education, in conjunction with OSTP, should develop educational benchmarks for K–12 students to learn about the wider field of AI. Advocacy efforts for consumer rights within the AI field should also be developed to ensure that the changing needs of consumers are understood and integrated into the field of explainability.

Conclusion

The explosive use of generative AI tools has produced productivity gains in fields ranging from art to medicine. Despite these breakthroughs, generative AI tools have also fomented a steady stream of misinformation, disinformation, and mal-information, contributing to a rise in socioeconomic problems in labor, national security, and data privacy. An explainability tool is needed to address the lack of transparency and understandability users face when engaging with generative AI tools. This report presents the Simplified Algorithms for User Learning (SAUL) label, an explainability tool developed using universal design features. SAUL displays three sections of information: tool functionality, potential harms of use, and data protection policies. In addition to the SAUL label itself, the report offers policy recommendations to support its adoption.

Implementing SAUL at scale would not only democratize access to information but also lay the foundation for a more consumer-led tech landscape in which consumers have a voice. Although there will never be a set of principles that all consumers agree on, the research in this report indicates that consumers want accessible information on the data policies of emerging technologies such as generative AI tools. Consumer technology is often built without consumers’ consent, with tech companies assuming they know what is best for users. The SAUL label, or a similar label, could help rebalance power between tech companies and consumers.

Appendix

Methodology for Conducting Interviews

Interviews were arranged through a scheduling form that described the interview and the research project. The form collected information on potential respondents, including email addresses, preferred names, consent to be recorded, permission to be contacted in the future, consent to receive a copy of the final research report, and an option to schedule an interview slot. The form was distributed to prospective interviewees through platforms including LinkedIn and Slack, by email, and by word of mouth.

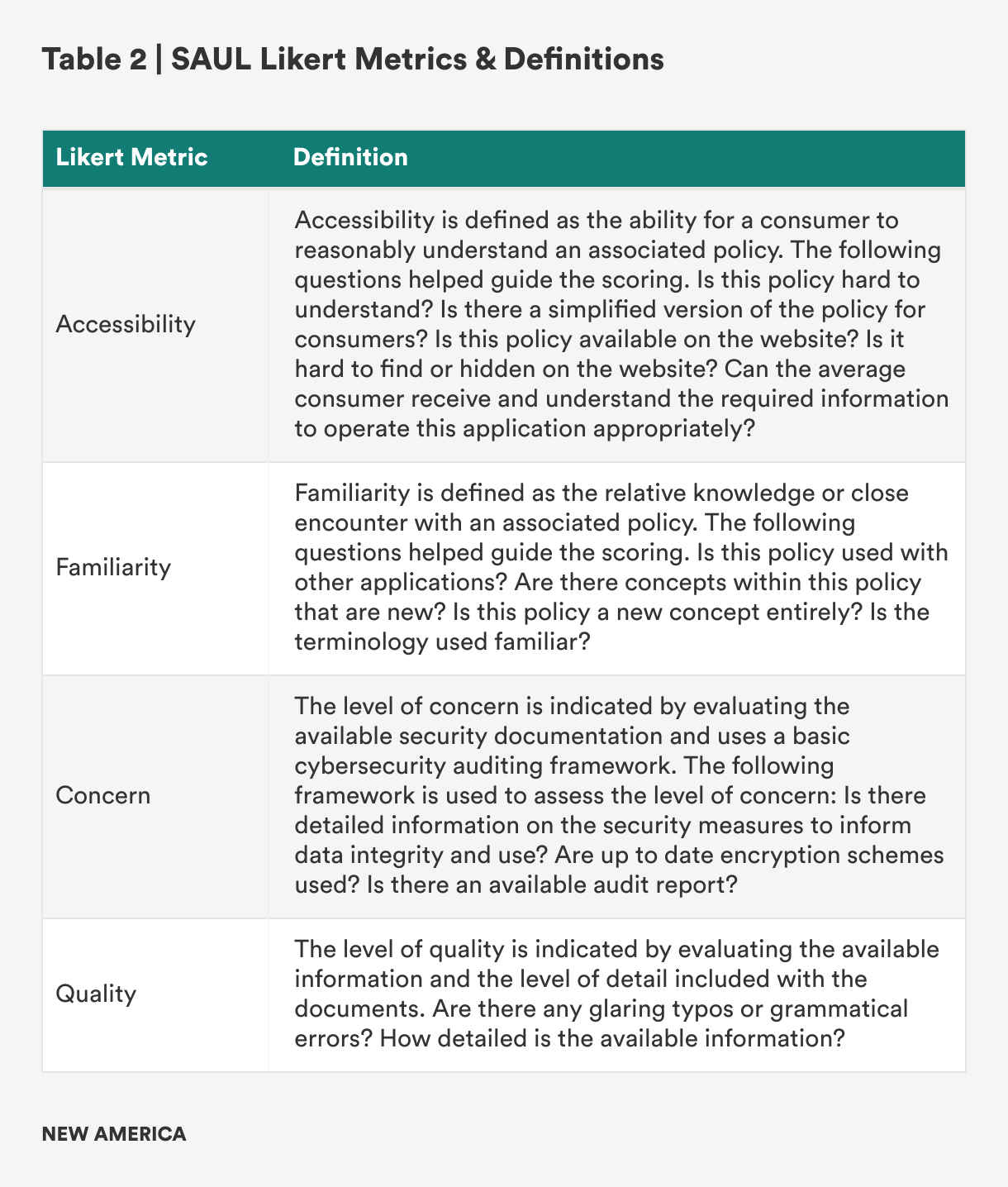

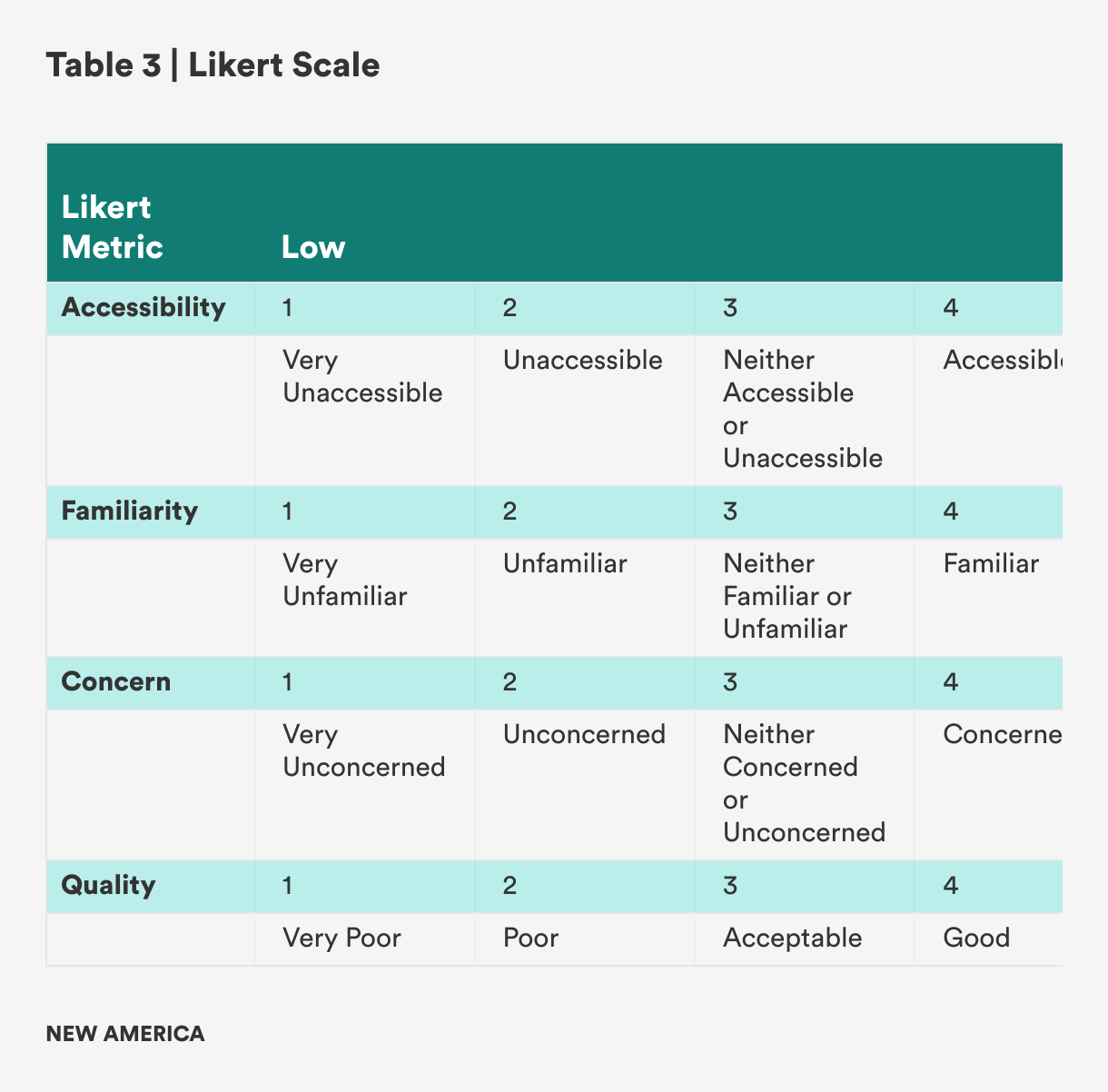

Methodology for Evaluating Generative Artificial Intelligence Applications

Three generative AI applications were chosen for the SAUL prototype pilot: one generative pre-trained transformer (GPT) and two generative adversarial networks (GANs). The three applications, Claude, Midjourney, and Sora, were chosen for the pilot design of the label because of their popularity. To develop the label’s wireframe, the available documentation for each tool was reviewed, including privacy policies, terms of use or service, developer documentation, community guidelines, and any addenda. Each document was scored on four general criteria: “accessibility,” “familiarity,” “level of concern,” and “quality.” These scores informed the widgets of the wireframe. If a particular policy scored high (e.g., a mean score of 3.5 or above), the associated indicator was highlighted on the label; if it scored lower (e.g., a mean score of 3.4 or below), the indicator was not highlighted. Definitions of each criterion and the Likert scale used for scoring are given in Tables 2 and 3 below.
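The highlighting rule described above can be sketched as a small function. This is a minimal illustration under stated assumptions, not the scoring tool used for the report: it assumes each criterion is rated on a 1 to 5 Likert scale (the actual scale is defined in Table 3), that the mean is taken over the four criteria, and that 3.5 is the cutoff, following the example in the text. The name `policy_indicator` is hypothetical.

```python
from statistics import mean

# Criteria named in the report's methodology.
CRITERIA = ("accessibility", "familiarity", "level of concern", "quality")

# Assumptions for illustration: a 1-to-5 Likert scale and a 3.5 cutoff
# (the cutoff follows the report's example; the real scale is in Table 3).
LIKERT_MIN, LIKERT_MAX = 1, 5
HIGHLIGHT_THRESHOLD = 3.5


def policy_indicator(scores: dict[str, int],
                     threshold: float = HIGHLIGHT_THRESHOLD) -> tuple[float, bool]:
    """Return (mean score, highlighted?) for one policy document.

    `scores` maps each of the four criteria to a Likert rating. The mean is
    taken over the four criteria (an assumption), and the indicator is
    highlighted when the mean meets or exceeds the threshold.
    """
    for criterion in CRITERIA:
        if criterion not in scores:
            raise ValueError(f"missing score for {criterion!r}")
        value = scores[criterion]
        if not LIKERT_MIN <= value <= LIKERT_MAX:
            raise ValueError(f"{criterion!r} score {value} is outside "
                             f"{LIKERT_MIN}-{LIKERT_MAX}")
    average = mean(scores[c] for c in CRITERIA)
    return average, average >= threshold
```

For example, ratings of 4, 3, 4, and 3 give a mean of 3.5, so the indicator would be highlighted, while ratings of 3, 3, 4, and 3 give a mean of 3.25 and the indicator would not be highlighted.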

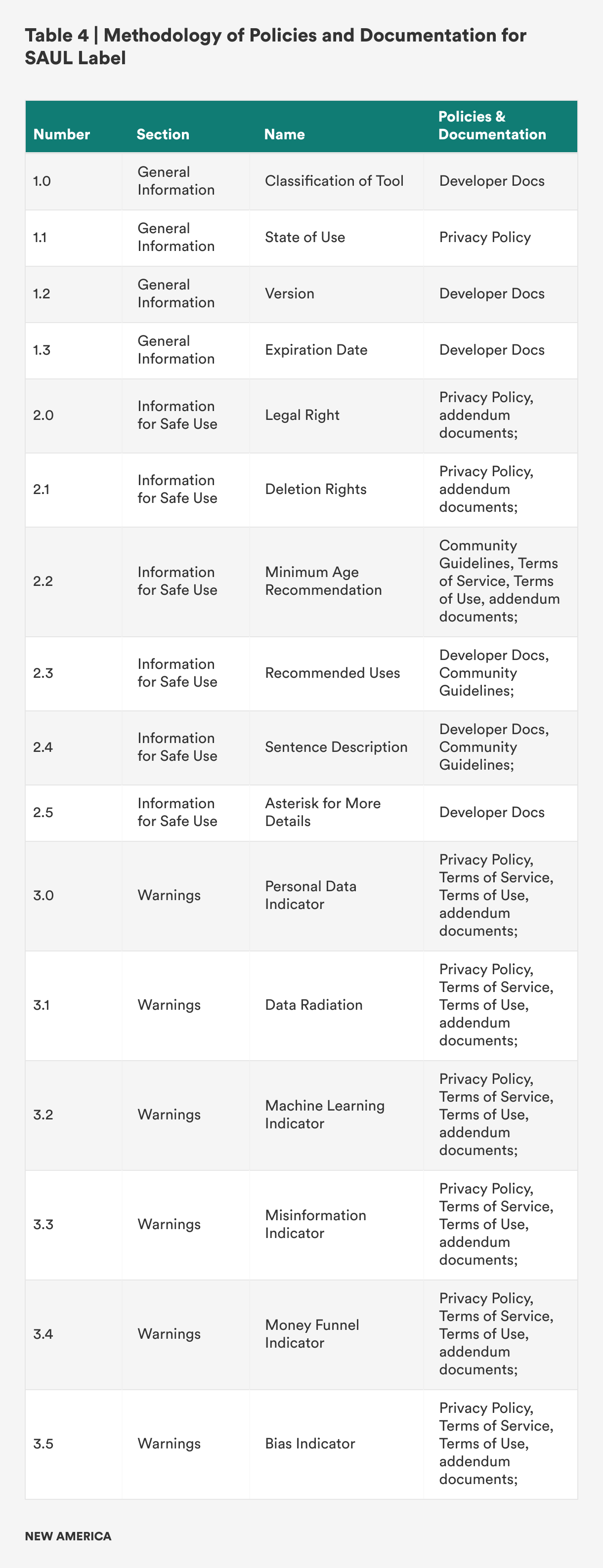

Methodology of Policies and Documentation for SAUL Label

The methodology for developing the three sections of the SAUL label is shown in Table 4, which maps each section to its associated icon tool and documentation.

Glossary of Key Terms

- Algorithmic Decision Systems (ADS): Systems that use algorithms to automate decisions and predictions across a range of applications, such as credit score generation, rideshare applications, and supply and demand forecasting.

- Artificial Intelligence (AI): The science of engineering machines with human-like intelligence.

- Cloaked Consent: The act of hiding or obscuring choice on digital platforms through manipulative design.

- Deep Learning: A subset of machine learning methods that uses neural networks with multiple layers.

- Explainable Artificial Intelligence (XAI): A subset of the AI field that seeks to use tools and frameworks to help users understand and interpret predictions made by machine learning models. It is also referred to as explainability.

- Generative Artificial Intelligence (Generative AI or Gen AI): A form of AI that produces seemingly new and synthetic content such as text, images, audio, and videos.

- Generative Adversarial Network (GAN): A form of AI that learns to generate images by training two networks in competition with each other: a generator, which produces synthetic images, and a discriminator, which learns to distinguish synthetic images from real ones. As training proceeds, the generator learns to produce increasingly realistic images.

- Generative Pretrained Transformer (GPT): A form of AI that is pretrained on large amounts of human-written text and generates new text in response to a user’s prompts, such as through a chat interface.

- Natural Language Generation (NLG): A subfield of natural language processing focused on how machines generate human language.

- Natural Language Processing (NLP): A subfield of AI focused on how machines can process human language.

- Natural Language Understanding (NLU): A subfield of natural language processing focused on how machines understand human language.

- AI Transparency: A subfield of ethical AI concerned with making AI-based systems open and understandable.
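The competition described in the Generative Adversarial Network (GAN) entry above is usually written as a two-player minimax objective. In the standard formulation, sketched here for illustration, $D(x)$ is the discriminator’s estimated probability that $x$ is a real image, $G(z)$ is the image the generator produces from random noise $z$, $p_{\text{data}}$ is the distribution of real images, and $p_z$ is the noise distribution. These symbols are introduced only for this illustration.

```latex
\min_G \max_D \; V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big]
  + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
```

The discriminator raises $V$ by assigning high probability to real images and low probability to generated ones; the generator lowers $V$ by producing images that the discriminator mistakes for real ones.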