Table of Contents

- Introduction

- Brain-Computer Interfaces: Fundamentals and Applications in Commercial and Medical Contexts

- Privacy and Security Challenges

- Current Legislative Landscape and Proposed Regulatory Approaches

- Proposed Approaches for Data Protection

- The Path Forward: Balancing Security, Ethics, and Regulation

Privacy and Security Challenges

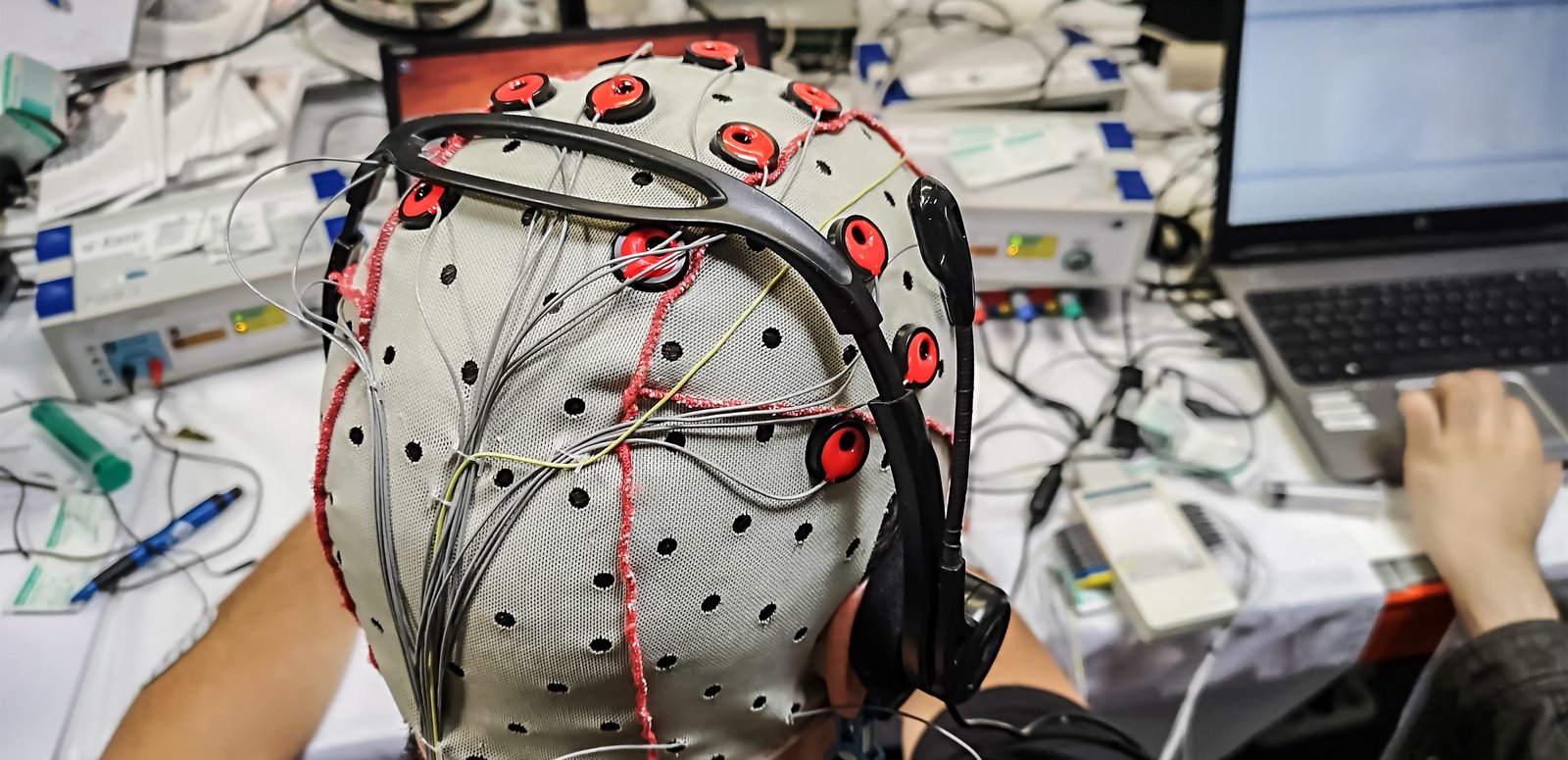

Brain-computer interfaces (BCIs) can access neural signals directly from an individual’s brain and potentially translate these signals into data without that person’s explicit consent. This capability poses significant privacy concerns, primarily because the extracted data can reveal intimate information about a person’s thoughts, emotions, and subconscious states. As noted, BCI use is especially concerning in contexts such as the workplace or consumer spaces, where BCIs might be employed under less stringent ethical standards. For example, China has deployed BCI technology to detect changes in the emotional states of employees on the production line.[1]

Privacy risks are exacerbated by the depth of information available from neural data, which could be more revealing and personal than any data collected through conventional means. Marketers might exploit emotional or cognitive information to tailor advertisements in real time, manipulating consumer behavior more effectively and intrusively than ever before. In an article published by the audience measurement company Nielsen, the authors noted that by “using electroencephalography, we’re able to tell, second by second, what parts of the commercial elicit a response from the viewer, including what in particular is catching the attention and activating the memory of the viewer.”[2]

This level of insight into a viewer’s brain activity has troubling potential for manipulation, behavioral conditioning, and the erosion of personal autonomy. Unlike traditional digital marketing, which relies on engagement tracking and browsing behavior, BCIs provide direct access to subconscious cognitive and emotional responses, creating an entirely new frontier for behavioral targeting. Advertisers, political strategists, and technology companies could leverage this data to develop hyper-personalized content that not only responds to user preferences but actively shapes them over time.

The ability to analyze and exploit neural activity introduces significant ethical concerns. If companies can detect which types of content trigger emotional responses such as excitement, fear, or trust, they can optimize not just ad placement but the neurological impact of the content itself. This could lead to a feedback loop in which users are unknowingly conditioned to respond more strongly to specific emotional triggers, making them more susceptible to targeted persuasion, compulsive engagement, and even ideological manipulation.

Beyond commercial applications, the potential for coercion and psychological influence through neural data analysis presents broader societal risks. Governments, interest groups, and political campaigns could use real-time neural feedback to refine messaging strategies that subtly nudge individuals toward specific attitudes or beliefs. The ability to prime emotional responses before conscious decision-making occurs could be exploited to influence voting behavior, shape public opinion, and reinforce ideological divides, all while bypassing traditional forms of cognitive resistance.

The lack of regulatory safeguards surrounding this technology makes these risks even more urgent. Without strict legal protections, there are no clear restrictions preventing companies from storing, analyzing, or selling neural response data, nor are there established guidelines limiting how deeply artificial intelligence (AI) models can interpret and manipulate cognitive states. If left unchecked, neuro-targeting could become one of the most invasive forms of psychological influence ever deployed in digital spaces, allowing corporations and institutions to alter consumer behavior, political preferences, and emotional states without users ever realizing the extent of external influence.

Cybersecurity Threats

BCI technologies face unique cybersecurity threats, as stolen neural data could not only compromise personal privacy but also exacerbate risks related to national security, economic stability, and digital safety.[3] Neural data is a prime target for cyberattacks, as it provides valuable insights into an individual’s mental and emotional state, cognitive functions, and decision-making processes. If hacked or misused, this data could be exploited in ways that extend far beyond individual harm, affecting government operations, financial markets, and public health systems.

From a national security perspective, adversarial nations or criminal organizations could use stolen neural data to manipulate individuals in sensitive government, military, or corporate positions. The ability to map cognitive patterns, detect psychological weaknesses, or influence neural responses could be exploited for blackmail, psychological warfare, and even cognitive hacking, where external actors attempt to subtly alter thought patterns or decision-making processes over time.

The economic risks are also profound. BCI-generated insights could become a highly valuable commodity, leading to an underground market where businesses and malicious entities might trade in stolen neural profiles. The unauthorized use of such data could fuel insider trading, corporate espionage, and fraudulent financial activity, as businesses or investors gain access to real-time cognitive responses to market trends, corporate strategies, or high-stakes negotiations.

From a public health and safety perspective, compromised BCI systems could pose severe risks for medical patients relying on neurotechnology for essential functions, such as prosthetic control, communication assistance, or cognitive therapy. A cyberattack targeting neural implants or cloud-based BCI platforms could disable medical functions, manipulate cognitive states, or even induce distressing neurological effects. Such vulnerabilities raise urgent questions about the need for stronger cybersecurity protections, fail-safe mechanisms, and regulatory oversight in the deployment of BCI technology.

As BCI adoption accelerates, clear cybersecurity frameworks and enforcement mechanisms must be established to protect against these growing risks. Without proactive intervention, BCI technology could become a prime vector for cyberattacks that threaten not only individual users but also global stability in security, economic, and public health domains.

Neuronal Flooding and Neuronal Scanning Cyberattacks

While BCI technology has demonstrated immense potential in medical applications, such as neurostimulation for treating Parkinson’s disease, epilepsy, and depression, these same advancements could also be exploited for harm. Researchers have studied various neuro-attacks that could manipulate brain activity, cognitive functions, and even emotional states, indicating that the very mechanisms designed to restore function could also be used to disrupt it.

One study explored two types of attacks on neural systems: neuronal flooding (FLO) and neuronal scanning (SCA). The FLO attack overloads a specific group of neurons all at once, while the SCA attack targets neurons individually, one after another, to disrupt normal activity.[4] The findings highlight the dual-use nature of BCI technology: while BCIs can improve quality of life for patients with neurological disorders, adversaries could theoretically exploit these same systems to mimic or induce the symptoms of neurological diseases. In a later study, researchers proposed that similar attacks could theoretically replicate the effects of neurodegenerative diseases like Parkinson’s and Alzheimer’s. They suggested that attackers who gained access to BCI-connected neurostimulation systems could artificially trigger tremors, memory loss, or cognitive impairment, raising ethical and security concerns over the potential for targeted neurological sabotage.[5]
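
The timing difference between the two attack types can be illustrated with a toy simulation. The sketch below is not the model used in the cited studies. It assumes a simplified leaky integrate-and-fire neuron, and every function name and parameter value (`flooding_schedule`, `scanning_schedule`, `simulate`, `leak`, `drive`, `boost`, `threshold`) is hypothetical and chosen only for illustration. It further assumes that a scanning attack visits one neuron per time step, whereas a flooding attack hits all of its targets in the same step.

```python
from typing import List, Set


def flooding_schedule(targets: List[int], attack_step: int, n_steps: int) -> List[Set[int]]:
    """FLO-style schedule (assumed): all targets are stimulated in one time step."""
    schedule: List[Set[int]] = [set() for _ in range(n_steps)]
    schedule[attack_step] = set(targets)
    return schedule


def scanning_schedule(targets: List[int], start_step: int, n_steps: int) -> List[Set[int]]:
    """SCA-style schedule (assumed): one target per time step, in list order."""
    schedule: List[Set[int]] = [set() for _ in range(n_steps)]
    for offset, neuron in enumerate(targets):
        step = start_step + offset
        if step < n_steps:
            schedule[step].add(neuron)
    return schedule


def simulate(n_neurons: int, schedule: List[Set[int]], leak: float = 0.9,
             drive: float = 0.05, boost: float = 1.0, threshold: float = 1.0) -> List[int]:
    """Toy leaky integrate-and-fire model; returns the number of spikes per step.

    With the default values the baseline voltage settles near 0.5, below the
    threshold, so a neuron only fires in a step where it is externally stimulated.
    """
    voltage = [0.0] * n_neurons
    spikes_per_step: List[int] = []
    for stimulated in schedule:
        fired = 0
        for i in range(n_neurons):
            extra = boost if i in stimulated else 0.0
            voltage[i] = leak * voltage[i] + drive + extra
            if voltage[i] >= threshold:
                fired += 1
                voltage[i] = 0.0  # reset after a spike
        spikes_per_step.append(fired)
    return spikes_per_step


targets = [0, 1, 2, 3]
print(simulate(6, flooding_schedule(targets, 2, 8)))  # [0, 0, 4, 0, 0, 0, 0, 0]
print(simulate(6, scanning_schedule(targets, 2, 8)))  # [0, 0, 1, 1, 1, 1, 0, 0]
```

In this toy setting, flooding produces a single burst of simultaneous spikes, while scanning spreads the same number of forced spikes across consecutive steps. The sketch says nothing about the clinical effects discussed in the studies.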

Beyond the medical field, this dual-use risk extends to other BCI-integrated systems, such as advertising, smart home control, and military applications. The ability to track, interpret, and influence neural responses is already being leveraged to enhance human-computer interaction, optimize user engagement, and automate cognitive decision-making. However, these same advancements also expand the attack surface for adversaries looking to manipulate thoughts, behaviors, and actions.

This follows a broader pattern seen in cyber warfare and information operations, where innovations originally developed for commercial or personal convenience, including internet of things (IoT) devices, social media algorithms, and biometric authentication systems, have later been exploited for misinformation campaigns, cyberattacks, and digital surveillance. If BCI systems become widely adopted for decision-making, productivity enhancement, or communication, they may become new targets for cyber-enabled influence operations, potentially allowing attackers to introduce false sensory inputs, alter emotional responses, or distort an individual’s perception of reality.

Citations

1. Phillip Tracy, “China Is Using Brain-Scanning Hats to Track Workers’ Emotions,” Daily Dot, April 30, 2018.

2. Michael Smith and Carl Marci, “From Theory to Common Practice: Consumer Neuroscience Goes Mainstream,” Nielsen, July 2016.

3. Sergio López Bernal, Alberto Huertas Celdrán, and Gregorio Martínez Pérez, “Eight Reasons to Prioritize Brain-Computer Interface Cybersecurity,” Communications of the Association for Computing Machinery 66, no. 4 (April 2023): 68–78.

4. Sundararajan Karthikeyan, “Cyberattacks on Miniature Brain Implants to Disrupt Spontaneous Neural Signaling,” IEEE Consumer Electronics Magazine 9, no. 6 (November 2020): 35–41.

5. Sergio López Bernal, Alberto Huertas Celdrán, and Gregorio Martínez Pérez, “Security in Brain-Computer Interfaces: State-of-the-Art, Opportunities, and Challenges,” Association for Computing Machinery Computing Surveys 54, no. 1 (January 2021): 1–35.