The Evolution of Data Centers

A Brief History

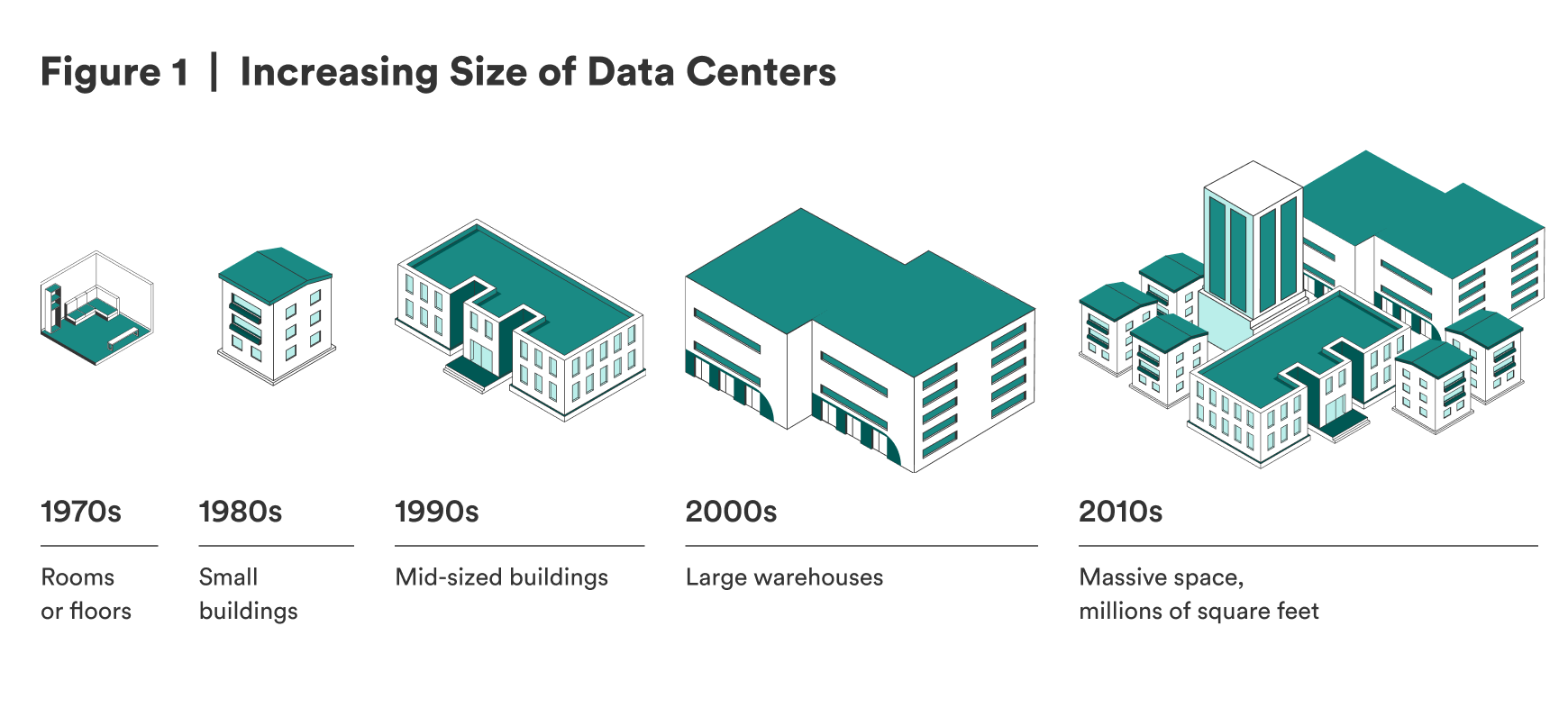

Data centers trace their origins to the mainframes of the 1950s and 1960s. Mainframes were single, isolated supercomputers that processed data and executed complex calculations. They were not connected to networks until the 1990s, when multiple microprocessor computers, later known as servers, began replacing them.1 The modern data center is a physical facility that houses servers, networking equipment, storage devices, redundant power, and cooling infrastructure to store and process large amounts of data and perform computations. Data centers are critical information technology (IT) infrastructure because they store, distribute, and interpret data that is foundational to organizations’ day-to-day operations.2 As data centers grow more complex with evolving technologies, operators deploy smart control systems and management software to optimize performance and energy efficiency.3 Figure 1 illustrates the growing size of data centers, which helps convey this increasing complexity.

Data Centers

The Telecommunications Industry Association (TIA) classifies data centers into four tiers based on design elements that affect resiliency, including the center’s architecture and topology, environmental design, power and cooling systems, cabling systems, redundancy, and safety and physical security. Tier 4 data centers are the most resilient and Tier 1 centers the least resilient, as shown in Figure 2.4

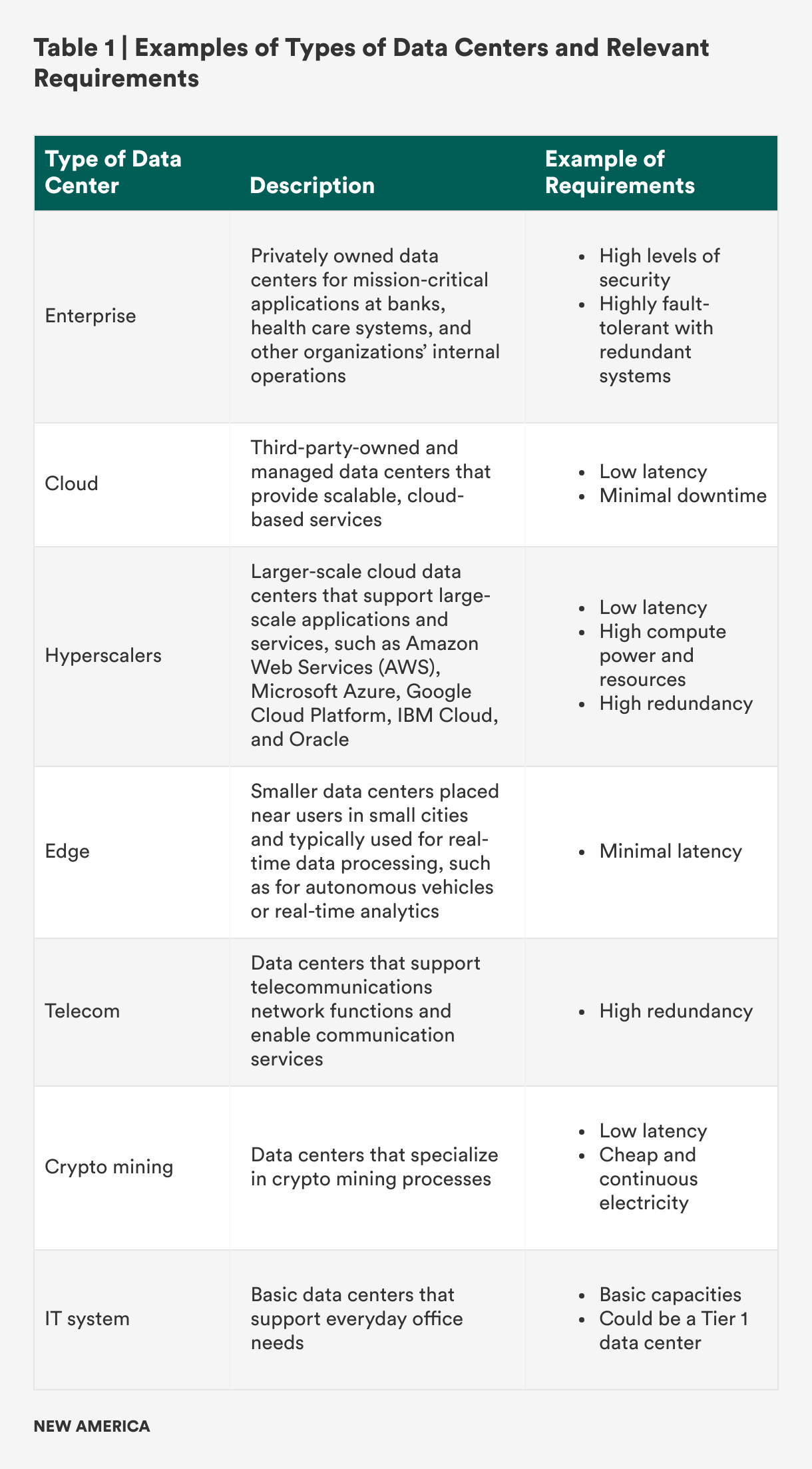

In addition to the tier system, latency also factors into data center performance. Latency refers to the time it takes for a data center to receive and process a user request. Low latency means less delay before the data center responds, which signifies higher performance.5 Although a Tier 4 data center with the lowest possible latency may sound like the goal for every facility, not all data centers require this level of capability, because their purposes and critical requirements vary. To highlight this point, Table 1 provides a high-level summary of different types of data centers and a sample set of critical requirements.6

Given limited resources and the tradeoff between productivity and security, data centers prioritize different requirements in planning, construction, and operation, depending on their main purpose. Supporting AI is an emerging purpose for data centers. While many of the related requirements remain unknown, experts believe that AI data centers require high efficiency, substantial electrical power, high compute power, and low latency.7

AI Data Centers

An AI data center is commonly defined as an emerging type of data center built to support the high computational requirements of AI workloads. AI data centers can be divided into two subcategories: training AI data centers and inference AI data centers.8 Training AI data centers process large amounts of data to train AI and machine learning models, while inference AI data centers deliver AI-driven insights and support the deployment of trained models in applications for end users.9

Like traditional data centers, AI data centers have five major components: (1) compute resources, (2) a data storage system, (3) network infrastructure, (4) power or energy capability, and (5) a cooling system. In the AI context, however, all five components must deliver high performance and productivity.10 (Table A1 in the appendix summarizes the differences between AI and traditional data centers across these five major components.) Of the five, this report focuses on compute resources, because differences in compute hardware have distinct impacts on AI data center cybersecurity.

Traditional data centers typically use central processing units (CPUs), which rely on logic circuitry to process data and execute instructions. CPUs can have multiple cores that support many types of software, but they are designed primarily for sequential processing and cannot perform the massive number of simultaneous operations that AI workloads demand. CPUs also do not have enough memory to support the workloads of AI data centers.11 Therefore, AI data centers use more powerful compute resources:

- Graphics processing units (GPUs) perform many operations in parallel. They are often used in AI training data centers and sometimes in other types of large-scale data centers.

- Field-programmable gate arrays (FPGAs) and application-specific integrated circuits (ASICs) can be customized to efficiently process AI workloads.12

- Google’s tensor processing units (TPUs) are a type of ASIC that optimizes performance for specific frameworks and effectively supports deep learning.

A key tradeoff of more powerful compute resources, such as GPUs, is that they can require much more power and generate more heat than CPUs.13 In addition, while CPUs and the more powerful hardware options face some of the same cyber threats, such as side-channel attacks, GPUs are vulnerable to additional threats, which are detailed in the AI Data Center Threats section.

Beyond the five major components, other considerations, such as facility location, also matter. As of April 2024, about half of the more than 2,500 data centers in the United States were clustered in Northern Virginia, Northern California, and Dallas, Texas, to minimize latency.14 Yet new AI data centers, especially training facilities, are now being built in more remote areas, such as Indiana, Iowa, and Wyoming, to be closer to power plants and farther from cities because of concerns about straining power grids.15 AI inference data centers can be located closer to cities and users: Cerebras is planning to build them in Santa Clara, California; Atlanta, Georgia; and Montreal, Canada.16 The location of AI data centers, which often depends on latency and power needs, can also increase risks, especially when they are built in countries with cheap energy and land, such as those in Southeast Asia.17 This risk is discussed further in the Changing World Threats section.

Given that AI data centers require the same five components as traditional ones, the two types of centers face similar cybersecurity threats. However, AI data centers face unique or increased threats due to different hardware requirements and location. Additional threats from evolving technologies and changing geopolitics also heighten security requirements.

Citations

1. “A Brief History of Data Centers,” Digital Realty, accessed June 14, 2025, source.

2. “What Is a Data Center?,” Zscaler, accessed July 14, 2025, source.

3. Emil Sayegh, “The Billion-Dollar AI Gamble: Data Centers as the New High-Stakes Game,” Forbes, September 30, 2024, source.

4. “What Are Data Center Tiers?,” Digital Realty, accessed June 14, 2025, source; “What Is a Data Center?,” Amazon Web Services, accessed June 14, 2025, source.

5. “What Is Low Latency?,” Cisco, accessed June 15, 2025, source.

6. Sayegh, “The Billion-Dollar AI Gamble,” source; Melissa Palmer, “Hyperscalers: The Complete Guide to What, Why and How,” SolarWinds (blog), January 24, 2023, source.

7. Srivathsan et al., “AI Power,” source; Sayegh, “The Billion-Dollar AI Gamble,” source.

8. “AI Data Center,” Sunbird, accessed June 15, 2025, source.

9. Sayegh, “The Billion-Dollar AI Gamble,” source.

10. “What Is an AI Data Centre, and How Does It Work?,” Macquarie Data Centres, July 15, 2024, source.

11. Jacob Roundy, “How Do CPU, GPU, and DPU Differ from One Another?,” TechTarget, February 5, 2025, source.

12. “How Are AI Data Centers Changing Infrastructure?,” TRG Datacenters, accessed June 15, 2025, source.

13. Brian Venturo, “The Redesign of the Data Center Has Already Started. Here’s What It Looks Like,” CoreWeave, March 19, 2024, source.

14. Mary Zhang, “United States Data Centers: Top 10 Locations in the USA,” Dgtl Infra, April 11, 2024, source.

15. Srivathsan et al., “AI Power,” source.

16. David Chernicoff and Matt Vincent, “Cerebras Unveils Six Data Centers to Meet Accelerating Demand for AI Inference at Scale,” Data Center Frontier, March 18, 2025, source.

17. Dylan Butts, “Malaysia Is Emerging as a Data Center Powerhouse amid Booming Demand from AI,” CNBC, June 16, 2024, source.