Cyber Threats

The different pieces of a data center—the purposes or types, the five key components, and additional considerations—influence a data center’s vulnerabilities and risks, and all need to be secured for a cyber-secure AI data center. This includes the requirements of AI workloads: the models, weights, and training data.

Additionally, while not a foundational requirement, increasingly complex and large data centers utilize data center infrastructure management (DCIM) software to efficiently maintain and manage the facilities. DCIMs will be useful for AI data centers in the following capacities:1

- Intelligent capacity search enables quickly finding which device or rack has the capacity for additional AI deployment.

- Predictive analysis predicts the impact of AI workloads on rack capacity and power usage.

- Automatic server power budgeting calculates the required power for servers.

- Dynamic single-line power diagrams depict power capacity and load to support redundancy planning and protect against breaker trips.
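To make the capacity search and power budgeting capabilities above concrete, the following is a minimal sketch rather than any vendor's actual DCIM logic. The `Rack` fields, the 0.8 derating factor, and the function names are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Rack:
    name: str
    power_capacity_kw: float  # rated power the rack can draw
    power_load_kw: float      # power already budgeted to installed devices
    free_rack_units: int      # empty U-slots left in the rack

    @property
    def power_headroom_kw(self) -> float:
        return self.power_capacity_kw - self.power_load_kw


def server_power_budget_kw(nameplate_kw: float, derate: float = 0.8) -> float:
    """Budget a server at a fraction of its nameplate power.

    The 0.8 derating factor is an assumed policy value, not a standard.
    """
    return nameplate_kw * derate


def find_racks_with_capacity(racks, needed_kw, needed_units):
    """Return racks that can host the deployment, tightest power fit first."""
    fits = [
        rack for rack in racks
        if rack.power_headroom_kw >= needed_kw
        and rack.free_rack_units >= needed_units
    ]
    return sorted(fits, key=lambda rack: rack.power_headroom_kw)


# Hypothetical inventory used only for illustration.
RACKS = [
    Rack("A1", power_capacity_kw=20.0, power_load_kw=15.0, free_rack_units=10),
    Rack("A2", power_capacity_kw=40.0, power_load_kw=10.0, free_rack_units=8),
    Rack("A3", power_capacity_kw=40.0, power_load_kw=30.0, free_rack_units=2),
]

needed_kw = server_power_budget_kw(10.0)
candidates = find_racks_with_capacity(RACKS, needed_kw, needed_units=8)
```

Here only rack `A2` qualifies: `A1` lacks power headroom and `A3` lacks free rack units. A production DCIM would also weigh cooling, network ports, and redundancy, which this sketch leaves out.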

There is also newer software designed specifically for certain hardware mostly found in AI data centers, such as Trend Vision One—Sovereign and Private Cloud (SPC) for GPUs and TensorFlow for TPUs, further emphasizing the importance of cyber-secure software and applications deployed in AI data centers.2

A Security Framework for AI Data Centers

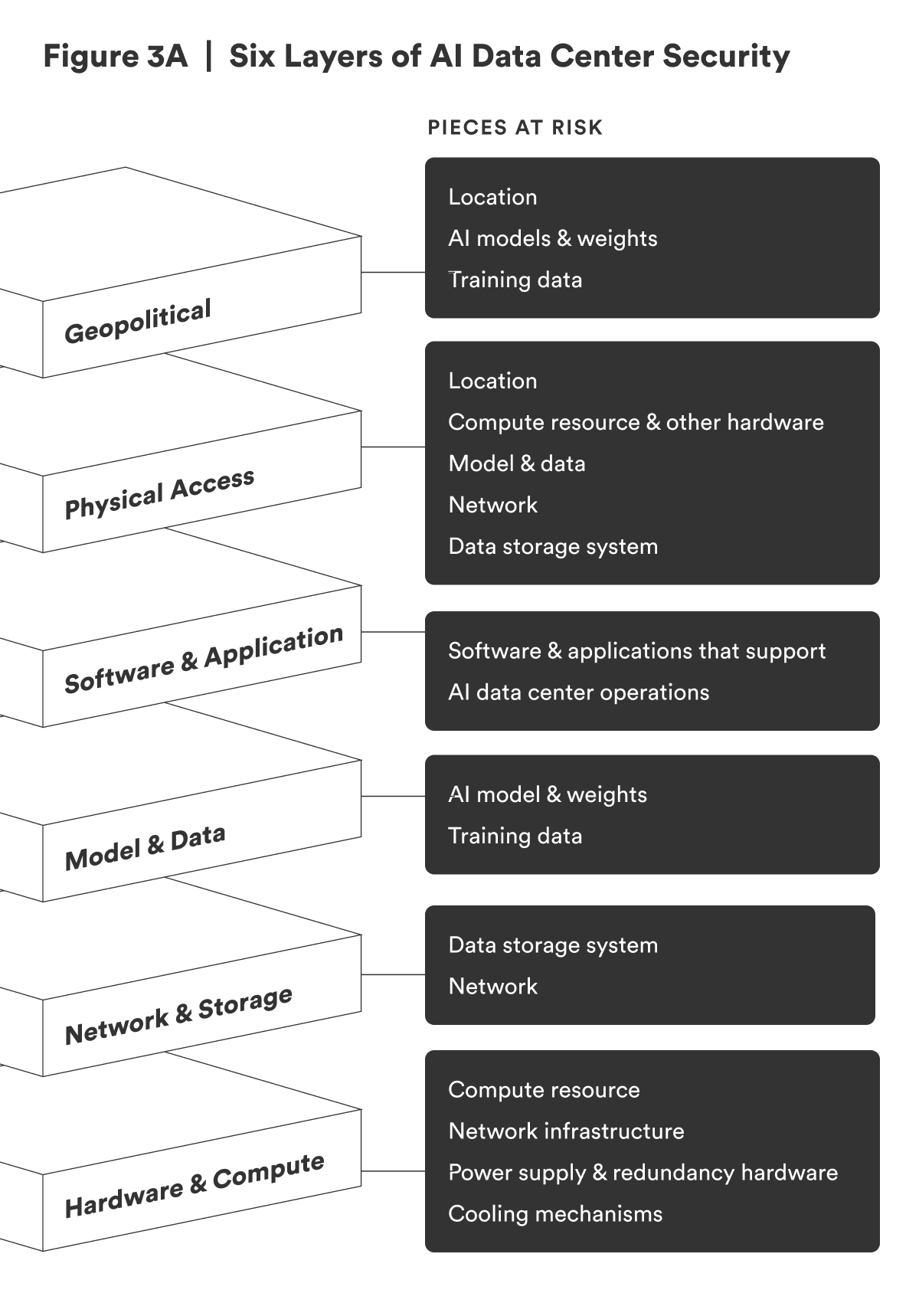

In considering all the pieces inside an AI data center, this report proposes the following framework with six layers of security: (1) hardware & compute, (2) network & storage, (3) model & data, (4) software & application, (5) physical access, and (6) geopolitical.

Some elements may require cybersecurity considerations at multiple layers depending on the threats they face. For example, training data in AI data centers requires security at the model & data layer from cyberattacks, at the physical access layer from infiltrators who physically enter the facilities to steal the data, and at the geopolitical layer from state-sponsored actors who are targeting AI training data for their own AI sovereignty. Figure 3A below maps the data center pieces at risk at each of the security layers.

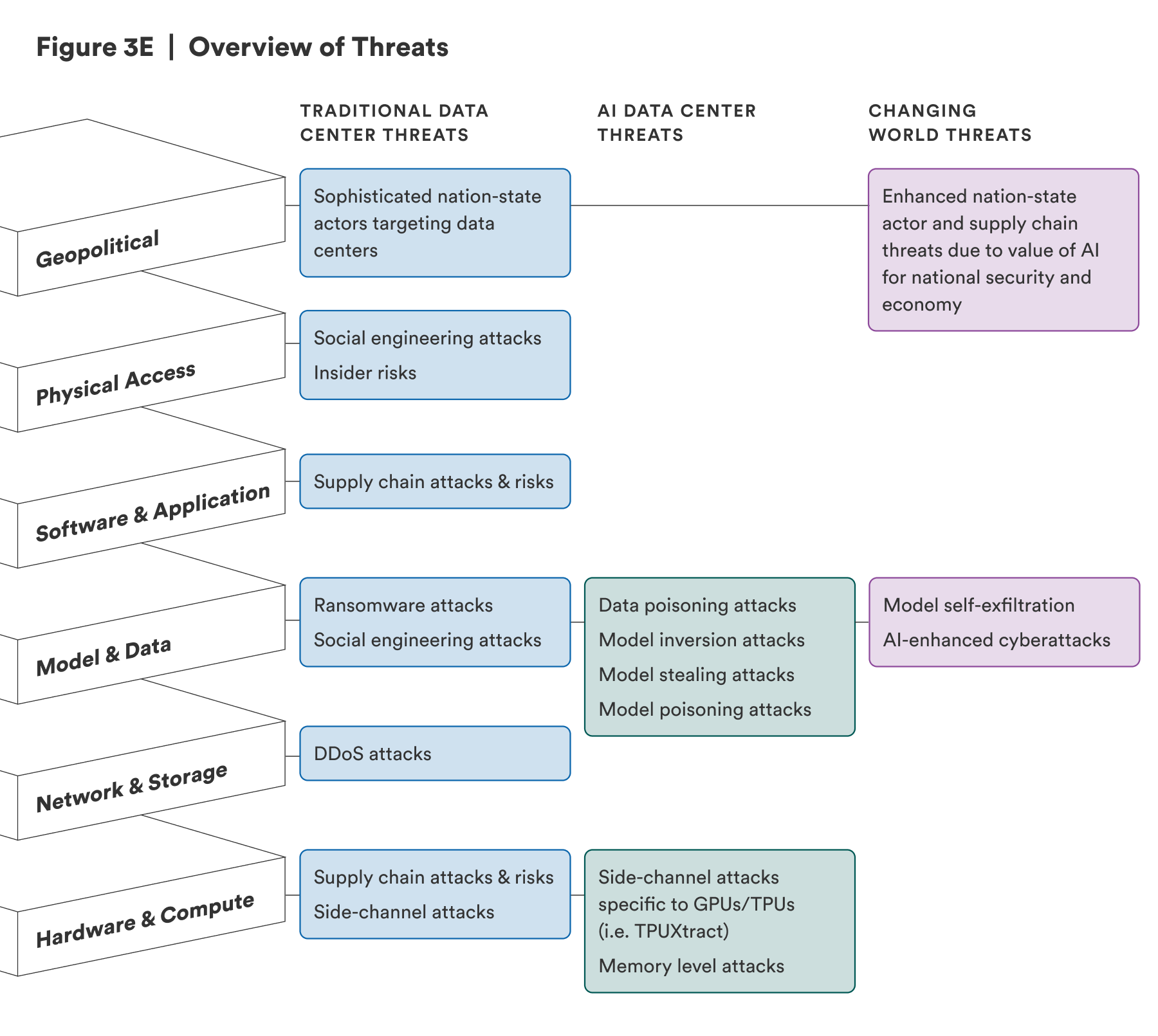

This section of the report will dive into the different threats faced by both traditional and AI data centers and map them to the six-layer framework.

Traditional Data Center Threats

Data centers are high-value targets because they can store important data, provide critical services, and run networks, applications, security, and virtual machines. As previously mentioned in Table 1, data centers support mission-critical applications at banks and health care systems, allow cloud-based services to be scalable, process data for autonomous vehicles and the Internet of Things, and enable communication services. It is no exaggeration to say that data centers are the backbone of all online services. Thus, a disruption or outage at a data center, whether caused intentionally by threat actors or unintentionally by human error or process failures, can be costly for data center operators, risking financial loss, reputation damage, regulatory penalties, and loss of customers.3 For example, in 2021, when data center outages cost companies an average of $100,000 per incident, Amazon Web Services experienced a five-hour data center outage due to a failure in network devices in the US-EAST-1 Region, costing the company $34 million in revenue.4

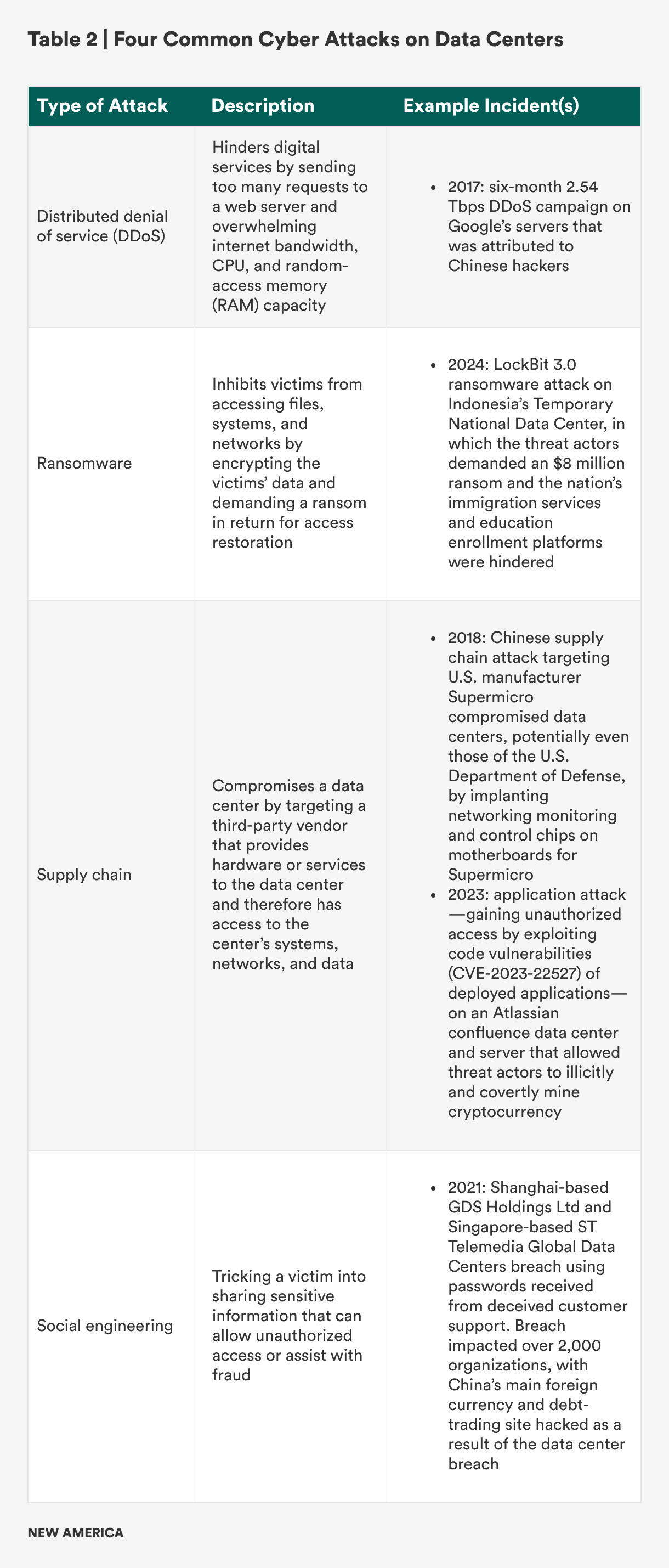

Both sophisticated nation-state actors and financially motivated cyber criminals target data centers with disruption attacks, ransomware incidents, and data breaches.5 Four common cyberattacks on data centers are listed in Table 2 along with examples of incidents.6

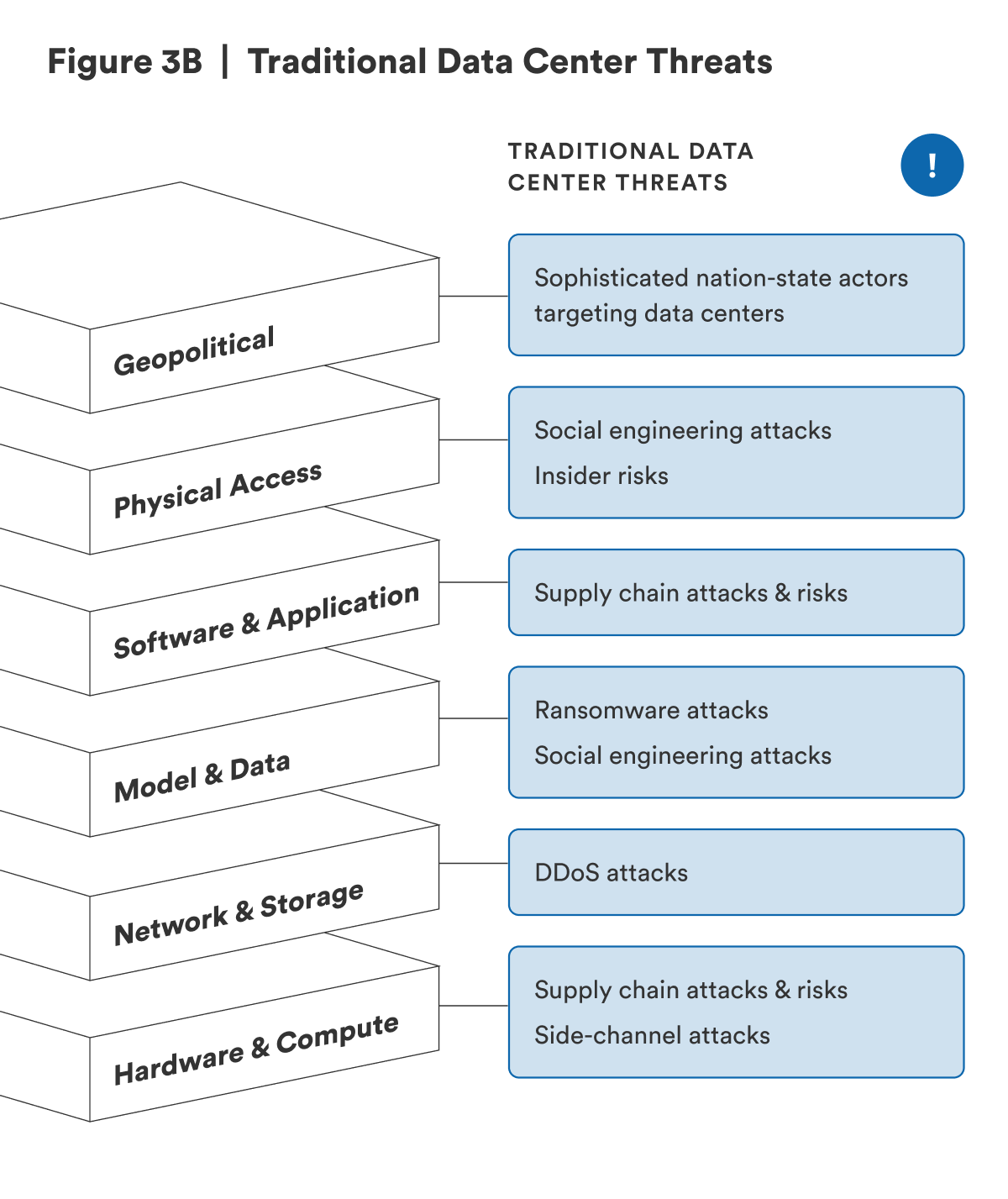

Distributed denial of service (DDoS) attacks rely on network connectivity to target and overload a system or service, making defense at the network & storage layer critical.7 A ransomware attack targets and encrypts data, highlighting the importance of cybersecurity at the model & data layer.8 A supply chain attack can make both hardware and software inside a data center vulnerable, which makes security at the hardware & compute and software & application layers crucial.9 Social engineering attacks depend on threat actors gaining some form of access to the data center, whether by calling and deceiving the target’s customer support, by manipulating employees into installing remote access Trojans (RATs), which are malware that gives hackers remote access to and control of a targeted system, or through backdoors into data center components.10 Social engineering attacks therefore necessitate security at the model & data and physical access layers. Furthermore, the 2017 DDoS campaign against Google and the 2018 Supermicro supply chain attack referenced in Table 2 involved actors in China, requiring consideration of the geopolitical layer, which is detailed further in the Changing World Threats section.

Threat actors can also combine these types of attacks in one operation. For example, in 2024, the German data center power supply company Bender experienced a ransomware attack in which hackers infiltrated its operating systems and gained unauthorized access to account, financial, and banking data.11 This incident, which affected the power and operations of a data center, was a combination of ransomware and supply chain attacks.

Beyond the four common types of cyberattack, there is another type of attack that data centers are particularly vulnerable to: side-channel attacks, which collect information from a system’s processes and execution or attempt to influence a system’s program.12 In data centers, side-channel attacks include measuring or surveilling fan power or the sounds a CPU makes, or using sensors that measure the electromagnetic field. These attacks can show threat actors CPU-level activity, the data architecture, and data usage, requiring security at the hardware & compute layer. In July 2025, global semiconductor company Advanced Micro Devices reported that four new processor vulnerabilities could allow hackers to conduct timing-based side-channel attacks, and cybersecurity company CrowdStrike labeled these weaknesses as critical threats.13
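The idea behind a timing-based side channel can be illustrated with a small software analogue. This is a sketch under stated assumptions, not a reproduction of the processor vulnerabilities described above: the secret, the function names, and the `steps` counter (a deterministic stand-in for measured time) are all hypothetical.

```python
import hmac

SECRET = b"k3y!"  # hypothetical secret held by the victim


def leaky_compare(guess: bytes, secret: bytes = SECRET):
    """Early-exit comparison.

    Returns (match, steps). `steps` counts the bytes examined and stands in
    for elapsed time, which a real attacker would have to measure with noise.
    """
    steps = 0
    if len(guess) != len(secret):
        return False, steps
    for guess_byte, secret_byte in zip(guess, secret):
        steps += 1
        if guess_byte != secret_byte:
            return False, steps
    return True, steps


def recover_secret(length: int) -> bytes:
    """Recover the secret one byte at a time using only the leaked step count."""
    known = b""
    for position in range(length):
        padding = b"\x00" * (length - position - 1)
        for candidate in range(256):
            guess = known + bytes([candidate]) + padding
            match, steps = leaky_compare(guess)
            # A correct byte lets the comparison run at least one step further.
            if match or steps > position + 1:
                known += bytes([candidate])
                break
    return known


def safe_compare(guess: bytes, secret: bytes = SECRET) -> bool:
    """Comparison whose running time does not depend on where bytes differ."""
    return hmac.compare_digest(guess, secret)


recovered = recover_secret(len(SECRET))
```

The attacker never reads the secret directly; the amount of work the comparison performs is enough to rebuild it. Constant-time primitives such as the standard library's `hmac.compare_digest` remove that signal in software, whereas the physical channels named above (fan power, sound, electromagnetic fields) call for hardware-level defenses.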

Finally, there are two specific types of risks also critical to data center security. The first is insider risk, defined by the Cybersecurity and Infrastructure Security Agency as “the potential for an insider to use their authorized access or understanding of an organization to harm that organization,” whether intentional or unintentional.14 Insider risk can be mitigated by limiting access to trusted people, and it requires cyber defense at the physical access layer. The second is supply chain risk. Beyond supply chain attacks described earlier in this section, there is an added risk because a majority of the components in a data center are developed by third-party suppliers. At the hardware layer, data centers rely on a global network of hardware suppliers, which creates risks of counterfeit and flawed hardware even without a threat actor’s interception or attack.15 At the software layer, third-party tools and applications hosted on data centers can have vulnerable code, making the selection of third-party suppliers as well as secure design of applications and code critical.16 Figure 3B below maps traditional data center cyberattacks and risks to the proposed six layers of security.
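The threat-to-layer mapping described in this section can also be written as a small lookup table. The sketch below follows the prose of this section only, and it may not match the report's figure cell for cell. The names `LAYERS`, `THREAT_LAYERS`, and the two helper functions are illustrative.

```python
LAYERS = (
    "hardware & compute",
    "network & storage",
    "model & data",
    "software & application",
    "physical access",
    "geopolitical",
)

# Layers each traditional threat is tied to in the text. The geopolitical
# layer is omitted because the text links it to specific incidents (for
# example, attacks involving state-linked actors), not to an attack type.
THREAT_LAYERS = {
    "DDoS attack": ["network & storage"],
    "ransomware attack": ["model & data"],
    "supply chain attack": ["hardware & compute", "software & application"],
    "social engineering attack": ["model & data", "physical access"],
    "side-channel attack": ["hardware & compute"],
    "insider risk": ["physical access"],
    "supply chain risk": ["hardware & compute", "software & application"],
}


def threats_for_layer(layer: str) -> list:
    """List the threats that require defenses at the given layer."""
    return [threat for threat, layers in THREAT_LAYERS.items() if layer in layers]


def layers_without_threat_types() -> list:
    """List layers that no attack type in the table maps to directly."""
    covered = {layer for layers in THREAT_LAYERS.values() for layer in layers}
    return [layer for layer in LAYERS if layer not in covered]
```

For example, `threats_for_layer("hardware & compute")` returns the supply chain attack, the side-channel attack, and supply chain risk, which is why that layer recurs throughout the discussion.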

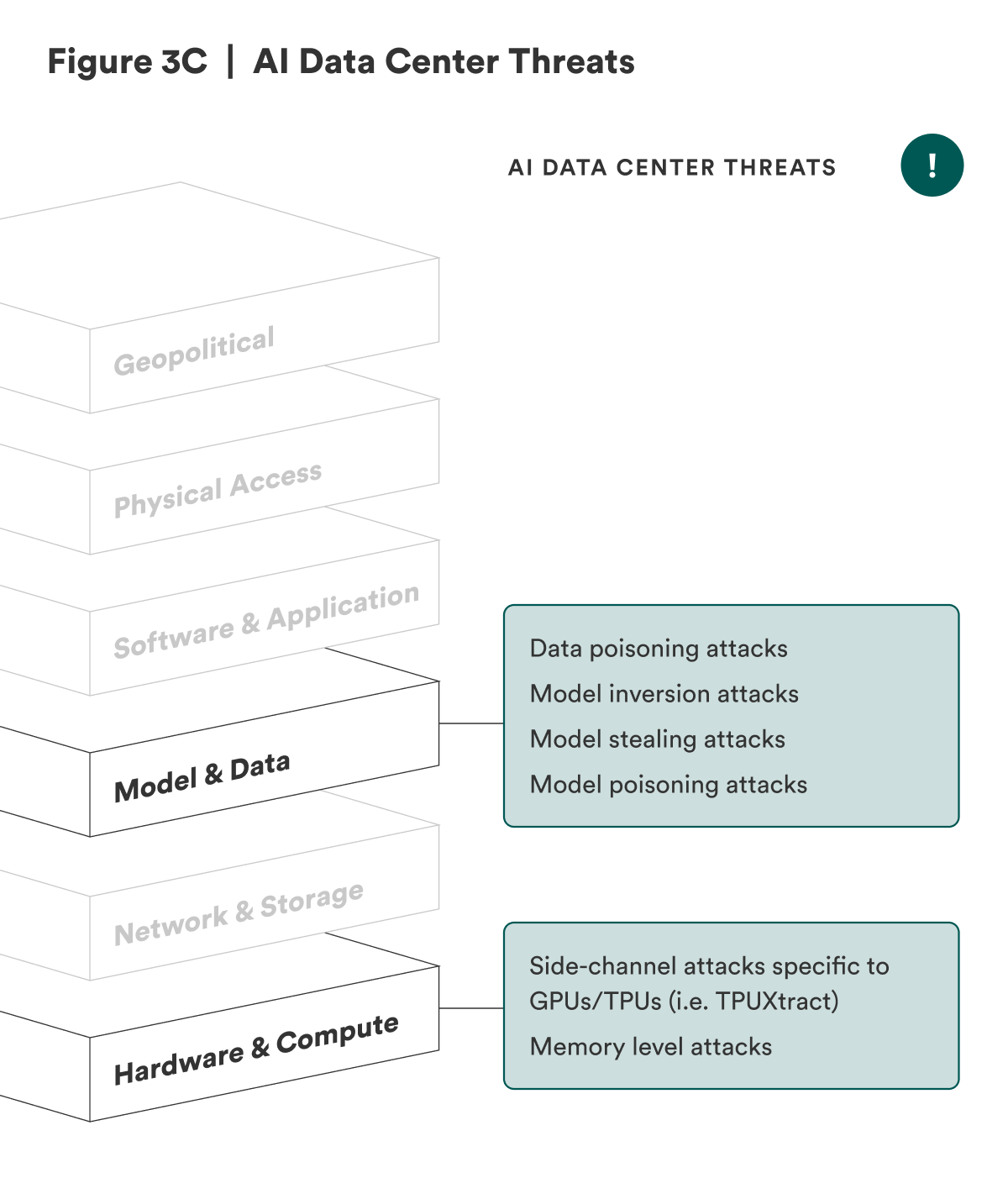

AI Data Center Threats

In addition to all the threats that traditional data centers face, AI data centers face unique threats. Specifically: (1) The hardware & compute layer needs to consider ASIC and AI-specific hardware-level attacks, such as memory-level and TPU-specific attacks, and (2) the data layer needs to expand to include model security since AI model weights and training data are in AI data centers.

At the hardware & compute layer, defending against supply chain attacks and vulnerabilities, as well as side-channel attacks, is still critical. AI data centers need trusted and secured GPUs and ASICs, which, like CPUs, rely on a global supply chain.17

Once the hardware is inside the AI data center, the sounds from GPUs can reveal information to threat actors about those GPUs, model architecture, and architecture weights.18 A common GPU side-channel attack is keystroke inference, through which hackers monitor GPU-based rendering workloads to get access to keystrokes and user inputs.19 Tim Fist, director of emerging technology at the Institute for Progress, highlighted that “GPUs are more vulnerable than CPUs to memory-level attacks.”20 GPUs do not always have sufficient memory isolation, which means that memory can be carried from one process to another and allow threat actors to get access to model weights and training data.21 There is also GPU-specific malware that can execute malicious code on a GPU’s memory and could bypass traditional CPU security tools. Notably, in January 2025, Nvidia announced that seven new vulnerabilities were found in its GPUs, with three of them being of high severity.22
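The memory isolation problem can be pictured with a toy simulation. This is not real GPU code: `ToyDeviceMemory` is a hypothetical stand-in for accelerator memory that is handed from one process to the next, and the "weights" are placeholder bytes.

```python
class ToyDeviceMemory:
    """Toy stand-in for accelerator memory reused by successive processes."""

    def __init__(self, size: int, scrub_on_free: bool):
        self._cells = bytearray(size)
        self._scrub_on_free = scrub_on_free

    def allocate(self, size: int) -> memoryview:
        # Hands back the same region without clearing it, like a reused buffer.
        return memoryview(self._cells)[:size]

    def free(self, buffer: memoryview) -> None:
        if self._scrub_on_free:
            buffer[:] = bytes(len(buffer))  # overwrite with zeros before reuse
        buffer.release()


def run_scenario(scrub_on_free: bool) -> bytes:
    """A victim process writes data, frees it, and an attacker reads the region."""
    memory = ToyDeviceMemory(16, scrub_on_free)

    victim_buffer = memory.allocate(8)
    victim_buffer[:] = b"WEIGHTS!"  # placeholder for model weights
    memory.free(victim_buffer)

    attacker_buffer = memory.allocate(8)
    leaked = bytes(attacker_buffer)
    memory.free(attacker_buffer)
    return leaked


leaked_without_scrub = run_scenario(scrub_on_free=False)
leaked_with_scrub = run_scenario(scrub_on_free=True)
```

Without scrubbing, the second process reads back the first process's bytes unchanged; with scrubbing, it sees only zeros. Real mitigations depend on the driver, the hardware, and how tenants are separated, so this sketch shows only the principle.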

GPU vulnerabilities can be found in traditional data centers because large-scale centers deploy GPUs. On the other hand, TPUs, which are also subject to the side-channel and memory-level attacks mentioned above, are uniquely designed for AI and were first deployed internally by Google in 2015.23 In late 2024, researchers disclosed a TPU-specific side-channel attack, TPUXtract. TPUXtract exploits unintentional TPU data leaks to enable a threat actor to infer an AI model’s parameters, essentially allowing AI model exfiltration and intellectual property (IP) theft.24

Given that the hardware & compute layer threats enable hackers to extract information about the AI model and weights deployed in the AI data center, the threats at the data layer can extend to the model layer. When data is exfiltrated from a traditional data center, the impacts can include the loss of sensitive data, regulatory issues, and reputational damage.25 When AI model information and IP are accessed and stolen, the impacts expand to include risking the integrity and confidentiality of the AI model. Threat actors can manipulate exfiltrated models to create vulnerabilities and bias decision-making.26 Additionally, as it becomes increasingly evident that AI will influence geopolitics, the global economy, national security, and human lives, the importance of protecting models becomes critical.27 This point will be further described in the Changing World Threats section.

Model threats include AI training data and model weight exfiltration through traditional ransomware and social engineering attacks. Furthermore, AI models face specific model-level threats:28

- Data poisoning attacks: accidentally or purposefully including incorrect data in the AI training dataset, leading to erroneously trained AI models

- Model inversion attacks: recovering training data from AI models by querying models, examining the outputs, and extracting information

- Model stealing attacks: querying AI models and using the outputs to train a replacement model that behaves like the original model

- Model poisoning attacks: modifying model parameters or architecture to create a backdoor or change the model’s behavior29
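Of the model-level threats listed above, model stealing is the easiest to show in a few lines. The sketch below assumes the simplest possible target, a linear model exposed only through a prediction function. Real models are nonlinear, and an attacker would instead train a surrogate on many query outputs, so the exact recovery shown here is a special case. All names and values are hypothetical.

```python
def make_black_box(weights, bias):
    """Return a prediction function that hides its parameters from the caller."""
    def predict(features):
        return sum(w * x for w, x in zip(weights, features)) + bias
    return predict


def steal_linear_model(predict, n_features):
    """Recover a linear model's parameters using n_features + 1 queries."""
    zero_input = [0.0] * n_features
    bias = predict(zero_input)  # the output at the origin is the bias

    weights = []
    for index in range(n_features):
        probe = zero_input.copy()
        probe[index] = 1.0
        # Moving one unit along a single feature isolates that feature's weight.
        weights.append(predict(probe) - bias)
    return weights, bias


# The attacker sees only `victim`, never the parameters passed in here.
victim = make_black_box(weights=[2.0, -1.0, 0.5], bias=3.0)
stolen_weights, stolen_bias = steal_linear_model(victim, n_features=3)
replica = make_black_box(stolen_weights, stolen_bias)
```

The replica now answers queries the same way the victim does, even though the attacker had only query access. Rate limiting and query monitoring are common ways to raise the cost of this kind of extraction, though they are not discussed in this section.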

At a high level, AI hardware-specific cyber threats and model threats expand cyber threats to AI data centers. Figure 3C below maps cyberattacks specific to AI data centers to the proposed six layers of security.

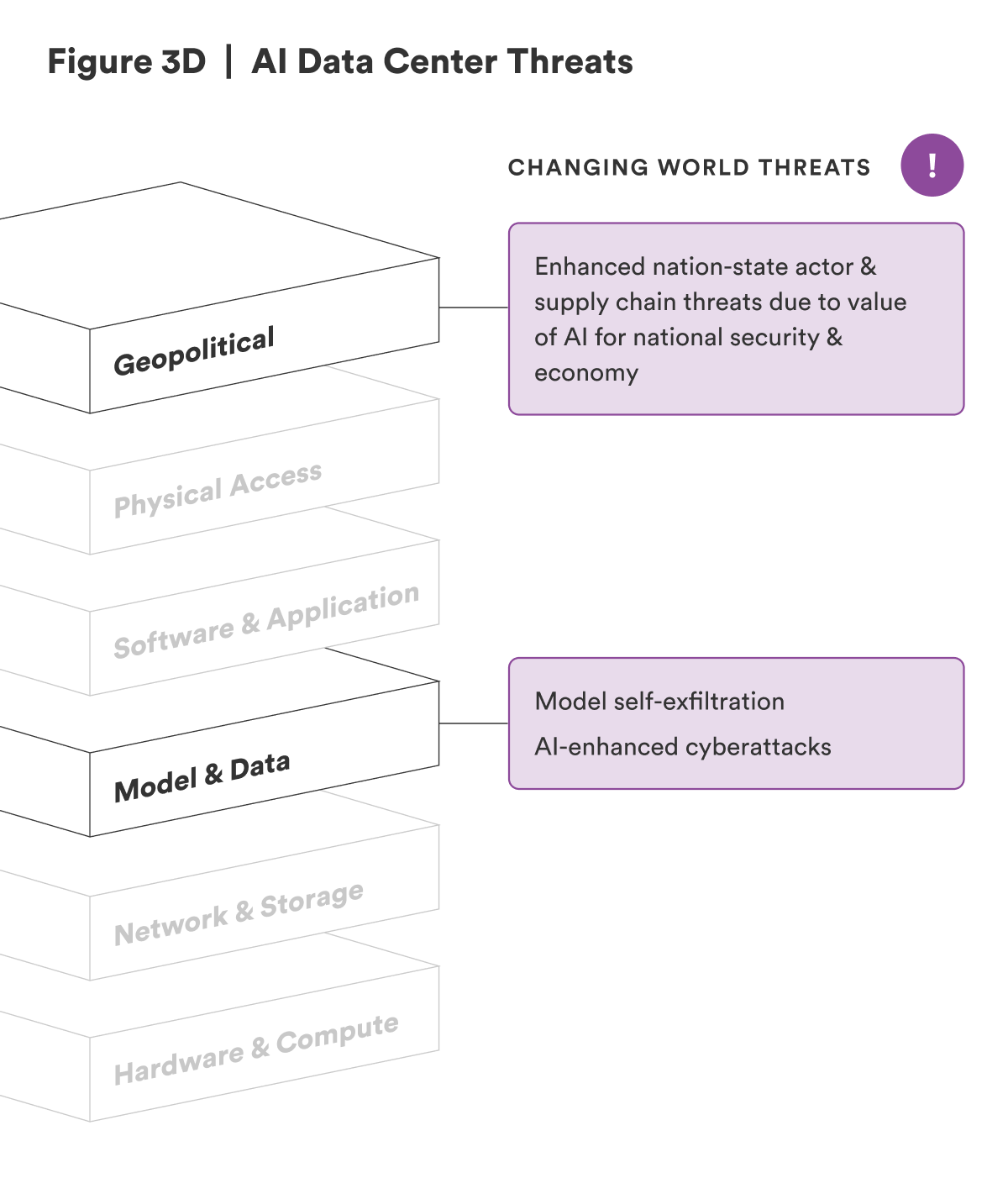

Changing World Threats

As data centers and their components change to meet the requirements of AI data centers, the world continues to transform as well, introducing new or enhanced threats for emerging AI data centers.

The first type of change is in volatile geopolitics. While traditional data centers also face geopolitical threats and are targeted by nation-state actors, these threats are heightened for AI data centers due to AI’s significance for national security and economic competitiveness. With the advent of the AI race, the concept of sovereign AI—the ability of a nation to develop, use, and govern its own AI models and related infrastructure—is on the rise, and many believe that sovereign AI is crucial for national security and economic competitiveness.30

In April 2025, the U.S. Department of Defense highlighted that the Joint Staff is implementing AI to improve military operations, to improve a commander’s decision-making and responsiveness, and to streamline processes.31 The potential for AI technologies to help counter threats across all sectors, including critical energy infrastructure, will only embed AI deeper into critical infrastructure.32 In terms of economic competitiveness, technological innovation results in high-level economic improvement, and with AI specifically, automation of routine tasks and AI-supported creative and technical work are predicted to further innovation, allow for strategic tasks, and improve a nation’s economy.33

These uses for AI indicate that if actors stole AI models, weights, or training data, sensitive data related to the military, economy, critical infrastructure, and more would be leaked. Additionally, stolen AI models can help hackers create deepfakes or convincing phishing emails for enhanced psychological warfare and disinformation campaigns, election interference, cybercrime and financial fraud, and critical infrastructure attacks.34

As AI becomes a key component of national security and economic competitiveness, AI data centers become key assets to protect from foreign adversaries, especially from sophisticated cyber threat actors sponsored by Russia, China, Iran, and North Korea. Nation-state threat actors are well resourced and sophisticated, posing a serious threat to even the biggest U.S. companies and critical industries, as CrowdStrike’s chief security officer Shawn Henry stated in 2023.35 For example, the Chinese state-sponsored hacking group Salt Typhoon successfully gained access to an unprecedented amount of information from the largest U.S. telecommunications companies and accessed information on high-value targets like Donald Trump and JD Vance.36

Unfortunately, like most businesses and companies, AI data centers’ commercial operators are typically not equipped to defend against such sophisticated operations. In 2024, OpenAI emphasized the importance of increasing AI data center and infrastructure security.37

Even before an AI data center is operational, there is potential for sabotage attempts, given that Chinese companies exclusively manufacture many AI data center components, such as most transformer substations critical for power systems. This means that Chinese companies could install backdoors into the hardware components. In addition, most AI-specific GPUs are made in Taiwan, which is threatened by China as part of a larger national sovereignty debate. Historically, Taiwan Semiconductor Manufacturing Company (TSMC) technologies and products have been unlawfully transferred to mainland China. There are also concerns and a strong suspicion that the Chinese Communist Party (CCP) has infiltrated TSMC and U.S. labs with spies.38

Once an AI data center is operational, geopolitical threats continue as AI data centers are vulnerable to state-sponsored disruption and exfiltration attacks due to an insufficient level of cybersecurity.39 For example, Russia has sophisticated cyber capabilities to infiltrate AI data centers, steal models, and potentially run a model in its own infrastructure.40

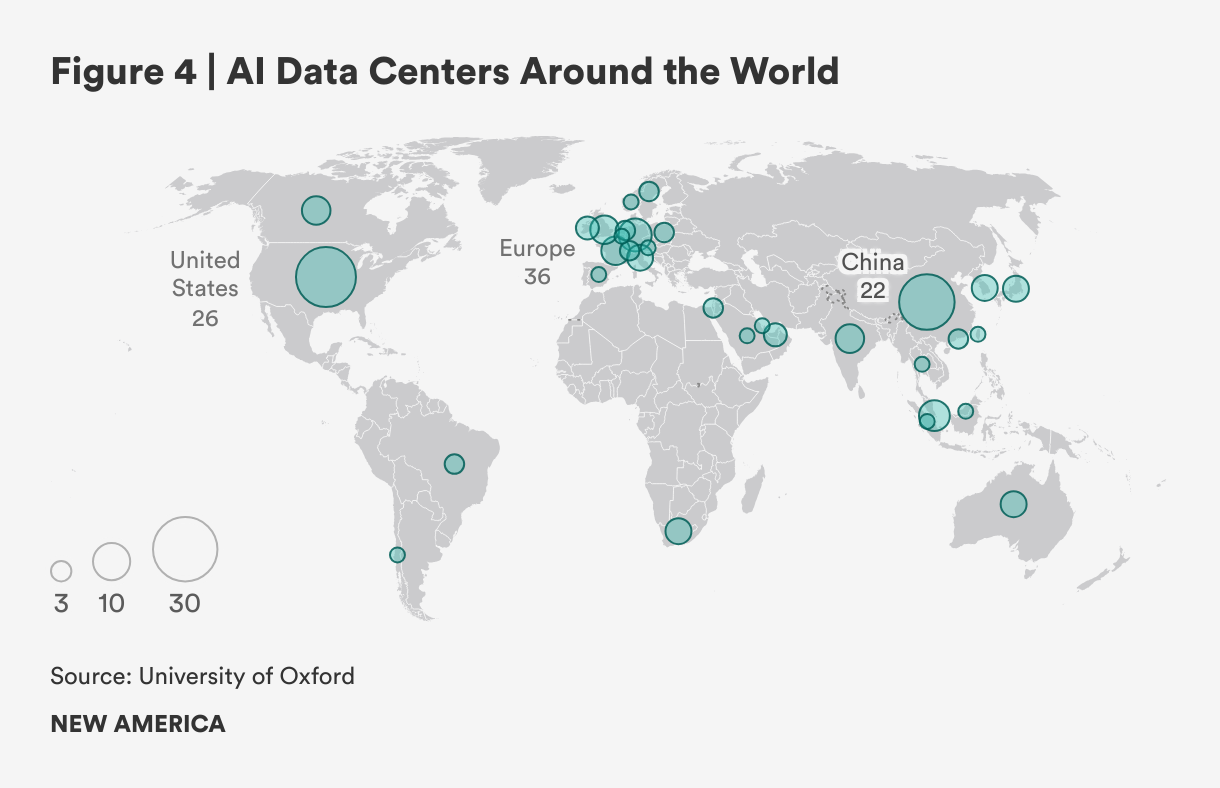

Despite the potential state-sponsored targeting of AI data centers, companies and nations are risking increased threats by building AI data centers abroad and joint centers shared with other countries. Figure 4 below shows the number of AI data centers in different countries, and also reveals that Asia has the most.41 In May 2025, the United States and the United Arab Emirates (UAE) announced a partnership to build a data center in Abu Dhabi, which will be the largest AI data center outside the United States.42 Concerningly, the Persian Gulf is a part of China’s Digital Silk Road 2.0, and the UAE has adopted Chinese 5G technology and city-wide surveillance programs, potentially giving the CCP access to the AI data center. Companies also choose to build AI data centers abroad in locations that have cheap energy and land, such as Malaysia.43

The second type of change is evolving technologies. As previously mentioned, the capabilities that AI data centers enable have in turn produced increasingly robust AI systems that, when wielded by U.S. competitors, increase risks to critical data center infrastructure. Threat actors are using AI tools to enhance phishing campaigns, research target networks, conduct post-compromise activities, and support coding tasks.44 They are also using AI-generated deepfakes to bypass multifactor authentication.45

AI models themselves can also pose a threat to data centers through AI self-exfiltration. Traditional data centers face threats from backdoors installed into data center components, allowing a threat actor to exfiltrate or access sensitive information. AI data centers face an additional threat of backdoors installed into AI models through model poisoning, which could enable threat actors to steal the model weights and information. In addition, model self-exfiltration is a novel threat in which an AI model deceives the user and the cybersecurity measures in place in order to leak its own model weights and sensitive data.46 Typical methods to protect model weights, such as air gaps, may not stop model self-exfiltration because the model may take over the system.47 This new threat, a novel form of data exfiltration and leakage, further necessitates enhanced model security.

Besides AI, there are other technological evolutions in the works that will enhance threats to AI data centers, such as quantum computing, which will soon require next-generation cryptography.48 Figure 3D below maps changing world threats to the proposed six layers of security, and Figure 3E compiles Figures 3B–3D to map the different types of threats across the layers.

Citations

- “Data Center Infrastructure Management,” Sunbird, accessed June 16, 2025, source.

- Agam Shah, “Trend Micro, Nvidia Partner to Secure AI Data Centers,” DarkReading, June 6, 2024, source.

- “Data Center Threats and Vulnerabilities,” Check Point Software Technologies, accessed June 18, 2025, source.

- Rich Miller, “Problems With AWS Network Devices Caused Widespread Cloud Outage,” Data Center Frontier, December 8, 2021, source; Bill Kleyman, “The Data Center Ransomware Attack That Costs You Everything,” Data Center Knowledge, September 1, 2023, source.

- Beth Maundrill, “Cybersecurity Implications of Data Centres as Critical National Infrastructure,” Infosecurity Europe, October 28, 2024, source.

- “Datacenter Vulnerabilities: 7 Life‑Changing Attacks You Must Know,” Enterprise Engineering Solutions Corporation, accessed July 15, 2025, source; Catalin Cimpanu, “Google Says It Mitigated a 2.54 Tbps DDoS Attack in 2017, Largest Known to Date,” ZDNet, October 16, 2020, source; Daryna Antoniuk, “Indonesia’s National Data Centre Encrypted With LockBit Ransomware Variant,” The Record, June 24, 2024, source; Curtis Franklin, “Report: In Huge Hack, Chinese Manufacturer Sneaks Backdoors Onto Motherboards,” DarkReading, October 5, 2018, source; “Application Attacks,” Contrast Security, accessed July 15, 2025, source; Abdelrahman Esmail, “Cryptojacking via CVE‑2023‑22527: Dissecting a Full‑Scale Cryptomining Ecosystem,” Trend Micro, August 28, 2024, source; “Cyber Attacks on Data Center Organizations,” Resecurity, February 20, 2023, source.

- Josh Fruhlinger and Lucian Constantin, “DDoS Attacks: Definition, Examples and Techniques,” CSO, May 17, 2024, source.

- Kurt Baker, “Introduction to Ransomware,” CrowdStrike, March 4, 2025, source.

- “Third‑Party Data Breaches: What You Need to Know,” Mitratech, January 7, 2025, source.

- Computer Security Resource Center, “Social Engineering,” National Institute of Standards and Technology, accessed July 15, 2025, source; “What Is a Remote Access Trojan?,” Fortinet, accessed July 15, 2025, source; “Data Center Threats and Vulnerabilities,” Check Point Software Technologies, accessed June 18, 2025, source.

- Sebastian Moss, “Data Center Power Supply Business Bender Hit by Ransomware Attack,” Data Center Dynamics, December 3, 2024, source.

- Scott Robinson, Gavin Wright, and Alexander S. Gillis, “What Is a Side-Channel Attack?,” TechTarget, April 8, 2025, source.

- Gyana Swain, “AMD Discloses New CPU Flaws That Can Enable Data Leaks via Timing Attacks,” CSO, July 10, 2025, source.

- Maundrill, “Cybersecurity Implications of Data Centres,” source; “Defining Insider Threats,” Cybersecurity and Infrastructure Security Agency, accessed June 18, 2025, source.

- Matt Vincent, “How Tariffs Could Impact Data Centers, AI, and Energy amid Supply Chain Shifts,” Data Center Frontier, April 3, 2025, source; “Securing the Hardware Supply Chain,” OPSWAT, accessed June 18, 2025, source.

- “Data Center Threats and Vulnerabilities,” Check Point Software Technologies, source.

- “Reimagining Secure Infrastructure for Advanced AI,” OpenAI, May 3, 2024, source.

- Tim Fist, interview by Seungmin Lee, April 24, 2025.

- “GPU Vulnerability: Side-Channel Attacks,” Liquid Web, accessed July 15, 2025, source.

- Tim Fist, interview by Seungmin Lee, April 24, 2025.

- “GPU Vulnerability: Side-Channel Attacks,” Liquid Web, source.

- Davey Winder, “Nvidia Security Warning—Act Now as 7 New GPU Vulnerabilities Confirmed,” Forbes, January 28, 2025, source.

- Chaim Gartenberg, “TPU Transformation: A Look Back at 10 Years of Our AI-Specialized Chips,” Google Cloud, July 31, 2024, source.

- Nate Nelson, “With ‘TPUXtract,’ Attackers Can Steal Orgs’ AI Models,” DarkReading, December 13, 2024, source.

- “Risks of Data Exfiltration,” SentinelOne, source.

- “Case Study: How TPUXtract Leveraged Keysight Tools for AI Model Extraction,” source.

- Satariano and Mozur, “The Global AI Divide,” source.

- “Top 14 AI Security Risks in 2024,” SentinelOne, accessed June 18, 2025, source.

- “Top 14 AI Security Risks in 2024,” source.

- Muath Alduhishy, “Sovereign AI: What It Is, and 6 Strategic Pillars for Achieving It,” World Economic Forum, April 25, 2024, source.

- Wes Shinego, “Defense Officials Outline AI’s Strategic Role in National Security,” U.S. Department of Defense, April 23, 2025, source.

- Shinego, “Defense Officials Outline AI’s Strategic Role in National Security,” source.

- Alduhishy, “Sovereign AI,” source.

- Shlomit Wagman, “Weaponized AI: A New Era of Threats and How We Can Counter It,” Harvard Kennedy School Ash Center, April 8, 2025, source.

- Carrie Pallardy, “What CISOs Need to Know About Nation-State Actors,” InformationWeek, December 12, 2023, source.

- Erica D. Lonergan and Michael Poznansky, “A Tale of Two Typhoons: Properly Diagnosing Chinese Cyber Threats,” War on the Rocks, February 25, 2025, source.

- “Reimagining Secure Infrastructure for Advanced AI,” source.

- Jeremie Harris and Edouard Harris, America’s Superintelligence Project (Gladstone AI, April 2025), source.

- Billy Perrigo, “Exclusive: Every AI Datacenter Is Vulnerable to Chinese Espionage, Report Says,” Time, April 22, 2025, source.

- Harris and Harris, America’s Superintelligence Project, source.

- Satariano and Mozur, “The Global AI Divide,” source; Zoe Hawkins, Vili Lehdonvirta, and Boxi Wu, “AI Compute Sovereignty: Infrastructure Control Across Territories, Cloud Providers, and Accelerators,” SSRN, June 24, 2025, source.

- Amy Gunia, “Will ‘Massive’ Gulf Deals Cement the U.S. Lead in the Race for Global AI Dominance?,” CNN, May 22, 2025, source.

- Tye Graham and Peter W. Singer, “How China’s Tech Giants Wired the Gulf,” Defense One, May 13, 2025, source; Butts, “Malaysia Is Emerging as a Data Center Powerhouse,” source.

- Google Threat Intelligence Group, “Adversarial Misuse of Generative AI,” Google Cloud, January 29, 2025, source.

- Andersen Cheng, “The Race to Build Data Centers Is On–Here’s How We Keep Them Secure,” TechRadar Pro, December 4, 2024, source.

- Marius Hobbhahn, “Scheming Reasoning Evaluations,” Apollo Research, January 23, 2025, source.

- Austin Carson, interview by Seungmin Lee, April 17, 2025.

- Cheng, “The Race to Build Data Centers Is On,” source.