A Framework for Cyber-Secure AI Data Centers

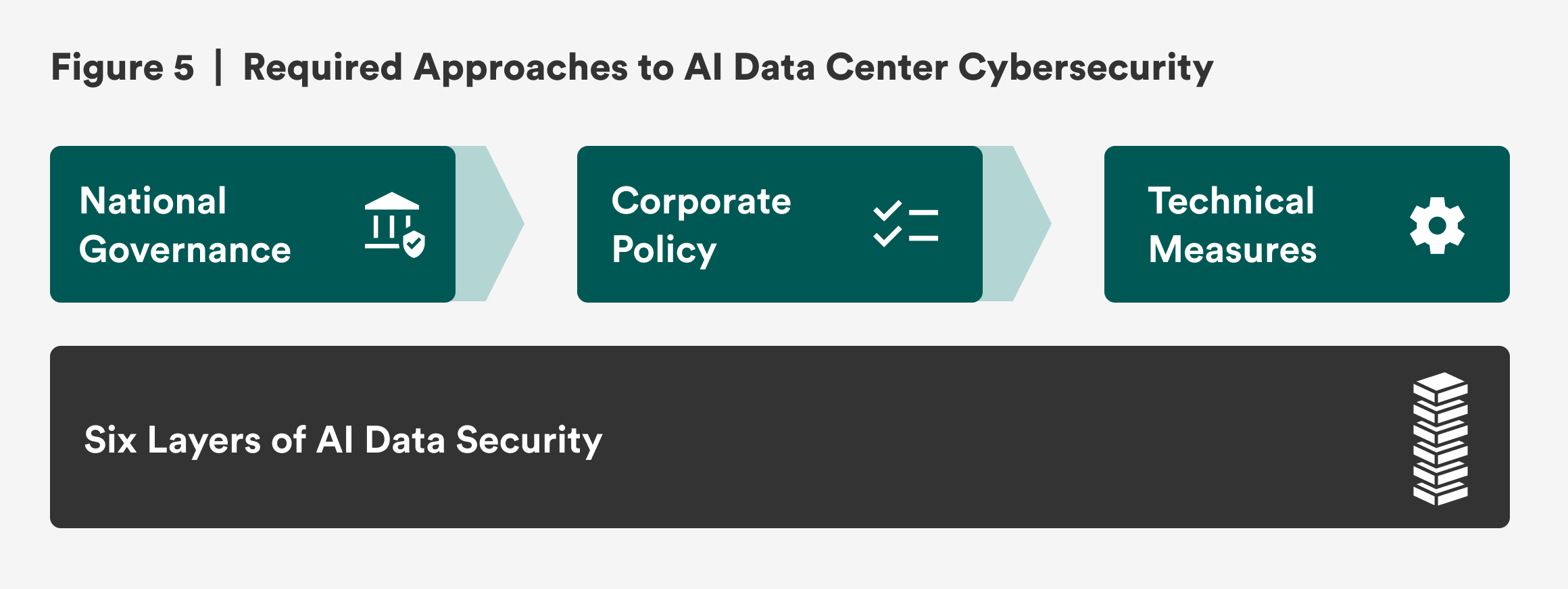

Given the threats and high-value nature of the target, the cybersecurity of AI data centers needs to be top tier. As Tim Fist commented, “AI data centers used to train and run the most powerful models will likely need to be secured with nation-state-level adversaries in mind—this will likely require taking some of the measures typically only used on government data centers used to store and process highly classified information, as well as many additional measures specific to AI performance and security requirements.”1 In line with this comment, this report suggests that each of the six layers of security requires three approaches: technical, corporate policy, and national governance (see Figure 5). The framework bridges the gaps between these three approaches by mapping the threats to AI data centers across the six layers of security.

Technical Measures

Recommendation 1: Implement existing research and standards for technical requirements in AI data centers.

On the technical side, RAND published a report in May 2024 that defined five security levels for securing model weights.2 Security Level 1 (SL1) indicates an AI system that can defend against amateur attacks, and Security Level 5 (SL5) indicates one that can protect against the most sophisticated attacks, including those by nation-state actors. The report includes a benchmark for each level, detailing the technical security measures necessary for that level.3 The Institute for Progress built on RAND’s work and outlined the technical measures required for AI data centers to reach SL4 across supply chain, network & storage, hardware, and physical access security.4 The technical needs for a cyber-secure AI data center are thus generally well known. However, corporate policy and national governance measures need to be in place for a top-tier consolidated cybersecurity approach that incentivizes AI data center companies and operators to implement the required technical measures.

Corporate Policy Measures

Recommendation 2: Corporate policies need to require technical measures across the six layers of security.

For AI data center companies and operators to have the appropriate technical measures in place, they need corporate governance measures that require the technical mitigations through a structured process. For example, corporate policies that require every AI data center to deploy its hardware inside a Faraday cage or shielded chamber are necessary to mitigate side-channel attacks on data center hardware and defend against tracking and monitoring of electromagnetic emanations.5 At the model & data layer, a corporate policy needs to require continuous AI audits and monitoring in order to secure AI models, identify backdoors and vulnerabilities in them, and defend against model and data exfiltration.

National Governance Measures

Recommendation 3: National governance measures should focus on incentivizing operators to meet the technical requirements and adopt the corporate policies needed for a cyber-secure AI data center.

National governance approaches need to provide a framework that encourages responsible AI data center construction and operations. Even though companies have natural incentives—such as reputational risks from data leakage or financial risks from AI data center outages and disruptions—to make AI data centers cyber secure, defending these assets from the most sophisticated threat actors is not easy and requires significant resources and investments. National policy approaches are currently immature: While there are over 200 national or supranational AI laws and regulations, very few actually govern AI usage, deployment, and infrastructure with binding legislation.6 Some existing standards that can help support AI data center security include:7

- The National Institute of Standards and Technology (NIST)’s Secure Software Development Framework (SSDF) includes best practices for decreasing software-level vulnerabilities and has an AI addendum that includes best practices for AI models.8

- NIST’s SP 800-171 provides standards for protecting controlled unclassified information.9

- NIST’s SP 800-53 includes standards for security and privacy controls.10

- NIST’s FIPS 140-3 outlines the design and operation of computer hardware that processes and protects sensitive data.11

- The Federal Risk and Authorization Management Program (FedRAMP), based on NIST SP 800-53, looks at security assessments, authorization, and monitoring to determine whether a cloud service provider is in compliance with the program’s standards.12

- The U.S. Department of Defense has a Cybersecurity Maturity Model Certification (CMMC) program that requires defense contractors to have sufficient security measures to protect unclassified and sensitive information.13

- The Cybersecurity and Infrastructure Security Agency (CISA) has a Zero Trust Maturity Model that helps define best practices for controlling access to sensitive data.14

- CISA’s Software Bill of Materials (SBOM) offers an ingredients list for software to identify software and supply chain vulnerabilities.15

Policies and objectives focused on AI data centers have also emerged but have not consistently required or incentivized AI data center security. President Biden’s 2025 Executive Order (EO) 14141: Advancing United States Leadership in Artificial Intelligence Infrastructure required AI data center operators to submit security proposals when requesting to build on federal land.16 Unfortunately, President Trump revoked EO 14141 in July 2025. Furthermore, the Trump administration’s EO 14179: Removing Barriers to American Leadership in Artificial Intelligence and EO 14154: Unleashing American Energy led the Department of Energy to designate 16 potential federal sites for rapid AI data center construction that may not take into account security requirements.17 AI data center construction on federal land has yet to materialize.

More recently, in July 2025, President Trump revealed his AI Action Plan, which further mandates that federal land be made available for the construction of AI data centers and supporting infrastructure, without security requirements.18 Additionally, the action plan’s central theme of “Build, Baby, Build!” encourages businesses to build AI tech stacks and data centers abroad and only mentions high-security technical standards for AI data centers utilized by the military and intelligence community.19

National regulations with significant fines and risk-based frameworks that require stronger security measures in AI data centers are currently lacking but are crucial for incentivizing AI data center operators and companies to meet high-security technical requirements.20 Benefits such as tax breaks or access to federal land tied to strong security requirements can also encourage operators.

Once national governance measures exist, they can further incentivize AI data center businesses and operators by highlighting the return on investment when complying with regulations: When there is a distinction between noncompliant and compliant AI data centers, investors, customers, and potential employees will flock to the more cyber-secure centers.21

For example, at the software & application layer, supply chain attacks and vulnerabilities pose risks to AI data centers. A technical approach to mitigate the risk would be to implement secure coding or test software with penetration testing and red teaming.22 The necessary corporate policy would be to only allow tested software and applications into the company’s AI data centers and to require audits of source code before deploying the software.23 Finally, national governance measures that allow only AI data center operators who follow CISA’s Secure by Design approach24 or implement software with SBOMs to be government contractors can incentivize AI data center companies to implement corporate policies that meet the technical requirements.25
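The corporate policy in this example can be pictured as a simple deployment gate. The sketch below is illustrative only: the `SoftwareArtifact` record, its field names, and the three checks are hypothetical names chosen for this example, not part of any cited standard or CISA tooling. It assumes an operator tracks, for each piece of software, whether an SBOM was supplied, whether penetration testing or red teaming was completed, and whether the source code was audited.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SoftwareArtifact:
    """Hypothetical record for software proposed for an AI data center."""
    name: str
    has_sbom: bool            # an SBOM was supplied with the software
    penetration_tested: bool  # penetration testing or red teaming was completed
    source_audited: bool      # source code was audited before deployment


def deployment_blockers(artifact: SoftwareArtifact) -> list[str]:
    """Return the unmet policy requirements; an empty list means none."""
    blockers = []
    if not artifact.has_sbom:
        blockers.append("missing SBOM")
    if not artifact.penetration_tested:
        blockers.append("not penetration tested")
    if not artifact.source_audited:
        blockers.append("source code not audited")
    return blockers


def is_approved(artifact: SoftwareArtifact) -> bool:
    """Software is approved only when every policy requirement is met."""
    return not deployment_blockers(artifact)


if __name__ == "__main__":
    # Hypothetical artifact that has an SBOM and test results but no audit.
    candidate = SoftwareArtifact(
        name="job-scheduler",
        has_sbom=True,
        penetration_tested=True,
        source_audited=False,
    )
    print(candidate.name, "approved:", is_approved(candidate))
    print("blockers:", deployment_blockers(candidate))
```

Returning the list of unmet requirements, rather than a bare yes or no, gives the audit trail that a compliance review would likely need; a real policy would define its own criteria and evidence.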

Citations

- Tim Fist, interview by Seungmin Lee, April 24, 2025; Fist and Datta, How to Build the Future of AI in the United States, source.

- Sella Nevo et al., Securing AI Model Weights: Preventing Theft and Misuse of Frontier Models (RAND, 2024), source.

- Nevo et al., Securing AI Model Weights, source.

- Fist and Datta, How to Build the Future of AI in the United States, source.

- Vladimir Antić et al., “Protecting Data at Risk of Unintentional Electromagnetic Emanation: TEMPEST Profiling,” Applied Sciences 14, no. 11 (June 3, 2024): 4830, source.

- Swati Srivastava, “Regulate or Innovate? Governing AI amid the Race for AI Sovereignty,” New America, May 1, 2025, source.

- Arnab Datta and Tim Fist, Compute in America: A Policy Playbook (Institute for Progress, February 3, 2025), source.

- Computer Security Resource Center, “Secure Software Development Framework (SSDF),” National Institute of Standards and Technology, updated February 27, 2025, source.

- Ron Ross and Victoria Pillitteri, Protecting Controlled Unclassified Information in Nonfederal Systems and Organizations, Special Publication 800-171, rev. 3 (National Institute of Standards and Technology, May 2024), source.

- Joint Task Force Working Group, Security and Privacy Controls for Information Systems and Organizations, Special Publication 800-53, rev. 5, update 1 (National Institute of Standards and Technology, October 2024), source.

- National Institute of Standards and Technology (NIST), Security Requirements for Cryptographic Modules, FIPS PUB 140-3 (NIST, March 22, 2019), source.

- “FedRAMP,” General Services Administration, updated March 31, 2025, source.

- U.S. Department of Defense Chief Information Officer, “Cybersecurity Maturity Model Certification,” accessed July 24, 2025, source.

- “Zero Trust Maturity Model,” Cybersecurity and Infrastructure Security Agency, accessed April 11, 2023, source.

- “Software Bill of Materials (SBOM),” Cybersecurity and Infrastructure Security Agency, accessed June 21, 2025, source.

- Biden, Executive Order on Advancing United States Leadership in Artificial Intelligence Infrastructure, source.

- Donald J. Trump, Executive Order 14179: Removing Barriers to American Leadership in Artificial Intelligence, 90 FR 874, (The White House, January 31, 2025), source; Secretary of the Interior, Secretary’s Order No. 3418: Unleashing American Energy (U.S. Department of the Interior, February 3, 2025), source; “DOE Identifies 16 Federal Sites Across the Country for Data Center and AI Infrastructure Development,” Department of Energy, April 3, 2025, source.

- Winning the Race: America’s AI Action Plan (The White House, July 2025), accessed August 7, 2025, source.

- Winning the Race, source.

- Srivastava, “Regulate or Innovate?,” source.

- Mariami Tkeshelashvili and Tiffany Saade, Navigating AI Compliance, Part 2: Risk Mitigation Strategies for Safeguarding Against Future Failures (Institute for Security and Technology, March 2025), source.

- “Data Center Threats and Vulnerabilities,” Check Point Software Technologies, source; Nevo et al., Securing AI Model Weights, source.

- Fist and Datta, How to Build the Future of AI in the United States, source.

- Cybersecurity and Infrastructure Security Agency (CISA), Shifting the Balance of Cybersecurity Risk—Principles and Approaches for Secure by Design Software (CISA, October 25, 2023), source.

- CISA, Shifting the Balance of Cybersecurity Risk, source; “Software Bill of Materials (SBOM),” CISA, source.