Francisca Afua Opoku-Boateng

#ShareTheMicInCyber Fellow, 2024 Class

The impact of artificial intelligence (AI) on open-source intelligence (OSINT) is shaping the future of how we gather information. Integrating the two practices presents profound ethical and privacy challenges, highlighting the urgent need for a robust privacy-preserving framework. This report explores the intersection of OSINT and AI, analyzing their impact on national security, privacy, and ethics. It traces the evolution of intelligence gathering from traditional methods to AI-enhanced OSINT, showcasing recent advancements in real-time intelligence and predictive analytics. A survey reveals significant ethical concerns among cybersecurity professionals, with many respondents noting the potential for AI to analyze vast amounts of data without explicit consent. Beyond ethical implications, this lack of consent poses substantial risks to individual privacy, potentially leading to unauthorized data exposure and misuse.

This report underscores the significant benefits of AI integration in OSINT, while also highlighting critical concerns such as data privacy, ethical challenges, and potential biases in AI systems. Through an examination of legal and regulatory frameworks, the report identifies key compliance and privacy challenges and concludes by developing the OSINT Privacy Impact Framework (OPIF), designed to help organizations balance AI-enhanced capabilities with privacy protection and ethical accountability. By prioritizing privacy and ethical standards, this framework will not only protect individual rights but also enhance the credibility and trustworthiness of AI-integrated OSINT practices.

I am grateful to my advisors, Christina Morillo and Peter W. Singer, for their invaluable guidance throughout this project. My appreciation extends to New America, especially Camille Stewart Gloster, Lauren Zabierek, and Bridget Chan, for their support. To my mentor, Desmond Israel, Esq., my reviewers, and all participants whose contributions enriched this work, thank you. Lastly, thank you to my husband, Danny, for his unwavering encouragement.

Editorial disclosure: The views expressed in this report are solely those of the author(s) and do not reflect the views of New America, its staff, fellows, funders, or board of directors.

This report examines the intersection between the advancement of open-source intelligence (OSINT) and the rapid integration of artificial intelligence (AI) technologies, focusing on the implications for national security, privacy, and ethics. The report begins by providing a historical perspective on the evolution of intelligence gathering, tracing its transformation from traditional methods to modern, AI-enhanced OSINT practices. The challenges and limitations of historical intelligence methods highlight the need for more sophisticated tools to meet the growing demands of contemporary cyber forensics. This sets the stage for a detailed analysis of real-world OSINT applications, demonstrating their significance in cyber forensics through specific use cases and the methodologies employed.

The report then discusses the impact of AI integration on OSINT capabilities, emphasizing the advancements in real-time intelligence gathering, predictive analytics, and cross-domain analysis. These developments have significantly enhanced the effectiveness of OSINT, allowing for more accurate and timely intelligence. However, the integration of AI also brings forth several critical implications, particularly concerning data privacy, ethical concerns, and cybersecurity challenges. The discussion on AI’s role in OSINT is crucial for understanding how these technologies can be both a boon and a potential risk, depending on how they are managed and regulated.

In exploring the intersection of OSINT and data privacy, the report examines current data collection practices and their implications for individual privacy. There is growing tension between the need for comprehensive intelligence and the protection of personal information in the digital age. An analysis of the current legal and regulatory frameworks governing OSINT activities reveals several key challenges in ensuring compliance and protecting privacy, underscoring the need for more robust privacy protections and for ongoing dialogue among stakeholders.

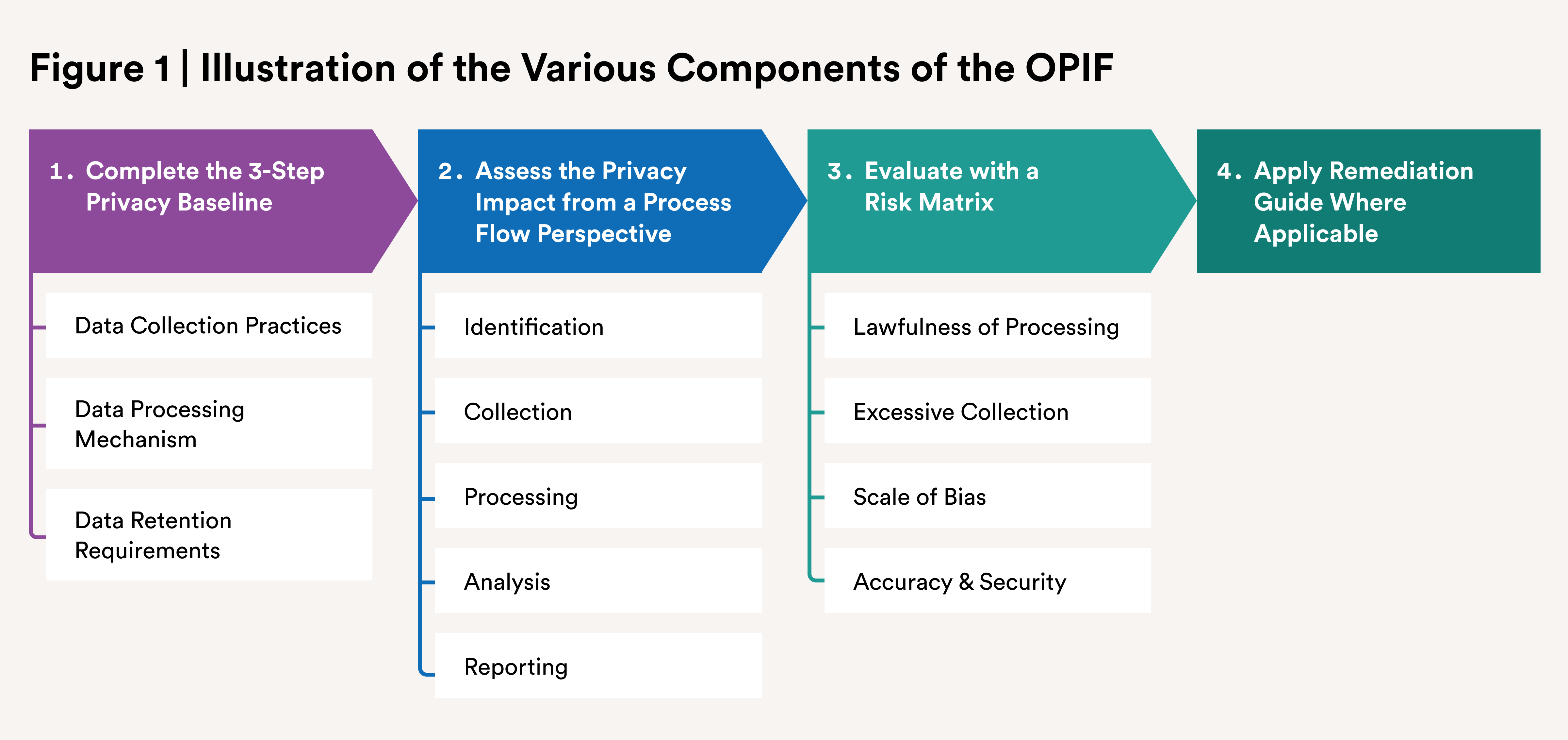

The report concludes by analyzing the ethical landscape of AI-powered OSINT, focusing on the potential biases that AI can introduce, the need for transparency, and the importance of accountability in intelligence practices. The research findings are synthesized into a proposed OSINT Privacy Impact Framework (OPIF), which offers a structured approach to assessing and mitigating the privacy risks associated with OSINT activities. This framework serves as a practical tool for organizations to balance the benefits of AI-enhanced OSINT with the need to protect individual privacy and uphold ethical standards.

In the last half-decade, open-source intelligence (OSINT) has revolutionized national security by enabling real-time threat detection, enhancing counterterrorism efforts, and supporting cyber defense strategies.1 OSINT has played a critical role in uncovering disinformation campaigns, tracking geopolitical developments, and identifying emerging threats. With artificial intelligence (AI) set to exponentially increase the speed and accuracy of OSINT, discussing its future implications is essential.2 AI-driven OSINT will enable faster data analysis, predictive intelligence, and automated threat detection, making it a pivotal tool for national security agencies in staying ahead of adversaries and responding to dynamic security challenges.

The convergence of OSINT and rapid advancements in AI is redefining the terrain of national security and privacy.3 This research report explores the delicate balancing act between harnessing the expansive capabilities of OSINT, supercharged by AI technologies, and the imperative to protect individual privacy in a world increasingly dominated by cyber forensics. As AI empowers OSINT to process and analyze vast data streams with unprecedented speed and accuracy, the stakes have never been higher. As articulated by the Office of the Director of National Intelligence (ODNI), the integration of OSINT into the intelligence framework underscores its pivotal role in national security, yet raises significant privacy concerns given the enhanced capabilities AI affords in processing and analyzing public data.4 This fusion of AI with OSINT tools, while beneficial to investigative activities, necessitates a careful examination of its implications for privacy, presenting ethical and legal dilemmas that challenge existing frameworks.

The ethical dilemmas surrounding AI-powered OSINT are complex, intertwined with debates on the balance between national security imperatives and the safeguarding of individual privacy rights. As AI algorithms sift through public datasets to identify security threats, they simultaneously risk infringing on individuals’ privacy by misidentifying benign activities as suspicious or by creating detailed profiles based on public online behavior.5 In the United States, an AI facial recognition algorithm misidentified a Detroit man, Robert Julian-Borchak Williams, leading to his wrongful arrest in 2020.6 The technology had falsely matched his face to footage from a shoplifting incident, showing how AI systems can misidentify individuals based on incomplete or incorrect data. AI systems employed by advertising networks and social media platforms such as Facebook have come under scrutiny for creating detailed profiles based on users’ public behavior, as outlined by Shoshana Zuboff in her book The Age of Surveillance Capitalism: The Fight for a Human Future at the New Frontier of Power.7 These profiles, built from publicly shared data or inferred from online interactions, are used to target advertisements but have also raised ethical concerns regarding privacy and the potential for manipulation. The critical discourse on this issue calls for a comprehensive strategy that includes legal, technological, and ethical measures to ensure AI’s application in OSINT respects privacy rights while achieving security goals.8

Proposing the OSINT Privacy Impact Framework (OPIF) represents a crucial step toward addressing these challenges. By systematically assessing the privacy implications of AI-powered OSINT practices and implementing appropriate controls and safeguards, the OPIF aims to promote responsible and ethical use of OSINT while upholding privacy principles and regulatory requirements.

As society grapples with the ethical and privacy challenges posed by AI-powered OSINT, developing robust frameworks and guidelines becomes imperative. The OPIF takes a proactive approach to these challenges: by promoting transparency, accountability, and the ethical use of OSINT, it seeks to foster a digital ecosystem that respects individual privacy rights while harnessing the transformative potential of AI technologies.

In the forthcoming chapters, this work will begin with a literature review, tracing the historical evolution of intelligence gathering, traditional methods, and associated challenges. It will then explore real-world applications of OSINT in cyber forensics, focusing on case studies, methodologies, tools, and the significance of OSINT. The discussion will move to the advancements brought by AI integration into OSINT, including real-time intelligence gathering, predictive analytics, and cross-domain analysis, while addressing critical issues like data privacy, ethics, bias, and cybersecurity challenges. The intersection of OSINT and data privacy will be examined, covering data collection practices, privacy implications, and regulatory frameworks. Ethical considerations, potential biases, transparency, and accountability in AI-powered OSINT will also be explored. The methodology section will outline research phases, tool assessments, and data representation, leading to an analysis of findings, the development of the framework, and a concluding discussion.

The evolution of intelligence gathering has played a crucial role in shaping national security, cybersecurity, and global surveillance, transitioning from clandestine espionage to the widespread use of OSINT.1 Historically rooted in wartime espionage, intelligence gathering has advanced alongside technological progress. The introduction of OSINT, which utilizes publicly available information like social media and data breaches, has become a key method in modern cybersecurity.2 This shift towards digital tools offers a cost-effective and legal means of collecting intelligence without high-risk operations. Historically, intelligence collection saw significant advancements during the World Wars and the Cold War, with intelligence agencies using tools like satellite surveillance and covert operations.3 By the late 20th century, OSINT emerged as a crucial component, particularly within military and intelligence agencies, where digitalization transformed the scope of publicly available data and its accessibility. This digital transformation solidified OSINT’s importance in threat identification and modern security operations.

Traditional intelligence-gathering methods, such as human intelligence (HUMINT), signals intelligence (SIGINT), and imagery intelligence (IMINT), relied heavily on covert operations and espionage. However, with the rise of digital technology, automated tools like Maltego and Recon-ng have made intelligence collection faster and more efficient by gathering data from online sources.4 These tools exemplify the growing reliance on OSINT to identify vulnerabilities without direct engagement. Nonetheless, the vast amount of available data presents challenges, as analysts must sift through large volumes to identify actionable insights.5 Moreover, the reliability of sources, along with the rise of misinformation, poses significant hurdles. Additionally, the ethical and legal implications of digital intelligence gathering, particularly concerning privacy, highlight the need for careful consideration in balancing security and individual rights.6 The ongoing evolution of cyber threats further necessitates continuous adaptation by intelligence agencies to safeguard against misuse of OSINT by adversaries.7

OSINT plays a pivotal role in unearthing crucial evidence and thwarting cyber threats. Real-world examples illuminate its practical applications: in one notable case, OSINT was instrumental in identifying the perpetrators behind a sophisticated cyberattack on a financial institution by leveraging social media analysis to trace digital footprints back to a notorious hacking group.8 Similarly, forensic experts utilized OSINT to dismantle a large-scale phishing operation by tracking down the online infrastructure used for the scam, including domains and IP addresses linked to fraudulent activities.9 These examples underscore the critical importance of OSINT in contemporary cyber forensic investigations, showcasing its capability to leverage publicly available information for significant breakthroughs in cybercrime investigation and prevention.

The OSINT process depends on clearly defined objectives and on information sources and tools tailored to each operation. The initial stages, planning and preparation, are pivotal in steering the subsequent phases toward relevant and actionable outcomes. After preparation, the data collection phase involves rigorously extracting information from the identified sources through both automated and manual methods. The efficiency of this phase hinges on navigating a vast volume of online data, which makes ethical considerations and adherence to privacy laws an imperative part of the OSINT methodology.10 In the processing and analysis stage, the raw data is cleaned and organized, and various analytical techniques are then applied. These techniques, ranging from content analysis to sentiment analysis, support the extraction of actionable intelligence, while visualization tools help reveal complex relationships and patterns. The process culminates in comprehensive reports that summarize the findings and propose actionable insights, which is how OSINT ultimately strengthens decision-making.
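
To make the stages above concrete, the sketch below models planning, collection, processing, analysis, and reporting as small functions. It is a minimal illustration under stated assumptions, not a description of any specific OSINT tool: the `Plan` and `Record` types, the source names, and keyword counting as a stand-in for real analytical techniques are all hypothetical, and the input records are in-memory samples rather than data collected from live sources.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Plan:
    """Planning (hypothetical structure): the objective and the sources approved for it."""
    objective: str
    keywords: list[str]
    approved_sources: set[str]


@dataclass
class Record:
    source: str
    text: str


def collect(plan: Plan, raw: list[Record]) -> list[Record]:
    """Collection: keep only records from sources approved during planning."""
    return [r for r in raw if r.source in plan.approved_sources]


def process(records: list[Record]) -> list[Record]:
    """Processing: normalize case and whitespace, then drop exact duplicates."""
    seen: set[tuple[str, str]] = set()
    cleaned = []
    for r in records:
        text = " ".join(r.text.lower().split())
        key = (r.source, text)
        if key not in seen:
            seen.add(key)
            cleaned.append(Record(r.source, text))
    return cleaned


def analyze(plan: Plan, records: list[Record]) -> Counter:
    """Analysis: count keyword occurrences (a placeholder for richer techniques)."""
    counts = Counter()
    for r in records:
        for kw in plan.keywords:
            counts[kw] += r.text.count(kw.lower())
    return counts


def report(plan: Plan, counts: Counter, n_records: int) -> str:
    """Reporting: summarize the findings against the original objective."""
    hits = ", ".join(f"{k}={v}" for k, v in counts.most_common() if v > 0)
    return f"Objective: {plan.objective} | records analyzed: {n_records} | hits: {hits or 'none'}"


# Hypothetical usage with sample records.
plan = Plan("Map phishing infrastructure", ["login", "verify"], {"forum", "paste"})
raw = [
    Record("forum", "Verify your   LOGIN here"),
    Record("forum", "verify your login here"),  # duplicate after normalization
    Record("private_chat", "login details"),    # not an approved source, so excluded
    Record("paste", "new login page mirror"),
]
records = process(collect(plan, raw))
print(report(plan, analyze(plan, records), len(records)))
```

Restricting collection to sources approved at the planning stage is one simple way to carry the ethical and legal constraints mentioned above into the pipeline itself, rather than applying them after the fact.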

Cyber forensics benefits greatly from advancements in OSINT methodologies and tools that help address the complexities of digital investigations. The foundation of OSINT methodologies lies in the strategic collection and analysis of publicly accessible data from diverse online platforms such as social media, forums, blogs, and the dark web. These platforms serve as fertile ground for uncovering valuable insights into cybercriminal activities, facilitating a nuanced approach to cybersecurity investigations. Specialized tools such as Maltego and theHarvester play a pivotal role in mapping the digital footprints and networks of suspects, uncovering hidden connections and patterns indicative of malicious activities.11 Additionally, the integration of advanced web scraping techniques with automated OSINT frameworks, further enhanced by machine learning algorithms, marks a significant evolution in the capability to monitor cyber threats in real time.12 This combination of sophisticated tools and methodologies not only streamlines the investigative process but also equips cyber forensic professionals with the means to proactively address and mitigate potential vulnerabilities.

The iterative and multifaceted nature of OSINT methodologies and tools underscores their significance in cyber forensics. By combining publicly available data with the strategic application of advanced analytical tools, OSINT methodologies facilitate a proactive and informed approach to cybersecurity, exemplifying the synergy between technology and strategic foresight in navigating the digital landscape.

Where digital evidence is paramount, the significance of OSINT cannot be overstated. The practice of collecting, analyzing, and validating information from publicly accessible sources is invaluable in both legal and cybersecurity contexts and offers critical insights that can significantly enhance investigations. OSINT allows investigators to gather and analyze publicly available information, which is essential for tracing digital activities, identifying threat actors, and gathering evidence in cybercrime cases.13 In criminal investigations, for example, social media intelligence is used to track the online activities of suspects by analyzing social media profiles, posts, and interactions.14 In child exploitation cases, an investigator might use geolocation data from social media images to place a suspect at the scene of a crime even when direct evidence is lacking; this type of intelligence is vital for testing alibis and connecting suspects to criminal activities.15 OSINT provides an invaluable lens through which investigators can discern the intentions, methodologies, and identities of cyber adversaries. Its role in the early detection and prevention of cyber threats is particularly crucial, offering a proactive approach to cybersecurity that goes beyond traditional reactive measures.16

OSINT contributes to the integrity of cyber forensic investigations by enabling a comprehensive analysis of digital trails left by cybercriminals. By leveraging publicly available information, forensic experts can construct a detailed and accurate narrative of cyber incidents, from the start of an investigation to the completion, facilitating not only the apprehension of perpetrators but also the fortification of cybersecurity measures against future threats. As such, OSINT is a cornerstone of modern cyber forensic practice, embodying the convergence of information gathering and technological prowess in the fight against cybercrime.

The integration of AI has restructured the field of OSINT by enhancing capabilities in data collection, analysis, and visualization. This section examines the impact of AI integration on OSINT capabilities, including advances in automated data collection, analysis, and visualization techniques, and discusses their implications for intelligence gathering and decision-making. AI has significantly redefined the way intelligence is gathered in OSINT, offering a blend of rapid data processing and analytical depth that was previously unattainable. AI’s capacity to sift swiftly through extensive online data pools enables more efficient and accurate collection and analysis of information, which is especially beneficial in cyber threat intelligence. By analyzing historical data and emerging trends, AI algorithms can preemptively identify potential security threats, a capability that is critical in today’s digital age. AI’s role extends beyond data collection to advanced analysis, employing natural language processing (NLP) and machine learning techniques to delve into the subtleties of human communication. This allows, for instance, a nuanced understanding of public sentiment across social media platforms, enhancing the quality and relevance of the intelligence collected.17
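
As a point of reference for the sentiment analysis mentioned above, the sketch below shows the simplest lexicon-based approach: each word carries a score, and a post's sentiment is the sum of its word scores. This is deliberately cruder than the NLP and machine learning techniques the paragraph describes; the `LEXICON` weights and sample posts are hypothetical, and a word-sum cannot capture sarcasm, negation, or context, which is precisely why trained models are used in practice.

```python
import re

# Hypothetical toy lexicon; production systems rely on trained models or curated lexicons.
LEXICON = {"great": 1, "safe": 1, "love": 1, "angry": -1, "scam": -2, "attack": -2}
TOKEN_RE = re.compile(r"[a-z']+")


def sentiment(text: str) -> int:
    """Sum the lexicon scores of the tokens: >0 positive, <0 negative, 0 neutral."""
    return sum(LEXICON.get(tok, 0) for tok in TOKEN_RE.findall(text.lower()))


def aggregate(posts: list[str]) -> dict[str, int]:
    """Count posts by polarity to give a coarse picture of public sentiment."""
    counts = {"positive": 0, "negative": 0, "neutral": 0}
    for post in posts:
        score = sentiment(post)
        label = "positive" if score > 0 else "negative" if score < 0 else "neutral"
        counts[label] += 1
    return counts


# Hypothetical sample posts.
posts = ["Love the new app, feels safe", "This link is a scam!", "Meeting at noon"]
print(aggregate(posts))
```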

AI integration into OSINT tools has changed the way data is visualized and interpreted. Sophisticated AI-facilitated visualization techniques, such as graph-based analysis, heatmaps, and geospatial mapping, allow analysts to navigate and make sense of complex data relationships faster and with less effort. These tools not only help communicate findings concisely but also support strategic decision-making by presenting data in an accessible and interpretable manner.18 Despite these advancements, incorporating AI into OSINT practices is not without its challenges. Issues of data accuracy, privacy, and the ethics of automated surveillance demand a careful balance between technological innovation and ethical responsibility. As the field continues to advance, these concerns highlight the importance of establishing robust verification processes and ethical guidelines to navigate the complexities AI introduces into intelligence gathering.19

The integration of AI into OSINT has reshaped real-time intelligence gathering, significantly enhancing the automation of data collection and analysis. Tools such as Pantomath utilize AI to streamline the aggregation of OSINT, leveraging existing resources to maximize efficiency and expand the scope of information captured. This progress has enabled a more sophisticated approach to monitoring and interpreting data in real time, providing invaluable insights with greater speed and accuracy.20 Advancements in AI technologies have facilitated the processing and analysis of voluminous datasets, improving the timeliness and relevance of intelligence gathered from open sources. However, these improvements also bring to the forefront the critical need for ensuring the reliability and ethical use of AI-driven tools. AI algorithms are only as good as the data they are trained on, and if the data is flawed, incomplete, or biased, the resulting tools can produce misleading, harmful, or inaccurate intelligence.

Furthermore, the ethical use of AI in OSINT involves ensuring that these tools do not infringe on privacy rights, perpetuate biases, or lead to decisions that could harm individuals or communities.21 For instance, AI-driven tools could unintentionally amplify existing biases if they rely on historical data that reflects those biases, leading to skewed intelligence outcomes. Ethical considerations also include explainability and transparency, where the processes and decisions made by AI systems should be understandable and accountable to human oversight.22 This emphasizes the importance of validating the sources of OSINT and the integrity of the information procured.

AI in OSINT has notably advanced the capabilities of predictive analytics, offering a way to identify relevant information before events unfold. By harnessing AI to sift through and analyze extensive datasets, predictive models have become increasingly adept at forecasting potential outcomes. This predictive approach to intelligence gathering is a significant leap forward, allowing for the timely implementation of preventative measures. AI-enhanced predictive analytics draw on a wide range of open-source data, for example using social media activity to anticipate emerging trends. As such, the role of AI in predictive analytics underscores the transformative impact of AI on OSINT, marking a pivotal shift toward more anticipatory forms of intelligence analysis.

AI’s ability to process and interpret large-scale datasets has enriched intelligence gathering from public sources like social media, facilitating sentiment analysis across vast swathes of data to glean insights into public opinion and emerging trends. This capability extends beyond social media, allowing for the cross-referencing of information from diverse sources to create a more holistic view of intelligence. The intersection of AI with OSINT has thus expanded not only the breadth of analysis possible but also the depth, offering a multidimensional perspective on data that spans different domains. The progression of cross-domain analysis through AI highlights the importance of maintaining stringent standards for data accuracy and privacy, ensuring that the advancements in OSINT continue to serve the interests of ethical and reliable intelligence gathering.
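
Cross-referencing across domains can be illustrated with indicators such as domain names: an indicator that appears independently in several sources (for example social media, a threat feed, and a forum) deserves more attention than one seen only once. The sketch below is a simplified illustration under assumptions: the regular expression is a rough pattern for domain-like strings rather than a robust parser, and the source names and sample texts are hypothetical, using the reserved `.example` top-level domain.

```python
import re
from collections import defaultdict

# Simplified pattern for domain-like strings (an assumption for this sketch;
# real pipelines use stricter parsers and handle defanged indicators).
DOMAIN_RE = re.compile(r"\b(?:[a-z0-9-]+\.)+[a-z]{2,}\b", re.IGNORECASE)


def index_domains(source_name: str, texts: list[str]) -> dict[str, set[str]]:
    """Map every domain mentioned in one source's texts to that source's name."""
    index: dict[str, set[str]] = defaultdict(set)
    for text in texts:
        for domain in DOMAIN_RE.findall(text):
            index[domain.lower()].add(source_name)
    return index


def cross_reference(*indexes: dict[str, set[str]]) -> dict[str, set[str]]:
    """Merge per-source indexes and keep only domains seen in more than one source."""
    merged: dict[str, set[str]] = defaultdict(set)
    for idx in indexes:
        for domain, sources in idx.items():
            merged[domain] |= sources
    return {d: s for d, s in merged.items() if len(s) > 1}


# Hypothetical inputs from three different kinds of open source.
social = ["Claim your prize at secure-login-bank.example now", "Great weather today"]
threat_feed = ["phishing: secure-login-bank.example", "malware c2: update-check.example"]
forum = ["selling kits hosted on update-check.example and secure-login-bank.example"]

overlaps = cross_reference(
    index_domains("social", social),
    index_domains("threat_feed", threat_feed),
    index_domains("forum", forum),
)
for domain, sources in sorted(overlaps.items()):
    print(domain, sorted(sources))
```

Note that the same joining step that corroborates a threat indicator can also link personal details across sources, which is why the accuracy and privacy standards discussed above apply to cross-domain analysis.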

The application of AI to OSINT has ushered in a transformative era in intelligence gathering. However, this growth raises pressing questions about data privacy and ethics that are intrinsic to the responsible use of AI. As AI systems process an ever-increasing volume of publicly available information, the line between insightful intelligence and intrusive surveillance blurs. This progression underscores the critical need for robust ethical guidelines and regulatory frameworks. Such measures are paramount to safeguarding individual privacy rights while ensuring that the deployment of AI in OSINT adheres to high ethical standards. The imperative for these frameworks stems not only from a commitment to protecting personal information but also from the need to maintain public trust in intelligence practices that increasingly rely on AI technologies. The Office of the Privacy Commissioner of Canada explains that a rights-based regime would not stand in the way of innovation; rather, it would support responsible innovation and foster trust in the marketplace, giving individuals the confidence to participate fully in the digital age.23 Additionally, given the risks associated with AI, a rights-based framework would help ensure that AI is used in a manner that upholds rights.24 On this view, privacy laws should prohibit using personal information in ways that are incompatible with our rights and values. These arguments emphasize that protecting privacy through regulation fosters public trust and ensures the responsible use of AI technologies, an imperative that guided the development of the OPIF.

Despite the advanced analytical capabilities of AI-integrated OSINT tools, they are susceptible to inheriting biases present in their training data or algorithms, potentially leading to skewed analyses and erroneous conclusions that could impact the rights of individuals involved. This concern necessitates a proactive approach to identifying and mitigating biases, ensuring the accuracy and reliability of intelligence gathered through AI-enhanced OSINT. Technical solutions for refining algorithms are required, and so is a commitment to transparency and accountability in the development and deployment of AI tools.25 As AI becomes more ingrained in OSINT methodologies, there must be established guidelines and frameworks to prioritize the detection and correction of biases to uphold the veracity of their analyses and decisions.

The fusion of OSINT with data privacy concerns forms a critical junction in today’s digital data collection and analysis arena. OSINT involves the strategic gathering and examination of publicly accessible data, especially from online platforms, to extract valuable insights. This method has become a staple in cybersecurity operations, aiding threat detection and situational awareness through the analysis of real-time information, largely sourced from social media platforms.1 Despite its significant contributions to domains like forensics, cybersecurity, and investigative journalism, as evidenced by the work of groups like Bellingcat, OSINT poses significant privacy dilemmas in the collection, processing, mining, and sharing of open-source information.2 These dilemmas stem from the ethical and legal ramifications of collecting public online data, where the line between serving the public interest and infringing on individual privacy rights often becomes obscured.

Further complicating this sector are the broader privacy issues introduced in the era of big data, as discussed by Citron and Solove.3 The pervasive nature of data collection practiced today places individuals at an increased risk of privacy breaches, a situation exacerbated by OSINT activities. The process of aggregating and analyzing publicly available data from various sources can unintentionally violate privacy, underscoring the urgent need for stringent regulatory measures. Such frameworks must strive to balance the beneficial use of open-source data for security and public interest with the paramount importance of protecting individual privacy rights. This balance is crucial to navigating the complex interplay between advancing technology and preserving privacy in the digital age.

OSINT is deeply entwined with legal considerations, particularly data privacy laws such as the California Consumer Privacy Act (CCPA) in the United States and the General Data Protection Regulation (GDPR) in the European Union.4 These regulations set rigorous standards for the collection, processing, and storage of personal data, built on principles like data minimization and transparency and, in many cases, on consent requirements. This legislative framework places a significant onus on entities leveraging OSINT, requiring them to walk a tightrope between the utility of OSINT for security and investigative purposes and the paramount importance of safeguarding privacy rights. The emphasis is thus on ensuring transparency and accountability, with the GDPR acting as a global benchmark for privacy protection, including implications for similar regulations like the CCPA. Despite these stringent guidelines, applying these laws to OSINT practices introduces a layer of ambiguity, especially for publicly available information that might be exempt from such privacy constraints, thereby complicating compliance efforts.

The intersection of OSINT practices with privacy regulations presents a complex web of challenges and compliance requirements. OSINT intelligence-gathering activities must balance the imperative of gathering actionable intelligence with the ethical and legal mandate to protect individual privacy. This balancing act necessitates comprehensive data governance frameworks that advocate practices such as anonymization, pseudonymization, and data minimization. The concept of “privacy by design,” developed by Ann Cavoukian, calls for a proactive approach that prevents privacy harms before they arise; in this context, it means incorporating privacy safeguards from the development phase of OSINT tools and methodologies.5 Adherence to these principles not only ensures compliance with stringent data protection laws but also fosters a culture of trust and ethical responsibility.
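
Data minimization and pseudonymization can be applied at the point where an OSINT record enters an analysis pipeline, which is one practical reading of privacy by design. The sketch below assumes a hypothetical field policy (`ALLOWED_FIELDS`, `IDENTIFIER_FIELDS`) and uses a keyed HMAC-SHA256 hash so that the same username always maps to the same pseudonym, preserving the ability to link activity, while the original value cannot be recomputed without the key. Under the GDPR, pseudonymized data is still personal data, so this reduces risk rather than removing legal obligations; the key shown is a placeholder, not a recommended practice.

```python
import hashlib
import hmac

# Hypothetical policy: only these fields are needed for the stated purpose.
ALLOWED_FIELDS = {"username", "post_text", "timestamp"}
# Fields that identify a person and are replaced with a keyed pseudonym.
IDENTIFIER_FIELDS = {"username"}


def pseudonymize(value: str, key: bytes) -> str:
    """Keyed pseudonym via HMAC-SHA256: stable for the same input and key,
    and not recomputable by anyone who lacks the key."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()[:16]


def minimize(record: dict, key: bytes) -> dict:
    """Data minimization: drop fields outside the policy, then pseudonymize identifiers."""
    kept = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    for field_name in kept.keys() & IDENTIFIER_FIELDS:
        kept[field_name] = pseudonymize(str(kept[field_name]), key)
    return kept


# Placeholder key for illustration; a real deployment would keep it in a separate secret store.
key = b"example-key-store-separately"
raw = {
    "username": "@alice",
    "email": "alice@example.com",
    "home_city": "Accra",
    "post_text": "new phishing kit for sale",
    "timestamp": "2024-05-01T10:00Z",
}
print(minimize(raw, key))
```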

In his paper “Artificial Intelligence and Privacy,” Daniel J. Solove explores the intricate relationship between AI and privacy, highlighting the significant challenges AI poses to privacy norms and the potential directions for the evolution of privacy law in this domain. Solove argues that while AI exacerbates existing privacy concerns by remixing them in complex and novel ways, it does not necessarily present an unforeseeable upheaval for privacy law. Rather, AI magnifies the longstanding inadequacies of current privacy frameworks, underscoring the urgent need for a recalibrated approach that can effectively address the privacy implications of AI technologies.6 Solove contends that existing privacy laws, with their heavy reliance on individual consent and control, fall short of addressing the multifaceted privacy issues presented by AI. He emphasizes that AI’s ability to process vast amounts of data, including publicly available information, challenges traditional privacy protections that are predicated on notions of secrecy and individual control.

AI algorithms may exhibit biases that perpetuate discrimination and exacerbate privacy concerns. Algorithms involved in data mining and pattern recognition, in particular, can inherit biases from their training data, leading to outcomes that may disproportionately affect certain groups.7 This phenomenon is not merely a technical issue but a profound ethical concern that necessitates rigorous scrutiny and intervention. Addressing potential biases in AI-powered OSINT requires a multifaceted approach, such as diversifying the datasets used to train AI systems and ensuring they represent a broad spectrum of demographics and viewpoints, to reduce the likelihood of one-sided or prejudiced outcomes. The ethical deployment of AI in OSINT also requires careful consideration of privacy, consent, and the potential for unintended consequences. Although public data is the primary resource for OSINT, the aggregation and analysis capabilities of AI can reveal sensitive information not intended for public disclosure, challenging conventional notions of privacy and emphasizing the need for ethical guidelines that prioritize respect for privacy and human dignity.
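
One concrete form of scrutiny is to compare error rates across demographic groups. The wrongful facial-recognition arrest described earlier is a false positive, an innocent person flagged as a match, so an audit might ask whether innocent people in one group are flagged more often than in another. The sketch below assumes a hypothetical audit sample of `(group, flagged_by_model, confirmed_threat)` tuples; the 1.25 disparity threshold is an illustrative choice for this sketch, not an established standard.

```python
from collections import defaultdict


def false_positive_rates(rows):
    """rows: (group, flagged_by_model, confirmed_threat) tuples.
    Returns, for each group, the share of non-threat cases the model wrongly flagged."""
    flagged_innocent = defaultdict(int)
    innocent = defaultdict(int)
    for group, flagged, confirmed in rows:
        if not confirmed:
            innocent[group] += 1
            if flagged:
                flagged_innocent[group] += 1
    return {g: flagged_innocent[g] / n for g, n in innocent.items()}


# Hypothetical audit sample (not real data).
sample = [
    ("A", False, False), ("A", False, False), ("A", True, False), ("A", False, False),
    ("B", True, False), ("B", True, False), ("B", False, False), ("B", False, False),
    ("A", True, True), ("B", True, True),
]
rates = false_positive_rates(sample)

# Ratio of the worst to the best false positive rate across groups.
lowest = min(rates.values())
disparity = float("inf") if lowest == 0 else max(rates.values()) / lowest
needs_review = disparity > 1.25  # illustrative threshold chosen for this sketch
print(rates, disparity, needs_review)
```

A metric like this does not explain why a disparity exists; it only signals where the training data or model deserves closer examination.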

Transparency and accountability are cornerstone principles for the ethical use of AI in OSINT. As AI systems become increasingly complex, understanding their decision-making processes and ensuring accountability for their outputs are paramount. This necessity is particularly pressing in intelligence gathering, where decisions based on AI analysis can have significant implications.8 To enhance transparency, developers and operators of AI systems must provide clear explanations of how their algorithms work, the data they use, and the rationale behind their decisions. This requirement, known as “explainability,” seeks to make AI decisions understandable to humans. Explainability not only builds trust in AI systems but also facilitates the identification and correction of errors or biases in their operation. Accountability in AI-powered OSINT involves establishing clear lines of responsibility for the outcomes of AI decisions.9 This means that organizations employing AI tools must be prepared to answer for the system’s actions, including any errors, biases, or ethical breaches that occur. The following section describes the methodology used to examine these issues.

As part of this research, a multifaceted methodology was employed to capture a broad spectrum of insights from experts, practitioners, and enthusiasts in the field, ensuring a comprehensive understanding of the current state and future prospects of OSINT and AI integration. The methodology is structured around two primary data collection phases.

Phase one included a research survey targeted at a wide range of professionals. Recognizing that the complex and specialized nature of AI applications in OSINT requires in-depth, contextually relevant insights, the study purposefully sampled individuals with substantial professional experience and specialized knowledge in AI, privacy, cybersecurity, and OSINT.

Through purposeful sampling of experts in the field, the study prioritizes the quality and relevance of data over quantity, thus enhancing the study’s internal validity. Participants were identified based on their established credentials and active roles in AI, privacy, cybersecurity, and OSINT, ensuring that each contributor could provide informed perspectives. This method aligns with qualitative research best practices, where deep, nuanced understanding from domain-specific professionals can yield findings that are both insightful and directly applicable to the study’s focus. The survey was designed to capture detailed information under three themes: current practices, challenges, and the perceived future direction of these domains. The quantitative survey findings come from 51 expert respondents and are reported here as rounded percentages.

In addition to the quantitative research survey, semi-structured interviews and a research webinar were conducted to gather qualitative insights. The semi-structured interviews were held with selected survey respondents to provide a more nuanced understanding of individual experiences and expert opinions. The research webinar, featuring industry practitioners, served as a platform for discussing recent advancements, challenges, and ethical considerations in the field, enriching the research data with live exchanges and viewpoints.

Phase two used a methodology that included a thorough assessment of sample tools commonly used in performing OSINT. It helped identify key features, effectiveness, and user sentiments, contributing an additional layer of practical insights to the research findings. Together, these methodologies created a strong basis for understanding the evolving dynamics of AI in OSINT as it relates to individuals’ privacy, facilitating a comprehensive analysis of both the current state and future directions in the field. This multi-pronged approach ensured that the study captured a wide array of perspectives, making the findings relevant to both practitioners in the field and policymakers interested in the ethical and practical implications of AI in intelligence gathering.

This section presents an overview of the demographic characteristics of the 51 purposively sampled expert respondents who participated in the survey, a key component of the study. These participants brought diverse perspectives based on their expertise and experience. The survey captured various primary areas of experience, as shown in Figure A1, referenced in Appendix 1. The professional work of the expert sample spans multiple fields, and respondents could report more than one area, so the percentages below sum to more than 100 percent. A significant 74 percent of respondents identified general cybersecurity practices as their main area of focus, followed by 56 percent who emphasized privacy concerns. Half of the participants (50 percent) reported expertise in cyber forensics and intelligence investigations, while 46 percent reported specialization in OSINT. Additionally, 42 percent of respondents worked in AI. The survey also revealed that 22 percent of participants were involved in legal and ethical activities, highlighting an interest in compliance and risk management. Furthermore, 28 percent indicated involvement in other related fields, reflecting the interdisciplinary nature of the study.
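Because each respondent could select several areas, each percentage is computed against the number of respondents rather than against the total number of selections, which is why such figures can exceed 100 percent in aggregate. The minimal sketch below illustrates that arithmetic with hypothetical toy data (four invented respondents, not the study’s responses); the function name `area_percentages` is an illustrative assumption.

```python
from collections import Counter


def area_percentages(responses: list[set[str]]) -> dict[str, int]:
    """Return the rounded share of respondents who selected each area.

    Each area's count is divided by the number of respondents, not by the
    number of selections, so the shares can sum to more than 100.
    """
    n = len(responses)
    counts = Counter(area for selected in responses for area in selected)
    return {area: round(100 * c / n) for area, c in counts.items()}


# Hypothetical multi-select answers from four respondents (not study data).
toy = [
    {"cybersecurity", "privacy"},
    {"cybersecurity", "OSINT"},
    {"cybersecurity"},
    {"privacy", "AI"},
]
shares = area_percentages(toy)
print(shares["cybersecurity"], sum(shares.values()))  # 75 175
```

Here three of four respondents chose cybersecurity (75 percent) and two chose privacy (50 percent); the overlapping selections push the total to 175 percent even though only four people answered.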

The respondents’ years of experience were broadly distributed, reflecting a mix of emerging talent and seasoned experts. Specifically, 41 percent of participants had one to five years of experience, 22 percent had six to nine years, and a notable 37 percent had over 10 years, indicating a deep reservoir of knowledge contributing to the survey. The participants held a variety of titles, ranging from founders, cyber lawyers, frontline analysts, and researchers to senior managers and policy advisors, each contributing insights to the study. The distribution of years of experience is shown in Figure A2, referenced in Appendix 1.

The survey results revealed significant interest among professionals in regulating AI-integrated OSINT, an interest that OSINT practitioners had not fully recognized. This points to overlooked perspectives and highlights a growing concern within the field about the ethical and legal implications of these advanced technologies. Additionally, the survey uncovered an unexpected trend: a relatively heavier reliance on HUMINT alongside AI tools. These findings underscore the evolving landscape of intelligence practices, where traditional methods continue to play a crucial role even as AI becomes more integrated. The survey examined three distinct themes: current practices of OSINT and AI integration, ethical and legal concerns in AI-integrated OSINT, and OSINT privacy-preserving frameworks in the age of AI.

The current practices surrounding OSINT and AI integration reflect a wide engagement across various types of OSINT, with professionals utilizing sources such as the web, social media, and technical data. Despite this engagement, there remains a significant lack of awareness concerning AI-integrated OSINT tools, as 73 percent of respondents were unfamiliar with them. However, for those aware, social media platforms and public databases were the primary sources utilized. The perceived accuracy of AI-integrated OSINT is moderate, with 49 percent of respondents rating it as somewhat accurate, indicating room for improvement in the reliability of AI tools in this domain (see Figure A5).

Ethical and legal concerns are prominent in the use of AI for OSINT, particularly regarding privacy and bias. Nearly one-third of respondents expressed high concern over privacy implications, while most indicated moderate concern about biases in AI systems. A significant majority, 69 percent, support regulation to safeguard privacy and enforce ethical guidelines (see Figure A8). Transparency in the use of AI-integrated OSINT tools is also a major issue, with 63 percent of respondents advocating for full transparency to maintain ethical standards (see Figure A10). These findings underscore the need for a balanced approach that integrates both regulation and ethical considerations.

There is a clear gap in the awareness of privacy-preserving frameworks for AI-integrated OSINT, as 100 percent of respondents were unaware of such frameworks. This highlights an opportunity for further development in this area.

Confidence in the effectiveness of privacy-preserving frameworks is varied, with only a small percentage expressing high confidence. Additionally, respondents showed overwhelming support for the development of privacy-first frameworks, with 86 percent in favor (see Figure A16). The consensus also extends to the belief in the need for international agreements to regulate the use of AI in OSINT, with 88 percent agreeing on this necessity, reflecting global concern over the ethical use of AI in intelligence gathering (see Figure A17). The full survey and results can be found in Appendix 1.

To supplement the surveys, a mixed-methods approach, incorporating both quantitative and qualitative data, was employed. This provided further expert perspectives on the responsible integration of AI within Open-Source Intelligence (OSINT).

In this track of research, seven participants with professional and scholarly backgrounds in AI ethics, privacy, and OSINT applications were selected to offer diverse insights into ethical and privacy considerations surrounding AI in OSINT. Five participants engaged in a hybrid survey-interview format, beginning with a structured quantitative survey followed by open-ended qualitative interview questions to deepen the data collected. The remaining two took part in more in-depth semi-structured interviews, which allowed for follow-up questions and a richer exploration of the complex ethical issues and of each expert’s views.

All qualitative responses were coded thematically to identify recurring themes, challenges, and best practices recommended for AI-integrated OSINT. A summary of key findings from both types of interviews is presented in Table A1 referenced in Appendix 2.

A research webinar roundtable was conducted, structured around four focus groups to gather diverse insights. Each focus group comprised a carefully selected mix of participants, ensuring a balanced representation of industry experience. Table A2 in Appendix 2 summarizes the focus group discussions, analyzing expert opinions and highlighting key trends and concerns aligned with each group’s theme.

In this section, the research examined how OSINT tools manage data and how they ensure privacy and security compliance. The evaluation covered data collection practices, retention requirements, and encryption measures, as well as the data management capabilities and limitations of three critical OSINT tools: Shodan, Maltego, and SpiderFoot. Table A3 in Appendix 2 provides a detailed breakdown of the tools assessed. The tools assessment forms an integral part of step one in the OPIF.

Shodan, a search engine for internet-connected devices, retrieves banner information about devices such as routers and servers by leveraging their publicly available IP addresses. Although Shodan does not collect personal data, it exposes device vulnerabilities and can be misused. The evaluation found that Shodan can surface significant data exposures, such as MongoDB databases containing millions of credentials. Shodan does not appear to have comprehensive data retention policies beyond using cookies to store user preferences. While the tool encrypts data in transit, it lacks transparency reports or clear guidelines on third-party integrations, posing potential compliance risks.

Maltego, an advanced link analysis tool, specializes in gathering OSINT data and representing it as visual graphs that make patterns and relationships easy to identify. It aggregates data from diverse online sources via APIs, web scraping, and user-contributed transforms. Maltego claims compliance with GDPR; it does not store investigation data itself but relies on trusted third-party sources to ensure data relevance. The tool excels at integrating third-party data but presents potential privacy concerns due to the volume of sensitive information handled during investigations. Although its encryption mechanisms are robust, Maltego’s reliance on external data providers raises questions about the security and privacy risks posed by third-party integrations.

SpiderFoot automates OSINT for threat intelligence and reconnaissance by analyzing publicly available information and directly querying target systems. It uses a local web server for real-time scans, minimizing data retention risks because collected data is typically held temporarily in memory. SpiderFoot supports secure data transmission through encryption and offers strong authentication mechanisms to control access. While the tool adheres to GDPR standards, users who store collected data externally introduce retention risks. Nevertheless, SpiderFoot’s focus on automation and customization allows it to provide comprehensive intelligence for identifying vulnerabilities, making it valuable for both offensive and defensive security operations.

This section of the research report connects the findings from the methodologies above to the research objectives, focusing on the critical balance between using OSINT for investigations and protecting individual privacy as AI adoption rises. It emphasizes the ethical dilemmas and privacy issues that arise when AI-integrated OSINT tools analyze public datasets: such tools can misidentify benign activities and infringe on personal privacy, reinforcing the need for a privacy-preserving framework within AI-integrated OSINT tools, processes, and resources.

The interviews and surveys identified several core principles that are crucial to guiding responsible AI adoption in OSINT.

Foremost among these is the lawfulness of data collection, underscoring that any AI deployment must comply with existing legal frameworks and respect fundamental human rights. This includes adhering strictly to principles of data minimization, where only the necessary data is collected to mitigate potential privacy risks, and ensuring that the scope of data gathered is proportionate to the intended purpose.

Moreover, the right to informed consent is a critical component in responsible AI integration. The findings in this section emphasized the necessity for transparency in data collection practices, advocating for individuals to be comprehensively informed about what data is being collected, how it will be used, and the implications of such usage. Ethical concerns in AI-integrated OSINT are paramount, and the research asserts that prioritizing these principles will contribute to a balanced approach where technological advancement does not come at the expense of individual rights.

Both the qualitative interviews and the quantitative survey underscore the need for clear regulations and comprehensive ethical guidelines governing the use of AI in OSINT. Participants highlighted the critical need for transparency with data subjects, ensuring data accuracy, and the implementation of risk assessment frameworks to preemptively address potential ethical dilemmas. These measures are vital to maintaining the balance between leveraging AI’s capabilities and protecting individual rights, thereby fostering trust in AI-integrated OSINT applications.

Despite the potential benefits of AI in enhancing OSINT efficiency, the findings reveal a significant gap in awareness among professionals regarding AI-integrated OSINT tools, with only 28 percent of respondents familiar with such technologies. Furthermore, perceptions of the accuracy of AI-derived OSINT data are mixed, with nearly half of the respondents expressing reservations. This calls for an increased focus on educating OSINT practitioners about the privacy impact of AI technologies and on improving the technologies’ reliability. By addressing these concerns through targeted policies and education, AI integration in OSINT can not only enhance operational effectiveness but also adhere to the highest standards of privacy and ethics. This alignment with responsible AI practices is essential for the sustainable and ethical advancement of OSINT capabilities.

These findings connect directly to Article 35 of the GDPR, which bears on the objective of a privacy-preserving framework. Article 35 requires Data Protection Impact Assessments (DPIAs) where data processing is likely to result in a high risk to individuals’ rights and freedoms, emphasizing rigorous risk assessment and mitigation to protect privacy.1 This aligns with the call for comprehensive ethical guidelines and risk assessment frameworks in AI-integrated OSINT. Both stress the importance of evaluating risks before implementing data processing technologies to ensure transparency, accuracy, and regulatory compliance.

The findings reveal profound ethical and privacy challenges with AI-enabled OSINT, necessitating the immediate implementation of a comprehensive privacy-preserving framework. There are significant ethical concerns among professionals, with a notable portion highlighting the potential for AI to analyze vast amounts of data without explicit consent. This lack of consent not only raises serious ethical issues but also poses substantial risks to individual privacy, potentially leading to unauthorized data exposure and misuse. The research underscores that 31 percent of respondents perceive the impact of AI on privacy as highly significant, while 49 percent recognize a moderate impact, indicating a broad consensus on the need for stringent privacy measures (see Figure A6). With 41 percent of respondents expressing extreme concern over AI biases, it is evident that professionals are troubled by the ethical implications of inaccuracies and discriminatory outcomes in AI-driven OSINT (see Figure A7). This apprehension necessitates robust regulatory interventions to mitigate these risks and ensure ethical integrity in AI-integrated OSINT.

Moreover, the survey highlights a strong demand for regulatory oversight in AI-integrated OSINT, with 69 percent of respondents advocating for regulation (see Figure A8). This support reflects a significant preference for formal oversight to safeguard privacy and ethical standards. Additionally, the call for transparency is compelling, with 63 percent of respondents emphasizing the need for full transparency in AI-integrated OSINT tools and processes (see Figure A10). The lack of awareness regarding existing privacy-preserving frameworks, reported by 90 percent of respondents, further underscores the urgent need for educational initiatives and the development of standardized guidelines (see Figure A11). A notable 59 percent of respondents strongly support standardized guidelines for the ethical use of AI in OSINT, reflecting a broad consensus on the importance of ethical standards (see Figure A15). The critical importance of personal data control is also evident, with a significant majority valuing this as essential within privacy frameworks. This consensus highlights the necessity of implementing mechanisms that ensure user consent and data control in AI-driven OSINT operations.

The findings also indicate overwhelming support for international agreements to protect privacy in the context of AI-integrated OSINT, with 88 percent of respondents advocating for global standards (see Figure A17). This call for international cooperation is crucial in addressing the cross-border nature of data and the global implications of AI technologies. Furthermore, the high level of concern about the misuse of AI OSINT tools by governments and corporations, expressed by 63 percent of respondents, underscores the need for stringent measures to prevent ethical abuse (see Figure A18).

To address these concerns, it is imperative to develop a privacy-preserving framework that incorporates data aggregation controls, user consent mechanisms, and ethical guidelines for AI developers. The willingness among respondents to participate in initiatives promoting responsible AI use reinforces the feasibility and urgency of implementing such a framework. By prioritizing privacy and ethical standards, this framework will not only protect individual rights but also enhance the credibility and trustworthiness of AI-integrated OSINT practices.

Implementing a privacy-preserving framework within OSINT processes necessitates rigorous attention to each stage: identification, collection, processing, analysis, and reporting. In the identification phase, clear protocols must be established to ensure data collection aligns with ethical standards and respects privacy rights. During collection, stringent measures such as anonymization and data minimization should be employed to protect individuals’ identities. Processing and analysis should incorporate advanced encryption and secure handling practices to prevent unauthorized access. The reporting phase must ensure that the disseminated information is devoid of personal identifiers unless explicit consent or a lawful basis has been established.
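The collection-stage controls named above, data minimization and protecting individuals’ identities, can be sketched in a few lines. This is a hypothetical illustration, not an OPIF requirement: it assumes records arrive as Python dicts, that the investigation’s purpose is expressed as a field allowlist, and that direct identifiers are replaced with keyed HMAC-SHA256 tokens. Keyed hashing is pseudonymization rather than anonymization, because anyone holding the key can link tokens back to the same input.

```python
import hashlib
import hmac

# Hypothetical purpose-bound allowlist: only these fields survive collection.
ALLOWED_FIELDS = {"username", "post_text", "timestamp"}
# Hypothetical direct identifiers that are tokenized before storage.
IDENTIFIER_FIELDS = {"username"}


def pseudonymize(value: str, key: bytes) -> str:
    """Replace an identifier with a stable keyed token (HMAC-SHA256, truncated)."""
    digest = hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return digest[:16]


def minimize(record: dict, key: bytes) -> dict:
    """Keep only allowlisted fields, then tokenize any direct identifiers."""
    kept = {name: value for name, value in record.items() if name in ALLOWED_FIELDS}
    for name in IDENTIFIER_FIELDS:
        if name in kept:
            kept[name] = pseudonymize(str(kept[name]), key)
    return kept


raw = {
    "username": "example_user",
    "email": "user@example.com",      # not needed for the purpose: dropped
    "home_address": "1 Example St",   # not needed for the purpose: dropped
    "post_text": "Public post content",
    "timestamp": "2024-03-01T12:00:00Z",
}
key = b"example-key-store-separately"  # placeholder; use managed secrets in practice
clean = minimize(raw, key)
print(sorted(clean))  # ['post_text', 'timestamp', 'username']
```

Because the token is stable for a given key, analysts can still correlate activity by the same account without handling the raw username; rotating or destroying the key severs that link.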

Considering the diverse categories of OSINT, as reflected in Appendix 1, the implementation of privacy-preserving measures must be tailored to the specific characteristics of each type. According to the survey, 65 percent and 61 percent of professionals engage in web and social media OSINT, respectively, where an appropriate lawful basis, robust consent mechanisms, and transparency in data use are crucial. Technical and document OSINT, used by 45 percent and 35 percent of survey respondents, respectively, require heightened security protocols to protect sensitive information. HUMINT and Dark Web OSINT, with their inherent risks, necessitate stringent ethical guidelines and secure communication channels. For image and video OSINT (used by 18 percent of survey respondents) and geospatial OSINT (10 percent), data anonymization and secure storage solutions are imperative (see Figure A3).
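One way to operationalize this category-specific tailoring is a lookup from OSINT type to the controls named in the paragraph above. The sketch below is a hypothetical illustration: the category keys, control labels, and the `required_controls` helper are assumptions that paraphrase the text, not part of a published OPIF schema. An engagement spanning several OSINT types receives the union of their controls, without duplicates.

```python
# Hypothetical mapping that paraphrases the category-specific measures above.
CONTROLS_BY_CATEGORY: dict[str, tuple[str, ...]] = {
    "web": ("lawful basis", "consent mechanism", "transparency in data use"),
    "social_media": ("lawful basis", "consent mechanism", "transparency in data use"),
    "technical": ("heightened security protocols",),
    "document": ("heightened security protocols",),
    "humint": ("stringent ethical guidelines", "secure communication channels"),
    "dark_web": ("stringent ethical guidelines", "secure communication channels"),
    "image_video": ("data anonymization", "secure storage"),
    "geospatial": ("data anonymization", "secure storage"),
}


def required_controls(categories: list[str]) -> list[str]:
    """Return the de-duplicated controls for an engagement spanning several types."""
    controls: list[str] = []
    for category in categories:
        if category not in CONTROLS_BY_CATEGORY:
            raise ValueError(f"unknown OSINT category: {category}")
        for control in CONTROLS_BY_CATEGORY[category]:
            if control not in controls:  # keep first-seen order, skip repeats
                controls.append(control)
    return controls


print(required_controls(["social_media", "geospatial"]))
```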

The Three-Step Privacy Baseline is designed for AI-integrated OSINT frameworks to ensure the preservation of privacy throughout its processes. It details specific measures across Data Collection Processes, Data Processing Mechanisms, and Data Retention Requirements. This baseline ensures data is collected minimally, processed responsibly, and retained securely, aligning with both National Institute of Standards and Technology (NIST) and International Organization for Standardization (ISO) guidelines.
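As a hedged illustration of the baseline’s third step (secure, bounded retention), the sketch below partitions stored items into those to keep and those due for deletion. It assumes, hypothetically, that each item carries a timezone-aware collection timestamp and that the organization has set a single retention period; appropriate periods depend on purpose and applicable law, and the 30-day value is only an example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class StoredItem:
    item_id: str
    collected_at: datetime  # timezone-aware UTC timestamp


def split_by_retention(items: list[StoredItem], retention: timedelta, now: datetime):
    """Partition items into (keep, purge); items older than the period are purged."""
    keep: list[StoredItem] = []
    purge: list[StoredItem] = []
    for item in items:
        age = now - item.collected_at
        # An item exactly at the retention limit is still kept.
        (purge if age > retention else keep).append(item)
    return keep, purge


now = datetime(2024, 6, 30, tzinfo=timezone.utc)
items = [
    StoredItem("a", datetime(2024, 6, 20, tzinfo=timezone.utc)),  # 10 days old
    StoredItem("b", datetime(2024, 4, 1, tzinfo=timezone.utc)),   # 90 days old
]
keep, purge = split_by_retention(items, timedelta(days=30), now)
print([i.item_id for i in keep], [i.item_id for i in purge])  # ['a'] ['b']
```

In practice the purge list would feed a secure deletion routine and an audit log entry, so the organization can demonstrate that retention limits were enforced.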

The Three-Step Privacy Baseline establishes a robust framework for privacy preservation in AI-integrated OSINT, addressing data collection, processing, and retention with strict guidelines. Beyond ensuring compliance and security, this foundation sets the stage for seamless integration into broader OSINT operations.

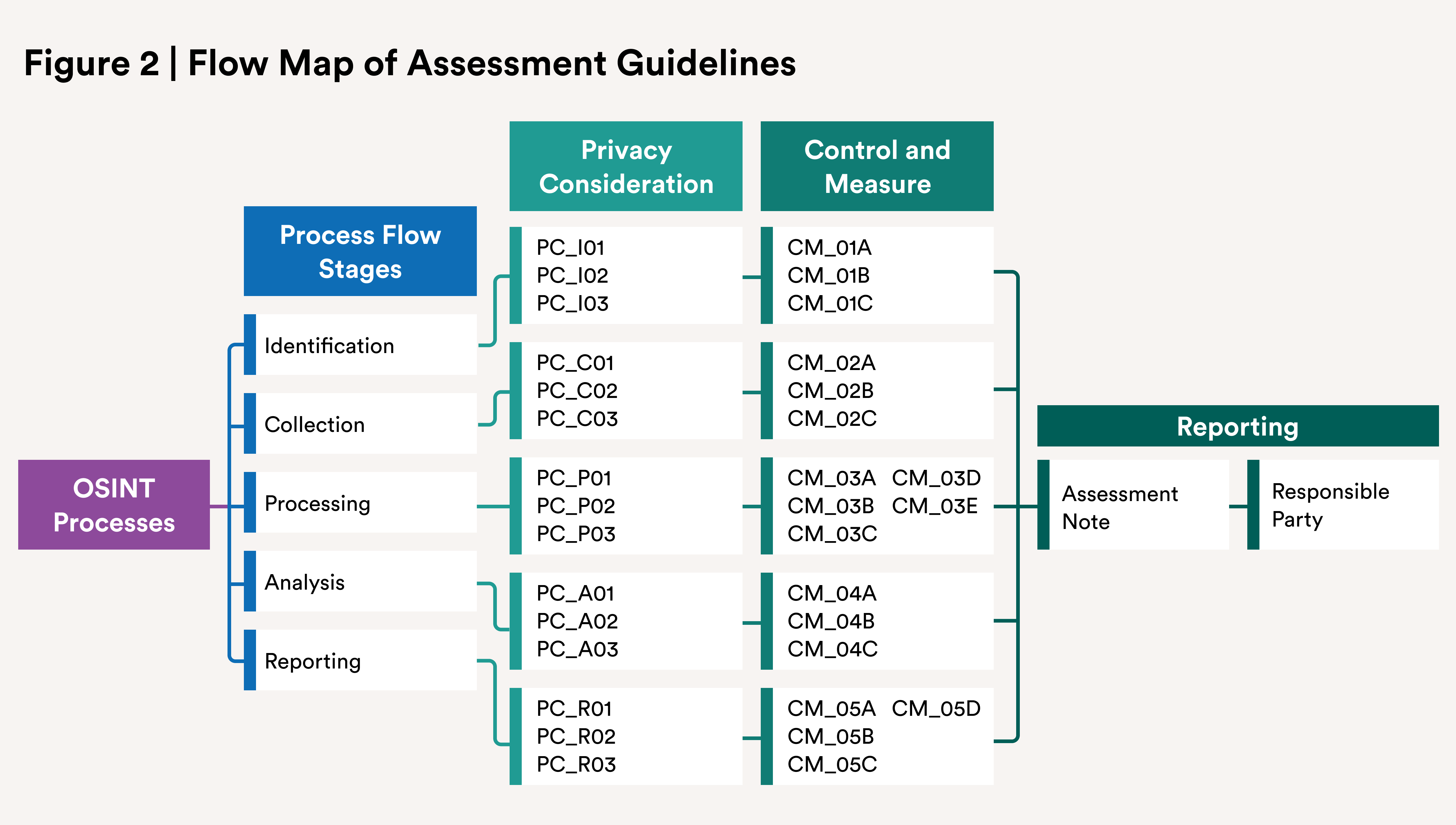

In the second phase of the framework, an assessment chart provides a structured approach to evaluating and promoting privacy compliance throughout the OSINT process. It aligns with the GDPR DPIA and Privacy by Design principles, as illustrated in the image below. A detailed assessment guideline accompanies each OSINT process stage to ensure that privacy risks are identified, evaluated, and mitigated at every step of the workflow. Each stage must be evaluated against the corresponding privacy considerations, controls, and mitigations, and the assessment must be documented in the assessment notes, including specific findings, observations, and recommendations for each stage.
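The per-stage documentation described above could be captured in a simple record type. The sketch below is an illustrative assumption rather than the OPIF’s prescribed format: the five stage names follow the OSINT process stages named in this report (identification, collection, processing, analysis, and reporting), while the field names, the example entries, and the completeness check are hypothetical.

```python
from dataclasses import dataclass, field

# Stage order follows the OSINT process described in this report.
STAGES = ("identification", "collection", "processing", "analysis", "reporting")


@dataclass
class StageAssessment:
    stage: str
    privacy_considerations: list[str] = field(default_factory=list)
    controls: list[str] = field(default_factory=list)
    mitigations: list[str] = field(default_factory=list)
    findings: str = ""
    observations: str = ""
    recommendations: str = ""

    def is_documented(self) -> bool:
        """Treat a stage as assessed only when every part has been filled in."""
        return all([
            self.privacy_considerations, self.controls, self.mitigations,
            self.findings, self.observations, self.recommendations,
        ])


def undocumented_stages(assessments: dict[str, StageAssessment]) -> list[str]:
    """Return stages that are missing or incomplete, in process order."""
    return [s for s in STAGES
            if s not in assessments or not assessments[s].is_documented()]


# Hypothetical example entry for the collection stage.
collection = StageAssessment(
    stage="collection",
    privacy_considerations=["PII in public social media posts"],
    controls=["field allowlist"],
    mitigations=["pseudonymize usernames"],
    findings="Email addresses were collected but not needed.",
    observations="Collection configuration predates the current purpose.",
    recommendations="Remove email from the collection allowlist.",
)
print(undocumented_stages({"collection": collection}))
```

Running the check before an assessment is signed off flags, in workflow order, any stage whose considerations, controls, mitigations, or notes are still blank.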

In this section of the OPIF, the framework combines the NIST Risk Management Framework (RMF) and ISO 31000 to develop risk metrics and remediation for a privacy-preserving, AI-integrated OSINT system, ensuring actionable intelligence while safeguarding data privacy across all OSINT process stages. To assess the impact of risks in AI-integrated OSINT, the framework uses a risk-scoring system based on the likelihood of occurrence and the severity of impact. Likelihood and impact are each rated on a scale of one (1) to five (5), where one represents the minimal level and five the highest. The final risk score is the product of the likelihood and impact ratings, so it ranges from 1 to 25. Table 4 breaks down the risks, with associated metrics and calculated scores.

The risk scores in Table 4 are weighted to reflect the greater importance of impact relative to likelihood, recognizing that even less likely events can have disproportionately severe consequences when they occur. This scoring helps prioritize the risks that need the most immediate attention and resources for mitigation. The highest-scoring risks, such as the collection of Personally Identifiable Information (PII) without consent, should be addressed first with robust remediation measures.
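The scoring rule can be expressed in a few lines. This is a minimal sketch assuming hypothetical risk names and ratings (not the values in Table 4): each rating is validated to lie between 1 and 5, the score is likelihood multiplied by impact, and ties in score are broken by impact. The tie-break is one simple way, chosen here as an assumption, to let impact weigh more heavily than likelihood when ordering risks; the report’s exact weighting may differ.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    name: str
    likelihood: int  # 1 (minimal) to 5 (highest)
    impact: int      # 1 (minimal) to 5 (highest)

    def __post_init__(self) -> None:
        # Reject ratings outside the 1-5 scale used by the framework.
        for label, value in (("likelihood", self.likelihood), ("impact", self.impact)):
            if not 1 <= value <= 5:
                raise ValueError(f"{label} must be between 1 and 5, got {value}")

    @property
    def score(self) -> int:
        """Final risk score: likelihood multiplied by impact (range 1 to 25)."""
        return self.likelihood * self.impact


def prioritize(risks: list[Risk]) -> list[Risk]:
    """Order risks by score, breaking ties by impact, highest first."""
    return sorted(risks, key=lambda r: (r.score, r.impact), reverse=True)


# Hypothetical ratings for illustration only.
risks = [
    Risk("Unverified source data in analysis", likelihood=4, impact=3),
    Risk("Re-identification through aggregation", likelihood=3, impact=4),
    Risk("PII collected without consent", likelihood=3, impact=5),
]
for risk in prioritize(risks):
    print(risk.score, risk.name)
```

With these example ratings, the consent risk scores 15 and ranks first; the two risks scoring 12 are then ordered by impact, so the re-identification risk (impact 4) precedes the unverified-data risk (impact 3).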

This guide outlines specific administrative and technical remediation measures designed to enhance the security and integrity of AI-integrated OSINT processes. It addresses challenges ranging from data validation and privacy protection to bias mitigation and secure reporting, and ensures comprehensive risk management in line with best practices.

OPIF is designed to be dynamic and collaborative. It will be developed, used, and maintained as an open-source resource, enabling continuous refinement and adaptation by the global intelligence community. By embracing open-source principles, OPIF ensures transparency, encourages innovation, and facilitates widespread adoption as a self-regulatory document. It is not just a framework; it is a living document. As such, its success and growth depend on active contributions from industry experts, privacy advocates, and OSINT practitioners.

Looking to the future, OPIF will be accessible through a dedicated web portal, providing an easy-to-navigate platform for users. The framework will include features such as automation tools for self-assessment and compliance reporting, simplifying the process by which organizations evaluate their adherence to privacy standards and regulatory requirements. Through these initiatives, OPIF aims to become an integral part of OSINT operations and a benchmark for privacy and data protection standards worldwide.

This study underscores the critical relevance of understanding the evolution and impact of AI-integrated OSINT within the context of privacy preservation. By analyzing real-world cases and synthesizing the ethical and legal challenges posed by AI’s advancements, this work has provided a comprehensive framework—the OSINT Privacy Impact Framework, or OPIF—to aid the development of privacy-preserving practices in AI-powered OSINT.

OPIF is a four-part tool developed to manage privacy risks in AI-integrated OSINT processes. OPIF starts with a Three-Step Privacy Baseline, which is foundational to ensuring that data is handled with the utmost care throughout its lifecycle. This baseline, informed by NIST and ISO standards, mandates minimal data collection, responsible processing, and secure retention. Such measures are pivotal not only for aligning with regulatory frameworks like GDPR, CCPA, and HIPAA but also for fostering trust and ethical standards in AI applications within OSINT. The second part of the framework, the OSINT Process Flow Impact Assessment, leverages the GDPR’s Data Protection Impact Assessment and the Privacy by Design approach to scrutinize each stage of the OSINT cycle. This impact assessment is crucial for preemptively identifying privacy risks and establishing guidelines that ensure these risks are effectively managed throughout the data lifecycle. By outlining how each stage should be handled, the framework helps ensure that all personnel involved are well informed of their responsibilities toward privacy preservation. At the heart of the OPIF lies a Risk Metric Score system, which integrates the risk management principles of NIST RMF and ISO 31000. This scoring system evaluates the potential privacy risks of AI-integrated OSINT activities by assessing both the likelihood of occurrence and the severity of impact, thereby quantifying risks and guiding the prioritization of mitigation efforts. The final component of the OPIF is the Risk Guide, which provides comprehensive remediation strategies that anyone using the framework can employ. The guide outlines both administrative and technical measures that can be adopted to address identified risks. From data validation and bias mitigation to secure reporting and privacy protection, the guide covers a broad range of actions designed to enhance the integrity and security of AI-integrated OSINT processes. This ensures that the framework is not merely reactive but proactive in strengthening the overall data handling and intelligence production processes.

OPIF will serve as a vital tool for future efforts in balancing the power of AI-driven intelligence gathering with the need to protect individual privacy and uphold ethical standards. An essential guide for navigating the complex landscape of modern intelligence practices and ensuring that the intelligence community remains aligned with ethical standards and public trust, OPIF will foster a regulatory framework that evolves as swiftly as the technologies it seeks to govern.

The research survey was administered online from March 2024 to June 2024. The survey findings in this report are drawn from a purposive sample of 51 experts with requisite experience in OSINT, artificial intelligence, cybersecurity, and data privacy (referred to as the “expert sample” throughout this report). Participants were selected for their specialized knowledge, with each combining experience in more than one of these areas, ensuring that the insights captured were both accurate and contextually relevant to the study’s specific aims. This approach, typical in exploratory research on complex topics, prioritizes expertise over a broad sample, thereby providing depth and reliability in the analysis. Targeting seasoned professionals through expert sampling yields nuanced, actionable data that supports the study’s objectives and contributes to the field of practice. The aggregate results are illustrated in this section.

Survey responses indicate a wide range of experience across different kinds of OSINT engagements.

The research shows that nearly 28 percent of the expert sample used OSINT tools for information gathering on a daily basis. Combined with the 33 percent who used them on a weekly or monthly basis, this reinforces that OSINT tools are central to practitioners’ work.

The survey sought to understand how the expert sample perceived the accuracy of information obtained through AI-integrated OSINT tools. Nearly half (49 percent) perceive the tools as “somewhat accurate,” while roughly 16 percent perceive these tools as “very accurate.”

The expert sample exhibited varied perceptions about the impact of AI-integrated OSINT on individuals’ privacy. Overall, 31 percent perceived it as “high” and nearly half (49 percent) perceived it as having “some impact,” indicating concerns about AI’s effects on privacy practices.

Concerns About Potential Bias

The expert sample survey indicates an awareness and concern about biases in AI systems used for OSINT. Notably, none of the respondents expressed having “no concern,” and 41 percent were “extremely concerned.” Both findings underscored an apprehension about the potential inaccuracies and ethical implications.

Nearly 69 percent of the expert sample advocate regulating AI-integrated OSINT. In this survey, regulation means establishing legal frameworks to protect individual privacy, enforcing ethical guidelines, and implementing technological safeguards to ensure the responsible and secure use of AI in OSINT. Respondents expressed a preference for oversight, highlighting the need for frameworks that protect the individual right to privacy through data minimization, transparency, and accountability.

The survey reflects a consensus in favor of regulation, but respondents diverge on how much regulation of AI-integrated OSINT tools is needed to safeguard privacy. Nearly 53 percent favor moderate regulation as a balanced approach.

The expert sample was asked about the need for transparency requirements for AI-integrated OSINT tools, processes, and sources. Roughly 63 percent advocate full transparency in the use of AI in OSINT, emphasizing the need for ethical standards grounded in clear and open practices.

The survey also gauged awareness of existing OSINT privacy frameworks: 90 percent of respondents reported being unaware of any. This points to a gap in knowledge about, or dissemination of, such frameworks and indicates a potential area for educational efforts to raise awareness of available OSINT privacy frameworks within the professional community.

Responses also suggested that privacy-preserving frameworks designed specifically for AI-integrated OSINT tools, processes, and resources are either lacking or not widely visible, indicating that research, development, and education are needed to ensure privacy considerations are met in the deployment of AI-integrated OSINT technologies.

The responses from the expert sample indicate varied levels of confidence in the effectiveness of a privacy-preserving framework: about 6 percent were "very confident," 22 percent were "moderately confident," 33 percent were "unsure," and 28 percent were "not very confident." This spread of confidence levels reflects a degree of skepticism and uncertainty about the ability of these frameworks to address privacy concerns effectively.

Respondents described key features of the privacy-preserving frameworks they named for mitigating risks associated with AI-integrated OSINT. Different respondents mentioned data aggregation, user consent mechanisms, and ethical guidelines for AI developers, reflecting their understanding of what constitutes effective privacy-preserving measures in AI OSINT frameworks.

Respondents regard control over personal data in AI-integrated OSINT tools as an important element of privacy frameworks, with 29 percent rating it "extremely important," 38 percent "very important," and 21 percent "moderately important."

A large majority of the expert sample (90 percent) supports standardized guidelines: 59 percent "strongly agree" and 31 percent "agree" with the need for regulatory measures governing the ethical use of AI OSINT tools.

The expert sample favors privacy-first approaches: 86 percent support the development of frameworks that prioritize privacy protection over data collection and analysis.

Most of the expert sample (88 percent) believe there should be international agreements or standards to protect individual privacy rights in the context of AI-integrated OSINT, recognizing a need for international cooperation in regulating privacy in AI-integrated OSINT.

The survey identified a high level of concern about potential misuse of AI-integrated OSINT tools by governments or corporations, with 63 percent "extremely concerned" and 33 percent "moderately concerned," indicating apprehension about these technologies' ethical implications and potential for abuse.

When asked in an open-ended question what measures could enhance transparency and accountability in AI OSINT tools, respondents suggested improved regulation and oversight, stronger user consent mechanisms, the development of ethical guidelines, and educational efforts. The variety of responses underscores the need for a multifaceted approach to addressing transparency and accountability concerns in the use of AI-integrated OSINT.

About 88 percent of the expert sample are willing to engage in initiatives that promote the responsible, privacy-preserving use of AI-integrated OSINT tools and to contribute to shaping better practices in the field of OSINT.

The tables in Appendix 2 present a detailed synthesis of data collected through the research methods used in this study. Table A1 offers key insights from the semi-structured interviews, capturing individual perspectives and nuanced themes that emerged during these discussions. Table A2 summarizes findings from the focus groups held during the research webinar, highlighting collective viewpoints and shared experiences relevant to the research objectives. Finally, Table A3 provides results from the tools assessment. Together, these tables contextualize and support the study's findings.

This section details the semi-structured interview protocol used to gather in-depth insights from OSINT, AI, cybersecurity, and privacy experts. Open-ended questions focused on current practices, privacy challenges, ethical considerations, and future developments. The flexible structure allowed for tailored discussions, enhancing context-specific data collection on AI-integrated OSINT applications.

This interview is part of a New America #ShareTheMicInCyber research project examining the impact of AI-integrated OSINT on individual privacy.

Your privacy is of utmost importance to us. All data collected during this interview process will be used exclusively for research purposes and handled with strict confidentiality. Your responses will shape our understanding of AI-integrated OSINT and inform future processes.

Open-source intelligence (OSINT) involves gathering information from publicly available sources to gain insights and make informed decisions. It is widely used in industries including cybersecurity, law enforcement, and competitive intelligence for threat analysis, reconnaissance, and trend monitoring. Recently, OSINT has evolved notably through the integration of artificial intelligence (AI), which offers enhanced capabilities in data analysis, pattern recognition, and predictive analytics. As the landscape shifts toward AI-integrated OSINT, industry professionals' opinions are pivotal to understanding its impact on individual privacy and to shaping ethical frameworks.

This interview aims to gain deeper insights into the impact of AI-integrated OSINT on individual privacy, focusing on current practices, ethical concerns, and the need for a privacy-preserving framework in the age of AI. Your perspectives are invaluable for understanding the evolving dynamics of information collection and analysis in the context of OSINT and AI technologies.

The interview will last approximately 15–30 minutes.

Francisca Afua Opoku-Boateng, Ph.D.

New America #ShareTheMicInCyber | Fellow

opoku-boateng@newamerica.org