Tackling Misleading Advertising

As demonstrated during the 2016 presidential election, online advertising can be used to spread misleading information and foment social divides.1 We have written extensively about how platforms’ ad-targeting tools allow advertisers to precisely target users based on sensitive characteristics, political affiliation, and personal interests, as well as how advertising delivery algorithms can generate discriminatory and harmful results.2

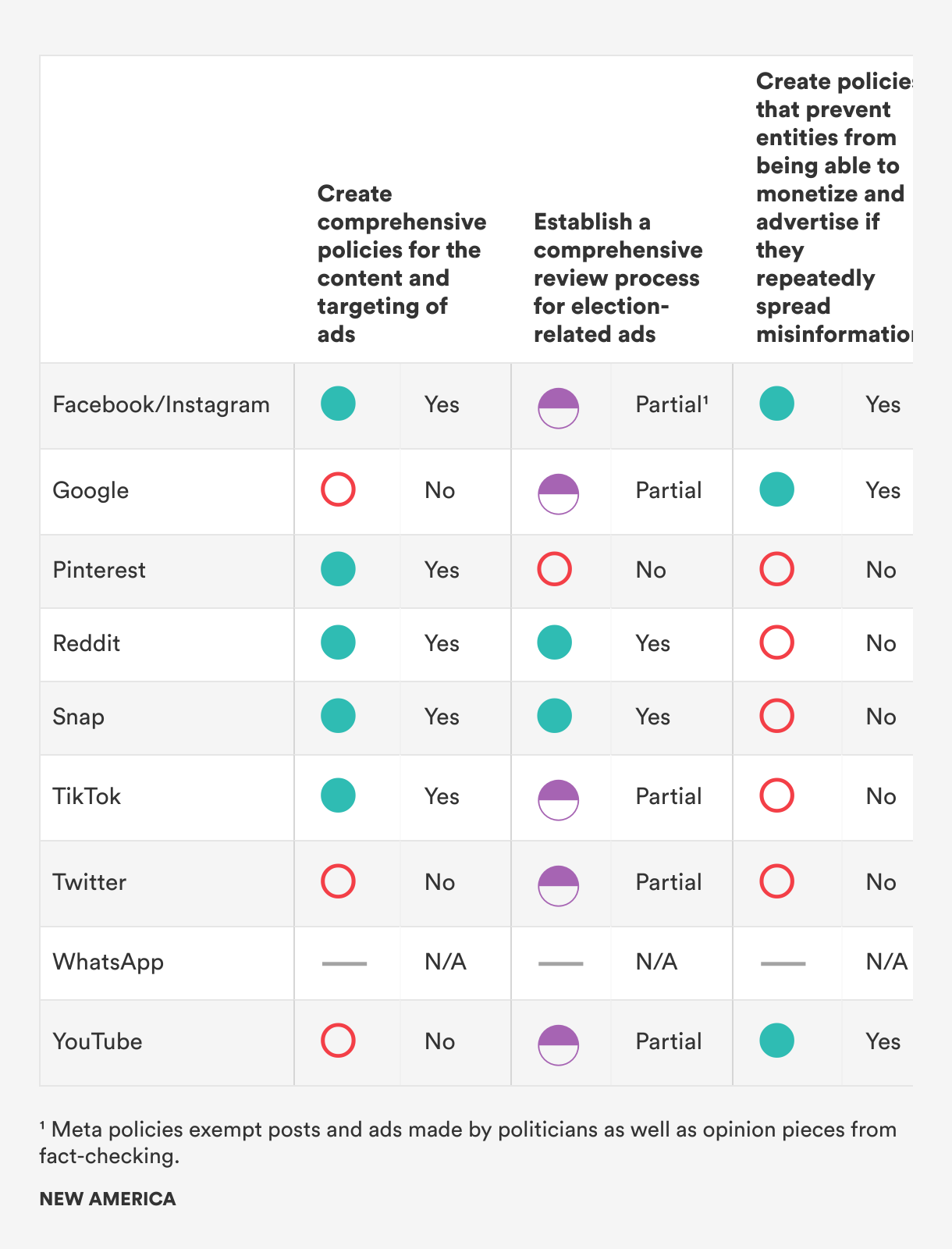

Internet platforms have adopted a variety of approaches to tackling misleading election information in advertisements. Some companies have clear policies on misinformation in advertising, which can help prevent the spread of election misinformation and disinformation. Other companies, including TikTok and Twitter, have banned political advertisements altogether as a way to avoid election misinformation.3 While bans may help reduce election misinformation and disinformation in advertising, they are not a foolproof solution, because it is often very difficult to draw clear lines around what counts as political content. TikTok, for example, notes that advertisements must not “reference, promote, or oppose a candidate for public office, current or former political leader, political party, or political organization. They must not contain content that advocates a stance (for or against) on a local, state, or federal issue of public importance in order to influence a political outcome.”4 This is a very specific definition of political advertising, but it does not account for a broad range of social issues that are often highly politicized, including climate change and immigration. Facebook, on the other hand, groups ads related to social issues, elections, and politics into one broad category, noting that these touch on topics including candidates for public office, political action committees, ongoing elections, referendums, or ballot initiatives, and social issues such as civil and social rights, education, guns, immigration, and environmental politics.5

Because it is difficult to draw clear lines around political content, we expect companies to have policies related to misinformation and disinformation in advertising, regardless of whether they ban political ads. If a platform has an advertising policy category that could be applied to election misinformation and disinformation, we gave it full credit.

We did not give Google or YouTube credit for this category because their advertising policies are vague. For example, their policy notes that the companies support “responsible political advertising.”6 Given that Google operates the largest digital advertising platform and that a wealth of evidence demonstrates the relationship between online advertising tools and misleading information, the companies should have stronger policies against misleading election information. We did not evaluate WhatsApp against the recommendations in this chart because WhatsApp does not allow any advertisements on its services.

While many platforms have policies related to misinformation and disinformation in advertising, fewer have comprehensive processes for reviewing and fact-checking advertisements. Only two companies, Reddit and Snap, have both. Platforms that lack either one did not receive full credit. Without a comprehensive review and fact-checking process, companies are likely to enforce their advertising policies inconsistently, allowing misleading information to slip through the cracks, including on services that have banned political ads.7 Currently, few platforms discuss how much their advertising review processes rely on automated tools compared to human reviewers. This is an area where we need significantly more transparency, both to ensure that automated tools are being trained and deployed effectively and to ensure that humans are kept in the loop in situations where confidence in automated decision-making is low.8

Lastly, only three platforms have policies in place that prevent repeat spreaders of disinformation from monetizing their content. This is a major concern. Most internet platforms rely on a targeted advertising business model, which gives them a strong incentive to increase user engagement and time spent on their services, because more engagement lets them deliver more ads to users. Because of this structure, platforms often fail to remove harmful or misleading content, which is often more engaging. These business models also incentivize some of the largest spreaders of misleading information to generate more such content.9 This gap in how platforms are addressing election misinformation and disinformation must be filled.

Citations

- Issie Lapowsky, “How Russian Facebook Ads Divided and Targeted US Voters Before the 2016 Election,” Wired, April 16, 2018, source. Scott Shane, “These Are the Ads Russia Bought on Facebook in 2016,” New York Times, November 1, 2017, source.

- Spandana Singh, Special Delivery (Washington, DC: New America, 2020), source. Singh and Blase, Protecting the Vote, source.

- “TikTok Advertising Policies,” TikTok Business Help Center, source. “Political Content,” Ads Help Center, source.

- “TikTok Advertising Policies,” TikTok Business Help Center, source.

- “About Ads About Social Issues, Elections or Politics,” Meta Business Help Center, source. “About Social Issues,” Meta Business Help Center, source.

- “Google Ads Policies,” Advertising Policies Help, source.

- “These Are ‘Not’ Political Ads: How Partisan Influencers Are Evading TikTok’s Weak Political Ad Policies” (San Francisco, CA: Mozilla Foundation, 2021), source. Bill Chappell, “Researchers Explain Why They Believe Facebook Mishandles Political Ads,” NPR, December 9, 2021, source.

- Singh, Special Delivery, source.

- Singh, Special Delivery, source. OTI et al., Trained for Deception, source.