Sharing Authoritative Information and Promoting Informed User Decision-Making

Users often turn to internet platforms for information and discussion about candidates, electoral processes, and more. As a result, many platforms have instituted efforts to surface and promote reliable information and to ensure users can make informed decisions about voting. Ahead of the 2020 presidential election, many internet platforms partnered with fact-checking entities to promote, verify, or refute information circulating on their services. Based on our findings, platforms’ fact-checking efforts do not appear to have changed significantly since then.

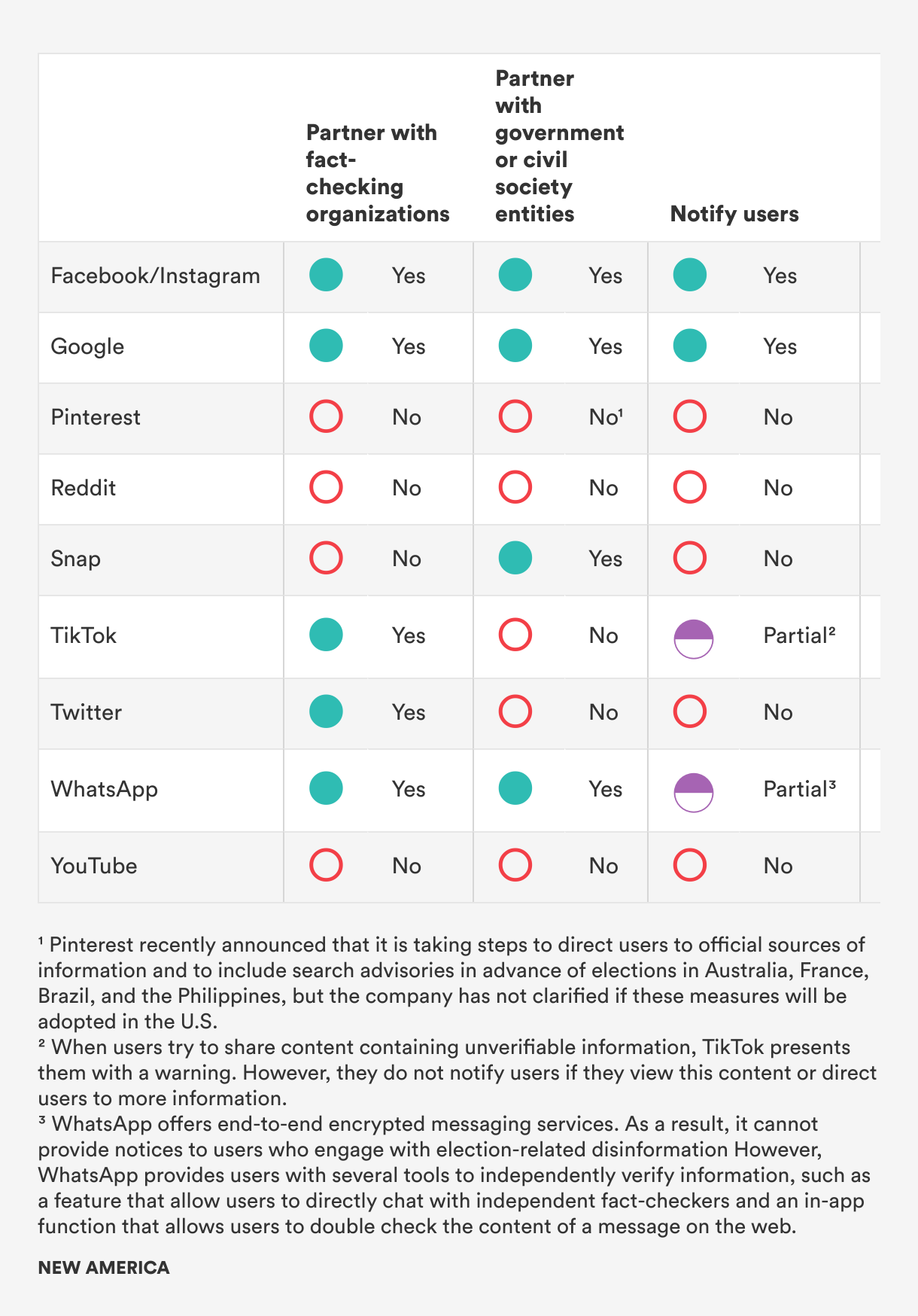

Platforms such as Facebook/Instagram and TikTok that had partnerships with fact-checking organizations in 2020 continue to do so, while those that did not have not initiated new partnerships. The only exception is Twitter, which formed a partnership with the Associated Press in August 2021 to surface reliable information in Twitter Moments, Trends, and other surfaces on the platform.[1] Additionally, in January 2021, Twitter introduced Birdwatch, which allows Twitter users to add “notes” to tweets in order to flag misleading information and add context. Twitter has partnered with reputable fact-checking entities to evaluate the nature of the tweets. However, most of the fact-checking is done by Twitter users, raising concerns about the credibility of the fact-checks. The Birdwatch program is also still in its pilot stage and is therefore not operating at full scale.[2] It does, however, offer a unique perspective on how platforms can adopt a decentralized approach to managing misleading information on their services.

Prior to the 2020 presidential election, several platforms partnered with reputable government and civil society entities to promote, verify, or refute organic content circulating on their services. For many platforms, it is unclear whether these partnerships will continue into the midterm election season.

When evaluating how platforms notify users who engage with misleading election-related content and/or direct them to authoritative sources of information, we wanted to account for the fact that platforms may use different mechanisms to notify users that they are engaging with misleading or unverified content. For example, TikTok presents a warning label to users who attempt to share unverifiable content.[3] Similarly, WhatsApp notifies users that a piece of content has been shared several times. However, WhatsApp cannot comment on the details of the content itself, because it offers end-to-end encrypted messaging services.[4] We awarded partial credit to platforms that either notify users or direct them to authoritative sources of information, but not both.

Very few platforms notify users if they have engaged with or are engaging with content that is misleading or unverified. Additionally, very few platforms connect users with authoritative information on elections as they navigate their services. Only three platforms—Facebook, Google, and Instagram—do both. Given that users often come to internet platforms to access information, this is a critical gap in platforms’ efforts to combat misleading information online.

OTI and numerous other organizations have written extensively about how internet platforms’ algorithmic curation tools can amplify harmful content, including misinformation and disinformation.[5] In the run-up to the 2020 election, some internet platforms, such as Twitter, instituted changes to their algorithmic curation and content recommendation systems to prevent the amplification of misleading content.[6] At that time, we pushed platforms to conduct regular impact assessments of their algorithmic curation tools to ensure they were not directing users to or surfacing misleading content.[7] However, we were unable to find any public reporting indicating that platforms adopted this recommendation. Some platforms conduct impact assessments internally, but unless they publicly report on these assessments, there is no mechanism to evaluate their processes and hold them accountable for their policies and procedures.

One additional recommendation we evaluated platforms against is “providing vetted researchers with access to tools and datasets that could enable them to better evaluate company efforts to combat election-related misinformation and disinformation.” We believe it is critical that independent researchers have access to platform data, as independent evaluation helps hold platforms accountable. However, we were unable to find reliable information on this point for many platforms and, as a result, omitted this recommendation from the scorecard.

Citations

1. “AP Expands Access to Factual Information with New Twitter Collaboration,” Associated Press, August 2, 2021, source.

2. Keith Coleman, “Introducing Birdwatch, a Community-Based Approach to Misinformation,” Twitter Blog, January 25, 2021, source.

3. K. Bell, “TikTok Adds Warnings to Videos with 'Unverified' Information,” Engadget, February 3, 2021, source.

4. “About WhatsApp and elections,” WhatsApp Help Center, source.

5. Spandana Singh, Holding Platforms Accountable: Online Speech in the Age of Algorithms (Washington, DC: New America, 2019), source; OTI, Anti-Defamation League, Avaaz, Decode Democracy, and Mozilla, Trained for Deception: How Artificial Intelligence Fuels Online Disinformation (Washington, DC: New America, 2021), source; Nathalie Maréchal, Rebecca MacKinnon, and Jessica Dheere, Getting to the Source of Infodemics: It’s the Business Model (Washington, DC: New America, 2020), source.

6. Kate Conger, “Twitter Will Turn Off Some Features to Fight Election Misinformation,” New York Times, October 9, 2020, source.

7. Singh and Blase, Protecting the Vote, source.