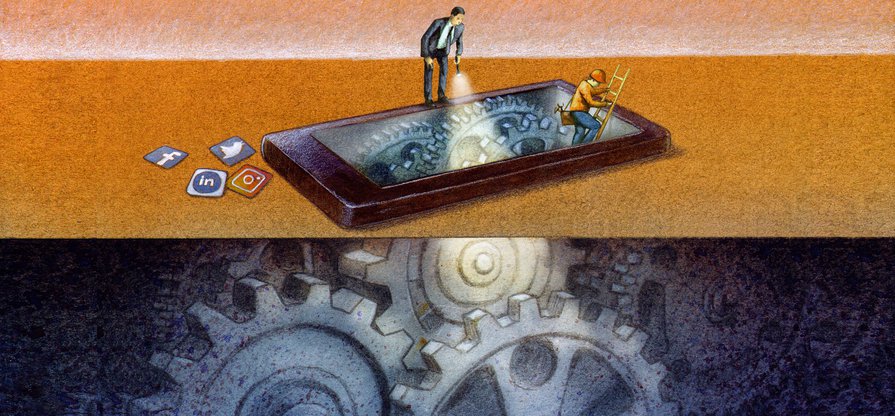

Algorithmic Transparency: Peeking Into the Black Box

While we know that algorithms are often the underlying cause of virality, we don’t know much more—corporate norms fiercely protect these technologies as private property, leaving them insulated from public scrutiny, despite their immeasurable impact on public life.

Since early 2019, Ranking Digital Rights (RDR) has been researching the impact of internet platforms’ use of algorithmic systems, including those used for targeted advertising, and how companies should govern these systems.1 With some exceptions, we found that companies largely failed to disclose sufficient information about these processes, leaving us all in the dark about the forces that shape our information environments. Facebook, Google, and Twitter do not hide the fact that they use algorithms to shape content, but they are less forthcoming about how the algorithms actually work, what factors influence them, and how users can customize their own experiences.2

U.S. tech companies have also avoided publicly committing to upholding international human rights standards for how they develop and use algorithmic systems. Google, Facebook, and other tech companies are instead leading the push for ethical or responsible artificial intelligence as a way of steering the discussion away from regulation.3 These initiatives lack established, agreed-upon principles, and are neither legally binding nor enforceable—in contrast to international human rights doctrine, which offers a robust legal framework to guide the development and use of these technologies.

Grounding the development and use of algorithmic systems in human rights norms is especially important because tech platforms have a long record of launching new products without considering the impact on human rights.4 Facebook, Google, and Twitter do not disclose any evidence that they conduct human rights due diligence on their use of algorithmic systems or on their use of personal information to develop and train them. Yet researchers and journalists have found strong evidence that algorithmic content-shaping systems that are optimized for user engagement prioritize controversial and inflammatory content.5 This can help foster online communities centered around specific ideologies and conspiracy theories, whose members further radicalize each other and may even collaborate on online harassment campaigns and real-world attacks.6

One example is YouTube’s video recommendation system, which has come under heavy scrutiny for its tendency to promote content that is extreme, shocking, or otherwise hard to look away from, even when a user starts out by searching for information on relatively innocuous topics. When she looked into the issue ahead of the 2018 midterm elections, University of North Carolina sociologist Zeynep Tufekci wrote, “What we are witnessing is the computational exploitation of a natural human desire: to look ‘behind the curtain,’ to dig deeper into something that engages us. … YouTube leads viewers down a rabbit hole of extremism, while Google racks up the ad sales.”7

Tufekci’s observations have been backed up by the work of researchers like Becca Lewis, Jonas Kaiser, and Yasodara Cordova. The latter two, both affiliates of Harvard’s Berkman Klein Center for Internet and Society, found that when users searched for videos of children’s athletic events, YouTube often recommended sexually themed videos featuring young-looking (possibly underage) people, with comments to match.8

After the New York Times reported on the problem in 2019, the company removed several of the videos, disabled comments on many videos of children, and made some tweaks to its recommendation system. But it has stopped short of turning off recommendations on videos of children altogether. Why did the company stop there? The New York Times’s Max Fisher and Amanda Taub summarized YouTube’s response: “The company said that because recommendations are the biggest traffic driver, removing them would hurt creators who rely on those clicks.”9 We can surmise that eliminating the recommendation feature would also compromise YouTube’s ability to keep users hooked on its platform, and thus to capture and monetize their data.

If companies were required by law to meet baseline standards for transparency in algorithms like this one, policymakers and civil society advocates alike would be better positioned to push for changes that benefit the public interest. And if platforms were compelled to conduct due diligence on the negative effects of their products, they would likely make very different choices about how they use and develop algorithmic systems.

Citations

1. Maréchal, Nathalie. 2019. “RDR Seeks Input on New Standards for Targeted Advertising and Human Rights.” Ranking Digital Rights. source; Brouillette, Amy. 2019. “RDR Seeks Feedback on Standards for Algorithms and Machine Learning, Adding New Companies.” Ranking Digital Rights. source. We identified three areas where companies should be much more transparent about their use of algorithmic systems: advertising policies and their enforcement, content-shaping algorithms, and automated enforcement of content rules for users’ organic content (also known as content moderation). See Ranking Digital Rights. RDR Corporate Accountability Index: Draft Indicators. Transparency and Accountability Standards for Targeted Advertising and Algorithmic Decision-Making Systems. Washington D.C.: New America.

2. Ranking Digital Rights. 2020. The RDR Corporate Accountability Index: Transparency and Accountability Standards for Targeted Advertising and Algorithmic Systems – Pilot Study and Lessons Learned. Washington D.C.: New America. source

3. Ochigame, Rodrigo. 2019. “The Invention of ‘Ethical AI’: How Big Tech Manipulates Academia to Avoid Regulation.” The Intercept. source; Vincent, James. 2019. “The Problem with AI Ethics.” The Verge. source

4. Facebook’s record in Myanmar, where it was found to have contributed to the genocide of the Rohingya minority, is a particularly egregious example. See Warofka, Alex. 2018. “An Independent Assessment of the Human Rights Impact of Facebook in Myanmar.” Facebook Newsroom. source

5. Vaidhyanathan, Siva. 2018. Antisocial Media: How Facebook Disconnects Us and Undermines Democracy. New York, NY: Oxford University Press; Gary, Jeff, and Ashkan Soltani. 2019. “First Things First: Online Advertising Practices and Their Effects on Platform Speech.” Knight First Amendment Institute. source

6. Lewis, Becca. 2020. “All of YouTube, Not Just the Algorithm, Is a Far-Right Propaganda Machine.” Medium. source

7. Tufekci, Zeynep. 2018. “YouTube, the Great Radicalizer.” The New York Times. source

8. Unpublished study by Kaiser, Cordova & Rauchfleisch, 2019. The authors have not published the study out of concern that the algorithmic manipulation techniques they describe could be replicated in order to exploit children.

9. Fisher, Max, and Amanda Taub. 2019. “On YouTube’s Digital Playground, an Open Gate for Pedophiles.” The New York Times. source