What Will This All Mean for Counterinsurgency?

With so much change, it is too early to know all that will shake out from these new technologies. But we can identify a few key trends that will matter for war and beyond, and the resulting questions that future counterinsurgents will likely have to wrestle with.

The End of Non-Proliferation

A common theme across these diverse technology areas is that they are neither inherently military nor civilian. The people and organizations that research and develop these technologies, and those that buy and use them, will come from both government and the civilian world. The technologies will be applied to conflict, but also to areas that range from business to family life. A related attribute is that they are less likely to require massive logistics systems to deploy, while the trend of greater machine intelligence means that they will also be easier to learn and use, without requiring large training or acquisitions programs. These factors mean that insurgent groups will be able to make far more rapid gains in technology and capability than previously possible.

In short, the game-changing technologies of tomorrow are most likely to have incredibly low barriers to entry, which means they will proliferate. In addition, some of the technologies, such as 3D printing, will make it difficult to prevent the spread of capability via traditional non-proliferation approaches such as arms embargoes and blockades. Interdicting weapons routes is less workable in a world where manufacturing can be done on site.

This issue is not merely one of hardware, but also of the spread of ideas. As vexing as the spread of terrorist ideology and “how-to” guides has been in a world of social media, these platforms are still centrally controlled. The Twitters and Facebooks of the world can take down content when they are persuaded of the legal or public relations need. However, the move toward decentralized applications reduces this power, as there is no one place to appeal for censorship.1 This phenomenon is well beyond just the problem of a YouTube clip showing how to build an IED, or a cleric inspiring a watcher of a linked video to become a suicide bomber. It is that decentralization crossed with crowd-sourcing and open sourcing empowers anyone on the network at new scales. Indeed, there are already open-source projects like TensorFlow,2 where any actor can tap into AI resources that were science fiction just a decade ago.

Key Resulting Questions:

- How will U.S. and allied forces prepare for insurgent adversaries that have access to many of the very same technologies and capabilities that they previously relied upon for an edge?

- Will lower barriers to entry make it easier for insurgencies to gain the capability needed to rise? Will it make it more difficult to defeat them if they can rapidly recreate capability?

Multi-Domain Insurgencies

From the Marines battling rebel forces in Haiti with the earliest of close air support missions a century ago to the Marines battling the Taliban today, counterinsurgents of the last 100 years have enjoyed a crucial advantage. When it came to the various domains of war, the state actor alone had the ability to bring true power to bear across domains. Enjoying unfettered access to the air and sea, counterinsurgents could operate more effectively on land, not just by conducting surveillance and strikes that prevented insurgents from effectively massing forces, but, crucially, by moving their own forces to almost anywhere they wanted to go.

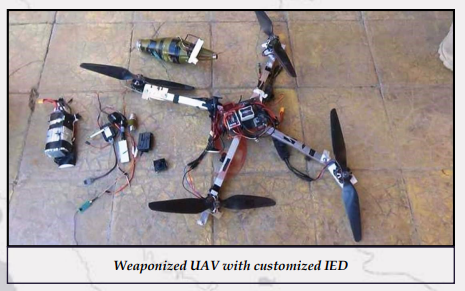

This monopolization of power may not be the case in the future. Indeed, ISIS has already been able to utilize the air domain (via a self-made air force of drones) to conduct ISR of U.S. and allied forces, as well as several hundred air strikes.3 The effort may have been ad hoc, but it still achieved the group's goals at minimal cost. More importantly, the ISIS drone use points to a change in the overall story of air power and insurgency. Now, as exemplified everywhere from Yemen to Ukraine, the insurgents can fly and fight back.4

This ability to cross domains is, of course, not limited to air power; it extends to other new domains that technology is opening up to battle. Insurgencies will be able to tap into the global network of satellites that have given U.S. forces such advantage in ISR and communications, or even potentially be able to launch and operate cheap micro satellites, either via proxy aid or on their own. (If college students can do it already,5 why not insurgents?)

More importantly, the “cyber war” side of insurgency will likely move well past what has been experienced so far.6 The proliferation of capability through both dark markets and increasing automation, combined with the change in the internet’s form to more and more “things” operating online, points to insurgents being able to target command and control networks and even use Stuxnet-style digital weapons causing physical damage.7

The ability to operate across domains also means that insurgents will be able to overcome the tyranny of distance. Once-secure bases and even a force’s distant homeland will become observable, targetable, and reachable, whether by malware or unmanned aerial systems delivering packages of a different sort. To think of it a different way, a future insurgency may see a Tet-style offensive not in Hue, but in Houston.

Key Resulting Questions:

- Is the U.S. prepared for multi-domain warfare, not just against peer states but also insurgents?

- What capabilities utilized in counterinsurgency today might not be available in 2030?

- Just as U.S. forces used capability in one domain to win battles in another, how might insurgents do so?

UnderMatch

In the final battles of World War II’s European theater, U.S. forces had to contend with an adversary that brought better technology to the fight. Fortunately for the Allies, the German “wonder weapons” of everything from rockets and jets to assault rifles entered the war too little and too late.

For the last 75 years, U.S. defense planning has focused on making sure that never happened again. Having a qualitative technology edge to "overmatch" our adversaries became baked into everything from our overall defense strategy to small-unit tactics. It is how the U.S. military deterred the Red Army in the Cold War despite having a much smaller military, and how it was able to invade Iraq with a force one-third the size of Saddam Hussein's (inverting the historic mantra that the attacker's force should be three times the size of the defender's).8

In painful insurgencies from Vietnam to the post-9/11 wars, this approach didn't always deliver easy victories, but it did become part of a changed worldview. A Marine officer once told me that if his unit of 30 men was attacked by 100 Taliban, he would have no fear that his unit might lose; indeed, he described how it would almost be a relief to face the foe in a stand-up fight, as opposed to the fruitless hunts, hidden ambushes, and roadside bombs of insurgency. The reason wasn’t just his force’s training, but that in any battle, his side alone could call down systems of technology that the insurgency couldn’t dream of having, from pinpoint targeting by unmanned aerial systems controlled via satellite from thousands of miles away to hundreds of GPS-guided bombs dropped by high-speed jet aircraft able to operate with impunity.

Yet U.S. forces can't count on that overmatch in the future. This is not just the issue of mass proliferation discussed above, driven by the lower barriers to entry and availability of key tech on the marketplace. Our future counterinsurgency thinking must also recognize that the geopolitical position has changed. As challenging as the Taliban and ISIS have been, they were not supported by a comparable peer state power, developing its own game-changing technology, and potentially supplying it to the world.

Mass campaigns of state-linked intellectual property theft have meant we are paying much of the research and development costs of China’s weapons development, while at the same time, it is investing in becoming a world leader in each of the above revolutionary technology clusters.9 For instance, in the field of AI, China has a dedicated national strategy to become the world leader in AI by the year 2030,10 with a massive array of planning and activity to achieve that goal. Meanwhile, it has displayed novel weapons programs in areas that range from space to armed robotics.11

The result is that in a future insurgency, whether from purchases off the global market or proxy warfare supplies, American soldiers could face the same kind of shock that Soviet helicopter pilots had in their 1980s war in Afghanistan, when the Stinger missile showed up in the hands of the mujahideen. The United States could one day find itself fighting a guerrilla force that brings better technology to the fight.

Key Resulting Questions:

- What changes in tactics are needed for counterinsurgents when they do not enjoy technology “overmatch”?

- How does the changing geopolitical environment shift counterinsurgency? Are U.S. tactics and doctrine ready for great power-supported insurgents?

Information Underload and LikeWars

“It is like sipping from a fire hose.”

This is how a U.S. military officer described to me a core problem in their job. The setting was the Combined Air Operations Center at Al Udeid Air Base, where U.S. forces coordinated the massive scale of operations in support of counterinsurgencies in Iraq and Afghanistan.

The challenge they felt was that there was too much data coming at them, from full motion video to chatroom posts, sent in by people ranging from soldiers in the midst of a firefight to intelligence analysts back in the United States. It was not just that the officer could barely keep up, but also that, in constantly servicing their in-box, it was hard to think and act strategically. In essence, their relationship to information was one that we all feel: TMI, Too Much Information.

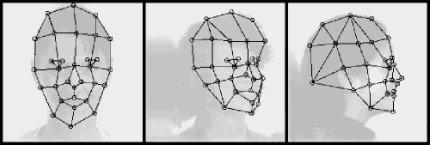

Some believe that mastering this problem actually holds the solution to ending insurgency as a phenomenon once and for all. In a world of mass surveillance, this thinking goes, if we can just sift through the information rapidly enough, insurgents will not be able to operate effectively. AI algorithms won’t just instantly identify insurgents via face recognition12 or gait detection analysis,13 but will even move to predict their activities before they plan them. Indeed, projects like PreData14 already mine open source intelligence like social media posts to predict the very outbreak of insurgencies.

We shouldn’t be so quick to declare victory against rebels of the future. Insurgency, like all conflict, involves a thinking adversary reacting to each and every move and technology. For instance, we are already seeing the rise of counters to mass surveillance like face paints15 and even stealth clothing.16 In turn, the spread of autonomous drones and cars will render whole swaths of current counterterrorism/insurgency defenses obsolete.

The counters, however, may be about more than merely deceiving sensors; they may alter our very relationship with information itself.

The technology systems that we rely on for counterinsurgency are only as reliable as the information that goes into them. The connection points of this information can be attacked. The communications signals to drones have already been blocked and manipulated in tests, while merchant ships in the Black Sea off Russia experienced a suspected hack where their GPS started to tell the ship captains they were sailing miles inland.17

In these situations, it was self-evident that the “spigot” of information was being cut off or tampered with. But what also looms is a kind of poison to the overall system, one that targets the people behind the networks.

“Terrorism is theater,” declared RAND Corporation analyst Brian Jenkins in a 1974 report that became one of the field’s foundational studies.18 Command enough attention, and it didn’t matter how weak or strong you were: you could bend populations to your will and cow the most powerful adversaries into submission. This simple principle has guided insurgents and terrorists for millennia. Whether via assassination in the town squares of ancient Judea, marketplace bombings in colonial wars in Algeria, or ISIS’s carefully edited beheadings in Syria, the goal has always been the same: Control what people thought (and feared).

Already we have seen the power of online networks to control this crucial narrative, perhaps most illustratively with the invasion of Mosul, where an insurgent force didn’t hide from surveillance, but embraced it. Indeed, ISIS even branded its 2014 offensive #AllEyesOnISIS to ensure that the world was watching. It was effective, but again, quite basic. It is increasingly possible to plant false information that overwhelms not just our political systems, but our very human senses.

One vector of this is bots: algorithms that perform automated tasks, such as acting like humans online. The early versions of social media bots proved able to drive what people thought, knew, and even argued about during the most crucial elections of our time, from the Brexit referendum (researchers found approximately 20 percent of the users shaping online discussion on Britain leaving the European Union were actually bots) to the 2016 U.S. presidential election (Twitter concluded that bots helped drive Russian-generated propaganda to users 454.7 million times).19 Years later, the similar use of artificial accounts would strike not just in elections like Mexico’s, but also in controversies ranging from the Colin Kaepernick NFL boycott to the spread of anti-vaccine conspiracy theories.20

Artificial intelligence, again available to all actors, is set to massively compound this problem with the creation of what are known as “deep fakes.” Artificial neural networks loosely mimic how the human brain works: individual nodes each activate, or not, in response to a single point of information, and the network carries out incredibly complex tasks by layering the connections between those nodes together. Through this, machines now can study a database of images, words, and sounds to learn to mimic a human speaker’s face and voice almost perfectly. An early example of the potential political impact of this came in the creation of an eerily accurate, entirely fake conversation between Barack Obama, Hillary Clinton, and Donald Trump.21 Indeed, drawing only from the data of two-dimensional photographs, these systems can build photorealistic, three-dimensional models of someone’s face to place into online settings. A demonstration with the late boxing legend Muhammad Ali transformed a single picture into “photorealistic facial texture inference” that was essentially able to rewrite what “the greatest” actually did and said when he was alive, at least in our online records of him (which will be the source of “truth” to the vast majority).22
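To make the idea of nodes and layers concrete, the following is a toy sketch in Python. It is an illustration only, not how any of the systems cited here are built: the weights are hand-picked rather than learned from data, and the names `node` and `layered_network` are hypothetical. It shows two simple on/off nodes feeding a third, so that the layered arrangement computes something (an "exclusive or") that no single node of this kind can compute alone.

```python
# Toy illustration only: hand-picked weights, not a trained model.

def node(inputs, weights, bias):
    """A single node: 'activates' (returns 1) if its weighted sum exceeds zero."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1 if total > 0 else 0


def layered_network(a, b):
    """Two layers of nodes that together compute 'a or b, but not both'."""
    # First layer: each node reacts to one simple pattern in the raw inputs.
    either_on = node([a, b], [1, 1], -0.5)  # activates if a OR b is on
    both_on = node([a, b], [1, 1], -1.5)    # activates only if a AND b are on
    # Second layer: combines the first layer's outputs into a richer decision.
    return node([either_on, both_on], [1, -1], -0.5)


# Evaluate the network on every combination of two on/off inputs.
results = {(a, b): layered_network(a, b) for a in (0, 1) for b in (0, 1)}
print(results)
```

Real systems differ in scale and in kind: they stack many layers of such units and learn their weights from large databases of examples, which is where the ability to mimic faces and voices comes from.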

Neural networks can also be used to create deep fakes that aren’t copies at all. Rather than study images to learn the names of different objects, these networks learn how to produce new, never-before-seen versions of the objects in question. These are called “generative networks.”23 As an example, computer scientists unveiled a generative network that could create photorealistic synthetic images on demand, all with only a keyword. Ask for “volcano,” and you got fiery eruptions as well as serene, dormant mountains—wholly familiar-seeming landscapes that had no earthly counterparts. Another system created faces of people who didn’t exist but which real humans would likely view as being Hollywood movie stars.24

With such technology, users will eventually be able to conjure a convincing likeness of any scene or person they can imagine. Because the image will be truly original, it will be difficult to identify the forgery via many of the old methods of detection. And such networks can do the same thing with video to create new moments in time that never happened.25 They have produced eerie, looping clips of a “beach,” a “baby,” or even “golf.” They’ve also learned how to take a static image (a man on a field; a train in the station) and generate a short video of a predictive future (the man walking away; the train departing). In this way, events that never took place may nonetheless be presented online as real occurrences, documented with compelling video evidence.

Finally, there are neural network-trained chatbots, also known as machine-driven communications tools or MADCOMs.26 Such technology, with its inherent promise of an AI that is essentially indistinguishable from a human operator, is being built to help companies replace their help desks and sell products online. But it could also be badly misused. Today, it still remains possible for a savvy internet user to distinguish “real” people from automated botnets and even many sockpuppets (the combination of Russophied English and a love for red #MAGA hats often gives them away). Soon enough, even this uncertain state of affairs may be recalled fondly as the “good old days,” the last time it was possible to have some confidence that another social media user was a flesh-and-blood human being instead of a manipulative machine.

Combine all these pernicious applications of neural networks—mimicked voices, stolen faces, real-time audiovisual editing, artificial image and video generation, and MADCOM manipulation—and it’s tough to shake the conclusion that the long feared “Cyberwar” of hacking networks will prove less important than what one might think of as the “LikeWar” side of battle: hacking the people on the networks by driving ideas viral through a mix of likes, shares, and lies.27

The likely outcome is that the insurgencies of 2030 won’t just be fought by people, but also by highly intelligent, inscrutable algorithms that will speak convincingly of things that never happened and produce “proof” that doesn’t really exist. They’ll seed falsehoods across the social media landscape with an intensity and volume that will make the current state of affairs look quaint.

For instance, as futuristic as the counterinsurgency training on the SMEIR at Fort Polk that opened this report seems, it captures a point in time soon to be passed by technology and tactics. Thwarted by the eagle-eyed U.S. Army tactical operations officer, the terrorists might not just fade back into the crowd. They might instead shoot the civilians anyway and simply manufacture compelling online evidence of U.S. involvement. Or, maybe they never even show up in the first place, able to manufacture everything about the massacre using neural networks that would produce hyper-realistic imagery, distribute information outwards with armies of AI-infused bots, and manipulate the algorithms of the web itself. In turn, the role of that officer might be replaced by the only entity able to effectively battle back: another artificial intelligence. The result will be algorithms battling over the hearts and minds of humans.

It is easy to downplay the effects of these online battles, but to do so ignores how the age-old lessons of counterinsurgency are likely to cross with the new features of LikeWar. As General Stanley McChrystal told a conference of military officers in the Middle East, “…For the foreseeable future,” the online space of social media will be as crucial a domain to any war as that of the air, land, or sea. The reason, he explains, is that “There is a war on reality…Shaping the perception of which side is right or which side is winning will be more important than actually which side is right or winning.”28

Key Resulting Questions:

- What aspects of our relationship to information itself will change in future insurgencies?

- What will the LikeWar battles between insurgents and counterinsurgents look like in the future? Are we prepared to fight and win them? How will we even know?

Citations

- Meserole and Polyakova, “Disinformation Wars.”

- “Why TensorFlow,” TensorFlow, accessed March 28, 2019, source.

- Eric Schmitt, “Pentagon Tests Lasers and Nets to Combat a Vexing Foe: ISIS Drones,” New York Times, September 23, 2017, source.

- “Houthi Drones Kill Several at Yemeni Military Parade,” Reuters, January 10, 2019, source.

- Becky Ferreira, “The Race to Launch the First Student-Built Rocket into Space Is On,” Vice Motherboard, February 23, 2018, source.

- Peter W. Singer, “The 2018 State of the Digital Union: The Seven Deadly Sins of Cyber Security We Must Face,” War on the Rocks, January 30, 2018, source.

- Jack Wallen, “Five Nightmarish Attacks That Show the Risks of IoT Security,” ZDNet, June 1, 2017, source.

- The coalition invasion force numbered roughly 380,000 vs roughly 1.3 million defenders. Kenneth Katzman, "Iraq: Post-Saddam Governance and Security," fpc.state.gov/. Congressional Research Service. Retrieved 23 September 2014.

- Dennis C. Blair and Jon M. Huntsman Jr., “The IP Commission Report” (National Bureau of Asian Research, May 2013), source.

- Paul Triolo and Jimmy Goodrich, “From Riding a Wave to Full Steam Ahead,” DigiChina (blog), February 28, 2018, source.

- P. W. Singer and Emerson T. Brooking, “Eastern Arsenal,” Popular Science, accessed March 28, 2019, source.

- Cade Metz and Natasha Singer, “Newspaper Shooting Shows Widening Use of Facial Recognition by Authorities,” New York Times, June 29, 2018, source.

- Jim Giles, “Cameras Know You by Your Walk,” New Scientist, September 19, 2012, source.

- “Predictive Analytics for Geopolitical Risk,” PreData, accessed March 28, 2019, source.

- Marc Bain, “New ‘Camouflage’ Seeks to Make You Unrecognizable to Facial-Recognition Technology,” Quartz, January 6, 2017, source.

- Tim Maly, “Anti-Drone Camouflage: What to Wear in Total Surveillance,” WIRED, January 17, 2013, source.

- David Hambling, “Ships Fooled in GPS Spoofing Attack Suggest Russian Cyberweapon,” New Scientist, August 10, 2017, source.

- Brian Michael Jenkins, “International Terrorism: A New Kind of Warfare” (Santa Monica, CA: RAND Corporation, 1974), source.

- “Research suggests bots generated social media stories during EU Referendum,” Swansea University Press Office, November 22, 2017, source; United States Senate Committee on the Judiciary, Subcommittee on Crime and Terrorism, Update on Results of Retrospective Review of Russian-Related Election Activity, hearing on Twitter, Inc. January 19, 2019, source.

- “Mexico election: Concerns about election bots, trolls and fakes,” BBC, May 30, 2018, source; Andrew Beaton, “How Russian Trolls Inflamed the NFL’s Anthem Controversy,” Wall Street Journal, Oct 22, 2018, source; “Weaponized Health Communication: Twitter Bots and Russian Trolls Amplify the Vaccine Debate,” American Journal of Public Health, Oct 2018, source.

- Natasha Lomas, “Lyrebird Is a Voice Mimic for the Fake News Era,” TechCrunch (blog), April 25, 2017, source.

- Shunsuke Saito et al. “Photorealistic Facial Texture Inference Using Deep Neural Networks,” December 2, 2016, source.

- Anh Nguyen et al. “Plug & Play Generative Networks: Conditional Iterative Generation of Images in Latent Space,” November 30, 2016, source.

- Will Knight, “Meet the Fake Celebrities Dreamed Up by AI,” MIT Technology Review, October 31, 2017, source.

- Carl Vondrick, Hamed Pirsiavash, and Antonio Torralba, “Generating Videos with Scene Dynamics” (29th Conference on Neural Information Processing Systems, Barcelona, Spain, 2016), source.

- Matt Chessen, “The MADCOM Future” (Atlantic Council, September 26, 2017), source.

- Singer and Brooking, LikeWar.

- General Stanley McChrystal, “War in the 21st Century,” Remarks at ECCSR, UAE, Oct 22, 2017.