Governing the Digital Future

Abstract

This report is part of a multiyear project undertaken by New America’s Planetary Politics initiative on the geopolitics and global governance of the digital domain. The report analyzes divides and debates in key digital issue areas, maps the state of the global digital governance landscape, and identifies priorities for global action. The analysis draws on a review of the literature and a series of consultations and workshops held from January through June 2023. It is especially informed by the insights of the Digital Futures Task Force, an international, multidisciplinary group of researchers, technologists, and policymakers that convened at New America’s Washington, DC, office for intensive discussions on these issues.

Acknowledgments

The authors would like to thank Candace Rondeaux, Senior Director of New America’s Planetary Politics initiative, for invaluable guidance conceptualizing and steering the production of this report and the research that informed it. Planetary Politics Director Heela Rasool-Ayub provided helpful editorial direction. Several colleagues at New America contributed time, effort, and thought to this project, notably Public Interest Technology Senior Program Manager Alberto Rodriguez, Open Technology Institute Senior Policy Analyst David Morar, Future Frontlines Program Manager Ben Dalton, and Digital Impacts and Governance Initiative Senior Advisor Allison Price.

We are grateful also to the dozens of collaborators who contributed to the research process and who are too numerous to list in full here. Deborah Avant of the University of Denver’s Sié Center, Tithi Chattopadhyay at Princeton University’s Center for Information Technology Policy, and Shawn Walker and Michael Simeone at Arizona State University helped plan and carry out the research agenda. We are indebted to the Digital Futures Task Force, especially working group leads Constanza Gomez-Mont, Alejandro Pisanty, Sagwadi Mabunda, Swati Srivastava, Landry Signe, and Robin Renee-Sanders. A crack team of rapporteurs, including Merle Weidt, Kenia Hale, Jonas Heering, Abigail Giles, and Summer Boucher-Robinson, captured and distilled a staggering amount of information. Early internet luminaries Vint Cerf, Mike Roberts, and Roberto Gaetano generously gave their time to share an under-the-hood look at ICANN.

Gratitude is also due to New America intern T Nang Seng Pan, who diligently fact-checked the report and created compelling and informative visualizations with the assistance of Naomi Morduch Toubman. Sabrina Detlef performed sharp copyedits, and Kelley Gardner managed the layout. Melissa Salyk-Virk provided indispensable organizational support throughout the research process. This project was made possible by a grant from the Ford Foundation, where Salih Booker and Alberto Cerda Silva were integral thought partners whose insights helped shape the research and report.

Editorial disclosure: The views expressed in this report are solely those of the authors and do not reflect the views of New America, its staff, fellows, funders, or its board of directors.

At a Glance

- Worsening power asymmetries are at the heart of much conflict, harm, and governance dysfunction in the digital domain.

- Power in and over the digital realm is more concentrated than ever before, as outsized corporate influence and increasing government control create a trajectory for the digital future that imperils security, equity, and human rights.

- At the same time, the digital domain is the most active battlefield in an escalating, zero-sum power struggle between the U.S. and China, and intensifying skirmishes between established and emergent leaders in digital tech such as Russia and India.

- Countering global power imbalances and promoting an equitable, safe digital domain will take an intentional approach to expanding the multi-stakeholder global governance ecosystem.

- We have an opportunity right now to get in front of some of the worst possible harms stemming from artificial intelligence. To do that, we will need to invest in institutional vehicles committed to ensuring a safe, secure, and equitable digital domain for future generations.

- Stewardship of connectivity needs to shift away from internet service providers and corporate power toward a more distributed model based on various fail-safes so as to enable alternative and redundant means of access.

- In the contest to shape global standards in areas like cybercrime, privileging regional guidelines could prove more fruitful than the pursuit of universal requirements.

- To reduce global conflict and harm from digital surveillance, democracies should practice what they preach and ban commercial spyware outright.

- The big data value chain must be reformed to afford individuals and less influential countries more rights and proceeds from their data.

Executive Summary

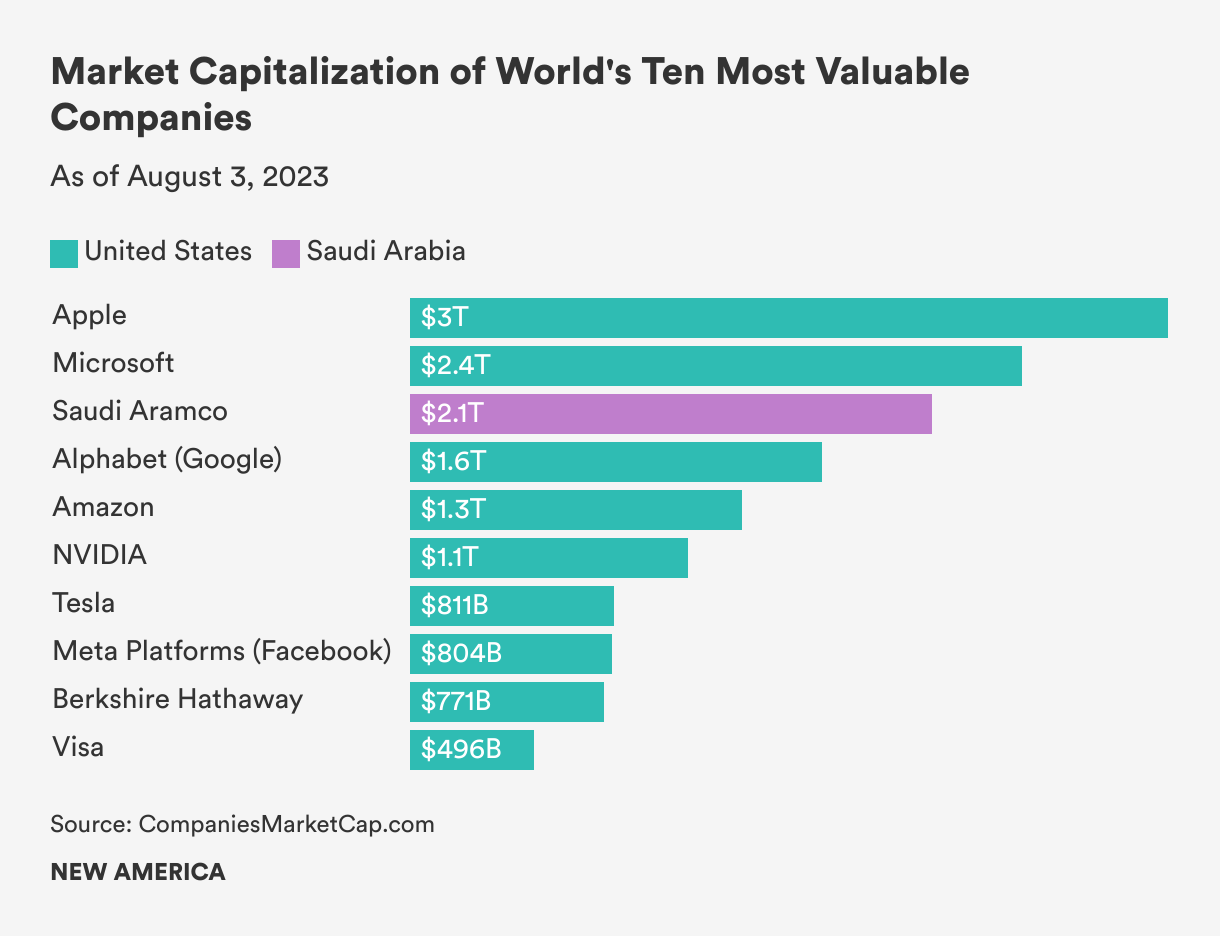

The digital world is in disarray. For all their benefits, digital technologies have unleashed harms ranging from algorithmic bias and disinformation to ransomware attacks. Rising inequality, social and political divisions, and escalating geopolitical tensions have darkened more hopeful visions of our shared digital future. Tech companies, arguably the most powerful private entities in history, are racing to deploy powerful artificial intelligence (AI) systems that will transform societies. At a time when global cooperation is essential, governance is fragmented within the different layers of the digital domain and failing to manage risk and conflict. Never before has the future of the digital revolution felt so uncertain and contested.

By now, the ills of digitization are well-trod research terrain. Yet, there are poorly understood divergences in how different nations, sectors, epistemic communities, and socioeconomic groups perceive, experience, and address digital harm. From January through June 2023, New America’s Planetary Politics initiative undertook a research agenda to understand these fault lines and to identify first-order principles that could move the digital world toward greater safety and equity. To do this, we conducted an extensive literature review, consulted with experts and hosted workshops, and convened a global, multidisciplinary group of researchers, technologists, and policymakers we named the Digital Futures Task Force.

The first part of this analysis was focused on five issue areas in digital technology that are driving conflict, human rights violations, and socioeconomic displacement: (1) AI and algorithmic decision-making, (2) digital access and divides, (3) data protection and data sovereignty, (4) digital identity and surveillance, and (5) transnational cybercrime.

We then mapped the where, why, and how of the ways competition, contestation, and cooperation in those five issue areas are shaping the patchy global digital governance landscape today. What came through immediately was that trend-setting nation-states including the United States, China, the European Union, and India have divergent visions for the digital future. Arguably, Russia falls within this category of trendsetters as well, though more as a result of its default to adopting policies, customs, and approaches to tech governance that fall in line with China’s vision. Now more than ever, clashes between those trend-setting states are spilling into the open in multilateral fora focused on shaping global cyber norms. Large American technology companies are digital sovereigns in their own right, with governing power to rival that of governments. Amid increasing contestation, multi-stakeholder institutions still find consensus among diverse interests to manage the global internet.

From our dialogues, consultations, and analysis, a fundamental conclusion emerged: An over-concentration of power and severe power asymmetries are causing conflict, harm, and governance dysfunction in the digital domain. Whereas the internet began as a distributed enterprise that connected and empowered individuals worldwide, extreme concentrations of political, economic, and social power now characterize the digital domain. Power imbalances are especially acute between developing and wealthy nations, as a handful of rich-world tech companies and nation-states control the terms and trajectory of digitization.

The Digital Futures Task Force identified first principles for positive interventions and explored governing frameworks for countering power asymmetries and steering the world toward a safer, more equitable digital future. At a conceptual level, this will take not a single international agency, but rather a networked, multi-stakeholder ecosystem of institutions, agreements, and initiatives that work as a fluid, shifting, federated whole, like, in the words of one task force member, a school of fish moving individually yet in concert through a changing current.

On a more practical level, a few takeaways and first principles stood out as in need of urgent attention:

- We have a critical opportunity to get ahead of possible harms that will stem from AI; science and citizen-centric fora like the Pugwash Conferences on Science and Technology offer a model means of refocusing the digital governance ecosystem beyond the myopic logic of national sovereignty.

- Amid digital divides and increasing government control over the internet, multilateral and multi-stakeholder agencies should invest in fail-safes, alternative or redundant means of access, that can shift the stewardship of connectivity away from concentrated power centers.

- Regional standards that respect diverse local circumstances can help generate global cooperation on challenges such as cybercrime.

- To reduce global conflict in digital surveillance, democracies should practice what they preach and ban commercial spyware outright.

- Redistributing the value from big data can diminish corporate power and empower individuals.

From a research perspective, more work is needed to understand and draw attention to the ways digital power asymmetries between the rich and developing worlds are shaping opportunity, risk, and sovereignty. In the next year, we plan to reconvene an expanded Digital Futures Task Force to conduct further analysis in two areas: (1) AI governance and impacts in the developing world and (2) the battles over digital sovereignty playing out in Africa, Latin America, Southeast Asia, and the Middle East.

We intend this report and the next phase of work as a modest contribution to the effort to bring about principled stewardship of the digital domain. We believe more global engagement and attention to power imbalances are essential to address the widening gaps among nations and resolve conflicts between corporations, governments, and citizens over the contours of sovereignty in the digital domain.

Introduction

As we enter the age of AI, the digital future appears uncertain. Even before the advent of large language models, digital technologies were straining and fracturing economic, political, and social systems. From Myanmar to the United States, algorithmically boosted hate and disinformation fuel polarization and political violence. Digital state surveillance violates human rights, while corporate surveillance deprives citizens of value and dignity and drives addictive social media platforms that many think are precipitating a mental health crisis among teenagers and young adults.1 Cybercrime causes billions of dollars in losses to governments and companies and threatens critical infrastructure. The open, global internet is in peril, as governments more frequently shut down access and seek to steer cyber norms toward authoritarian frameworks. Control of data, network infrastructure, semiconductors, and governance of the internet itself is at the heart of escalating geopolitical competition, particularly between the U.S. and China.

It will take governance systems to mitigate the risks and harms of the digital revolution. Yet so far, global digital governance is incoherent and patchwork, fractured along technical, national, geographic, and sectoral lines. Countries impose domestic regulations, but cyberspace is transnational, and digital technologies proliferate at astonishing speed. These technologies challenge the centuries-old notion of sovereignty as distinctly territory-bound, a consensus that has long underpinned the international order. The sovereignty of nation-states still depends on control over physical terrain, but in the theoretically borderless landscape of cyberspace, sovereignty is unbound from conventional geography.

Geopolitical competition, divergent national visions of digital sovereignty and governance, and the power of the private sector mean nation-states struggle to agree on norms to sustain an equitable, safe, and innovative digital domain. The consequential, and in some cases widening, divides between developing and wealthy nations over priorities, impacts, perspectives, and resources illustrate the need not so much for consensus as for justice. Effective global digital governance will depend not on imposing conformity or aligning ideologies, but on developing frameworks and institutions that can rectify power imbalances, as well as make space for areas of agreement and cooperation.

The scale and complexity of the digital domain make global governance all the more difficult. Consider, for example, the current dynamics of global cooperation and competition in each of the internet’s four layers. The physical layer features intensifying competition between the U.S. and China to control network architecture and infrastructure, such as subsea cables and semiconductors.2 The logic layer, the “central nervous system of cyberspace,” functions coherently under the supervision of multi-stakeholder bodies like the Internet Corporation for Assigned Names and Numbers (ICANN), but autocratic countries are trying to bring this layer under greater state control, which threatens to splinter the global internet.3 The platform or application layer is highly fragmented, with different nations adopting different regulatory frameworks and Silicon Valley corporations in the United States wielding more governance power than all but a handful of nations. The machine layer, where powerful AI systems are emerging, is the focus of many regulatory proposals, yet risks defaulting to the same shambolic governance dynamics as the platform layer.

With these challenges and issues in mind, New America’s Planetary Politics initiative spent six months examining fault lines in the digital domain and the gaps and prospects for global digital governance. We started with an examination of digital harm worldwide. There are a variety of reasons to start with harms when thinking about the governance of an emerging technology. For one, it is generally easier to find agreement around the question of what is harmful rather than the question of what is good, as humans are wired to be risk-averse, overweighting the impact of potential negative outcomes relative to potential gains.4 The legal and ethical codes of societies tend to focus on deterring what is bad rather than encouraging what is good.

We focused on five issue areas that are generating risk and harm for societies everywhere:

- AI and algorithms;

- Digital access and divides;

- Data protection and data sovereignty;

- Digital identity and digital surveillance; and

- Transnational cybercrime.

We conducted a literature review to understand the tensions and areas of contestation in the academic, policy, and public debates surrounding these issues. Then, to deepen and broaden the inquiry, we convened groups of experts and practitioners. The first forum was a workshop featuring scholars and civil society leaders who either study, manage, or are otherwise involved in initiatives that attempt to exercise democratic governance over aspects of the digital domain (e.g., the Facebook Oversight Board, the Global Network Initiative, the Digital Trust and Safety Partnership). A series of virtual consultations followed, including one with former leaders of ICANN, the multi-stakeholder body that manages the Domain Name System (DNS), the phonebook of the indexed internet.

The culmination of this effort was the establishment of the 30-member Digital Futures Task Force, the first step in a multiyear process that New America has undertaken to shape the public debate on preventing, mitigating, and managing digital harms. The task force consists of five working groups, one for each issue area. Each working group has six members, each either hailing from or focusing their work on a different region: Africa, Latin America, North America, Europe, the Middle East, and Asia. We anticipate that membership will continue to grow as we aim to make the debate about the digital future more inclusive and equitable.

The geographical range was meant to give developing and wealthy nations’ perspectives equal weight. Task force members include distinguished scholars and researchers, diplomats, law enforcement officials, technologists, entrepreneurs, and lawyers. The essential selection criterion was diversity: of nationality, identity, expertise, and experience. In May, the task force convened for a two-day symposium in Washington, DC, in which the working groups mapped harms and areas of contestation in their issue areas and began pointing the way to principles and frameworks for governance.

We had three questions to answer.

First, we sought to identify some of the global fault lines that are shaping the digital future: Where and how do scholars, policymakers, cultures, sectors, and socioeconomic groups diverge in their experience and perception of digital harm and risks? Second, we wanted to map the existing global digital governance landscape: Which nations, companies, multilateral organizations, and multi-stakeholder bodies are shaping the digital domain, and how? Lastly, we wanted to identify potential solutions: What new governing frameworks and institutional models could bring greater security, equity, and prosperity to the digital future?

Citations

- Benjamin Hart, “The Grim New Consensus on Social Media and Teen Depression,” New York Magazine, May 8, 2023.

- Mayumi Hirosawa and Ryohei Yasoshima, “ITU G7 Backs Deep Sea Cable Network for Nations,” SubTel Forum.

- Kristen Cordell, “The International Telecommunication Union: The Most Important UN Agency You Have Never Heard Of,” Center for Strategic and International Studies Commentary (blog), December 14, 2020.

- Daniel Kahneman, Thinking, Fast and Slow (New York: Farrar, Straus and Giroux, 2011), 283.

Digital Fault Lines

AI and Algorithmic Decision-Making

First coined in the 1950s by American computer scientist John McCarthy, the term artificial intelligence refers to machines that can learn and make decisions or predictions in ways that simulate or mimic human intelligence.1 Scholars treat AI as an umbrella concept that encompasses a range of both current and potential future technologies. They credit this flexibility for enabling continuity even as the technology and its uses have changed, but the lack of a precise definition has confounded alignment among legislative and regulatory processes and has invited litigation.2

In policy debates, the most salient branch of AI is machine learning, whereby algorithms trained on datasets to detect patterns and make inferences are able to describe something, predict what will happen, or prescribe what action to take. Machine learning has advanced at an astonishing pace in recent years, owing to falling costs of computation, the availability of massive amounts of data, and the development of more sophisticated algorithmic models.3 Machine learning is now commonplace, in use around the world in sectors including health care, entertainment, manufacturing, policing, and national security. These systems have unlocked greater efficiencies, generated billions in revenue, and helped solve public policy problems, such as social assistance targeting.4

They also carry risks and harms. Ill-designed or insufficiently trained systems have caused physical injury and even death, as in vehicle crashes caused by self-driving systems.5 The massive quantities of data collected to train AI systems raise privacy concerns. Researchers argue that the established privacy discourse, which relies on the assumption that data is a tradable good over which its creator has agency, is moot when a system’s operations are so complex that they cannot be understood, a reflection of the growing power asymmetry present in AI systems.6 Extensive literature has illustrated the propensity of opaque, algorithm-based machine learning systems to exhibit bias and discriminate based on race, gender, or other socioeconomic qualifiers.7

The advent of powerful generative AI systems has shaped and supercharged public and policy debates about the technology. In November 2022, the private firm OpenAI publicly released ChatGPT, a chatbot built atop a so-called large language model, a layered neural network trained on massive datasets in order to predictively generate text or code. ChatGPT was at the time the fastest-growing consumer application in history, attracting 100 million monthly active users in two months.8 Similarly powerful chatbots from Microsoft, Google, Meta, and others followed. Advanced machine learning systems had been on the public radar for years, but the capability and human-like output of these generative chatbots sparked a high-profile, fractious debate about the harms and risks of AI.

The most prominent voices in the debate are those warning of speculative, catastrophic risks of AI. Their central concern is the direct alignment problem, the question of how to ensure that an AI system pursues the goals and intentions of the humans who created it. The most extreme scenario of misaligned AI envisions a system escaping human control and pursuing goals that threaten human rights, safety, or existence.9 A statement released by the nonprofit Center for AI Safety, which reads “Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war,” has been signed by dozens of leading AI technologists, researchers, and other notable public figures.10

Others criticize the focus on speculative extinction risk, insisting that it distracts from, and even exacerbates, more likely and urgent risks stemming from human misuse of AI systems and their propensity to concentrate power, exacerbate structural discrimination, and further inequality.11 AI is not a creature, but rather a set of tools, and the pressing concerns for society, researchers such as Princeton Professor Arvind Narayanan argue, stem not from the prospect of a rogue AI, but rather from the humans who design and deploy it. Humans might build and use AI tools in harmful or malicious ways, such as developing autonomous weapons systems, producing sophisticated disinformation, or distributing the knowledge to create biological, chemical, or cyber weapons.12 Less directly, a system will express the worldview and interests of its creator in ways that can harm others. As political theorist Langdon Winner noted, all technologies “have politics,” in that they reflect the preferences and biases of their creators.13

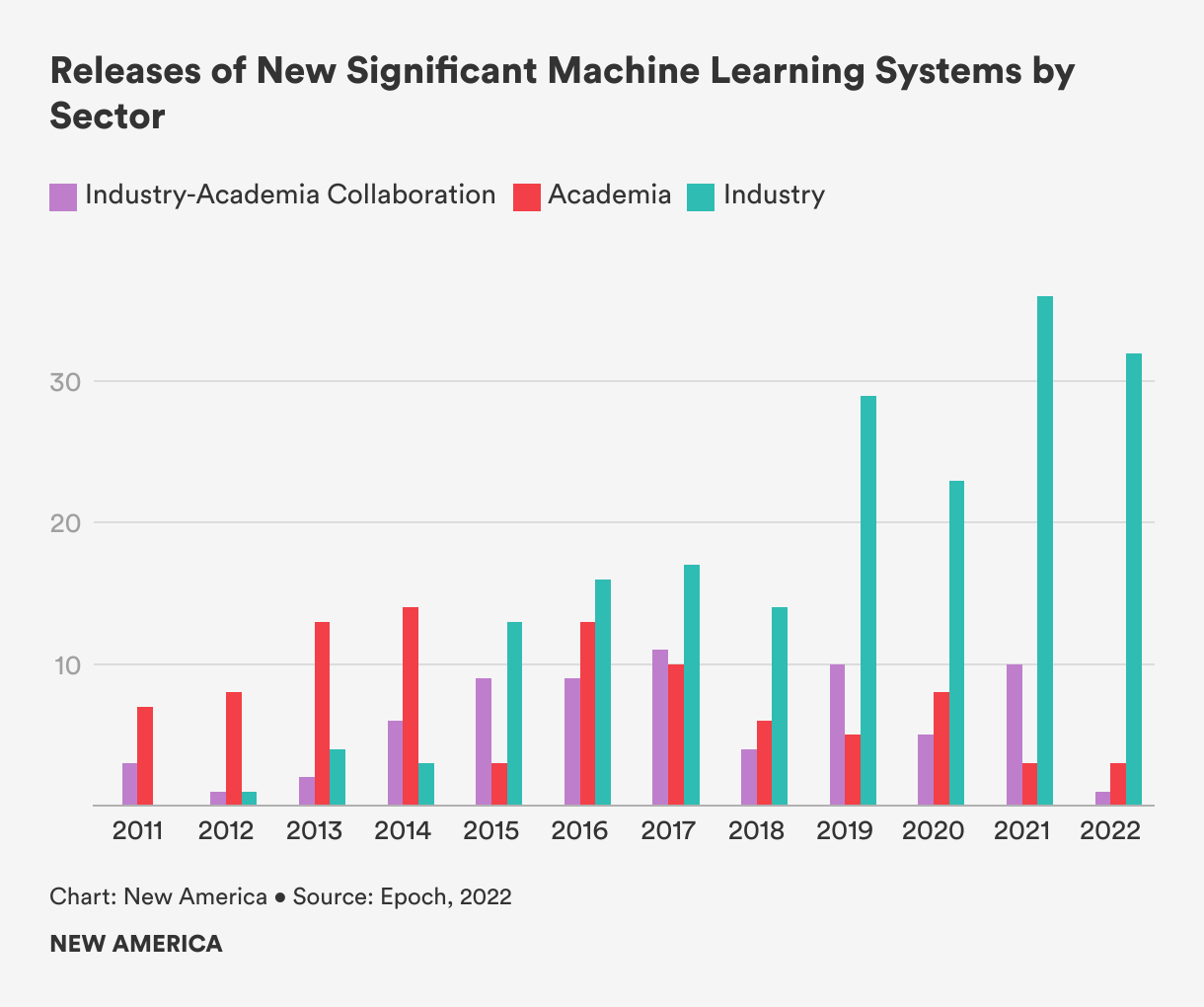

Right now, the prevailing source of such bias in AI systems is that they are being developed primarily by white, American men in the service of private and corporate interests like profit and shareholder returns. A decade ago, universities developed most of the cutting-edge machine-learning systems, but industry now dominates.14 The risks emerging as a result include labor market displacement, environmental damage, economic inequality, and racism.15 These impacts disproportionately harm marginalized communities, especially in developing nations.

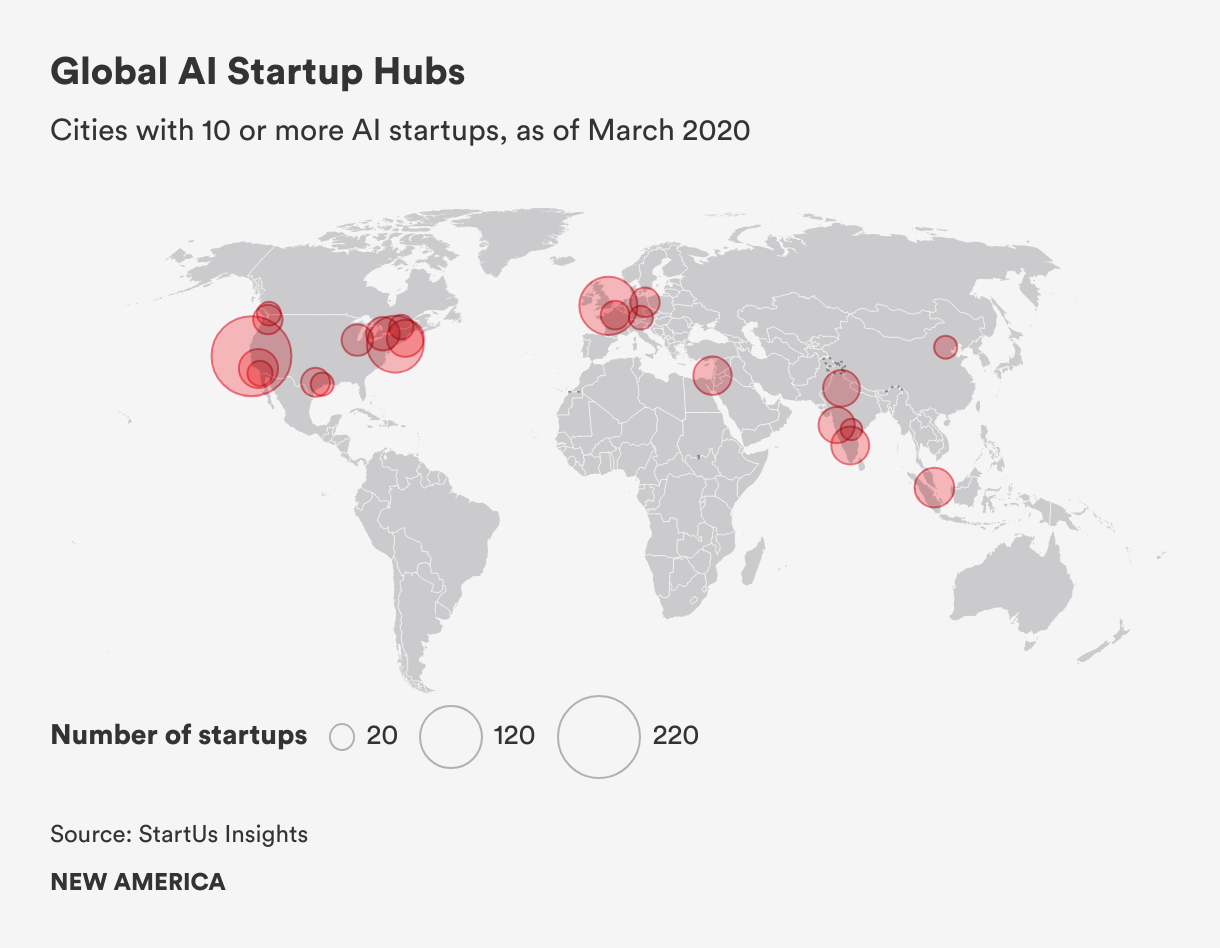

The global AI labor market is illustrative of these risks. Though there are emerging AI ecosystems in developing countries such as Brazil, Kenya, and India, research and development of AI systems is heavily concentrated in a handful of rich-world cities.16 One analysis conducted at the start of 2023 found that more than 50 percent of all venture capital investments to AI startups went to companies based in the San Francisco Bay Area.17 Yet millions of gig workers, many of whom live in the developing world, perform the menial labor necessary for building and maintaining AI systems.18 Workers in countries such as India, Kenya, and Nigeria are paid as little as $1.50 per hour to perform tasks such as data annotation and labeling for content moderation, which often entails scrutinizing violent, traumatic videos for hours on end.19

Researchers and firms project the AI revolution will usher in unprecedented economic precarity, as tens or hundreds of millions of jobs might be lost to automation over the next decade.20 And though AI systems are expected to add as much as 7 percent to global GDP by 2030, those proceeds will be unequally distributed; McKinsey Global Institute projects that rich-world AI leaders will accrue an additional 20 to 25 percent in net economic benefits, compared to 5 to 15 percent in developing countries.21

Thus, at the root of the AI harm debate are deep divisions along geographic, economic, social, and ethnic lines. Those who own, develop, and deploy AI systems, and who hence stand to be most enriched by them and dominate the risks discourse, are generally ethnic majorities from well-off cities, especially in the U.S., Europe, and China. The rest of the world (the workers, the ethnic minorities, and the poor who have little power and voice in the debate) may see their lives upended by this powerful new technology.22 The pressing problem is not the direct alignment problem, but rather the social alignment problem: the question of ensuring that an AI system serves the goals not just of the entity that created it, but of society more broadly. Will the market be left to decide, or will governments and societies mobilize to steer the technology toward public goals?

The Digital Futures Task Force working group on AI developed a taxonomy of AI harm. This taxonomy is broad enough to pertain to different jurisdictions, countries, and groups and can be applied to present-day systems and those of the future.

- Input harms refer to those stemming from a system’s inputs (i.e., the datasets used to train AI models). The data—whether individual images labeled by humans or massive amounts of online text ingested by large language models—have an embedded substrate of systems of thought, culture, and power. One working group member quipped that “historical data doesn’t have the luxury of historical amnesia.” Other harms can emerge when training data do not represent the context where a system is being used. For instance, a farming AI trained on European agricultural data would be ill-suited for Africa. Definitional disconnects—“justice,” for example, means something different in Islamic thought than it does in Western thought—raise a fundamental epistemological problem, as much of the world’s potential training data reflect only two intellectual traditions: the Western, Judeo-Christian and the Eastern, Confucian. As algorithms adjudicate and implement more and more activities, other traditions of knowledge risk further marginalization.

- Design harms emerge from how an algorithm is built and who builds it. Some look to the idea of participatory design to mitigate potential design harms, as many researchers, policymakers, and even industry technologists admit the need for more diverse design teams and civic participation. Our working group, however, noted that participation is a luxury enjoyed by educated, socioeconomically well-off elites. When it does occur, participation is often meaningless, as it does not automatically translate to ownership or influence.23

- Procedural and access harms arise when opacity, inscrutability, and secrecy surrounding the use and process of an AI system in decision-making violate individuals’ procedural rights. In various settings, such as hiring or criminal justice, an AI system could be used to make a decision about individuals without their awareness, leaving them unable to challenge its use. Further, the operations of many complex algorithmic models are either hidden from public view or so complex as to be inscrutable, which precludes the possibility of identifying errors and making a case for redress.

- Outcome harms describe the range of adverse physical, social, economic, and psychological consequences that arise from the deployment of AI. These can include damages resulting from system outcomes such as autonomous vehicle accidents or the political strife caused by the spread of deepfake videos.

- Accountability harms relate to issues regarding inadequate or unclear responsibility for an AI system and its actions. Who is responsible for the decisions made by an algorithm? Who is at fault when one of those decisions causes harm? Various mechanisms may help ensure accountability, such as liability laws and algorithmic auditing.24

Digital Access and Divides

Digital divides encompass disparities in infrastructure and investment in digital technologies between the rural and the urban, between developing and wealthy nations, and among different socioeconomic and identity groups within societies. While wealthy countries push forward into new digital frontiers such as AI, developing economies still have limited access to digital markets, technologies, and broadband, as well as slower internet speed and a lack of opportunities for digital entrepreneurship.

The most fundamental barriers to digital access are physical: unreliable electricity, lack of wired internet access, and other network infrastructure shortcomings. For example, more than 63 percent of internet exchange points (IXPs), the physical infrastructure that keeps local internet traffic local and reduces the costs and latency associated with long-distance traffic exchanges between internet service providers (ISPs), are located in Organization for Economic Cooperation and Development (OECD) countries.25 Sweden, for instance, has nine IXPs, while Colombia, a country 2.5 times larger in size, has one.26 This infrastructure imbalance translates to higher internet traffic costs and slower internet speeds for those in developing economies.

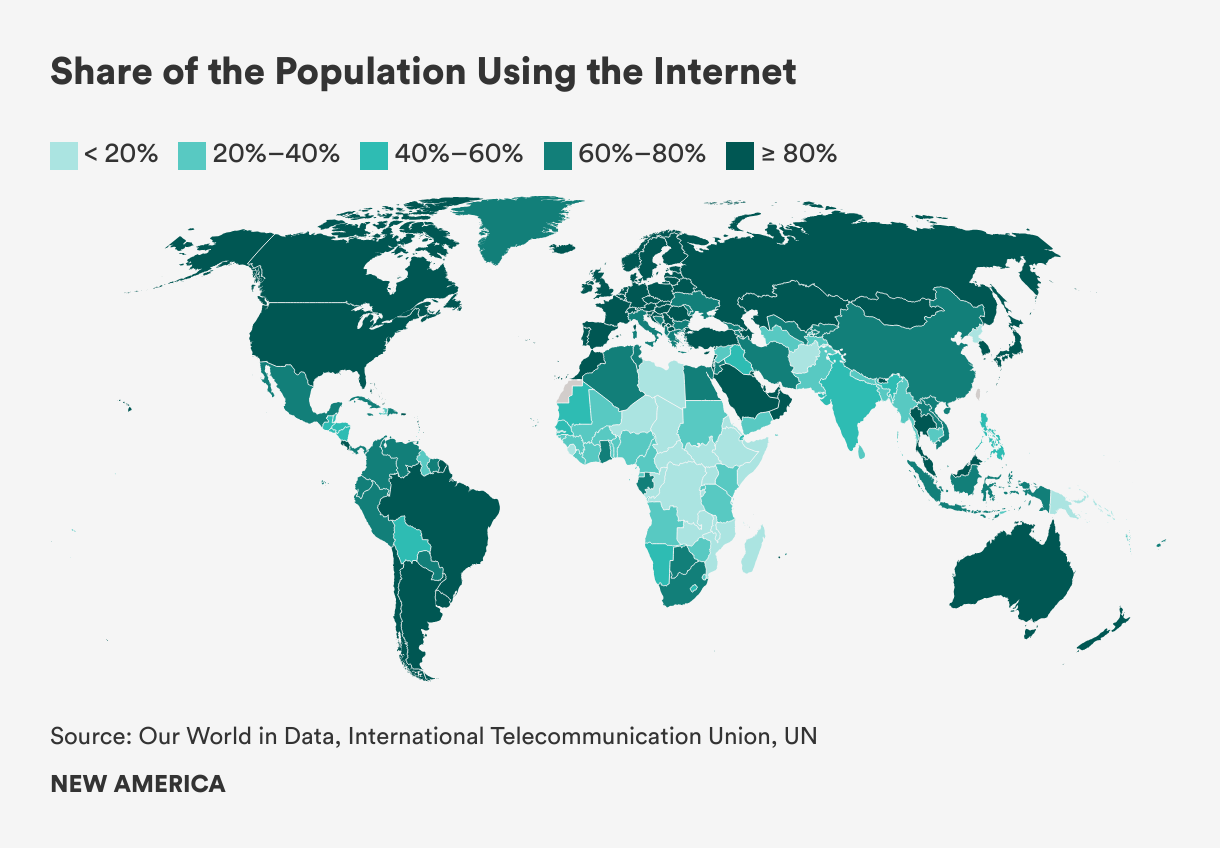

Internet access is similarly divided by geography, with most of the 2.7 billion unconnected people located in the developing world.27 Internet penetration is 89 percent in Europe, over 80 percent in the Americas, and 70 percent in the Arab states, compared to 61 percent in Asia and 40 percent in Africa.28

Differences in internet connectivity and use also extend to gender, age, and rural versus urban populations. As of May 2023, there were 310 million fewer women accessing the internet than men, with women 7 percent less likely to own a mobile phone and 19 percent less likely to use mobile internet than men.29 Younger populations are more likely to be online, with 75 percent of global youth (aged 15 to 24) connected to the internet, compared to 65 percent of the rest of the population.30 In 2021, the number of internet users in urban areas was double the number in rural areas.31 Research has demonstrated significant positive associations between internet use and wage growth, which indicates that certain digital skills and behaviors were rewarded by the labor market.32

Though all of this data paints a clear picture of the digital divide, there are disagreements around the definition of access. In multi-stakeholder forums such as the Internet Governance Forum (IGF), representatives from wealthy nations have dominated the discussion by framing access as a human rights issue, rather than focusing on more immediate concerns such as the need for infrastructure and investment.33 The wider debate over how to expand access is a clash of two visions, the “free market” view, often promoted by large companies, versus “universal service,” whereby a government ensures all citizens have access to the internet. In addition, wealthy nations prioritize issue areas related to internet use and coordinated security policies, while developing nations are concerned with the high costs of international internet traffic and infrastructure development.34

The task force also noted that having access does not necessarily mean freedom of use. Even with physical access to the internet, the design and control of digital infrastructure can prevent free use. The prevailing paradigm of internet infrastructure design and ownership, which is highly centralized, enables shutdowns, which occur when an ISP or government deliberately terminates access to the internet. ISPs are few, and in many jurisdictions they are state-controlled, which concentrates decision-making related to internet access, online content, and cost.

Beyond access are overlapping divides in digital skills, digital use, quality of infrastructure, and content availability. The International Telecommunication Union (ITU) organizes its goals for bridging the digital divide into two categories: universal connectivity and meaningful connectivity.35 It identifies physical, financial, socio-demographic, cognitive, institutional, political, and cultural factors that affect access.36 Despite the multilateral body’s efforts to build consensus among nation-states, there is a lack of alignment among governments, technology companies, start-ups, and nonprofits on the root causes, definitions, issues, and consequences of the digital divide and the overall digital economy. Without harmonization, collaboration has been difficult, as each stakeholder has a limited view of what is a multifaceted issue.37

Geopolitical tensions pose another challenge, especially as technology becomes increasingly central to the power struggle between the U.S. and China.38 One of the battlefields of this strategic competition is access to the internet itself, particularly in the developing world, which is highly reliant on American, Chinese, and European digital infrastructure. As part of its Belt and Road Initiative, China has invested heavily in building digital infrastructure for developing nations; its investment in the African technology sector totaled $7.19 billion from 2005 to 2020.39 Chinese telecoms have been building submarine cables in the developing world, including a 12,000-kilometer cable connecting Pakistan, Europe, and East Africa (called PEACE) that will be maintained by Huawei.40 Chinese hardware frequently includes surveillance technologies that allow recipient governments, as well as China, to collect data and manipulate online content, posing a threat to democracy.

To counter China’s expansion, the U.S. is rallying G-7 nations, the World Bank, ITU, and American and European companies to increase investment in submarine cables.41 It is also using the threat of sanctions to deter countries from accepting Chinese projects.42 Tech giants such as Alphabet, Meta, and Microsoft stand to benefit from increasing U.S. efforts to build digital infrastructure in the developing world, as more bandwidth in the developing world translates to more hours on their platforms and thus more ad revenue.43 However, some researchers warn that if the future of the infrastructure of the internet is entrusted solely to monopolistic technology corporations, the internet will become an ever-more commercialized space primarily serving the financial interests of a handful of companies.44

Issues of power and justice, meanwhile, remain perennial. Wealthy nations are often the producers of digital technologies and infrastructure and designers of virtual worlds and experiences, while developing nations are perpetual consumers. Developing countries are caught between either relinquishing digital sovereignty and giving up control of internet infrastructure or continuing to endure high international traffic costs and slow internet speeds. Some observers call this state of affairs “digital colonialism,” the twenty-first-century version of the nineteenth-century “Scramble for Africa,” in which imperialist nations sought to divide, conquer, and exploit the whole continent for resources.45 In an ominous echo of colonialism’s tainted past, today’s subsea cables often follow the same shipping routes used by the colonial powers in the original contest.

Data Protection and Data Sovereignty

Data protection refers to a set of norms regarding the acceptable use, transfer, and ownership of data that extend beyond the concept of privacy. It has been defined in conflicting ways in various countries.46 The European Union (EU) put forth the most widely accepted definition via the General Data Protection Regulation (GDPR), which treats data protection as a stand-alone right and addresses the abuse, collection, and processing of personal data.47 The GDPR sets limits on data collection, storage, and processing, and creates enforceable standards for lawfulness, fairness, accountability, and transparency.48 It also includes the “right to be forgotten,” whereby users can demand that a company remove their data completely.

Although the European definition has gained traction globally as countries seeking to maintain cross-border data flows with the EU have adopted similar regulations, other nations challenge the European conception of data protection. The U.S. lacks comprehensive federal data protection legislation and has only sector-specific laws that protect education or health data, for instance. Some U.S. states, such as California, have begun to debate and embrace some of the definitions and approaches encompassed in the EU GDPR model. India recently passed a bill for data protection similar to the GDPR; however, it also allows state access to data under certain circumstances, which are determined by the government without public input. China has a strict data protection policy but, like India, allows government access to personal data and, unlike India, restricts cross-border data flows.

As a starting point for mapping the global fault lines in this area, the task force began by disentangling the terms data privacy and data protection. Notions of privacy are grounded in values and norms and vary widely among different cultures, so it would be difficult for a shared understanding of data privacy to emerge and form the basis for a global data protection governance framework. As a general approach, the group determined that discussions about data protection must start with values, then move to rights, and finally consider implementation.

Related to data protection is the notion of data sovereignty, which is also a contested term that came into vogue after former National Security Agency employee Edward Snowden disclosed the U.S. and U.K. governments’ metadata surveillance program.49 A basic definition of data sovereignty is “a state’s ability to control data originating and passing through its territory.”50 Because cyberspace transcends geographic borders, it poses a direct challenge to the prevailing terrestrial nation-state conception of sovereignty as a geographically bound quality determined by international or regional agreement. Other literature describes data sovereignty as it relates to the individual, with some authors likening it to the bodily sovereignty endowed to citizens per Enlightenment conceptions, particularly when it comes to health data, and tying it to the right to personal data protection.51 Across the scholarly discourse, the prevailing themes when it comes to data sovereignty are control and power: Who has sovereignty over different kinds of data, and what can those entities do with that data?

Among nations, data sovereignty is a highly contentious issue. The EU believes that states should have control over the data of their citizens to protect their rights from the overreach of corporations, law enforcement and intelligence agencies, and government regulators. The U.S., on the other hand, sees the control of data as conferring national economic and security benefits and protecting the U.S. from foreign adversaries.52 After the Snowden revelations exposed the extent of U.S. data abuse on national security grounds, this divergence broke out into the open, as the EU terminated the “EU-U.S. Safe Harbour Agreement” and subsequently the “Privacy Shield,” which served as the legal frameworks for regulating cross-border commercial data flows between the EU and the U.S.53

In July 2023, the European Commission adopted a new adequacy decision for the EU-U.S. Data Privacy Framework, which European officials called a “very robust solution” to a long-standing legal debate. However, Max Schrems, an Austrian activist whose legal challenges to the past two privacy frameworks led to their invalidation by the Court of Justice of the EU, has announced that he will challenge this latest iteration.54 The survival of the agreement, as well as any prospective successors, will likely remain in question so long as the U.S. continues to collect the data of foreign nationals under Section 702 of the Foreign Intelligence Surveillance Act.55 At the core of this disagreement is the desire to exercise control over data.

Nation-states attempt to control data by implementing data localization measures, whether for purposes of national security, law enforcement, or influence over the private sector.56 For example, in China, the Data Security Law bans the transfer of all types of data within China to foreign legal or enforcement authorities, unless approval is granted by Chinese government officials.57 While China has strict laws shielding citizens’ data from foreign authorities, no regulations protect that data from the Chinese government: despite its efforts to tighten control over data generated within its territory, the government itself is not bound by the same limitations.58

Harm related to data protection and data sovereignty does not necessarily require the actual misuse of data, such as when data are used to generate predictions about individuals. Even perceptions of data-related harm have deepened distrust between developing nations, powerful countries, and big tech firms. That distinction led the Digital Futures Task Force working group to develop a taxonomy of harm that distinguishes between perceived and measurable harms. A measurable harm might be the use of health data to drive up insurance premiums.

Perceived harms, on the other hand, are grounded in the values of the individual or community. An absence of trust in a digital technology would count as a perceived harm. Furthermore, these harms can occur at different levels: individual, group, societal, national, and geopolitical. An individual may experience harm through a loss of privacy or the misuse of personal data to predict a certain behavioral outcome. A group harm could manifest as an algorithmic bias against a marginalized community, such as immigrants. Societal harm could arise from interference in political processes. Geopolitical harm could be an imbalance in the benefits derived from the data economy between wealthy and developing nations.

The task force identified the top three areas of contestation in the global debate over data: cross-border data flows, usability, and value. The global, open internet relies on cross-border data flows. Impeding those flows for reasons of security or sovereignty reduces the openness and innovation potential of the internet. The usability of data determines its potential benefit, so determining usability is a fault line in data protection. Finally, the appraisal of value (how data are appraised and who is doing the appraising) raises questions about the beneficiaries of data-generated value and the equitable distribution of such gains. At the moment, the economic benefits from data extraction and processing primarily accrue to private firms, which are predominantly located in the U.S. and China. The central conflict over data sovereignty arises from the inherent asymmetry of power between those who create the data and those who extract and control the data.

Digital Identity and Surveillance

Digital identity systems, which are an online representation of a person’s attributes and credentials, can bestow economic and political opportunities on vulnerable populations while also enabling government or corporate surveillance. More than 850 million people, most of whom live in low-income countries or are members of marginalized populations, lack official identification.59 As a result, they are often invisible in the eyes of the state and face economic and legal deprivations, such as the inability to open a bank account; barriers to accessing government services such as social assistance payments and health care; forced eviction; and the threat of judicial and legal abuses.

A digital ID based on biometric data, such as fingerprints or iris scans, can enable governments and nonprofits to identify and deliver benefits to populations in a way that minimizes fraud or inaccuracy. But these systems also introduce the risk of harm, such as privacy violations, personal data abuse, discrimination, and potential for human rights abuses. Debates about digital ID are most salient in the context of development and humanitarian assistance.

Proponents point to benefits, such as access to social services. The government of India’s population-wide digital ID system Aadhaar has expanded financial inclusion and improved the targeting of welfare payments.60 International organizations such as the UN High Commissioner for Refugees (UNHCR) routinely use digital ID to deliver food and cash assistance to refugees and internally displaced populations.61 But critics note that digital ID systems can enable surveillance to exploit vulnerable populations. Governments might use digital ID programs to identify and persecute minorities.62 Humanitarian organizations often contract the development and maintenance of digital ID systems to private corporations, which can introduce risks related to data protection and abuse.

Some scholars argue that these arrangements perpetuate a form of “technocolonialism,” whereby the extraction of data from aid recipients enables multinational corporations to exploit vulnerable populations and experiment with emerging technologies.63 There are deep power inequities present in these transactions, as refugees, asylum seekers, and other vulnerable peoples are measured and translated into data in exchange for aid.64

Digital identity can lead to harms such as increased surveillance, and it raises concerns over how data are collected and used to create generalizations about individuals and groups. Beyond surveillance, this kind of labeling is often done for marketing purposes. Digital identities provided by nonprofits or international organizations can also crowd out national legal identification. For instance, in providing digital identities for refugees, UNHCR alleviates pressure on host states to grant citizenship rights to stateless persons. Finally, as there are significant asymmetries in technical capacity in developing countries, governments often contract private foreign firms to develop and maintain digital identity systems. For example, as a result of one such partnership, Huawei employees are stationed in Kenyan police bureau offices and Kenyan biometric data are located in Shanghai.

Digital surveillance has become a feature of modernity. In his 2007 book Surveillance Studies, the Canadian sociologist David Lyon describes surveillance as “the focused, systematic, and routine attention to personal details for the purposes of influence, management, protection, or direction.”65 Scholars have different perspectives regarding the extent to which digital surveillance represents a contemporary manifestation of Jeremy Bentham’s panopticon, a prison architecture in which inmates are watched from a tower by a watcher they cannot see.66 While some argue that digital surveillance adheres to the fundamental dichotomy of the “watcher” and the “watched,” others contend that it aligns more closely with the notion of a “surveillant assemblage,” in which individuals are transformed into discrete data flows and are subsequently reassembled as virtual “data doubles,” targeted for behavioral intervention.67 These conceptions, plus the use of surveillance in totalitarian regimes, carry negative connotations, but digital surveillance can yield societal benefits. For instance, surveillance is used to manage and contain disease outbreaks as well as to prevent crime.

But states and corporations also use digital surveillance to repress populations, violate rights to expression and privacy, and extract value from individuals. Autocratic states, especially, use digital surveillance systems in a manner that evokes the panopticon.68 Perhaps the most extreme example at the moment is in the western Chinese province of Xinjiang, where the state uses a pervasive surveillance system involving biometric and digital checkpoints, tracking apps, big data processing systems, and social behavior data gathering to control the Muslim Uyghur minority.69 This type of surveillance aims to internalize control, morals, and values within the population, reinforcing the state's disciplinary power.

Western democracies also surveil their citizenry for “national security” purposes. Although Snowden’s disclosures of widespread surveillance of U.S. citizens eventually led to Congress banning the practice, the U.S. government still routinely surveils the public. According to a 2021 report by the Office of the Director of National Intelligence (ODNI), 3.4 million warrantless searches of Americans’ phone, email, and text records were conducted in 2021 under Section 702 of the Foreign Intelligence Surveillance Act.70

In June 2023, ODNI reported that the U.S. government was circumventing the Fourth Amendment by purchasing private data through data brokers. This report asserted that the “U.S. government believes it can ‘persistently’ track the phones of ‘millions of Americans’ without a warrant, so long as it pays for the information.”71 States also partake in this practice, particularly in monitoring social media accounts. Harms associated with these activities include eroding privacy, stifling open communication online due to fear of being monitored, misinterpreting the significance of social media activity, or erroneously attributing criminal conduct based on social media engagement.72 Because of long-standing societal biases, communities of color face an increased risk of surveillance, which exacerbates discrimination and perpetuates power inequities.

In the digital surveillance industry, the separation between the public and private sectors has become blurred. Private firms often carry out government-led surveillance, and corporate surveillance exists only in a conducive regulatory environment. Private companies have pioneered a lucrative business practice known as “surveillance capitalism.”73 Shoshana Zuboff, an American social psychologist and well-known critic of the tech industry, describes surveillance capitalism as a “new economic order that claims human experience as free raw material for hidden commercial practices of extraction, prediction, and sales.”74

Corporations gain access to personal data through their terms and conditions service agreements in which users give away their privacy in exchange for a free online service, such as access to friends’ photos or medical and legal advice. Sometimes, companies do not even ask for permission; Google’s Street View deploys cameras to take photos of people’s private residences without gaining consent from property owners.75 This asymmetry of knowledge between surveillance capitalists and users results in an asymmetry of power, as there is little to no oversight of these practices. Zuboff calls this imbalance “instrumentarian power,” which is a “ubiquitous, sensate, computational, actuating global architecture that renders, monitors, computes, and modifies human behavior.”76

Transnational Cybercrime

Criminal activity has occurred on computer systems for as long as they have been networked. In 1983, when the internet’s predecessor, the Advanced Research Projects Agency Network (ARPANET), was still relatively small, the Federal Bureau of Investigation arrested a group of six young men who had used personal computers and dial-up modems to hack into, and in some cases damage, more than 60 computer systems, including at the Los Alamos National Laboratory.77 A year later, an informant estimated that hackers were committing $200 million in credit card fraud and stealing $100 million in software each year.78

As the globalized internet has expanded, so has transnational cybercrime, proliferating to encompass a wide range of harmful activities targeting computer systems, critical infrastructure, organizations, and individuals. This type of crime has become more sophisticated, commercialized, and pervasive. Whereas most cybercrime was once carried out by individuals or groups of hackers, researchers and law enforcement officials have documented a rise in online criminal groups that regulate or control the production or distribution of a specific illicit product or service, much in the same way a mafia supplies protection or a cartel distributes narcotics.79

Accurate data on the scale and scope of cybercrime are hard to come by, but credible estimates say that damages are growing roughly 15 percent per year and are projected to reach $10.5 trillion by 2025, up from $3 trillion in 2015.80 Of particular note, ransomware attacks, whereby an attacker uses software that encrypts a user’s files and demands payment in exchange for the key, were up 62 percent worldwide from 2019 to 2020, with costs from these attacks increasing more than 60-fold, from $325 million in 2015 to $20 billion in 2021.81 In 2021, U.S. security agencies reported observing ransomware incidents in 14 of 16 critical infrastructure sectors.82 The highest profile of these was an attack that forced the temporary shutdown of 5,500 miles of the Colonial Pipeline on the East Coast, causing gasoline and jet fuel shortages that triggered a rise in gas prices.83

Though cybercrime is an ever-worsening global challenge, there is no precise definition of or consensus on what constitutes a cybercrime, nor are there agreed-upon classification systems that can account for the range of profit-seeking, ideological, and malicious cyber-activities that could qualify.84 In scholarly literature, the two most-cited definitions of cybercrime are (1) “computer-mediated activities which are either illegal or considered illicit by certain parties and which can be conducted through global electronic networks”85 and (2) “any crime that is facilitated or committed using a computer, network, or hardware device.”86 Such definitions are so vague as to lack utility, which has led researchers and policymakers to rely on various, often competing, classification systems. The most basic of these distinguishes between “cyber-enabled” crimes—traditional crimes such as money-laundering, drug trafficking, or terrorism that are facilitated by digital technology—and “cyber-dependent” offenses—crimes such as distributed denial-of-service or ransomware attacks that only exist in the digital world.87 Others establish even more categories.

This definitional ambiguity has implications in the worlds of policy and law. Regulatory and legal regimes vary widely across jurisdictions, confounding attempts at international cooperation and legal harmonization.88 The transnational nature of the digital domain means that cyber criminals can move their operations to less-regulated jurisdictions.

Government attempts to harmonize national laws have faced opposition. The Council of Europe, with participation from Canada, Japan, the Philippines, South Africa, and the U.S., drew up a legally binding treaty known as the Budapest Convention that opened for signature in 2001. The Convention established 14 different cybercrime offenses grouped under a four-category classification system, to which a fifth category was added in 2003: (1) Offenses against the Confidentiality, Integrity, and Availability of Computer Data and Systems; (2) Computer-Related Offenses; (3) Content-Related Offenses; (4) Offenses Related to Infringements of Copyright and Related Rights; and (5) Acts of a Racist and Xenophobic Nature Committed through Computer Systems.89

Members of the Digital Futures Task Force working group on cybercrime agreed that this taxonomy was out of date and too technical. It omits a range of harmful cyber-related or cyber-enabled criminal activities, such as election interference and other political crimes and various forms of online hate speech. As of 2021, only 68 nations were party to the Budapest Convention. Some non-signatory nations agreed with the content of the Convention but not the process by which it was created. India, for instance, cooperated with the Council of Europe to bring its cybercrime legislation in line with the Budapest Convention in 2008, though it refused to sign, in part because it did not participate in its negotiation.90 Autocratic states, many of which sponsor transnational cybercrime activities, opposed the principles of the Convention and organized to undermine it. Russia leads this effort. Although Russia was a member of the Council of Europe when the Budapest Convention was negotiated, the domestic views of the ruling regime in the Kremlin have colored Russia’s subsequent criticism of it. Before Russia exited the Council of Europe in March 2022 on the heels of the war in Ukraine, Kremlin representatives claimed the treaty violated state sovereignty by enabling cross-border cybercrime operations.91

In 2019, Russia, along with Belarus, Cambodia, China, Iran, Myanmar, Nicaragua, Syria, and Venezuela, presented a resolution to the UN General Assembly calling for the establishment of an international convention to combat cybercrime.92 Though the U.S., EU, and other signatories to the Budapest Convention opposed the motion, the resolution passed, leading to negotiations that, as of this writing, are ongoing. Language in the resolution, as well as a Russian draft treaty backed by China, proposed a vague definition of cybercrime, prompting the assertion by the U.S., EU, and other states, as well as human and digital rights groups, that the intention of this resolution was to give cover for autocratic states wanting to criminalize ordinary online expression and to exercise greater state control over the internet.93 The Russian-led effort to establish an international cybercrime convention at the UN is part of a larger campaign that autocracies are waging in international fora such as the ITU to change global cyber norms and governance so that the internet is brought under greater state control.94

In the view of the Digital Futures Task Force working group on cybercrime, a useful global-consensus taxonomy of cybercrime would be nearly impossible. Putting aside the fact that some states sponsor transnational cybercrime, different regions and groups experience cybercrime differently. Norms surrounding privacy and acceptable speech vary from one jurisdiction and culture to another, for instance. In addition, technical literacy and capacity determine the real and perceived harm of a cybercrime. Low-income countries might have justifiably little interest in passing cybercrime legislation when the lack of broadband access or electricity is a more pressing concern for the population.

A more tractable approach, therefore, might be to define and categorize cybercrime on a regional basis. Venues for such efforts already exist. For instance, since 2013, the Association of Southeast Asian Nations (ASEAN) has convened a Senior Officials Meeting on Transnational Cybercrime to coordinate regional approaches to cybercrime, share information, conduct training, and carry out capacity-building activities. But the group agreed that the borderless nature of digital space confounds a regional approach. Criminal activity would shift to the most lenient, and, in many cases, the most vulnerable parts of the world.

One solution to this problem could be to distinguish between technical cybercrimes and social cybercrimes. Technical crimes (offenses against the confidentiality, integrity, and availability of computer data and systems) are universally measurable and consistent, whereas social cybercrimes are context-dependent. This leads to the idea that instead of focusing on the supply side (i.e., the perpetrators of cybercrimes), one could base a definitional framework around the demand side (i.e., the targets and victims). A definition rooted in this approach might stand a better chance of global acceptance, while allowing for local variation and national self-determination. The autocracies that sponsor and enable transnational cybercrime would still defect, but a demand-side framework might also encourage a greater emphasis on demand-side governance solutions: capacity building, victim compensation, and cyber awareness and education. As a practical matter, that might be a more promising governance approach, since, so long as autocracies continue to enable transnational cybercrime, curbing the global supply of cybercriminal activity will be a challenge.

Citations

- John McCarthy’s work and life have been memorialized by the Computer History Museum. To learn more, read McCarthy’s bio here: source.

- Tim Büthe, Christian Djeffal, Christoph Lütge, Sabine Maasen, and Nora von Ingersleben-Seip, “Governing AI—Attempting to Herd Cats? Introduction to the Special Issue on the Governance of Artificial Intelligence,” Journal of European Public Policy 29, no. 11 (November 4, 2022): 1721–1752, source.

- Raffaele Pugliese, Stefano Regondi, and Ricardo Marini, “Machine Learning-Based Approach: Global Trends, Research Directions, and Regulatory Standpoints,” Data Science and Management 4 (December 2021): 19–29, source.

- Emily Aiken, Suzanne Bellue, Dean Karlan, Christopher R. Udry, and Joshua Blumenstock, Machine Learning and Mobile Phone Data Can Improve the Targeting of Humanitarian Assistance (Cambridge, MA: National Bureau of Economic Research, July 2021), source.

- Faiz Siddiqui and Jeremy B. Merrill, “17 Fatalities, 736 Crashes: The Shocking Toll of Tesla’s Autopilot,” Washington Post, June 10, 2023, source.

- Alex Campolo, Madelyn Sanfilippo, Meredith Whittaker, and Kate Crawford, AI Now 2017 Report (New York: AI Now Institute, October 18, 2017), source.

- For an example of gender bias, see Joy Buolamwini and Timnit Gebru, “Gender Shades: Intersectional Accuracy Disparities in Commercial Gender Classification,” Proceedings of Machine Learning Research 81 (2018): 1–15, source.

- Krystal Hu, “ChatGPT Sets Record for Fastest-Growing User Base—Analyst Note,” Reuters, February 2, 2023, source.

- Policymaking in the Pause (Cambridge, MA: Future of Life Institute, April 12, 2023), source; Governing AI: A Blueprint for the Future (Redmond, WA: Microsoft, 2023), source.

- “Statement on AI Risk,” Center for AI Safety, May 30, 2023, source. See also: “An Overview of Catastrophic AI Risks,” Center for AI Safety, source.

- “Open Letter to News Media and Policy Makers re: Tech Experts from the Global Majority,” Free Press, May 8, 2023, source; Seth Lazar, Jeremy Howard, and Arvind Narayanan, “Is Avoiding Extinction from AI Really an Urgent Priority?” AI Snake Oil (blog), May 31, 2023, source.

- Blake Richards, Blaise Aguera y Arcas, Guillaume Lajoie, and Dhanya Sridhar, “The Illusion of AI’s Existential Risk,” Noema, July 18, 2023, source; Kenan Malik, “Fantasy Fears about AI Are Obscuring How We Already Abuse Machine Intelligence,” Guardian, June 11, 2023, source.

- Langdon Winner, “Do Artifacts Have Politics?” Daedalus 109, no. 1 (Winter 1980): 121–136, source.

- Nestor Maslej et al., Artificial Intelligence Index Report 2023 (Stanford, CA: Stanford Institute for Human-Centered Artificial Intelligence, April 2023), source.

- Emily M. Bender, Timnit Gebru, Angelina McMillan-Major, and Shmargaret Shmitchell, “On the Dangers of Stochastic Parrots: Can Language Models Be Too Big?” in FAccT ’21 Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency (March 2021): 610–623, source.

- Roxanne Heston and Remco Zwetsloot, Mapping U.S. Multinationals’ Global AI R&D Activity (Washington, DC: Center for Security and Emerging Technology, December 2020), source.

- Liz Lindqwister, “San Francisco’s Next Gold Rush Is Already Here, and You’ve Been Using It for Years,” San Francisco Standard, January 23, 2023, source.

- Josh Dzieza, “AI Is a Lot of Work,” Verge, June 20, 2023, source.

- Billy Perrigo, “Inside Facebook’s African Sweatshop,” Time, February 14, 2022, source.

- “Generative AI Could Raise Global GDP by 7%,” Goldman Sachs, April 5, 2023, source.

- Jacques Bughin, Jeongmin Seong, James Manyika, Michael Chui, and Raoul Joshi, Notes from the AI Frontier: Modeling the Impact of AI on the World Economy (Washington, DC: McKinsey Global Institute, September 4, 2018), source.

- Chinmayi Arun, “AI and the Global South: Designing for Other Worlds,” in The Oxford Handbook of Ethics of AI, ed. Markus D. Dubber, Frank Pasquale, and Sunit Das (Oxford, UK: Oxford Academic, July 9, 2020), source.

- Adam Zable and Susan Ariel Aaronson, For the People but Not by the People: Public Engagement in National AI Strategies (Washington, DC: Digital Trade and Data Governance Hub at George Washington University, December 22, 2022), source.

- John Villasenor, Products Liability Law as a Way to Address AI Harms (Washington, DC: Brookings Institution, 2019), source; James Guszcza, Iyad Rahwan, Will Bible, Manuel Cebrian, and Vic Katyal, “Why We Need to Audit Algorithms,” Harvard Business Review, November 28, 2018, source.

- Internet Society, “Promoting the Internet Exchange Points (IXP) Development,” December 2012, source; Daniel Opperman, ed., Internet Governance in the Global South: History, Theory, and Contemporary Debates (São Paulo, Brazil: University of São Paulo, 2008), source.

- Internet Society, Promoting the Internet Exchange Points (IXP) Development.

- International Telecommunication Union, “Internet Surge Slows as Pandemic Impact Evolves,” United Nations, September 16, 2022, source.

- International Telecommunication Union, “Internet Surge Slows as Pandemic Impact Evolves,” source.

- Nadia Jeffrie, The Mobile Gender Gap Report 2023 (London, UK: GSMA, 2023), source.

- International Telecommunication Union, “ICT Facts and Figures 2022,” source.

- “Digital Development,” World Bank, March 31, 2023, source.

- Paul DiMaggio and Bart Bonikowski, “Make Money Surfing the Web? The Impact of Internet Use on the Earnings of U.S. Workers,” American Sociological Review 73, no. 2 (April 1, 2008), source.

- Slavka Antonova, “Digital Divide in Global Internet Governance: The ‘Access’ Issue Area,” Journal of Power, Politics, and Governance 2, no. 2 (June 2014): 101–125, source.

- Antonova, “Digital Divide in Global Internet Governance.”

- International Telecommunication Union, “Internet Surge Slows as Pandemic Impact Evolves,” International Telecommunication Union (press release), September 16, 2022, source.

- Natalia Williams, “Overview on Global Digital Divide,” Global Journal of Technology and Optimization 2, no. 1 (2022), source.

- Akshit Singla, “Understanding the Impact of Social Media on Decision Making” (master's thesis, Massachusetts Institute of Technology, September 2022), source.

- Cheng Li, “Worsening Global Digital Divide as the US and China Continue Zero-Sum Competitions,” Order from Chaos (blog), Brookings Institution, October 11, 2021, source.

- American Enterprise Institute, China Global Investment Tracker (Washington, DC: The American Enterprise Institute, 2023), source; Aubrey Hruby, “The Digital Infrastructure Imperative in African Markets,” AfricaSource (blog), Atlantic Council, April 8, 2021, source.

- Juliet Nanfuka, Digital Access and Economic Transformation in Africa (New York: Institute for New Economic Thinking, March 2022), source.

- Mayumi Hirosawa and Ryohei Yasoshima, “G-7 to Support Deep-Sea Cable Network for Emerging Nations,” SubTel Forum, April 26, 2023, source.

- Joe Brock, “U.S.-China Tech Cables: The Untold Story of How the U.S. Decided What China Could Learn,” Reuters, March 24, 2023, source.

- “2Africa Subsea Cable Makes First Landing in Genoa, Italy,” Meta Newsroom, April 2022, source; Paul Lipscombe, “Google Officially Launches Equiano Subsea Cable,” Datacenter Dynamics, source.

- Andrew Blum and Carey Baraka, “Google Meta Is Building an Underwater Cable Empire,” Rest of World, October 4, 2022, source.

- Danielle Coleman, “Digital Colonialism: The 21st Century Scramble for Africa through the Extraction and Control of User Data and the Limitations of Data Protection Laws,” Michigan Journal of Race and Law 24 (2019): 417–439, source.

- Lee Bygrave, “Privacy and Data Protection in an International Perspective,” Scandinavian Studies in Law 56 (2011): 139–164, source.

- Privacy International, Data Protection, Explained (London, UK: Privacy International, 2018), source.

- GDPR.EU, “What is GDPR? A Guide to the General Data Protection Regulation,” European Union, source.

- Agustín Rossi, “How the Snowden Revelations Saved the EU General Data Protection Regulation,” International Spectator 53, no. 4 (2018): 95–111, source.

- Marie Baezner and Robin Patrice, “Cyber Sovereignty and Data Sovereignty,” CSS CyberDefense Trend Analysis 2, no. 2 (2018), source.

- Anita Gurumurthy and Nandini Chami, Beyond Data Bodies: New Directions for a Feminist Theory of Data Sovereignty (Bengaluru, India: IT for Change, January 17, 2022), source.

- Shoshana Zuboff, The Age of Surveillance Capitalism: The Fight for the Future at the New Frontier of Power (London, UK: Profile Books, 2019), 360–363.

- Dennis Broeders, Fabio Cristiano, and Monika Kaminska, “In Search of Digital Sovereignty and Strategic Autonomy: Normative Power Europe to the Test of Its Geopolitical Ambitions,” JCMS: Journal of Common Market Studies (2023), source.

- Cynthia O’Donoghue et al., “Third Time’s a Charm: European Commission Adopts EU-U.S. Data Privacy Framework,” Reed Smith's Technology Law Dispatch, July 12, 2023, source.

- Natasha Lomas, “Europe Adopts US Data Adequacy Decision,” TechCrunch, July 10, 2023, source.

- Karthik Nachiappan, “The International Politics of Data: When Control Trumps Protection,” Raisina Debates (blog), Observer Research Foundation, October 26, 2022, source.

- Sammy Fang and Han Liang, “China's Emerging Data Protection Laws Bring Challenges for Conducting Investigations in China,” DLA Piper, July 24, 2022, source.

- Susan Ariel Aaronson, Data is Disruptive: How Data Sovereignty is Challenging Data Governance (Singapore: Hinrich Foundation, August 2021), source.

- Julia Clark, Anna Diofasi, and Claire Casher, “850 Million People Globally Don't Have ID: Why It Matters and What We Can Do About It,” Digital Development (blog), World Bank, February 6, 2023, source.

- Rahul Verma and Shantanu Kulshrestha, Democratizing the Digital Space: Harnessing Technology to Amplify Participation in Governance Processes in the Global South (New York: UNDP, June 29, 2022), source.

- Nannie Sköld, “UNHCR Strengthens Efforts on Digital Identity for Refugees with Estonian Support,” UNHCR: Nordic and Baltic Countries, June 12, 2021, source.

- Mirca Madianou, “Technocolonialism: Digital Innovation and Data Practices in the Humanitarian Response to Refugee Crises,” Social Media + Society 5 (July–September 2019): 1–13, source.

- Madianou, “Technocolonialism,” source.

- Madianou, “Technocolonialism,” source.

- David Lyon, Surveillance Studies: An Overview (Malden, MA: Polity Press, 2007), 14.

- Thomas Mathiesen, “The Viewer Society: Michel Foucault's ‘Panopticon’ Revisited,” Theoretical Criminology 1, no. 2 (1997): 215–234, source.

- Kevin D. Haggerty and Richard V. Ericson, “The Surveillant Assemblage,” British Journal of Sociology 51, no. 4 (2000): 605–622, source.

- For further reading, see Michel Foucault, Discipline and Punish: The Birth of the Prison (New York: Pantheon Books, 1977), 203.

- Christina Larson, “Who Needs Democracy When You Have Data?” MIT Technology Review, August 20, 2018, source.

- Office of the Director of National Intelligence, Annual Statistical Transparency Report Regarding the Intelligence Community’s Use of National Security Surveillance Authorities (Washington, DC: Office of the Director of National Intelligence, April 2022), source.

- Dell Cameron, “The US Is Openly Stockpiling Dirt on All Its Citizens,” Wired, June 12, 2023, source.

- Rachel Levinson-Waldman, Harsha Panduranga, and Faiza Patel, Social Media Surveillance by the U.S. Government (New York: Brennan Center for Justice, January 7, 2022), www.brennancenter.org/our-work/research-reports/social-media-surveillance-us-government.

- Zuboff, The Age of Surveillance Capitalism, 14–15.

- Zuboff, The Age of Surveillance Capitalism, 8.

- Siva Vaidhyanathan, The Googlization of Everything (And Why We Should Worry) (Berkeley: University of California Press, 2011), 98–107.

- Zuboff, The Age of Surveillance Capitalism, 353.

- For details on that exploit and more during the early years of networked digital tech, see: Hugo Cornwall, The Hacker’s Handbook (London, UK: Century Communications Ltd., 1985), source.

- Susan W. Brenner, Cybercrime: Criminal Threats from Cyberspace (Santa Barbara, CA: Praeger, 2010), 16.

- Jonathan Lusthaus, “How Organized Is Organized Cybercrime?” Global Crime 14, no. 1 (2013), source.

- Steve Morgan, “Cybercrime to Cost the World $10.5 Trillion Annually by 2025,” Cybercrime Magazine, November 13, 2020, source.

- Geoff Blaine, “Tipping Point: SonicWall Exposes Soaring Threat Levels, Historic Power Shifts in New Report,” SonicWall (blog), March 16, 2021, source; David Braue, “Global Ransomware Damage Costs Predicted to Exceed $265 Billion by 2031,” Cybercrime Magazine, June 2, 2022, source.

- “Cybersecurity Advisory: 2021 Trends Show Increased Globalized Threat of Ransomware,” U.S. Cybersecurity and Infrastructure Security Agency, February 10, 2022, source.

- David E. Sanger, Clifford Krauss, and Nicole Perlroth, “Cyberattack Forces a Shutdown of a Top U.S. Pipeline,” New York Times, May 8, 2021, source.

- Ravinder Barn and Balbir Barn, “An Ontological Representation of a Taxonomy for Cybercrime,” in Proceedings of the 24th European Conference on Information Systems (ECIS 2016), Istanbul, Turkey, June 2016, source.

- Douglas Thomas and Brian Loader, “Introduction-Cybercrime: Law Enforcement, Security and Surveillance in the Information Age,” in Cybercrime: Law Enforcement, Security and Surveillance in the Information Age, ed. Douglas Thomas and Brian Loader (London, UK: Routledge, 2000).

- Sarah Gordon and Richard Ford, “On the Definition and Classification of Cybercrime,” Journal in Computer Virology 2 (2006): 13–20, 10.1007/s11416-006-0015-z.

- Susan W. Brenner, “Cybercrime: Re-thinking Crime Control Strategies,” in Crime Online, ed. Yvonne Jewkes (Cullompton, UK: Willan Publishing, 2007), 12–28.

- Kirsty Phillips, Julia C. Davidson, Ruby R. Farr, Christine Burkhardt, Stefano Caneppele, and Mary Aiken, “Conceptualizing Cybercrime: Definitions, Typologies and Taxonomies,” Forensic Sciences 2, no. 2 (2022): 379–398, source.

- Convention on Cybercrime: Special Edition Dedicated to the Drafters of the Convention (1997–2001) (Strasbourg, France: Council of Europe, March 2022), source.

- Alexander Seger, “India and the Budapest Convention: Why Not?” Digital Frontiers (blog), Observer Research Foundation, October 20, 2016, source.

- Mercedes Page, “The Hypocrisy of Russia’s Push for a New Global Cybercrime Treaty,” The Interpreter (blog), Lowy Institute, March 7, 2022, source.

- 74th session of UN General Assembly, “Countering the Use of Information and Communications Technologies for Criminal Purposes: Report of the Third Committee,” United Nations, November 25, 2019, source.

- “Open Letter to UN General Assembly: Proposed International Convention on Cybercrime Poses a Threat to Human Rights Online,” Association for Progressive Communications, November 6, 2019, source.

- Mercedes Page, “The Election for the Future of the Internet,” The Interpreter (blog), Lowy Institute, February 24, 2022, source.

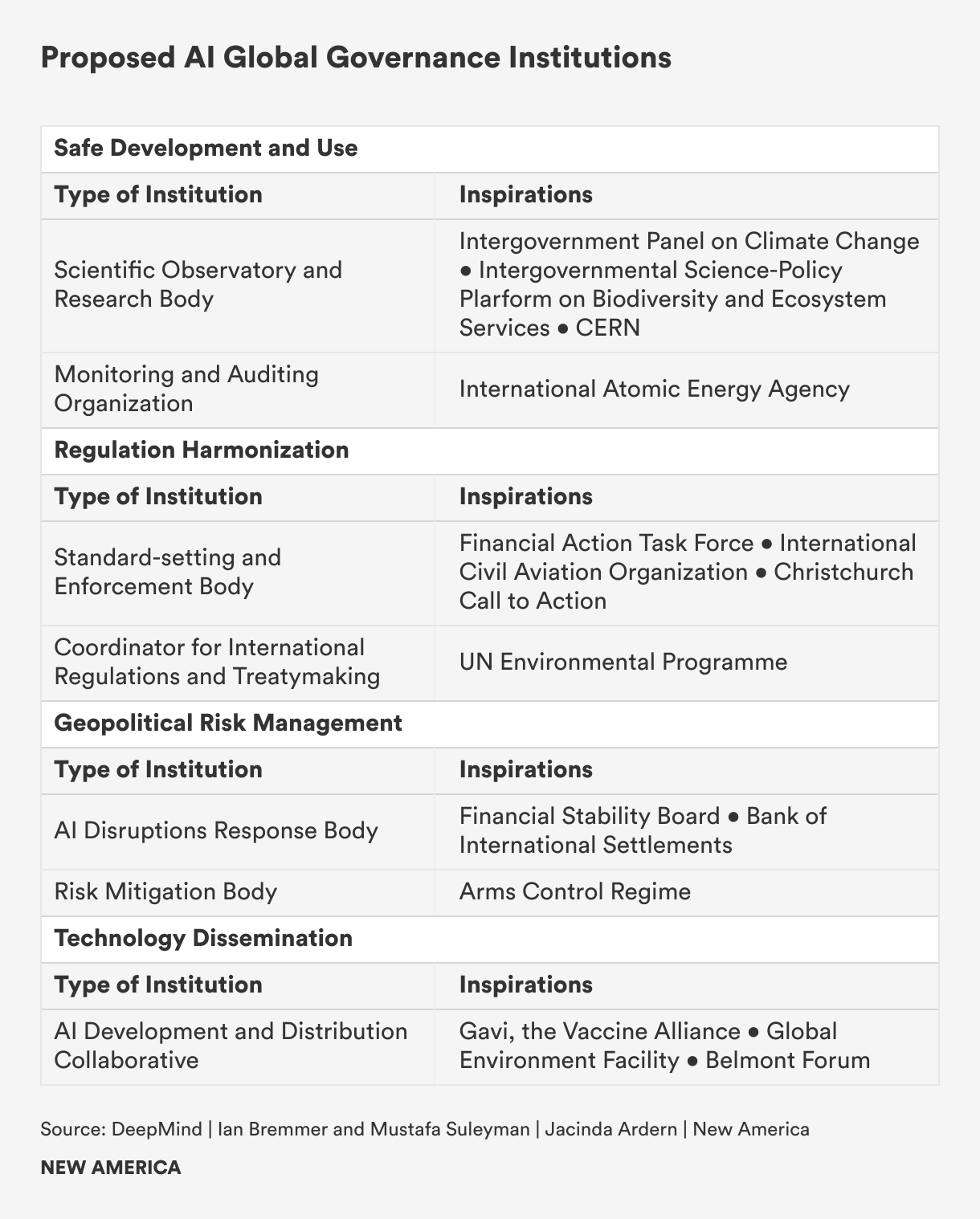

The Global Digital Governance Map

Currently, a patchwork of national regulatory regimes, multilateral bodies, corporate policies, and multi-stakeholder organizations governs the various layers of the digital domain. Internet governance has traditionally fallen to the private sector and technical community, as internet service providers and telecommunications companies built and own much of the world’s network infrastructure. Among nations, there is little agreement over the rules of cyberspace. The establishment of the World Wide Web in 1991 came at the start of a short-lived period of American hegemony, and as the internet expanded globally, nations mostly deferred to U.S. norms for cyberspace. But as the world has become multipolar in the twenty-first century, governance of the digital domain has become an increasingly contested front line in geopolitical power struggles.