Table of Contents

- Executive Summary

- Introduction

- Targeted Advertising and COVID-19 Misinformation: A Toxic Combination

- Human Rights: Our Best Toolbox for Platform Accountability

- Making All Ads “Honest” Through Transparency, Limited Targeting, and Enforcement

- By Protecting Data, Federal Privacy Law Can Reduce Algorithmic Targeting and the Spread of Disinformation

- Good Content Governance Requires Good Corporate Governance

- Without Civil Society, Platform Accountability is a Pipe Dream

- Key Recommendations for Policymakers

- Conclusion

Without Civil Society, Platform Accountability is a Pipe Dream

One of this report’s aims has been to suggest how Congress can most constructively and effectively hold digital platforms accountable for the social impact of their targeted advertising business models and algorithmic systems. Passing laws and boosting the power of regulatory agencies are essential steps for strengthening the governance and accountability of digital platforms. But even if law could keep up with technological change, responsive regulation is not possible without the work of a robust and independent civil society.

Key civil society actors include independent social science researchers and investigative journalists, watchdog and grassroots organizations, shareholders and investor alliances, and even allies and employee activists within the companies themselves. Researchers and journalists document and investigate harms while creating an evidence base that all stakeholders can access and use to push companies to respond and improve their policies and practices. Neither this report series nor RDR's work more generally would be possible without the work of these people and organizations, many of whom are cited in our footnotes. The evidence and data they produce help advocacy and grassroots organizations, policymakers, and investors not only identify what needs to change but also gain the credibility they need to drive the policy formulation, pressure campaigns, and responsible investing standards necessary to change it.

In the absence of effective regulation, independent civil society has an especially vital role to play in developing and deploying accountability mechanisms that fill key gaps where law and regulation have failed to hold companies responsible for their impact on society.

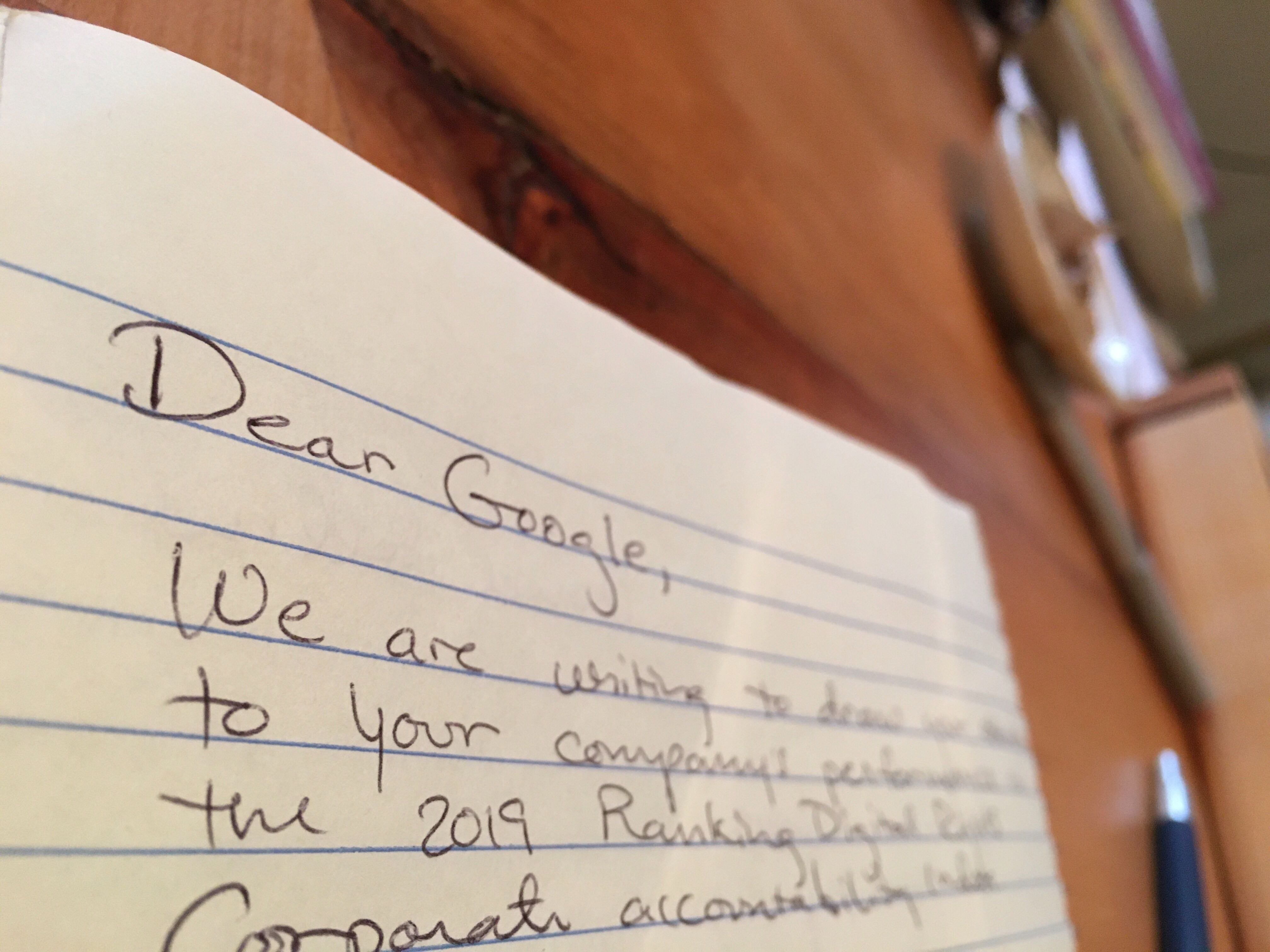

RDR is one of many such efforts, working closely with a network of researchers and civil society advocates and relying heavily on the work of investigative journalists, many of whom have been cited in this report. The RDR Index methodology, which serves as the framework for ranking global digital platforms and telecommunications companies on their respect for users' human rights, was informed by two decades of academic research and investigative journalism, as well as by documentation from human rights advocates around the world. This body of work, produced collectively over time by a broad range of civil society actors, has clarified exactly how company policies, processes, and practices can affect users' human rights. Once companies are evaluated in the annual RDR Index, civil society advocates use the results to call for specific changes by companies and governments. Shareholders use the data to inform their engagement with companies as well as formal shareholder resolutions. This report itself is an example of how policymakers can use the RDR Index and related research to guide legal and regulatory reforms.

For social media platforms serious about respecting users' rights and civil liberties and protecting democratic discourse, complying with laws and engaging with policymakers are not enough. The only way to fully understand how their business operations affect society and users' rights is to consult with a wide range of stakeholders, whether by responding to open letters, participating in dialogue, or joining more formal multi-stakeholder processes. For this reason, the RDR Index methodology evaluates whether companies engage with a range of stakeholders, particularly those who face human rights risks in connection with their online activities. Where regulatory gaps exist, or where law does not help companies understand their risks and demonstrate to stakeholders that those risks are being adequately addressed, companies should participate in processes and mechanisms through which stakeholders can hold them accountable.

Ellery Biddle

At a minimum, RDR researchers look for evidence that a company initiates or participates in meetings with stakeholders who represent, advocate on behalf of, or are themselves people whose freedom of expression and privacy are directly affected by the company's business. Companies that score well in the RDR Index do more than merely engage with responsible investors, civil society groups, and academic experts about their social impacts and human rights risks. The best-performing companies stretch their stakeholder engagement beyond dialogue to accountability by participating in multi-stakeholder initiatives, such as the Global Network Initiative (GNI). Google and Facebook (but not Twitter) are members of GNI, which brings companies together with NGOs, investors, and academics to address the human rights risks related to government censorship and surveillance around the world.1 As members, they commit to respect and protect users' freedom of expression and privacy in the face of government demands to remove content or hand over user data. As company members, they are also subject to independent assessments, overseen by a multi-stakeholder board, to determine whether they are satisfactorily upholding their commitments.2

Because Google and Facebook disclose evidence, verified by independent GNI assessors, that they conduct due diligence on how government demands for user data and content removal affect users’ human rights, they score well in the governance category of the RDR Index compared to many other companies (including Twitter), despite a serious lack of similar due diligence or disclosures related to algorithmic systems and targeted advertising.3 GNI does not address those aspects of the companies’ business, however.4

Companies have yet to work with civil society to build multi-stakeholder accountability mechanisms that would address the social and human rights impact of their business operations not related to government censorship and surveillance demands. Companies do engage in multi-stakeholder fora about content moderation and algorithmic systems, but without independent assessments or related accountability mechanisms. Google and Facebook are both members of the Partnership on AI (PAI), where they work with academics and NGOs to address thorny questions related to the ethics of artificial intelligence.5 While the PAI board includes non-company members, the organization has no mechanisms for accountability; companies are not subject to assessment and they are not held to a clear set of standards, including a commitment to human rights principles, as a condition of membership.

Facebook, Google, and Twitter also engage with a coalition of experts and NGOs who advocate for the Santa Clara Principles on Transparency and Accountability on Content Moderation. All three have endorsed the principles.6 But a recent evaluation by New America’s Open Technology Institute found that they all fall very short in actually implementing them, disclosing inadequate information about how humans and algorithms moderate content on their platforms.7 Companies also reach out to experts and NGOs for advice and input on policies, products and programs. For example, Twitter has created a Trust and Safety Council comprising a long list of experts and organizations ranging from the Anti-Defamation League to the Center for Democracy and Technology to the National Center for Missing and Exploited Children.8 Such engagement is at the companies’ invitation and on their terms.

To date, the most talked-about attempt at stakeholder engagement has been the launch of the Facebook Oversight Board. Funded and staffed by an independent trust, the Oversight Board brings together an international group of experts who are tasked with adjudicating Facebook's most difficult and controversial content moderation cases.9 Given the extraordinary complexity and sensitivity of the issues it will consider and the platform's global reach, Facebook's willingness to open up its content moderation processes and decisions to world-class external expertise deserves praise. Still, the Oversight Board oversees only decisions related to content removal. It has no say in the company's business decisions, including Facebook's targeted advertising business model.10 While the board's decisions on individual content cases will be considered binding, Facebook CEO Mark Zuckerberg is under no obligation to heed broader concerns its members may raise about targeted advertising and algorithmic systems. The launch of the Oversight Board therefore does nothing to change the need to hold the company accountable through strong privacy law, antitrust enforcement, due diligence and transparency requirements, and corporate governance reforms. Rather, it serves as another reminder that effective corporate accountability mechanisms must involve multiple actors applying pressure at various points and, most important, cannot be self-designed or self-enforced.

Holding companies accountable to the public interest is the responsibility of lawmakers, in consultation with civil society. In the next section, we outline our recommendations for concrete actions Congress should take to address the current corporate accountability gap.

Citations

- Global Network Initiative. 2020. “Global Network Initiative.” Global Network Initiative. source (May 16, 2020).

- Global Network Initiative. 2020. The GNI Principles at Work: Public Report on the Third Cycle of Independent Assessments of GNI Company Members 2018/2019. source.

- See Governance section, Ranking Digital Rights. 2019. Corporate Accountability Index. Washington, DC: New America. source.

- The scope of GNI’s work does not include commercial privacy issues that are not directly related to government surveillance actions or user data demands. Nor does GNI address content moderation carried out to enforce companies’ own rules if the restrictions are not made in response to government demands or direct requirements. Any expansion of GNI’s scope would need the support of its board of directors. At present, GNI’s mandate does not cover the human rights risks caused by commercial business practices and mechanisms including content moderation, algorithmic systems for content recommendation and prioritization, commercial data collection and sharing, or human rights concerns related to targeted advertising business models.

- “The Partnership on AI.” source (May 16, 2020).

- Crocker, Andrew et al. 2019. Who Has Your Back? Censorship Edition 2019. Electronic Frontier Foundation. source (May 16, 2020).

- Singh, Spandana. 2019. Assessing YouTube, Facebook and Twitter’s Content Takedown Policies. Washington: New America. source (May 16, 2020).

- Twitter. “Twitter Safety Partners.” source (May 16, 2020).

- Hern, Alex. 2020. “Facebook Judges, Journalists and Politicians on Free Speech Panel.” The Guardian. source (May 16, 2020). Botero-Marino, Catalina, Jamal Greene, Michael W. McConnell, and Helle Thorning-Schmidt. 2020. “Opinion | We Are a New Board Overseeing Facebook. Here’s What We’ll Decide.” The New York Times. source (May 16, 2020).

- Wong, Julia Carrie. 2020. “Will Facebook’s New Oversight Board Be a Radical Shift or a Reputational Shield?” The Guardian. source (May 16, 2020).