Table of Contents

- Executive Summary

- Introduction

- Targeted Advertising and COVID-19 Misinformation: A Toxic Combination

- Human Rights: Our Best Toolbox for Platform Accountability

- Making All Ads “Honest” Through Transparency, Limited Targeting, and Enforcement

- By Protecting Data, Federal Privacy Law Can Reduce Algorithmic Targeting and the Spread of Disinformation

- Good Content Governance Requires Good Corporate Governance

- Without Civil Society, Platform Accountability is a Pipe Dream

- Key Recommendations for Policymakers

- Conclusion

Targeted Advertising and COVID-19 Misinformation: A Toxic Combination

In March 2020, Facebook, Twitter, Google, YouTube, and others committed to join forces and work closely with networks of fact-checkers and researchers to combat the spread of pandemic-related misinformation.1 In April, as the U.S. economy slowed to a crawl and many state and local governments ordered people to stay home, Facebook took the further step of removing some posts that openly called on people to violate government orders, and committed to displaying warnings to people who had interacted with misinformation about COVID-19.2

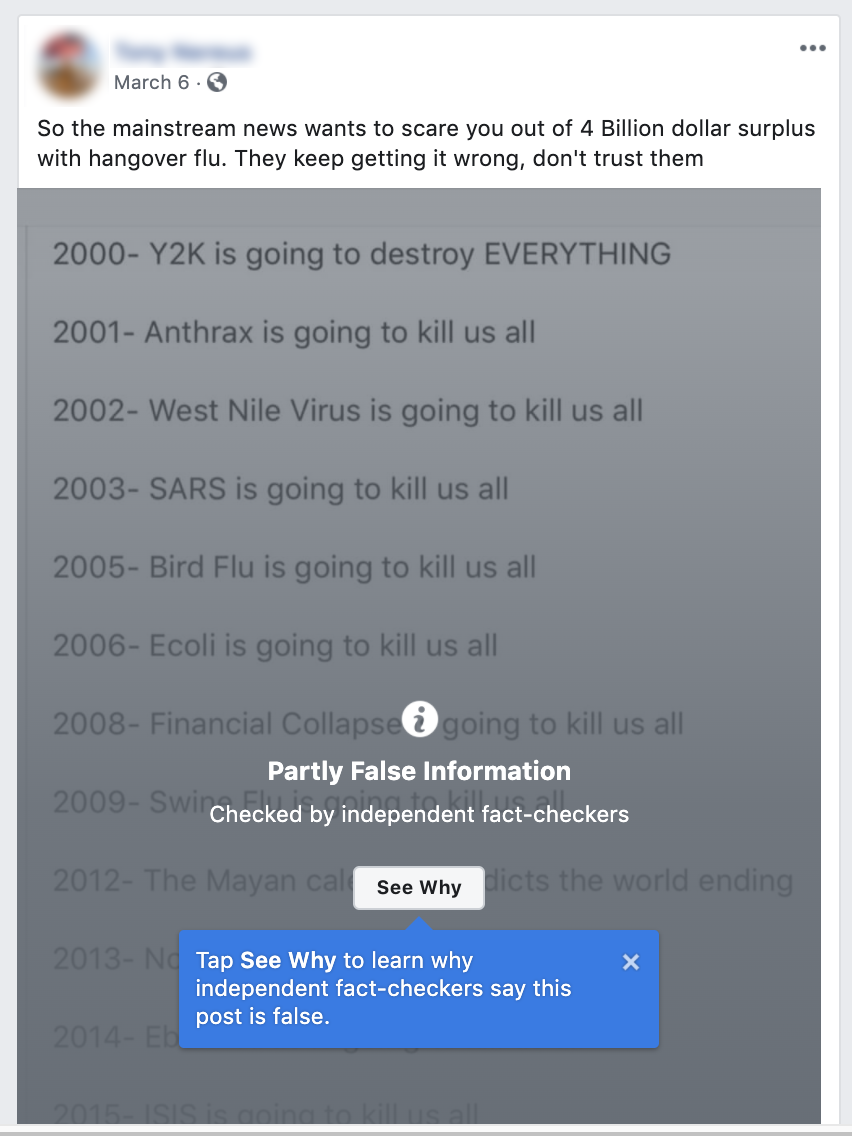

Yet the torrent of misinformation continued, despite the best efforts of a growing number of organizations dedicated to external monitoring, flagging, and fact-checking. The platforms’ content moderation systems could not keep up and also made mistakes.3 The University of Oxford’s Reuters Institute, examining a sample of 225 pieces of misinformation posted between January and the end of March, found that while more than half of the content was either deleted or labeled with warnings, significant amounts of such content remained in circulation.4 Fifty-nine percent of posts flagged as false by fact-checkers remained on Twitter without any warning labels. Among the tweets that did get deleted was one by Rudy Giuliani, Trump’s personal attorney and former mayor of New York City, quoting a well-known conservative activist making the false claim that “hydroxychloroquine has been shown to have a 100% effective rate treating COVID-19.”5

YouTube, for its part, kept up 27 percent of content flagged as false, while 24 percent of posts containing content that fact-checkers had identified to be false remained on Facebook without warnings of any kind.6 But what is the actual prevalence of pandemic-related misinformation across the platforms? Facebook disclosed that in March it had displayed fact-checking labels on 40 million dangerous posts, based on 4,000 articles that its third-party reviewers had rated as false.7 Given that roughly a quarter of the false content in the Oxford sample remained on Facebook without a warning, we can conclude that millions of pieces of COVID-19 misinformation likely remained in circulation.

Also by March, fact-checking organizations reported being overwhelmed as the volume of potential misinformation related to the pandemic exploded.8 And when governments around the world started issuing mandatory stay-at-home orders to mitigate contagion, the platforms announced that their human content moderators could no longer work in the office, and that most could not work remotely because they lacked home internet access and because of privacy concerns. As a result, the platforms said they would need to rely more heavily on content moderation algorithms to determine what needs to be deleted. They warned that this would likely result in more mistakes being made in determining what content does or does not violate the platform rules.9

The platforms have also failed to stem the flow of paid misinformation and deliberate disinformation. In mid-March, Consumer Reports journalist Kaveh Waddell decided to test the effectiveness of Facebook’s commitment to police its ad platform more closely. He created a page for a fake organization, then submitted several advertisements to run on the platform, calling the coronavirus a hoax and urging people to get out of the house and mingle:

“Don’t give in to the propaganda—just live your life like you always have,” stated one of the ads. Another instructed people to “stay healthy with SMALL daily doses” of bleach. All were approved by Facebook for publication. Waddell responsibly removed them before they went live.10

Facebook says that it reviews all ads for compliance with its policies before they are published. Its ad policy states: “We’ll check your ad’s images, text, targeting, and positioning, in addition to the content on your ad’s landing page.”11 But the company discloses no further details about what processes or technologies are used to review these ads, including if and how automation is used or if there is any human involvement. Whatever those processes might be, Waddell’s experiment exposed just how ineffective Facebook’s ad content policing mechanisms actually are in life-and-death crisis situations, such as a global pandemic when people are desperate to understand what is happening and face an overwhelming avalanche of often contradictory information.

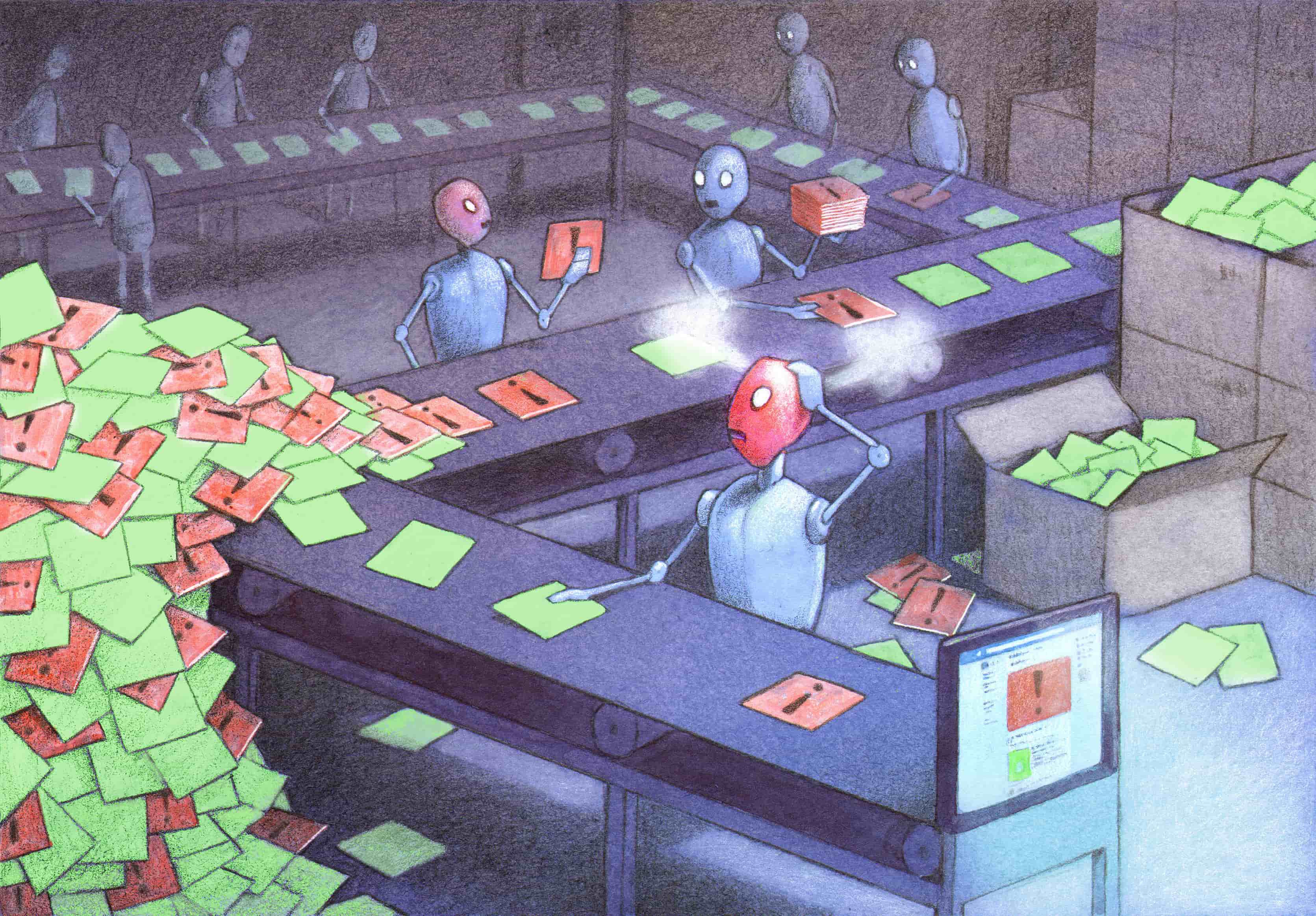

To try to mitigate the devastating effects of targeted misinformation, social media platforms are engaged in an endless circular fight, in which they now must detect and delete content whose social, political, and even medical impact is magnified by the opaque and unaccountable mechanisms of their own business models. Content moderation is a downstream effort by platforms to clean up the mess caused upstream by their own systems designed for automated amplification and audience targeting. These downstream efforts—while necessary—do not fundamentally change the upstream systems that exacerbate the problem, and do not create greater transparency with the public about exactly how targeted systems are shaping what people can share, see, and know.

Take, for example, Facebook’s mechanisms used to build profiles on users and target them with specific content. In April, just a week after Facebook CEO Mark Zuckerberg pledged to fight misinformation about COVID-19, Aaron Sankin, a journalist with The Markup, noticed in his Facebook news feed an advertisement for “a hat that would supposedly protect my head from cellphone radiation.”12

One of the many conspiracy theories raging across social media links the spread of the coronavirus to signals emanating from 5G wireless cell towers.13 After clicking the “Why am I seeing this ad?” tab on the ad, Sankin learned that the ad was targeting people interested in “pseudoscience.” We do not know how Facebook determined that Sankin was interested in pseudoscience, what types of advertisers used the audience category, or how often they did so. We do know from information provided to advertisers that the pseudoscience audience category contained 78 million users. With a few more clicks, Sankin was able to use that same category to “boost” Markup posts on Instagram (paying to increase how often the posts were shown to Instagram users in the target audience category). The fact that he was able to do so confirmed that Facebook had not disabled the category despite its clear connection to misinformation. The category was removed only after Sankin brought it to the company’s attention.14

This was not the first time journalists have exposed dangerous audience categories, prompting a company apology and removal. In 2017, ProPublica found that racist audience categories were being offered to advertisers.15 Like those disturbing categories, the pseudoscience audience category was likely generated by an algorithmic system that used a host of data points, like users’ Facebook and Instagram posts, comments, and interactions with other posts as well as off-platform data about their personal characteristics and behaviors. On that basis, Facebook determined that these 78 million people were interested in pseudoscience and enabled advertisers to reach them. What’s more, in many cases the automated ad targeting systems also determined which paid content was most likely to appeal to pseudoscience enthusiasts. This appears to have been the case for the anti-cell phone radiation hat: the CEO of the company behind the ad told The Markup that his team hadn’t chosen the pseudoscience category.16

Sankin’s experiment suggests that in addition to classifying individual users as “interested in pseudoscience,” Facebook’s algorithmic systems are also able to determine that specific ads contain pseudoscience. Rather than flagging such ads for further scrutiny by a human reviewer, the company’s algorithmic systems make it easier for such ads to reach users who are vulnerable to their messages. A hat that purports to block cell phone radiation may not be likely to cause much harm, but seeing an ad for one reinforces the worldview that creates demand for such products. The same ad-targeting technology also spreads dangerous hoaxes like the idea that consuming bleach protects against the novel coronavirus or that childhood immunizations cause autism, as well as posts that incite hate or violence.

The problem with targeted advertising is that you can’t put the cat back in the bag. A clear example can be seen in how misinformation radiated from Facebook pages protesting the pandemic lockdown meant to slow the spread of the coronavirus.

In mid-April, protesters in state capitols across the country wielded signs with slogans like “#endtheshutdown” and “give me liberty or give me COVID-19!,” calling for governors to lift their stay-at-home orders. The protesters were driven by different motives, from economic anxiety at a time of unprecedented unemployment to long-standing fears about government overreach.

Anti-quarantine protest participants may have seen themselves as part of a spontaneous grassroots movement. But the coordinated marketing and messaging around the Facebook pages used to organize the protests were supported by organizations with long histories and deep pockets. Thanks to dogged reporting by several news organizations, it became clear that the emergence and expansion of these protests were made possible in no small part by the support of politically influential, well-funded backers, including the National Rifle Association.17

According to research by First Draft, an organization that tracks the spread and analyzes the causes of disinformation, 100 state-specific Facebook pages were created in April to protest state governments’ stay-at-home orders. NBC reported that as of April 20, these protest pages were used to organize at least 49 different events. The groups, many with names like “Wisconsinites Against Excessive Quarantine” and “Reopen Minnesota” repeated across Facebook with different state names, had by April 20 attracted more than 900,000 members.18 These groups and their members were also reported to be active spreaders of coronavirus misinformation, much of it coordinated. Researchers at Carnegie Mellon University’s CyLab Security and Privacy Institute tracked nearly identical claims posted across multiple platforms, from the Facebook groups to Twitter and Reddit.19

We cannot rely on a game of whack-a-mole to protect public health—and ultimately the health of our democracy.

Frustratingly, it is impossible to document with any precision the scale at which COVID-19 misinformation circulating on social media platforms has been boosted by targeted advertising tools, as the companies keep that information to themselves. In general, the platforms do not disclose nearly enough information about whether the content users see on the platform was boosted, by whom, and whether they are being targeted by tools like Facebook’s “custom audiences,” which enable advertisers to target specific individuals.

But we do know that a large volume of that misinformation is being shared by followers and administrators of pages that can easily afford to pay for targeted advertising, and that many of these pages are run by people with plenty of experience in using such tools.

Disinformation, misinformation, hate speech, and scams of all sorts are powerful precisely because digital platforms’ automated content optimization systems aim them at just the people who are most vulnerable to these messages, while hiding them from other users who would otherwise be in a position to flag them and provide corrective counter-speech. False information about COVID-19, and other dangerous content, would be much less effective if it were not algorithmically targeted and amplified. It is clear that even if social media companies’ content rules were perfect (which they are not), enforcing them fairly and accurately at a global scale is not possible.

Banning dangerous content itself is both contrary to free expression standards and impossible to achieve at a global scale. But we can stymie its reach by denying targeting and optimization algorithms their power, through fundamental reforms grounded in human rights principles.

Citations

- Douek, Evelyn. 2020. “COVID-19 and Social Media Content Moderation.” Lawfare. source (May 15, 2020).

- Romm, Tony. 2020. “Facebook Will Alert People Who Have Interacted with Coronavirus ‘Misinformation.’” Washington Post. source (May 15, 2020).

- Heilweil, Rebecca. 2020. “Facebook Is Flagging Some Coronavirus News Posts as Spam.” Vox. source (May 15, 2020).

- Brennen, J. Scott, Felix Simon, Philip N. Howard, and Rasmus Kleis Nielsen. 2020. Types, Sources, and Claims of COVID-19 Misinformation. Oxford: University of Oxford. source (May 15, 2020).

- Wong, Queenie. 2020. “Twitter Leaves up Trump’s ‘liberate’ Tweets about States with Lockdown Protests.” CNET. source (May 15, 2020).

- Brennen, J. Scott, Felix Simon, Philip N. Howard, and Rasmus Kleis Nielsen. 2020. Types, Sources, and Claims of COVID-19 Misinformation. Oxford: University of Oxford. source (May 15, 2020).

- Rosen, Guy. 2020. “An Update on Our Work to Keep People Informed and Limit Misinformation About COVID-19.” Facebook Newsroom. source (May 7, 2020); Romm, Tony. 2020. “Facebook Will Alert People Who Have Interacted with Coronavirus ‘Misinformation.’” Washington Post. source (May 15, 2020).

- Leskin, Paige. 2020. “One of the Internet’s Oldest Fact-Checking Organizations Is Overwhelmed by Coronavirus Misinformation — and It Could Have Deadly Consequences.” Business Insider. source (May 15, 2020).

- Bergen, Mark, Joshua Brustein, and Sarah Frier. 2020. “Tech’s Shadow Workforce Sidelined, Leaving Social Media to the Machines.” Bloomberg. source (May 15, 2020).

- Waddell, Kaveh. 2020. “Facebook Approved Ads With Coronavirus Misinformation.” Consumer Reports. source (May 15, 2020).

- Facebook. n.d. “Advertising Policies.” source (May 15, 2020).

- Sankin, Aaron. 2020. “Want to Find a Misinformed Public? Facebook’s Already Done It.” The Markup. source (May 7, 2020).

- Satariano, Adam, and Davey Alba. 2020. “Burning Cell Towers, Out of Baseless Fear They Spread the Virus.” The New York Times. source (May 15, 2020).

- Sankin, Aaron. 2020. “Want to Find a Misinformed Public? Facebook’s Already Done It.” The Markup. source (May 7, 2020).

- Angwin, Julia, and Madeleine Varner. 2017. “Facebook Enabled Advertisers to Reach ‘Jew Haters.’” ProPublica. source (February 12, 2020).

- Sankin, Aaron. 2020. “Want to Find a Misinformed Public? Facebook’s Already Done It.” The Markup. source (May 7, 2020).

- Bixby, Scott. 2020. “DeVos Has Deep Ties to Michigan Protest Group, But Is Quiet On Tactics.” The Daily Beast. source (May 5, 2020); Stanley-Becker, Isaac, and Tony Romm. 2020. “Pro-Gun Activists Using Facebook Groups to Push Anti-Quarantine Protests.” Washington Post. source (May 15, 2020).

- Zadrozny, Brandy, and Ben Collins. 2020. “Conservative Activist Family behind ‘Grassroots’ Anti-Quarantine Facebook Events.” NBC News. source (May 15, 2020).

- Seitz, Amanda. 2020. “Virus Misinformation Flourishes in Online Protest Groups.” Associated Press. source (May 15, 2020).