Table of Contents

- Executive Summary

- Introduction

- Targeted Advertising and COVID-19 Misinformation: A Toxic Combination

- Human Rights: Our Best Toolbox for Platform Accountability

- Making All Ads “Honest” Through Transparency, Limited Targeting, and Enforcement

- By Protecting Data, Federal Privacy Law Can Reduce Algorithmic Targeting and the Spread of Disinformation

- Good Content Governance Requires Good Corporate Governance

- Without Civil Society, Platform Accountability is a Pipe Dream

- Key Recommendations for Policymakers

- Conclusion

Good Content Governance Requires Good Corporate Governance

In our previous report, we called for greater transparency about the processes and technologies that digital platforms use to govern content shared by users, including advertisers. In this report, we have outlined the privacy and data protection measures necessary to lessen content-related harms that are rooted in the algorithmically curated personalization of users’ online experiences. We've also pointed out ways that advertising law needs to be upgraded. There is another vector for legislative and regulatory action: To rein in the tech giants’ power and hold them accountable to the public interest, lawmakers and policy advocates must prioritize corporate governance and shareholder accountability.

Good corporate governance is a prerequisite for good content governance by social media platforms. In order to identify, prevent, and mitigate social harms caused by the amplification and targeting of dangerous content, Facebook, Google, and Twitter should be subject to strong institutional oversight. Shareholders, a growing number of whom are concerned with companies’ social impact and governance, need to be empowered to hold companies responsible for addressing their social and human rights impacts. Shareholder empowerment can in turn strengthen the connection between shareholders and the ecosystem of non-governmental actors—NGOs, unions, consumer advocates, academic researchers, and journalists among others—whose expertise and perspective are essential for companies to be able to fully understand and address their social impact.

Empower Shareholders to Address Environmental, Social, and Governance Issues by Phasing Out Dual-class Shares

The case for shareholder empowerment was on display at Facebook’s 2019 annual shareholder meeting. Sixty-eight percent of the company’s shareholders who hold ordinary “Class A” shares (one share, one vote) voted for CEO Mark Zuckerberg to relinquish his position as board chairman to an “independent member of the Board.” The resolution was filed by Trillium Asset Management and backed by a number of well-known mutual fund companies as well as several major state pension funds. Its supporting statement called out Facebook management for “missing, or mishandling, a number of severe controversies, increasing risk exposure and costs to shareholders,” problems that board oversight led by an independent chair might have helped prevent.1

Yet even with a solid majority, the shareholder resolution failed. Why? Because Zuckerberg and other members of his inner circle hold “Class B” shares weighted at 10 votes per share, giving him effective control over 60 percent of shareholder votes at that meeting.2 As a result, only 20 percent of the total vote supported separation of CEO and board chair, a major governance reform that would have strengthened the board of directors’ power to oversee and potentially overrule the CEO’s decisions.3
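To see how the weighting works, the sketch below tallies a vote under a two-class structure like the one described above (one vote per Class A share, ten votes per Class B share). The share counts are hypothetical round numbers chosen for illustration, not Facebook’s actual figures, so the result differs from the 20 percent reported for the 2019 meeting. For simplicity, the sketch also assumes that every Class B share votes against the proposal.

```python
# Minimal sketch of dual-class vote tallying. All share counts are
# hypothetical; only the 1-vote / 10-vote weighting comes from the text.

CLASS_A_VOTES_PER_SHARE = 1
CLASS_B_VOTES_PER_SHARE = 10


def tally(class_a_for: int, class_a_against: int,
          class_b_for: int, class_b_against: int) -> dict:
    """Return Class A support, support among all weighted votes, and the outcome."""
    votes_for = (class_a_for * CLASS_A_VOTES_PER_SHARE
                 + class_b_for * CLASS_B_VOTES_PER_SHARE)
    votes_against = (class_a_against * CLASS_A_VOTES_PER_SHARE
                     + class_b_against * CLASS_B_VOTES_PER_SHARE)
    total = votes_for + votes_against
    return {
        "class_a_support": class_a_for / (class_a_for + class_a_against),
        "total_support": votes_for / total,
        "passes": votes_for > votes_against,
    }


# Hypothetical: 400 Class A shares vote, 68% in favor; 60 Class B shares
# (600 weighted votes, i.e. 60% of all votes cast) all vote against.
result = tally(class_a_for=272, class_a_against=128,
               class_b_for=0, class_b_against=60)
print(result)
# {'class_a_support': 0.68, 'total_support': 0.272, 'passes': False}
```

Even a two-thirds majority of one-vote shares shrinks to roughly a quarter of the weighted total once the ten-vote shares are counted.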

The failed resolution was just one of several proposals spurred by investor concerns that Facebook’s management had failed to prevent harms to users and mitigate serious social risks, including privacy breaches and Russian meddling in the 2016 U.S. presidential election.4 Ironically, another failed resolution sought to end Facebook’s dual-class share structure altogether. Because it could pass only if Zuckerberg agreed to it, its purpose was to call attention to the problem itself.5

In 2019, shareholders also filed unsuccessful proposals with Alphabet (Google’s parent company), Facebook, and Twitter that would have required the companies to report to shareholders on various aspects of their content governance policies and enforcement. For example, the resolution filed with Alphabet sought a report “reviewing the efficacy of its enforcement of Google’s terms of service related to content policies and assessing the risks posed by content management controversies related to election interference, freedom of expression, and the spread of hate speech, to the company’s finances, operations, and reputation.” Google responded that its public reporting on these matters was already significant.6 Like Facebook, Alphabet also has a dual-class share structure. Twitter does not.

The shareholder resolutions described above reflect the fact that a growing number of mutual funds and institutional investors pursue environmental, social, and governance (ESG) investment strategies that favor companies that are environmentally sustainable, socially responsible, and well governed. Global sustainable investments jumped by 34 percent to $30.7 trillion between 2016 and 2018.7 In 2019, nearly half of U.S. institutional investors had either included ESG factors in their investment decisions or were considering doing so.8 According to the Sustainable Investments Institute, the percentage of shareholder resolutions addressing ESG issues grew by just 12 percent over the past 10 years, but average support for these resolutions grew by a whopping 40 percent.9 Most important for people concerned with platform accountability, the number of resolutions filed with companies covered by the RDR Index that focused on issues affecting internet users’ rights jumped from just two in 2015 to 12 in 2019.10

Shareholder resolutions are a tool for investors to push companies in a more sustainable, socially responsible direction and to improve their governance.11 Even when they do not pass, the attention they bring to specific issues often prods companies to engage with investors and to explain publicly how they are addressing the problems raised. But as the resolution that would have removed Zuckerberg as board chairman shows, resolutions cannot ultimately curb a CEO’s unrestrained power if he is shielded by a dual-class share structure. In 2018, then-Commissioner Robert Jackson of the Securities and Exchange Commission (SEC) warned that perpetual dual-class shares leave CEOs effectively impossible to fire, making them the monarchs of private kingdoms, and called for reform. The SEC, he proposed, should require companies to phase out dual-class shares so that shareholders are actually in a position to hold management accountable for failing to identify and mitigate risks.12 The SEC has not only disregarded his recommendation but has moved in the opposite direction, proposing a rule change that would make it harder for shareholders to file proposals.13 Congress could compel the SEC to implement dual-class share reform and other measures that would strengthen corporate governance and oversight.

Investors Need More and Better Disclosure

Investors concerned with ESG risks are also pushing companies to disclose more information about how they identify, track, and mitigate these risks. Such disclosures inform portfolio construction, and some investors use them to guide their engagement with companies about how to improve.14 In 2018, a group of investors representing $5 trillion of assets under management petitioned the SEC to require corporate disclosure of ESG risks, to no avail.15

Proponents argue that ESG disclosure requirements would bring the United States into step with broader global trends.16 Since 2018, large companies in the EU have been required by law to disclose non-financial information about “the way they operate and manage social and environmental challenges,” including human rights-related policies and risks.17 Meanwhile, several organizations have been working to improve the quality of disclosures, whether mandatory or voluntary, by developing clear and consistent standards.18 Most relevant in the U.S. investment and regulatory context is the San Francisco-based Sustainability Accounting Standards Board (SASB). Its industry-specific reporting standards include guidelines for digital platforms to disclose “data standards, advertising, and freedom of expression,” including a “description of policies and practices relating to behavioral advertising and user privacy.”19 SASB is now in the early stages of developing disclosure standards related to content moderation.20

While U.S. companies are not required by law to disclose ESG information, 120 U.S.-listed companies now use SASB reporting standards, including a few in the tech and communications sector, such as Adobe, Netflix, and Salesforce.21 A handful of companies, including the crafting platform Etsy, are leading the way by furnishing audited SASB-based disclosures along with their official SEC filing documents, even though they are not required to do so. (They also included disclosures using standards developed by the Global Reporting Initiative, which target a broader set of stakeholders beyond investors and are widely used in Europe and internationally.)22

These companies are responding to market demand: According to the data provider Morningstar, a record $20.6 billion flowed into U.S. sustainable investment funds in 2019, almost four times the net inflows of 2018.23 Those funds need better ESG data to make sure that the companies they invest in actually meet basic ESG standards. In January 2020, Larry Fink, the CEO of the global investment management firm BlackRock, called on the companies in which BlackRock invests to disclose ESG information using SASB’s standards, or comparable data suited to their business.24 If Congress were to compel the SEC to require ESG disclosure, investors would be empowered to push companies to address many of their non-financial risks, including the social impact of targeted advertising and algorithmic systems.

Despite the growing investor demand, Facebook, Google, and Twitter do not systematically include ESG information in their disclosures to investors. Unlike the reports of many European telecommunications companies covered by the RDR Index, their annual reports do not cover the full range of social and human rights information that investors, through shareholder resolutions and recent engagements, have signaled they consider potentially material. Telefónica, for example, which is headquartered in Spain and was the top-ranking telecommunications company in the 2019 RDR Index, publishes an annual Consolidated Management Report covering the full range of ways that the company is working to achieve “value for all our stakeholders.”25 The company’s Responsible Business portal offers a clear catalogue of information about how it tracks and assesses impacts, including transparency reports and impact assessment data.26

The three U.S.-headquartered social media giants that are the focus of this report do disclose a great deal of information related to their social and human rights impacts in a range of policy documents, transparency reports, blog posts, and other materials published online. But the material is scattered across their websites and platforms, testing the research capacity of investors seeking to understand how these companies manage their human rights risks, or how they understand and track their social impact. The RDR Index tracks such disclosures and grades companies according to the comprehensiveness and quality of their disclosures about policies and practices affecting the human rights of internet users. A high score in the RDR Index indicates strong disclosure at least in some areas. But our most recent research found very poor disclosure by these three companies related to the social and human rights impact of their targeted advertising and algorithmic systems.27

A growing number of investors use the RDR Index and its methodology to evaluate company disclosures, and cite RDR data in shareholder resolutions.28 From such resolutions and SASB’s standards-development process, it is clear that the investor community already has behavioral advertising on its radar and is considering the material risk associated with digital platforms’ content moderation practices. The 2020 RDR Index, forthcoming in February 2021, will report on whether these companies have made any progress in their policies and disclosures by then.29

Targeted Advertising Revenue Disclosure

The human rights issues associated with targeted advertising have prompted a growing number of commentators to call for a ban on targeted advertising as the best way to protect privacy and stem the spread of dangerous content.30 At the very least, the burden should be on companies that rely on targeted advertising to prove that their business model can be modified to protect human rights and still remain tenable. So far they have failed to do so.

An important step in that direction would be for companies to disclose the extent to which they rely on targeted advertising as a percentage of revenue—as well as whether that percentage is trending up or down. Doing so would help business leaders and investors quantify the driving force behind maintaining these systems as well as the extent of content-related social impact and risks that the business is taking on. Only with this cost-benefit analysis can appropriate steps be taken to identify and mitigate the negative impact of targeted advertising before the problems spiral out of control.
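As a rough sketch of what such a disclosure would let readers compute, the example below takes a revenue breakdown and derives the two figures this recommendation calls for: the targeted-advertising share of total revenue in each year, and whether that share is trending up or down. None of these companies publishes this split, so the `YearlyRevenue` fields and all figures are hypothetical, and the trend is judged by the simplest possible rule (comparing the earliest and latest years).

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class YearlyRevenue:
    """Hypothetical revenue breakdown for one fiscal year (any currency unit)."""
    year: int
    targeted_ads: float  # revenue from behaviorally targeted ads
    other_ads: float     # e.g. contextual ads
    non_ad: float        # subscriptions, hardware, fees, etc.

    @property
    def total(self) -> float:
        return self.targeted_ads + self.other_ads + self.non_ad

    @property
    def targeted_share(self) -> float:
        """Targeted-ad revenue as a percentage of total revenue."""
        return 100.0 * self.targeted_ads / self.total


def share_trend(history: list[YearlyRevenue]) -> str:
    """Compare the targeted share in the earliest and latest years."""
    ordered = sorted(history, key=lambda r: r.year)
    first, last = ordered[0].targeted_share, ordered[-1].targeted_share
    if last > first:
        return "up"
    if last < first:
        return "down"
    return "flat"


# Invented figures for illustration only.
history = [
    YearlyRevenue(2018, targeted_ads=60.0, other_ads=20.0, non_ad=20.0),
    YearlyRevenue(2019, targeted_ads=70.0, other_ads=15.0, non_ad=15.0),
]
for r in history:
    print(r.year, f"{r.targeted_share:.1f}%")  # 2018 60.0%, then 2019 70.0%
print("trend:", share_trend(history))          # trend: up
```

A real disclosure regime would need agreed definitions of what counts as "targeted," which is exactly the breakdown the companies do not currently publish.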

Google and YouTube’s parent company, Alphabet, as well as Facebook and Twitter all disclose the percentage of their total revenue earned from advertising in general: In 2019, Facebook disclosed that it earned nearly all of its revenue from advertising (98.5 percent),31 while Twitter earned 86 percent from advertising,32 and Google’s advertising revenue (including YouTube) was 83 percent of Alphabet’s total revenue for the year.33 None of these companies, however, specifies how much of its revenue was earned from targeted advertising, let alone from different levels of targeting.

For example, Google’s AdSense platform supports several types of ad targeting, including contextual advertising and behavioral targeting. In contextual advertising, ads are matched to specific keywords or keyphrases in the content alongside which they will be shown. A cooking tutorial, for instance, might be paired with ads for a grocery delivery service or gourmet cookware, regardless of the viewer’s demographics or personal behavior.

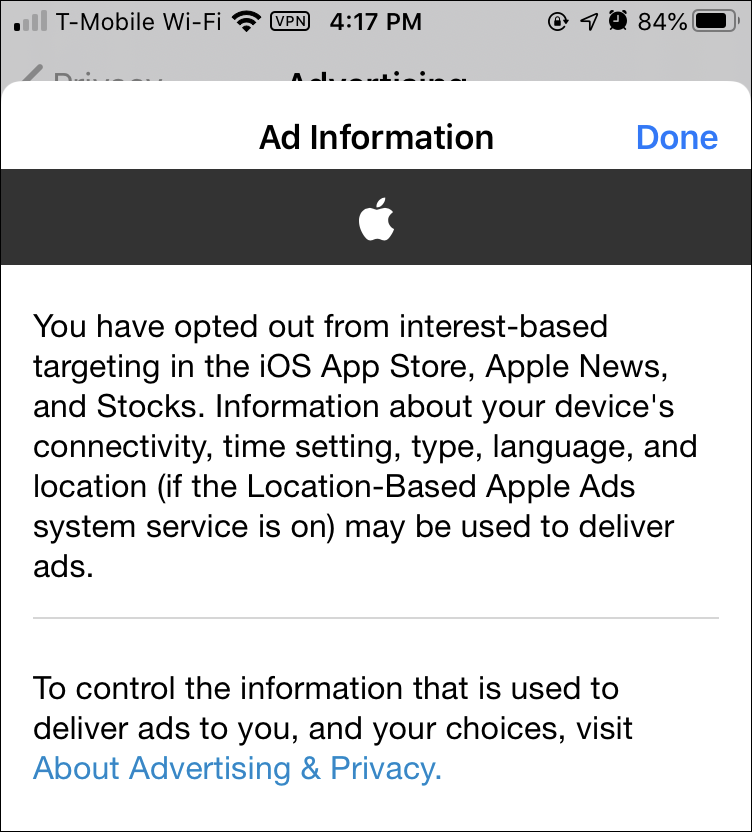

In behavioral targeting, ads are aimed at specific user characteristics, based on profiles that the platform has built from interests, demographics, and other traits that users have either declared themselves or that algorithms have inferred from their data.34 But Alphabet doesn’t disclose the revenue breakdown for different types of advertising, or whether YouTube depends more heavily on behavioral advertising than other Google-operated platforms such as Search.35 Facebook’s Advertising for Business page reflects the company’s primary focus on behavioral targeting.36
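The difference between the two approaches can be illustrated with a toy example. In the hypothetical sketch below, which is far simpler than any real ad system (real platforms run auctions and weigh many more signals), contextual matching looks only at the words on the page, while behavioral matching looks only at a profile of declared or inferred traits about the viewer. The ad names echo the cooking example above; the profile labels such as `interest:fitness` are invented.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Ad:
    name: str
    keywords: set[str] = field(default_factory=set)  # used for contextual matching
    audience: set[str] = field(default_factory=set)  # used for behavioral matching


def contextual_match(ads: list[Ad], page_text: str) -> list[str]:
    """Select ads whose keywords appear in the content being viewed.
    Nothing about the viewer is used."""
    words = set(page_text.lower().split())
    return [ad.name for ad in ads if ad.keywords & words]


def behavioral_match(ads: list[Ad], user_profile: set[str]) -> list[str]:
    """Select ads whose target audience overlaps the viewer's profile,
    regardless of the content the viewer is looking at."""
    return [ad.name for ad in ads if ad.audience & user_profile]


ads = [
    Ad("gourmet cookware", keywords={"cooking", "recipe"}),
    Ad("grocery delivery", keywords={"cooking", "groceries"}),
    Ad("running shoes", audience={"interest:fitness", "age:25-34"}),
]

page = "A cooking tutorial for weeknight pasta"
profile = {"interest:fitness", "location:chicago"}  # declared or inferred traits

print(contextual_match(ads, page))     # ['gourmet cookware', 'grocery delivery']
print(behavioral_match(ads, profile))  # ['running shoes']
```

Only the behavioral path requires the platform to build and keep a profile of the viewer, which is why the revenue split between the two matters for privacy.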

Microsoft and Apple, which rely heavily on other types of revenue such as service fees, software licenses, and hardware sales, do not disclose advertising as a distinct share of their revenue, which suggests that it is small relative to their total revenue.37 However, a small share of revenue for such massive companies amounts to serious money: In 2019, Microsoft’s Bing search engine generated more than $7.5 billion in advertising revenue, more than LinkedIn or the Surface hardware line, and three times more than Twitter’s total earnings.38 As for Apple, it is the only digital platform company in the RDR Index that does not track users across the internet.39 While the company’s restrained approach to monetizing user data hampers its competitiveness against Google and Facebook, App Store ad revenue still amounted to 13 percent of total revenue for the 2017 fiscal year.40

The bottom line is that advertising, much of it behavioral, is a growing revenue stream for both Apple and Microsoft, creating pressure to collect and monetize more and more user data.41 That trend in turn points to growing social and human rights risks that investors, policymakers, and civil society stakeholders need to be aware of. It is also why all companies with targeted advertising revenue should disclose relevant information about it.

Due Diligence: Understanding the Full Scope of Platforms’ Social Impact

A key piece of information that investors expect from companies is whether they conduct meaningful and comprehensive due diligence about their ESG risks. Due diligence and impact assessment enable digital platforms to understand the full social impact of their targeted advertising business models, algorithmic systems, and all other processes and activities. The results of impact assessments show how their policies, systems, and processes need to change in order to mitigate, and ideally prevent, harms to individuals and societies that are either caused or exacerbated by their business operations.

The UN Guiding Principles on Business and Human Rights set forth specific elements of due diligence that should guide companies’ approach to addressing and mitigating human rights risks. Specifically, Operational Principle 16 states that companies should start by adopting formal policies publicly expressing their commitment to international human rights principles and standards.42 A credible public commitment, published in official company documents (not just stated in ad hoc interviews or speeches by executives), confirms that the company, from the board of directors down, is aware of how its actions may affect users and their communities, and is committed to addressing, minimizing, and ideally preventing any negative impacts. A public statement on its own may not amount to much, of course, unless there is verifiable evidence that it has been implemented. But it is the first step toward responsible practice and accountability: It provides a hook for employees, responsible investors, civil society advocates, and others to urge the company’s management to implement policies and practices in line with its commitments.

Facebook, Google, and Twitter make official public commitments to users’ freedom of expression and privacy, particularly in the face of efforts by governments around the world to censor content or gain access to user data and communications.43 The charter of Facebook’s new Oversight Board extends a general human rights commitment to content moderation under the company’s own private rules.44 Yet as of May 2020, Facebook and Twitter had made no explicit, formal commitment to protect human rights as they develop and use algorithmic systems.45 While Google does commit to avoiding the development of “technologies whose purpose contravenes widely accepted principles of international law and human rights,” it is not clear whether this commitment extends to the human rights implications of algorithmic systems already developed and deployed on YouTube and other platforms to moderate, amplify, shape, and target content.46

Once a company makes a commitment to respect human rights across all of its business activities, its decision makers can only implement that commitment effectively if they actually understand whose human rights might be affected, and how. Due diligence processes enable the company to gain such an understanding. As Operational Principle 17 of the UN Guiding Principles stipulates: “The process should include assessing actual and potential human rights impacts, integrating and acting upon the findings, tracking responses, and communicating how impacts are addressed.”47

Responsible companies that handle user information routinely carry out due diligence and impact assessment to identify security flaws and privacy weaknesses. Security audits are commonplace across the tech sector and legally mandated in many jurisdictions. Privacy impact assessments (PIAs) are now required by law in the EU and a growing number of countries.48 In the United States, Facebook, Google, and Twitter have been compelled by FTC consent orders to conduct PIAs in the wake of lapses that provoked complaints by privacy advocates, though, as we have discussed in earlier parts of this report, such assessments are by no means adequate to address the negative social impact of the platforms’ exploitation of user information. Members of the Global Network Initiative (GNI), which include Google and Facebook, carry out regular due diligence to identify and mitigate threats to users’ freedom of expression and privacy caused by censorship and surveillance demands that the platforms receive from governments all over the world.49 But GNI assessments do not seek evidence that companies carry out due diligence on human rights threats caused by company actions that are unrelated to government demands or requirements.

Given companies’ lack of transparency around targeted advertising and their significant financial incentives to deploy the technology quickly, digital platforms’ human rights commitments lack full credibility if they fail to conduct regular and systematic due diligence on their algorithmic systems and targeted advertising business models. Due diligence, in turn, is credible only when accompanied by evidence that companies have a process for making changes based on the findings.

Responding to longstanding demands from civil rights advocates, Facebook in 2018 initiated an audit of the platform’s impact on marginalized groups and people of color in the United States. The two updates published so far detail the company’s shortcomings with respect to voting rights50 and to hate speech, discriminatory ad targeting, the 2020 Census, and civil rights oversight within the company.51 The process, led by respected civil rights advocate Laura Murphy, has also reported on Facebook’s efforts and recommended additional changes. Still, civil rights groups have pointed out that the mere existence of the audit falls short of what is needed to ensure that Facebook’s platform is not used to undermine civil rights by facilitating discriminatory targeting and the spread of harmful misinformation that disproportionately harms marginalized groups.52

The RDR Index evaluates whether companies conduct regular, comprehensive, and credible due diligence, such as human rights impact assessments, to identify how all aspects of their business affect freedom of expression and privacy and to mitigate any risks posed by those impacts. This includes due diligence pertaining to governments and regulations, to the company’s own policy enforcement processes, and to targeted advertising and algorithmic decision-making systems.

As of May 2020, none of the three social media giants that are the focus of this report series (Google, Facebook, and Twitter) disclosed any evidence that they conduct systematic human rights due diligence or impact assessments around their use of algorithmic systems. Nor do they disclose any process for assessing how their targeted advertising policies and practices affect human rights, or for assessing the social impact of their content rules and the mechanisms used to enforce them.

Only one U.S. company evaluated by the RDR Index, Microsoft, discloses that it conducts impact assessments on its development and use of algorithmic systems.53 Microsoft and Verizon Media are the only U.S. companies in the RDR Index that disclose impact assessments on their terms of service enforcement.54 As we have highlighted throughout this report, the uneven enforcement of platforms’ content policies contributes to human rights harms, both when dangerous content is left up and when protected speech is erroneously removed. Companies should consider how the effectiveness and accuracy of their enforcement processes affect human rights, alongside the impact of the rules themselves.

Among the companies covered by the RDR Index, the best examples of strong impact assessment around algorithmic systems and artificial intelligence come from European telecommunications companies. As investors increasingly factor ESG considerations into their decisions, this gap raises concerns about U.S. companies’ competitiveness in global financial markets. Telefónica, for example, which operates in multiple European markets and across Latin America, clearly discloses that it assesses the freedom of expression, privacy, and discrimination risks associated with these systems, and that it conducts additional evaluations whenever these assessments identify concerns.55

Comprehensive due diligence and risk assessment prepare European companies well for future regulatory requirements. In April 2020, a group of 105 international investors representing $5 trillion in assets under management, coordinated by the Investor Alliance for Human Rights, called on governments to require companies to conduct ongoing risk assessment and human rights due diligence. They argued that such a requirement is not only a moral imperative but also important for improving public trust in both business and government.56

In 2017, France’s new “duty of vigilance” law went into effect, making strong human rights oversight and risk assessment mandatory for French multinationals.57 Since then, political momentum has been building across the EU, with direct support even from some multinationals seeking a level regulatory playing field.58 With proposals for due diligence laws at varying stages of discussion or consideration in 13 European countries, EU Commissioner for Justice Didier Reynders committed in April 2020 to move forward with mandatory environmental and human rights due diligence legislation for EU companies in 2021.59

The U.S. Congress has taken one small step: In July 2019, the House Financial Services Committee held a hearing to discuss a draft bill that would require public companies to assess and report annually on their human rights risk exposure. The bill has not been formally introduced.60 Risks to the rights of digital platform users, including privacy, access to information, and non-discrimination, were not mentioned in that discussion, nor have they been raised in discussions of potential EU legislation. This gap must be addressed, because mandatory due diligence is an important tool for holding digital platforms accountable for all of their human rights impacts, including the social impact of algorithmic systems and targeted advertising business models.

Citations

- Trillium Asset Management. 2019. “Facebook, Inc. – Independent Board Chairman (2019).” source (May 16, 2020). Key supporters listed at: source

- Wolverton, Troy. 2018. “At Facebook’s Annual Meeting, Mark Zuckerberg Stuck to His Talking Points — and Ignored Some of Shareholders’ Biggest Concerns.” Business Insider. source (May 16, 2020).

- Fitzgerald, Meghan. 2019. “Why 68% of Facebook Investors Voted to Oust Zuckerberg as Chairman.” Yahoo! Finance. source (May 16, 2020).

- Mayer, Jane. 2018. “How Russia Helped Swing the Election for Trump.” The New Yorker. source (May 17, 2020).

- Stewart, Emily. 2019. “Facebook Will Never Strip Away Mark Zuckerberg’s Power.” Vox. source (May 16, 2020).

- Alphabet. 2019. Notice of 2019 Annual Meeting of Stockholders and Proxy Statement. “Proposal Number 16: Stockholder Proposal Regarding a Report on Content Governance.” U.S. Securities and Exchange Commission. source

- Chasan, Emily. 2019. “Global Sustainable Investments Rise 34 Percent to $30.7 Trillion.” Bloomberg.com. source (May 16, 2020).

- Callan Institute. 2019. 2019 ESG Survey. source

- Sustainable Investments Institute. 2020. Proxy Preview 2020 Shows Jump in ESG Shareholder Proposals as SEC Prepares to Restrict Shareholder Rights. Press release. source (May 16, 2020).

- MacKinnon, Rebecca, Melissa Brown, and Jasmine Arooni. 2020. Digital Rights 2020 Outlook: Market Realities and Regulation Are Raising the Bar. Washington, D.C.: New America. source

- Mooney, Attracta. 2020. “Coronavirus Forces Investor Rethink on Social Issues.” Financial Times. source (May 16, 2020).

- SEC Commissioner Robert J. Jackson, Jr. 2018. “Perpetual Dual-Class Stock: The Case Against Corporate Royalty.” Presented in San Francisco, CA. source (May 16, 2020).

- Setty, Ganesh. 2019. “Shareholders Would Have Tougher Time Submitting Resolutions under SEC’s Proposed Rule.” CNBC. source (May 16, 2020).

- World Economic Forum. 2020. Embracing the New Age of Materiality: Harnessing the Pace of Change in ESG. White paper. www3.weforum.org/docs/WEF_Embracing_the_New_Age_of_Materiality_2020.pdf

- Williams, Cynthia A. et al. 2018. Letter to the Secretary of the Securities and Exchange Commission Brent J. Fields. U.S. Securities and Exchange Commission. source

- Clancy, Heather. 2019. “Investor Interest Fuels SASB Adoption, Inspires New GRI Tax Disclosure Standard.” GreenBiz. source (May 16, 2020).

- European Commission. “Non-Financial Reporting.” European Commission. source (May 16, 2020).

- Rozen, Miriam. 2020. “Ethical Investors Want More Proof of Good Deeds.” Financial Times. source (May 16, 2020).

- Sustainability Accounting Standards Board. “Standards Overview.” source (May 16, 2020). Sustainability Accounting Standards Board. 2018. Internet Media & Services Sustainability Accounting Standard. Industry standard. source

- Waters, Greg. 2019. “SASB to Research Content Moderation on Internet Platforms.” Sustainability Accounting Standards Board. source (May 16, 2020).

- Sustainability Accounting Standards Board. “Companies Reporting with SASB Standards.” source (May 16, 2020).

- Ashwell, Ben. 2019. “More than 100 Companies Using SASB Standards.” IR Magazine. source. Mirchandani, Bhakti. 2019. “Finally a Way to Assure Sustainability and Impact! Vornado, Etsy, and LeapFrog Lead the Charge.” Forbes. source (May 16, 2020).

- Flood, Chris. 2020. “Record Sums Deployed into Sustainable Investment Funds.” Financial Times. source (May 16, 2020).

- Fink, Larry. 2020. “A Fundamental Reshaping of Finance.” BlackRock. source (May 16, 2020).

- Telefónica. 2019. Summary Consolidated Management Report 2019. source

- Telefónica. “Responsible Business in Telefónica.” source (May 16, 2020).

- Ranking Digital Rights. 2020. The RDR Corporate Accountability Index: Transparency and Accountability Standards for Targeted Advertising and Algorithmic Systems — Pilot Study and Lessons Learned. Washington D.C.: New America. www.rankingdigitalrights/pilot-report-2020

- MacKinnon, Rebecca. 2019. “Investors Urge Companies to Use the RDR Index to Improve Their Respect for Users’ Digital Rights.” Ranking Digital Rights. source (May 16, 2020).

- Ranking Digital Rights. 2020. 2020 Ranking Digital Rights Corporate Accountability Index Draft Indicators. Washington, D.C.: New America. source

- Edelman, Gilad. 2020. “Why Don’t We Just Ban Targeted Advertising?” Wired. source (May 16, 2020). Lomas, Natasha. 2019. “Twitter’s Political Ads Ban Is a Distraction from the Real Problem with Platforms.” TechCrunch. source (May 16, 2020).

- Facebook. 2020. Facebook Reports Fourth Quarter and Full Year 2019 Results. Press release. source (May 16, 2020).

- Twitter. 2020. Fiscal Year 2019 Annual Report. Press release. source

- Alphabet. 2020. Alphabet Announced Fourth Quarter and Fiscal Year 2019 Results. Press release. source

- Google. n.d. “How Ads Are Targeted to Your Site.” AdSense Help. source (May 16, 2020).

- Google. n.d. “About Targeting for Video Campaigns.” YouTube Help. source (May 16, 2020).

- Facebook. n.d. “Help Your Ads Find the People Who Will Love Your Business.” Facebook for Business. source (May 16, 2020).

- Apple. 2019. Apple Reports Fourth Quarter Results. Press release. source (May 16, 2020). Microsoft. Earnings Release FY20 Q1. Press release. source (May 16, 2020).

- Ovide, Shira. 2019. “Bing’s Not the Laughingstock of Technology Anymore.” Bloomberg. source (May 7, 2020).

- Ranking Digital Rights. 2019. Corporate Accountability Index. Indicator P9: Collection of user information from third parties. Washington, D.C.: New America. source

- Mickle, Tripp, and Georgia Wells. 2018. “Apple Looks to Expand Advertising Business with New Network for Apps.” Wall Street Journal. source (May 7, 2020).

- Slefo, George P. 2019. “Apple’s Ad Business Borrows a Page from Facebook.” source (May 7, 2020). Monllos, Kristina. 2019. “‘Reclaim a Seat at the Table’: Microsoft Is Diversifying Its Advertising Business.” Digiday. source (May 7, 2020).

- OHCHR. 2011. Guiding Principles on Business and Human Rights: Implementing the United Nations “Respect, Protect and Remedy” Framework. Geneva: United Nations Office of the High Commissioner for Human Rights. source

- Ranking Digital Rights. 2019. Corporate Accountability Index. Indicator G1: Policy commitment. Washington, D.C.: New America. source

- Facebook. 2019. Oversight Board Charter. source

- Ranking Digital Rights. 2020. The RDR Corporate Accountability Index: Transparency and Accountability Standards for Targeted Advertising and Algorithmic Systems — Pilot Study and Lessons Learned. Washington, D.C.: New America. www.rankingdigitalrights/pilot-report-2020

- Ranking Digital Rights. 2020. The RDR Corporate Accountability Index: Transparency and Accountability Standards for Targeted Advertising and Algorithmic Systems — Pilot Study and Lessons Learned. Washington, D.C.: New America. www.rankingdigitalrights/pilot-report-2020

- OHCHR. 2011. Guiding Principles on Business and Human Rights: Implementing the United Nations “Respect, Protect and Remedy” Framework. Geneva: United Nations Office of the High Commissioner for Human Rights. source

- GDPR.EU. 2018. “Data Protection Impact Assessment (DPIA).” GDPR.eu. source (May 16, 2020).

- Global Network Initiative. 2020. The GNI Principles at Work: Public Report on the Third Cycle of Independent Assessments of GNI Company Members 2018/2019. source

- Sandberg, Sheryl. 2018. “An Update on Our Civil Rights Audit.” Facebook Newsroom. source (May 11, 2020).

- Sandberg, Sheryl. 2019. “A Second Update on Our Civil Rights Audit.” Facebook Newsroom. source (May 11, 2020).

- Scurato, Carmen. 2019. Facebook vs. Hate: An Analysis of Facebook’s Work to Disrupt Online Hate and the Path to Fully Protect Users. Free Press. source

- Ranking Digital Rights. 2020. The RDR Corporate Accountability Index: Transparency and Accountability Standards for Targeted Advertising and Algorithmic Systems — Pilot Study and Lessons Learned. Washington, D.C.: New America. www.rankingdigitalrights/pilot-report-2020

- See Governance section, Ranking Digital Rights. 2019. Corporate Accountability Index. Washington, D.C.: New America. source

- Telefónica. 2018. Integrated Management Report 2018. source

- Investor Alliance for Human Rights. 2019. The Investor Case for Mandatory Human Rights Due Diligence. source

- Altschuller, Sarah A., and Amy Lehr. 2017. “The French Duty of Vigilance Law: What You Need to Know.” Corporate Social Responsibility and the Law. source (May 16, 2020).

- Business & Human Rights Resource Centre. 2019. “National Movements for Mandatory Human Rights Due Diligence in European Countries.” source (May 16, 2020). Business & Human Rights Resource Centre. 2020. “Landmark Report on 1,000 European Companies Shows the Need for Human Rights Due Diligence Laws.” source (May 16, 2020). Business & Human Rights Resource Centre. “MEPs & Companies Call for EU-Level Human Rights Due Diligence Legislation.” source (May 16, 2020).

- “Commission Announcement of Due Diligence Legislation.” 2020. Heidi Hautala. source (May 16, 2020). European Coalition for Corporate Justice. 2020. Model Legislation on Corporate Responsibility to Respect Human Rights and the Environment. Legal brief. source (May 16, 2020).

- Kublin, Craig. 2019. “US House Financial Services Committee Holds Landmark Hearing on ESG Reporting.” Environment, Land & Resources. source (May 16, 2020). Zaidi, Ali. 2019. “INSIGHT: Pending Federal ESG Legislation Could Yield Significant and Step-Wise Change.” Bloomberg Law. source (May 16, 2020). Corporate Human Rights Risk Assessment, Prevention, and Mitigation Act. 2019. (U.S. House of Representatives) source