Estimating the Impact of Nation’s Largest Single Investment in Community Colleges

Abstract

The Trade Adjustment Assistance Community College and Career Training (TAACCCT) grant represented an unprecedented investment by the federal government in integrated postsecondary education and workforce training offered primarily by community and technical colleges. Between 2011 and 2018, 256 grants totaling nearly $2 billion were awarded through four rounds of competitive grants. Ultimately, 630 community colleges were represented in the overall group of 729 colleges and universities funded by TAACCCT, with community colleges making up 85 percent of all postsecondary institutions securing these grants (Cohen, 2017). More than at any other time in the 100-plus-year history of community colleges, the TAACCCT grant spotlighted their critical role in responding to economic downturns and preparing workers for a future in which postsecondary education and credentials are a necessity.

This brief presents results of a meta-analysis of quasi-experimental design (QED) evaluation studies to estimate the average effects of TAACCCT grants on four student outcomes: program completion, credential attainment, post-training employment, and pre- to post-training wage change. Complementing emerging evidence on TAACCCT reported by the Urban Institute (see Cohen et al., 2017; Durham et al., 2017; Eyster, Cohen, Mikelson, & Durham, 2017; and Durham, Eyster, Mikelson, & Cohen, 2017) and forthcoming results from Abt Associates’ national impact evaluation, we hope this brief contributes to a fuller understanding of the impact of TAACCCT on the outcomes of student participants, many of whom enrolled in community colleges to master skills needed to secure living-wage jobs in the aftermath of the Great Recession.

Acknowledgments

We would like to thank Lumina Foundation for their generous support of this work. Particularly, we would like to thank Frank Essien, Wendy Sedlak, and Holly Zanville for their helpful feedback and encouragement throughout the project. The authors want to acknowledge the team of researchers who made this meta-analysis possible, including New America colleagues Mary Alice McCarthy, team leader; Iris Palmer; and Sophie Nguyen; and Deborah Richie at Bragg & Associates, Inc. Without their eye for detail and commitment to the project, this research would not have been possible. Thank you to all of our New America colleagues including Sabrina Detlef, Maria Elkin, Riker Pasterkiewicz and Julie Brosnan. We also gratefully acknowledge the insights provided by Dr. Francesca Fiore, who carried out statistical analyses on TAACCCT grants that informed our work, and Dr. Robin Lasota and Dr. Burt Barnow, who shared valuable comments with us on the technical specifications of our approach.

Introduction

The Trade Adjustment Assistance Community College and Career Training (TAACCCT) grant represented an unprecedented investment by the federal government in integrated postsecondary education and workforce training offered primarily by community and technical colleges. Between 2011 and 2018, 256 grants totaling nearly $2 billion were awarded through four rounds of competitive grants. Ultimately, 630 community colleges were represented in the overall group of 729 colleges and universities funded by TAACCCT, with community colleges making up 85 percent of all postsecondary institutions securing these grants (Cohen, 2017). More than at any other time in the 100-plus-year history of community colleges, the TAACCCT grant spotlighted their critical role in responding to economic downturns and preparing workers for a future in which postsecondary education and credentials are a necessity.

This brief presents results of a meta-analysis of quasi-experimental design (QED) evaluation studies to estimate the average effects of TAACCCT grants on four student outcomes: program completion, credential attainment, post-training employment, and pre- to post-training wage change. Complementing emerging evidence on TAACCCT reported by the Urban Institute (see Cohen et al., 2017; Durham et al., 2017; Eyster, Cohen, Mikelson, & Durham, 2017; and Durham, Eyster, Mikelson, & Cohen, 2017) and forthcoming results from Abt Associates’ national impact evaluation, we hope this brief contributes to a fuller understanding of the impact of TAACCCT on the outcomes of student participants, many of whom enrolled in community colleges to master skills needed to secure living-wage jobs in the aftermath of the Great Recession.

The TAACCCT Grant

Seeking policy levers to help turn around the nation’s weakest economy since the Great Depression, Congress created TAACCCT and tasked the U.S. Department of Labor (DOL) with administering the grant program, in collaboration with the U.S. Department of Education (ED). Making an unprecedented investment in the nation’s community and technical colleges, the DOL sought to direct its federal-funding authority in the Trade Adjustment Act (TAA) to substantially increase college access and credential attainment for adult workers detrimentally impacted by the recession. Beginning October 1, 2011, and continuing through September 30, 2018, almost $2 billion was invested in integrating postsecondary education and workforce training into programs of study in a wide range of occupational fields, from manufacturing to healthcare to energy. The DOL charged grantees with implementing programs that would not only help students secure immediate employment but also create career pathways that could offer longer-term economic and other benefits to participants over a lifetime. Put succinctly, the major goal of TAACCCT was to “provide workers with the education and skills to succeed in high-wage, high-skill occupations” (DOL, 2016, p. 3).

A specific charge of the TAACCCT grant was for community and technical colleges, and other postsecondary institutions, to enroll adult workers who had lost their jobs or who needed initial training or retraining to find employment in a dramatically changing workforce. From 2011 to 2018, four-year TAACCCT grants were made to all 50 states, Puerto Rico, and the District of Columbia (DC) with the stipulation that these federal funds be used to implement new or updated programs of study using evidence-based innovations and strategies that increase the capacity of colleges to deliver more and better integrated postsecondary education and workforce training. Results reported by the DOL (2019) after the conclusion of the grant noted that TAACCCT supported an extensive amount of this activity.

TAACCCT grants created nearly 2,700 new or redesigned programs and enrolled over 500,000 students who earned more than 350,000 credentials. Across all rounds, the DOL awarded $393,734,412 in institutional grants to 146 single institution grantees, $1,120,036,098 to 84 single-state consortia, and $412,014,608 to 26 multi-state consortia (DOL, 2016). Manufacturing and healthcare were the two sectors with the largest grant activity, followed in descending order by energy, information technology, transportation and logistics, green technology, and agriculture (DOL, 2016).

Because TAACCCT sought to address the needs of individuals detrimentally impacted by the nation’s depressed economy, the student populations targeted for recruitment and enrollment reflected this phenomenon. The Solicitation for Grant Application (DOL, 2011) articulated that the TAACCCT should target “workers who have lost their jobs or are threatened with job loss as a result of foreign trade” (p. 1). As the economy improved in an uneven way across the country, the TAACCCT grantees were encouraged to implement programs targeting individuals eligible for training under the “TAA for Workers” program, as well as veterans, students with disabilities, and students who qualified for Pell grants. However, throughout the entire grant period, the DOL maintained a consistent emphasis on enrolling non-traditional age students, including individuals who were unemployed or underemployed. Reporting participation for these diverse populations was also a priority of performance-reporting requirements, so student enrollment was reported by grantees by gender, race/ethnicity, employment and enrollment status (full- or part-time), and veteran, disability, and Pell-eligibility status; however, evaluation results measuring the impact of the grants were not required for sub-populations, which is a point we will return to toward the conclusion of this paper.

After three rounds of TAACCCT grant awards, Durham et al. (2017) reported the average age of TAACCCT participants was 31 years, and also described a higher proportion of men than women enrolled in grant-funded programs (60% compared to 40%, respectively). Whereas the distribution by race/ethnicity of TAACCCT participants was similar to the nation’s population as a whole, the proportion of grant participants identifying with a non-white racial or ethnic group was lower than the overall average enrollment of racially minoritized groups in community and technical colleges nationally (59% versus 49%, respectively). This statistic varied by round but never approached the level of racial/ethnic minority representation in student enrollment in two-year colleges nationwide. Given the preponderance of grant activity in manufacturing and other occupations historically predominated by males, it is not surprising that more total participants were male than female, although it seems TAACCCT could have done more to close this gender gap. Interestingly, TAACCCT grants tended to enroll slightly more full- than part-time students, which is counter to national enrollment statistics for community colleges (American Association of Community Colleges, 2019); however, this relationship flipped between round three and round four when a higher proportion of TAACCCT participants were part-time enrolled and also full-time employed (46%), mirroring national statistics and possibly also reflecting the improving national economy.

Besides the occupational-technical programs created or improved with TAACCCT funding, grantees were encouraged to implement core elements identified as having sufficiently rigorous evidence to support federal funding. Although the focus shifted somewhat across the rounds of grant awards, core elements consistently mentioned in the Solicitation for Grant Application (SGA) included the general categories of evidence-based designs; career pathways and stackable credentials; transfer and articulation; online and technology-enabled instruction; employer engagement; and strategic alignment with industry, governors, the public-workforce system, and others. These core elements help organize and provide some consistency for the wide-ranging programmatic approaches that grantees chose to pursue in the TAACCCT grant.

Third-Party Evaluation of TAACCCT

All rounds of the SGA asked applicants to implement and replicate evidence-based models, programs, and practices. The emphasis on evidence-based strategies seems to reflect the Obama administration’s “preference for competitive grants and evidence of effectiveness in designing its grant programs” (Haskins & Margolis, 2014, p. 192), mimicking the efforts by ED to apply the gold, silver, and bronze standard. However, this approach may not have accounted for the lack of evidence of the impact of a wide range of reforms in the context of the community college, including numerous reforms referenced in the TAACCCT SGA such as career pathways, Prior Learning Assessment (PLA), and intensive student services. Even so, a comprehensive approach to evaluation was seen as critical to advancing the TAACCCT grants, and grantees received funding to secure third-party evaluation that could measure implementation relative to student outcomes using designs that would produce evidence on what is working.

Round 1 of TAACCCT asked applicants to use an evidence-based framework for preparing proposals, recognizing that levels of evidence can be strong, moderate, or preliminary. These levels are aligned to the Clearinghouse for Labor Evaluation and Research (CLEAR) standards of evidence created by the United States Department of Labor (DOL). A supplementary blueprint for the TAACCCT evaluation, advocated by ED, was the i3 grant program, which sought evidence of impact consistent with the levels of rigorous evidence used by the Institute of Education Sciences (IES). In this schema, strong evidence refers to research and evaluation that addresses causal inference and conclusions (i.e., high internal validity) with sufficient sites and participants to suggest that interventions can be scaled up. Well-implemented experiments and QEDs supporting the effectiveness of programs and reform strategies fit this definition. Moderate evidence is generated by experiments, QEDs, and correlational designs with strong statistical controls for selection bias that provide some information useful to causal inference and conclusions but lack broader generalizability. The third level of evidence, preliminary, refers to research yielding promising evidence of limited generalizability that is based on descriptive tracking studies and pre- and post-treatment comparison studies (Zandniapour & Deterding, 2018). On their own, these studies lack sufficient quality evidence to support scaling up.

The DOL also required grantees to provide annual performance report results for grant participants, as well as matched comparison groups. Rounds 2 through 4 continued the focus on rigorous evidence begun in Round 1 but moved to a requirement for a third-party evaluation to estimate the impact of the grant on student outcomes. Applicants were required to submit an evaluation plan and budget focusing on both implementation and impact. DOL’s directive was to use the most rigorous evaluation design feasible to estimate the effects of the grant, whenever possible using an experimental or quasi-experimental design (QED). By QED, we mean study designs that are not experimental in the sense of randomized control trials but that use alternative designs enabling researchers to estimate causal effects when randomization is determined to be infeasible or inappropriate. The DOL also required national evaluation activities involving all TAACCCT grants to assess implementation and impact, with this aspect conducted by the Urban Institute and Abt Associates. Review of the TAACCCT evaluation plans showed that most third-party evaluations intended to use propensity score matching (PSM), with 70% of Rounds 3 and 4 evaluations specifying PSM as the design most feasible for estimating causal impacts. Only about 17% of the evaluations planned to conduct correlational (non-causal) pre-post or outcomes-only studies, and even fewer included plans for experimental designs (Cohen et al., 2017).
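For readers unfamiliar with PSM, the matching step can be sketched as below. This is a minimal illustration and not the procedure of any particular TAACCCT evaluation: it assumes each student already has an estimated propensity score (commonly obtained from a logistic regression on baseline characteristics), and the function name, the caliper value, and the student identifiers are all hypothetical.

```python
def match_nearest(treated, comparison, caliper=0.05):
    """Greedy 1:1 nearest-neighbor matching on propensity scores.

    treated, comparison: dicts mapping a student id to an estimated
    propensity score (hypothetical inputs for illustration).
    caliper: largest score gap allowed for a match (assumed value).
    Returns a list of (treated_id, comparison_id) pairs.
    """
    available = dict(comparison)  # comparison students not yet used
    pairs = []
    # Process treated students in order of propensity score.
    for t_id, t_score in sorted(treated.items(), key=lambda kv: kv[1]):
        if not available:
            break
        # Closest remaining comparison student by score distance.
        c_id = min(available, key=lambda k: abs(available[k] - t_score))
        if abs(available[c_id] - t_score) <= caliper:
            pairs.append((t_id, c_id))
            del available[c_id]  # matching without replacement
    return pairs


# Hypothetical scores: t3 has no comparison student within the caliper.
treated_scores = {"t1": 0.30, "t2": 0.62, "t3": 0.95}
comparison_scores = {"c1": 0.31, "c2": 0.60, "c3": 0.10}
matched_pairs = match_nearest(treated_scores, comparison_scores)
```

Treated students left unmatched (here, `t3`) drop out of the analytic sample, which is one reason matched samples in evaluation reports can be smaller than total program enrollment.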

The TAACCCT grant program wrapped up one year prior to this writing, but only a handful of TAACCCT evaluation studies have been published (see, for example, Bragg & Krismer, 2016). This study fills a critical void in understanding the overall impact of TAACCCT as a federal policy focusing extensively on community and technical colleges.

Purpose of the Study

This brief describes findings from a meta-analysis of third-party evaluation (TPE) studies using QEDs to determine the impact of the TAACCCT grants on students’ educational and employment outcomes. The primary objective of this study was to examine the merits of a novel data source for meta-analysis (third-party evaluation reports using QEDs) for estimating the average effects of TAACCCT funding on student-level outcomes. Our second objective was to consider the implications of meta-analysis as a methodological approach for evaluating future postsecondary education and workforce training policy. We conclude with a brief discussion of the potential for improved alignment of large-scale federal investments with rigorous evaluation and meta-analytic designs that reveal effects, and we use this information for a second report offering recommendations for future federal investments in systematic and rigorous evaluation of community and technical college education (Bragg, 2019).

Meta-Analysis Methods

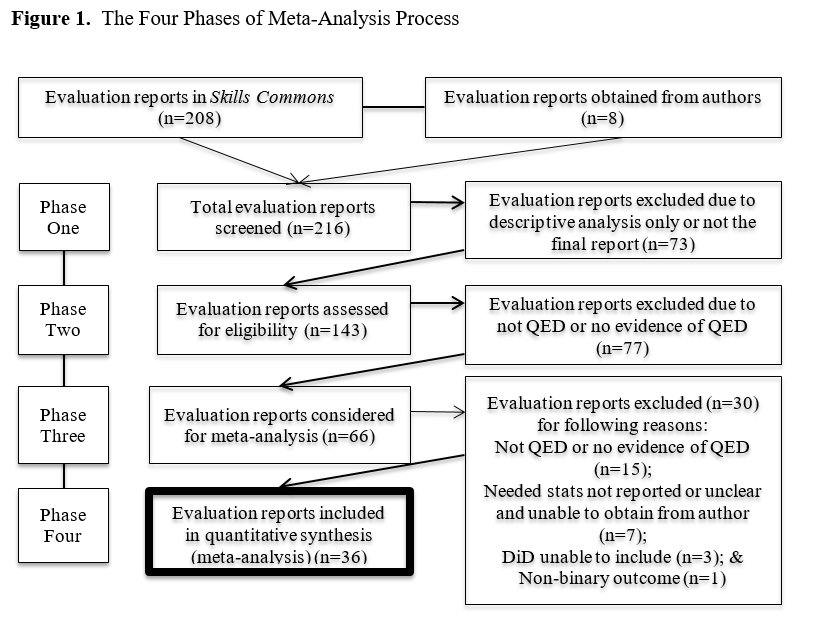

Meta-analysis aggregates statistical findings across multiple studies. Used to inform such questions as “What does the research tell us about this intervention?”, meta-analysis is commonly applied to synthesize epidemiology and health research and is increasingly found in the social sciences and education (Cooper, Hedges, & Valentine, 2009). A meta-analysis process distills hundreds of publications into a handful of studies that meet a set of criteria for inclusion. The preliminary data for this meta-analysis came from 216 TAACCCT evaluation reports (comprising 84% of all TAACCCT grants), from which 36 evaluation reports were selected for inclusion after a four-phase review process. The vast majority of final third-party evaluation reports were accessed from the SkillsCommons Repository (see skillscommons.org), but a few reports were obtained directly from evaluators when a search of the SkillsCommons Repository did not yield a copy of the final report. The four-phase process for reviewing and making decisions on inclusion/exclusion is described below.

In Phase One, the research team reviewed each publicly available TAACCCT evaluation report. Two reviewers read each report, identifying the interventions, looking for evidence of a grant theory of change, quantitatively scoring implementation and outcomes, and identifying the evaluation design, including comparison groups.

In Phase Two, the reports that claimed to employ QED (n=143) were reviewed to determine whether they were plausibly QED studies. This phase required careful reading; many reports contained treatment and comparison groups because of DOL instructions that third-party evaluations should include comparison studies, but upon further inspection were determined by reviewers to not be QED. Recognizing that evaluators faced pressure to implement QEDs even in the face of data limitations and other methodological constraints, reviewers also detailed efforts to implement QEDs that were unsuccessful. An additional component of this phase was determining which outcomes might be sufficiently represented in the evaluation reports that a critical mass of data points were present. We determined the outcomes of program completion, credential completion, employment, and wage change to be the most meaningful and plentiful of measures used across the full spectrum of evaluation studies for TAACCCT, and we narrowed to these outcomes in the next phase of the review process.

In Phase Three, the studies where authors claimed to have used any form of experimental or quasi-experimental design with causal estimates linking TAACCCT program participation to the outcomes of program completion, credential completion, employment, and wage change were evaluated for inclusion in our meta-analysis (n=66). The Phase Three review determined whether the studies included evidence of a QED; all but two of the studies were found to use propensity score matching (PSM). Given that the TAACCCT evaluation reports had not been published in refereed journals and therefore were not subject to the rigorous methodological review process associated with publication of meta-analysis studies in the literature, we established selection criteria that took into account factors normally considered in peer-refereed review. Under these criteria, study authors had to identify their evaluation study design as a QED and describe aspects of the design in the text of their evaluation report in sufficient depth and detail to provide reasonable confidence that the study design was actually a QED. Studies reviewed in Phase Three where the evaluation report lacked sufficient statistical information consistent with the purported approach to QED were eliminated from review. However, a sub-set of studies that lacked a specific statistic needed for the meta-analysis (i.e., a sample size or standard error statistic) but otherwise appeared to be bona fide QEDs were identified as potential QEDs, and the authors of these studies, totaling 15, were contacted via email and phone to request the missing statistic that would allow us to include the study. All but four authors provided the requested statistic so that these studies could be included in our meta-analysis. This step was important, as these added studies made up 31% of the total evaluations included here.

Phase Four entailed conducting the meta-analysis using the 36 studies that met our inclusion criteria. At this point, each report’s outcomes (relative to treatment and comparison groups) were recorded and converted to standardized effects. Studies that were not included in the final meta-analysis either lacked requisite statistics for inclusion or, after all evidence was examined, were determined to be descriptive in nature and actually not QED studies. Ultimately, 60 effects representing education and employment outcomes were drawn from the final set of 36 studies included in the meta-analysis.

Table 1 provides a summary of the TAACCCT grants included in the meta-analysis study by round, grant title, author(s), grant type (single institution, state consortium, or multi-state consortium), location, industry sector, and outcome(s). No QED studies were included from Round 1, but numerous studies were included from each of the other three rounds, with 14 studies from Round 2, 9 studies from Round 3, and 13 studies from Round 4. The breakdown of grant type included 12 studies of TAACCCT grants awarded to a single institution, 15 studies of single-state consortia, and 9 studies of multi-state consortia. As with the overall TAACCCT grants, manufacturing was the most prevalent industry sector included in the evaluations, followed by healthcare (8 studies), information technology (IT; 5 studies), and multi-sector grants (4 studies) that also included manufacturing, healthcare, and IT. Other sectors represented in the grants included energy; transportation, distribution, and logistics; business services; various trades (e.g., construction, electronics); and various other occupational areas.

Table 1. Summary of TAACCCT Grant Characteristics in Meta-Analysis Study

| Round | TAACCCT Grant Title | Author(s) | Grant Type | Location | Industry Sector | Outcomes |
|---|---|---|---|---|---|---|
| 2 | AF-TEN | PTB & Associates (2016) | Multi-state Consortium | AL & FL (5 Colleges) | Welding; Industrial Electronics | Credential Completion; Employment |
| 2 | Allied Health Expansion | Caffey (2016) | Single Institution | NM | Healthcare | Program Completion; Employment; Wage Change |
| 2 | AME Manufacturing | Ho (2016) | State Consortium | MN (3 Colleges) | Manufacturing | Program Completion; Employment; Wage Change |
| 2 | Amplifying Montana | Feldman, Staklis, Hong, & Elrahman (2016) | Single Institution | MT | Manufacturing | Program Completion |
| 2 | Competency-Based Education in Community Colleges | Person, Thomes, Bruch, Johann, & Maestas (2016) | Multi-state Consortium | FL, OH, & TX (3 Colleges) | Information Technology (IT) | Credential Completion |
| 2 | Consortium for Bioscience | Alamprese, Costelloe, Price, & Zeidenberg (2017) | Multi-state Consortium | CA, FL, IN, NC, PA, TX, UT, WI (12 Colleges) | Bioscience | Credential Completion (2 effects) |
| 2 | CT Health and Life Sciences | Mokher & Pearson (2016) | State Consortium | CT (5 Colleges) | Health Sciences | Credential Completion |
| 2 | Iowa Advanced Manufacturing | Mora, Kemis, Callen, & Starobin (2016) | State Consortium | IA (15 Colleges) | Manufacturing | Credential Completion |
| 2 | Making the Future | Price, Sedlak, Roberts & Childress (2016) | State Consortium | WI (16 Colleges) | Manufacturing | Credential Completion; Employment |
| 2 | Minneapolis MAAC | Kundin & Dretzke (2016) | Single Institution | MN | Manufacturing | Program Completion (2 effects) |
| 2 | Online2Workforce | Jensen, Horohov, & Wright (2016) | Single Institution | KY | Business Services | Credential Attainment; Employment |
| 2 | Project IMPACT | Shain & Grandgenett (2016) | State Consortium | NE (5 Colleges) | Manufacturing | Credential Completion |
| 2 | ShaleNET | Dunham et al. (2016) | Multi-state Consortium | PA (4 Colleges) | Energy | Employment |
| 2 | Retraining the Gulf Workforce | Patnaik & Prince (2016) | Multi-state Consortium | LA & MS | Information Technology (IT) | Credential Completion |
| 3 | Bridging the Gap | Bellville et al. (2017) | State Consortium | WV (9 Colleges) | Multi-Sector | Credential Completion |
| 3 | Florida XCEL-IT | Swan et al. (2017) | State Consortium | FL (7 Colleges) | Information Technology (IT) | Program Completion; Wage Change |
| 3 | Golden Triangle | Harpole (2017) | Single Institution | MS | Manufacturing | Program Completion; Employment |
| 3 | HOPE | Good & Yeh-Ho (2017) | Multi-state Consortium | FL, MN & MI (5 Colleges) | Healthcare | Program Completion (5 effects) |
| 3 | LA Healthcare | Tan & Moore (2017) | State Consortium | CA (8 Colleges) | Healthcare | Program Completion |
| 3 | Maine is IT | Horwood, Usher, McKinney, & Passa (2017) | State Consortium | ME (7 Colleges) | Information Technology (IT) | Program Completion |
| 3 | Mission Critical Operations | NC State Industry Expansion Solutions (2017) | Multi-state Consortium | NC & GA | Information Technology; Engineering | Program Completion |
| 3 | North Dakota AM | Jensen, Horohov, & Wright (2016) | Single Institution | KY | Manufacturing | Program Completion |
| 3 | Northeast Resiliency Consortium | Price, Childress, Sedlak, & Roach (2017) | Multi-state Consortium | NJ (7 Colleges) | Multi-Sector | Program Completion; Credential Completion (2 effects) |
| 4 | Advancing Career & Training (ACT) for Healthcare | Price, Valentine, Sedlak, & Roberts (2018) | State Consortium | WI (16 Colleges) | Healthcare | Credential Completion; Employment; Wage Change |
| 4 | Adult Competency-Based Education (ACED) | Bragg, Cosgrove, Cosgrove, & Blume (2018) | Single Institution | UT | Multi-Sector | Program Completion; Employment |
| 4 | Advanced Manufacturing for Global Economy | Haviland, Van Noy, Kuang, Vinton, & Pardalis (2018) | Single Institution | OH | Manufacturing | Program Completion |
| 4 | Building Illinois Bioeconomy | New Growth Group (2018) | State Consortium | IL (5 Colleges) | Resource Management | Program Completion |
| 4 | Greater Memphis Alliance | Patnaik (2018) - impact; Juniper, C. (2018) | Multi-state Consortium | AR & TN (4 Colleges) | Manufacturing; Transport, Distribution & Logistics | Credential Completion |
| 4 | Healthcare Careers Work | WorkEd Consulting (2018) | Single Institution | GA | Healthcare | Program Completion |
| 4 | Heroes for Hire | Horwood, Campbell, McKinney, & Bishop (2018) | State Consortium | WV (3 Colleges) | Healthcare; Manufacturing | Program Completion |
| 4 | I Am STAR | Dockery et al. (2018) | Single Institution | OH | Manufacturing | Employment |
| 4 | Kan-TRAIN | Foster, Staklis, Ott & Moyer (2018) | State Consortium | KS (5 Colleges) | Multi-Sector | Credential Completion; Employment |
| 4 | New Mexico SUN | Dauphinee et al. (2018) | State Consortium | NM (11 Colleges) | Healthcare | Program Completion; Employment; Wage Change |
| 4 | Ohio Tech Net | The New Growth Group & The Ohio Education Resource Center (2018) | State Consortium | OH (11 Colleges) | Manufacturing | Program Completion |
| 4 | Plugged-In and Ready to Work | Styers, Haden, Cosby, & Peery (2018) | Single Institution | VA | Manufacturing | Employment |
| 4 | UDC Construction and Hospitality | Hendricks, Mitran, & Ferroggiaro (2018) | Single Institution | DC | Construction; Hospitality | Program Completion; Credential Completion |

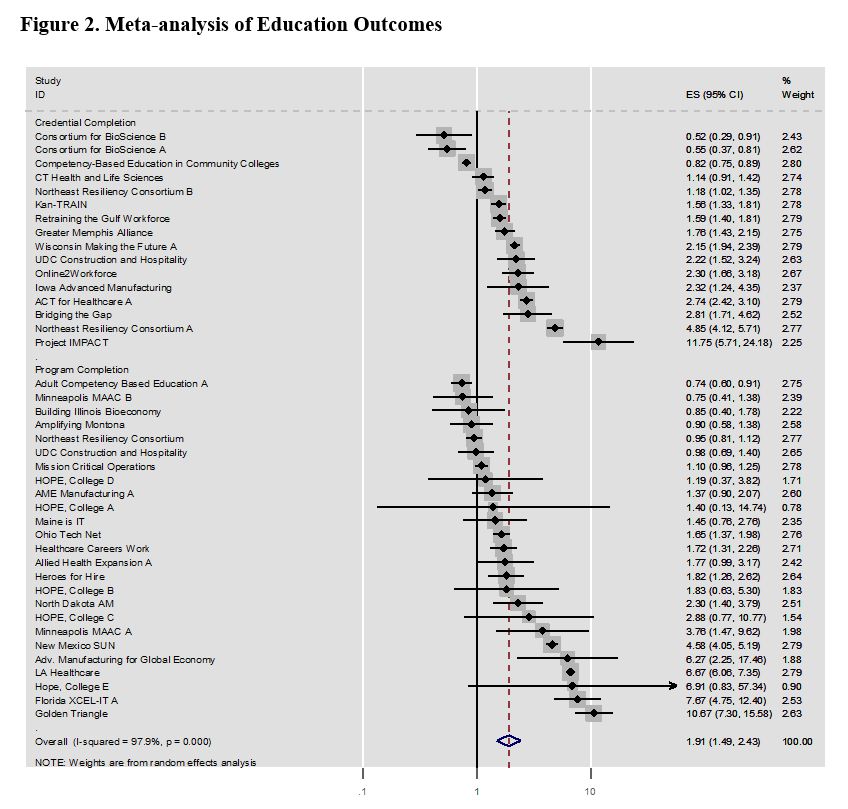

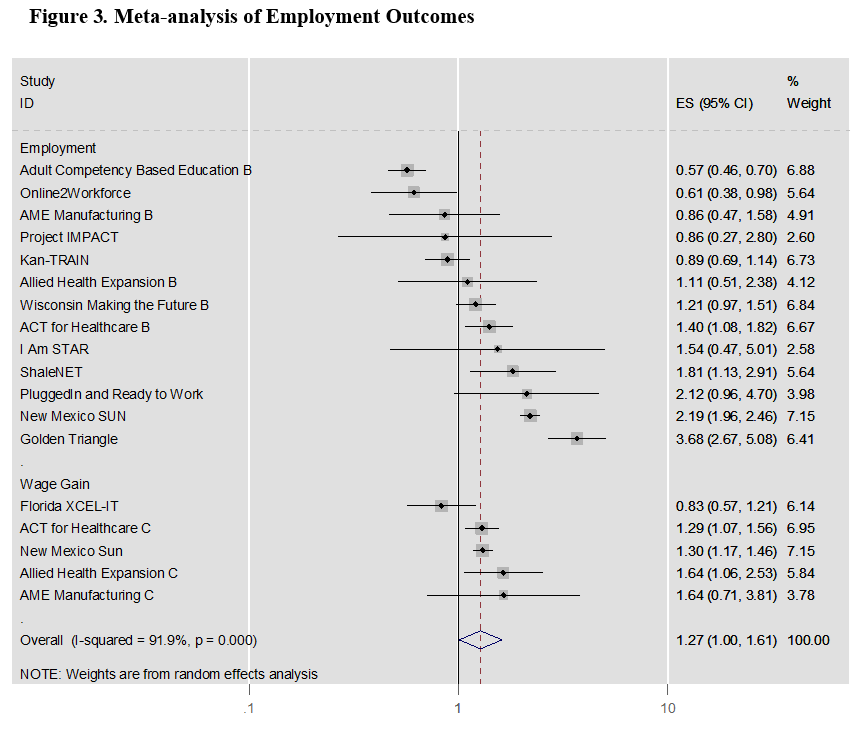

The relevant outcomes from qualified third-party evaluation reports were converted to a standardized effect in the form of an odds ratio and its associated standard error for the four outcomes: program completion, credential completion, post-program employment, and pre- to post-program wage change. Program completion and credential completion effects were pooled under the broader category of educational outcomes. Post-program employment and post-program wage change were likewise pooled and categorized as employment outcomes.
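As an illustration of what such a conversion can look like for a binary outcome (for example, completed versus did not complete), the sketch below computes an odds ratio and the standard error of its natural log from a two-by-two table of counts. The function name and the counts are hypothetical and are not drawn from any TAACCCT report; the conversion used for continuous measures such as wage change is not shown here because the text does not specify it.

```python
import math


def log_odds_ratio(treat_yes, treat_no, comp_yes, comp_no):
    """Odds ratio, log odds ratio, and standard error of the log odds ratio.

    Inputs are counts from a 2x2 table: treatment and comparison students
    who did / did not achieve the outcome. All four counts must be > 0.
    """
    odds_ratio = (treat_yes * comp_no) / (treat_no * comp_yes)
    log_or = math.log(odds_ratio)
    # Standard large-sample formula for the SE of a log odds ratio.
    se_log_or = math.sqrt(
        1 / treat_yes + 1 / treat_no + 1 / comp_yes + 1 / comp_no
    )
    return odds_ratio, log_or, se_log_or


# Hypothetical counts: 60 of 100 treated and 50 of 100 comparison
# students complete their program.
example_or, example_log_or, example_se = log_odds_ratio(60, 40, 50, 50)
```

An odds ratio above 1 indicates higher odds of the outcome in the treatment group; meta-analytic pooling is usually carried out on the log scale, where the sampling distribution is closer to normal.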

Using a random effects model, these standardized effects for education and employment outcomes were weighted and pooled, resulting in “forest plot” visualizations and an estimate of the overall effect for each of the two outcome categories. In addition, a measure of heterogeneity was calculated to gauge the consistency of the studies’ results across the education and employment outcomes analyzed in this brief. Appendix A provides additional technical narrative to supplement the description of our approach for this study. Even though more options for conducting meta-analysis are rapidly emerging as this form of research in education grows (including various forms of meta-regression), we chose a fairly straightforward approach to this initial meta-analysis and may move to more advanced forms if the data support them.
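The weighting and pooling step can be sketched as follows. The brief does not state which between-study variance estimator was used, so this example assumes the widely used DerSimonian-Laird estimator; the function name and the input values are hypothetical.

```python
import math


def pool_random_effects(log_ors, std_errors):
    """Random-effects pooling of log odds ratios.

    Assumes the DerSimonian-Laird estimator for the between-study
    variance (tau^2); other estimators would give different weights.
    Returns (pooled odds ratio, SE of pooled log OR, z, tau^2).
    """
    k = len(log_ors)
    w = [1 / se**2 for se in std_errors]  # inverse-variance weights
    fixed = sum(wi * yi for wi, yi in zip(w, log_ors)) / sum(w)
    # Cochran's Q: weighted squared deviations from the fixed-effect mean.
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, log_ors))
    c = sum(w) - sum(wi**2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - (k - 1)) / c)
    # Random-effects weights add tau^2 to each study's variance.
    w_star = [1 / (se**2 + tau2) for se in std_errors]
    pooled = sum(wi * yi for wi, yi in zip(w_star, log_ors)) / sum(w_star)
    se_pooled = math.sqrt(1 / sum(w_star))
    return math.exp(pooled), se_pooled, pooled / se_pooled, tau2


# Two hypothetical studies with log odds ratios 0.0 and 1.0.
pooled_or, pooled_se, pooled_z, tau_squared = pool_random_effects(
    [0.0, 1.0], [0.1, 0.1]
)
```

Because the between-study variance enters every weight, highly heterogeneous studies end up weighted more equally than they would be under a fixed-effect model, and the pooled standard error widens accordingly.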

Findings

A series of forest plots represents our meta-analysis results for the selected TAACCCT grant evaluation studies. Figure 1 portrays educational outcomes, comprising program completion and credential completion, across 32 evaluation reports containing 41 effect sizes. The number “treated” by TAACCCT participation totals 38,589 observations. Figure 2 illustrates an overall positive effect of TAACCCT participation on education outcomes (ESOR = 1.905; z = 5.18, p < 0.001).

Figure 3 portrays a similar, positive effect of TAACCCT participation on the odds of employment outcomes. Across the 15 evaluation reports from which 18 effects were identified and included in the meta-analysis, TAACCCT participation was associated with a statistically significant, positive increase in the odds of employment outcomes (ESOR = 1.273; z = 2.00, p = 0.046). These 18 employment-related effects comprised 8,870 TAACCCT observations.
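The reported p-values follow from the z statistics under a standard normal reference distribution. The short check below assumes a two-sided test, which the brief does not state explicitly; the function name is ours.

```python
import math


def two_sided_p(z):
    """Two-sided p-value for a z statistic under the standard normal."""
    # erfc(|z| / sqrt(2)) equals 2 * (1 - Phi(|z|)).
    return math.erfc(abs(z) / math.sqrt(2.0))


p_employment = two_sided_p(2.00)  # employment outcomes, z = 2.00
p_education = two_sided_p(5.18)   # education outcomes, z = 5.18
```

For z = 2.00 this gives roughly 0.0455, in line with the reported p = 0.046, and for z = 5.18 the value falls far below the 0.001 threshold.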

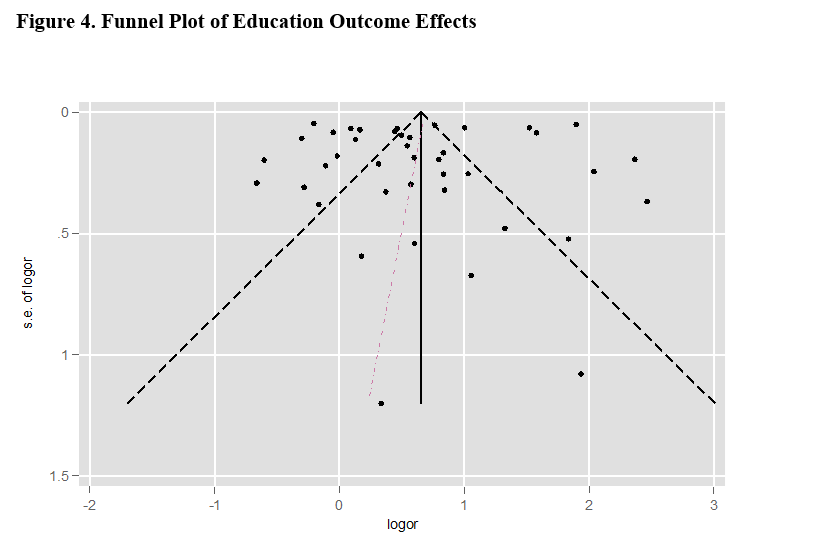

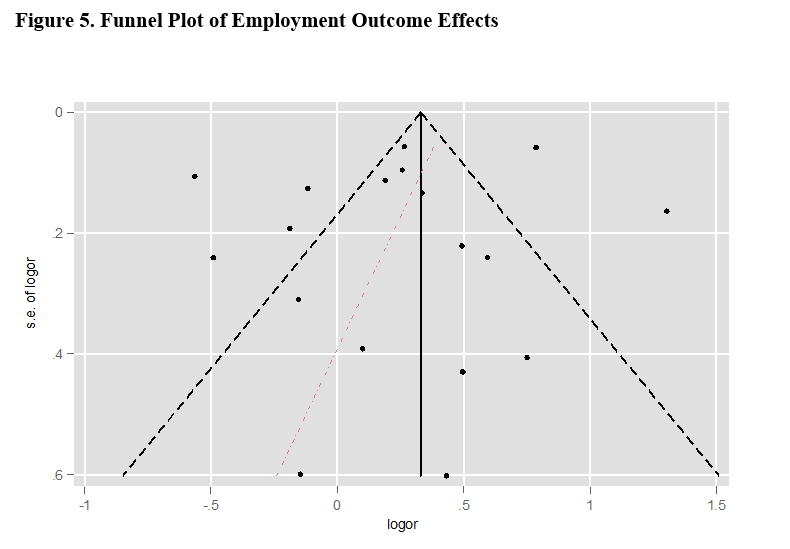

Funnel plots were used to test for biased results for educational and employment outcomes separately (Figure 4). In the traditional sense, publication bias can be considered moot here because our meta-analysis comprises evaluation reports not published in peer-refereed journals. However, publication bias could be relevant nonetheless because of the pressure evaluators face to report positive, statistically significant findings (Scriven, 2001). In other words, TAACCCT evaluators could have included only positive significant results of program participation and suppressed or omitted negative findings. An asymmetric distribution of effects in a meta-analysis funnel plot suggests publication bias; the funnel plots generated for the education outcomes (Figure 4) and employment outcomes (Figure 5) suggest no visual asymmetry (i.e., bias) in how effects are distributed across the evaluation reports. The commonly used Egger test (Egger et al., 1997) provides evidence of this lack of bias for both education (-0.381, t = -0.21, p = 0.832) and employment (-1.140, t = -0.80, p = 0.438) outcomes.
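For reference, the classic form of the Egger test regresses each effect's standard normal deviate (effect divided by its standard error) on its precision (one over the standard error) and examines the intercept; an intercept near zero is consistent with a symmetric funnel. The sketch below assumes that form, assumes the values reported above are the intercept and its t statistic, and uses hypothetical data; the exact variant the analysis used is not stated.

```python
import math


def egger_test(effects, std_errors):
    """Egger regression intercept and its t statistic.

    Fits (effect / SE) = intercept + slope * (1 / SE) by ordinary least
    squares. Needs at least three effects, and the effects must not lie
    exactly on the fitted line (otherwise the t statistic is undefined).
    """
    n = len(effects)
    if n < 3:
        raise ValueError("need at least three effects")
    y = [e / s for e, s in zip(effects, std_errors)]  # standard normal deviates
    x = [1.0 / s for s in std_errors]                 # precisions
    x_bar, y_bar = sum(x) / n, sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    residuals = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]
    resid_var = sum(r * r for r in residuals) / (n - 2)
    se_intercept = math.sqrt(resid_var * (1.0 / n + x_bar**2 / sxx))
    return intercept, intercept / se_intercept


# Hypothetical log odds ratios and standard errors for five studies.
demo_effects = [0.50, 0.70, 0.40, 0.60, 0.55]
demo_ses = [0.10, 0.20, 0.15, 0.30, 0.25]
demo_intercept, demo_t = egger_test(demo_effects, demo_ses)
```

The t statistic is compared against a t distribution with n - 2 degrees of freedom; a large p-value, as reported for both outcome groups here, gives no evidence of small-study asymmetry.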

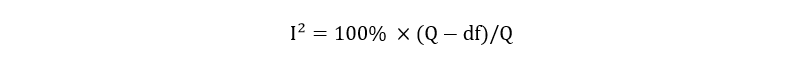

In addition to examining the possibility of biased results in the TAACCCT evaluation reports included in this meta-analysis, an I² statistic was calculated to gauge heterogeneity across the education and employment effects. The I² statistic provides a "measure of inconsistency in the studies' results" and captures the percentage of total variation across studies due to heterogeneity rather than chance (Higgins & Thompson, 2002). Because the TAACCCT evaluation reports vary in sample size, study design, and outcome measures, the I² statistic, which was developed with these common characteristics in mind, is well suited to gauge heterogeneity (Higgins et al., 2003). Heterogeneous effects appear to be a characteristic of both the education (I² = 97.9%, p < 0.001) and employment (I² = 91.9%, p < 0.001) outcomes.
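The I² statistic is derived from Cochran's Q, the weighted sum of squared deviations of each effect from the fixed-effect pooled estimate: I² = max(0, (Q - df) / Q) × 100, where df is the number of effects minus one. A minimal sketch, with a hypothetical function name and input values:

```python
def i_squared(effects, std_errors):
    """I-squared (percent) computed from Cochran's Q."""
    weights = [1 / se**2 for se in std_errors]  # inverse-variance weights
    pooled = sum(w * y for w, y in zip(weights, effects)) / sum(weights)
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    if q <= 0:
        return 0.0  # no observed variation across studies
    return max(0.0, (q - df) / q) * 100.0


# Two hypothetical, precisely estimated effects that disagree sharply.
demo_i2 = i_squared([0.0, 1.0], [0.1, 0.1])
```

In this toy case Q is 50 on 1 degree of freedom, so I² is 98%: nearly all of the observed variation reflects real differences between the studies rather than sampling error, which is the pattern the education and employment effects above display.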

The Appendix provides a summary of results of all studies by grant title, author(s), comparison sample, total analytic sample size, and effect size and standard error by outcome for each study. This summary table provides a concise overview of the educational outcomes in the meta-analysis, including program completion and credential completion, and of the employment outcomes, including employment and wage change.

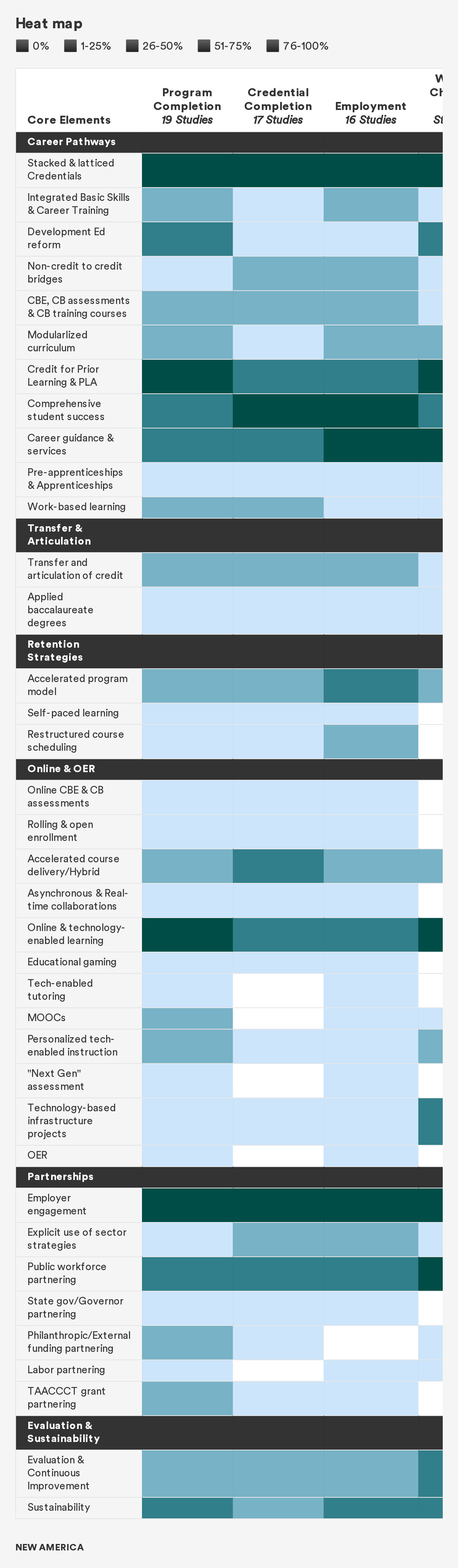

The final analysis conducted as part of this meta-analysis was a content analysis of the core elements purported by third-party evaluators to be implemented in the TAACCCT grants. This analysis involved two members of the research team independently reading and coding the grants using a rubric that identified SGA-specified core elements of all four rounds of the TAACCCT grants. Table 2 shows these results in the form of a “heat map,” which is a common way of visually displaying results associated with meta-analysis. Heat maps supplement meta-analysis studies by presenting the incidence of implementation of program elements, as used in our approach. In our case, the evaluation studies refer to core elements as part of a theory of change as well as planned implementation and provide varied levels of detail as to the extent to which they were actually implemented. Therefore, these heat maps should be interpreted as providing insights into core elements that may have some level of relationship to the average effects of the meta-analysis studies but not providing irrefutable evidence of impact.

The heat maps associated with this study show the prevalence of core elements implemented in TAACCCT grants. Occurring most frequently in these grants are core elements associated with career pathways, specifically stacked and latticed credentials, prior learning assessment (PLA), comprehensive student supports, and career and employment services and supports. Several core elements in the grants are associated with online learning, including assessment, open educational resources (OER), online and technology-enabled learning and, to a lesser extent, accelerated online learning. Also prevalent in the grants are employer engagement and workforce agency partnerships. Sustainability and evaluation are also documented in the grants. Taken together, these core elements give a sense of the grant-funded strategies that were implemented in programs funded by TAACCCT. They are not presumed to cause the positive outcomes, but they are potential contributors to impact, and they deserve consideration in future evidence-based federal investments such as TAACCCT.

Discussion

These results suggest TAACCCT had a positive effect on both the education and employment outcomes of those who participated in its funded programs and strategies. The magnitude of TAACCCT’s positive effect appears greater for the educational outcomes of program completion and credential completion compared to the employment outcomes, measured as post-program employment and pre- to post-program wage change. These findings, resulting from a thorough review of more than 200 TAACCCT evaluation reports, make an important contribution to estimating the impact of the TAACCCT grant’s federal investment in community and technical colleges.

Although positive results emerged from the meta-analysis, it is important to heed the caution of Pigott (2012, p. 146), a noted expert in meta-analysis techniques, who observes that “even if a systematic review yields equivocal results, a well-conducted systematic review should help to illuminate the issues” related to the effects. This study is limited to the subset of QED studies that provided sufficient methodological and statistical information for inclusion in the analysis and therefore should not be assumed to represent all evaluation results. Thus, these results should not be expected to stand alone as a single source guiding decision-making about future policy and program design. Instead, they complement the national evaluation efforts of the Urban Institute and ABT research teams, who continue to conduct independent evaluation studies to describe implementation and measure the impact of TAACCCT.

Complementing the quantitative results of the meta-analysis is a heat map, providing insight into the core elements implemented in the TAACCCT grants. In this analysis, the career pathways approach was implemented widely, including stackable and latticed credentials, prior learning assessment (PLA), comprehensive student supports, career advising and guidance, and employment supports. Online and technology-enabled learning and related online strategies were also implemented in a substantial number of the studies included in the meta-analysis. Partnerships between the community and technical colleges and employers and workforce agencies were also mentioned, and strategies emphasizing the importance of sustaining elements of the grant into the future were evident in the evaluation reports.

Evaluation was also mentioned as an important strategy associated with TAACCCT, with numerous grant reports offering recommendations to help grantees continue to track the educational and employment outcomes of grant-funded participants and add new participants into the tracking of these student outcomes in the future. Evaluators mentioned in their reports the importance of continuing to assess student outcomes, often recognizing complexities in carrying out rigorous impact evaluation.

Rigorous third-party evaluations using QED are challenging to conduct. As many as 70% of third-party evaluators made plans to implement a rigorous design for grants in Rounds 3 and 4, but far fewer of these designs were executed due to methodological, logistical, and other difficulties. Barriers that impeded the execution of rigorous evaluation designs are more fully explicated in Bragg (2019), a companion brief that offers recommendations for future federal investments in community and technical colleges. These suggestions include a call for the federal government to establish realistic expectations and fund professional development and technical assistance that help evaluators execute their studies using designs that illuminate a program’s impact on targeted student populations, both in aggregate and broken down for sub-populations, so that a determination can be made about whether outcomes are distributed equitably. More nuanced designs that delve into the impact of core elements, such as PLA and comprehensive student supports, are also needed, and this idea is supported by the two briefs completed by members of the New America team (Palmer & Nguyen, 2019; Love, 2019).

References

ABT Associates. (2018). Supporting education strategies to improve skills, employment, and earnings. Bethesda, MD: author. https://www.abtassociates.com/sites/default/files/2018-05/090Career_Pathways_Brochure_March2018-single-page.pdf

Alamprese, J. A., Costelloe, S., Price, C., & Zeidenberg, M. (2017). Consortium for bioscience credentials (c3bc): Final report. Bethesda, MD: Abt Associates.

American Association of Community Colleges. (2019, March). Fast facts. Washington DC: Author. https://www.aacc.nche.edu/wp-content/uploads/2019/05/AACC2019FactSheet_rev.pdf

Bellville, J., Schoeph, K., Wilkinson, A., Leger, R., Jenner, E., Lass, K., & Coffman, K. (2017). West Virginia bridging the gap TAACCCT Round 3 final evaluation report. Indianapolis, IN: Thomas P. Miller & Associates.

Borenstein, M., Hedges, L. V., Higgins, J. P., & Rothstein, H. R. (2010). A basic introduction to fixed‐effect and random‐effects models for meta‐analysis. Research Synthesis Methods, 1(2), 97–111.

Bragg, D., & Krismer, M. (2016). Using career pathways to guide students through programs of study. New Directions for Community Colleges, 176, 63–72.

Bragg, D., Cosgrove, J., Cosgrove, M., & Blume, G. (2018). Final evaluation of the ACED grant at Salt Lake Community College. Salt Lake City, UT: Salt Lake Community College and Chicago, IL: Bragg & Associates, Inc.

Bragg, D. (2019). Recommendations for future federal investments in community and technical education. Washington, DC: New America.

Caffey, D. (2016). Final external evaluation report TAACCCT Clovis Community College.

Cochran, W. G. (1954). The combination of estimates from different experiments. Biometrics, 10(1), 101–129.

Cohen, E., Mikelson, K. S., Durham, C., & Eyster, L. (2017). TAACCCT characteristics: The Trade Adjustment Assistance Community College and Career Training grant program brief 2. Washington, DC: Urban Institute. https://www.dol.gov/sites/dolgov/files/OASP/legacy/files/20170308-TAACCCT-Brief-2.pdf

Cooper, H., Hedges, L. V., & Valentine, J. C. (Eds.). (2009). The Handbook of Research Synthesis and Meta-Analysis. New York: Russell Sage Foundation.

Davis, M. R., Hume, M., Carr, S. L., Dauphinee, T. L., & Heredia‐Griego, M. (2018). Round Four status report: Progress toward achieving systemic change. Albuquerque, NM: Cradle to Career Policy Institute, University of New Mexico.

Dauphinee, T., & Bishwakarma, R. (2018). SUN Path comparison study. Albuquerque, NM: Cradle to Career Policy Institute, University of New Mexico.

DerSimonian, R., & Laird, N. (1986). Meta-analysis in clinical trials. Controlled Clinical Trials, 7(3), 177–188.

Dockery, J., Bottomley, M., Murray, C., Tichnell, T., Stover, S., Schroeder, N., & Franco, S. (2018). TAACCCT final evaluation report Northwest State Community College of Ohio industrial automation manufacturing innovative strategic training achieving results (IAM iSTAR) initiative. Dayton, OH: Wright State University Applied Policy Research Institute.

Dunham, K., Hebbar, L., Khemini, D., Comeaux, A., Diaz, H., Folsom, L., & Kuang, S. (2016). ShaleNET Round 2 TAACCCT grant third-party evaluation final report. Oakland, CA: Social Policy Research Associates.

Egger, M., Smith, G. D., Schneider, M., & Minder, C. (1997). Bias in meta-analysis detected by a simple, graphical test. BMJ, 315(7109), 629–634.

Eyster, L., Cohen, E., Mikelson, K., & Durham, C. (2017). TAACCCT approaches: Targeted industries, and partnerships: The Trade Adjustment Assistance Community College and Career Training grant program brief 3. Washington, DC: Urban Institute. https://www.urban.org/research/publication/taaccct-approaches-targeted-industries-and-partnerships

Feldman, J., Staklis, S., Hong, Y., & Elrahman, J. (2016). Evaluation report of the amplifying Montana's advanced manufacturing and innovation industry (AMAMII) project. Berkeley, CA: RTI International.

Foster, L. R., Staklis, S., Ott, N. R., & Moyer, R. (2018). Kansas technical re/training among industry-targeted networks (Kan-TRAIN). Portland, OR: RTI International.

Good, K., & Yeh-Ho, H. (2017). Orthopedics, Prosthetics, or Pediatrics (HOPE) careers consortium. Denver, CO: McREL International.

Harpole, S. H. (2017). Final evaluation golden triangle modern manufacturing project. SHH Consulting.

Haskins, R., & Margolis, G. (2014). Chapter 7: The Trade Adjustment Assistance Community College and Career Training Initiative. Show Me the Evidence: Obama's Fight for Rigor and Results in Social Policy. Washington, DC: Brookings Institution Press.

Haviland, S., Van Noy, M., Kuang, L., Vinton, J., & Pardalis, N. (2018). Evaluation of Clark State Community College's advanced manufacturing to compete in a global economy (AMCGE) training program. Piscataway, NJ: Education and Employment Research Center, Rutgers University.

Hendricks, A., Mitran, A., & Ferroggiaro, E. (2018). University of the District of Columbia final annual evaluation report. Fairfax, VA: ICF.

Higgins, J. P., & Thompson, S. G. (2002). Quantifying heterogeneity in a meta‐analysis. Statistics in Medicine, 21(11), 1539–1558.

Higgins, J. P., Thompson, S. G., Deeks, J. J., & Altman, D. G. (2003). Measuring inconsistency in meta-analyses. BMJ, 327(7414), 557–560.

Hillman, N. W., & Orians, E. L. (2013). Community colleges and labor market conditions: How does enrollment demand change relative to local unemployment rates? Research in Higher Education, 54(7), 765–780.

Ho, H. (2016). Advanced manufacturing education (AME) alliance evaluation: Final evaluation report. Charleston, WV: McREL International.

Horwood, T.J., Usher, K.P., McKinney, M.T., & Passa, K. (2017). Maine is IT! Program evaluation final report. Fairfax, VA: ICF.

Horwood, T., Campbell, J., McKinney, M., & Bishop, M. M. (2018). Heroes for hire (H4H) program evaluation final report. Fairfax, VA: ICF.

Huber, C. R., & Kuncel, N. R. (2016). Does college teach critical thinking? A meta-analysis. Review of Educational Research, 86(2), 431–468.

Jensen, J., Horohov, J., & Wright, C. (2016). Online2Workforce (O2W) TAACCCT Round II grant final evaluation report. Lexington, KY: University of Kentucky Evaluation Center.

Juniper, C. (2018). Greater Memphis alliance for competitive workforce (GMACW) TAACCCT Round 4 grant implementation evaluation final report. Austin, TX: Ray Marshall Center, University of Texas at Austin.

Kundin, D. M., & Dretzke, B. J. (2016). An evaluation of the manufacturing advancement and assessment center (MAAC) program, final report. St. Paul, MN: Center for applied research and educational improvement, College of Education and Human Development, University of Minnesota.

Love, I. (2019). Navigating the Journey: Encouraging Student Progress through Enhanced Support Services in TAACCCT. Washington, DC: New America.

NC State Industry Expansion Solutions. (2017). Final evaluation report Round 3 TAACCCT grant: Mission critical operations. Raleigh, NC: NC State Industry Expansion Solutions.

New Growth Group. (2018). Building Illinois' bioeconomy (BIB) consortium final evaluation report. Cleveland, OH: New Growth Group.

Mikelson, K. S., Eyster, L., Durham, C., & Cohen, E. (2017, March). TAACCCT goals, design, and evaluation: The Trade Adjustment Assistance Community College and Career Training grant program brief 1. Washington, DC: Urban Institute. https://www.dol.gov/sites/dolgov/files/OASP/legacy/files/20170308-TAACCCT-Brief-1.pdf

Mokher, C., & Pearson, J. (2016). Evaluation of the Connecticut health and life sciences career initiative final report. Arlington, VA: CNA Analysis & Solutions.

Mora, X., Kemis, X., Callen, X., & Starobin, Y. (2016). 2016 I-AM annual evaluation report. Ames, IA: Research Institute for Studies in Education (RISE), Iowa State University.

The New Growth Group, & The Ohio Education Resource Center. (2018). The Ohio Skills Innovation Network (Ohio TechNet) final evaluation report. Cleveland, OH: The New Growth Group.

Palmer, I., & Nguyen, S. (2019). Connecting Adults to College with Credit for Prior Learning. Washington, DC: New America. https://www.newamerica.org/education-policy/reports/lessons-adapting-future-work-prior-learning-assessment/

Patnaik, A., & Prince, H. (2016). Retraining the Gulf Coast through information technology pathways: Final impact evaluation report. Austin, TX: Ray Marshall Center.

Patnaik, A. (2018). Greater Memphis alliance for competitive workforce (GMACW) TAACCCT Round 4 grant impact evaluation final report. Austin, TX: Ray Marshall Center, University of Texas at Austin.

Person, A. E., Thomas, J., Bruch, J., Johann, A., & Maestas, N. (2016). Outcomes of competency-based education in community colleges: Summative findings from an evaluation of a TAACCCT grant. Oakland, CA: Mathematica Policy Research.

Pigott, T. (2012). Advances in Meta-Analysis. New York: Springer.

Price, D., Sedlak, W., Roberts, B., & Childress, L. (2016). Making the future: The Wisconsin Strategy Final Evaluation Report. Indianapolis, IN: DVP-Praxis LTD.

Price, D., Childress, L., Sedlak, W., & Roach, R. (2017). Northeast resiliency consortium final evaluation report. Indianapolis, IN: DVP-PRAXIS LTD.

Price, D., Valentine, J., Sedlak, W., & Roberts, B. (2018). Advancing careers and training (ACT) for healthcare final evaluation report. Indianapolis, IN: DVP-PRAXIS LTD.

PTB & Associates. (2016). Evaluation of the Alabama/Florida Technical Employment Network TAACCCT Program. Bethesda, MD: Author.

Ruder, A. What works at scale: A framework for scaling up workforce development programs. Atlanta, GA: Federal Reserve Bank of Atlanta. https://www.frbatlanta.org/community-

Scriven, M. (2001). Evaluation: future tense. American Journal of Evaluation, 22(3), 301–307.

Shain, M., & Grandgenett, N. (2016). Project IMPACT final evaluation report. Omaha, NE: Shain Consulting and University of Nebraska at Omaha.

Styers, M., Haden, C., Cosby, A., & Peery, E. (2018). Southwest Virginia Community College: Final Report. Charleston, VA: Magnolia Consulting, LLC.

Swan, B., Hahs-Vaughn, D., Fidanzi, A., Serpa, A., DeStefano, C., & Clark, M.H. (2017). Florida XCEL-IT: Information technology careers for rural areas final evaluation report. Orlando, FL: Program Evaluation and Education Research Group, University of Central Florida.

Tan, C., & Moore, C. (2017). Developing pathways for careers in health: The Los Angeles competencies to careers consortium. Sacramento, CA: Education Insights Center, Sacramento State University.

Tight, M. (2018). Systematic reviews and meta-analyses of higher education research. European Journal of Higher Education, 9(2), 1–20.

U.S. Department of Labor (2011). Notice of availability of funds and solicitation for grant applications for Trade Adjustment Act Community College and Career Training grants program. Washington, DC: Author. https://doleta.gov/grants/pdf/SGA-DFA-PY-10-03.pdf

U.S. Department of Labor (2012). Notice of availability of funds and solicitation for grant applications for Trade Adjustment Act Community College and Career Training grants program. Washington, DC: Author. https://doleta.gov/grants/pdf/taaccct_sga_dfa_py_11_08.pdf

U.S. Department of Labor (2013). Notice of availability of funds and solicitation for grant applications for Trade Adjustment Act Community College and Career Training grants program. Washington, DC: Author. https://doleta.gov/grants/pdf/taaccct_sga_dfa_py_12_10.pdf

U.S. Department of Labor (2014). Notice of availability of funds and solicitation for grant applications for Trade Adjustment Act Community College and Career Training grants program. Washington, DC: Author. https://doleta.gov/grants/pdf/SGA-DFA-PY-10-03.pdf

U.S. Department of Labor. (2016). Trade Adjustment Assistance Community College and Career Training Grant Program: Fiscal Years 2014–2016 report to the Committee on Finance of the Senate and Committee on Ways and Means of the House of Representatives. Washington, DC: U.S. Department of Labor.

WorkEd Consulting. (2017). North Dakota advanced manufacturing skills training initiative (NDAMSTI) final evaluation report. Burke, VA: WorkED Consulting.

WorkEd Consulting. (2018). Southern Regional Technical College TAACCCT: Healthcare Career Works! Third-party evaluation report. Burke, VA: Author.

Zandniapour, L., & Deterding, N. M. (2018). Lessons from the Social Innovation Fund: Supporting evaluation to assess program effectiveness and build a body of research evidence. American Journal of Evaluation, 39(1), 27–41.

Appendix: Technical Notation

Most meta-analyses that synthesize research findings on education and workforce interventions have focused on student-level interventions, not public policy and its effects on student outcomes (Tight, 2018). The United States Department of Labor’s (DOL) Trade Adjustment Assistance Community College and Career Training (TAACCCT) grants to community colleges, together with the DOL’s evaluation requirement for TAACCCT grants, thus present a unique opportunity to aggregate empirical findings across grantees to isolate the grant program’s broader effects on key policy-relevant outcomes.

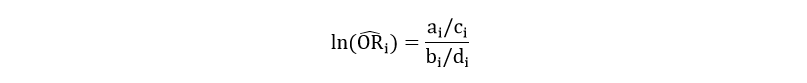

For each effect included in the meta-analysis, a conventional log odds ratio was calculated as:

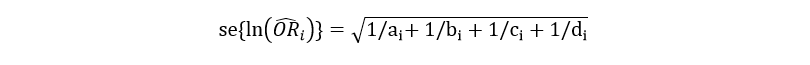

where for effect i from a given evaluation report, a and b are the participant counts of those in the treatment and comparison groups, respectively, achieving the outcome of interest (program completion, post-program employment, etc.); c and d are the participant counts for the treatment and comparison groups not achieving the outcome of interest. Using these counts for effect i, the standard error for each log odds ratio can also be calculated as (Pigott, 2012):
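For reference, the conventional large-sample standard error of a log odds ratio, written with the cell counts defined above (the subscript i is an assumption about the original notation), is:

$$
SE_i = \sqrt{\frac{1}{a_i} + \frac{1}{b_i} + \frac{1}{c_i} + \frac{1}{d_i}}
$$

Under this form, effects estimated from small cells carry larger standard errors and therefore receive less weight in the pooled estimate.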

These odds ratios and standard errors were correspondingly analyzed using a random effects meta-analysis model (following DerSimonian & Laird, 1986). A random effects model was chosen, assuming that this analysis would be capturing a distribution of effects from TAACCCT, not one common or “true” effect (Borenstein et al., 2010).

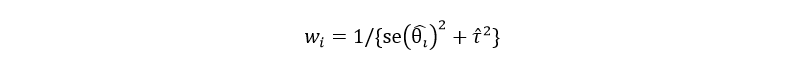

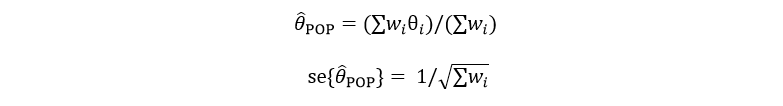

To implement a random effects meta-analysis, the variance τ² of the distribution of treatment effects, assumed to be θᵢ ~ N(θ, τ²), was incorporated into the weight calculated for each study’s effect size:
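A sketch of the standard form, assuming the conventional DerSimonian and Laird (1986) weights in which each weight is the inverse of the sum of the within-study variance and the estimated between-study variance:

$$
w_i^{*} = \frac{1}{SE_i^{2} + \hat{\tau}^{2}}
$$

Here SE_i is the standard error of the log odds ratio for effect i and τ̂² is the estimate of τ². When τ̂² is large relative to the within-study variances, the weights become more nearly equal across studies.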

These weights, in turn, allow for an overall effect size and standard error given as:
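Assuming the usual inverse-variance pooling, with y_i denoting the log odds ratio for effect i and w_i* its random effects weight, the overall effect and its standard error take the form:

$$
\hat{\theta} = \frac{\sum_{i} w_i^{*}\, y_i}{\sum_{i} w_i^{*}},
\qquad
SE(\hat{\theta}) = \sqrt{\frac{1}{\sum_{i} w_i^{*}}}
$$

Exponentiating the pooled log odds ratio returns the estimate to the odds ratio scale used in the forest plots.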

These overall effect sizes and standard errors yield the estimates that are used to generalize about a potential effect of TAACCCT funding on education and employment outcomes of interest.
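The steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the code used for this brief: it assumes the conventional method-of-moments estimator of τ² from DerSimonian and Laird (1986), the function names are hypothetical, and the 2×2 counts are invented for demonstration.

```python
import math


def log_odds_ratio(a, b, c, d):
    """ln(OR) and its standard error from 2x2 counts.

    a, b: treatment / comparison participants achieving the outcome
    c, d: treatment / comparison participants not achieving it
    """
    log_or = math.log((a * d) / (b * c))
    se = math.sqrt(1 / a + 1 / b + 1 / c + 1 / d)
    return log_or, se


def dersimonian_laird(effects, std_errors):
    """Random effects pooled estimate, its standard error, and tau^2."""
    k = len(effects)
    if k < 2:
        raise ValueError("need at least two effects to estimate tau^2")
    w = [1.0 / se ** 2 for se in std_errors]          # fixed-effect weights
    sum_w = sum(w)
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sum_w
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))
    scale = sum_w - sum(wi ** 2 for wi in w) / sum_w
    tau2 = max(0.0, (q - (k - 1)) / scale)            # truncated at zero
    w_star = [1.0 / (se ** 2 + tau2) for se in std_errors]
    pooled = sum(wi * yi for wi, yi in zip(w_star, effects)) / sum(w_star)
    pooled_se = math.sqrt(1.0 / sum(w_star))
    return pooled, pooled_se, tau2


# Hypothetical counts (a, b, c, d) for three evaluation reports.
tables = [(120, 90, 80, 110), (60, 55, 40, 45), (200, 150, 100, 150)]
pairs = [log_odds_ratio(*t) for t in tables]
ys = [p[0] for p in pairs]
ses = [p[1] for p in pairs]
pooled, pooled_se, tau2 = dersimonian_laird(ys, ses)
print(f"pooled OR = {math.exp(pooled):.3f}, z = {pooled / pooled_se:.2f}, tau^2 = {tau2:.4f}")
```

In practice, established routines in Stata, R, or Python packages are preferable to hand-rolled code; the sketch is meant only to make the sequence of calculations concrete.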

In addition to the meta-analysis overall effect, an I² test statistic was calculated to provide a "measure of inconsistency in the studies' results" and capture the percentage of total variation across studies that is due to heterogeneity rather than chance (Higgins & Thompson, 2002). The meta-analysis data collected from TAACCCT evaluation reports contained varying sample sizes, study designs, and outcome measures; the I² statistic is well suited to gauging the heterogeneity that may arise from these factors, since the measure was developed for these common characteristics of meta-analysis (Higgins et al., 2003). This heterogeneity measure is calculated as:
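In the form given by Higgins and Thompson (2002), with negative values truncated at zero:

$$
I^{2} = \max\!\left(0,\ \frac{Q - df}{Q}\right) \times 100\%
$$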

where Q is the conventional Cochran's heterogeneity statistic and df is its degrees of freedom, i.e., the number of effects in the given meta-analysis minus one. Cochran’s heterogeneity statistic is calculated by taking the sum of the squared deviations of each study's estimate from the meta-analytic overall effect and weighting each study's contribution in a manner that mirrors the meta-analysis weights (Cochran, 1954). Negative values for I² are set to zero such that the measure ranges from 0 to 100%.
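A minimal sketch of this calculation follows. It assumes Q is computed with inverse-variance (fixed-effect) weights, which is the conventional choice, and that df equals the number of effects minus one; the function name and the two-effect example are hypothetical.

```python
def cochran_q_and_i2(effects, std_errors):
    """Cochran's Q and the I^2 statistic (as a percentage)."""
    w = [1.0 / se ** 2 for se in std_errors]   # inverse-variance weights
    pooled = sum(wi * yi for wi, yi in zip(w, effects)) / sum(w)
    q = sum(wi * (yi - pooled) ** 2 for wi, yi in zip(w, effects))
    df = len(effects) - 1
    if q <= 0:
        return q, 0.0                          # no observed dispersion
    i2 = max(0.0, (q - df) / q) * 100.0        # negative values set to zero
    return q, i2


# Two hypothetical, sharply divergent effects with equal precision.
q, i2 = cochran_q_and_i2([0.0, 1.0], [0.1, 0.1])
print(f"Q = {q:.1f}, I^2 = {i2:.1f}%")
```

With these illustrative inputs nearly all of the observed variation is attributed to heterogeneity rather than chance, the same pattern seen in the TAACCCT education and employment effects.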

Higgins et al. (2003) note that heterogeneity above 75% is considered high and that only a quarter of all published meta-analyses have I² values above 50%. A low level of heterogeneity indicates an overall effect that reflects results from studies that are largely homogeneous in terms of their effects' direction, magnitude, and significance. A high level of heterogeneity, on the other hand, means that an overall effect may be positive and significant but results from highly inconsistent effects. Higgins et al. (2003) note that generalizing from a meta-analysis largely depends on overall effects that are the result of consistent results.

This brief’s education and workforce meta-analyses exhibit highly heterogeneous overall effects. Such heterogeneity is intuitive, given the diverse effects portrayed in the forest plots. The education meta-analysis (I² = 98.0%, p<0.001), for instance, contains 20 effects that are positive and statistically significant compared to 18 effects that are statistically insignificant (7 negative and 11 positive). This yields an overall effect that is positive, statistically significant, and highly heterogeneous. The effect of TAACCCT on employment has a similar I² (92.4%) that reflects an overall effect that is positive and statistically significant (p<0.001).