Table of Contents

- Foreword

- The Development of Smart Water Markets Using Blockchain Technology (Aditya K. Kaushik)

- Civilian Drones: Privacy Challenges and Potential Resolution (Ananth Padmanabhan)

- The Privacy Negotiators: The Need for U.S. Tech Companies to Mediate Agreements on Government Access to Data in India (Madhulika Srikumar)

- Governing Data: Non-Discrimination and Non-Domination in Decision-Making (Joshua Simons)

- Open Transit Data in India (Richard Abisla)

- Blockchain Regulation in the United States: Evaluating the overall approach to virtual asset regulation (Tanvi Ratna)

- Improving India’s Parliamentary Voting and Recordkeeping (Pranesh Prakash)

- India and the United States: The Time Has Come to Collaborate on Commercial Drones (Sylvia Mishra)

- Civic Futures 2.0: The Gamification of Civic Engagement in Cities (Subhodeep Jash)

- Key Differences Between the U.S. Social Security System and India’s Aadhaar System (Kaliya Young)

Governing Data: Non-Discrimination and Non-Domination in Decision-Making (Joshua Simons)

Joshua Simons is a Sheldon Fellow in Government at Harvard University, and is writing about the politics and ethics of machine learning. His research argues that machine learning is political.

Introduction

This paper is about how modern democracies should govern data-driven decision-making. It examines the goals that democracies might require different institutions to embed in their decision-making procedures, including those that use machine learning. I compare the public philosophies and constitutions of America and India to contrast two such goals: the elimination of discrimination and the elimination of domination, respectively.

The present is a critical moment in the governance of data-driven decision-making. Across the world, the debate about the governance of data and of technology companies is gaining pace.1 In India, the government has begun to develop a ‘data marketplace’ for private sector institutions to stimulate innovation.2 India is also on the cusp of adopting a comprehensive legislative framework to govern data-driven decision-making.3 The draft Data Protection Bill, built on the conceptual foundations of the Srikrishna Report, will be the first significant legislation to draw on the data fiduciary concept developed by American lawyers.4

At such a moment, it is important to pause to ask fundamental questions about what the governance of data should aim to achieve. This paper goes beyond familiar concerns about privacy to compare two ideas that might underpin such a governance framework: discrimination, which I associate with American law and politics; and domination, which I associate with Indian law and politics. The U.S. places on institutions only the negative requirement not to discriminate in their decision-making procedures.5 This limited goal does not address the ways in which, without intent or malpractice, decision-making procedures can compound and entrench patterns of inequality.

India’s approach in politics and law to addressing structural social inequality is founded on the concept of domination. In particular, the ambition to eliminate relations of domination is central to the Constitution of India and the writings and speeches of B.R. Ambedkar. India requires society’s most important institutions to embed in their decision procedures the goal of eliminating persistent structures of power between social groups, a goal that extends beyond the mere pursuit of efficiency and non-discrimination.

Other democracies, including the U.S., can learn much from India’s traditions. The Indian idea of domination treats equity as a policy to be pursued in the name of democracy.6 This recognizes that pervasive and persistent inequalities of power (of resources and income, education and health, social status and respect) ultimately undermine democracy itself. The promise of political equality, the foundation of collective self-government, becomes a mirage. Because domination is a threat to democracy, institutions across the social, economic, and political spheres must embed the goal of eliminating it in their decision-making procedures.

India’s current approach to governing data neglects these ideas. Instead of drawing from India’s own constitutional and philosophical traditions, the draft Data Protection Bill imports several flimsy concepts from American and European privacy law, including from the EU’s General Data Protection Regulation (GDPR). India should instead pursue its own approach, built on the idea of non-domination, rather than the shaky foundations of privacy. The governance of data should aim not just to protect privacy, but to promote equity.

This paper applies these ideas about non-domination to the governance of data-driven decision-making. It explores how the responsibilities of data fiduciaries, the conceptual pillar of India’s recent Data Protection Bill, might be extended to include responsibilities to address structural injustice. This could be done in a context-sensitive way that varies across institutions and sectors.

The great strength of the non-domination principle is that it forces us to ask: Who decides? It focuses on who gets to make choices about the design of data-driven decision procedures that will shape the contours of our societies. As we march rapidly towards an algorithmic world, the question of what principles should underpin the governance of data-driven decision-making will be among the most important political questions of the data-drenched twenty-first century.

The Challenge: Data and Decisions

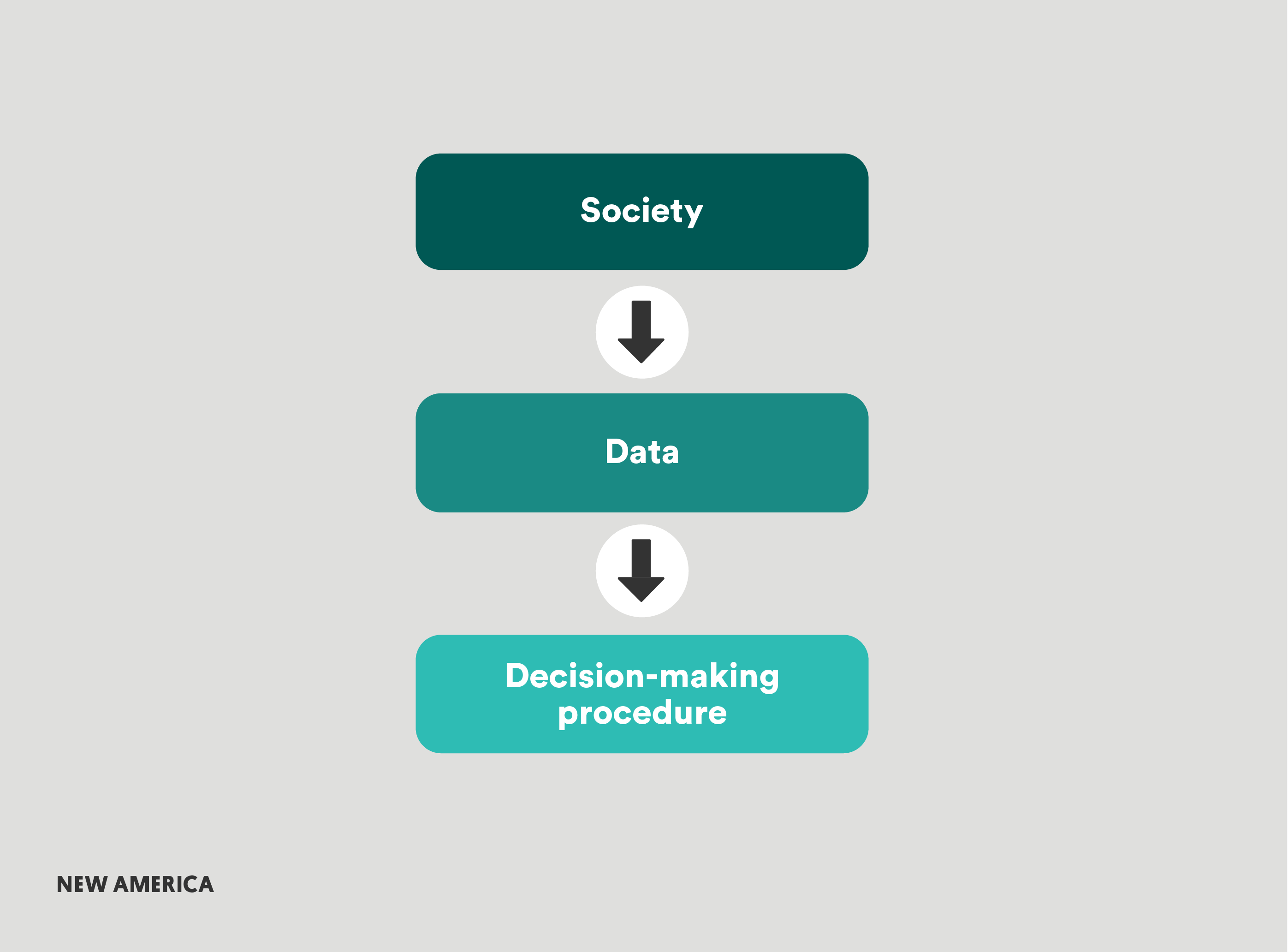

Data-driven decision-making operates at three levels. First, society itself. Societies are characterized by differences and inequalities of all kinds. Some we do not regard as important, such as what brand of raincoats different groups choose to wear, what humor they prefer, or how they dance. Some are the result of persistent structures of power, such as the way that race, gender, caste and class shape educational and employment opportunities, access to healthcare or credit, or the ability to be seen and heard in public with dignity and respect.7

Second, data. Data sets almost always reflect these differences and inequalities. They show us not only which clothes or humor different groups prefer, but also the complex ways in which race, gender, caste, and class condition the opportunities certain groups are afforded. If African Americans or Dalits are routinely excluded from educational opportunities, property in safe and prosperous locations, or valuable jobs and promotions, then a large and complex dataset will reflect how being an African American or Dalit conditions—and therefore correlates with—the opportunities an individual is afforded in life. Data encodes social inequalities and the structures of power that produce them.8
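The point that data correlates with group membership has a practical consequence worth making concrete: a decision rule that never looks at group membership can still reproduce the disparity, because another feature carries the correlation. The Python sketch below is a toy illustration with entirely hypothetical applicants and a hypothetical neighborhood feature; `blind_rule` and `approval_rates` are illustrative names, not drawn from any real system.

```python
from collections import defaultdict

# Hypothetical applicants as (group, neighborhood) pairs. In this made-up data,
# past exclusion has concentrated group B in the "north" neighborhood.
APPLICANTS = [
    ("A", "south"), ("A", "south"), ("A", "south"), ("A", "north"),
    ("B", "north"), ("B", "north"), ("B", "north"), ("B", "south"),
]


def blind_rule(neighborhood: str) -> bool:
    """A 'group-blind' rule: it never looks at group membership."""
    return neighborhood == "south"


def approval_rates(applicants, rule):
    """Share of each group that the rule approves."""
    approved = defaultdict(int)
    total = defaultdict(int)
    for group, neighborhood in applicants:
        total[group] += 1
        approved[group] += rule(neighborhood)  # True counts as 1
    return {group: approved[group] / total[group] for group in total}


print(approval_rates(APPLICANTS, blind_rule))  # {'A': 0.75, 'B': 0.25}
```

Removing the group attribute does not remove the pattern; the neighborhood feature carries it straight into the outcome.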

Third, the decision-making procedure. Data sets can be used to make decisions in a number of ways. These range from simple linear models to sophisticated forms of machine learning. When an institution develops its decision-making procedure, it makes choices about how to structure that procedure. These include choices about the data-driven model itself: which data to train it on, which features to include, what to train it to predict, rank, or classify. These also include choices about how to combine the model with human decision-making, such as what kind of human oversight, if any, to impose.9

There are conflicting views about how data changes decision-making. On the one hand, data offers the opportunity to eliminate intractable human prejudice.10 On the other, data threatens to reinforce existing prejudices and inequalities. Wealthy white men are better represented in data. Using that data to make decisions, without human reflection or intervention, will simply reproduce existing injustices.11

The truth is that we have choices about how we use data to make decisions. Data gives us control over our decision-making procedures, especially those that use machine learning. It enables us to be explicit about the goals we wish to prioritize, the values we wish to promote, and the trade-offs we are willing to make. We can decide to impose a definition of fairness. Or we can decide to ignore issues of justice and equality, and blindly pursue accuracy and efficiency.

Data raises the stakes of these choices about which values to embed in our decision-making procedures. Data-driven decision-making operates on a vast scale. This means that the choices an institution makes as it designs decision-making procedures will, over time, shape the lives of large numbers of people. This is especially true in India, where data-driven programs operate on an enormous scale. Aadhaar, India’s national identification system, is now reported to cover almost 1.2 billion citizens.12

To understand the kinds of choices involved, consider an example. Imagine an online advertising tool that recommends job adverts to individuals. The recommendations reflect the past online behavior of users seeking job postings, in particular, which advertisements they clicked on and applied to. Suppose women on average clicked on lower paid jobs than men; the tool then recommends lower paid jobs to women compared to men. The institution designing the tool has three options, each with quite different technical and legal implications.

First, the institution could establish a decision-making procedure that reflects the world (read: data) as it is. This tool would show women lower-paid jobs because those are the postings women have tended to click on. Second, the institution could impose some fairness metric on its decision-making procedure. For example, it could impose a maximum difference between the average incomes of job postings shown to men and to women within a specified geographic area. Computer scientists have developed a series of sophisticated individual13 and group-based definitions14 of fairness (some of which cannot simultaneously be achieved)15 which could be imposed on the model. Third, the institution could use its decision-making procedure to change the world (read: data). It could show women higher-paid jobs on average, subject to some reasonable constraint.
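To make the three options concrete, here is a minimal Python sketch. The postings, salaries, and click rates are hypothetical, and the names `top_k`, `salary_gap`, `satisfies_max_gap`, and `raise_womens_average` are illustrative assumptions rather than any real system's API. Option 1 ranks postings by each group's past click rates; Option 2 bounds the gap in average salary shown to men and women; Option 3 reuses the same greedy re-ranking with a negative target, so that women see higher-paid jobs on average. The greedy swap ignores relevance entirely, a simplification no real recommender could afford.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Posting:
    title: str
    salary: float  # hypothetical salary, arbitrary units


def top_k(postings, click_scores, k):
    """Option 1: reflect the data by ranking on a group's past click rates."""
    return sorted(postings, key=lambda p: click_scores[p.title], reverse=True)[:k]


def salary_gap(recs_men, recs_women):
    """Average salary shown to men minus average salary shown to women."""
    return mean(p.salary for p in recs_men) - mean(p.salary for p in recs_women)


def satisfies_max_gap(recs_men, recs_women, max_gap):
    """Option 2's fairness metric: bound the absolute gap in average salary."""
    return abs(salary_gap(recs_men, recs_women)) <= max_gap


def raise_womens_average(recs_women, pool, recs_men, target_gap):
    """Greedily swap higher-paid postings into women's list until
    salary_gap <= target_gap. A positive target sketches Option 2;
    a negative target sketches Option 3."""
    recs = list(recs_women)
    candidates = sorted((p for p in pool if p not in recs),
                        key=lambda p: p.salary, reverse=True)
    while salary_gap(recs_men, recs) > target_gap and candidates:
        lowest = min(recs, key=lambda p: p.salary)
        best = candidates.pop(0)
        if best.salary <= lowest.salary:
            break  # no remaining swap can raise the average
        recs[recs.index(lowest)] = best
    return recs


POSTINGS = [Posting("director", 150), Posting("engineer", 120),
            Posting("manager", 110), Posting("analyst", 90),
            Posting("assistant", 50), Posting("clerk", 40), Posting("cashier", 35)]
# Hypothetical click rates standing in for each group's past behavior.
MEN = {"director": 0.4, "engineer": 0.9, "manager": 0.8, "analyst": 0.7,
       "assistant": 0.2, "clerk": 0.1, "cashier": 0.1}
WOMEN = {"director": 0.1, "engineer": 0.3, "manager": 0.2, "analyst": 0.6,
         "assistant": 0.9, "clerk": 0.8, "cashier": 0.5}

men = top_k(POSTINGS, MEN, 3)
women = top_k(POSTINGS, WOMEN, 3)                          # Option 1
option2 = raise_womens_average(women, POSTINGS, men, 25)   # Option 2
option3 = raise_womens_average(women, POSTINGS, men, -5)   # Option 3
print(round(salary_gap(men, women), 1), round(salary_gap(men, option2), 1),
      round(salary_gap(men, option3), 1))  # 46.7 10.0 -13.3
```

With these made-up numbers, reflecting the data leaves a large gap; the Option 2 bound is met after one swap, and the Option 3 target reverses the gap. Options 2 and 3 differ only in the value of one parameter, which illustrates how a value choice can sit inside a technical setting.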

What choice should the institution designing the jobs recommendation tool make? What should our governance framework say about which choice the institution should make? This paper compares two ideas in the U.S. and India about the kinds of burdens that should be placed on institutions as they make these choices: discrimination and domination.16

Governing in America: Non-Discrimination

Over the past five decades, non-discrimination has become one of the most important principles for governing decision-making procedures in the U.S. It is broadly accepted that institutions should ensure their decision-making procedures do not discriminate, including procedures that use data. Let us examine this principle by focusing on one particular law, Title VII of the Civil Rights Act of 1964, which prohibits discrimination against employees and applicants on the basis of race, color, sex, national origin, and religion.17

Recall our tool that recommends job adverts to users. The company that designs it has three possible choices: to reflect the world as it is and show women lower-paid jobs on average, because those are the job postings they tend to click on; to impose some fairness constraint, such as specifying a maximum difference between the average income of job postings shown to men and to women; or to change the world and show women higher-paid jobs on average than men, subject to some reasonable constraint.

U.S. discrimination law would not require this company to pursue either of the final two strategies. To require the company to pursue these would, in effect, require institutions to take affirmative action on behalf of protected groups in their decision procedures. This extension of the non-discrimination principle has never gained broad purchase in the United States.18 To see why, let’s focus in more detail on Title VII. This will clarify the ideas and concepts that actually underpin the non-discrimination duty and, most importantly, their limits.

Two kinds of legal cases can be brought under Title VII, disparate treatment and disparate impact. Disparate treatment concerns direct discrimination in which similar people are formally treated differently, usually when there is demonstrable intent to discriminate. More important for our purposes is disparate impact, which is indirect discrimination that does not concern intent.19

A disparate impact case involves three questions. First, does the policy have a disparate impact on members of a protected class? The plaintiff has to show that the decision-making procedure causes a disparate impact with respect to a protected class. Second, is there a business justification for this disparate impact? This has historically been the most important part of a disparate impact case. The defendant has the opportunity to show that there is a legitimate business case for the procedure and thereby avoid liability. In data-driven decision-making, the justification will usually be that the procedure accurately predicts something useful to the defendant. Third, is there a less discriminatory means of achieving the same ends? This may become the most important component of a Title VII case in relation to data-driven decision-making. The plaintiff can show that there is another decision-making procedure that achieves the same goal but produces less discriminatory outcomes across a protected group.20
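The three questions can be caricatured as a computation over candidate procedures, which shows why the third question matters for data-driven systems: comparing alternative models on accuracy and on group outcomes is routine work for the people who build them. This Python sketch is a stylized illustration, not a statement of the legal test. The thresholds `impact_threshold`, `min_accuracy`, and `tolerance`, the disparity measure, and the two procedures are hypothetical assumptions, not drawn from Title VII doctrine.

```python
from dataclasses import dataclass


@dataclass
class Procedure:
    name: str
    accuracy: float                    # how well it predicts what the defendant values
    selection_rates: dict[str, float]  # share of each group selected


def disparity(proc: Procedure) -> float:
    """Largest gap in selection rates between any two groups (hypothetical measure)."""
    rates = proc.selection_rates.values()
    return max(rates) - min(rates)


def disparate_impact_analysis(challenged, alternatives, impact_threshold,
                              min_accuracy, tolerance):
    # Question 1: does the procedure have a disparate impact?
    if disparity(challenged) <= impact_threshold:
        return "no disparate impact shown"
    # Question 2: is there a business justification (here: useful accuracy)?
    if challenged.accuracy < min_accuracy:
        return "no business justification"
    # Question 3: does an alternative serve the same goal with less disparity?
    for alt in alternatives:
        if (alt.accuracy >= challenged.accuracy - tolerance
                and disparity(alt) < disparity(challenged)):
            return f"less discriminatory alternative: {alt.name}"
    return "justified"


challenged = Procedure("current model", 0.85, {"men": 0.5, "women": 0.3})
alternative = Procedure("reweighted model", 0.84, {"men": 0.45, "women": 0.4})

print(disparate_impact_analysis(challenged, [alternative], 0.1, 0.7, 0.02))
# less discriminatory alternative: reweighted model
```

Without the alternative, the same call returns "justified": an accurate procedure with a disparate impact survives unless someone can point to a comparably accurate, less discriminatory one.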

In most cases, efficient data-driven decision-making procedures will not fall foul of Title VII discrimination law as currently constituted. This is because the principle of non-discrimination on which the law is developed does not capture cases in which data-driven decision-making procedures reinforce existing structures of inequality and disadvantage.21

Consider two goals that have driven the development of U.S. discrimination law, anti-classification and anti-subordination. The anti-classification goal aims to combat unfairness for individuals in certain protected classes that results from the choices of decision-makers. This mostly concerns formal or intentional discrimination. The anti-subordination goal aims to eradicate inequalities based on membership in protected classes; not just procedurally, but substantively. On the anti-subordination view, the purpose of disparate impact law is not only to unearth well-hidden cases of intentional discrimination, it is also to cover cases of unintentional discrimination which entrench existing patterns of inequality.

Legal theorists and courts have reached consensus only on the anti-classification goal. The law is not now thought by most to serve the purpose of addressing the complex structural inequalities to which disadvantaged groups are subject. Without the anti-subordination grounding, U.S. discrimination law has no basis on which to impose on institutions the requirement to address structural injustice in their decision-making procedures.22 “As a society,” the legal scholar Michael Selmi argues, “we have never been committed to eradicating racial or gender inequality beyond immediate issues of intentional discrimination.”23

The problem with the non-discrimination principle is this. It reinforces the idea that beyond efficiency, the sole responsibility of institutions is to pursue neutrality or blindness in the design of their decision procedures. Institutions have no obligation to ensure that their procedures do not entrench existing structures of inequality.24 Non-discrimination constrains attempts to embed equity in the decision-making procedures of important institutions. It does not support the goal of transforming underlying structures of power or confronting persistent patterns of domination. As the feminist scholar Nancy Dowd argues, “discrimination analysis is designed to ensure that no one is denied an equal opportunity within the existing structure; it is not designed to change the structure to the least discriminatory, most opportunity-maximizing pattern.”25

I believe that non-discrimination, and the ideas of neutrality or blindness it entails, obscures the politics of debating and deciding between substantive aims, and the trade-offs required to achieve them. Negative duties of non-discrimination are not a satisfactory basis on which to develop a framework for governing data-driven decision-making.26 We must think explicitly about how we should treat individuals differently on the basis of the disadvantages associated with protected groups. The rest of this paper explores how the Constitution of India and the arguments of B.R. Ambedkar might enable us to reason through that challenge. Together, they offer another idea to underpin the governance of data-driven decision-making: non-domination.

Governing in India: Non-Domination

In 1950, India adopted a constitution that represented a new social and political experiment. On the eve of independence, most of those who became citizens could not read or write. India was a society characterized by profound hierarchies of power and deep differences of language, education, and culture. The constitution aimed to harness the power of the state to transform these entrenched hierarchies. This was to be achieved, in part, by imposing on institutions a wide range of responsibilities to directly address structural social injustices.

India’s constitution directly challenged the liberal idea that underpins the approach to non-discrimination in the United States, “that every attempt to resolve social question of inequality and material destitution by political means would lead to terror and absolutism.”27 As Sunil Khilnani explains, democracy in India promised “to bring the alien and powerful machine like that of the state under the control of human will…to enable a community of political equals before constitutional law to make their own history.”28 The Constitution, as K. G. Kannabiran describes it, particularly the Social and Economic Directives, expressly places “the state under obligation to eliminate inequalities in status, facilities, and opportunities between people.”29

These ideas were in large part B.R. Ambedkar’s.30 Ambedkar’s primary concern was with the relationship between the political sphere, on the one hand, and the social and economic spheres, on the other. His work explored what burdens the pursuit of political equality in a democracy placed on different actors to address manifest social and economic inequalities.

Introducing the Constitution in 1949, Ambedkar issued a warning to India’s Constituent Assembly. He warned his comrades, “not [to] be content with mere political democracy. We must make our political democracy a social democracy as well.” He continued:

On the 26th January 1950, we are going to enter into a life of contradictions. In politics we will have equality and in social and economic life we will have inequality. In politics we will be recognizing the principle of one man one vote and one vote one value. In our social and economic life, we shall, by reason of our social and economic structure, continue to deny the principle of one man one value. How long shall we continue to live this life of contradictions? How long shall we continue to deny equality in our social and economic life? If we continue to deny it for long, we will do so only by putting our political democracy in peril. We must remove this contradiction at the earliest possible moment or else those who suffer from inequality will blow up the structure of political democracy.31

The contradiction between formal political equality and social and economic inequality is, in the end, a threat to democracy. For Ambedkar, if the equal worth of each citizen in politics stands in stark contrast to persistent inequalities in the economic and social spheres, political equality becomes a mere formalism. The idea of democracy becomes a mirage. Since patterns of domination reproduce persistent inequalities, democracy itself depends on the practical and continuous goal of eliminating patterns of domination.32

This prompts a series of important political questions: Who bears which burdens in eliminating domination? How should those burdens be imposed? Ambedkar’s reflections on these questions formed the basis of some of the most ambitious provisions in India’s Constitution. The idea was to embed social transformation into the aims and procedures of society’s most important institutions. Powerful institutions were explicitly required to direct their efforts towards the transformation of society. These were the politics of confronting a long history of injustice.

There are two relevant parts of the constitution. First, in Part III, on Fundamental Rights, Article 14 establishes equality before the law; Article 15 prohibits discrimination on the grounds of religion, race, caste, sex, or place of birth; Article 16 establishes equality of opportunity in public employment; and Article 17 prohibits the practice of untouchability. Second, Part IV, the Directive Principles of State Policy, which are not enforceable in court, establishes “equality as a policy aimed at bringing about…changes in the structure of society.”33

The implications of these provisions for public sector institutions are reasonably clear: their decision-making procedures must contribute to the elimination of patterns of domination. For instance, in 1980, the Supreme Court ruled the policies of reservation and affirmative action of the Indian Railway Board to be consistent with the constitution’s aims and purposes. The Court argued that the State was in fact required to pursue policies of affirmative action, that is, to treat individuals differently based on their membership of groups historically subject to patterns of domination. Issuing the judgment, Justice Krishna Iyer wrote: “the Indian Constitution is a National Charter pregnant with social revolution, not a Legal Parchment barren of militant values, to usher in a democratic, secular, socialist society which belongs equally to the masses hungering for a human deal after feudal colonial history’s long night.”34

The implications for private institutions are less clear. Case law is still developing. Consider the scope of Article 15 (2), which states that no citizen can be restricted on protected grounds from access to “shops, public restaurants, hotels and places of public entertainment, as well as places of public resort dedicated to the use of the general public.” In a 2011 case, the Army College of Medical Sciences (ACMS) in New Delhi had devised its own merit-based admissions procedure. The Court found the procedure to be unconstitutional, reasoning that private sector institutions were subject to the provisions of Article 15. “If a vast majority of our youngsters, especially those belonging to disadvantaged groups, are denied access in the higher educational institutions in the private sector,” the judgment argued, “it would mean that a vast majority of youngsters…belonging to disadvantaged groups would be left without access to higher education at all.”

The Court drew on Ambedkar’s arguments in the Constituent Assembly debates to expand the scope of the word “shop” to include private educational institutions. The ACMS admissions procedure, the Court found, produced disparate impact, reinforcing the disadvantage of students from disadvantaged backgrounds. Since ACMS was subject to the provision of Article 15 (2), and its admissions procedure produced demonstrable disparate impact against disadvantaged students, it was unacceptable.

More interesting was the Court’s interpretation of the word “only” when referring to the prohibited grounds of discrimination. It interpreted the word not merely to prohibit explicit or intentional discrimination on the grounds of some protected trait, as courts tend to in the U.S. Instead, the word meant that “the private establishment” must “ensure that the consequences” of its “rules of access . . . do not contribute to the perpetration of the unwarranted social disadvantages associated with the functioning of the social, cultural and economic order.”35 In other words, India’s Supreme Court has been willing to extend constitutional provisions beyond the requirements of non-discrimination. On this reading, these provisions require private sector institutions to embed the aim of eliminating patterns of domination in their decision procedures.

Non-Domination in Decision-Making

How far this provision will extend remains to be seen, but the underlying idea is clear. Democracy depends on the promise of political equality. This promise is undermined by entrenched patterns of domination. These patterns can be reproduced not only by the state, but by institutions that perform public functions or that distribute important goods, such as employers, landlords, banks, telephone networks, and so on. When these institutions establish their decision-making procedures—hiring and firing, promoting and demoting, setting rent and granting mortgages—they must bear some of the burdens of eliminating patterns of domination.

As Justice Bhagwati, the 17th Chief Justice of India, wrote: “In a hierarchical society with an indelible feudal stamp and incurable actual inequality, it is absurd to suggest that progressive measures to eliminate group disabilities…are antagonistic to equality on the ground that every individual is entitled to equality of opportunity based purely on merit.”36

Let’s explore the implications of the non-domination principle for the governance of data-driven decision-making. Recall the example introduced earlier: the tool that recommends job adverts. I argued that the U.S. non-discrimination principle provides no grounds for placing any responsibility on a public or private institution either to impose some fairness constraint, such as limiting the difference between the average incomes of job postings seen by men and women, or to show women higher-paid jobs on average than men, on the grounds that doing so would pursue some defensible aim of social justice.

The non-domination approach provides grounds for imposing the responsibility to pursue both of these more ambitious aims. It could require public and private institutions to impose some kind of fairness constraint on their data-driven decision-making procedures, or to construct that procedure to specifically eliminate patterns of domination. The Indian non-domination approach provides a conceptual and legal basis for applying many of the technical approaches to fairness developed in the computer science literature.37
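The earlier remark that some fairness definitions cannot be achieved simultaneously can be seen in a toy case. The Python sketch below uses hypothetical data and computes two group-based metrics commonly discussed in the computer science literature: demographic parity (equal rates of positive predictions across groups) and equal opportunity (equal true positive rates among those labeled qualified). The helper names are illustrative assumptions. When the labels themselves differ across groups, as they will if data encodes past inequality, a perfectly accurate predictor satisfies equal opportunity but not demographic parity, so in cases like this an institution must choose which definition to impose.

```python
def rate(values):
    """Share of 1s in a list of 0/1 values."""
    return sum(values) / len(values)


def demographic_parity_gap(preds, groups):
    """Largest difference between groups in the share predicted positive."""
    by_group = {}
    for pred, group in zip(preds, groups):
        by_group.setdefault(group, []).append(pred)
    rates = [rate(v) for v in by_group.values()]
    return max(rates) - min(rates)


def true_positive_rate_gap(preds, labels, groups):
    """Largest difference between groups in the share of labeled-qualified
    people who are predicted positive (equal opportunity)."""
    by_group = {}
    for pred, label, group in zip(preds, labels, groups):
        if label == 1:
            by_group.setdefault(group, []).append(pred)
    rates = [rate(v) for v in by_group.values()]
    return max(rates) - min(rates)


# Hypothetical data: 3 of 4 people in group A are labeled qualified,
# but only 1 of 4 in group B, reflecting unequal past opportunities.
groups = ["A"] * 4 + ["B"] * 4
labels = [1, 1, 1, 0, 1, 0, 0, 0]
preds = list(labels)  # a perfectly accurate predictor

print(true_positive_rate_gap(preds, labels, groups))  # 0.0
print(demographic_parity_gap(preds, groups))          # 0.5
```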

India could develop an approach to governing data built on this non-domination principle. This could be done by broadening the responsibilities of data fiduciaries, established in the draft Data Protection Bill.38 The fiduciary concept rests on the idea of a relationship of trust. Banks are custodians of customers’ money; internet companies are custodians of customers’ data. That relationship of trust entails responsibilities for the fiduciary to act in the best interests of consumers. For instance, processing data is permitted only for purposes that the consumer might “reasonably expect.”39 In a country where citizens expect institutions to bear the responsibility of eliminating patterns of domination,40 the concept of trust might plausibly impose quite significant burdens on data fiduciaries. They might be required to identify and raise important trade-off choices as they design and develop data-driven decision-making procedures, especially those that bear on entrenched social inequalities. Those choices could then be subject to meaningful public oversight, through explicit regulatory provisions, civil society organizations, and public debate.

Incorporating the non-domination principle into these fiduciary requirements of trust may stretch the idea of trust to its limits. This is not because non-domination is too ambitious an aim, but because the governance of data is about more than privacy. If trust cannot incorporate ideas like non-domination, fairness, and justice, the data fiduciary concept may prove limited in a world of algorithmic decision-making and machine learning. Jack Balkin, who coined the information fiduciary concept, has written about this challenge.41

Instead, more comprehensive structural regulation may be required. Such regulation must leave room for flexibility and variation. Eliminating patterns of domination may be part of the pursuit of political equality (essential in any democracy), but considerable variation should be permitted in terms of how this aim is embedded into decision-making procedures. Overly prescriptive and specific regulatory requirements would undermine the institutional innovation that is required to achieve the overall goal.42

At this critical moment in the development of regimes to govern data, we must pause to ask fundamental questions about the ideas and aims that should underpin them. The American approach, guided by the principle of non-discrimination, does not impose on institutions the obligation to transform entrenched social inequalities through their decision-making procedures. In an algorithmic society, in which decisions are ever more often made or informed by algorithmic procedures, there is a risk that these structures of injustice are reproduced on a vast scale that becomes ever harder to reverse: a giant form of path dependency.

The Indian approach, guided by the principle of non-domination, holds that decision-making procedures, in both private and public institutions, are the right place to intervene to address structural injustice. Many difficult questions—about the extent of the responsibilities that different institutions should bear—remain unanswered and profoundly under-theorized.

But what the Indian approach makes clear is that a comprehensive approach to governing data-driven decision-making cannot simply address issues of privacy. Such an approach must confront intractable questions about the burdens of pursuing political equality. After all, as Ambedkar argued, nothing less than the future of democracy is at stake. This has never been truer than in a world in which data-driven decision-making is pervasive.

Conclusion: Who Decides?

I would like to end by drawing attention to perhaps the most significant strength of the Indian approach and the non-domination principle on which it rests. It brings to the surface the politics of decision-making. It makes visible the inevitable trade-offs involved in establishing a decision-making procedure. It therefore forces us to confront the choices we have about the burdens we wish to impose on institutions as they establish those decision-making procedures. It forces us to ask what we wish to achieve and how.

This is an important strength in an algorithmic world. Too often, political choices are buried in the technical details of decision-making procedures. In machine learning, for instance, they are buried in choices about the construction of the dataset, choices about the outcome of interest or target variable, or which variables to include in the model. The risk is that a great many of the political choices necessary in a democracy are buried in the technical decision-making procedures of institutions, beyond the reach of legislative action and political debate. The pursuit of justice is moved slowly but imperceptibly outside the sphere of democratic politics.


Avoiding this outcome will require comprehensive legislation. That legislation must not only set out the responsibilities of different institutions to address structural injustice in their decision-making procedures. It must also take on an even harder task: establishing the right institutional structure to enforce those provisions. There is a difficult balance to be struck. Regulation should not aim to specify precisely who has which burdens in every case; regulation can stifle democracy as well as protect it. Yet without regulation, there can be no democratic oversight at all. This is not a tension specific to algorithmic decision-making, but rather one that characterizes the relationship between democracy and the modern administrative state. But as ever, data and algorithms raise the stakes.43

The great strength of the non-domination principle is that it forces us to ask: Who decides? The risk is that we pretend that nobody decides. In practice, this means that institutions will decide unilaterally, often in secret and without public oversight, which priorities and values to build into their decision-making procedures. In our example of the job recommendation tool, Google and Facebook decide, without accountability, whether they wish to pursue efficiency, fairness, or non-domination. With the right framework, Google and Facebook might still decide first, but their decisions would then be subject to regulatory provisions, and those provisions would, in turn, be subject to the oversight of democratic institutions.

As our societies make more and more important decisions using data, democracies must make choices about how they wish to distribute the burdens of pursuing justice. Ignoring these choices will narrow the possibilities of political action and produce a kind of political atrophy, eroding the capacity of citizens to govern themselves. To inform their thinking as they confront these choices, democracies all over the world should draw on the writings of B.R. Ambedkar and the provisions of the Constitution of India.

The Indian approach, founded on the principle of non-domination, offers a way of governing data-driven decision-making that diverges from the familiar provisions of late twentieth-century Anglo-American liberalism.

Citations

- For instance, in the UK, source; France, source; and Germany, source

- Varun Aggrawal, “Infosys to Set up Data Marketplace for Enterprises – The Hindu BusinessLine,” June 19, 2018, source.

- “The Personal Data Protection Bill” (2018), source; Justice B.N. Srikrishna, “A Free and Fair Digital Economy: Protecting Privacy, Empowering Indians,” (Committee of Experts under the Chairmanship of Justice B.N. Srikrishna, July 2018), source.

- Jack M. Balkin, “Information Fiduciaries and the First Amendment,” U.C. Davis Law Review 49, no. 4 (2016): 1234; Lina Khan and David Pozen, “A Skeptical View of Information Fiduciaries” (SSRN, February 25, 2019), source.

- The European Union’s approach in the GDPR is limited by its focus on privacy, yet the challenges of governing data-driven decision-making go well beyond concerns about privacy. See Talia Gillis and Josh Simons, “Explanation < Justification: GDPR and the Perils of Privacy,” (SSRN, 2019).

- To use the phrase of André Béteille, one of India’s most important twentieth-century social theorists. André Béteille, Anti-Utopia: Essential Writings of André Béteille (New Delhi: Oxford University Press, 2005); André Béteille, Ramachandra Guha, and Jonathan P. Parry, Institutions and Inequalities: Essays in Honour of Andre Beteille (New Delhi: Oxford University Press, 1999).

- Purva Khera, Macroeconomic Impacts of Gender Inequality and Informality in India, IMF Working Paper (Washington, DC: International Monetary Fund, 2016); Carol Vlassoff, Gender Equality and Inequality in Rural India: Blessed with a Son (New York, NY: Palgrave Macmillan, 2013); Stephen M. Caliendo, Inequality in America: Race, Poverty, and Fulfilling Democracy’s Promise, Dilemmas in American Politics (Boulder, CO: Westview Press, 2014); Xavier N. De Souza Briggs, Social Capital and Segregation: Race, Connections, and Inequality in America (Cambridge, MA: John F. Kennedy School of Government, 2002).

- It is important to note that this is not just about unrepresentative data. Unrepresentative data excludes some groups, perhaps because there are not enough data points about that group, or the data points that exist are less numerous or rich. This is an important problem, particularly in India, where the underrepresentation of Untouchables and women in data is systemic. But the problems this paper considers would remain even in the most accurate and careful data sets, which are still a reflection of the structure of our social world, including the inequalities and injustices that characterize it. A representative dataset is not devoid of injustice. This leaves open a host of important issues about when the representation of injustice in a dataset actually constitutes an injustice, an issue I hope to address in future work. Cynthia Dwork and Deirdre Mulligan, “It’s Not Privacy, and It’s Not Fair,” Stanford Law Review 66 (September 2013): 35–40; Kate Crawford, “The Hidden Biases in Big Data,” Harvard Business Review, April 1, 2013, source; David Garcia et al., “Analyzing Gender Inequality through Large-Scale Facebook Advertising Data,” Proceedings of the National Academy of Sciences of the United States of America 115, no. 27 (2018): 6958–63, source.

- Andrew D. Selbst and Solon Barocas, “The Intuitive Appeal of Explainable Machines,” SSRN Electronic Journal, 2018, source.

- Daniel Kahneman, Thinking, Fast and Slow (New York, NY: Farrar, Straus and Giroux, 2011).

- Virginia Eubanks, Automating Inequality: How High-Tech Tools Profile, Police and Punish the Poor (New York, NY: St. Martin’s Press, 2018).

- TOI, “Aadhaar Covers over 89% Population: Alphons,” The Times of India, accessed March 5, 2019, source.

- Cynthia Dwork et al., “Fairness through Awareness,” ITCS ’12 (ACM, 2012), 214–226.

- Sam Corbett-Davies and Sharad Goel, “The Measure and Mismeasure of Fairness: A Critical Review of Fair Machine Learning,” July 31, 2018, source.

- Jon Kleinberg, Sendhil Mullainathan, and Manish Raghavan, “Inherent Trade-Offs in the Fair Determination of Risk Scores,” Proceedings of Innovations in Theoretical Computer Science (ITCS), 2017.

- I am therefore moving beyond privacy as a framework for thinking about the governance of data-driven decision-making. Privacy is one concern we might have about those decision-making procedures, usually called “data processing” in existing legislation in the EU and India. But it is far from the only concern. Other aims and values need to be brought into play. Privacy should not be the sole lens through which to view the governance of data and machine learning. A29WP, “Guidelines on Automated Individual Decision-Making and Profiling” (Article 29 Data Protection Working Party, 2018), source; ICO, “Guide to the General Data Protection Regulation (GDPR)” (U.K.: Information Commissioner’s Office, August 2018), source; Srikrishna, “A Free and Fair Digital Economy: Protecting Privacy, Empowering Indians.”

- Most other important pieces of non-discrimination legislation extend the categories to which duties of non-discrimination apply. These include the Equal Pay Act of 1963, which prohibits the payment of different wages to employees of different sexes who perform equal work under similar conditions; the Americans with Disabilities Act (ADA) of 1990, which prohibits discrimination against individuals with a disability and requires the provision of reasonable accommodation to someone who is legally disabled; and the Genetic Information Nondiscrimination Act of 2008, which protects individuals from genetic discrimination in health insurance and employment.

- For instance, U.S. Executive Orders 10925, 11246, and 11375 require federal contractors who do over $10,000 in Government business in one year to “take affirmative action to ensure that applicants are employed, and that employees are treated during employment, without regard to their race, color, religion, sex or national origin.”

- David Strauss, “Discriminatory Intent and the Taming of Brown,” University of Chicago Law Review 56, no. 3 (1989): 935.

- Jon Kleinberg et al., “Discrimination in the Age of Algorithms,” 2019, source; Solon Barocas and Andrew D. Selbst, “Big Data’s Disparate Impact,” California Law Review 104, no. 3 (June 1, 2016): 671–732.

- There is an important emerging debate about precisely this point, including a paper of my own. For the key protagonists, see Kleinberg et al., “Discrimination in the Age of Algorithms,” 2019; Barocas and Selbst, “Big Data’s Disparate Impact”; Lior Strahilevitz, “Privacy versus Antidiscrimination,” Public Law & Legal Theory Working Paper, no. 174 (2007), source; Pamela L. Perry, “Two Faces of Disparate Impact Discrimination,” Fordham Law Review 59, no. 4 (March 1, 1991): 523–595; Cass Sunstein, “The Anticaste Principle,” Michigan Law Review, January 1, 1993, 2410.

- Barocas and Selbst, “Big Data’s Disparate Impact,” 724.

- Sophia Moreau and Deborah Hellman, Philosophical Foundations of Discrimination Law (Oxford: Oxford University Press, 2013), 259.

- Béteille, Anti-Utopia.

- Cass Sunstein makes a similar point. If there is prejudice and statistical discrimination, and if third parties promote discrimination, there will be decreased investments in human capital. Such decreased investments will be a perfectly reasonable response to the real world. And if there are decreased investments in human capital, then prejudice, statistical discrimination, and third-party effects will also increase. Statistical discrimination will become all the more rational; prejudice will hardly be broken down; consumers and employers will be more likely to be discriminators. Ronnie J. Steinberg, Applications of Feminist Legal Theory to Women’s Lives: Sex, Violence, Work and Reproduction, Women in the Political Economy (Temple University Press, 2012), 560; Sunstein, “The Anticaste Principle,” 2431.

- Dwork et al., “Fairness through Awareness,” 215.

- As Hannah Arendt argued when comparing the American and French revolutions. Rohit De, A People’s Constitution: The Everyday Life of Law in the Indian Republic (Princeton, NJ: Princeton University Press, 2018), 5; Uday S. Mehta, “The Social Question and the Absolutism of Politics,” 2010, source; Hannah Arendt, On Revolution (New York, NY: Viking Press, 1965).

- Sunil Khilnani, The Idea of India (New Delhi: Penguin, 2004).

- Kalpana Kannabirān, Tools of Justice: Non-Discrimination and the Indian Constitution (New York, NY: Routledge, 2012), 7.

- B. R. Ambedkar is a neglected figure in India’s political history. His ideas have received little attention compared with those of more widely known figures such as M. K. Gandhi and Jawaharlal Nehru, in part because of the Congress Party’s dominant role in shaping India’s national story and the contours of democratic politics after independence. See Valerian Rodrigues, “Justice as the Lens: Interrogating Rawls through Sen and Ambedkar,” Indian Journal of Human Development 5, no. 1 (2011): 153–174; Jean Drèze and Amartya Sen, “Democratic Practice and Social Inequality in India,” Journal of Asian and African Studies 37, no. 2 (2002): 6–37; Martha Nussbaum, “India: Implementing Sex Equality through Law,” Chicago Journal of International Law 2, no. 1 (2001): 35–58.

- Kannabirān, Tools of Justice, 162.

- Patterns of domination, which produce these persistent inequalities in the political and economic spheres, have two effects. First, they undermine the liberty of each individual to benefit from an effective and competent use of their powers. For Ambedkar, democracy entailed political equality, and political equality entailed the absence of domination. Second, patterns of domination undermine the possibility of free association. Drawing on John Dewey, Ambedkar argued that free association, the capacity of groups to form links and to govern themselves over time, was essential to democratic life: “Democracy is not merely a form of government. It is primarily a mode of associated living, of conjoint communicated experience. It is essentially an attitude of respect and reverence towards fellow men.” Patterns of domination made impossible the associations and relationships that encouraged and embodied this attitude of respect and reverence. B.R. Ambedkar, Annihilation of Caste: The Annotated Critical Edition, ed. S. Anand (New Delhi: Navayana, 2014), 280, 261.

- Béteille, Guha, and Parry, Institutions and Inequalities, 342.

- V. R. Krishnaiyer, Akhil Bharatiya Soshit Karamchari Sangh and Others vs. Union Of India, No. 185 (Supreme Court of India November 14, 1980).

- J B. Sudershan Reddy, Indian Medical Association vs. Union Of India & Ors, No. 8170 (Supreme Court of India May 12, 2011).

- Deepti Shenoy, “Courting Substantive Equality: Employment Discrimination Law in India,” University of Pennsylvania Journal of International Law 34, no. 3 (2013): 632.

- Jon Kleinberg et al., “Discrimination in the Age of Algorithms,” February 10, 2019, source.

- The Personal Data Protection Bill; Srikrishna, “A Free and Fair Digital Economy: Protecting Privacy, Empowering Indians.”

- Srikrishna, “A Free and Fair Digital Economy: Protecting Privacy, Empowering Indians,” 8.

- De, A People’s Constitution; Béteille, Guha, and Parry, Institutions and Inequalities; Khilnani, The Idea of India.

- This is precisely the challenge that Jack Balkin, who first coined the data fiduciary concept, warned about. Balkin, “Information Fiduciaries and the First Amendment,” 1232–1324.

- As André Béteille argues, institutional well-being is an important consideration. He describes this as equality as a matter of policy, not of right, warning against placing too much faith specifically in the state to eliminate every social inequality, every difference of caste or class. Béteille, Anti-Utopia, 435.

- Joshua A. Kroll et al., “Accountable Algorithms,” University of Pennsylvania Law Review 165, no. 3 (2017): 633–705; Andrew Tutt, “An FDA for Algorithms,” Admin. L. Rev. 83 (2016); Jeremy Waldron, “Accountability: Fundamental to Democracy,” SSRN Scholarly Paper (Rochester, NY: Social Science Research Network, April 1, 2014), source; Craig T. Borowiak, Accountability and Democracy: The Pitfalls and Promise of Popular Control (Oxford University Press, 2011); Adam Przeworski, Susan Carol Stokes, and Bernard Manin, Democracy, Accountability, and Representation (Cambridge: Cambridge University Press, 1999).