Everything in Moderation

Table of Contents

- Introduction

- Legal Frameworks that Govern Online Expression

- How Automated Tools are Used in the Content Moderation Process

- The Limitations of Automated Tools in Content Moderation

- Case Study: Facebook

- Case Study: Reddit

- Case Study: Tumblr

- Promoting Fairness, Accountability, and Transparency Around Automated Content Moderation Practices

Abstract

Internet platforms are increasingly adopting artificial intelligence and machine-learning tools in order to shape the content we see and engage with online. The use of algorithmic decision-making is becoming particularly prevalent in online content moderation, as companies attempt to comply with speech-related legal frameworks while also trying to promote safety, positive user experiences, and free expression on their platforms.

This report is the first in a series of four reports that will explore different issues regarding how automated tools are being used by internet platforms to shape the content we see and influence how this content is delivered to us. The reports will focus on content moderation based on a platform’s content policies, the ranking of content on newsfeeds and in search results, the optimization and targeting of advertisement delivery, and content recommendations to users based on their prior content consumption. These reports will also explore how internet platforms, policymakers, and researchers can better promote fairness, accountability, and transparency around these automated tools and decision-making practices.

Acknowledgments

In addition to the many stakeholders across civil society and industry that have taken the time to talk to us over the years about our work on content moderation and transparency reporting, we would particularly like to thank Nathalie Maréchal from Ranking Digital Rights for her help in drafting this report. We would also like to thank Craig Newmark Philanthropies for its generous support of our work in this area.

Downloads

Introduction

The proliferation of digital platforms that host and enable users to create and share user-generated content has significantly altered how we communicate with one another. In the 20th century, individual communication designed to reach a broad audience was largely expressed through formal media channels, such as newspapers. Content was produced and curated by professional journalists and editors, and dissemination relied on physically transporting physical artifacts like books or newsprint. As a result, communication during this period was expensive, slow, and, with some notable exceptions, easily attributed to an individual speaker. In the twenty-first century, however, thanks to the expansion of the internet and social media, mass communication has become cheaper, faster, and sometimes difficult to trace.1

The widespread adoption and penetration of platforms such as YouTube, Facebook, and Twitter around the globe has significantly lowered the costs and barriers to communicating, thus democratizing speech online. Over the past decade, platforms have thrived off of users creating and exchanging their own content—whether it be family photographs, blog posts, or pieces of artwork—with speed and scale. However, in enabling user content production and dissemination, platforms also opened themselves up to unwanted forms of content, including hate speech, terror propaganda, harassment, and graphic violence. In this way, user-generated content has served as a key driver of growth for these platforms, as well as one of their greatest liabilities.2

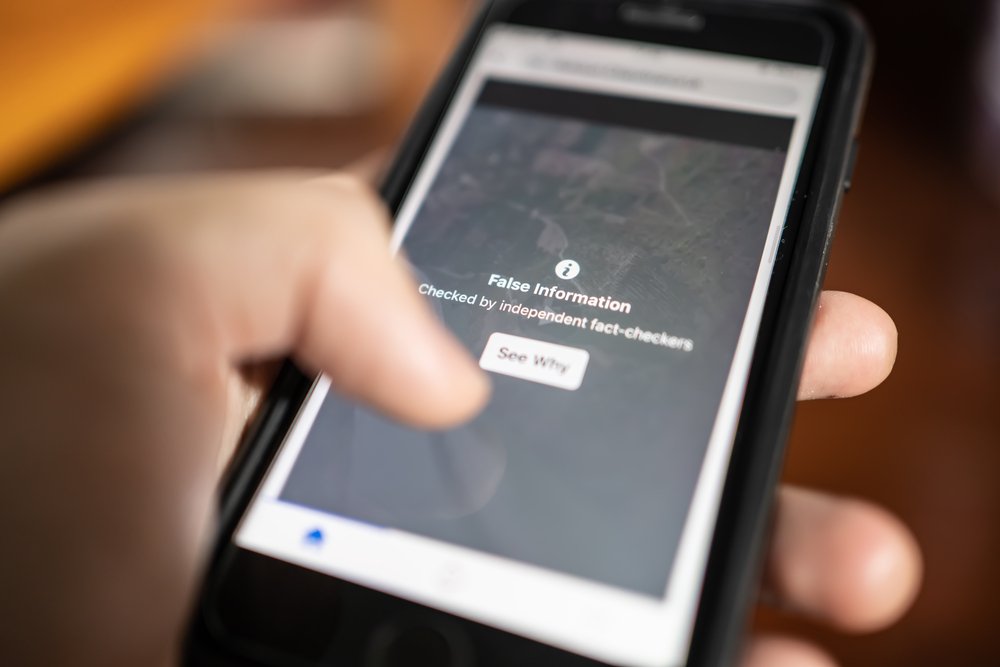

In response to the growing prevalence of objectionable content on their platforms, technology companies have had to create and implement content policies and content moderation processes that aim to remove these forms of content, as well as accounts responsible for sharing this content, from their products and services. This is both because companies need to comply with legal frameworks that prohibit certain forms of content online, and because companies want to promote greater safety and positive user experiences on their services. In addition, in the context of the United States, this is because the First Amendment limits the extent to which the government can set the rules for what type of speech is permissible. Over the last few years, both large and small platforms that host user-generated content have come under increased pressure from governments and the public to remove objectionable content. In response, many companies have developed or adopted automated tools to enhance their content moderation practices, many of which are fueled by artificial intelligence and machine learning. In addition to enabling the moderation of various types of content at scale, these automated tools aim to reduce the involvement of time-consuming human moderation.

“Over the last few years, both large and small platforms that host user-generated content have come under increased pressure from governments and the public to remove objectionable content.”

However, the development and deployment of these automated tools has demonstrated a range of concerning weaknesses, including dataset and creator bias, inaccuracy, an inability to interpret context and understand the nuances of human speech, and a significant lack of transparency and accountability mechanisms around how these algorithmic decision-making procedures impact user expression. As a result, automated tools have the potential to impact human rights on a global scale, and effective safeguards are needed to ensure the protection of human rights.

This report is the first in a series of four reports that will explore how automated tools are being used by major technology companies to shape the content we see and engage with online, and how internet platforms, policymakers, and researchers can promote greater fairness, accountability, and transparency around these algorithmic decision-making practices. This report focuses on automated content moderation policies and practices, and it uses case studies on three platforms—Facebook, Reddit, and Tumblr—to highlight the different ways automated tools can be deployed by technology companies to moderate content and the challenges associated with each of them.

Defining Content Moderation

Content moderation can be defined as the “governance mechanisms that structure participation in a community to facilitate cooperation and prevent abuse.”3 Currently, companies employ a range of approaches to content moderation, and they use a varied set of tools to enforce content policies and remove objectionable content and accounts. There are three primary approaches to content moderation:4

- Manual content moderation: This approach, which typically relies on the hiring, training, and deployment of human moderators to review and make decisions on content cases, can take many forms. Large platforms tend to rely primarily on outsourced contract employees to complete this work. Small- to medium-size platforms tend to employ full-time, in-house moderators or rely on user moderators who volunteer to review content.

- Automated content moderation: This approach involves the use of automated detection, filtering, and moderation tools to flag, separate, and remove particular pieces of content or accounts. Fully automated content detection and moderation practices are not widely used across all categories of objectionable content, as they have been found to lack accuracy and effectiveness for certain types of user speech. However, these tools are widely used for some types of objectionable content, such as child sexual abuse material (CSAM). In the case of CSAM, there is a clear international consensus that the content is illegal, there are clear parameters for what should be flagged and removed based on the law, and models have been trained on enough data to yield high levels of accuracy.

- Hybrid content moderation: This approach incorporates elements of both the manual and automated approaches. Typically, this involves using automated tools to flag and prioritize specific content cases to human reviewers, who then make the final judgment call on the case. This approach is being more widely adopted by both smaller and larger platforms, as it helps reduce the initial workload of human reviewers. Additionally, by letting a human make the final decision on a case, it comparatively limits the negative externalities that come from using automated tools for content moderation (e.g., accidental removal of content due to inaccurate tools or tools that cannot understand the nuances or context of human speech).

In addition, there are two different models of content moderation that are deployed by platforms, often depending on their size and capacity to engage in substantial content moderation practices.5

- Centralized content moderation: This approach often involves a company establishing a broad set of content policies that they apply globally, with exceptions carved out to ensure compliance with laws in different jurisdictions. These content policies are enforced by a large group of moderators who are trained, managed, and directed in a centralized manner. The most common examples of companies who utilize this model are large internet platforms like Facebook and YouTube.

- Decentralized content moderation: This approach often tasks users themselves with enforcing content policies. This can take different forms. In most cases, users are given an overarching set of global policies by a platform, which serve as a guiding framework. These companies also typically employ a small number of full-time content moderation staff to oversee general enforcement. The majority of the moderation, however, occurs in a decentralized manner. For example, on Reddit, user moderators are responsible for removing and regulating content in the same way that a moderator in a centralized model does. In addition, moderators on Reddit also have the power to create additional content guidelines for their particular domains.

Both models offer benefits to platforms. For example, centralized models help platforms promote consistency in how they enforce their content policies, and they provide a clear starting point for creating and enforcing new policies. Decentralized models, on the other hand, enable more localized, culture-specific, and context-specific moderation to take place, fostering a diversity of viewpoints on a platform. Centralized models also create robust checkpoints for evaluating content. However, should this checkpoint be evaded, these platforms have few methods of then removing the evading content. In comparison, decentralized platforms offer multiple levels of content evaluation and review.6

Finally, content moderation can take place at three different stages of the content lifecycle. These often involve competing pressure for promoting safety and security on platforms while also safeguarding free expression.7

- Ex-Ante Content Moderation: Typically, when a user attempts to upload a photograph or video to a website, it is screened before it is published. This moderation is mostly carried out through algorithmic screening and does not involve active human decision-makers. This form of content moderation is most commonly used to screen for CSAM or copyright-infringing material using tools such as PhotoDNA and ContentID. In these cases, there is typically no competing pressure between promoting safety and security and safeguarding free expression, as these clearly illegal forms of content do not have recognized free expression rights.

- Ex-Post Proactive Content Moderation: As platforms have come under increased pressure to identify and remove objectionable forms of content such as terror propaganda, they have begun using automated tools to proactively search for and remove content and accounts in these domains.

- Ex-Post Reactive Content Moderation: This form of content moderation takes place after a post has been published on a platform and subsequently flagged or reported for review by a user or entity such as an Internet Referral Unit or Trusted Flagger.8 On most platforms, content that has been flagged is typically processed and triaged by an automated system that then relays relevant content to human moderators for review.

Citations

- Kyle Langvardt, “Regulating Online Content Moderation,” The Georgetown Law Journal 106, no. 1353 (2018): source.

- Sarah T. Roberts, “Digital Detritus: ‘Error’ and the Logic of Opacity in Social Media Content Moderation,” First Monday 23, no. 3 (March 5, 2018): source.

- James Grimmelmann, “The Virtues of Moderation,” Yale Journal of Law and Technology 17, no. 1 (2015): source.

- Grimmelmann, “The Virtues of Moderation”.

- Grimmelmann, “The Virtues of Moderation”.

- Grimmelmann, “The Virtues of Moderation”.

- Kate Klonick, “The New Governors: The People, Rules, and Processes Governing Online Speech,” Harvard Law Review 131, no. 1598 (April 10, 2018): source. This report incorporates Klonick’s framework for the different stages of content moderation. However, the framework has been adapted to emphasize the role of algorithmic tools and manual content moderation processes during the ex-post proactive and ex-post reactive content moderation stages.

- Internet Referral Units are government-established entities responsible for flagging content to internet platforms that violates the platform’s Terms of Service. Trusted Flaggers are individuals, NGOs, government agencies, and other entities that have demonstrated accuracy and reliability in flagging content that violates a platform’s Terms of Service. As a result, they often receive special flagging tools such as the ability to bulk flag content.

Legal Frameworks that Govern Online Expression

In order to effectively assess how content moderation practices and automated tools are shaping online speech, it is important to also understand the legal frameworks—both domestic and international—that underpin contemporary notions of freedom of expression online. Internet platforms such as Facebook, YouTube, and Twitter are popular not only in the United States, but across the globe. According to January 2019 statistics, 85 percent of Facebook’s daily active users are based outside the United States and Canada,1 and 80 percent of YouTube users2 and 79 percent of Twitter accounts3 are based outside the United States, with many of them residing in emerging markets such as India, Brazil, and Indonesia. However, despite the fact that the majority of these platforms’ users reside outside the United States, these companies are headquartered in the United States and are therefore primarily bound by U.S. laws. Under U.S. law, there are two principal legal frameworks that shape how we view freedom of expression online: The First Amendment to the U.S. Constitution and Section 230 of the Communications Decency Act.

In the United States, the First Amendment establishes the right to free speech for individuals and prevents the government from infringing on this right. Internet platforms, however, are not similarly bound by the First Amendment. As a result, they are able to establish their own content policies and codes of conduct that often restrict speech that could not be prohibited by the government under the First Amendment. For example, Facebook, and most recently Tumblr, prohibit the dissemination of adult content and graphic nudity on their platforms. Under the First Amendment, however, such speech prohibitions by the government would be unconstitutional. Section 230 of the Communications Decency Act is a statute that establishes intermediary liability protections related to user content in the United States.4 Under Section 230, web hosts, social media networks, website operators, and other intermediaries are, for the most part, shielded from being held liable for the content of their users’ speech. In addition, companies are able to moderate content on their platforms without being held liable. Such protections have enabled user-generated content-based platforms to grow and thrive without fear of being held liable for the content of their users’ posts. However, in 2018, an amended version of the Allow States and Victims to Fight Online Sex Trafficking Act of 2017 (also known as FOSTA) was passed into law. FOSTA amended Section 230 of the Communications Decency Act so that online platforms could be held liable for unlawfully promoting and facilitating “prostitution and websites that facilitate traffickers in advertising the sale of unlawful sex acts with sex trafficking victims.”5 Although intended to address real harms, the law was not well-crafted to address the harms of sex trafficking, and instead it has undermined one of the foundational frameworks that created the internet as we know it. It opened up new discussions on whether further exemptions to intermediary liability protections should be proposed. In addition, FOSTA was criticized for silencing user discussions on controversial topics such as sex work, as well as for making the lives of such sex workers more dangerous, as they were forced off of online platforms and back onto the streets to solicit clients.6

Most recently, conservative politicians in the United States have begun claiming that major internet platforms are demonstrating political bias against conservatives in their content moderation practices. As a result, in June 2019 Senator Josh Hawley (R-Mo.) introduced the “Ending Support for Internet Censorship Act,” which aims to amend Section 230 so that larger internet platforms may only receive liability protections if they are able to demonstrate to the Federal Trade Commission that they are “politically neutral” platforms. The Act raises First Amendment concerns, as it tasks the government to compel and regulate what platforms can and cannot remove from their websites and requires platforms to meet a broad, undefined definition of “politically neutral.”7

On an international level, there are two primary documents that provide protections for freedom of expression. The first is Article 19 of the Universal Declaration of Human Rights (UDHR), and the second is Article 19 of the International Covenant on Civil and Political Rights (ICCPR). Both of these documents recognize that free speech and free expression are fundamental human rights, and both prohibit efforts to unjustly clamp down on them. However, freedom of expression is not an absolute right under human rights law and can be subject to necessary and proportionate limitations.8

Up until now, internet platforms in the U.S. have engaged in voluntary content moderation and self-regulation. However, a wave of terror attacks facilitated through online platforms and foreign interference in the 2016 U.S. presidential election have sparked concerns about the use of these platforms to spread terror propaganda and political disinformation.9 As a result, platforms have come under increased pressure to identify and moderate these forms of objectionable content.

This pressure has manifested into legislation around the world. In 2016, Germany introduced the Netzwerkdurchsetzungsgesetz—also known as the Network Enforcement Act or the NetzDG—which requires platforms to delete hate speech, terror propaganda, and other designated forms of illegal and objectionable content within 24 hours of it being flagged to the platform—or risk substantial fines.10

In addition, in April 2019, the European Commission approved a proposal for similar regulation that would require internet platforms to remove terrorism-related content that had been flagged to them within an hour or face fines amounting to billions of dollars.11 There has been a string of similar legislative proposals and laws emerging in countries around the world, including India, Singapore, and Kenya. These laws aim at tackling particular categories of objectionable content, such as hate speech or fake news, and attempt to impose criminal penalties on individuals or platforms for posting and sharing such content. Most recently, in April 2019, the government of the United Kingdom released a white paper focused on combating online harms, which proposes multiple requirements for internet companies to ensure they keep their platforms safe and can be held responsible for the content on their platforms, as well as the decisions of the company. The white paper proposes a framework to be enforced by a new regulatory body, under which companies and executives who breach the proposed “statutory duty of care” could be charged with hefty fines.12 Many of these forms of regulation place undue pressure on companies to remove content quickly or face liability, thereby creating strong incentives for them to err on the side of broad censorship. Mandating that companies remove content along arbitrary timeframes is particularly concerning because it exacerbates this pressure. In order to comply, companies have invested more in automated tools to flag and remove such objectionable content. However, the mandatory timelines set forth by many of these regulations establish a content moderation environment that prioritizes speed over accuracy. In response, many companies are rapidly developing and implementing automated tools that take down a wide range of content quickly, often with little transparency to the public. This has resulted in overbroad content takedowns and increased threats to user expression online.

“Many of these forms of regulation place undue pressure on companies to remove content quickly or face liability, thereby creating strong incentives for them to err on the side of broad censorship.”

For example, shortly after the NetzDG came into effect in Germany, two senior members of the far-right Alternative for Germany (AfD) party who had tweeted anti-Muslim and anti-immigrant content had their tweets flagged and removed for containing hate speech. However, a series of tweets from the satirical magazine Titanic, which caricatured the initial tweets and which were not hate speech in themselves, were also removed,13 demonstrating how such regulation pushes companies to engage in overbroad takedowns of content in order to avoid fines. This case, which was one of the first to occur after the NetzDG was introduced, also demonstrated how automated tools lack a nuanced and contextualized understanding of human speech, as they were unable to distinguish between hate speech and satire.

Citations

- Salman Aslam, "Facebook by the Numbers: Stats, Demographics & Fun Facts," Omnicore Agency, last modified January 6, 2019, source.

- Salman Aslam, "YouTube by the Numbers: Stats, Demographics & Fun Facts," Omnicore Agency, last modified January 6, 2019, source.

- Salman Aslam, "Twitter by the Numbers: Stats, Demographics & Fun Facts," Omnicore Agency, last modified January 6, 2019, source.

- Mark MacCarthy, "It's Time to Think Seriously About Regulating Platform Content Moderation Practices," CIO, February 14, 2019, source.

- Allow States and Victims to Fight Online Sex Trafficking Act of 2017, H.R. 1865, 115th Cong. (2018)

- New America's Open Technology Institute, "OTI Disappointed in the House-Passed FOSTA-SESTA Bill," news release, February 27, 2018, source.

- New America's Open Technology Institute, "Bill Purporting to End Internet Censorship Would Actually Threaten Free Expression Online," news release, June 20, 2019, source.

- Filippo A. Raso et al., Artificial Intelligence & Human Rights: Opportunities & Risks, September 25, 2018, source.

- MacCarthy, "It's Time to Think Seriously About Regulating Platform Content Moderation Practices".

- Center for Democracy & Technology, "Overview of the NetzDG Network Enforcement Law," Center for Democracy & Technology, last modified July 17, 2017, source.

- Zak Doffman, "EU Approves Billions In Fines For Google And Facebook If Terrorist Content Not Removed," Forbes, April 18, 2019, source.

- Department of Digital, Culture, Media & Sport, Online Harms White Paper, April 2019, source.

- Linda Kinstler, "Germany's Attempt to Fix Facebook Is Backfiring," The Atlantic, May 18, 2018, source.

How Automated Tools are Used in the Content Moderation Process

Automated tools are used to curate, organize, filter, and classify the information we see online. They are therefore pivotal in shaping not only the content we engage with, but also the experience each individual user has on a given platform. There are a host of automated tools, many fuelled by artificial intelligence and machine learning, that can be deployed during the content moderation process. These tools can be deployed across a range of categories of content and media formats, as well as at different stages of the content lifecycle, to identify, sort, and remove content. This section aims to provide an overview of some of the most widely used automated tools and methods in this field, as well as their strengths and limitations.

Digital Hash Technology

Digital hash technology works by converting images and videos from an existing database into a grayscale format. It then overlays them onto a grid and assigns each square a numerical value. The designation of a numerical value converts the square into a hash, or digital signature, which remains tied to the image or video and can be used to identify other iterations of the content either during ex-ante moderation or ex-post proactive moderation.1

Digital hash technology has thus far been widely adopted by internet platforms to identify CSAM and copyright-infringing material. The CSAM detection technology, known as PhotoDNA, was originally developed by Microsoft and has expanded to become a powerful tool used by companies such as Twitter, Google, Facebook, law enforcement, and organizations such as the National Center for Missing & Exploited Children. PhotoDNA generates digital hashes from a database of thousands of existing illegal CSAM images and can detect hashes across a broad spectrum in microseconds. In response to growing concerns around copyright-infringement from its users, YouTube adapted PhotoDNA technology to create the ContentID technology. ContentID enables YouTube users to create digital hashes for their video content to help protect against copyright violations. Once these hashes have been created, all content that is subsequently uploaded to the YouTube platform is screened against its database of audio and video files in order to identify potential copyright violations.2 Both of these tools are particularly resilient against manipulation, including resizing, color alterations, and watermarking.3

The databases of signatures that these algorithms are trained on are continuously updated. There are over 720,000 known instances of CSAM,4 and once new images or videos are identified, they are added to the database. Similarly, when copyright holders flag infringing content to YouTube, this content is added to the ContentID database so that future screenings of content will incorporate these materials.5 The expansion of these databases, as well as the continuous evaluation of and updates to these software programs, aim to improve the effectiveness of these tools. This is one particular area where machine learning is being deployed to utilize past learnings in order to inform future predictions and behaviors.6 Further, although it is much harder now to circumvent image hashes, it is still possible to circumvent audio and video hashing by, for example, altering the length or encoding format of the file, as this would require a new hash of the file to be generated.7 Most recently, PhotoDNA technology has been adapted in order to detect and remove extremist content and terror propaganda-related images, video, and audio online. Similar to PhotoDNA, this tool, known as eGLYPH, is capable of detecting and removing content on a platform that has a corresponding hash, and is also able to prevent the upload of such content ex-ante.8 However, the application of this automated technology to content moderation decision-making around extremist content has raised a significant number of concerns, as the definition of what is extremist content, and what should therefore be included in hash databases, is vague and largely platform-dependent. In addition, most platforms focus their content moderation efforts on certain extremist groups, such as the Islamic State and al-Qaeda. As a result, these automated tools demonstrate a bias in terms of which groups they are trained to focus on, and demonstrate less reliability when addressing the larger corpus of extremist groups and movements that may use online services. Furthermore, moderating extremist content often requires a nuanced understanding of varied regions and cultures and an appreciation for the context in which an image is posted, something automated tools do not have. For example, while platforms will want to take down terrorist propaganda that glorifies acts of gruesome violence, it is important to permit journalists and human rights organizations to raise awareness about terrorist atrocities. As a result, automated moderation of this content has resulted in overbroad takedowns and infringements on user expression.

There is also a significant lack of transparency and accountability around how digital hash technology is being deployed to identify and moderate extremist content. For example, in June 2017, Facebook, Microsoft, Twitter, and YouTube formed the Global Internet Forum to Counter Terrorism (GIFCT) in order to curb the spread of extremist content online. One of the main efforts of the GIFCT was the creation of a shared industry hash database that contains over 40,000 image and video hashes that can aid company efforts to moderate extremist content. However, despite the fact that the database has been used by companies for over two years, there has been little transparency around what specific groups this database focuses on, how content added to the database is vetted and verified as extremist content, and how much content and how many accounts have been correctly and erroneously removed across participating platforms as a result of the database.9 As a result, it is difficult to assess the effectiveness and accuracy of such tools.

Image Recognition

Digital hash technologies utilize image recognition. However, image recognition can also be used more broadly during the content moderation process. For example, during ex-post proactive moderation, image recognition tools can identify specific objects within an image, such as a weapon, and decide based on factors including user experience and risk whether the image should be flagged to a human for review. Automated image recognition tools are currently employed by several internet platforms, as they help filter through and prioritize cases for human moderators, thus saving time.10 Although the algorithms that power image recognition tools are continuously reinforced when information regarding the ultimate decision a human moderator made is fed back into it, this feedback loop does not provide detailed information on why the moderator made this decision. As a result, these algorithms are unable to develop into more dynamic tools that could incorporate nuanced and contextual insights into their detection procedures, such as whether content with a weapon in it is actually violent, or—for example—satirical in nature.11 In addition, the accuracy of these models depends on the quality of the datasets they are trained on. If these models are trained on datasets that focus on specific types of weapons, they would reflect this bias and would not be able to accurately identify all potential instances of violent content containing weapons on a platform. There is also a lack of transparency around how these image recognition databases are compiled, what types of content they focus on, how effective and accurate they are across different categories of content, and how much user expression has been accurately and erroneously removed as a result of these tools.

Metadata Filtering

Most digital files contain information that provides descriptive characteristics about their content. This is known as a file’s metadata. For example, an audio file that is a song could be labeled with information such as the song’s title or the length. Metadata filtering tools can be used during ex-ante and ex-post proactive moderation to search a series of files in order to identify content that fits a particular set of metadata parameters. Metadata filtering tools are particularly used to identify copyright-infringing materials. However, because a file’s metadata label can be easily manipulated or mislabeled, the effectiveness and accuracy of metadata filtering tools is limited, and these tools can be easily gamed.12

Natural Language Processing (NLP)

NLP is a set of techniques that use computers to parse text. In the context of content detection and moderation, text is typically parsed in order to make predictions about the meaning of the text, such as what sentiments it indicates.13 Currently, a wide-range of NLP tools can be purchased off the shelf and are applicable in a range of use cases, including spam detection, content filtering, and translation services. In the context of content moderation, NLP classifiers are particularly being used to detect hate speech and extremist content and to perform sentiment analysis on content.14 As outlined by researchers from the Center for Democracy & Technology, NLP classifiers are generally trained on text examples, known as documents, that have been annotated by humans in order to indicate whether they belong to a particular category or not (e.g., extremist content vs. not extremist content). When a model is provided a collection of documents, known as a corpus, it works to identify patterns and features associated with each annotated category. These corpora are pre-processed so that they numerically represent particular characteristics in the text, such as the absence of a specific word. The annotated and pre-processed text documents are used to train machine learning models to classify new documents, and the classifier is tested on a separate sample of the training data in order to determine how much the model’s classifications matched those of the human coders.15

Although internet platforms are increasingly exploring and adopting the use of NLP classifiers, these technologies are limited for a number of reasons. First, NLP technologies are domain-specific, which means that they can only focus on one particular type of objectionable content. In addition, because there is significant variation in how speech is expressed, these categories are very narrow. For example, to maximize accuracy, these models are trained to detect and flag one specific type of hate speech.16 This means that a classifier trained to detect hate speech could only be trained to focus on a particular sub-domain of hate speech, such as anti-Semitic speech. If this classifier was trained on datasets that also included some examples of other forms of hate speech, it would still only be able to be applied with relative accuracy to anti-Semitic speech. In addition, finding and compiling comprehensive enough datasets to train NLP classifiers is a challenging, expensive, and tedious process. As a result, many researchers have resorted to filtering through content using search terms or hashtags that focus on subtypes of a particular domain of speech, such as hate speech directed at a certain religious group. However, this creates and operationalizes dataset and creator bias, which can disproportionately emphasize certain types of hate speech. This re-emphasizes that such tools cannot be widely applied to multiple forms of hate speech.17

Furthermore, in order for NLP classifiers to operate accurately, they need to be provided with clear and consistent parameters and definitions of speech. Depending on the type of speech, this can be challenging. For example, definitions around extremist content and disinformation are vague, and they are often unable to capture the full breadth, context, and nuances of such activity. On the other hand, tools that are developed based on definitions that are overly narrow may fail to detect some speech and may be easier to bypass.18

“On the other hand, tools that are developed based on definitions that are overly narrow may fail to detect some speech and may be easier to bypass.”

In addition, NLP classifiers are limited in that they are unable to comprehend the nuances and contextual elements of human speech. For example, this could include whether a word is being used in a literal or satirical context, or whether a derogatory term is being used in slang form. This therefore decreases the accuracy or these classifiers, particularly when they are applied across platforms, content formats, languages, and contexts.19

Finally, there is also a lack of transparency around how corpora are compiled, what manual filtering processes—such as hashtag filtering—creators undergo to create these datasets, how accurate these tools are, and how much user expression these NLP tools remove both correctly and incorrectly.

Citations

- Klonick, "The New Governors: The People, Rules, and Processes Governing Online Speech".

- Klonick, "The New Governors: The People, Rules, and Processes Governing Online Speech".

- Kalev Leetaru, "The Problem With AI-Powered Content Moderation Is Incentives Not Technology," Forbes, March 19, 2019, source.

- Klonick, "The New Governors.”

- Klonick, "The New Governors.”

- Klonick, "The New Governors.”

- Evan Engstrom and Nick Feamster, The Limits of Filtering: A Look at the Functionality & Shortcomings of Content Detection Tools, March 2017, source.

- Counter Extremism Project, "How CEP's eGLYPH Technology Works," Counter Extremism Project, last modified December 8, 2016, source.

- Some platforms such as Facebook, YouTube, and Twitter do provide limited disclosures on how much extremist content or accounts they remove in their transparency reports. Facebook also reports on how much extremist content the platform erroneously removed and restored. However, it is unclear what proportion of these removals were due to the use of the shared hash database.

- Accenture, Content Moderation: The Future is Bionic, 2017, source.

- Accenture, Content Moderation: The Future is Bionic.

- Engstrom and Feamster, The Limits of Filtering: A Look at the Functionality & Shortcomings of Content Detection Tools

- Natasha Duarte, Emma Llansó, and Anna Loup, Mixed Messages? The Limits of Automated Social Media Content Analysis, November 28, 2017, source.

- Duarte, Llansó, and Loup, Mixed Messages?

- Duarte, Llansó, and Loup, Mixed Messages?

- Raso, Filippo and Hilligoss, Hannah and Krishnamurthy, Vivek and Bavitz, Christopher and Kim, Levin Yerin, Artificial Intelligence & Human Rights: Opportunities & Risks (September 25, 2018). Berkman Klein Center Research Publication No. 2018-6. Available at SSRN: source or source

- Duarte, Llansó, and Loup, Mixed Messages?

- Duarte, Llansó, and Loup, Mixed Messages?

- Raso, Filippo and Hilligoss, Hannah and Krishnamurthy, Vivek and Bavitz, Christopher and Kim, Levin Yerin, Artificial Intelligence & Human Rights: Opportunities & Risk

The Limitations of Automated Tools in Content Moderation

As highlighted in the previous section, automated tools used for content moderation are limited in a number of ways. Given that these tools are increasingly being adopted by internet platforms, it is important to understand how they shape the content we engage with and see online, as well as user expression more broadly. This section provides a more detailed discussion of the primary limitations of these automated tools.

Accuracy and Reliability

The accuracy of a given tool in detecting and removing content online is highly dependent on the type of content it is trained to tackle. Developers have been able to train and operate tools that focus on certain types of content—such as CSAM and copyright-infringing content—so that they have a low enough error rate and can be widely adopted by small and large platforms. This is because these categories of content have large corpora with which tools can be trained and clear parameters around what falls in these categories. However, in the case of content such as extremist content and hate speech, there are a range of nuanced variations in speech related to different groups and regions, and the context of this content can be critical in understanding whether or not it should be removed. As a result, developing comprehensive datasets for these categories of content is challenging, and developing and operationalizing a tool that can be reliably applied across different groups, regions, and sub-types of speech is also extremely difficult. In addition, the definition of what types of speech fall under these categories is much less clear.1 Although smaller platforms may rely on off-the-shelf automated tools, the reliability of these tools to identify content across a range of platforms is limited. In comparison, proprietary tools developed by larger platforms are often comparatively more accurate, as they are trained on datasets reflective of the types of content and speech they are meant to evaluate.2

“Although smaller platforms may rely on off-the-shelf automated tools, the reliability of these tools to identify content across a range of platforms is limited.”

Additionally, the definition of what constitutes accuracy varies based on the objectives of a researcher and a given model. In most NLP studies, accuracy can be defined as the degree to which a model could make the same decisions as a human being. However, because human beings come with their own set of biases and opinions that influence how they would categorize speech, this definition of accuracy is perhaps not the most reliable metric for evaluating automated tools in the content moderation space. Other factors, such as the ratio and number of false positives and false negatives, should also be considered. However, researchers and developers should recognize that these statistics represent more than just quantitative metrics. They also represent real impacts on user expression, and should therefore be weighted accordingly. 3

Contextual Understanding of Human Speech

In theory, automated content moderation tools should be easy to create and implement, as they are far more rule-bound than human beings. However, because human speech is not objective and the process of content moderation is inherently subjective, these tools are limited in that they are unable to comprehend the nuances and contextual variations present in human speech.4 As discussed above, these tools are limited in their ability to parse and understand variances in language and behavior that may result from different demographic and regional factors. For example, excessively liking someone’s pictures or using certain slang words may be construed as harassment on one platform or in one region of the world. However, these behaviors and speech may take on an entirely different meaning on another platform or in another community.5 In addition, automated tools are also limited in their ability to derive contextual insights from content. For example, an image recognition tool could identify an instance of nudity, such as a breast, in a piece of content. However, it is unlikely to be able to determine whether the post depicts pornography or perhaps breastfeeding, which is permitted on many platforms.6 In addition, automated content moderation tools can become outdated rapidly. On Twitter, members of the LGBTQ+ community found that there was a significant lack of search results that incorporated hashtags such as #gay and #bisexual, raising concerns of censorship. The company stated that this was due to the deployment of an outdated algorithm that mistakenly identified posts with these hashtags as potentially offensive. This demonstrates the need to continuously update algorithmic tools, as well as the need for decision-making processes to incorporate context in judging whether posts with such hashtags are objectionable or not.7 These automated tools also need to be updated as language and meaning evolves. For example, in an attempt to avoid moderation, some hateful groups have adopted new methods of slang and representations for indicating hate. One example of this is white supremacists using the names of companies, such as “Google” and “Yahoo” to replace ethnic slurs. In order to keep up, automated tools would have to adapt quickly and be trained across a wide range of domains. However, users could continue developing new forms of speech in response, thus limiting the ability of these tools to act with significant speed and scale.8 On some platforms when humans moderators engage in content moderation, they are able to combat the rapidly changing nature of speech by viewing additional information on the case, such as information on the user who is accused of violating the platform’s rules. However, incorporating such assumptions and processes into an automated tool runs the risk of enhancing biases around particular groups of individuals and could result in skewed or even discriminatory enforcement of content policies.9

As of now, AI researchers have been unable to construct comprehensive enough datasets that can account for the vast fluidity and variances in human language and expression. As a result, these automated tools cannot be reliably deployed across different cultures and contexts, as they are unable to effectively account for the various political, cultural, economic, social, and power dynamics that shape how individuals express themselves and engage with one another.

Creator and Dataset Bias

One of the key concerns around algorithmic decision-making across a range of industries is the presence of bias in automated tools. Decisions based on automated tools, including in the content moderation space, run the risk of further marginalizing and censoring groups that already face disproportionate prejudice and discrimination online and offline.10 As outlined in a report by the Center for Democracy & Technology, there are many types of biases that can be amplified through the use of these tools. NLP tools, for example, are typically used to parse text in English. Tools that have a lower accuracy when parsing non-English text can therefore result in harmful outcomes for non-English speakers, especially when applied to languages that are not very prominent on the internet, as this reduces the comprehensiveness of any corpora that models are trained on. Given that a large number of the users of major internet platforms reside outside English-speaking countries, this is highly concerning. The use of such automated tools in decision-making should therefore be limited when making globally relevant content moderation decisions.11 These tools are also unable to effectively process differences in dialect and language use that may result from demographic differences.12

In addition, the personal and cultural biases of researchers are likely to find their way into training datasets. For example, when a corpus is being created, the personal judgments of the individuals annotating each document can impact what is constituted as hate speech, as well as what specific types of speech, demographic groups, and so on are prioritized in the training data. This bias can be mitigated to some extent by testing for intercoder reliability, but it is unlikely to combat the majority view on what falls into a particular category.13

Transparency and Accountability

One of the primary concerns around the deployment of automated solutions in the content moderation space is the fundamental lack of transparency that exists around algorithmic decision-making as a whole. These algorithms are often referred to as “black boxes,” because there is little insight into how they are coded, what datasets they are trained on, how they identify correlations and make decisions, and how reliable and accurate they are. Indeed, with black box machine learning systems, researchers are not able to identify how the algorithm makes the correlations it identifies. Currently, some internet platforms provide limited disclosures around the extent to which automated tools are used to detect and remove content on their platforms. In its Community Guidelines enforcement report, for example, YouTube discloses how many of the videos and comments it removed were originally detected using automated flagging tools, as well as what percentage of these videos were removed before they were viewed or after they were viewed.14Although many companies have been pushed to provide more transparency around their own proprietary automated tools, they have refrained from doing so, claiming that the tools are protected as trade secrets in order to maintain their competitive edge in the market—and also to prevent bad actors from learning enough to game their systems.15 In addition, some researchers have suggested that, in this regard, transparency does not necessarily generate accountability. In the broader content moderation space, it is gradually becoming a best practice for technology companies to issue transparency reports that highlight the scope and volume of content moderation requests they received, as well as the amount of content they proactively removed as a result of their own efforts. In this case, transparency around these practices can generate accountability around how these platforms are managing user expression.

However, in the case of algorithmic decision-making, researchers such as Maayan Perel and Niva Elkin-Koren have suggested that looking “under the hood” of black boxes would yield a large volume of incomprehensible data that is a combination of inputs and outputs and that would require significant data analysis in order to extract insights. Although processing this data is not impossible, it would not generally provide any transparency around how the actual decision-making occurred, as well as how a company is ensuring tools are being used fairly. In addition, unlike humans, algorithms lack “critical reflection.”16 As a result, other ways for companies to provide transparency in a manner that generates accountability are also being explored.17 One example of such a mechanism is providing greater transparency into the training data, as this can help researchers understand decisions being made by black-box algorithmic models to a certain extent.

“Unlike humans, algorithms lack critical reflection.”

Two mechanisms for providing accountability around content takedown decisions that are gradually being adopted are notice and appeals. Internet platforms have begun providing notices to users who have had their content removed or accounts suspended or deleted for violating content guidelines. In addition, some platforms have introduced appeals processes so that users can seek review of content or account-related decisions. However, these mechanisms have not yet been perfected. Although users may receive notifications that their content has been removed or their account has been suspended or deleted, these notices often lack meaningful explanations on which specific content guidelines the user violated. In addition, on some platforms, appeals processes do not enable users to provide more context or an explanation around the content or account in question, and appeals are often not available for all categories of content that are removed. Furthermore, the appeals process can often be a lengthy procedure that leaves a user without access to their account for a significant period of time. Although these mechanisms for generating accountability around content takedown practices are not perfect, they are gradually being adopted by a range of internet platforms.18

Citations

- Raso, Filippo and Hilligoss, Hannah and Krishnamurthy, Vivek and Bavitz, Christopher and Kim, Levin Yerin, Artificial Intelligence & Human Rights: Opportunities & Risk

- Duarte, Llansó, and Loup, Mixed Messages?

- Duarte, Llansó, and Loup, Mixed Messages?

- Grimmelmann, "The Virtues of Moderation".

- Robyn Caplan, Content or Context Moderation: Artisanal, Community-Reliant, and Industrial Approaches, November 14, 2018, source.

- James Vincent, "AI Won't Relieve the Misery of Facebook's Human Moderators," The Verge, February 27, 2019, source.

- Hillary K. Grigonis, "Social (Net)Work: What can A.I. Catch — and Where Does It Fail Miserably?," Digital Trends, February 3, 2018, source.

- Duarte, Llansó, and Loup, Mixed Messages?

- Duarte, Llansó, and Loup, Mixed Messages?

- Duarte, Llansó, and Loup, Mixed Messages?

- Duarte, Llansó, and Loup, Mixed Messages?

- Duarte, Llansó, and Loup, Mixed Messages?

- Duarte, Llansó, and Loup, Mixed Messages?

- YouTube, YouTube Community Guidelines Enforcement Report, 2019, source.

- Langvardt, "Regulating Online Content Moderation”.

- Maayan Perel and Niva Elkin-Koren, "Black Box Tinkering: Beyond Disclosure in Algorithmic Enforcement," Florida Law Review69, no. 181 (2017): source.

- Perel and Elkin-Koren, "Black Box Tinkering: Beyond Disclosure in Algorithmic Enforcement".

- Gennie Gebhart, Who Has Your Back? Censorship Edition 2019, June 12, 2019, source.

Case Study: Facebook

Out of the three platforms covered by case studies in this report, Facebook has by far the largest content moderation operation. In addition, it is the platform that has come under the most scrutiny for its content moderation decision-making practices, both human and automated. One of the reasons for this is that Facebook is one of the largest social media platforms in the world. It ranks third in global internet engagement after YouTube and Google.com,1 and the platform has over 2.38 billion monthly active users worldwide.2 As a result, Facebook’s content moderation practices affect a significant amount of user expression across the globe. Facebook utilizes both a centralized and hybrid approach to content moderation. In order to provide consistency in how its rules are applied across the world, the company has a global set of Community Standards. These Community Standards are enforced by Facebook’s enormous global pool of human content moderators, who are part of the 30,000 people who work on safety and security for the platform.3 In an attempt to ensure that its policies can be localized and enforced appropriately in different regions and across different contexts, Facebook tries to hire moderators based on their language or regional expertise. All reviewers receive the same general training on the company’s Community Standards and how to enforce them. Some of these moderators later develop specialties with certain sensitive content areas such as self-harm.4 Facebook has stated that its rules are structured to reduce bias and subjectivity so that reviewers can make consistent judgements on each case.5 In response to growing global pressure from governments and the public to take down violating content quickly, Facebook has invested heavily in automated tools for content moderation. These include image recognition and matching tools to identify and remove objectionable content such as terror-related content; NLP and language matching tools that seek to recognize and learn from patterns in text related to topics such as propaganda and harm; and pattern identification tools, which seek to identify patterns of similar objectionable content on multiple Facebook pages or patterns among individuals who post similar types of objectionable content. The platform has found that pattern detection is most effective for images, such as resized terror propaganda images, rather than text, as text can be more easily manipulated in order to evade detection and removal—and because text requires greater contextual understanding to evaluate.6

As part of its hybrid approach to content moderation, Facebook engages in several phases of algorithmic and human review in order to identify, assess, and take action against content that potentially violates its Community Standards. Automated tools are typically the first layer of review when identifying violating content on the platform. Depending on the level of complexity and the degree of additional judgment needed, the content may then be relayed to human moderators.7

Facebook deploys automated tools during the ex-ante stage of content moderation. When a user submits content to Facebook, such as a photograph or video, it is immediately screened in an automated process. As described in the section on how automated tools are used in the content moderation process above, this algorithmic screening uses digital hashes to proactively identify and block content that matches existing hash databases for content such as CSAM and terrorism-related imagery.8 Facebook also uses proactive match and action tools to detect and remove content that matches some previously identified spam violations. However, it does not screen new posts against every single previously identified spam violation, as this would result in a significant delay between when the user posts the content and when it appears on the website. Rather, this proactive screening process focuses on identifying CSAM and terrorism-related imagery.9 Once content has been posted to Facebook, the company engages in ex-post proactive moderation as it employs a different set of algorithms to screen and identify objectionable content. These algorithms assess content to identify similarities to specific patterns found in, for example, images, words, and behaviors that are commonly associated with different types of objectionable content. In its latest report, the Facebook Data Transparency Advisory Group (DTAG), an independent advisory board chartered by Facebook and composed of seven experts from various disciplines, has stated that this process is challenging and limited in that additional context is often required in order to evaluate whether the presence of a certain indicator, such as a specific word, is being used in a violating manner. The algorithms involved in this process also consider other factors related to the post, such as the identity of the poster, the content of the comments, likes, and shares, as well as what is depicted in the rest of an image or video if the content is visual in nature. These elements add context and are used to calculate the likelihood that a piece of content violates the platform’s Community Standards. According to the DTAG report, this list of classifiers is continuously updated and algorithms are retrained to incorporate insights that are acquired as more violating content is identified or is missed. The DTAG report also asserts that if these algorithms determine that the content in question clearly violates a Community Standard, it may remove it automatically without relaying it to a human moderator. However, the report notes that, in cases where the algorithm is uncertain on whether a piece of content violates the platform’s rules, the content is sent to a human moderator for review.10 The report does not clarify the circumstances in which the company believes an algorithm can make such a definitive determination.Automated tools are also used to triage and prioritize content that is flagged by users during the ex-post reactive portion of content moderation. When a user flags content on the platform, it goes through an automated system that decides how the content should be reviewed. According to the DTAG report, if the system identifies that the content violates the Community Standards, it may be automatically removed. However, as with ex-post proactive moderation, if the algorithm is unsure, the content will be routed to a human moderator.11 If a user flags content before the company is able to identify it, this flag also informs the platform’s machine learning models.12 In order to audit the accuracy of automated decision-making in content moderation, Facebook calculates two primary metrics: precision and recall. Precision measures the percentage of posts that were correctly labeled as violations out of all the posts that were labeled as violations. Recall measures the percentage of posts that were correctly labeled as violations out of all the posts that were actually violations. Facebook calculates these two metrics separately for each classifier in each algorithm.13 However, the DTAG reported that they were unable to acquire details on topics such as specific classifiers, the accuracy of Facebook’s enforcement system, and error and reversal rates, thus limiting the amount of insight the group had into the platform’s algorithmic decision-making processes for content moderation.

Facebook does provide some degree of transparency around how it uses automated tools to proactively identify and remove content and how much user speech this has impacted, in its Community Standards Enforcement Report (CSER). In the CSER, Facebook reports on how much of the objectionable content they removed was identified proactively using their automated tools (“proactivity rate”) in comparison to objectionable content that users reported to Facebook first. Facebook provides this data for nine content categories, including adult nudity and sexual activity, hate speech, terrorist propaganda (focused on ISIS, al-Qaeda, and affiliated groups), and violence and graphic content. Facebook does not provide this data, however, for all of the categories of content that the company has deemed impermissible, and that Facebook moderates, under its Community Standards. These categories include suicide and self-injury.14 The proactivity rate that Facebook discloses in its CSER can change due to a number of factors, including the fact that Facebook is continuously updating and refining its algorithmic models, as well as the fact that the degree to which content is deemed “likely” to violate the Community Standards varies over time.15 Although the platform has invested significantly in artificial intelligence and machine learning, its algorithmic decision-making capabilities in terms of content moderation are still limited. For example, despite the fact that Facebook has technology that can detect images, audio, and text that potentially violate the company’s Community Standards on the livestream feature, the Christchurch terrorist was still able to livestream his attack in New Zealand.16 In addition, the company has faced significant criticism when its automated tools have resulted in the erroneous takedown of user expression. This has included content posted by human rights activists seeking to document atrocities in Syria, which was mislabeled and removed for violating Facebook’s policies on graphic violence.17

Facebook’s centralized and hybrid approach to content moderation enables the company to deploy a range of tools to moderate content at scale and around the world. However, as demonstrated, the effectiveness of automated tools when identifying and moderating content is limited. As a result, although the platform is investing heavily in new artificial intelligence-driven content moderation tools, it is vital that the company continues adopting a hybrid model of content moderation so that a human moderator is always in the loop to ensure decisions are fair and context-specific. In addition, given that the company is a gatekeeper of a significant amount of user expression, it needs to provide greater transparency and accountability around how it deploys automated tools for content moderation practices and how much user expression this impacts. Although the platform already issues a CSER, it discloses limited information around the role and impact of automated tools in enforcing community standards. This information should be expanded, and the platform should disclose information about how its algorithms are created, trained, tested, and improved.

“It is vital that the company continues adopting a hybrid model of content moderation so that a human moderator is always in the loop to ensure decisions are fair and context-specific.”

Currently, Facebook provides relatively detailed notices to users when their content is removed, offers an appeals process to users who have had certain categories of content removed, and reports on the number of content actions that were appealed and the amount of content restored as a result of appeals in its CSER. However, these processes can be improved in order to provide transparency and accountability around their use of automated tools.18 For example, in its notice to users, Facebook should specify whether the content removed was flagged and detected by an automated tool, an entity such as an Internet Referral Unit, or a user. In addition, the platform should enable users to provide more context and information during the appeals process, particularly in cases where the content was erroneously flagged or removed by an automated tool.19 The platform should also work to expand its appeals process to cover the range of objectionable content prohibited by its Community Standards.

Citations

- Alexa’s global internet engagement metric is based on the global internet traffic and engagement a platform receives over the past 90 days.

- Dan Noyes, "The Top 20 Valuable Facebook Statistics – Updated July 2019," Zephoria Digital Marketing, last modified July 2019, source.

- Casey Newton, "Bodies in Seats," The Verge, June 19, 2019, source.

- Alexis C. Madrigal, "Inside Facebook's Fast-Growing Content-Moderation Effort," The Atlantic, February 7, 2018, source.

- Madrigal, "Inside Facebook's Fast-Growing Content-Moderation Effort".

- Under the Hood Session

- Under the Hood Session

- Klonick, "The New Governors: The People, Rules, and Processes Governing Online Speech".

- Ben Bradford et al., Report Of The Facebook Data Transparency Advisory Group, April 2019, source.

- Bradford et al., Report Of The Facebook Data Transparency Advisory Group.

- Bradford et al., Report Of The Facebook Data Transparency Advisory Group.

- Under the Hood Session

- Bradford et al., Report Of The Facebook Data Transparency Advisory Group.

- Facebook, Community Standards Enforcement Report, 2019, source.

- Bradford et al., Report Of The Facebook Data Transparency Advisory Group.

- Joseph Cox, "Machine Learning Identifies Weapons in the Christchurch Attack Video. We Know, We Tried It," Motherboard, April 17, 2019, source.

- Avi Asher-Schapiro, "YouTube and Facebook Are Removing Evidence of Atrocities, Jeopardizing Cases Against War Criminals," The Intercept, last modified November 2, 2017, source.

- Spandana Singh, Assessing YouTube, Facebook and Twitter's Content Takedown Policies: How Internet Platforms Have Adopted the 2018 Santa Clara Principles, May 7, 2019, source

- The Santa Clara Principles On Transparency and Accountability in Content Moderation," Santa Clara Principles, last modified May 7, 2018, source.

Case Study: Reddit

Reddit is a social news aggregation and discussion website based in the United States. The platform has approximately 330 million monthly active users,1 is ranked 16th for global internet engagement,2 and has been labeled “the biggest little site no one’s ever heard of.”3 The platform enables users, who operate under pseudonyms, to create subpages, called subreddits, on specific interests or topics. In this way, the platform has become popular among particular interest or activity-focused communities, such as gamers and sports fans. The home page of Reddit, as well as each individual subreddit, uses a user-driven voting system that determines the ranking of content posted on each given page.4

Reddit utilizes a primarily decentralized and hybrid approach to content moderation. The company has a set of overarching content policies regarding acceptable content that are high-level and prohibit illegal content such as CSAM, as well as objectionable behaviors such as harassment and content that encourages or incites violence.5 In order to broadly enforce these content policies, Reddit has a small, centralized team of moderators (known to users as administrators or admins), who comprise approximately 10 percent of Reddit’s 400-person workforce.6 However, the majority of content moderation on the platform is carried out by the moderators of individual subreddits, who are known as mods. Mods are users who volunteer to moderate content on a particular subreddit. They have significant editorial discretion and can choose to remove content that violates Reddit’s rules or that they deem objectionable or off-topic. They can also temporarily mute or ban users from their subreddit. Mods are also empowered to create additional content policies that define acceptable content and use for their subreddits as long as they do not conflict with Reddit’s global set of content policies. All of the mods on a subreddit can also collectively create guidelines that outline their own responsibilities and codes of conduct. Mods may also have additional roles, such as fostering discussions, depending on the subreddit.7 Admins rarely intervene in content moderation decisions unless it is to remove objectionable content that is illegal or clearly prohibited by Reddit’s content policies,8 or to ban users from the site as a whole.9 According to researchers from Microsoft, there are approximately 91,563 unique mods on the platform, with an average of five mods per subreddit.10

By employing a decentralized approach to content moderation, Reddit is able to save time and resources by relying on its users to aid with content moderation. This approach keeps users engaged and serves the overall business aims of the company. In addition, it positions the company as a promoter of diverse viewpoints, since each individual subreddit has its own content policies that are tailored to the needs of each specific subreddit community.11 This decentralized approach to content moderation has also resulted in users self-policing to ensure they do not violate specific content policies and has fostered an environment in which users call one another out for violating policies or posting objectionable content.12 Further, this decentralized model enables localized and context-specific moderation decisions as mods set and enforce content guidelines that are appropriate to the particular nuances, norms, and variations attributed to different discussion topics.

“By employing a decentralized approach to content moderation, Reddit is able to save time and resources by relying on its users to aid with content moderation.”

In addition to employing a small number of human moderators to engage in ex-post reactive content moderation, Reddit admins also employ some automated tools in order to identify and remove objectionable content such as CSAM. However, because the majority of content moderation is carried out by users, Reddit has also developed an automated tool, known as the AutoModerator, that mods can use to moderate content on their subreddits at scale. The AutoModerator is a built-in, customizable bot that provides basic algorithmic tools to proactively identify, filter, and remove objectionable content during the ex-ante moderation stage. The bot operates based on mod-chosen parameters such as keywords, content that has a high number of reports, website links, and specific users, etc. that are not permitted in a particular subreddit. The AutoModerator can automatically remove this objectionable content, but mods also have the opportunity to review this removed content later and can reverse any erroneous removals. In addition to using the AutoModerator, many Reddit mods have turned to creating their own bots or tools, or using free versions available online, in order to flag custom words and enhance their moderation practices.13 The decentralized approach to content moderation empowers users to manage their own speech and helps democratize expression and enable localized and diverse viewpoints, as well as context-specific content moderation practices. However, it does raise a number of questions regarding accuracy and reliability, bias, and transparency and accountability. There is little insight into how accurate the AutoModerator is across different subreddits and categories of content or violations. In addition, because mods create the content policies for subreddits and define the parameters that the AutoModerator operates on, the deployment of automated tools for content moderation will undoubtedly reflect the personal biases of the mods. There is little transparency around this process, and because Reddit operates in a decentralized manner, there is a lack of a clear accountability mechanism.

In its transparency report, Reddit discloses the amount of content removed by mods—including through the use of the AutoModerator—and the amount of content removed by admins.14 However, this is the only metric that touches on the scope and volume of content moderation carried out by mods. The remainder of the metrics covered in the report, such as the number of potential content policy violations received, what percent of these reports were actionable, what content policies these actionable reports covered, and how many appeal requests were received and granted, are all admin-focused. Therefore, although the majority of content takedowns on Reddit involved removals by mods, most metrics in the report do not cover mod activities.15

The decentralized nature of Reddit’s content moderation approach therefore prevents further transparency around the activities of mods, who are responsible for moderating the most content, and how they are deploying algorithmic tools to manage and moderate user expression. Going forward, Reddit should consider requiring mods to monitor and track the amount of content they remove, both manually and using the AutoModerator, so the platform can disclose this information in its transparency report. In addition, Reddit offers notice to users who have had their content removed or accounts suspended. It also offers an appeals process to users who feel their content or accounts have been erroneously impacted by content moderation activities. However, it is unclear whether mods offer notices or a similar appeals processes to users who have been impacted by mods’ moderation processes. The AutoModerator, does, in fact, provide notice to users when it removes content from subreddits, suggesting that, when automated tools are deployed by mods to moderate content, users are notified of the resulting impact.16

Citations

- Lauren Feiner, "Reddit Users Are The Least Valuable Of Any Social Network," CNBC, February 11, 2019, source.

- Alexa, "reddit.com Competitive Analysis, Marketing Mix and Traffic," Alexa, source.

- Colm Gorey, "How Reddit's Dublin Office Plans to Tackle Evil On The 'Front Page Of The Internet,'" Silicon Republic, May 13, 2019, source.

- Grimmelmann, "The Virtues of Moderation".

- Caplan, Content or Context Moderation.

- Caplan, Content or Context Moderation.

- Christine Kim, "Ethereum's Reddit Moderators Resign Amid Controversy," Coindesk, May 12, 2019, source .

- Grimmelmann, "The Virtues of Moderation".

- Benjamin Plackett, "Unpaid and Abused: Moderators Speak Out Against Reddit," Engadget, August 31, 2018, source.

- Caplan, Content or Context Moderation.

- Caplan, Content or Context Moderation.

- Gorey, "How Reddit's Dublin Office Plans to Tackle Evil On The 'Front Page Of The Internet".

- Joseph Seering et al., "Moderator Engagement and Community Development in the Age of Algorithms," New Media & Society21, no. 7 (January 11, 2019): source.

- This reporting excludes spam-related removals

- Reddit, Transparency Report 2018, source.

- Eshwar Chandrasekharan et al., "The Internet's Hidden Rules: An Empirical Study of Reddit Norm Violations at Micro, Meso, and Macro Scales," Proceedings of the ACM on Human Computer Interaction, 2nd ser., November 2018, source.

Case Study: Tumblr

Tumblr is a microblogging and social media website currently owned by Verizon Media. The platform ranks 78th for global internet engagement,1 and as of April 2017, it had 738 million unique visitors worldwide,2 with 2019 statistics citing that the number of blog accounts on the platform had grown to 463.5 million.3 The company utilizes a centralized, hybrid approach to content moderation, although it is unclear how many human moderators the company employs and to what extent the platform uses automated tools to moderate content. Although Tumblr is not one of the largest or most widely used internet platforms, it is an interesting one to consider when assessing how algorithmic decision-making is deployed for content moderation purposes because the platform recently amended its Community Guidelines to ban adult content and nudity. Before this policy change, the platform was considered a haven for graphic forms of expression on the internet. However, as of December 2018, Tumblr’s rules were updated to state that pornography and adult content were no longer permitted on the platform.4 In its announcement to users, Tumblr outlined that any content that violated this new policy would be flagged using a “mix of machine-learning classification and human moderation.” The use of automated tools to flag potentially violating content in this case makes sense, as the platform had millions of posts and blogs featuring adult content, and the scope and scale of removing such content could not be achieved by human moderators alone.5 However, identifying and removing such content also requires context. For example, although an algorithm could be trained to identify all images with female breasts, they are unlikely to be able to distinguish whether these images are graphic in nature or whether they are discussing or depicting mastectomies, gender confirmation surgeries, or breastfeeding. As a result, it is vital that a human moderator always remains in the loop during the content moderation process.

Prior to this announcement, Tumblr only filtered out adult content through its “Safe Mode” feature that allows users to select which content they will see. Shortly after Tumblr was acquired by Verizon Media in June 2017, it introduced this opt-in feature in order to let users filter “sensitive” content from their own dashboard and search results. However, the feature had flaws. Users quickly found that it filtered out non-adult content, including LGBTQ+ posts. It is unclear whether the company is deploying the same artificial intelligence technology used for Safe Mode to implement this new platform-wide ban on adult content, but WIRED reported that the company would be using modified proprietary technology. The company also announced it would be hiring more human moderators.6