Table of Contents

- Executive Summary

- Introduction

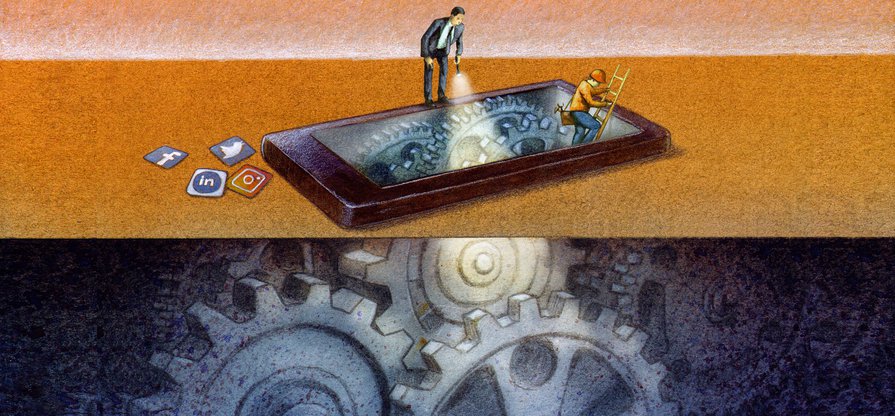

- A Tale of Two Algorithms

- Russian Interference, Radicalization, and Dishonest Ads: What Makes Them So Powerful?

- Algorithmic Transparency: Peeking Into the Black Box

- Who Gets Targeted—Or Excluded—By Ad Systems?

- When Ad Targeting Meets the 2020 Election

- Regulatory Challenges: A Free Speech Problem—and a Tech Problem

- So What Should Companies Do?

- Key Transparency Recommendations for Content Shaping and Moderation

- Conclusion

Conclusion

Companies have long accepted the need to moderate content, and to engage with policymakers and civil society about their content moderation practices, as a cost of doing business. It may be in their short-term commercial interest to keep the public debate at the level of content, without questioning the core assumptions of the surveillance-based, targeted advertising business model: scale, collection and monetization of user information, and the use of opaque algorithmic systems to shape users’ experiences. But this focus may backfire if Section 230 is abolished or drastically changed. Furthermore, U.S. business leaders, investors, and consumers increasingly expect the American economy to serve all parts of society, not just Big Tech’s shareholders. [1] Companies that want to be considered responsible actors in society must make credible efforts to understand and mitigate the harms caused by their business models. This applies particularly to companies whose platforms have the power to shape public discourse and thereby our democracy.

Reliance on revenue from targeted advertising incentivizes companies to design platforms that are addictive, that manufacture virality, and that maximize the information that the company can collect about its users. [2] Policymakers and the American public are starting to understand this, but have not taken this insight to its logical conclusion: the business model needs to be regulated.

Instead, as privacy bills languish in Congress, calls to reform Section 230 put the focus on interventions at the content level. Such reforms risk endangering free speech by incentivizing companies to remove much more user content than they currently do. They may not even address lawmakers’ concerns, because much of the speech in question, such as hate speech, is protected by the First Amendment.

We have to pursue a different path, one that allows us to preserve freedom of expression and hold internet platforms accountable. Policymakers and activists alike must shift their focus to the power that troubling content can attain when it is plugged into the algorithmic and ad-targeting systems of companies like Google, Facebook, and Twitter. This is where regulatory efforts could truly shift our trajectory.

We will never be able to eliminate all violent extremism or disinformation online any more than we can eliminate all crime or national security threats in a city or country—at least not without sacrificing core American values like free speech, due process, and the rule of law. But we can drastically reduce the power of such content—its capacity to throw an election or bring about other kinds of real-life harm—if we focus on regulating companies’ underlying data-driven (and money-making) technological systems and on good corporate governance. Our next report will do just that.

Citations

- Business Roundtable. 2019. “Business Roundtable Redefines the Purpose of a Corporation to Promote ‘An Economy That Serves All Americans.’”; Behar, Andrew. 2019. “CEOs of World’s Largest Corporations: Shareholder Profit No Longer Sole Objective.” As You Sow.

- Gary, Jeff, and Ashkan Soltani. 2019. “First Things First: Online Advertising Practices and Their Effects on Platform Speech.” Knight First Amendment Institute.