Table of Contents

- Executive Summary

- Introduction

- A Tale of Two Algorithms

- Russian Interference, Radicalization, and Dishonest Ads: What Makes Them So Powerful?

- Algorithmic Transparency: Peeking Into the Black Box

- Who Gets Targeted—Or Excluded—By Ad Systems?

- When Ad Targeting Meets the 2020 Election

- Regulatory Challenges: A Free Speech Problem—and a Tech Problem

- So What Should Companies Do?

- Key Transparency Recommendations for Content Shaping and Moderation

- Conclusion

Russian Interference, Radicalization, and Dishonest Ads: What Makes Them So Powerful?

Russian interference in recent U.S. elections and online radicalization by proponents of violent extremism are just two recent, large-scale examples of the content problems that we reference above.

Following the 2016 election, it was revealed that Russian government actors had attempted to influence U.S. election results by promoting false online content (posts and ads alike) and using online messaging to stir tensions between different voter factions. These influence efforts, alongside robust disinformation campaigns run by domestic actors,1 were able to flourish and reach millions of voters (and perhaps influence their choices) in an online environment where content-shaping and ad-targeting algorithms play the role of human editors. Indeed, it was not the mere existence of misleading content that interfered with people’s understanding of what was true about each candidate and their positions—it was the reach of these messages, enabled by algorithms that selectively targeted the voters whom they were most likely to influence, in the platforms’ estimation.2

The revelations set in motion a frenzy of content restriction efforts by major tech companies and fact-checking initiatives by companies and independent groups alike,3 alongside Congressional investigations into the issue. Policymakers soon demanded that companies rein in online messages from abroad meant to skew a voter’s perspective on a candidate or issue.

How would companies achieve this? With more algorithms, they said.4 Alongside changes in policies regarding disinformation, companies soon adjusted their content moderation algorithms to better identify and weed out harmful election-related posts and ads. But the scale of the problem all but forced them to stick to technical solutions, with the same limitations as those that caused these messages to flourish in the first place.

Content moderation algorithms are notoriously difficult to implement effectively, and often create new problems. Academic studies published in 2019 found that algorithms trained to identify hate speech for removal were more likely to flag social media content created by African Americans, including posts using slang to discuss contentious events and personal experiences related to racism in America.5 While companies are free to set their own rules and take down any content that breaks those rules, these kinds of removals are in tension with U.S. free speech values, and have elicited the public blowback to match.

Content restriction measures have also inflicted collateral damage on unsuspecting users outside the United States with no discernible connection to disinformation campaigns. As companies raced to reduce foreign interference on their platforms, social network analyst Lawrence Alexander identified several users on Twitter and Reddit whose accounts were suspended simply because they happened to share some of the key characteristics of disinformation purveyors.

One user had even tried to notify Twitter of a pro-Kremlin campaign, but ended up being banned himself. “[This] quick-fix approach to bot-hunting seemed to have dragged a number of innocent victims into its nets,” wrote Alexander, in a research piece for Global Voices. For one user who describes himself in his Twitter profile as an artist and creator of online comic books, “it appears that the key ‘suspicious’ thing about their account was their location—Russia.”6

The lack of corporate transparency regarding the full scope of disinformation and malicious behaviors on social media platforms makes it difficult to assess how effective these efforts actually are.

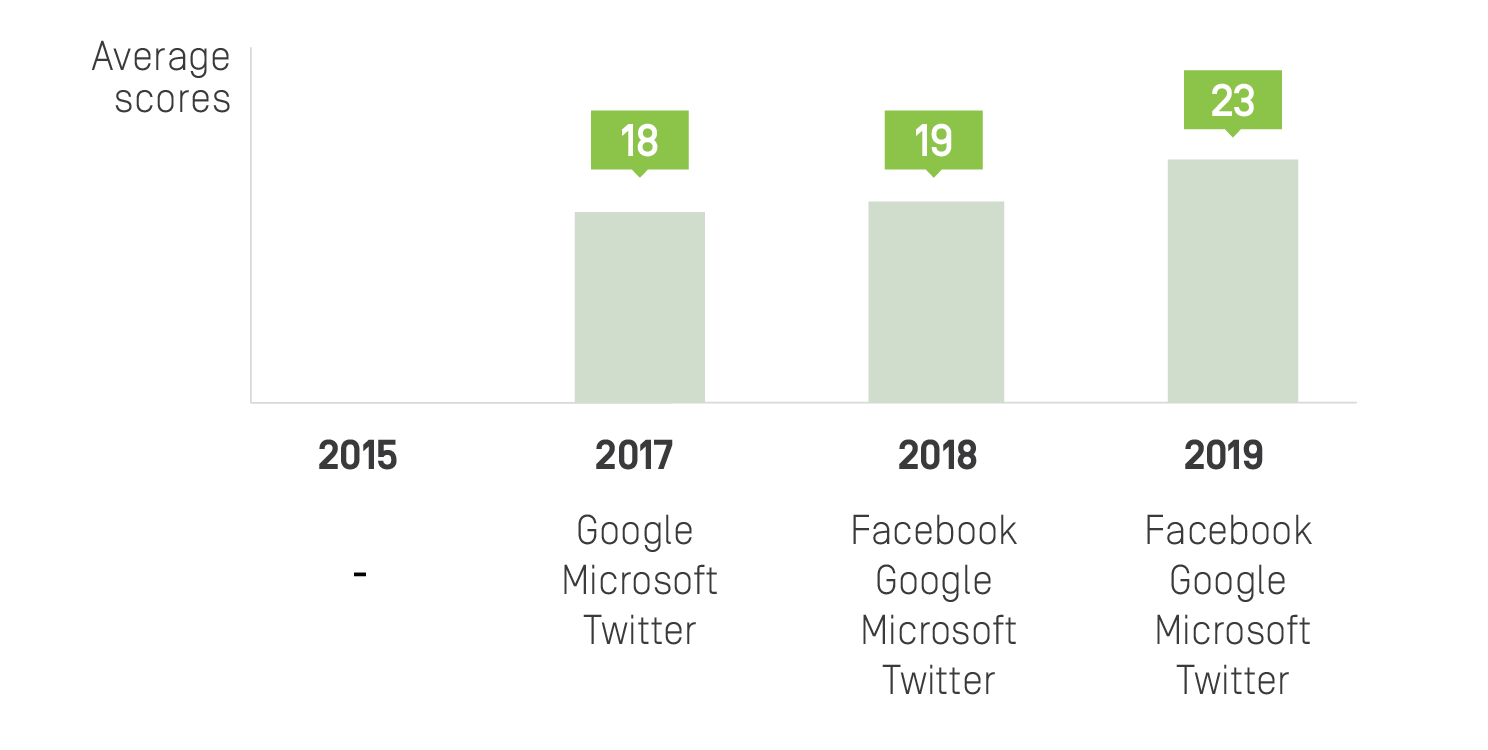

For four years, RDR has tracked whether companies publish key information about how they enforce their content rules. In 2015, none of the companies we evaluated published any data about the content they removed for violating their platform rules.7 Four years later, we found that Facebook, Google, Microsoft, and Twitter published at least some information about their rules enforcement,8 including in transparency reports.9 But this information still doesn’t demonstrate how effective their content moderation mechanisms have actually been in enforcing their rules, or how often acceptable content gets caught up in their nets.

Companies have also deployed automated systems to review election-related ads, in an effort to better enforce their policies. But these efforts too have proven problematic. Various entities, ranging from media outlets10 to LGBTQ-rights groups11 to Bush’s Baked Beans,12 have reported having their ads rejected for violating election-ad policies, despite the fact that their ads had nothing to do with elections.13 Yet companies’ disclosures about how they police ads are even less transparent than those pertaining to user-generated content, and there’s no way to know how effective these policing mechanisms have been in enforcing the actual rules as intended.14

The issue of online radicalization is another area of concern for U.S. policymakers, in which both types of algorithms have been in play. Videos and social media channels enticing young people to join violent extremist groups or to commit violent acts became remarkably easy to stumble upon online, due in part to what seems to be these groups’ savvy understanding of how to make algorithms that amplify and spread content work to their advantage.15 Online extremism and radicalization are very real problems that the internet has exacerbated. But efforts to address this problem have led to unjustified censorship.

Widespread concern that international terrorist organizations were recruiting new members online has led to the creation of various voluntary initiatives, including the Global Internet Forum to Counter Terrorism (GIFCT), which helps companies jointly assess content that has been identified as promoting or celebrating terrorism.

The GIFCT has built an industry-wide database of digital fingerprints or “hashes” for such content. Companies use these hashes to filter offending content, often prior to upload. As a result, any errors made in labeling content as terrorist in this database16 are replicated on all participating platforms, leading to the censorship of photos and videos containing speech that should be protected under international human rights law.
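
To make the replication problem concrete, the following is a minimal sketch of how a shared fingerprint database can carry one labeling error to every platform that relies on it. It does not describe how the GIFCT database or any company's filter is actually built: the hashing method is not specified here, so exact SHA-256 digests stand in for the fingerprints, and the names `SharedHashDatabase`, `Platform`, and `demo` are hypothetical.

```python
import hashlib


def fingerprint(content: bytes) -> str:
    """Stand-in fingerprint (assumption): an exact SHA-256 digest of the file."""
    return hashlib.sha256(content).hexdigest()


class SharedHashDatabase:
    """Hypothetical industry-wide set of fingerprints labeled as terrorist content."""

    def __init__(self):
        self._hashes = set()

    def label(self, content: bytes) -> None:
        # A single labeling decision, correct or not, is stored once for everyone.
        self._hashes.add(fingerprint(content))

    def contains(self, content: bytes) -> bool:
        return fingerprint(content) in self._hashes


class Platform:
    """Hypothetical participating platform that filters uploads against the shared set."""

    def __init__(self, name: str, shared_db: SharedHashDatabase):
        self.name = name
        self.shared_db = shared_db
        self.published = []

    def upload(self, content: bytes) -> bool:
        """Return True if the content is published, False if it is blocked before upload."""
        if self.shared_db.contains(content):
            return False
        self.published.append(content)
        return True


def demo() -> dict:
    """Mislabel one file and report whether each platform publishes it."""
    db = SharedHashDatabase()
    platforms = [Platform(n, db) for n in ("platform_a", "platform_b", "platform_c")]

    # Illustrative placeholder for footage that documents violence rather than promotes it.
    documentation = b"video documenting an attack on civilians"
    db.label(documentation)  # one erroneous label

    return {p.name: p.upload(documentation) for p in platforms}


if __name__ == "__main__":
    print(demo())
```

In this sketch, a single label in the shared set blocks the same file on all three platforms, while any file that was never labeled passes through untouched.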

Thousands of videos and photos from the Syrian civil war have disappeared in the course of these efforts: videos that one day could be used as evidence against perpetrators of violence. No one knows for sure whether these videos were removed because they matched a hash in the GIFCT database, because they were flagged by a content moderation algorithm or human reviewer, or for some other reason. The point is that this evidence is often impossible to replace. But little has been done to change the way this type of content is handled, despite its enormous potential evidentiary value.17

In an ideal world, violent extremist messages would not reach anyone. But the public safety risks that these carry rise dramatically when such messages reach tens of thousands, or even millions, of people.

The same logic applies to disinformation targeted at voters. Scale matters—the societal impact of a single message or video rises exponentially when a powerful algorithm is driving its distribution. Yet the solutions for these problems that we have seen companies, governments, and other stakeholders put forth focus primarily on eliminating content itself, rather than altering the algorithmic engines that drive its distribution.

Citations

1. Benkler, Yochai, Rob Faris, and Hal Roberts. 2018. Network Propaganda: Manipulation, Disinformation, and Radicalization in American Politics. New York, NY: Oxford University Press.

2. U.S. Senate Select Committee on Intelligence. 2019a. Report of the Select Committee on Intelligence United States Senate on Russian Active Measures Campaigns and Interference in the 2016 U.S. Election Volume 1: Russian Efforts against Election Infrastructure. Washington, D.C.: U.S. Senate. source; U.S. Senate Select Committee on Intelligence. 2019b. Report of the Select Committee on Intelligence United States Senate on Russian Active Measures Campaigns and Interference in the 2016 U.S. Election Volume 2: Russia’s Use of Social Media. Washington, D.C.: U.S. Senate. source

3. Many independent fact-checking efforts stemmed from this moment, but it is difficult to assess their effectiveness.

4. Harwell, Drew. 2018. “AI Will Solve Facebook’s Most Vexing Problems, Mark Zuckerberg Says. Just Don’t Ask When or How.” Washington Post. source

5. Ghaffary, Shirin. 2019. “The Algorithms That Detect Hate Speech Online Are Biased against Black People.” Vox. source; Sap, Maarten et al. 2019. “The Risk of Racial Bias in Hate Speech Detection.” In Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics, Florence, Italy, 1668–78. source; Davidson, Thomas, Debasmita Bhattacharya, and Ingmar Weber. 2019. “Racial Bias in Hate Speech and Abusive Language Detection Datasets.” In Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics, Florence, Italy, 25–35. source

6. Alexander, Lawrence. 2018. “In the Fight against Pro-Kremlin Bots, Tech Companies Are Suspending Regular Users.” Global Voices. source

7. Several companies (including Facebook, Google, and Twitter) did publish some information about content they removed as a result of a government request or a copyright claim. Ranking Digital Rights. 2015. Corporate Accountability Index. Washington, DC: New America. source

8. Ranking Digital Rights. 2019. Corporate Accountability Index. Washington, DC: New America. source

9. Transparency reports contain aggregate data about the actions that companies take to enforce their rules. See Budish, Ryan, Liz Woolery, and Kevin Bankston. 2016. The Transparency Reporting Toolkit. Washington D.C.: New America’s Open Technology Institute. source

10. Merrill, Jeremy B., and Ariana Tobin. 2018. “Facebook’s Screening for Political Ads Nabs News Sites Instead of Politicians.” ProPublica. source

11. Rosenberg, Eli. “Facebook Blocked Many Gay-Themed Ads as Part of Its New Advertising Policy, Angering LGBT Groups.” Washington Post. source

12. Mak, Aaron. 2018. “Facebook Thought an Ad From Bush’s Baked Beans Was ‘Political’ and Removed It.” Slate Magazine. source

13. In a 2018 experiment, Cornell University professor Nathan Matias and a team of colleagues tested the two systems by submitting several hundred software-generated ads that were not election-related, but contained some information that the researchers suspected might get caught in the companies’ filters. The ads were primarily related to national parks and Veterans’ Day events. While all of the ads were approved by Google, 11 percent of the ads submitted to Facebook were prohibited, with a notice citing the company’s election-related ads policy. See Matias, J. Nathan, Austin Hounsel, and Melissa Hopkins. 2018. “We Tested Facebook’s Ad Screeners and Some Were Too Strict.” The Atlantic. source

14. Our research found that while Facebook, Google and Twitter did provide some information about their processes for reviewing ads for rules violations, they provided no data about what actions they actually take to remove or restrict ads that violate ad content or targeting rules once violations are identified. And because none of these companies include information about ads that were rejected or taken down in their transparency reports, it’s impossible to evaluate how well their internal processes are working. Ranking Digital Rights. 2020. The RDR Corporate Accountability Index: Transparency and Accountability Standards for Targeted Advertising and Algorithmic Systems – Pilot Study and Lessons Learned. Washington D.C.: New America. source

15. Popken, Ben. 2018. “As Algorithms Take over, YouTube’s Recommendations Highlight a Human Problem.” NBC News. source; Marwick, Alice, and Rebecca Lewis. 2017. Media Manipulation and Disinformation Online. New York, NY: Data & Society Research Institute. source; Caplan, Robin, and danah boyd. 2016. Who Controls the Public Sphere in an Era of Algorithms? New York, NY: Data & Society Research Institute. source

16. Companies take different approaches to defining what constitutes “terrorist” content. This is a highly subjective exercise.

17. Biddle, Ellery Roberts. “‘Envision a New War’: The Syrian Archive, Corporate Censorship and the Struggle to Preserve Public History Online.” Global Voices. source; MacDonald, Alex. “YouTube Admits ‘wrong Call’ over Deletion of Syrian War Crime Videos.” Middle East Eye. source