Table of Contents

- Executive Summary

- Introduction

- Targeted Advertising and COVID-19 Misinformation: A Toxic Combination

- Human Rights: Our Best Toolbox for Platform Accountability

- Making All Ads “Honest” Through Transparency, Limited Targeting, and Enforcement

- By Protecting Data, Federal Privacy Law Can Reduce Algorithmic Targeting and the Spread of Disinformation

- Good Content Governance Requires Good Corporate Governance

- Without Civil Society, Platform Accountability is a Pipe Dream

- Key Recommendations for Policymakers

- Conclusion

By Protecting Data, Federal Privacy Law Can Reduce Algorithmic Targeting and the Spread of Disinformation

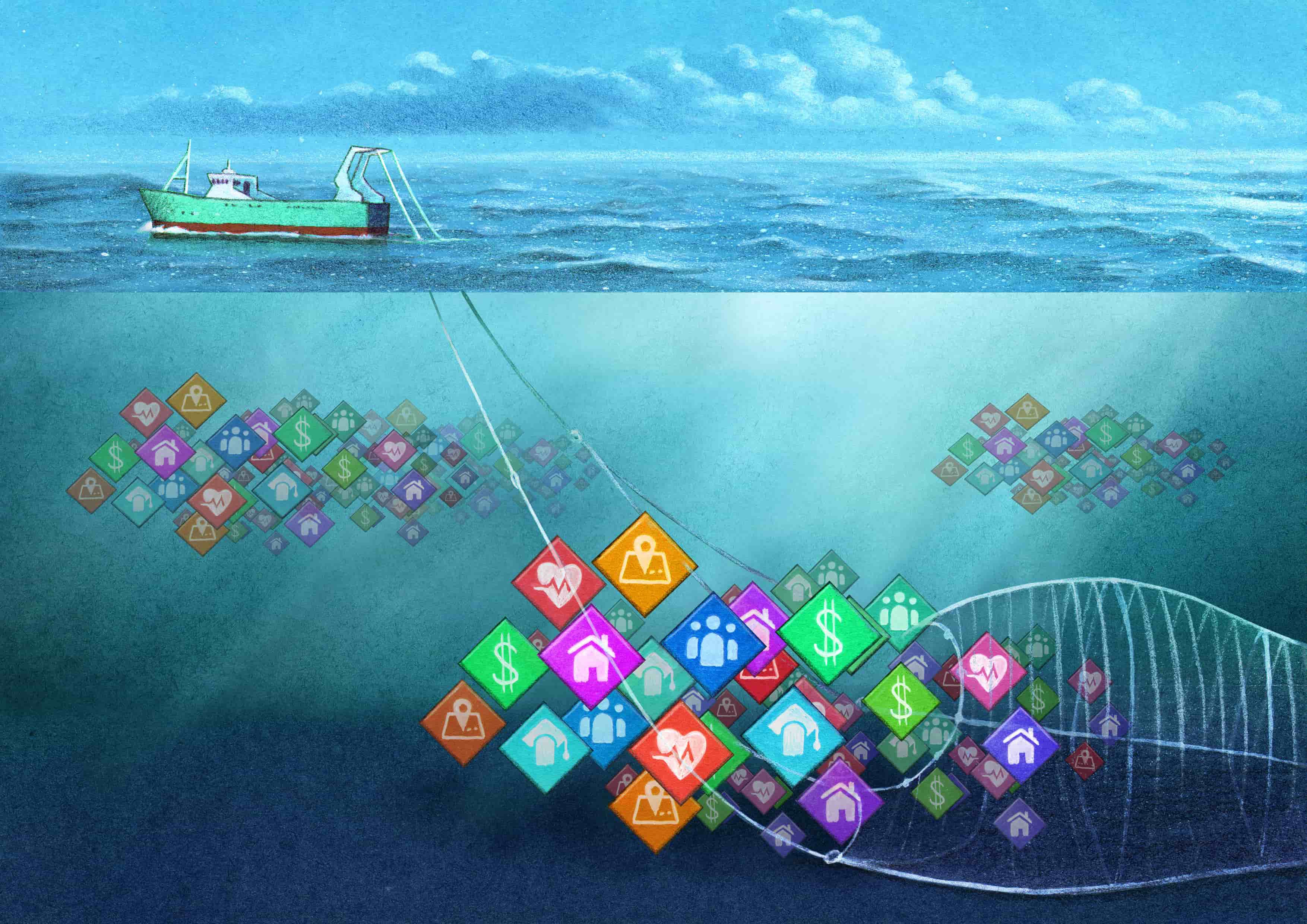

A strong data privacy law, effectively enforced, can protect internet users from the discrimination inherent to automated content optimization and limit the viral spread of harmful messages. The way to achieve that is by strictly limiting data collection and retention to the absolute minimum that is required to deliver the service to the end-user, irrespective of the company’s business model or financial interests. As Alex Campbell wrote in Just Security: “Absent the detailed data on users’ political beliefs, age, location, and gender that currently guide ads and suggested content, disinformation has a higher chance of being lost in the noise.”1

The United States lacks a comprehensive federal privacy law governing the collection, processing, and retention of personal information, though sector-specific laws cover areas such as education and healthcare.2 A strong federal privacy law, backed up by robust enforcement mechanisms, is perhaps the strongest tool at Congress’ disposal to stem the tide of online misinformation and dangerous speech by disrupting the algorithmic systems that amplify such content. This approach would also have the benefit of sidestepping the thornier issues related to free speech and the First Amendment.


But as things stand, targeted advertising companies are free to collect virtually any information they want and to use it however it benefits their bottom line. Facebook, Google, and Twitter hoover up massive amounts of data about internet users (both on their platforms and off). Indeed, not only do platforms track what users do while using their services, they also follow them around the internet and purchase data about their offline behavior from credit card companies and data brokers.3 The data that is collected becomes the core ingredient for building very powerful digital profiles of users, which advertisers and political operatives can then use to target groups and individuals, as in the pseudoscience example described previously. What’s worse, the tech giants do not clearly disclose exactly what they are doing with users’ data. Under such conditions, the notion of user consent is meaningless.

RDR’s evaluation of the three American social media giants’ policies and disclosed practices is useful in clarifying just what needs to change, and how the companies should be regulated. Data from the 2019 RDR Index highlights the opacity of the major U.S. digital platforms when it comes to the collection, processing, and sharing of user information. Even when these companies do disclose what they collect, the scope of what is collected is staggering.

Scope and Purpose of Data Collection, Use, and Sharing

In evaluating companies for the RDR Index, we have examined whether companies clearly disclose why and how they collect user information, by which we mean any data that is connected to an identifiable person, or may be connected to such a person by combining datasets or utilizing data-mining techniques. We look for a commitment to limit collection of user information to what is directly relevant and necessary to accomplish the purpose of the service from the end user’s perspective.4 In our evaluation, we do not consider targeted advertising as the purpose of the service: While the revenue it generates enables the company to provide the service, users would get the same benefit if the company made money differently. User information should not be collected for targeted advertising by default, though people can be given a choice to opt in.

Google, Facebook, and Twitter all track users across the internet: Using “cookies” and other tracking technologies embedded in many websites, they collect data about what web pages people visited and what they did there (purchased items, watched videos, etc.).5 The three companies all disclose and explain what types of user information they collect but are less clear about how, and none commit to collecting only data that is necessary to provide the service.6 Facebook, for instance, states that it collects “things you do and others do and provide,” including the “content, communications and other information” users provide when using Facebook products (for example when signing up for an account, posting text, videos or images, and using messaging functions). This can include the content of user posts, metadata, and more. Facebook also discloses that it collects device information, including location information. In short: If Facebook can capture a piece of information about you, it does.7

All three companies disclose some information about the types of entities they share user data with, but none are more specific than that. Yet in the context of targeted advertising, it is especially important for companies to disclose to users specifically who their information is shared with for any purpose that isn’t subject to legitimate law enforcement or national security limitations in accordance with human rights standards.8

While no company discloses enough about how they handle user information, Twitter deserves credit for having disclosed more than other U.S. platforms (Google, Facebook, Microsoft, and Apple) about its data handling policies, across all the user information indicators in the 2019 RDR Index.9

Federal privacy legislation should include strong data minimization and purpose limitation provisions: Users should not be able to opt in to discriminatory advertising or to the collection of data that would enable it.10 Any data processing that remains should be opt-in. Using the service should not be contingent on giving up more data than is necessary to accomplish the purpose of the service. Crucially, the delivery of targeted advertising should not be considered the purpose of the service unless the company clearly describes that as the service’s primary purpose to the general public in its marketing and public communications. Companies should disclose to users and to the relevant regulatory agency what user information they collect, share, retain, and infer; for what purpose; and how long it is retained. Companies should only collect user information from third parties, or share user information with third parties, if the two companies have a vendor-contractor relationship and the sharing of this user information is directly relevant and necessary for the purpose of delivering a service to the user. Companies should allow users to obtain all of their user information (collected and inferred, broadly defined) that the company holds, in a structured data format. They should delete all user information after a user terminates their account or at the user’s request. Finally, the accuracy of disclosures and compliance with the above requirements should be independently audited.

Inferred Information and Targeting

In future versions of the RDR Index we will look for the same level of disclosure and commitment about what companies infer about users. Inference is a key way that user profiles are built. Companies perform big data analytics on their troves of collected user data in order to make predictions about the behaviors, preferences, and private lives of their users, and to then sort users into audience categories on that basis.11 Take, for example, the case of Aaron Sankin at The Markup. He doesn’t know exactly why his account was included in the pseudoscience audience category, but he speculates it was likely because he had conducted research about medical misinformation on Facebook, causing the company’s algorithms to assume he had an interest in pseudoscience.12

Targeted advertising should be allowed only if the default is that users are not targeted upon joining a service. Companies would be wise to avoid relying on targeted advertising as their sole source of revenue and to consider contextual advertising and subscription models, among others. Users must be able to actively opt in to being targeted and to fully control what information, if any, can be used to target them.13 Users might choose to be targeted based on certain types of data but not others. Companies should disclose sufficient information to users and to regulators so that people can understand exactly how, why, and by whom they have been targeted, and regulators can track broader patterns to identify abusive practices.

In the first report in this series, we called on companies to publicly explain the content-shaping algorithms that determine what user-generated content users see, and the ad-targeting systems that determine who can pay to influence them. We also called on them to disclose their rules for user content, advertising content, and ad targeting; to explain how they enforce those rules; and to publish regular transparency reports containing data about the actions they take to enforce these rules. We further called on the U.S. Congress to enact legislation requiring companies to provide this information to policymakers and the public as a first step toward greater accountability.14 These transparency measures are necessary for a more nuanced understanding of a complex and dysfunctional ecosystem, but they are insufficient to the task of making our online ecosystem work for democracy and the public interest. For that, much more active regulatory intervention is needed, starting with the barely regulated targeted advertising industry.

Policymakers should not be convinced by tech giants’ claim that targeted advertising benefits internet users by showing them ads that are most relevant to them. Targeted advertising is on by default on Facebook, Google, and Twitter. If users find targeted advertising as useful as the companies claim, many will choose to opt in. While users can customize options for the types of ads they want to see, they can’t opt out of receiving tailored ads altogether. In the past year, Facebook has improved its disclosure of the options users have to remove categories of interests and pages they’ve visited, which constitute some but not all of the information used to customize the ads they see. Even when customization is an option, however, the settings can be hard to find.

A federal privacy law should also restrict the targeting options that platforms are allowed to offer to advertisers. Based on our research team’s examination of company policy disclosures across Facebook, Google, and Twitter, Facebook appears to have the fewest restrictions on ad targeting and the least transparency. It disclosed that users will be targeted with ads but did not disclose the exact ad targeting parameters that are prohibited. It disclosed that advertisers can tailor ads to custom audiences—lists of individuals that advertisers can upload to the platform—but are prohibited from using these targeting options “to discriminate against, harass, provoke, or disparage users or to engage in predatory advertising practices.”15 None of these terms are defined, and the company does not clarify how it would detect breaches of the policy or what the penalty might be for doing so.

In addition to uploading their own custom audience lists, advertisers can also select from among Facebook’s algorithmically determined audience categories, which are based on profiles that Facebook has created from people’s online and offline activities. These profiles can include the content a user posts, the accounts and pages they follow, the content they like or otherwise engage with, and the known and/or inferred interests of the other users they are connected to on the platform. These targeting options, however, are only visible when logged into the platform and going through the process of placing ads. As a result, only Facebook account holders who take the time to investigate the platform’s audience categories can know what they are. This is how researchers and investigative journalists have discovered the existence of racist16 and otherwise problematic audience categories,17 alerting the public to the dark side of targeted advertising and leading companies to remove the offending categories. Despite calls from civil society (including RDR) to publish the list of available categories, companies have thus far declined to do so, making any kind of systematic oversight impossible.

The Challenge of Effective Enforcement

Privacy law is key to preventing targeted advertising systems from profiling and targeting people in dangerous and harmful ways. But a law is only as good as its enforcement. In this regard, the EU’s challenges in enforcing the General Data Protection Regulation (GDPR) offer important lessons. National-level data protection agencies are under-funded and face massive case backlogs, and critics worry that fines are not high enough, nor other penalties sufficiently punitive, to force a change in industry practices.18 Enforcement needs a bigger stick to protect privacy rights.

For example, since the GDPR went into force in 2018, only one major tech giant has been fined for a violation: In early 2019, Google was docked 50 million euros (about $54 million, which the New York Times estimated is about one-tenth of Google’s daily sales) for failing to adequately disclose how data is collected across its services for use in targeted advertising.19 A complaint filed against Facebook in May 2018 by privacy advocate Max Schrems argues that in order for users to even sign onto the company’s services (Facebook, Instagram, and WhatsApp), they are forced to agree to having their personal information harvested for targeted advertising. Such “forced consent” is illegal, the complaint argues, if the core purpose of the service is social networking (as the company states) and not targeted advertising.20 In contrast to some major multinational Asian and European internet, mobile, and telecommunications companies that disclose that they limit collection of user information to what is directly relevant and necessary to accomplish the purpose of the service, none of the U.S.-based companies evaluated in the 2019 RDR Index (including Facebook) were found to have done so.21 While digital marketers once fretted that the GDPR would render targeted advertising a shadow of its former self, that will only happen if the law is strictly interpreted by courts and enforced.22

Whether Congress opts to confer increased authority and funding on the Federal Trade Commission (FTC) to enforce a strong privacy law, or sets up a new data protection agency, the key to success will certainly be strong enforcement authority—backed by adequate funding for the enforcement process.23 This is a non-trivial challenge, but should be considered part of the price of protecting democracy.

The Public Interest Principles for Privacy Legislation, published by 34 civil rights, consumer, and privacy organizations in late 2018, set forth baseline objectives that need to be met in order to ensure that a privacy law truly protects the public interest:

- Privacy protections must be strong, meaningful, and comprehensive.

- Data practices must protect civil rights, prevent unlawful discrimination, and advance equal opportunity.

- Governments at all levels should play a role in protecting and enforcing privacy rights.

- Legislation should provide redress for privacy violations.24

The Public Interest Principles underscore the importance of individuals having access to a wide range of redress mechanisms, including the right of private individuals to sue companies for privacy violations. The California Consumer Privacy Act, which grants individuals a private right of action against data breaches, is now being tested by lawsuits against the videoconferencing platforms Zoom and Houseparty for sharing user data with third parties without consent.25 A national privacy law that adheres to the Public Interest Principles would also contain a private right of action, and should meet all other relevant civil rights standards.

Citations

- Campbell, Alex. 2019. “How Data Privacy Laws Can Fight Fake News.” Just Security. source (May 16, 2020).

- The California Consumer Privacy Act (CCPA), which took effect on January 1, 2020, and will be enforced starting in July 2020, may de facto apply to much of the country, as many companies may find it more expedient to extend the rights that the CCPA confers on California residents to those in other states.

- Madrigal, Alexis C. 2012. “I’m Being Followed: How Google—and 104 Other Companies—Are Tracking Me on the Web.” The Atlantic. source (May 17, 2020).

- Organisation for Economic Cooperation and Development. 2013. The OECD Privacy Framework. source (May 17, 2020).

- Ranking Digital Rights. 2019. Corporate Accountability Index. Indicator P9: Collection of user information from third parties. Washington, D.C.: New America. source

- Ranking Digital Rights. 2019. Corporate Accountability Index. Indicator P3: Collection of user information. Washington, D.C.: New America. source

- Facebook. 2018. “Data Policy.” source (May 16, 2020).

- Necessary and Proportionate: International Principles on the Application of Human Rights to Communications Surveillance. 2014. source

- Ranking Digital Rights. 2019. Corporate Accountability Index. Washington, D.C.: New America. source

- Laroia, Gaurav, and David Brody. 2019. “Privacy Rights Are Civil Rights. We Need to Protect Them.” Free Press. source (May 18, 2020).

- Wachter, Sandra. 2019. Affinity Profiling and Discrimination by Association in Online Behavioural Advertising. Rochester, NY: Social Science Research Network. SSRN Scholarly Paper. source (February 11, 2020).

- Sankin, Aaron. 2020. “Want to Find a Misinformed Public? Facebook’s Already Done It.” The Markup. source (May 7, 2020).

- Regulating so-called “dark patterns” would help ensure that consent is freely given. See Darlington, Alexander. “Dark Patterns.” source (May 17, 2020).

- Maréchal, Nathalie, and Ellery Roberts Biddle. 2020. It’s Not Just the Content, It’s the Business Model: Democracy’s Online Speech Challenge – A Report from Ranking Digital Rights. Washington, D.C.: New America. source (May 7, 2020).

- Facebook. n.d. “Advertising Policies.” source (May 15, 2020). In contrast, see National Fair Housing Alliance. 2019. “Facebook Settlement.” source (May 17, 2020).

- Angwin, Julia, and Madeleine Varner. 2017. “Facebook Enabled Advertisers to Reach ‘Jew Haters.’” ProPublica. source (March 16, 2020).

- Sankin, Aaron. 2020. “Want to Find a Misinformed Public? Facebook’s Already Done It.” The Markup. source (May 7, 2020).

- Satariano, Adam. 2020. “Europe’s Privacy Law Hasn’t Shown Its Teeth, Frustrating Advocates.” The New York Times. source (May 16, 2020).

- Satariano, Adam. 2019. “Google Is Fined $57 Million Under Europe’s Data Privacy Law.” The New York Times. source (May 16, 2020).

- NOYB. n.d. “Forced Consent (DPAs in Austria, Belgium, France, Germany and Ireland).” noyb.eu. source (May 16, 2020). Lomas, Natasha. 2018. “Facebook, Google Face First GDPR Complaints over ‘Forced Consent.’” TechCrunch. source (May 16, 2020).

- Ranking Digital Rights. 2019. Corporate Accountability Index. Indicator P3: Collection of user information. Washington, D.C.: New America. source. See also section 5.3, “Privacy Gaps”: source.

- Naidu, Prash. 2019. “Why Advertisers Are Staring down the Barrel of Big GDPR Fines.” Marketing Week. source (May 16, 2020).

- Rich, Jessica. 2019. “Opinion | Give the F.T.C. Some Teeth to Guard Our Privacy.” The New York Times. source (May 16, 2020).

- U.S. PIRG. 2018. “Public Interest Privacy Principles.” source (May 16, 2020).

- JD Supra. 2020. “Privacy Suits Against Zoom and Houseparty Test the CCPA’s Private Right of Action.” Data Security Law Blog. source (May 16, 2020).