Takeaways and First Principles

This three-part analysis—the literature review, expert insights, and global governance mapping—revealed a through line: Worsening power asymmetries are at the heart of much conflict, harm, and governance dysfunction in the digital domain. Whereas the internet emerged and spread as a relatively open, distributed enterprise, extreme concentrations of power now prevail as a handful of private companies and nation-states have consolidated control over digital technologies and spaces. Power is neither the sole nor absolute source of the world’s digital troubles; the origins of any complex challenge are multidimensional. But power in and over the digital realm is more concentrated than ever before and that has created a trajectory for the digital future that imperils security, equity, and human rights.

First, consider corporate concentrations of power. Commentators have noted that cycles of decentralization and centralization pervade the history of the digital technology industry: Computing decentralized when the personal computer replaced mainframes, but then centralized again with Microsoft’s proprietary operating system.1 Open-source software and open protocols made for a decentralized world wide web, until Google, Meta, Amazon, and others used big data to monopolize core online services. Acquisitions and the high cost of computing hardware needed to train large language models have shifted cutting-edge AI research and development from a distributed network of university labs and startups to a handful of tech giants.2 (It remains to be seen if Meta’s decision to release the source code of its LLaMA foundation model will lead to meaningful decentralization).3

Today, in most areas of digital technology, centralization is at a zenith, as a combination of network effects, anti-competitive practices, and laissez-faire regulation has enabled American tech companies to become the world’s largest, most influential firms and to carry out policies and practices worldwide that advance corporate interests rather than the public interest. National governments, too, are concentrating digital power. Big data and ever-more sophisticated and pervasive surveillance technologies enable state censorship and control.

Government-ordered internet shutdowns have become more frequent, more precise, harder to detect, and longer in duration.4 Although an American was elected to lead the ITU, the digital authoritarian model of governance is gaining ground: In its 2022 annual report, Freedom House, a pro-democracy NGO, found that internet freedom had declined worldwide for the 12th consecutive year, as “more governments than ever are exerting control over what people can access and share online by blocking foreign websites, hoarding personal data, and centralizing their countries’ technical infrastructure.”5

At the same time, the digital domain is the most active battlefield in the escalating, zero-sum power struggle between the U.S. and China. In addition to the tussle over cyber norms and technical standards playing out in multilateral fora, the U.S. and China are vying to limit the other’s access to rare earth minerals and the development and spread of the other’s digital technologies like 5G infrastructure, subsea internet cables, semiconductors, and cloud computing. Increasing acrimony in the politics of each country toward the other impedes cooperation on urgent global digital governance areas, such as AI risk.

This struggle affects all nations, as the great powers push less influential countries to choose a side, and it shapes the parameters of digitization for the world. Especially when it comes to AI, considerations of safety, justice, and human rights take a back seat in the rush, echoed by U.S. policymakers and commentators, to “win the AI arms race.”6

Addressing these global power asymmetries is not simple. Since the issue is planetary in scope and born of entrenched social, political, and economic systems, it is not a problem to be solved but a situation to be managed. New technologies and markets can help, but they are insufficient tools. Managing these asymmetries will require global governance that can diminish concentrations of power and make institutions and systems more accessible and equitable. That governance must at the same time maintain the decentralized, open, and democratic approaches to technological design and application that have enabled innovation and growth in the digital economy.

Global digital governance is beyond the scope of a single institution. Rather, as Anne-Marie Slaughter and Fadi Chehadé have written, it will take a networked, multi-stakeholder ecosystem able to “co-design digital norms, or actionable rules and implementation guidelines that give companies and citizens clear incentives to cooperate responsibly in the digital world.”7 Some of these will be national regulations and international treaties; some will be new multi-stakeholder bodies in the ICANN mold; some will be private sector initiatives like the Santa Clara Principles8 or the Frontier Model Forum; and others will be norms and habits of mind.

In our analysis, many ideas emerged about the frameworks and institutions that should be a part of this ecosystem. We identified several takeaways from across the five issue areas of study that could contribute to shaping a more equitable digital future. Below we identify five first principles that should inform future interventions and the way forward.

1. Pay It Forward to the Next Generation on AI Governance

It is not a stretch to say that the digital future depends on the global governance models and principles we build consensus on for AI. These systems are the cutting edge of digital technology, and they will have an impact on all of the issue areas examined in this report. Governance will steer the course of the technology and its outcomes. As the AI researcher Timnit Gebru and her co-authors have written, AI development “is not a preordained path where our only choice is how fast to run.”9 Global institutions are needed to bend that path toward safety, equity, and societal benefit. We have an opportunity to get in front of some of the worst possible harms stemming from AI right now, and if we do so we will be able to pay it forward to future generations when it comes to ensuring a safe, secure, and equitable digital domain.

There is no shortage of work on principles and guidelines for safe, ethical AI. Over the past several years, research labs, companies, governments, and many others have produced more than 100 sets of principles for safe, trustworthy, and ethical AI. A 2020 survey of 36 major ethical and rights-based AI principles documents found a fair amount of consensus, what the authors called a “normative core,” around the themes of privacy, accountability, safety, transparency, fairness and non-discrimination, human control of technology, professional responsibility, and human values.10 Since the release of ChatGPT, the proliferation of guidelines and calls to action has only intensified.11

Fewer have tackled the question of what kinds of institutions could govern the technology and facilitate global adoption of responsible AI principles and practices. A fragmented global AI governance regime exists, but only among Western democracies and their allies.12 The main players are intergovernmental organizations, such as the OECD, G7, and the ITU’s AI for Good initiative; technical and standards associations like the IEEE and ISO/IEC; companies, such as the AI giants that recently announced the Frontier Model Forum; and loose partnerships of non-state actors like the Partnership on AI. A slew of initiatives involving state and local governments, activist movements, research institutes, NGOs, and others also play a role in agenda-setting and norm-creation that indirectly influence global outcomes. Bilaterally, the EU-U.S. Trade and Technology Council has opened discussions to harmonize the jurisdictions’ emerging regulatory approaches to AI.13

None of these “global” efforts involve much of the globe. China is absent, as are developing nations with the exception of the OECD’s four Latin American members: Chile, Colombia, Costa Rica, and Mexico. Given the ease with which AI models can proliferate across borders, global governance cannot have any gaps. It must involve the two biggest national players, the U.S. and China, which is no easy task given the digital competition and acrimonious domestic politics that dim the prospects for cooperation. Nor can global governance exclude the developing economies, which will play an ever-larger role in the digital ecosystem as they continue to develop and are already major stakeholders today, given that the digital economy, AI workforce, and impacts of technology are all transnational.

The governance model must be multi-stakeholder, involving those in civil society, ethics, and technical communities, and especially the tech companies who are developing AI. These firms are sovereign actors with as much power as nation-states; as Ian Bremmer and Mustafa Suleyman put it, “any regulatory structure that excludes the real agents of AI power is doomed to fail.”14

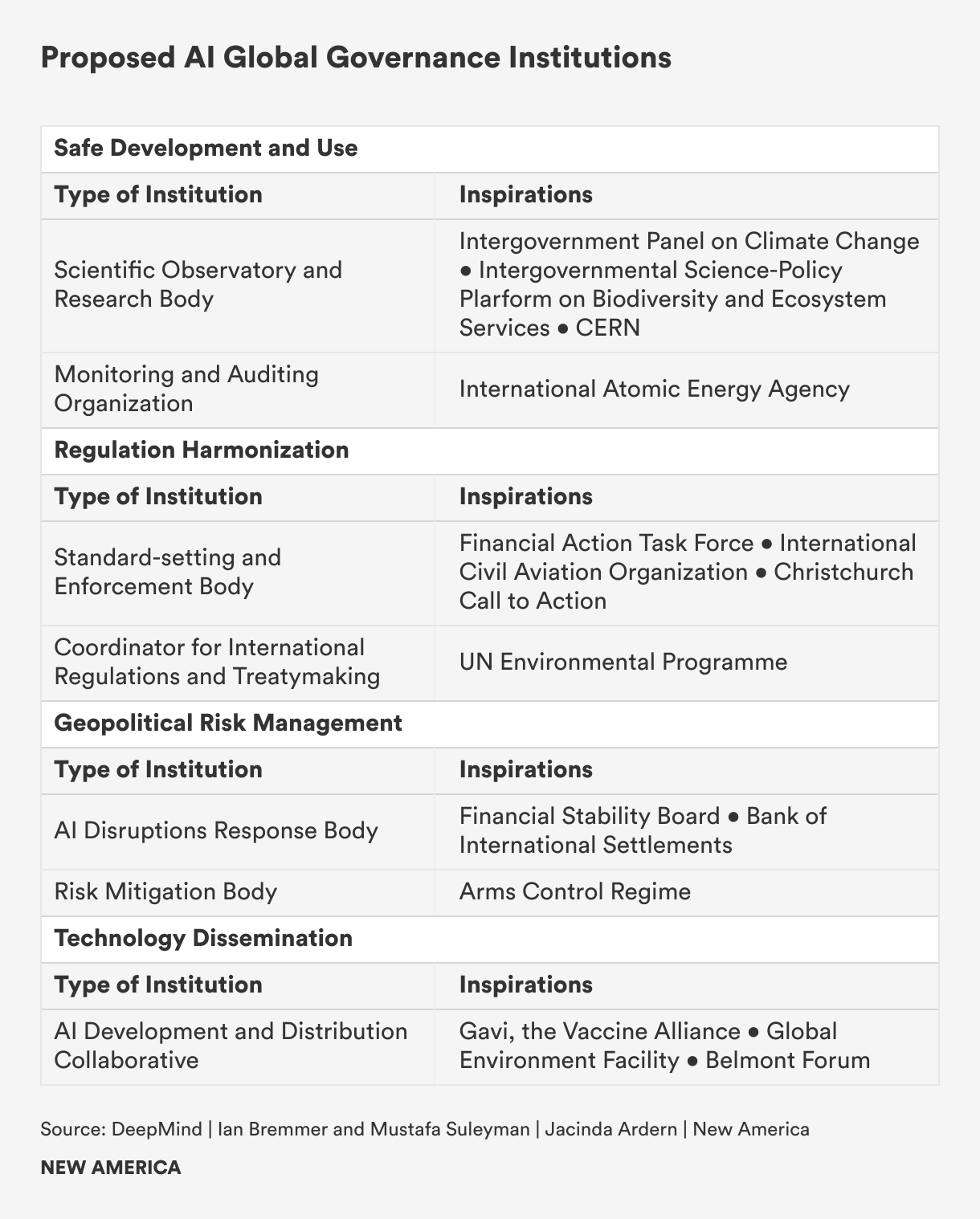

An AI global governance model would have several objectives. A team of researchers from DeepMind and a few universities identified four: spreading beneficial technology, harmonizing regulation, ensuring safe development and use, and managing geopolitical risks.15 Several researchers, experts, and officials have proposed new international governance bodies and agencies that could carry out these objectives.

The challenge, however, is not so much conceptualizing new institutions for global governance, but overcoming the geopolitical status quo to actually create them. In the face of entrenched corporate power and the fragmentation, distrust, and competition among nations—the U.S. and China especially—what kind of process could be both inclusive and high-level enough to bring all the players to the table and facilitate the cooperation necessary to stand up new institutions?

Nearly 70 years ago, the world faced an uncertain future due to the proliferation of nuclear weapons. The Cold War was heating up, with the U.S. and Soviet Union racing to build more and more-powerful bombs. Albert Einstein and Bertrand Russell penned a manifesto calling for a global scientific conference to understand and build consensus for managing the risks posed by the novel technology. In 1957, 22 eminent scientists from the U.S., Soviet Union, China, Japan, and six other nations gathered in Pugwash, Nova Scotia.16

That first meeting spawned follow-up conferences, workshops, study groups, and symposia, involving an ever-greater number of scientists, experts, and government officials attending in an unofficial capacity. Pugwash, as the platform became known, was vital for building global scientific consensus and shaping an international governance regime to manage nuclear, chemical, and biological weapons during an era of fraught or frozen relations between the Eastern Bloc and the Western democracies.17 The institution performed essential scientific and consensus-building work that contributed to the Partial Test Ban Treaty of 1963, the Non-Proliferation Treaty of 1968, and other international agreements on weapons of mass destruction (WMD).18 In a larger sense, Pugwash expanded global WMD governance beyond nation-states, operationalizing the idea that national sovereignty was not singular but that the greater interests of humanity also mattered. In 1995, Pugwash was awarded the Nobel Peace Prize for its contributions to WMD governance.

AI is vastly different from nuclear technology. AI proliferates easily and has countless applications, with varying levels of risk. The most powerful systems are built not by governments but by private companies. With the possible exception of autonomous military applications, the nonproliferation and control regime that has managed nuclear weapons is wholly unsuited for governing AI.

Even so, an evolved model of the Pugwash conference format could be just as effective for AI as it was for WMD. The starting point would be the recognition that AI is a planetary issue, one that affects societies worldwide, regardless of whether they own or develop the technology. Countries large and small will adopt, shape, and experience the consequences of AI systems; everyone has a stake in their safe, beneficial development and use. Thus, governance of the technology must be multi-stakeholder and globally representative, not left solely in the hands of the most powerful nations and corporations.

Just as the original Pugwash conferences made progress on WMD governance by involving scientists and experts representing non-national interests, a similar effort for AI would need to grapple with the challenges posed not only by national sovereignty but also by corporate sovereignty. Primarily, that means facilitating productive cooperation between the U.S. and China. Despite political tensions, the two countries’ scientific and research communities frequently collaborate: Chinese and American AI researchers teamed up to publish more articles between 2010 and 2021 than collaborators from any two other nations.19 It also means building cross-sectoral consensus that can translate into regulation or other forms of pressure that can diminish corporate power and positively shape corporate behavior.

2. Invest in Distributed Access and Fail-safes to Safeguard Connectivity

Concentration of power and inequality characterize various types of digital access, whether to the internet or to emerging technologies like AI models. Harmful outcomes, especially those that emerge from disparities between developing and wealthy nations, will persist so long as ownership and access are concentrated in the rich world. The absence of technology means underserved populations miss out on the benefits; the presence of technology developed by and for a different population can have unintended, dangerous consequences. Global governance should go beyond simply managing risks and harms. It should recognize that digital access is a priority for development as well as for security: Inequalities and economic disruption resulting from AI and other emerging technologies risk stoking populism and dangerous social upheaval.20

Despite advances in network technology, internet access is still highly centralized in ISPs. In many countries, these gatekeepers are either private monopolies or state-owned enterprises, often subject to government influence and control, which enables arbitrary shutdowns. In other countries, these gatekeepers lack the profit incentive to provide broadband or mobile service in marginalized, poor, or rural communities. They might have political clout that enables them to block competitors from entering a market.21 The first objective laid out in the UN Secretary General’s Roadmap for Digital Cooperation is to achieve universal connectivity by 2030.22 That connectivity will only be meaningful if distributed modes of access are made available.

Multilateral development banks and agencies should increase investments in “fail-safes”: alternative, modularized, or redundant means of access and connectivity. Fail-safes can help shift the stewardship of connectivity away from ISPs and corporate power toward a more distributed model based on multi-stakeholder initiatives that enable multiple access points, multiple providers, and multiple modes and means to connect. The World Bank (which administers the Digital Development Partnership involving 11 national governments and three multinational corporations), the United Nations Development Programme, and other multilateral agencies and partnerships focused on universal access should promote the development and deployment of fail-safes.

Satellite internet systems, for instance, are one type of fail-safe. Solar-based internet protocols mitigate the power of nation-states to block or interrupt access by optimizing system designs around planetary limitations. Though the first entrants in this field are private companies such as SpaceX and Amazon, public agencies such as the European Commission are investing in satellite internet systems, and it would be easy to imagine new public-private partnerships aimed at providing affordable or free access in rural, impoverished, or conflict-affected areas.23

Some municipalities seeking to strengthen meaningful connectivity for historically marginalized communities are taking the fail-safe approach, deploying redundant community and municipal broadband networks. In the city of Chattanooga, Tennessee, an open network called “the Gig” was built on the back of a city-owned electricity distribution system and is funded by a bond issue and a stimulus grant. The Gig charges reasonable rates for some of the fastest internet speeds on the planet and prioritizes access for low-income individuals.24 In New York City, a community network called NYC Mesh taps into existing internet infrastructure and connects to IXPs to provide a low-cost alternative to ISPs and more coverage opportunities across the city. Volunteers operate the network, which is funded by user donations. These kinds of local, grassroots initiatives can provide access to historically marginalized communities or individuals disenfranchised by the prohibitive costs imposed by ISPs.

At the same time, governments, multilateral agencies, and companies should address the divide in access to emerging technologies such as AI systems by investing in mechanisms that can transfer technology, raise funding, provide technical assistance, and develop education programs for data literacy. A team of DeepMind and university researchers, for example, has proposed a public-private “Frontier AI Collaborative” that would pool funding to purchase beneficial advanced AI models and then provide access to developing countries.25 The inspiration for the collaborative is Gavi, the Vaccine Alliance, a global public-private hub that gathers resources to purchase and then deliver vaccines to the world’s poorest people.

Instead of directly transferring technology, a multi-stakeholder financing mechanism could provide grants and blended funding for specific AI or other emerging technology development projects. Such an institution might emulate features of the Global Environment Facility, the multilateral fund that finances environmental and climate change mitigation and adaptation in developing countries. A true multi-stakeholder analog is the Belmont Forum, a collaborative of funding organizations, international science councils, and regional consortia that facilitates international, transdisciplinary research to help understand, mitigate, and support adaptation to climate change. Since 2009, the Forum has disbursed hundreds of millions of euros to support 134 projects undertaken by more than 1,000 scientists hailing from 90 countries.26 In addition to helping with financing, a digitally focused Belmont Forum could provide developing nations with publicly accessible large datasets to develop and train their own AI foundation models.

In addition, governments should create incentives to decentralize AI research and development. For instance, legislation passed by the 117th U.S. Congress designates roughly $80 billion to regional industrial policy initiatives such as the U.S. Economic Development Administration’s Tech Hubs investments and the National Science Foundation’s Regional Innovation Engines competition.27 Regional innovation ecosystems such as Southwestern Pennsylvania’s New Economy Collaborative have received funds to supercharge their robotics and autonomous technologies sectors. This model of regional innovation hubs could be implemented globally by increasing investment outside of the U.S. in nontraditional innovation ecosystems in the developing world, which, as of now, lack the public and private investment necessary to compete with wealthy economies.

3. Privilege Regional Principles and Standards in Cybercrime

The battles playing out in multilateral fora between democracies and autocracies over the standards and norms of the digital domain illustrate the paradox at the heart of global digital governance. On the one hand, digital space is essentially borderless, and without universal standards and norms malicious actors will simply move to lenient jurisdictions. Yet geopolitical competition and bad-faith engagement by autocracies stymie the prospects for global consensus on various cyber issues. Moreover, strict universal standards are not always desirable or practicable, given the vast differences in digitization across the world.

Regionally based institutions and coalitions of stakeholders that draw from similar legal and ethical traditions should be privileged when it comes to driving consensus on policy responses to cybercrime. At the same time, states seeking to advance global cyber norms that encourage a safe, open, equitable digital domain should try to generate consensus for widely acceptable baseline standards on attribution, accountability, and risk management when it comes to cyberattacks and cyber-enabled criminal acts. Even though changing the cyber behavior of autocracies may prove difficult, democracies have an opportunity to encourage non-aligned, developing countries to adopt minimum standards and norms. But in order to succeed, those democracies need to understand and respect local context and allow countries the agency to tailor digital norms to their circumstances.

Cybercrime is one area where this approach might yield results. Putting aside the fact that some states sponsor transnational cybercrime, different regions and groups experience cybercrime differently. Norms surrounding privacy and acceptable speech vary from one jurisdiction and culture to another, for instance. In addition, technical literacy and capacity determine the real and perceived harm of a cybercrime. Low-income countries might have justifiably little interest in passing cybercrime legislation when a lack of broadband access or electricity is a more pressing concern for the population. These challenges dim the prospects of universal support for a comprehensive definition of cybercrime.

The Digital Futures Task Force working group on cybercrime proposed a looser global framework definition of cybercrime that distinguishes between technical cybercrimes and social cybercrimes. Democracies then could encourage regional organizations and clubs, such as ASEAN and ECOWAS (the Economic Community of West African States), to elevate the issue on their agendas and categorize cybercrime within that framework on a regional basis. This framework could encourage national governments and regional organizations to prioritize demand-side governance solutions: capacity building, victim compensation, and cyber awareness and education. As a practical matter, that might be a more promising governance approach, since, so long as autocracies continue to enable transnational cybercrime, curbing the global supply of cybercriminal activity will be a challenge.

4. Practice What You Preach on Surveillance and Spyware

Another area where democracies could do more to shape global cyber norms is in digital surveillance. The prospect of international agreements prohibiting or constraining the use of surveillance technologies in the near future appears remote, as these tools have become indispensable instruments of control for authoritarian nations. However, the U.S. and other democracies could build greater international consensus for limiting the export and use of certain harmful surveillance technologies.

One such type of technology is commercial spyware. Between 2011 and 2023, at least 74 governments contracted with commercial firms to acquire spyware and digital forensic technologies.28 These technologies violate privacy rights and also threaten national security: In 2021, revelations emerged that foreign entities had used Pegasus, a tool for hacking mobile phones, to spy on government officials such as French President Emmanuel Macron and Pakistan’s Prime Minister Imran Khan.29

Following a public outcry amid revelations that the U.S. Federal Bureau of Investigation had purchased the software, the U.S. blacklisted NSO Group, the producer of Pegasus software, nearly bankrupting it. In March 2023, the U.S. announced rules that restrict the operational use of commercial spyware that poses a risk to national security.30 Across the Atlantic, there have been calls for the EU to implement a moratorium on commercial spyware.31 In March 2023, the Biden Administration issued a joint statement with the governments of Australia, Canada, Costa Rica, Denmark, France, New Zealand, Norway, Sweden, Switzerland, and the United Kingdom on their efforts to prevent the proliferation and misuse of commercial spyware.

While these were promising steps, the U.S. has not fully implemented its own commitments. Despite its prohibition on the use of commercial spyware, the U.S. government blacklist is spotty and agencies such as the Drug Enforcement Administration continue to use spyware tools created by foreign companies.32 The U.S. should lead by example and enact a truly government-wide moratorium on commercial spyware; otherwise, these declarations and statements ring hollow. In doing so, the U.S. would have the opportunity to shape global cyber norms and rally foreign partners to implement an international moratorium on the exportation, sale, and use of spyware. While autocratic nations are unlikely to endorse such a moratorium, developing, non-aligned nations are frequently the victims of spyware and may be inclined to adopt this norm if engaged.

5. Redistribute Data Value to Rebalance the Data Protection Equation

Central to the debate on data protection and data sovereignty is the question of ownership. Who should own the data, and therefore the value, generated by individuals? As it stands now, those who create data do not own it. In order to access most digital services, users relinquish their right to ownership per the service’s terms and conditions. This is by design. The business models of several of the largest technology companies depend on big data. These companies generate vast revenues from the personal data of their product users, who provide that data freely and see no share of the value it affords. Large language models (LLMs) are the latest example of this imbalance; training an advanced LLM requires hundreds of gigabytes of text found on millions of websites. The creators of this text receive no compensation for its use.

The users who generate big data should have rights and be entitled to compensation for its use. One mechanism to do this could be a fund that allocates a “data dividend” to individuals. Modeled after Alaska’s Permanent Fund, a state-owned corporation that issues every Alaskan an annual payment drawn from the proceeds of the state’s oil revenues, a permanent data fund would pay every resident of a jurisdiction an annual payment for the value of the data they create and that tech companies use.33 National and even state governments should create data funds, capitalizing them with fees levied on large tech companies.34

Other proposals envision new guilds, unions, or public coalitions that could collectively bargain with Big Tech companies over compensation and usage rights for the data their members provide.35 Because the data of one user is virtually worthless but the data of many together is valuable, these proposals would require users to collectively organize and bargain to have any power in the data economy.36 The responsibility of collective bargaining could be taken on by existing unions or civil society organizations that have the experience needed to negotiate with Big Tech multinationals.

Next Steps: The Future of the Digital Futures Task Force

In 2024, we plan to reconvene the Digital Futures Task Force and expand its membership to include more participants from the developing world and more experts of three types: (1) lawyers, who understand the legal implications and constraints imposed by emergent technologies on shared conceptions of sovereignty and citizenship; (2) technologists, who understand the possibilities and limitations of emergent capabilities and utilities; and (3) ethicists, who understand the social, psychological, and moral ramifications of emergent technologies. These three disciplines (law, engineering, and ethics) are essential for developing a sociotechnical approach to digital governance.

The task force will narrow its focus to two areas where acute power asymmetries, particularly between wealthy and developing nations, are especially consequential for the digital future.

The first is global AI governance. There are few multi-stakeholder processes aimed at creating frameworks and institutions for governing AI; even fewer include representation from much of the developing world. As an example, one international assessment seeking to inform global governance models for artificial general intelligence surveyed 55 AI leaders, not one of whom was from Africa, South Asia, or Latin America.37 Our plan is to change the conversation by changing who is at the table talking about what is next. The task force will bring a geographically diverse set of views together to provide a better understanding of how developing nations experience AI and what their priorities are for its governance. In this way, we aim to help broaden global engagement in the AI governance conversation.

The second area the task force will address is the global battle over data protection and data access playing out in the developing world. The efforts of U.S. and Chinese companies to control the data, eyeballs, and network infrastructure in developing countries are undermining national sovereignty and driving conflict. The EU, India, and Russia, meanwhile, are carving out their own lanes in this race for influence over how emergent technologies are governed in developing economies. A detailed, region-by-region and country-by-country picture of this frontline in global cyberpolitics is needed, especially in four areas:

- Data Sovereignty: Who is laying claim to a country’s data? And how?

- Data Protection: Who is supplying a country with surveillance technologies, big data tools, and the computing resources used in security and public safety?

- Data Access: How do foreign countries and companies influence the parameters of internet access in a given country?

- Agenda Setting: Who is influencing a nation’s decision-making when it comes to legislation and policy surrounding digital technologies? And how?

Big picture: The challenge we’ve set for ourselves is to contribute to the dispersed global effort to bring about more principled stewardship of the digital domain. The question of how to conceptualize sovereignty is the backbone of many conversations about the future of the digital domain. The struggles we are now experiencing over how to think about and define so-called Rubicon thresholds in the digital domain will have implications for the future of the open internet, cybersecurity, AI, and the balance of power in the digital domain between technopolies and nations, citizens and governments, and wealthy and developing economies. We know that global institutions and fora are bound to proliferate; that is a good thing. We need more public debate, not less, if we want to get a handle on the digital future.

Citations

- Tim O’Reilly, “Why It’s Too Early to Get Excited about Web3,” O’Reilly Media, December 13, 2021, source; Steve Lohr, “At Tech’s Leading Edge, Worry About a Concentration of Power,” New York Times, September 26, 2019, source.

- O’Reilly, “Why It’s Too Early to Get Excited about Web3”; Lohr, “At Tech’s Leading Edge.”

- Cade Metz and Mike Isaac, “In Battle Over A.I., Meta Decides to Give Away Its Crown Jewels,” New York Times, May 18, 2023, source.

- Steven Feldstein, Government Internet Shutdowns Are Changing. How Should Citizens and Democracies Respond? (Washington, DC: Carnegie Endowment for International Peace, March 31, 2022), source.

- Freedom on the Net 2022: Countering an Authoritarian Overhaul of the Internet (Washington, DC: Freedom House, October 2022), source.

- Justin Sherman, Reframing the U.S.-China AI ‘Arms Race’ (Washington, DC: New America, March 6, 2019), source.

- Anne-Marie Slaughter and Fadi Chehadé, “AI’s Pugwash Moment,” Project Syndicate, July 24, 2023, source.

- The Santa Clara Principles on Transparency and Accountability in Content Moderation, source.

- Timnit Gebru, Emily M. Bender, Angelina McMillan-Major, and Margaret Mitchell, “Statement from the Listed Authors of Stochastic Parrots on the ‘AI Pause’ Letter,” DAIR Institute, March 31, 2023, source.

- Jessica Fjeld, Nele Achten, Hannah Hilligoss, Adam Nagy, and Madhulika Srikumar, Principled Artificial Intelligence: Mapping Consensus in Ethical and Rights-based Approaches to Principles for AI (Cambridge, MA: Berkman Klein Center for Internet & Society, January 2020), source.

- The Presidio Recommendations on Responsible Generative AI (Geneva, Switzerland: World Economic Forum, June 2023), source.

- Lewin Schmitt, “Mapping Global AI Governance: A Nascent Regime in a Fragmented Landscape,” AI and Ethics 2 (2022): 303–314, source.

- Alex Engler, The EU and U.S. Diverge on AI Regulation: A Transatlantic Comparison and Steps to Alignment (Washington, DC: Brookings Institution, April 25, 2023), source.

- Ian Bremmer and Mustafa Suleyman, “The AI Power Paradox,” Foreign Affairs, August 16, 2023, source.

- Lewis Ho et al., “International Institutions for Advanced AI,” DeepMind, July 11, 2023, source.

- For background and more information, see the website for the Pugwash Conferences on Science and World Affairs: source.

- Alison Kraft and Carola Sachse, “The Pugwash Conferences on Science and World Affairs: Vision, Rhetoric, Realities,” in Science, (Anti-)Communism and Diplomacy: The Pugwash Conferences on Science and World Affairs in the Early Cold War, ed. Alison Kraft and Carola Sachse (Boston, MA: Brill, 2020), 1–39, source.

- “Pugwash Conferences on Science and World Affairs: Facts,” Nobel Prize, source.

- Edmund L. Andrews, “China and the United States: Unlikely Partners in AI,” Stanford University Human-Centered Artificial Intelligence, March 16, 2022, source; Cameron F. Kerry, Joshua P. Meltzer, and Matt Sheehan, Can Democracies Cooperate with China on AI Research? (Washington, DC: Brookings Institution, January 2023), source.

- Kyle Hiebert, “Tech-Fuelled Inequality Could Catalyze Populism 2.0,” Centre for International Governance Innovation, October 19, 2022, source.

- Bill Snyder and Chris Witteman, “The Anti-Competitive Forces that Foil Speedy, Affordable Broadband,” Fast Company, March 29, 2019, source.

- UN General Assembly, “Road map for digital cooperation: implementation of the recommendations of the High-level Panel on Digital Cooperation: Report of the Secretary-General,” 74th session, May 29, 2020, source.

- European Commission, “Satellite Broadband,” source.

- Ben Tarnoff, Internet for the People (New York: Verso, 2022), 41–42.

- Ho et al., “International Institutions for Advanced AI,” source.

- Belmont Forum, Annual Report 2022 (Montevideo, Uruguay: Belmont Forum, 2022), source.

- Mark Muro, Julian Jacobs, and Sifan Liu, Building AI Cities: How to Spread the Benefits of an Emerging Technology Across More of America (Washington, DC: Brookings Institution, July 20, 2023), source.

- Steven Feldstein and Brian (Chun Hey) Kot, Why Does the Global Spyware Industry Continue to Thrive? Trends, Explanations, and Responses (Washington, DC: Carnegie Endowment for International Peace, March 14, 2023), source.

- Sabrina Tavernise (host), “The U.S. Banned Spyware — and Then Kept Trying to Use It,” The Daily (podcast), May 15, 2023, source.

- “Executive Order on Prohibition on Use by the United States Government of Commercial Spyware that Poses Risks to National Security,” The White House, March 27, 2023, source; Sabrina Tavernise (host), “The U.S. Banned Spyware — and Then Kept Trying to Use It,” The Daily (podcast), May 15, 2023, source.

- “EU Final Vote on Spyware Inquiry Must Lead to Stronger Regulation,” Amnesty International, June 15, 2023, source.

- Mark Mazzetti, Ronen Bergman, and Matina Stevis-Gridneff, “How the Global Spyware Industry Spiraled Out of Control,” New York Times, December 8, 2022, source.

- Angel Au-Yeung, “California Wants To Copy Alaska And Pay People A ‘Data Dividend,’ Is It Realistic?” Forbes, February 14, 2019, source.

- Barath Raghavan and Bruce Schneier, “Artificial Intelligence Can’t Work Without Our Data,” Politico Magazine, June 29, 2023, source.

- Matt Prewitt, “A View of the Future of Our Data,” Noema Magazine, February 23, 2021, source.

- Jaron Lanier, “There Is No AI,” New Yorker, April 20, 2023, source.

- “Transition from Artificial Narrow Intelligence to Artificial General Intelligence,” The Millennium Project, April 12, 2021, source.