The Rise of Neurotech and the Risks for Our Brain Data

Table of Contents

- Introduction

- Brain-Computer Interfaces: Fundamentals and Applications in Commercial and Medical Contexts

- Privacy and Security Challenges

- Current Legislative Landscape and Proposed Regulatory Approaches

- Proposed Approaches for Data Protection

- The Path Forward: Balancing Security, Ethics, and Regulation

Abstract

Until recently, controlling your environment through thought was the realm of science fiction. Today, brain-computer interfaces are turning that vision into reality, enabling people to communicate, interact with digital devices, and even regain mobility through neural signals. While these advancements hold transformative potential, especially for individuals with speech and motor impairments, they also present serious privacy and security risks. Brain-computer interfaces capture neural data, some of the most intimate and personal information imaginable, yet there is little regulatory oversight governing their use outside of medical contexts.

This report explores the emerging threats posed by commercial and consumer brain-computer interfaces, from data exploitation and manipulative behavioral profiling to cybersecurity vulnerabilities that could allow unauthorized access to neural activity. It also examines the gaps in U.S. regulatory frameworks, where the Food and Drug Administration’s jurisdiction over medical devices fails to extend to commercial neurotechnology, leaving consumers exposed to unchecked data collection. State-level efforts in California and Colorado signal a growing recognition of these risks, but without a unified national approach, inconsistencies in legal protections persist. To address these challenges, this report proposes a comprehensive regulatory framework emphasizing secure-by-design principles, manufacturer accountability, and stronger legal protections for neural data privacy. As brain-computer interface adoption accelerates, policymakers, technologists, and legal experts must work together to ensure these technologies are developed responsibly while protecting both innovation and fundamental rights in the process.

Acknowledgments

I would like to express my gratitude to Lauren Zabierek, Peter Singer, and Bridget Chan for their valuable feedback and insights on this report. Their expertise and thoughtful contributions have been instrumental in refining the analysis and recommendations presented here. Their time and support are greatly appreciated.

Editorial disclosure: The views expressed in this report are solely those of the author and do not reflect the views of New America, its staff, fellows, funders, or board of directors.

Introduction

Until recently, the idea of someone controlling their environment through thought was confined to science fiction. But technological advancements have shifted this concept into the realm of possibility. Today, humans can harness electrical signals produced by brain activity to interact with, influence, and modify their surroundings. The rapidly evolving field of brain-computer interface (BCI) technology offers transformative potential, particularly for individuals with speech and mobility impairments, by allowing them to communicate or operate assistive devices through thought alone. The potential applications are vast. One example is restoring speech for individuals like Casey Harrell, who was overjoyed to communicate with his family again after losing his ability to speak due to amyotrophic lateral sclerosis (ALS).1

The integration of BCIs into everyday life raises significant privacy and security concerns. As companies gather and analyze the resulting data, often without explicit consent or knowledge from users, the potential for misuse or unintended consequences becomes pressing. The platforms that utilize BCIs will capture and record individuals’ neural activity data at an unprecedented scale as researchers continue to integrate the power of BCI technologies into more applications.

When neural data is collected from a BCI device, companies can analyze it to infer information that users never volunteered and that is unrelated to the device’s function. The neural activity data captured by these devices is deeply personal and, in many cases, could reveal intimate details about an individual’s thoughts, emotions, and cognitive states. This concern is exacerbated by the current lack of comprehensive federal regulation governing the use of BCIs outside of medical contexts, as well as the absence of robust data privacy and security laws.

While the U.S. Food and Drug Administration (FDA) regulates medical devices, including those incorporating BCI technology for therapeutic purposes, its rulemaking authority does not extend to commercial uses of BCI technology.2 The FDA’s guidance on cybersecurity protections for medical devices does not apply in the context of commercial neurotechnology applications because those are typically categorized as consumer electronics rather than medical devices.3 This leaves a significant gap in protections for consumer neurotechnology products, making their users particularly vulnerable to privacy breaches and data exploitation.

Companies like Snap and Apple are exploring BCI applications for consumer use, from enhancing augmented and virtual reality experiences to developing new communication tools.4 These companies are actively researching and developing BCI technologies, often leveraging the neural data they collect to refine their products and services. Beyond what consumers expect, the data collected through neurotechnology applications could be used for more troubling purposes, such as manipulative behavioral profiling, unauthorized cognitive monitoring, or even influencing decision-making processes. In more extreme cases, there is potential for misuse in surveillance, law enforcement, and military contexts.

The need for regulation becomes even more urgent considering the legislative landscape. While several comprehensive federal privacy bills that could include BCI oversight have been introduced in Congress, none have been enacted into law. Some states are beginning to address these concerns: Recent legislation in Colorado and California specifically targets neurotechnology, imposing restrictions on the collection and use of neural data.5 Colorado’s legislation requires companies to obtain explicit consent before collecting neural data and provides users with the right to access and delete their data. Similarly, California’s legislation classifies neural data as sensitive personal information under its consumer privacy law, emphasizing transparency and user control. These state-level initiatives highlight a growing recognition of the need to protect individuals’ neural privacy and may serve as a model for future federal regulations.

Nonetheless, without a unified national framework, inconsistencies in legal protections across states could leave many consumers vulnerable. In addition to regulatory measures, efforts to enhance public education and awareness of BCI technology are crucial to ensuring that individuals understand how their neural data is collected, used, and protected.

To address these challenges, it is imperative to establish a regulatory framework that encompasses all BCI technologies, regardless of their intended use. This framework should ensure that consumers are fully informed about how their neural data is collected, used, and protected. It should also establish clear guidelines for companies on data handling and set stringent penalties for violations. The framework should include guidelines for secure software development, ensuring that BCI technologies are built and maintained with strong cybersecurity measures. Additionally, appropriate regulatory bodies should be designated to oversee the implementation and enforcement of these regulations.

Given its expertise in medical device regulation, the FDA could play a central role in this oversight, possibly in conjunction with other agencies like the Cybersecurity and Infrastructure Security Agency (CISA) and the Federal Trade Commission (FTC), which is experienced in consumer protection and data privacy issues. This holistic approach would help safeguard user data and maintain public trust in these emerging technologies.

To address the challenges and opportunities presented by neurotechnology, this report explores the key areas essential for establishing a robust regulatory framework. The next section covers the fundamentals of BCIs and their applications in both medical and commercial contexts. The report then analyzes the privacy and security challenges unique to BCI technology, highlighting the ethical concerns and potential risks associated with neural data collection and usage. Building on these insights, it evaluates the current legislative landscape, including state-level initiatives and gaps in federal oversight. Finally, the report proposes a comprehensive regulatory approach that incorporates secure-by-design principles, defines clear accountability for software manufacturers, and establishes a unified federal framework to ensure consumer protection while fostering innovation. Together, these elements are intended as a roadmap for policymakers, technologists, and stakeholders navigating the evolving landscape of neurotechnology regulation.

Citations

- “Brain-Computer Interface Allows Man with ALS to ‘Speak’ Again,” Brown University News, August 14, 2024, source; Jianan Chen et al., “fNIRS-EEG BCIs for Motor Rehabilitation: A Review,” Bioengineering 10, no. 12 (December 6, 2023): 1393, source; Daniel Feit, “Hands On: NeuroBoy, a Game You Play With Your Brain,” WIRED, October 1, 2009, source.

- Neuromodulation and Physical Medicine Devices/Acute Injury Devices Team, Implanted Brain-Computer Interface (BCI) Devices for Patients with Paralysis or Amputation: Non-Clinical Testing and Clinical Considerations (U.S. Food and Drug Administration, May 20, 2021), source.

- Center for Biologics Evaluation and Research, Cybersecurity in Medical Devices: Quality System Considerations and Content of Premarket Submissions (U.S. Food and Drug Administration, September 2023), source.

- Sissi Cao, “Snap’s Latest Acquisition Is a Bet on a Metaverse Controlled By Thoughts,” Observer, March 24, 2022, source; Synchron, “Synchron Announces First Use of Apple Vision Pro with a Brain Computer Interface,” Business Wire, July 30, 2024, source.

- “HB24-1058: Protect Privacy of Biological Data,” Colorado General Assembly, April 17, 2024, source; “SB 1223: Consumer Privacy: Sensitive Personal Information: Neural Data,” California State Legislature, September 28, 2024, source.

Brain-Computer Interfaces: Fundamentals and Applications in Commercial and Medical Contexts

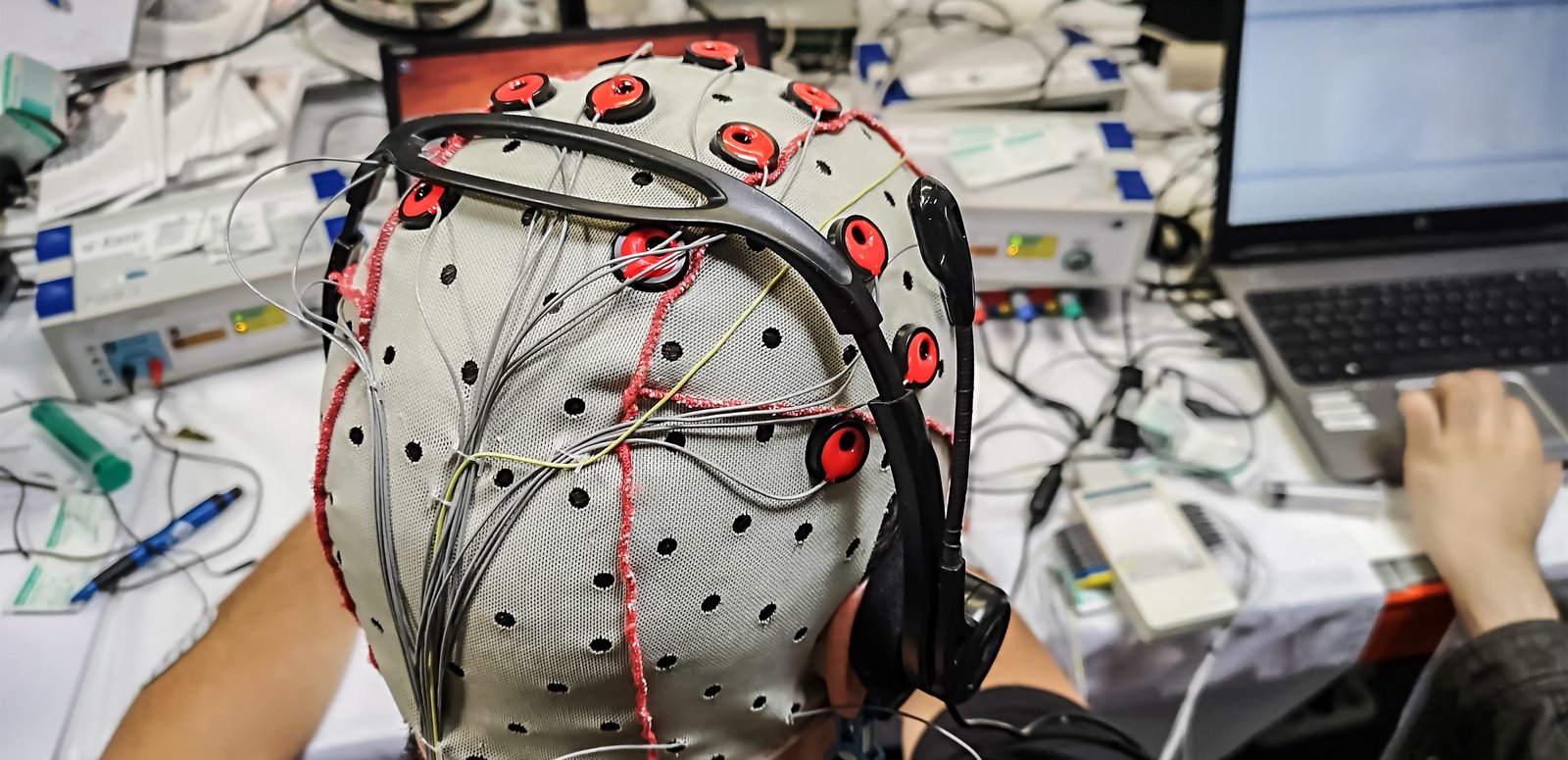

Brain-computer interfaces (BCIs) represent a transformative leap in technology, bridging the gap between the human brain and external devices. At their core, BCIs facilitate direct communication between the brain and computers by leveraging neural signals, which are either recorded through noninvasive methods like electroencephalography (EEG) or invasive techniques involving implants, to interpret and act upon the user’s thoughts and intentions.

BCIs can be broadly classified into three categories: invasive, partially invasive, and noninvasive.1 Invasive BCIs involve the surgical implantation of electrode arrays directly into the brain. This method requires intricate and invasive surgery to position the electrodes near the target neurons, and it typically delivers more precise results than noninvasive alternatives. Partially invasive BCIs, such as those utilizing electrocorticography (ECoG), involve implanting electrodes within the skull or on the brain’s surface. This method serves as a middle ground between invasive and noninvasive BCIs, balancing signal quality and invasiveness. Lastly, noninvasive BCIs, which include techniques such as EEG and functional magnetic resonance imaging (fMRI), do not require surgical intervention. These BCIs detect the activity of larger groups of neurons through devices that do not penetrate the skull, and they provide less precision compared to invasive methods.

The varying levels of invasiveness in BCIs offer a spectrum of options, accommodating different applications and needs while shaping the future of human-computer interaction and neurotechnology. However, these distinctions also raise important regulatory questions. Invasive BCIs, which require surgical implantation, often fall under medical device regulations, whereas noninvasive and partially invasive BCIs—particularly those marketed for consumer use—may not be subject to the same oversight. This regulatory gap highlights the need for clearer standards that address the unique risks associated with each category. The potential for harm varies across these categories, with invasive BCIs posing risks related to surgical procedures and device integration, while noninvasive BCIs present privacy and cybersecurity concerns due to the scale of data collection. Further research is needed to explore the intersection of these categories, develop appropriate regulatory frameworks, and establish standards that mitigate the risks associated with each type of BCI.

The potential applications of BCIs are vast, from enhancing communication and control for individuals with disabilities to creating new forms of human-computer interaction in everyday life. As BCIs continue to develop, they are poised to revolutionize both medical treatments and commercial products, offering unprecedented access to the intricacies of the human mind.

Medical Applications

In the medical field, BCIs have garnered significant attention for their potential to restore and enhance functions in individuals with neurological impairments. One of the most promising applications is in aiding individuals with severe motor disabilities, such as those resulting from spinal cord injuries, amyotrophic lateral sclerosis (ALS), or strokes. For instance, BCIs can enable users to control prosthetic limbs or computer cursors directly with their thoughts, providing a newfound level of independence and interaction with their environment.2

BCIs also serve as communication aids for patients who have lost the ability to speak. By decoding neural signals associated with speech planning, these systems can translate intended words into synthesized speech. This is especially valuable for patients with conditions like ALS, who may lose the ability to speak due to nerve or muscle degeneration while retaining full cognitive function and a desire to communicate.3 Additionally, BCIs are used to monitor and treat neurological conditions such as epilepsy and Parkinson’s disease, providing real-time data and enabling interventions that can alleviate symptoms or manage disease progression.

The life-changing potential of BCIs in medical settings is clear, offering enhanced communication for individuals with speech impairments and innovative rehabilitation methods. However, the deployment of these technologies also presents ethical and practical challenges, including safety, accessibility, and long-term implications. These concerns will be further explored in the following sections, where we will examine regulatory gaps, privacy risks, and the steps needed to ensure responsible development and use of BCIs.

Commercial Applications

Imagine you’re using a BCI device to control your smart home system. As you think about adjusting the lighting or temperature or even playing your favorite music, the BCI picks up your neural signals and executes these commands seamlessly. While doing so, the device also monitors your physiological responses—such as changes in brainwave patterns, heart rate, and other biomarkers—providing a detailed map of your emotional and cognitive states.

As you continue using the BCI for other tasks, such as browsing the internet or interacting with social media, the device gathers more data on your preferences and behaviors. It notices that you spend more time engaging with content related to certain brands or topics, and it records the neural responses associated with these interactions. Soon, advertisements for those brands and similar content begin appearing more frequently in your digital environment. This is because the data collected by the BCI, including your neural responses and engagement patterns, is analyzed and sold to marketing agencies.4 These agencies use the data to craft personalized marketing strategies that target you based on the insights gleaned from your neural activity.

While personalization may enhance user experience, it introduces serious privacy and ethical concerns, particularly when neural data is used for manipulative, coercive, or exploitative purposes. The risk of weaponized neural data mirrors broader concerns about digital surveillance and behavioral exploitation. In a 2024 article, Pavlina Pavlova explores how personal data—particularly biometric and behavioral information—has been misused to track, manipulate, and control individuals.5 Her research underscores how highly sensitive data, once collected, is often repurposed in ways users never intended, with little transparency or oversight.

A striking parallel between Pavlova’s findings and the risks posed by BCIs is the way intimate personal data can be leveraged for psychological and social manipulation. Pavlova documents how stalkerware and invasive surveillance tools have enabled perpetrators to track victims’ behaviors, monitor their interactions, and exert control over their decisions. BCIs introduce an even more concerning possibility—instead of merely tracking behaviors, they capture real-time cognitive responses, giving companies, governments, or bad actors the ability to interpret and predict thoughts before they are even consciously expressed. This creates new vulnerabilities for coercive influence, neuro-surveillance, and cognitive manipulation, particularly in high-risk populations such as activists, journalists, and marginalized groups.

The depth and scope of personal information that can be extracted from BCI data are far more extensive than one might expect from simply using the technology for convenience or entertainment. BCIs can reveal not only your immediate preferences but also deeper psychological states, potentially exposing intimate aspects of your personality and mental health. In workplace settings, BCIs are being developed to monitor cognitive states, track focus, detect fatigue, and assess stress levels in employees.6 While proponents argue that such tools could improve productivity and well-being, they also raise concerns about workplace surveillance, autonomy, and employer control over mental states. If employers have access to real-time neural data, workers may face pressure to optimize their cognitive performance at the cost of privacy and personal agency. Without clear regulations, neural monitoring in workplaces could blur the lines between productivity enhancement and invasive oversight. The commodification of such sensitive data could lead to its exploitation by companies and government entities for purposes even beyond consumer profiling.7

BCIs are making significant strides in commercial markets, transforming how consumers interact with technology. In gaming and entertainment, BCIs offer immersive experiences by allowing users to control game elements or virtual environments through thought alone.8 This capability extends to virtual reality and augmented reality systems, enhancing user experiences with more intuitive and natural interactions. Companies like Neuralink are pushing the boundaries by developing interfaces that integrate seamlessly with computers and digital devices, promising a future where controlling devices by thought is a part of everyday technology use.9

As BCIs become more prevalent in commercial settings, it is important to consider the implications for consumer rights and privacy. The potential for misuse of neural data in commercial applications, such as targeted advertising or unauthorized data collection, underscores the need for robust regulatory frameworks. These frameworks must ensure that companies are transparent about their data practices and provide consumers with control over their neural data. Additionally, as the technology advances, there is a need for ongoing public education and dialogue about the ethical and societal implications of BCIs in commercial contexts.

Citations

- Gabriel G. De la Torre et al., “Wireless Computer-Supported Cooperative Work: A Pilot Experiment on Art and Brain–Computer Interfaces,” Brain Sciences 9, no. 4 (April 25, 2019): 94, source.

- Avery Watkins, “Restoring Amputees’ Natural Functionality with Brain-Controlled Interfaces,” MIT News, July 13, 2021, source; Yijun Wang et al., “A P300-Based BCI System for Controlling Computer Cursor Movement,” Proceedings of the 2011 Annual International Conference of the IEEE Engineering in Medicine and Biology Society, August 2011, source.

- Yijun Wang et al., “A P300-Based BCI System for Controlling Computer Cursor Movement,” Proceedings of the 2011 Annual International Conference of the IEEE Engineering in Medicine and Biology Society, August 2011, source.

- Daniel Berrick, “BCI Commercial and Government Use: Gaming, Education, Employment, and More,” Future of Privacy Forum Blog, February 8, 2022, source.

- Pavlina Pavlova, “The Digital War on Women: Sexualized Deepfakes, Weaponized Data, and Stalkerware That Monitors Victims Online,” Ms. Magazine, November 21, 2024, source.

- David J. Lynch and Jacob Ward, “What Brain-Computer Interfaces Could Mean for the Future of Work,” Harvard Business Review, October 1, 2020, source.

- Sasha Burwell, Matthew Sample, and Eric Racine, “Ethical Aspects of Brain Computer Interfaces: A Scoping Review,” BMC Medical Ethics 18, no. 60 (November 9, 2017), source.

- Louise Poirier, “Video Games Offer Brain-Computer Interface Training Ground,” American Society of Mechanical Engineers, May 16, 2024, source.

- “PRIME Study Progress Update,” Neuralink Blog, April 2024, source.

Privacy and Security Challenges

Brain-computer interfaces (BCIs) can access neural signals directly from an individual’s brain and potentially translate these signals into data without that person’s explicit consent. This capability poses significant privacy concerns, primarily because the data extracted can reveal intimate information about a person’s thoughts, emotions, and subconscious states. As noted, BCI use is especially concerning in workplace and consumer settings, where the technology might be employed under less stringent ethical standards. For example, China has reportedly deployed BCI technology to detect changes in the emotional states of employees on the production line.1

Privacy risks are exacerbated by the depth of information available from neural data, which could be more revealing and personal than any data collected through conventional means. Marketers might exploit emotional or cognitive information to tailor advertisements in real time, manipulating consumer behavior more effectively and intrusively than ever before. In an article published by the audience measurement company Nielsen, the authors noted that by “using electroencephalography, we’re able to tell, second by second, what parts of the commercial elicit a response from the viewer, including what in particular is catching the attention and activating the memory of the viewer.”2

This level of insight into a viewer’s brain activity has troubling potential for manipulation, behavioral conditioning, and the erosion of personal autonomy. Unlike traditional digital marketing, which relies on engagement tracking and browsing behavior, BCIs provide direct access to subconscious cognitive and emotional responses, creating an entirely new frontier for behavioral targeting. Advertisers, political strategists, and technology companies could leverage this data to develop hyper-personalized content that not only responds to user preferences but actively shapes them over time.

The ability to analyze and exploit neural activity introduces significant ethical concerns. If companies can detect which types of content trigger emotional responses such as excitement, fear, or trust, they can optimize not just ad placement but the neurological impact of the content itself. This could lead to a feedback loop where users are unknowingly conditioned to respond more strongly to specific emotional triggers, making them more susceptible to targeted persuasion, compulsive engagement, and even ideological manipulation.

Beyond commercial applications, the potential for coercion and psychological influence through neural data analysis presents broader societal risks. Governments, interest groups, and political campaigns could use real-time neural feedback to refine messaging strategies that subtly nudge individuals toward specific attitudes or beliefs. The ability to prime emotional responses before conscious decision-making occurs could be exploited to influence voting behavior, shape public opinion, and reinforce ideological divides, all while bypassing traditional forms of cognitive resistance.

The lack of regulatory safeguards surrounding this technology makes these risks even more urgent. Without strict legal protections, there are no clear restrictions preventing companies from storing, analyzing, or selling neural response data, nor are there established guidelines limiting how deeply artificial intelligence (AI) models can interpret and manipulate cognitive states. If left unchecked, neuro-targeting could become one of the most invasive forms of psychological influence ever deployed in digital spaces, allowing corporations and institutions to alter consumer behavior, political preferences, and emotional states without users ever realizing the extent of external influence.

Cybersecurity Threats

BCI technologies face unique cybersecurity threats, as stolen neural data could not only compromise personal privacy but also exacerbate risks related to national security, economic stability, and digital safety.3 Neural data is a prime target for cyberattacks, as it provides valuable insights into an individual’s mental and emotional state, cognitive functions, and decision-making processes. If hacked or misused, this data could be exploited in ways that extend far beyond individual harm, affecting government operations, financial markets, and public health systems.

From a national security perspective, adversarial nations or criminal organizations could use stolen neural data to manipulate individuals in sensitive government, military, or corporate positions. The ability to map cognitive patterns, detect psychological weaknesses, or influence neural responses could be exploited for blackmail, psychological warfare, and even cognitive hacking, where external actors attempt to subtly alter thought patterns or decision-making processes over time.

The economic risks are also profound. BCI-generated insights could become a highly valuable commodity, leading to an underground market where businesses and malicious entities might trade in stolen neural profiles. The unauthorized use of such data could fuel insider trading, corporate espionage, and fraudulent financial activity, as businesses or investors gain access to real-time cognitive responses to market trends, corporate strategies, or high-stakes negotiations.

From a public health and safety perspective, compromised BCI systems could pose severe risks for medical patients relying on neurotechnology for essential functions, such as prosthetic control, communication assistance, or cognitive therapy. A cyberattack targeting neural implants or cloud-based BCI platforms could disable medical functions, manipulate cognitive states, or even induce distressing neurological effects. Such vulnerabilities raise urgent questions about the need for stronger cybersecurity protections, fail-safe mechanisms, and regulatory oversight in the deployment of BCI technology.

As BCI adoption accelerates, clear cybersecurity frameworks and enforcement mechanisms must be established to protect against these growing risks. Without proactive intervention, BCI technology could become a prime vector for cyberattacks that threaten not only individual users but also global stability in security, economic, and public health domains.

Neuronal Flooding and Neuronal Scanning Cyberattacks

While BCI technology has demonstrated immense potential in medical applications, such as neurostimulation for treating Parkinson’s disease, epilepsy, and depression, these same advancements could also be exploited for harm. Researchers have studied various neuro-attacks that could manipulate brain activity, cognitive functions, and even emotional states, indicating that the very mechanisms designed to restore function could also be used to disrupt it.

One study explored two types of attacks on neural systems: neuronal flooding (FLO) and neuronal scanning (SCA). The FLO attack involves overloading a specific group of neurons at once, while the SCA attack targets individual neurons to disrupt normal activity.4 The findings highlight the dual-use nature of BCI technology—while BCIs can improve quality of life for patients with neurological disorders, adversaries could theoretically exploit these same systems to mimic or induce the symptoms of neurological diseases. In a later study, researchers proposed that similar attacks could theoretically replicate the effects of neurodegenerative diseases like Parkinson’s and Alzheimer’s and suggested that if attackers were able to access BCI-connected neurostimulation systems, they could artificially trigger tremors, memory loss, or cognitive impairment, raising ethical and security concerns over the potential for targeted neurological sabotage.5

Beyond the medical field, this dual-use risk extends to other BCI-integrated systems, such as advertising, smart home control, and military applications. The ability to track, interpret, and influence neural responses is already being leveraged to enhance human-computer interaction, optimize user engagement, and automate cognitive decision-making. However, these same advancements also expand the attack surface for adversaries looking to manipulate thoughts, behaviors, and actions.

This follows a broader pattern seen in cyber warfare and information operations, where innovations originally developed for commercial or personal convenience, including internet of things (IoT) devices, social media algorithms, and biometric authentication systems, have later been exploited for misinformation campaigns, cyberattacks, and digital surveillance. If BCI systems become widely adopted for decision-making, productivity enhancement, or communication, they may become new targets for cyber-enabled influence operations, potentially allowing attackers to introduce false sensory inputs, alter emotional responses, or distort an individual’s perception of reality.

Citations

- Phillip Tracy, “China Is Using Brain-Scanning Hats to Track Workers’ Emotions,” Daily Dot, April 30, 2018, source.

- Michael Smith and Carl Marci, “From Theory to Common Practice: Consumer Neuroscience Goes Mainstream,” Nielsen, July 2016, source.

- Sergio López Bernal, Alberto Huertas Celdrán, and Gregorio Martínez Pérez, “Eight Reasons to Prioritize Brain-Computer Interface Cybersecurity,” Communications of the Association for Computing Machinery 66, no. 4 (April 2023): 68–78, source.

- Sundararajan Karthikeyan, “Cyberattacks on Miniature Brain Implants to Disrupt Spontaneous Neural Signaling,” IEEE Consumer Electronics Magazine 9, no. 6 (November 2020): 35–41, source.

- Sergio López Bernal, Alberto Huertas Celdrán, and Gregorio Martínez Pérez, “Security in Brain-Computer Interfaces: State-of-the-Art, Opportunities, and Challenges,” Association for Computing Machinery Computing Surveys 54, no. 1 (January 2021): 1–35, source.

Current Legislative Landscape and Proposed Regulatory Approaches

While solving the technical challenges of brain-computer interface (BCI) technology is important, it does not resolve the accompanying ethical and legal uncertainties. For example, many of these technologies are developed and sold by private tech companies rather than medical professionals, raising the question of whether they are subject to regulations like the Health Insurance Portability and Accountability Act (HIPAA), which governs medical data. This ambiguity highlights the need for clearer regulatory frameworks, as BCIs currently operate in a “gray area” where the line between consumer technology and health care is blurred, leaving significant gaps in oversight and accountability.

A Lack of Comprehensive Federal Regulation

The legal landscape surrounding BCI technology in the United States is currently fragmented and lacks comprehensive federal regulation, especially outside the medical context. The regulatory gap for consumer neurotechnology products leaves a vast area vulnerable to potential exploitation and misuse, as the market for nonmedical BCIs continues to expand and these devices may be used for wide-ranging purposes such as entertainment, wellness, or personal enhancement.

There is an unresolved legal question regarding whether the Food and Drug Administration (FDA)—or any federal agency—has the authority to regulate nonmedical BCIs without explicit congressional authorization. This uncertainty has been reinforced by the Supreme Court’s decision in West Virginia v. Environmental Protection Agency (2022), which limited federal agency power by ruling that agencies cannot regulate major economic and political issues without clear congressional authorization. This ruling applied the major questions doctrine, meaning that if a regulatory issue is of significant national importance, agencies like the FDA cannot unilaterally assert authority unless Congress has explicitly granted them the power to do so.

Congress has not yet passed legislation explicitly granting the FDA, Federal Trade Commission, or any other federal agency jurisdiction over consumer BCIs. If federal legislators do not act to explicitly authorize regulation, the FDA may not be able to extend its authority to these products, leaving them to be developed and marketed with minimal scrutiny. Without federal oversight, there is a risk that consumer BCI products could be sold with misleading claims, inadequate security protections, and unclear data privacy standards. This regulatory gap highlights the urgency of legislative action to ensure that neurotechnology is not left unregulated in areas where it could pose risks to consumers.

State-Level Efforts to Fill the Regulatory Void

Because of the legal uncertainty surrounding whether the federal government can regulate nonmedical BCIs, much of the responsibility for addressing neural data privacy and security has shifted to individual states. Some states have begun to develop their own legal frameworks for BCI oversight. Notably, Colorado and California have pioneered legislation specific to neural data. Colorado’s laws are the strongest to date, requiring explicit consent for data collection and granting users rights to access and delete their neural data. In August 2024, the California Legislature passed SB 1223, which was signed into law that September and amends the California Consumer Privacy Act to include neural data as a type of sensitive data.1

These state-level initiatives are significant as they begin to fill the regulatory void left by federal inaction. However, they are not without their challenges. There are gaps in the scope of their application, particularly regarding smaller entities or individual developers who may handle neural data without falling under the purview of these regulations. Additionally, the enforcement mechanisms in place lack the necessary detail for proactive monitoring, enforcement, and auditing, which are crucial for ensuring compliance. This is compounded by the fact that current legislation may not be adaptable enough to address future technological advancements or new methods of data manipulation, potentially leaving consumers unprotected against emerging threats.

California’s SB 1223 also excludes inferences made from “non-neural information” from the definition of “neural data,” but because “non-neural information” is not clearly defined, it is unclear what qualifies as neural data. If non-neural information refers in this context to anything not produced by the central or peripheral nervous system, the definition inherits the uncertainty about what exactly constitutes nervous system activity. A key debate in neuro privacy is the blurred line between muscle movements and the nervous system’s role in controlling them. Eye-tracking technology illustrates the problem: While eye movements result from muscle contractions, those muscles are controlled by cranial nerves, which are part of the peripheral nervous system. TechNet, opposing SB 1223, pointed out that systems monitoring eye movements could be considered measurements of the nervous system, underscoring the uncertainty around what legally counts as neural data.2

This ambiguity points to the need for more research and the development of standards. Without clear definitions, companies and regulators will struggle to implement effective protections, and the risk remains that certain categories of neural-adjacent data could be exploited outside the scope of existing privacy laws. Further legislative refinement is needed to ensure neural data privacy protections are comprehensive and enforceable.

Citations

- “SB 1223: Consumer Privacy: Sensitive Personal Information: Neural Data,” California State Legislature, September 28, 2024, source; California State Legislature, California Consumer Privacy Act of 2018, California Civil Code, Title 1.81.5, Sections 1798.100–1798.199, source.

- Dylan Hoffman, Ronak Daylami, and Khara Boender, “RE: SB 1223 (Becker) – Neural Data Privacy – Oppose Unless Amended,” Computer and Communications Industry Association, April 8, 2024, source.

Proposed Approaches for Data Protection

As brain-computer interface (BCI) technology continues to evolve, so too do the challenges of ensuring security, privacy, and accountability in its development and deployment. With federal regulatory uncertainty limiting oversight, this responsibility is increasingly shifting to states and industry leaders. However, without uniform standards, this fragmented approach risks inconsistencies in enforcement, loopholes in security, and inadequate consumer protections. Addressing these gaps requires a multi-faceted approach that prioritizes security at every stage of BCI development, holds manufacturers accountable for unsafe products, and ensures that regulatory solutions remain adaptable as the technology advances. Industry-driven best practices also play a crucial role in mitigating risks where formal regulation may be absent. Collaboration between policymakers, technologists, and consumer advocates will be essential to ensuring that BCI technology can evolve without exposing users to excessive privacy or security threats.

Building Security into BCI Development: Secure By Design

One of the most pressing risks associated with BCIs is the potential for security vulnerabilities due to poor software design and inadequate cybersecurity measures. Historically, many software and hardware developers have prioritized getting products to market quickly over ensuring security, resulting in devices that lack critical protections against data breaches, manipulation, and unauthorized access.

The secure-by-design approach aims to address this by integrating security features at the earliest stages of development rather than as an afterthought.1 Applied to BCIs, it means prioritizing user security and privacy throughout the entire product lifecycle, from the initial design phase to the final product. The Cybersecurity and Infrastructure Security Agency (CISA) has championed this approach as part of its broader initiative to enhance cybersecurity across various sectors. In practice, secure-by-design entails implementing security controls early in development, conducting thorough risk assessments, and continuously monitoring and updating security measures as technology evolves.

As noted earlier, unsecured-by-design products could expose users to unauthorized neural data collection, cyberattacks that manipulate brain signals, and misuse of biometric insights. Without proper safeguards, these devices could become a new vector for digital exploitation, making regulatory or industry-driven intervention critical. Security vulnerabilities should be mitigated before products reach consumers by enforcing mandatory encryption standards and requiring rigorous security testing before release.

While some companies already implement basic security practices, the reality is that many BCI developers still operate with minimal oversight. Industry-wide adoption of secure-by-design principles could reduce security risks while providing a framework for best practices that states could incorporate into legislation.

For BCIs, this means that developers must consider potential security threats from the outset, designing systems that are resilient to attacks and protect user data from unauthorized access or manipulation. In practical terms, this could involve designing BCI devices with built-in encryption for all neural data, secure authentication mechanisms to prevent unauthorized access, and regular updates to address new vulnerabilities. By embedding these security measures from the start, the risk of exploitation is significantly reduced, offering greater protection to users who entrust their most sensitive data—literally their thoughts—to these devices.
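One of the safeguards mentioned above, protecting neural data against manipulation in transit, can be sketched in a few lines. The example below is a minimal illustration under stated assumptions, not a description of how any real BCI device works: the packet layout, the function names `seal_packet` and `open_packet`, and the use of a pre-shared key are all hypothetical. It uses HMAC-SHA256 from the Python standard library to detect tampering. It does not encrypt the samples; confidentiality would additionally require a vetted authenticated-encryption library.

```python
import hashlib
import hmac
import secrets
import struct
from typing import List, Tuple

TAG_LEN = 32     # size of an HMAC-SHA256 tag in bytes
HEADER_LEN = 12  # 8-byte sequence number + 4-byte sample count


def seal_packet(key: bytes, seq: int, samples: List[float]) -> bytes:
    """Serialize a sequence number and samples, then append an HMAC-SHA256 tag."""
    body = struct.pack(f">QI{len(samples)}d", seq, len(samples), *samples)
    tag = hmac.new(key, body, hashlib.sha256).digest()
    return body + tag


def open_packet(key: bytes, packet: bytes) -> Tuple[int, List[float]]:
    """Verify the tag in constant time and return (seq, samples).

    Raises ValueError if the packet is too short or fails authentication.
    """
    if len(packet) < HEADER_LEN + TAG_LEN:
        raise ValueError("packet too short")
    body, tag = packet[:-TAG_LEN], packet[-TAG_LEN:]
    expected = hmac.new(key, body, hashlib.sha256).digest()
    if not hmac.compare_digest(tag, expected):
        raise ValueError("packet failed authentication")
    seq, count = struct.unpack(">QI", body[:HEADER_LEN])
    samples = list(struct.unpack(f">{count}d", body[HEADER_LEN:]))
    return seq, samples


if __name__ == "__main__":
    key = secrets.token_bytes(32)  # hypothetical pre-shared device key
    packet = seal_packet(key, seq=1, samples=[1.5, -0.25, 3.0])
    print(open_packet(key, packet))  # (1, [1.5, -0.25, 3.0])
```

Including a sequence number in the authenticated body gives the receiver a basis for rejecting replayed packets, although that check is left out of this sketch.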

Accountability for Software Manufacturers

One of the critical issues in the current technological landscape is the lack of accountability for manufacturers that release products with vulnerabilities.2 This gap in responsibility often leads to consumers being unwittingly exposed to significant risks, as many products enter the market without adequate security measures. As noted by CISA, “We have allowed a system where the cybersecurity burden is placed disproportionately on the shoulders of consumers…away from the producers of the technology,” highlighting the urgent need for a new model that ensures the safety and integrity of this technology.3

To address this need, legislation and regulations should establish clear liability for manufacturers and developers who fail to secure their products adequately. If a BCI device is released with easily exploitable vulnerabilities, the manufacturer should be held legally responsible for any harm that results from those flaws. This liability would act as a powerful incentive for companies to invest in rigorous security testing and validation processes before releasing their products to the public. As privacy and internet policy scholar Jim Dempsey suggests in a 2024 paper, a “rules-based floor” should be established as a minimum legal standard of care for software, which would focus on specific product features or behaviors that need to be included or avoided.4 Additionally, Dempsey advocates for a “process-based safe harbor” that shields developers from liability for hard-to-detect flaws, provided they adhere to recognized secure development practices.

Furthermore, liability should not only apply to the initial release of the product but also extend to ongoing support. Dempsey emphasizes this ongoing responsibility, suggesting that manufacturers must provide timely security updates and patches to address newly discovered vulnerabilities. Failure to do so should result in penalties or legal action, ensuring that companies remain vigilant in maintaining the security of their products over time. This approach balances the need for robust legal standards with the flexibility necessary to accommodate the complexities of software development, ultimately promoting a more secure technological environment.

Comprehensive Legislation

Consumers have the right to know how their data is being protected and what measures are in place to safeguard their privacy and security. Legislation should, therefore, grant consumers access to detailed information about the security practices of BCI devices and software. This transparency would provide consumers with the knowledge necessary to assess the risks associated with those products, empowering them to make informed decisions about their use. Additionally, the law should establish clear avenues for consumers to seek recourse if security breaches harm them. This could include the right to take legal action against manufacturers who fail to protect their data and access to compensation or support services in the event of a breach. By providing these rights, the legislation would help ensure that consumers are not left vulnerable or unsupported in the face of security failures.

Under the second Trump administration, it is likely that federal priorities will shift away from direct BCI regulation, placing greater responsibility on state governments to address neural data privacy, cybersecurity, and commercial oversight. Traditionally, there has been an expectation that emerging technologies would be addressed at the federal level, but as national agencies deprioritize consumer privacy regulations, protections will likely need to be enacted by the states.

This shift reinforces the fragmented nature of U.S. technology regulation. Without federal action, different states may implement conflicting standards, making compliance complex for businesses operating across multiple jurisdictions. While some states might enact strict neural data privacy laws, others could take a more permissive approach, allowing industry-led self-regulation to dictate standards.

The lack of federal coordination raises concerns about enforcement inconsistencies and potential loopholes in state laws that could be exploited by companies handling neural data. BCIs could become subject to widely varying standards depending on where a consumer is located, creating an uneven playing field for businesses and increasing the risk that companies will exploit regulatory gaps to bypass stricter privacy protections. State efforts would benefit from greater cross-state collaboration to establish shared principles for neural data protection. While federal legislation may not be viable, multi-state agreements could help create consistency in how BCIs are governed, ensuring that security and privacy protections apply regardless of jurisdiction.

Citations

- “Secure by Design,” Cybersecurity and Infrastructure Security Agency, U.S. Department of Homeland Security, source.

- Eduard Kovacs, “Medtronic Releases Patches for Cardiac Device Flaws Disclosed in 2018, 2019,” SecurityWeek, February 3, 2020, source.

- “Secure by Design,” Cybersecurity and Infrastructure Security Agency, U.S. Department of Homeland Security, source.

- Jim Dempsey, “Standards for Software Liability: Focus on the Product for Liability, Focus on the Process for Safe Harbor,” Lawfare, January 23, 2024, source.

The Path Forward: Balancing Security, Ethics, and Regulation

The rapid advancement of brain-computer interface (BCI) technology represents both an unprecedented opportunity and a significant regulatory challenge. As BCIs integrate more deeply into consumer and medical applications, the stakes for privacy, security, and accountability rise with them. These technologies hold immense potential to improve lives by enabling communication for individuals with disabilities, enhancing cognitive performance, and offering new frontiers in entertainment and human-computer interaction. However, without clear regulatory oversight, they also pose risks of data exploitation, manipulation, and cybersecurity threats.

At the federal level, uncertainty regarding regulatory authority has left a significant gap in oversight. The Supreme Court’s ruling in West Virginia v. Environmental Protection Agency has cast doubt on whether agencies like the Food and Drug Administration or Federal Trade Commission can regulate consumer-grade BCIs without explicit congressional authorization. As a result, the burden of regulation has shifted to individual states, with Colorado and California taking the lead in establishing legal frameworks for neural data protection. While these state-level efforts mark an important step forward, they also highlight the challenges of a fragmented regulatory landscape, where inconsistent laws create compliance difficulties and leave gaps in consumer protections.

To mitigate these risks, a multi-pronged approach is essential. The secure-by-design framework must be embedded in the development of BCI technologies to prevent security vulnerabilities before products reach consumers. Additionally, software manufacturers must be held accountable for ensuring robust security measures and providing ongoing updates to protect users from emerging threats. Furthermore, comprehensive legislation at the state level should establish clear consumer rights regarding neural data transparency, control, and legal recourse in cases of misuse. While federal regulation remains uncertain, multi-state agreements could offer a pathway toward standardized protections, ensuring that all consumers—regardless of where they live—are afforded the same level of security and privacy.

As BCIs continue to evolve, collaboration between technologists, policymakers, and legal experts will be critical in shaping a regulatory framework that balances innovation with consumer protection. Without proactive measures, the risks of neural data exploitation, cyber vulnerabilities, and ethical concerns will only escalate. Addressing these challenges today will ensure that the future of BCIs remains both secure and equitable, fostering trust in neurotechnology while safeguarding the fundamental rights of its users.