III. The Policy Imperative: Understanding the Link Between Human and Cybersecurity Vulnerabilities

When policymakers and standards developers design cybersecurity frameworks, they focus on the needs and perspectives of industry professionals. The National Institute of Standards and Technology (NIST) cybersecurity framework serves as a useful example.1 The NIST framework is a widely used but voluntary guide for cybersecurity activities and practices within organizations. Frameworks such as this are useful for guiding organizational cybersecurity protocols, but they largely overlook the human and systemic factors that could also lead to cybersecurity risks.

Scholars have studied the “human factor” and the human vulnerabilities (typically understood as mistakes or a lack of training) that lead to cybersecurity risks within companies, but this research is not prevalent in, nor meaningfully integrated into, policy frameworks. This report is not advocating for replacing technical approaches with human-centered ones; rather, it proposes adapting the risk management mindset that underpins frameworks like NIST’s to better address the human vulnerabilities this report has defined: limited digital access, skills, and literacy.

The RAND framework takes a step in that direction by recognizing the role of government and considering the interconnected concerns of various cybersecurity stakeholders. Developed in consultation with a variety of industry professionals and experts, the framework is intended to guide policymakers, and it is instructive. It identifies users as stakeholders and emphasizes “the need to provide information to users about their vulnerabilities and risks.”2 It also highlights the necessity of educating consumers on cybersecurity best practices and managing privacy. RAND acknowledged that more research is needed for cyber education and awareness in its framework—a gap this report seeks to fill, particularly by integrating insights from digital divide policy.

Rather than focusing broadly on cyber education and awareness, policymakers can draw on the existing body of research and literature from digital divide policy. Both the Broadband Equity, Access, and Deployment (BEAD) Program and the Digital Equity Act (DEA) of 2021 take aim at the digital divide: BEAD by (primarily) seeking to expand access, and the DEA by seeking to increase digital literacy and skills.3 Although BEAD and the DEA have both faced devastating funding cuts in the early months of the second Trump administration, their creation reflected a bipartisan commitment to closing the digital divide.4 Tackling the digital divide, which is increasingly framed as a rural economic issue, remains a concern across party lines despite shifts in federal priorities that have disrupted national momentum toward broader digital inclusion.5

However, what is missing in both programs is a recognition of the link between the human vulnerabilities of the digital divide and cybersecurity vulnerabilities. In today’s increasingly digital world, it is more important than ever to explore, understand, and find solutions for the human vulnerabilities that serve as entry points for cybersecurity vulnerabilities. Understanding this connection will enable more effective, inclusive, and preventative cybersecurity policies for the public.

Policymakers should apply the risk management approach used in organizational cybersecurity policy to address human vulnerabilities related to limited digital access, skills, and literacy. Just as organizations identify, assess, and mitigate technical threats in their systems, policymakers should systematically evaluate how human vulnerabilities create cybersecurity vulnerabilities and develop proactive safeguards to reduce harm at the human level. In Amy’s case, the likelihood of a cybersecurity incident was heightened precisely because of her human vulnerabilities. Moreover, the likelihood increased due to her layered exposure: She was rendered vulnerable due to both systemic (access) and individual (skills and literacy) factors. To better protect individuals like Amy, cybersecurity and digital divide policies must be strategically aligned, pairing the risk management methods of technical cybersecurity with the human-centered priorities of digital divide policy.

What the Data Show

To understand how human vulnerabilities contribute to cybersecurity vulnerabilities, this report draws on both testimonial and governmental data sources. First, 50 testimonial cases of online scams were identified and analyzed, drawn from news stories and user-submitted experiences. These cases were chosen to explore the intersection between human and cybersecurity vulnerabilities. While hypothetical cases like Amy’s illustrate how human vulnerabilities can lead to a cybersecurity breach, many people are rendered vulnerable through scams such as phishing, social engineering, and other tactics employed by malicious actors. These scams exploit gaps in digital literacy, skills, and access, making them a practical lens through which to examine the real-world consequences of human vulnerabilities.

In addition to these qualitative accounts, Federal Trade Commission (FTC) data on the types of scams, frequency, demographic patterns, and other characteristics of reported scams were reviewed. These data provide a more comprehensive, national-level picture of cybersecurity threats as experienced by the public. Taken together, the two sources capture individual experiences and how they map onto larger trends. They also serve as a foundation for identifying common conditions that render people vulnerable and for proposing policy responses grounded in lived experience.

Testimonial Evidence

Online scams are not a new phenomenon, but recent cases have drawn viral news coverage, including AI-generated images of a hospitalized Brad Pitt and AI-generated voice recordings of President Biden. A Johnny Depp impersonator “swindled” as many as 26 elderly people out of money. “Kevin Costner” also entered the mix, with scammers demanding money for entry into his fan club, and Truth Social has been the site of a variety of scams. All of these scams, and many others not noted here, share one common factor that scam analysis has not directly addressed. Although a variety of sources attribute the rise of these scams solely to age, an attribution that does not hold up, each of the individuals scammed was rendered vulnerable because of a lack of digital literacy or skills.

And while fewer people may fall for a scam once it has received media attention, the data illustrate how many people are affected by the human vulnerabilities that this report focuses on. Analysis of the 50 cases reveals a common pattern: Victims often lacked digital literacy to recognize potential risks and the digital skills to investigate suspicious activity or implement protective measures.

Federal Trade Commission Data

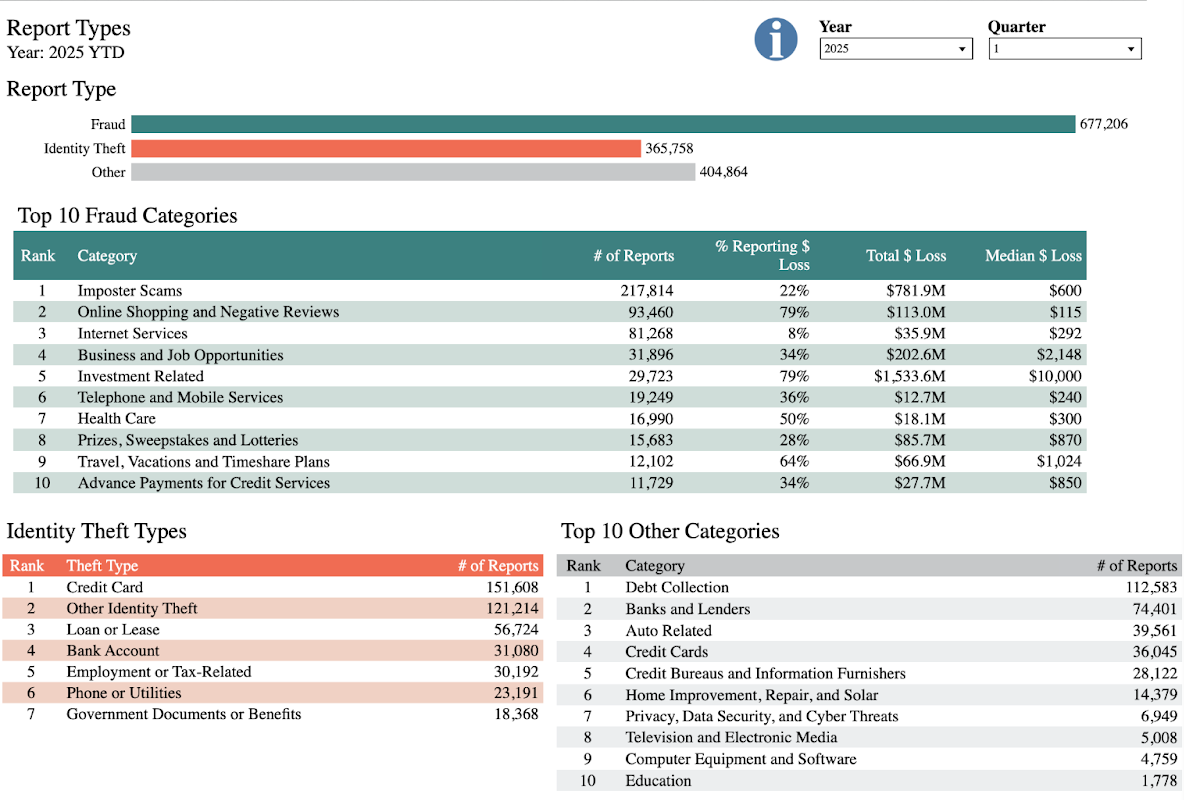

As shown in Figure 2, the FTC tallied 677,206 reported cases of online fraud and 365,758 reported cases of identity theft in just the first three months of 2025.

There were almost 300,000 reports of individuals falling victim to the categories of fraud most relevant to this report: imposter scams; business and job opportunities; investment-related fraud; and scams related to prizes, sweepstakes, and lotteries. The testimonial evidence described above also falls into these categories: the imposter scams involving the likenesses of Brad Pitt and Johnny Depp6 as well as scams involving fraudulent job opportunities, investments, and prizes and sweepstakes that are particularly prevalent on Truth Social. Although the aggregated FTC data lack the specificity of the testimonial evidence on victims’ experiences, the data overwhelmingly illustrate the prevalence of these scams on the national level, as well as other scams that could potentially evolve and exploit human vulnerabilities.

As seen in the hypothetical case of Amy, digital access can also be an entry point for a cybersecurity vulnerability. Amy lacked digital access and then proceeded to make decisions that led to her data being exposed. Further, this scenario, and the data shared in this section, underscore the importance of understanding how human vulnerabilities are layered. Some of the individuals scammed on Truth Social or by an imposter had digital access in order to communicate with scammers and imposters, but they did not have digital literacy or skills to navigate away from the scams they became victims of. Unfortunately, they were unable to recognize risks or check for potential malicious activity.

Many of the scams mentioned here used AI-generated content, and the scams themselves are increasingly being automated through AI. That is, scamming operations that once featured real people using AI to create images or text are now using AI tools to handle the communication itself. According to Data & Society, “Generative AI serves as a force multiplier for existing scam tactics.” Data & Society describes a scenario similar to the testimonial evidence outlined in this report but with AI as the primary tool: “Workers in the [scam] compound use AI-powered translation tools to communicate fluently with targets around the world, AI-generated deepfakes to pose as good-looking romantic prospects, and large language models (LLMs) to tailor messages to each victim’s interests and emotional triggers. The phone, scripts, and app interfaces are all provided by the criminal syndicate running the compound.”7 Scams are thus being elevated in terms of scale, speed, and efficiency, trends that only emphasize the need for policy solutions that understand the connection between human and cybersecurity vulnerabilities.

While this report draws on equity-based frameworks to analyze digital risks, it is important to reject the erroneous assumption that only certain people get scammed. Scams, and other cybersecurity vulnerabilities, affect people across political and social identities: This is a universal issue, not a partisan one.

Citations

1. “Cybersecurity Framework,” National Institute of Standards and Technology, source.

2. Mikolic-Torreira, et al., A Framework for Exploring Cybersecurity Policy Options, source.

3. “Broadband Equity Access and Deployment Program,” BroadbandUSA, National Telecommunications and Information Administration, source; “Digital Equity Act,” National Digital Inclusion Alliance, source.

4. Justin Hendrix, “Digital Equity Advocates Rally to Restore Funds, Sustain Their Work,” Tech Policy Press, July 24, 2025, source; Barbara Ortutay and Claire Rush, “The Digital Equity Act Tried to Close the Digital Divide. Trump Targets It in His War on ‘Woke’,” PBS News, May 25, 2025, source.

5. Clara Easterday, “Bipartisan Bill Aims to Help Rural Communities Access Federal Broadband Funds,” Broadband Breakfast, May 5, 2025, source.

6. Imposter scams are conducted by a “trusted entity,” but this report argues that the scammers became trusted entities only after talking to victims and developing a relationship, sometimes over the course of months.

7. Swartz, Marwick, and Larson, “Scam GPT,” source.