Recommendations: An ALT Framework

The public sector is at a crossroads. Data and information have never been more contested or more valuable. A primary driver of this is AI: a high-reward, high-risk technology.

As we found in our review, AI has indeed shaken up the field. Governments everywhere are preparing for a big shift: There is a significant move to build computational infrastructure, a serious commitment to on-the-job training and data literacy, and a near-universal demand for good partners.

But there is also a void in imagination and vision. The civic sector needs a framework.

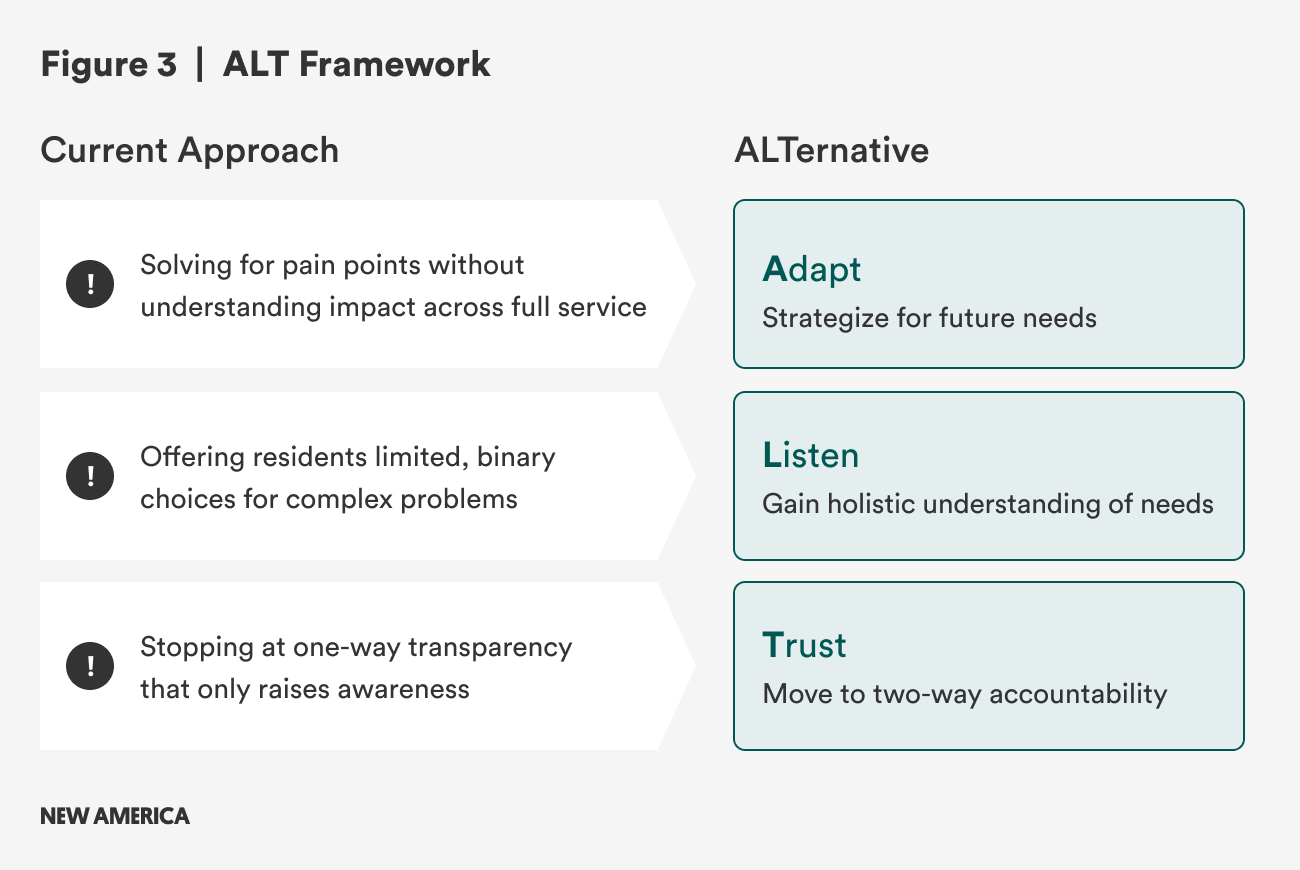

We propose an ALT approach to governance, not government. This is an alternative method of AI implementation and assessment in the public sector that focuses on how decisions are made, not just what decisions are made. It includes three imperatives for institutional reform: adapt, listen, and trust (see Figure 3).

Adaptation means anticipating future needs and repositioning institutions to meet them. Listening is a commitment to a holistic understanding of what people need. Trust is accountability to residents by demonstrating results.

What follows is a more in-depth look at each of these three components, providing hypothetical use cases and recommendations for implementation. We also recount the pilots we facilitated in Boston, New York, and San Jose and explain how they contributed to developing the ALT framework. Through worked examples, we developed a practical understanding of what works and what’s missing in AI development and implementation, and how to design a structure for guiding the field into the future.1 The goal is to guide the civic sector, including the public sector and its partners, towards the responsible and transformative adoption of AI.

ALT in Action

Adapt

AI is about anticipating future needs and shifting people toward more meaningful work, not just addressing current crises.

Adapt in a nutshell: AI can significantly alter how governments design and execute programs. For years, civic technology promised efficiency gains that would free up staff time for higher-touch services. In practice, better technology doesn’t just free up administrative time; it removes friction and makes it easier for people to access services. The result of well-placed AI isn’t efficiency so much as newly revealed demand. So while AI agents won’t necessarily solve service surges, they will ultimately shift how people spend their time.

Traditionally, governments and civic organizations have acted on data collected and analyzed through standard channels: existing government data, surveys, and participation metrics. AI gives practitioners the opportunity to understand not only what people need now, but what they will need in the future. Fundamentally, this is a planning function, and it requires reallocating resources and staff time. Done well, it can help invent the jobs of the future while offloading routine work to AI. Governments should therefore design not for job elimination but for human redeployment and organizational adaptation.

This shift is not only about how governments manage their people, but also about how policies set the conditions for adaptation. Most recent policymaking has leaned controlling and defensive: restrictions, audits, and bans that contain risk but don’t help agencies absorb new demand. Enabling policies, by contrast, create the flexibility to reallocate funds, redeploy staff, and iterate on service models as needs surge. Without them, efficiency gains can backfire, turning frictionless access into overwhelming backlogs, as the next example illustrates.

Example: A city deploys a 311 chatbot to make it easier for residents to request bulky waste pickup. Service requests surge overnight. But staffing and routing processes remain unchanged. Instead of freeing up capacity, the system overwhelms crews with backlogs and longer delays. Efficiency gains on paper turn into operational strain in practice.

ALTernative: The city prepares for amplified demand before rollout.

- AI models forecast how much service use will increase once friction is removed.

- Staffing is adjusted and funding is reallocated ahead of launch to handle the surge the forecast reveals.

- Digital requests flow into redesigned triage and case management systems so pickups are routed efficiently.

- Routine scheduling is automated, while staff time is explicitly reallocated to higher-value engagement with residents (e.g., resolving complex or repeated requests).

- Managers are trained to exercise discretion and compassion in how scarce resources are deployed.

To achieve the ALTernative, we recommend the following:

- Realign budgets and staffing so saved time is explicitly reallocated to high-value human work, not lost to administrative creep.

- Forecast demand surges before pilot launch: model how reduced friction will increase requests, then stress-test systems for bottlenecks. This requires nimble AI strategies that are grounded in service delivery and that adapt as organizational needs change.

- Train staff in compassionate decision-making and bias awareness as humans ‘in the loop.’ Staff must be empowered to make decisions and be clear on which decisions they can and should make. Some cases call for human judgment, sensitivity to vulnerability, and fairness, and need more attention or human intervention than AI processing alone can provide. This is also a good opportunity to engage organized labor and unions on issues of AI in the workplace.

- Leverage AI to support adaptation, not to replace people. AI agents are autonomous software systems that can take in data and instructions, make decisions, and take authorized actions to achieve specific goals without constant human supervision. Used carefully, agents can automate routine processes and, paired with planning and forecasting, free staff for higher-value work.
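To make the "forecast, then stress-test" recommendation concrete, here is a minimal Python sketch of the bulky-waste example. It assumes a hypothetical baseline of daily requests, a hypothetical uplift factor for how much reduced friction increases requests, and a fixed pickup capacity per crew; none of these figures come from our pilots. It simulates the end-of-day backlog and computes the smallest crew count that covers forecast demand.

```python
import math

def forecast_demand(baseline_per_day: float, uplift: float) -> float:
    """Expected daily requests once friction is removed (uplift is an assumed multiplier)."""
    return baseline_per_day * uplift

def simulate_backlog(daily_demand: float, crews: int, pickups_per_crew: int, days: int) -> list[float]:
    """Track the end-of-day backlog when requests arrive faster than crews can clear them."""
    capacity = crews * pickups_per_crew
    backlog = 0.0
    history = []
    for _ in range(days):
        # Unserved requests carry over; the backlog never goes below zero.
        backlog = max(0.0, backlog + daily_demand - capacity)
        history.append(backlog)
    return history

def crews_needed(daily_demand: float, pickups_per_crew: int) -> int:
    """Smallest number of crews whose daily capacity covers forecast demand."""
    return math.ceil(daily_demand / pickups_per_crew)

# Hypothetical figures: 120 requests/day today, 50% more once the chatbot removes friction.
demand = forecast_demand(baseline_per_day=120, uplift=1.5)
print(simulate_backlog(demand, crews=6, pickups_per_crew=25, days=5))
# [30.0, 60.0, 90.0, 120.0, 150.0]
print(crews_needed(demand, pickups_per_crew=25))
# 8
```

In practice the uplift factor would come from a forecasting model or a comparable rollout. The point of the sketch is that even a modest, unplanned-for increase in requests turns into a backlog that grows every day until capacity is adjusted.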

In San Jose, the city government is addressing the challenge of homelessness by developing foundational applications that will support broader AI initiatives in the future. The city’s IT and housing departments have targeted the issue of evictions with an in-house predictive analytics tool. This tool helps them better understand when evictions are likely to occur and allows for preventative actions. While evictions represent only one aspect of the overall homelessness issue, the city’s innovative use of data is a crucial first step to adapting its programs, anticipating constituent needs, and proactively designing solutions to address them.

Since homelessness is a complex problem that extends beyond city borders, RethinkAI is collaborating with San Jose to explore the role a regional coalition of government, nonprofit, and academic organizations can play in adopting a new approach to homelessness. Building on the eviction application as a foundation, this coalition will leverage more extensive datasets and AI to develop regional solutions to the homelessness crisis. By applying AI regionally, all coalition members can adopt and adapt innovative strategies designed to anticipate future needs and improve program effectiveness.

Listen

AI is about clarifying information, not muddying it.

Listen in a nutshell: The ability of institutions to listen at scale has significant implications for governance.2 It means governments can make sense of existing structured data (like 311 requests), solicit new unstructured data (like community conversations), and use both to make decisions. For all the talk of AI fueling disinformation, it can also clarify meaning by helping governments make sense of, and generate novel insights from, new and existing data sources.

Civic tech efforts have focused on accessibility. Official policy documents, planner presentations, and even meeting notes are available online. In some cases, policies are synthesized into comprehensible summaries. Residents are able to see what is being offered, but they don’t receive support in connecting that information to their needs or the needs of their communities.

Decisions are presented as a binary: Do you like or dislike this new zoning requirement? Residents have rarely been offered an opportunity to present what they want, just the option of responding “yay” or “nay.”

Example: A city website uses AI to post housing policies in plain language, and perhaps a chatbot answers FAQs.

ALTernative: A resident opens an interaction with government with a question: “I need affordable housing in Cincinnati before December. What are my options?” Across many such queries, AI recognizes patterns of community need, like heating complaints rising in a neighborhood during the winter or elderly residents struggling to access housing.

- AI interprets the question across zoning codes, housing programs, wait lists, and deadlines.

- The system returns not just an answer, but a pathway: steps, timelines, forms, contacts.

- The system lets residents ask follow-up questions in their own words (“What if I’m a single parent?” or “What if I make $35K/year?”) and adapts its guidance to their individual situation.

- Information gathered to answer individual residents also informs a coordinated strategy.
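The move from individual answers to a coordinated strategy can be sketched as simple aggregation. In this minimal Python example, each resident query carries a neighborhood and a month, and a keyword lookup stands in for the AI classification step (an assumption for illustration; a real system would use a language model or trained classifier). It then flags neighborhood-topic pairs whose query counts rise sharply from one month to the next. The neighborhood names, keywords, and thresholds are hypothetical.

```python
from collections import Counter

# Hypothetical keyword map standing in for an AI topic classifier.
# Substring matching is crude (e.g. "heat" also matches "theater"); it only illustrates the flow.
TOPIC_KEYWORDS = {
    "heating": ["heat", "radiator", "boiler"],
    "housing_access": ["affordable housing", "wait list", "senior housing"],
}

def tag_topics(text: str) -> list[str]:
    """Return every topic whose keywords appear in the query text."""
    lowered = text.lower()
    return [topic for topic, words in TOPIC_KEYWORDS.items()
            if any(word in lowered for word in words)]

def count_by_area(queries) -> Counter:
    """Count tagged topics per (neighborhood, month, topic) across individual queries."""
    counts = Counter()
    for neighborhood, month, text in queries:
        for topic in tag_topics(text):
            counts[(neighborhood, month, topic)] += 1
    return counts

def rising_needs(counts: Counter, prev_month: str, this_month: str,
                 min_ratio: float = 2.0, min_count: int = 3) -> list[tuple]:
    """Flag (neighborhood, topic) pairs with at least min_count queries this month
    that grew by min_ratio or more over the previous month, or are new this month."""
    flagged = []
    for (neighborhood, month, topic), n in counts.items():
        if month != this_month or n < min_count:
            continue
        before = counts.get((neighborhood, prev_month, topic), 0)
        if before == 0 or n / before >= min_ratio:
            flagged.append((neighborhood, topic, before, n))
    return sorted(flagged)

queries = [
    ("Neighborhood A", "2024-10", "My radiator is cold"),
    ("Neighborhood A", "2024-11", "No heat in my building"),
    ("Neighborhood A", "2024-11", "Boiler broken again"),
    ("Neighborhood A", "2024-11", "Heating has been off for days"),
    ("Neighborhood B", "2024-11", "I need affordable housing before December"),
]
counts = count_by_area(queries)
print(rising_needs(counts, "2024-10", "2024-11"))
# [('Neighborhood A', 'heating', 1, 3)]
```

The single housing query in Neighborhood B still gets an individual answer; it just doesn't cross the hypothetical threshold for a coordinated response.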

To achieve the ALTernative, we recommend the following:

- Build shared civic infrastructure by standing up a public AI sandbox where residents and staff use low-code/no-code tools to translate policies, budgets, and services into plain language. Low-code and no-code AI tools let people design AI workflows through graphical interfaces. These tools are proliferating, driving quality and usability up and costs down. This will make it easier for individuals and teams in smaller municipalities and community organizations to implement AI solutions without extensive technical resources, a trend that particularly benefits resource-constrained local governments that previously couldn’t access enterprise AI solutions.

- Strengthen civic capacity by training civic workers in context engineering to ensure translations are accurate, relevant, and accessible across languages and literacy levels. Context engineering moves beyond prompt engineering: it involves systematically building comprehensive knowledge architectures that give AI systems a deep understanding of the problems they are asked to solve. Instead of crafting clever questions, civic organizations will need to build institutional memory systems and data architectures that give AI the same situational awareness that civic workers rely on.

In the future, platforms offering pre-built models for common civic use cases—like permit processing, service request routing, and community feedback analysis—will lower barriers to entry.

In Boston, we wanted to explore whether generative AI could change the nature of interactions between government decision makers and a local community. The RethinkAI team, including Chris Le Dantec from Northeastern University, partnered with Talbot Norfolk Triangle Neighborhood United, a community organization in the Dorchester neighborhood of Boston. The leadership of that group was concerned that they had become increasingly dependent on the city for access to and analysis of data about them. They wondered what it would be like to own their own data resource so that they might level the playing field in local decision-making.

We ended up creating a local large language model, or LLM, called “On the Porch.” It is trained on structured data such as 311 requests, traffic violations, and 911 calls, and on unstructured data such as planning documents and transcripts of local meetings. The result is a conversational tool that anyone in the community can use to ask about what’s going on in their neighborhood and share things they might be concerned about. After a conversation, the bot provides a high-level summary, complete with any resources mentioned for follow-up.

The tool demonstrates the possibility of renewed listening. In this case, the community has access to the same data and analysis tools as the city. This level playing field can clear the way for the city government to truly listen to what the community has to say.

Trust

AI is about increasing accountability, not skirting it.

Trust in a nutshell: In order for people to trust government, they need to trust the tools that government uses to deliver services and communicate. AI in the civic sector must rest on trustworthy data, and those who use it must be held accountable for delivering trustworthy insights from that data. This means making sure that users are involved in, or at least aware of, how data is collected, analyzed, and used.

Today, much of the activity in government organizations is invisible to residents. This is especially true for digital activity and increasingly true for AI. This creates a paradox. More data than ever before is being used to inform government decision-making, and governments are using predictive AI tools to run sophisticated analyses of often diverse datasets. This can lead to better decisions, but it doesn’t earn residents’ trust in those decisions: more data does not equal more trust. AI can either widen this gap or open up new forms of accountability.

In the past, the civic tech movement was built on transparency, and ongoing progress checks, such as open data releases, built necessary awareness. The next era of trust depends on community members experiencing, alongside open data, how their voices, values, and vulnerabilities influence collective decisions.

Example: The City of Boston’s “Council Roll Call Summaries” pilot translates complex legislative votes into plain language summaries. With the help of AI, residents can see how their council members voted without reviewing transcripts or listening to meetings.

ALTernative: Residents can see how the council voted, but what if they could also see resident reactions from social media or an online comment platform alongside the official votes? Government officials could see these patterns synthesized in real time and prioritize their agendas accordingly. This could mark a shift from awareness of votes to a reshaping of how decisions are made. Trust is cultivated not just when residents observe what’s happening, but when one-way transparency gives way to two-way accountability.
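A minimal Python sketch of that two-way view: official votes on each agenda item are tallied next to resident reactions on the same item, and items where the council majority and the resident majority diverge are flagged for attention. The record shapes, item IDs, member names, and the "support"/"oppose" labels are hypothetical; in practice those labels would come from an AI model summarizing social media or comment-platform posts, which this sketch does not attempt.

```python
from collections import Counter, defaultdict

def side_by_side(votes, reactions) -> dict:
    """votes: (item_id, member, vote) with vote in {"yes", "no"}.
    reactions: (item_id, stance) with stance in {"support", "oppose"}.
    Returns {item_id: {"council": Counter, "residents": Counter}}."""
    view = defaultdict(lambda: {"council": Counter(), "residents": Counter()})
    for item_id, _member, vote in votes:
        view[item_id]["council"][vote] += 1
    for item_id, stance in reactions:
        view[item_id]["residents"][stance] += 1
    return dict(view)

def divergent_items(view: dict) -> list[str]:
    """Items with both votes and reactions where the council majority and the
    resident majority point in opposite directions (ties count as 'not in favor')."""
    flagged = []
    for item_id, tallies in view.items():
        council, residents = tallies["council"], tallies["residents"]
        if not council or not residents:
            continue
        council_in_favor = council["yes"] > council["no"]
        residents_in_favor = residents["support"] > residents["oppose"]
        if council_in_favor != residents_in_favor:
            flagged.append(item_id)
    return sorted(flagged)

# Hypothetical records for two agenda items.
votes = [("ord-1", "Member A", "yes"), ("ord-1", "Member B", "yes"), ("ord-1", "Member C", "no"),
         ("ord-2", "Member A", "no"), ("ord-2", "Member B", "no"), ("ord-2", "Member C", "yes")]
reactions = [("ord-1", "oppose"), ("ord-1", "oppose"), ("ord-1", "support"), ("ord-2", "oppose")]

view = side_by_side(votes, reactions)
print(divergent_items(view))
# ['ord-1']
```

A simple majority comparison is only one possible signal; the design choice here is to surface disagreement for officials to examine, not to decide anything automatically.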

To achieve the ALTernative, we recommend the following:

- Develop enabling policies that facilitate pilots and transparency while creating room for experimentation, so that governments can respond rapidly to residents.

- Incubate civic data trusts or community-controlled data infrastructure (e.g., local data trusts and sovereign AI models trained on local data).

- Evaluate for trustworthiness, not just efficiency. The earliest iterations of the digital era focused on efficiency measures and productivity gains. But the real currency in the public sector is trust, and we need strong measures of it.

- Integrate broad signals of public impact, like environmental stress, service usage, or safety outcomes, alongside individualized resident input.

- Enter into public compacts: visible agreements between government, residents, and partners that spell out shared goals, timelines, and accountability measures.

In New York, we saw an opportunity to leverage generative AI to expand the visibility and resolution of funding needs for community-based initiatives. We partnered with CitizensNYC, one of the nation’s first micro-funding organizations, whose leaders wanted to analyze a large corpus of funding proposals collected over the last decade. Could an LLM generate thematic insights about shared needs across proposals, how issues are framed, and how that framing evolves over time? For CitizensNYC, this use of AI presented an opportunity to expand its capabilities and increase the trust between the organization, communities across the city, and the city government.

This pilot involves building a proposal analysis dashboard for CitizensNYC that will draw on more than 5,000 past proposals and New York City open datasets to analyze and visualize the content and context of new proposals. Developed with a broad cross section of the CitizensNYC team and leadership, the tool will support new use cases for both proposal review and communication. For instance, city data layers on flood risk can help reviewers understand the context of a proposal about climate hazard risk in a particular neighborhood, and this validation can in turn surface past proposals related to the same need, both locally and citywide. This approach has several applications that will improve CitizensNYC’s grantmaking and, in turn, increase the trust communities and the city government have in the process.
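The flood-risk example can be sketched as a lookup against a city data layer followed by a search of past proposals. In this minimal Python sketch, the flood-risk table, neighborhood names, proposal IDs, and theme labels are all hypothetical stand-ins; the actual dashboard draws on New York City open datasets and LLM-derived themes, which are not reproduced here.

```python
from dataclasses import dataclass

@dataclass
class Proposal:
    proposal_id: str
    neighborhood: str
    theme: str  # hypothetical theme label, e.g. one assigned by an LLM

# Hypothetical flood-risk layer keyed by neighborhood (stand-in for a city open dataset).
FLOOD_RISK = {"Neighborhood A": "high", "Neighborhood B": "low", "Neighborhood C": "high"}

def contextualize(new: Proposal, past: list[Proposal]) -> dict:
    """Attach flood-risk context to a new proposal and list related past proposals
    (same theme) in the same neighborhood and across the whole city."""
    related = [p for p in past if p.theme == new.theme and p.proposal_id != new.proposal_id]
    return {
        "flood_risk": FLOOD_RISK.get(new.neighborhood, "unknown"),
        "local": [p.proposal_id for p in related if p.neighborhood == new.neighborhood],
        "citywide": [p.proposal_id for p in related],
    }

past = [
    Proposal("p1", "Neighborhood A", "climate_hazard"),
    Proposal("p2", "Neighborhood C", "climate_hazard"),
    Proposal("p3", "Neighborhood A", "youth_programs"),
]
new = Proposal("p4", "Neighborhood A", "climate_hazard")
print(contextualize(new, past))
# {'flood_risk': 'high', 'local': ['p1'], 'citywide': ['p1', 'p2']}
```

Matching on an exact theme label is the simplest possible join; a fuller version might compare proposals by semantic similarity or by geographic distance instead.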

The Leadership We Need

To realize the ALTernative, we need good leadership. As former Louisville Mayor Greg Fischer put it, “These AI tools can really help build social muscle. And social muscle is the kind of strength that you need to get through adversity.”3 That bold outlook should guide this important moment and help us use AI for its best and highest applications: not just regulating its risks, but also enabling its potential to deliver real public value.

Government leaders must establish enabling conditions. This means designing accountability structures that measure trustworthiness, not just data; realigning budgets; anticipating new resident needs and amplified demand and responding rapidly to those shifts in service; and translating AI’s promise into real public value.

Philanthropy could continue to shape and fund what governments can’t risk: field-defining networks, bold prototypes, and practical tools that can be scaled across cities and states. This creative capital can support experiments and connect technical tools with civic priorities so that the field can learn faster, there is room to acknowledge vulnerabilities, and progress isn’t defined solely by the private sector.

Universities can anchor this work with research, training, data capacity, and long-term evaluation. Basic training is available, but cities need more than the basic vocabulary of LLMs and prompt engineering. Universities can lead in practical application and measurement, conduct evaluations that extend beyond political cycles, and play a crucial role as trusted evaluators of civic data and outcomes.

Community organizations and nonprofits must ground AI in lived experience and the tangible realities of public service. They not only mobilize residents; they are also key agents in localizing data, preserving culture, and ensuring that the use of AI reflects the needs and priorities of their communities. They hold governments accountable to real resident experience, not just machine-generated records.

Coalitions, like RethinkAI, must continue to play the role of convenor, chronicler, and practical advisor to civic actors. They need to be committed to field-building alongside communities and other sectors.

The ALT leadership ecosystem is one in which government serves as an enabler, philanthropies buffer risk, universities deepen our understanding, and nonprofits ground us in real-world experience. With these four sectors each playing its leadership role, AI can move beyond efficiency toward a model that residents truly need: one that fosters adaptation, listening, and trust.

Citations

- Teaching + Learning Lab, “Worked Examples,” Massachusetts Institute of Technology.

- Eric Gordon, How Institutions Listen: AI, Civic Data, and the Path to Public Trust (MIT Press, forthcoming, 2026).

- Interview with Greg Fischer, September 25, 2024.