Table of Contents

Methodology: Building GovSCH

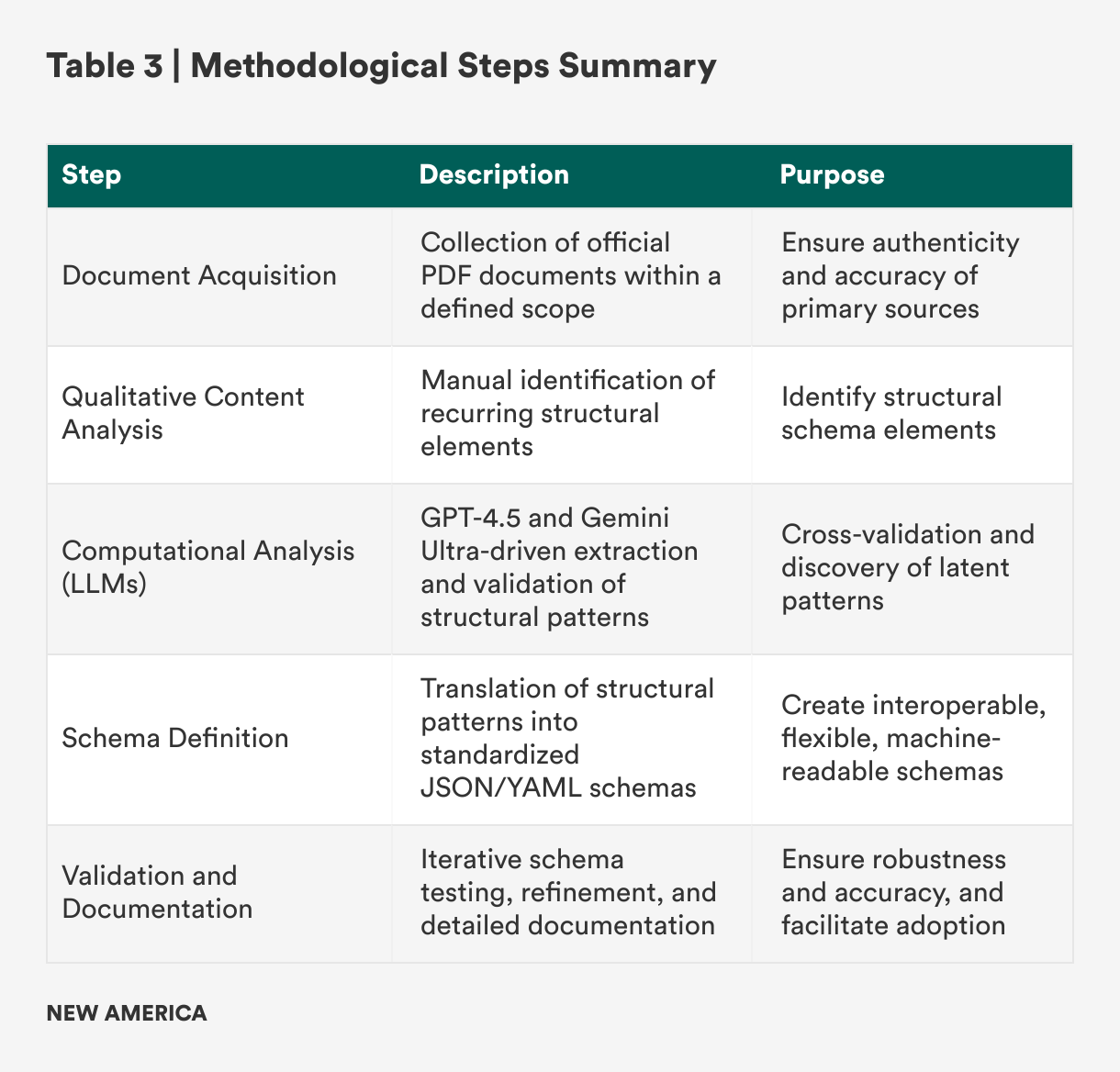

This section outlines the methodological approach taken to develop and document the three distinct GovSCH schemas, one each for (1) U.S. executive orders, (2) U.S.-centric cybersecurity frameworks, and (3) international data protection regulations. The methodology was executed through a systematic, structured process (see Table 3) involving document acquisition, qualitative content analysis, computational analysis via LLMs, and subsequent transformation into structured, machine-readable formats (JSON and YAML).

Document Acquisition

The first stage involved systematically acquiring authoritative source documents within the scope defined in the “Scope” and “Limitations” sections of this report. Official PDF documents for each governance instrument, U.S. executive orders, cybersecurity frameworks from NIST and DoD, and international regulatory frameworks were downloaded directly from official governmental, regulatory, and standards-issuing websites. This ensured the authenticity and integrity of the sources analyzed. The documents collected are listed in the scope section.

Qualitative Content Review and Analysis

Following the acquisition, each PDF document underwent an in-depth qualitative content analysis. The review involved reading, annotating, and systematically identifying structural elements common across each document type. Structural analysis focused primarily on identifying patterns. Recurring structural and semantic patterns were documented, with divergences noted to inform schema flexibility and adaptability.

Computational Analysis with LLMs

Complementing manual review, computational analysis using GPT-4.5 and Gemini-Ultra large language models systematically extracted, summarized, and validated structural components across documentation. LLM-assisted analysis served to:

- Confirm human-identified structural elements;

- Reveal additional latent patterns not immediately apparent;

- Validate semantic consistency across policy, framework, and regulatory domains; and

- Generate summaries and comparative analyses to streamline schema definition.

LLM-assisted analysis provided rigorous cross-validation, enhancing analytical accuracy, scalability, and consistency.

Schema Definition and Transformation

With structural patterns identified and validated, a standardized schema was developed for each document category: (1) executive orders, (2) frameworks, and (3) regulations. The schema design prioritized clarity, flexibility, and interoperability.

Key considerations during schema definition included:

- Standardization of structure: uniform schema elements across all three areas

- Flexibility for diversity: optional and extendable schema components

- Machine-readability: JSON and YAML formats for usability

- Semantic clarity: meaningful naming conventions and metadata definitions

Schemas underwent iterative validation (using the JSONlint and YAMLlint code quality validators) to ensure both formats can be machine-parsed, which is consistent with other JSON and YAML schemas (such as OSCAL) used by compliance automation systems.

Validation and Documentation

Schemas underwent systematic testing using representative documents. Adjustments ensured robustness, adaptability, and fidelity. Comprehensive documentation detailing schema elements, example usage, and implementation guidelines was developed and published on the project’s GitHub repository along with the complete machine-readable schema structures, supporting documentation, and illustrative examples.