Decision-Theoretic Explanations for Inaction

The preceding sections have described how Americans perceive nuclear risk—what they fear, how those fears differ across groups, and how they compare internationally. Yet polling data alone cannot explain why awareness so rarely produces action in the U.S. To bridge that gap, this section draws on decision theory to identify the cognitive mechanisms that sustain complacency despite the recognition of danger.

Although most Americans support arms control in principle, they rank nuclear risks well below climate change, cyberattacks, or terrorism. This “recognition without mobilization” reflects not ignorance but predictable patterns of reasoning under uncertainty. Common explanations—that “the threat feels distant,” “deterrence works,” “there’s nothing I can do”—are surface expressions of deeper biases and decision-theoretic mechanisms, such as temporal discounting, ambiguity aversion, and normalcy bias. These mechanisms help people manage anxiety about low-probability, high-consequence risks by pushing them to the cognitive background.

Recognizing these patterns reframes the challenge. If disengagement stems from the structure of human cognition rather than a lack of information, then supplying more data or more vivid depictions will not restore salience. Effective communication must work with cognitive tendencies, not against them: pairing fear with clear agency to counter avoidance, using concrete analogies to reduce abstraction, and presenting uncertainty as manageable rather than paralyzing. This approach modernizes the traditional theory of change for nuclear policy: from simply increasing awareness to strategically activating engagement.

The same dynamics that dampen nuclear concern—temporal discounting, scope neglect, and habituation—also constrain action on other global catastrophic risks such as climate change, pandemics, and AI safety. Understanding these shared mechanisms enables a transferable toolkit for fostering preparedness across domains: one rooted in psychological realism rather than rhetorical intensity.

Ultimately, decision-theoretic analysis shifts focus from describing apathy to explaining and anticipating it. It clarifies why even credible warnings fail—and how they might succeed—by transforming attention itself into a form of prevention. This perspective is equally vital for futures work: how individuals and societies imagine nuclear danger in their future worlds shapes which risks they prioritize, which they ignore, and how preparedness is designed. Understanding these mental models is thus a precondition for making future threats actionable rather than abstract.

The 13 reasons for recognition without mobilization below represent the clearest, most empirically grounded pathways from decision science that explain why people fail to act on warnings of nuclear risk. Each meets three criteria: It is extensively documented in behavioral research; it directly shapes individual reasoning under uncertainty; and it has been demonstrated across other domains of existential or systemic risk. There are additional dynamics—such as identity-protective cognition, partisan cue-taking, misinformation saturation, narrative crowding, the absence of any institutional actor formally responsible for public nuclear-risk communication, temporal inversion problems, and media framing effects—that also shape engagement. We acknowledge these factors but do not include them as core reasons here, for two reasons. First, several are context-dependent social phenomena, not stable cognitive processes, and therefore function as amplifiers rather than root causes of inaction. Second, including them would broaden the analytic frame beyond individual-level decision mechanisms into the adjacent domains of political psychology, media systems, and institutional design. These omitted dynamics matter—and they should be examined in follow-on work—but the present analysis focuses intentionally on the 13 foundational cognitive reasons that systematically drive recognition without action across individuals and over time.

Stability and Rarity Biases: Perceptions of Safety or Improbability

Reason 1: Deterrence as Proof of Safety (Status Quo Perception)

Many Americans reason that, because nuclear weapons haven’t been used since 1945, they likely won’t be—interpreting the absence of use since then as evidence that deterrence “works.” Variations of this logic abound: Nuclear weapons are “unpleasant but necessary,” “grim insurance,” or the system that has “kept the peace for 80 years.”1 Across these frames, the underlying inference is the same: non-use equals stability.2

This reflects a broader psychological tendency to treat the past as evidence of future safety.3 The long record of nuclear non-use creates the perception of stability and self-correction, even as underlying risks increase. Decision theory explains this through three mechanisms. Status quo bias leads people to favor existing arrangements because change appears costly or risky.4 Prospect theory explains this through loss aversion, whereby potential losses outweigh equivalent gains, and through uncertainty aversion, where calculating alternatives is implicitly avoided because it demands effort and introduces unknowns.5 Normalcy bias encourages the assumption that tomorrow will resemble yesterday.6 This shortcut reduces cognitive strain and preserves emotional comfort, since imagining catastrophic discontinuities (like nuclear war) is distressing. Cold War conditioning further strengthens these tendencies. Repeated standoffs that did not escalate were interpreted as evidence that deterrence is inherently stable and each “near miss” reinforced status quo and normalcy bias.7

These mechanisms form a self-reinforcing loop: Because deterrence has worked, people assume it will continue to work (status quo bias) and do not expect nuclear threats to escalate (normalcy bias). Over time, this produces a complacency trap: Nuclear weapons are viewed as stable background conditions, not urgent threats. The longer this period of nuclear non-use extends, the stronger these biases become—intensifying complacency even as objective nuclear risks grow, from modernization and proliferation to the integration of destabilizing technologies like AI and hypersonic weapons.
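A simple probability sketch shows why a lengthening record of non-use does not by itself lower the risk. It assumes, purely for illustration, a fixed and independent annual probability $p$ of nuclear use. That assumption is a simplification introduced here, not an estimate from this report, and the discussion above suggests that real risks may in fact be rising.

```latex
\[
P(\text{no use in } n \text{ years}) = (1 - p)^{n}
\]
\[
P(\text{use in year } n+1 \mid \text{no use in years } 1, \dots, n) = p
\]
\[
P(\text{at least one use in } n \text{ years}) = 1 - (1 - p)^{n} \longrightarrow 1
\quad \text{as } n \to \infty \ \ (p > 0)
\]
```

Under this assumption, each quiet year leaves the following year's risk unchanged while the cumulative chance of at least one use keeps growing. If $p$ is uncertain rather than fixed, a quiet record does carry some information about it, but it cannot show that $p$ is zero.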

Polling data reflects this pattern: Americans acknowledge nuclear danger but rank it below climate change, terrorism, or cyber risks in urgency.8 Support for arms control remains broad but shallow, strongest among groups that trust deterrence or feel insulated from risk—older Americans, men, conservatives, high-income, and defense-affiliated populations.

This creates a cognitive anchor: As long as nuclear weapons remain unused, the system appears to function, dulling urgency for reform.9

Reason 2: “It Feels Unlikely” (Rarity and Imaginability)

After nearly 80 years without wartime use, many Americans treat nuclear war as implausible or obsolete, a Cold War relic rather than a live risk. The absence of lived experience makes the threat abstract and hard to picture, which makes it easy to discount. Even those who acknowledge the weapons often bracket their use as remote, not today’s problem, so attention drifts and urgency decays.10

Several mechanisms drive this perception. People tend to assess the likelihood of events by how easily relevant examples come to mind (reverse availability heuristic). In the absence of recent, vivid instances of nuclear use, the prospect feels inherently improbable.11 Individuals also exhibit probability neglect, a difficulty reasoning about low-probability, high-consequence events. When outcomes are both catastrophic and rare, attention gravitates toward their improbability rather than their potential magnitude.12 A further distortion comes from optimism bias, the tendency to assume disasters are less likely to affect oneself. This comforting illusion reduces anxiety in the short term but suppresses the sense of urgency required for prudent policy and preparedness.13

The result is a belief that nuclear war is “too rare to worry about,” even as expert warnings intensify. Each additional year without a detonation reinforces this illusion of safety.

This dynamic mirrors other domains: Few anticipated COVID-19 despite warnings; climate and cyber risks seem distant until they erupt. Yet these threats, unlike nuclear war, still feel proximate because they manifest through visible, recurring events—wildfires, hacks, and outbreaks—that people have lived through or watched unfold in real time. The result is a hierarchy of imagination: Nuclear risk remains the most catastrophic, but also the least experienced, and therefore the easiest to discount.

This perception is most pronounced among:

- Younger Americans (18–34): lacking Cold War memories or vivid mental anchors.

- Less formally educated respondents: for whom nuclear risk is more abstract and easier to dismiss.

- Republicans: less likely than Democrats to view nuclear weapons as major threats.

When danger feels implausible, it fails to motivate action. If nuclear war seems unimaginable, it will not sustain attention, political pressure, or resources—no matter how often experts warn that the risk is rising.

Cognitive Load and Emotional Defenses

Reason 3: Overwhelming Complexity

Many Americans disengage from nuclear issues because the technical, probabilistic, and geopolitical dimensions are too dense to easily understand. Even well-informed citizens often find the language of nuclear policy opaque—terms like “counterforce,” “no first use,” or “escalation dominance” difficult to interpret.14 Compared with more tangible threats, nuclear risk feels abstract and elite-driven, making avoidance cognitively easier.

Cultural depictions such as Oppenheimer or Nuclear War: A Scenario tend to evoke brief alarm but rarely sustain engagement, mirroring a broader pattern of recognition without urgency. This reflects cognitive overload: When information exceeds processing capacity, people disengage. It is compounded by ambiguity aversion, the tendency to avoid choices when probabilities are unclear. In the nuclear domain, where both the likelihood and consequences are uncertain, these dynamics produce inaction rather than inquiry.15

Robert J. Lifton and Paul Slovic’s work on psychic numbing shows that as potential destruction grows, emotional engagement declines.16 People tune out what they cannot comprehend. Similar effects appear in climate modeling, artificial intelligence, and biosecurity—areas where technical opacity suppresses public urgency. When a threat feels too complex to grasp or influence, people default to disengagement, an informed but inert awareness of danger.

Reason 4: Overwhelming Fear

For many Americans, nuclear war is simply too frightening to contemplate. Faced with uncontrollable risks, people turn away. Surveys show that large majorities support arms control, yet “rarely or never” read about nuclear issues, signaling avoidance rather than ignorance. This is not apathy but self-protection against existential anxiety.

This avoidance reflects the ostrich effect: deliberate ignorance to preserve emotional comfort. Studies by Cass Sunstein and Paul Slovic show that when danger feels uncontrollable, people divert attention as a coping mechanism.17 Psychic numbing (diminished emotional engagement as potential destruction increases) and learned helplessness (belief that action cannot meaningfully alter the outcome) reinforce the pattern: If individual action seems futile, withdrawal feels safer.

Nuclear risk follows this pattern: When the threat feels both catastrophic and beyond control, avoidance provides relief but erodes pressure for change. The same logic appears elsewhere. Catastrophic climate messages often paralyze rather than mobilize audiences.18 In cybersecurity, warnings of “inevitable” breaches foster apathy, not vigilance.19

Avoidance transforms recognition into silence. The public knows the danger but sustains it through disengagement—a psychological defense that preserves comfort at the cost of action.

Reason 5: Desensitization

After eight decades without catastrophe, nuclear danger has faded into background noise. Each generation has heard warnings that never materialized. Over time, repetition without consequence dulls emotion and urgency. Chicago Council polling data show that while support for arms control remains high, intensity of concern has steadily declined since the 1980s.20

This is habituation, in which repeated warnings without outcomes reduce responsiveness. As Slovic notes, people treat unfulfilled predictions as evidence of safety.21 Each “near miss” or unfulfilled prediction (e.g., the Cuban Missile Crisis, Able Archer scare, or North Korean missile test) confirms that deterrence works. Psychic numbing and normalcy reinforcement deepen the effect, normalizing danger through repetition.

Cultural saturation amplifies this desensitization. Popular media—from Mission: Impossible: Fallout to the Fallout franchise—turn existential threat into spectacle. Simulated destruction replaces genuine imagination, turning peril into entertainment. Paradoxically, success breeds complacency.

Similar processes appear in climate change and public health: Endless “code red” or pandemic alerts have bred resignation. Warnings lose traction not because risk is small, but because audiences have grown numb. Older generations and high-information elites show this most strongly; younger Americans, lacking Cold War memory, often exhibit detached abstraction instead. Even recent crises like Russia’s nuclear threats in Ukraine produced brief spikes of concern but not sustained mobilization.

Reason 6: Warning Fatigue

While desensitization dulls emotional response, warning fatigue erodes credibility. After repeated alerts that fail to materialize, people start to doubt the messenger.22 If the world has been “on the brink” for 70 years yet endures, people conclude the risk must be overstated.

This is the cry wolf effect: Repeated alarms without visible outcomes are ignored, even when valid. Combined with probability neglect (discounting of low-probability, high-consequence events) and social proof dynamics (muted reactions from elites or peers reinforce perception that warnings are not urgent), these mechanisms transform credible alerts into background noise.23

The nuclear arena is particularly vulnerable to this effect today. Coverage of crises like North Korea (2017) or Ukraine (2022) generates brief media cycles but little urgency.24 The field then lacks reliable media events to fill gaps between the crises. The exception is the Doomsday Clock announcement, which remains the field’s most visible annual media event. However, more and varied moments of visibility are needed to get the message across.

Other domains echo this pattern. Public health officials documented “pandemic fatigue;”25 cybersecurity experts face “alert fatigue.” Warning fatigue is strongest among older adults, who have lived through decades of dire predictions without nuclear war, and frequent news consumers, who are exposed to constant crisis narratives. The result is cynicism toward new alerts and a loss of trust in expert signaling.

Repetition without consequences undermines both attention and credibility. To sustain salience, nuclear warnings require not louder alarms but clearer evidence, fresh framing, and visible policy response.

Competing Attention and Delegation: Structural or Social Drivers of Inaction

Reason 7: Attention Scarcity and the Salience Hierarchy

Even when people acknowledge nuclear danger, it competes with a crowded field of more visible and emotionally immediate threats.26 Human attention is finite; in an era defined by overlapping crises—climate change, pandemics, cyberattacks, inflation, and inequality—people prioritize dangers they can see or control.27 Nuclear risk, abstract and largely confined to elite discourse, sinks down the mental agenda.28

This pattern reflects several well-documented cognitive mechanisms. Salience bias and the availability heuristic elevate risks with vivid cues or recent examples—those easier to imagine or recall.29 After eight decades without nuclear use, the threat feels implausible and therefore less urgent. Temporal discounting directs attention toward immediate crises rather than distant ones, while scope neglect makes vast but abstract harms feel less motivating than smaller, tangible ones. Finally, rational inattention channels focus toward actionable information, sidelining low-frequency, high-impact threats like nuclear war.

In today’s environment of constant crisis and limited bandwidth, nuclear risk suffers from relative invisibility, not irrelevance. Unless linked to daily experience and agency, even credible warnings will be drowned out by louder, more available threats.

Reason 8: Reliance on Elites

Americans broadly support arms control and risk reduction, yet rarely engage directly. A 2021 Chicago Council survey found that 82 percent of Americans favored extending New START and 87 percent supported nuclear limits, but grassroots activism was minimal.30 The prevailing assumption is that nuclear policy is managed by experts, diplomats, and institutions—treated as the “grown-ups in the room.” Concern becomes deference.

This reflects delegation bias and diffusion of responsibility: When people perceive risk as managed by others, they feel less obligated to act.31 The technical nature of nuclear issues reinforces this tendency. As fewer citizens feel competent to assess policy choices, responsibility drifts upward, insulating elite decision-making and weakening democratic oversight.

This dynamic recurs across domains: Citizens trust central banks to manage financial risk, governments to defend critical infrastructure and secure networks against cyberattacks, and international bodies to address climate change, resulting in publics that are supportive but politically inert.32

Higher-income and more educated Americans, who are more trusting of institutions and technocratic expertise, exhibit the strongest reliance on elites. Likewise, older cohorts who grew up during the Cold War, urban professionals, and political moderates tend to see strategic issues as the rightful domain of experts and insulated from partisan politics. For these groups, deference to authority is reinforced by familiarity with institutional processes and a belief that individual action is unlikely to make a difference in such a complex policy area.

Meaning and Moral Rationalization

Reason 9: Abstractness of Consequences

For many, nuclear war is simply too vast to imagine—either incomprehensible or dismissed as “too big to think about.” The result is a gap between intellectual awareness (the understanding that nuclear war would be bad) and motivational urgency (the idea that we must act to prevent it).33

This reflects scope insensitivity (difficulty scaling concern to the magnitude of the harm), probability neglect (the tendency to underweight very low-probability events), temporal discounting (downgrading future risks), and optimism bias (assuming immunity). The larger and more abstract a catastrophe becomes, the weaker its psychological pull—another instance of psychic numbing.34

The same abstraction dulls engagement on climate change and pandemic preparedness: People recognize global threats but find them too remote to act upon. In each case, the enormity of the risk itself becomes a barrier to engagement.

In the nuclear context, abstraction creates psychological distance. Citizens may support arms control, but struggle to see what personal or civic action looks like. Without concrete anchors or accessible frames, existential risk becomes background noise.

This tendency is strongest among those with limited exposure to nuclear education or Cold War memory and among populations preoccupied with immediate economic or social concerns. As a result, the unimaginable becomes ignorable—recognized yet detached from behavior.

Reason 10: Cognitive Dissonance (Motivated Ambivalence)

Many Americans see nuclear weapons as immoral but necessary. This contradiction produces cognitive dissonance—accepting a dangerous system while rationalizing its legitimacy. If deterrence is immoral yet indispensable, then worrying about nuclear weapons feels futile.

Survey data reveal this tension: Majorities call nuclear weapons immoral and dangerous, but still favor retaining them for deterrence.35 Additionally, support for disarmament collapses when framed as unilateral, illustrating how moral discomfort coexists with pragmatic acceptance. This reflects motivated reasoning and system justification—mechanisms that align personal beliefs with dominant narratives to preserve psychological stability. People defend the legitimacy of existing institutions, especially those perceived as foundational to national security. As Jost et al. and Kunda show, coherence outweighs accuracy.36 In the nuclear context, this means people rationalize deterrence as “working” because it preserves both peace and psychological equilibrium. Likewise, Tannenwald observes that public discourse surrounding deterrence remains “morally suspended,” acknowledging the horror of nuclear war while normalizing the weapons that could cause it—creating a “moral trap of deterrence” that explains why moral recognition rarely leads to political mobilization.37

Similar ambivalence shapes climate and AI debates, where people accept ethical urgency but rationalize inaction through necessity, inevitability, or scale. Fear coexists with faith in the system, sustaining inertia.

Tracking attitudes over time shows this paradox clearly: Fear of nuclear war has waned, but so has confidence in nuclear weapons. What remains is moral ambivalence—continued acceptance of deterrence as a necessity, coupled with growing unease about its morality and relevance. Cognitive dissonance thus functions as both a psychological defense and a political barrier: It allows citizens to acknowledge existential danger while avoiding the discomfort of confronting what meaningful action would require.

Meta-Dynamics of Attention and Trust

Reason 11: Diffusion of Futility (Collective Action Problem)

Even when people recognize nuclear danger, the scale and institutional complexity of the problem foster a sense of futility. The risk is seen as collective but the solutions as inaccessible—matters for governments and treaties, not individuals.38 Awareness thus coexists with detachment: Nuclear danger feels real but belongs to someone else’s jurisdiction.

This reflects collective action failure, diffusion of responsibility, and learned helplessness.39 When a problem is systemic and global, individuals expect others—governments, NGOs, or international institutions—to act. Each person’s incentive to contribute diminishes because the perceived impact of individual action is infinitesimal. Over time, this logic creates a feedback loop: The more people defer responsibility, the weaker collective pressure becomes, confirming the sense that individual efforts are meaningless.

Surveys show this dynamic clearly: High endorsement paired with low engagement illustrates the classic collective action problem, in which people approve of solutions in principle but lack belief in their personal efficacy to achieve them.

Comparable patterns appear in other domains. In climate policy, economists call this diffusion of futility: When problems feel too vast, efforts seem pointless. In public health, free riding on others’ compliance to achieve herd immunity produces the same logic. In the nuclear context, it manifests as “deterrence by delegation”—the belief that experts are managing the problem adequately. Institutional opacity deepens this perception. Nuclear decisions occur in classified bureaucracies with few visible points of entry for public influence. Even motivated citizens lack a clear theory of impact.

Demographically, the sense of futility is strongest among younger Americans, who are more skeptical that the government listens to them on national security issues, and among those with lower political efficacy overall. Older and higher-information respondents—those with Cold War memory or policy literacy—express somewhat greater belief that civic pressure once mattered but concede that it no longer does.

The result is collective paralysis: a public that recognizes danger yet sees no viable role for itself in reducing it. The expected utility of individual effort approaches zero. Overcoming this barrier requires restoring credible pathways for participation that transform diffuse concern into collective efficacy.
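The claim that the expected utility of individual effort approaches zero can be made concrete with a standard expected-utility sketch. The symbols are illustrative assumptions and do not come from the survey data: $p$ is the perceived probability that one person's action changes the outcome, $B$ is the value that person places on reduced nuclear risk, and $c$ is the personal cost of acting (time, attention, emotional strain).

```latex
\[
EU(\text{act}) - EU(\text{abstain}) = p \cdot B - c
\]
\[
\text{act only if } p \cdot B > c,
\qquad
\lim_{p \to 0} \left( p \cdot B - c \right) = -c < 0
\]
```

Under these assumptions, even a very large $B$ does not motivate action when $p$ is perceived as negligible. Credible pathways for participation can be read as raising perceived $p$ or lowering $c$, which may do more to change the calculation than further emphasizing the stakes.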

Reason 12: Information Decay and Issue Attention Cycles

Nuclear danger follows the predictable issue-attention cycle identified by Anthony Downs: Crisis-driven spikes of anxiety give way to fatigue, normalization, and eventual forgetfulness.40 Public concern surges in response to discrete events—nuclear tests, geopolitical crises, or cultural moments like Oppenheimer—but fades as attention shifts to new or more immediate threats. In the absence of sustained reinforcement, even vivid warnings rapidly decay.

This pattern reflects recency bias, emotional decay, and habituation. Attention peaks during visible danger, then diminishes once the perceived immediacy of the danger passes. As Kahneman and Tversky observed, memory and attention are optimized for short-term adaptation, not long-horizon vigilance.41 Emotional arousal, which drives short-term concern, is not cognitively sustainable over long periods—especially for abstract risks.

Empirical evidence confirms this cycle. Pew surveys in 2022 showed that Americans’ concern about nuclear-related dangers increased sharply after Russia’s invasion of Ukraine, with majorities expressing worry about escalation and the possibility of a nuclear accident at Ukrainian power plants.42 Within a year, by 2023, that number had dropped significantly despite ongoing nuclear rhetoric.43 Chicago Council data from 2004–2021 show that nuclear risk consistently cycles between temporary spikes and long troughs of inattention, rather than maintaining steady salience.44

This decay is reinforced by the structure of modern media. Contemporary news and social platforms operate on an economy of novelty, privileging immediacy and emotional engagement over continuity. Catastrophic risks that unfold slowly—or persist without visible consequence—struggle to compete. Algorithms reward what is new, not what is enduring. As a result, even existential dangers are treated as “content cycles,” spiking briefly when nuclear fears or “WWIII” moments trend, then receding as soon as the next crisis captures attention. The pattern is one of oscillation rather than engagement—volatile surges of alarm without sustained public focus.

Demographically, issue-attention volatility is strongest among younger, high-information media consumers, whose exposure to rapid news cycles fosters short attention spans and emotional desensitization. Older cohorts, by contrast, display slower reactivity but also less sustained engagement—responding to crises with worry but returning quickly to normal routines. Across groups, the result is the same: Periodic awareness spikes, followed by collective forgetting.

In practice, information decay turns warnings into noise. Fear fades faster than policy moves. Without deliberate reinforcement through education, participation, or sustained storytelling, nuclear danger repeatedly slips from view.

Reason 13: Trust Erosion and Epistemic Fatigue

Even accurate warnings lose force when trust collapses. Today, confidence in government, media, and expert institutions is near historic lows, eroding the credibility of nuclear risk communication.45 This reflects source discounting and epistemic skepticism: When messengers are seen as biased, self-interested, or unreliable, their information is systematically discounted regardless of its quality. Over time, repeated exposure to contradictory or alarmist narratives can lead to credibility fatigue, in which citizens begin to doubt all claims equally.46 Meanwhile, public trust in media—long the key conduit for communicating existential risk—has fallen to 43 percent, its lowest level since tracking began. These numbers show that the credibility problem is structural, not episodic.47

This distrust interacts with cognitive biases. The cry-wolf effect leads people to dismiss repeated doomsday predictions; confirmation bias polarizes interpretation along partisan lines. Conservatives distrust arms-control advocates; progressives distrust deterrence defenders. Information becomes filtered through identity, not evidence.

Comparable patterns appear across other existential risk domains. During the COVID-19 pandemic, declining trust in public health institutions led to resistance against even basic safety measures. In climate communication, skepticism toward international organizations and scientific elites has slowed policy momentum. In the AI domain, public doubt toward tech companies’ self-regulation reflects a different dynamic—not rejection of expertise per se, but a reasonable distrust of corporate self-interest in the absence of external oversight. Across all cases, eroded trust transforms uncertainty into apathy: When citizens no longer know whose claims to believe, they disengage rather than act.

The consequences for nuclear policy are severe. If the public doubts both the threat and the messenger, policy elites lose the democratic legitimacy needed to pursue reform. Even credible alerts now struggle to command attention. What once symbolized urgency risks becoming background noise, perceived as either politicized or performative.

Demographically, trust divides mirror broader partisan polarization. Republicans express higher confidence in the military but deep distrust of media and international institutions, while Democrats show the inverse pattern. Younger Americans, shaped by digital ecosystems rife with misinformation, report the lowest overall institutional trust of any age cohort. This generational skepticism means that even as younger voters express support for nuclear restraint in principle, they are less likely to believe official narratives about risk.

Trust is the infrastructure of mobilization. When it collapses, even truthful communication fails. Rebuilding it requires more than repetition—it demands participatory transparency and new intermediaries that connect citizens to expert knowledge without relying on distrusted institutions. Without such bridges, recognition remains decoupled from action.

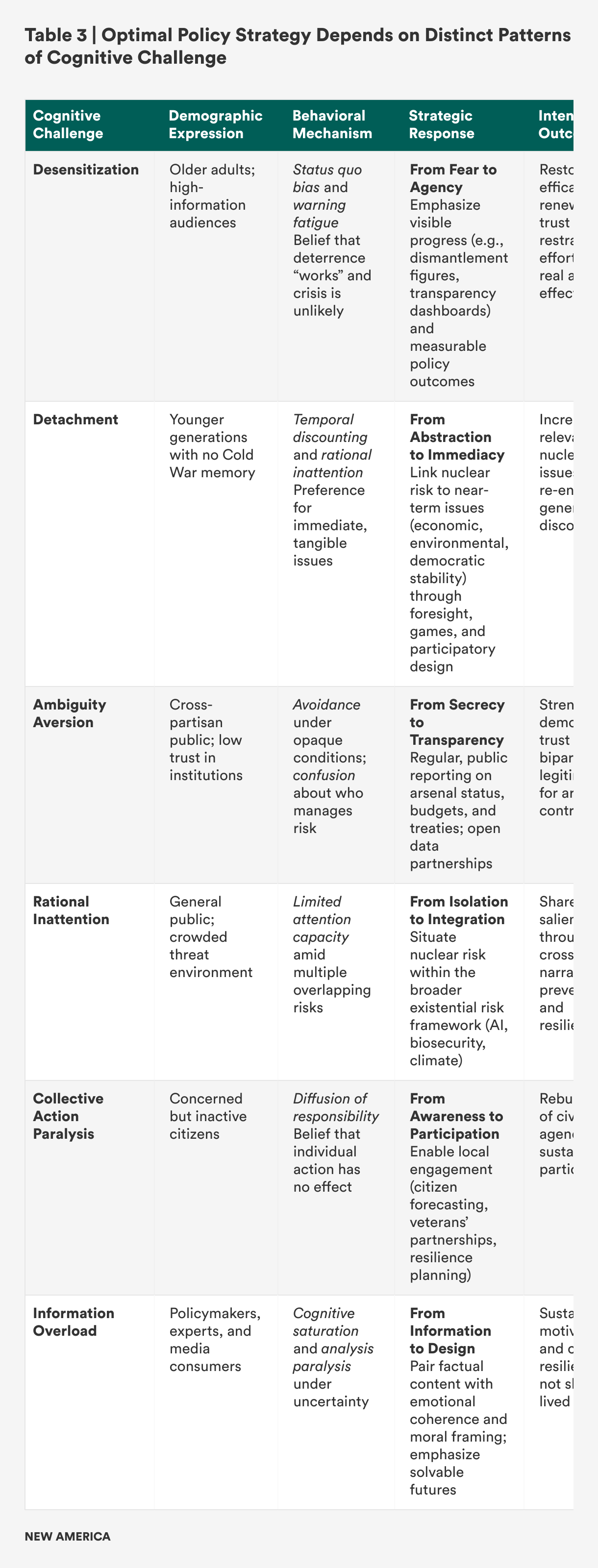

The pathways identified in the table below synthesize insights from public opinion data, demographic trends, and the 13 reasons for recognition without mobilization detailed earlier in this report. Those cognitive and behavioral biases—ranging from status quo and normalcy bias to warning fatigue, ambiguity aversion, rational inattention, cognitive overload, and diffusion of futility—operate in overlapping clusters rather than in isolation. Table 3 consolidates these dynamics into six composite cognitive patterns that consistently appear across age groups, partisan identities, and information environments. Polling shows that nuclear awareness persists but rarely translates into sustained engagement; decision theory explains why: People manage uncertainty by simplifying, deferring, or externalizing distant risks. Each cognitive pattern implies a distinct communication and policy strategy. This table translates those insights into practical interventions, aligning behavioral understanding with institutional design to rebuild durable attention, moral salience, and civic agency around nuclear risk.

These pathways provide more than a communication roadmap—they create a behavioral foundation for action. By aligning narrative strategies, institutional design, and public participation with how different groups actually process uncertainty, institutions can shift nuclear risk from episodic awareness to sustained attention. The goal is not simply to inform the public, but to build durable civic agency and distributed ownership over nuclear restraint.

Implications Then and Now

Publicly driven arms control once succeeded because three reinforcing conditions (salience, trust, and simplicity) aligned to make nuclear risk both visible and actionable. From the 1960s through the 1980s, nuclear danger felt urgent and imaginable. The Cold War made threat perception visceral: duck-and-cover drills, televised missile crises, and cultural portrayals turned abstract danger into a shared civic experience. Fear was not hypothetical; it was lived. Because the threat felt proximate, engagement felt necessary. And because the media was less fragmented, the public experienced these moments through a common information environment, reinforcing a collective sense of urgency and shared responsibility.

Americans also trusted that their government could act effectively to reduce the danger. Arms control enjoyed broad—though never universal—bipartisan legitimacy, with leaders from Kennedy to Reagan framing it as pragmatic statecraft rather than ideology. While debates over verification, strength, and moral responsibility persisted, there remained a shared baseline of confidence that reducing nuclear risk was both possible and desirable. The Cold War’s binary structure simplified complexity: two superpowers, one existential risk, and one clear solution—limit weapons, avoid war. Public faith in science, diplomacy, and executive leadership reinforced a sense of efficacy: Citizens believed their voices could shape outcomes.

Equally important, movements could translate technical issues into simple, actionable demands. “Freeze the arms race now” and “ban atmospheric tests” converted anxiety into participation. The clarity of these messages, coupled with a more active and organized civil society (churches, unions, women’s groups), lowered barriers to entry and created visible feedback loops between activism and policy. When Kennedy cited public support for the 1963 Limited Test Ban Treaty or Reagan echoed the Freeze-era sentiment that “a nuclear war cannot be won,” activism felt validated and influence tangible.

Today, those conditions have dissipated. Salience has faded: The threat feels distant, abstract, and cognitively discounted by habituation and warning fatigue. Trust has eroded: Citizens doubt the credibility of institutions and messengers, which is a form of source discounting amplified by polarization and media fragmentation. Simplicity has evaporated: Nuclear policy is now entangled in complex technical, legal, and geopolitical systems that defy concise framing.

A mix of rarity bias, competing attentional demands, fear-driven avoidance, and a sense of personal futility leads citizens to defer responsibility to institutions and to disengage emotionally from an overwhelming threat. The result is not apathy, but attenuated salience—an informed yet inert public that sees nuclear risk as real, but distant and beyond its influence.

In decision-theoretic terms, these shifts reflect well-documented cognitive mechanisms. The loss of salience follows habituation and attentional triage: Repeated exposure to unrealized warnings dulls urgency and pushes nuclear danger to the cognitive margins. The loss of trust stems from credibility collapse and the diffusion of responsibility, as individuals assume that experts and institutions are managing risks that lie beyond their personal influence. The loss of simplicity stems from cognitive overload and ambiguity aversion, whereby the technical and probabilistic complexity of nuclear policy discourages engagement. Together, these forces produce what this report terms “recognition without mobilization,” an informed but inert public that supports arms control in principle yet exerts little pressure in practice.

Modern information ecosystems deepen this problem. Rapid news cycles accelerate habituation; polarized media environments erode credibility; information saturation magnifies cognitive load. For the future of arms control and risk reduction, the implication is clear: Strategies that once relied on moral vividness, singular events, or mass mobilization cannot simply be revived. Instead, they must be redesigned to align with contemporary cognition: to restore salience without panic, rebuild trust through transparency and participation, and translate complexity into credible, actionable frameworks for engagement.

Citations

- Smeltz, Kafura, and Weiner, Majority in U.S. Want to Learn More about Nuclear Policy, source.

- Nina Tannenwald, The Nuclear Taboo: The United States and the Non-Use of Nuclear Weapons (Cambridge University Press, 2007), 22.

- Robert Jervis, Perception and Misperception in International Politics (Princeton University Press, 1976), 217–18.

- William Samuelson and Richard Zeckhauser, “Status Quo Bias in Decision Making,” Journal of Risk and Uncertainty 1, no. 1 (1988): 8–9.

- Daniel Kahneman and Amos Tversky, “Prospect Theory: An Analysis of Decision under Risk,” Econometrica 47, no. 2 (1979): 263–291.

- Paul Slovic, “Perception of Risk,” Science 236, no. 4799 (1987): 280–285; Cass R. Sunstein, “Probability Neglect: Emotions, Worst Cases, and Law,” Yale Law Journal 112, no. 1 (2002): 61–107.

- Tannenwald, The Nuclear Taboo.

- Smeltz et al., Divided We Stand: Democrats and Republicans Diverge on U.S. Foreign Policy, source.

- Jacques E. C. Hymans, The Psychology of Nuclear Proliferation: Identity, Emotions, and Foreign Policy (Cambridge University Press, 2006), 51–54.

- Marissa Fond et al., An Unthinkable Problem from a Bygone Era: How to Make Nuclear Risk and Disarmament a Salient Social Issue (FrameWorks Institute, August 2016), source; Paul Slovic, “The Perception of Risk,” in Scientists Making a Difference: One Hundred Eminent Behavioral and Brain Scientists Talk About Their Most Important Contributions, eds. Robert J. Sternberg, Susan T. Fiske, and Donald J. Foss (Cambridge University Press, 2016), source.

- Amos Tversky and Daniel Kahneman, “Availability: A Heuristic for Judging Frequency and Probability,” Cognitive Psychology 5, no. 2 (1973): 207–232.

- Cass R. Sunstein, “Probability Neglect: Emotions, Worst Cases, and Law,” Yale Law Journal 112 (October 2002): 61–107, source.

- Tali Sharot, Christoph W. Korn, and Raymond J. Dolan, “How Unrealistic Optimism Is Maintained in the Face of Reality,” Nature Neuroscience 14 (October 2011): 1475–79, source.

- Carol Cohn, “Sex and Death in the Rational World of Defense Intellectuals,” Signs: Journal of Women in Culture and Society 12, no. 4 (1987): 687–718.

- Amy J. Nelson and Alexander H. Montgomery, “How Not to Estimate the Likelihood of Nuclear War,” Brookings Institution, October 19, 2022, source.

- Robert Jay Lifton, Death in Life: Survivors of Hiroshima (Random House, 1967); Paul Slovic, “If I Look at the Mass I Will Never Act: Psychic Numbing and Genocide,” Judgment and Decision Making 2 (April 2007): 79–95.

- Cass R. Sunstein, Risk and Reason: Safety, Law, and the Environment (Cambridge University Press, 2002); Paul Slovic, The Feeling of Risk: New Perspectives on Risk Perception (Earthscan/Routledge, 2010).

- Donna van Eerd, “Paralyzing Fear or False Sense of Hope: The Role of Emotions in Climate-Change Communication,” Utblick, March 17, 2024, source; Susanne C. Moser, “Communicating Climate Change Adaptation and Resilience,” Oxford Research Encyclopedia of Climate Science, September 26, 2017, source.

- Kristen Doerer, “Consumers Are Becoming Apathetic to Cyber Incidents, Research Finds,” Cybersecurity Dive, January 13, 2025, source; Steve Schuchart, “We Are Becoming Numb to Cybersecurity Breaches,” Tech Monitor, July 1, 2025, source.

- Smeltz, Kafura, and Weiner, Majority in U.S. Interested in Boosting Their Nuclear Knowledge, source.

- Slovic, “The Perception of Risk,” source.

- Dennis Mileti and John Sorensen, Communication of Emergency Public Warnings: A Social Science Perspective and State-of-the-Art Assessment (Oak Ridge National Laboratory, January 1990), source.

- Mileti and Sorensen, Communication of Emergency Public Warnings, source.

- Kull et al., Americans on Nuclear Weapons, source.

- Regional Office for Europe, Pandemic Fatigue: Reinvigorating the Public to Prevent COVID-19—Policy Framework for Supporting Pandemic Prevention and Management (World Health Organization, December 2020), source.

- Cass R. Sunstein, Risk and Reason: Safety, Law, and the Environment (Cambridge University Press, 2002), 38–45.

- Daniel Kahneman, Thinking, Fast and Slow (Farrar, Straus and Giroux, 2011).

- Michal Smetana et al., Atomic Responsiveness: How Public Opinion Shapes Elite Beliefs and Preferences on Nuclear Weapon Use (Social Science Research Network, April 30, 2025), source; David C. Logan, “Elite–Public Gaps on Nuclear Weapons: The Roles of Salience and Knowledge,” International Organization 79 (Summer 2025), source.

- Amos Tversky and Daniel Kahneman, “Availability: A Heuristic for Judging Frequency and Probability,” Cognitive Psychology 5, no. 2 (1973): 207–232; Pedro Bordalo, Nicola Gennaioli, and Andrei Shleifer, “Salience and Consumer Choice,” Journal of Political Economy 121, no. 5 (2013): 803–843.

- Dina Smeltz et al., Despite Political Tension, Americans and Russians See Cooperation as Essential (Chicago Council on Global Affairs and Levada Analytical Center, March 2021), source.

- John M. Darley and Bibb Latané, “Bystander Intervention in Emergencies: Diffusion of Responsibility,” Journal of Personality and Social Psychology 8, no. 4, pt. 1 (1968): 377–383.

- European Central Bank, “Trust in ECB—Insights from the Consumer Expectations Survey,” Economic Bulletin, March 2024, source; MITRE-Harris Poll, Public Perceptions on Securing Critical Infrastructure (MITRE, 2025), source; “Climate Change Remains Top Global Threat Across 19-Country Survey,” Pew Research Center, August 31, 2022, source.

- Thomas M. Skovholt, Richard C. Williams, and Cynthia Steffen, “Psychological Reactions to the Nuclear War Threat,” Medicine and War 4, no. 1 (1988): 3–16.

- Paul Slovic, The Feeling of Risk: New Perspectives on Risk Perception (Earthscan, 2010), 167–170.

- Dinic, The YouGov Big Survey on NATO and War, source.

- John T. Jost, Mahzarin R. Banaji, and Brian A. Nosek, “A Decade of System Justification Theory: Accumulated Evidence of Conscious and Unconscious Bolstering of the Status Quo,” Political Psychology 25, no. 6 (2004): 881–919, source; Ziva Kunda, “The Case for Motivated Reasoning,” Psychological Bulletin 108, no. 3 (1990): 480–98, source.

- Nina Tannenwald, The Nuclear Taboo; Nina Tannenwald, 23 Years of Nonuse: Does the Nuclear Taboo Constrain India and Pakistan? (Stimson Center, February 22, 2021), source.

- Mancur Olson, The Logic of Collective Action: Public Goods and the Theory of Groups (Harvard University Press, 1965), 2–6.

- Bibb Latané and John M. Darley, The Unresponsive Bystander: Why Doesn’t He Help? (Appleton-Century-Crofts, 1970).

- Anthony Downs, “Up and Down with Ecology—The ‘Issue-Attention Cycle,’” The Public Interest 28 (1972): 38–50, source.

- Daniel Kahneman and Amos Tversky, “Prospect Theory: An Analysis of Decision under Risk,” Econometrica 47, no. 2 (1979): 263–291, source.

- Pew Research Center, Americans’ Concerns About War in Ukraine (Pew Research Center, May 10, 2022).

- Pew Research Center, One Year into the Russia–Ukraine War, Americans’ Concerns About the Conflict Have Eased (Pew Research Center, February 23, 2023).

- Smeltz et al., Rejecting Retreat: Americans Support U.S. Engagement in Global Affairs, source; Smeltz et al., Divided We Stand: Democrats and Republicans Diverge on U.S. Foreign Policy, source.

- Edelman, 2024 Edelman Trust Barometer (Edelman, 2024), source.

- Valentin Mang, Bob M. Fennis, and Kai Epstude, “Source Credibility Effects in Misinformation Research: A Review and Primer,” Advances in Psychology (2024), source.

- Smeltz, Kafura, and Weiner, Majority in U.S. Interested in Boosting Their Nuclear Knowledge, source.